Generating Statistical Charts with Validation-Driven LLM Workflows

Source: arXiv:2605.00800 · Published 2026-05-01 · By Pavlin G. Poličar, Andraž Pevcin, Blaž Zupan

TL;DR

This paper addresses a practical bottleneck in chart-dataset construction: generating statistical charts from tabular data is not just a code-synthesis problem, because many chart failures only become obvious after rendering. The authors argue that existing chart corpora often miss key aligned artifacts such as executable code, dataset context, descriptions, and question-answer pairs, which limits both inspection and downstream evaluation. Their response is a staged LLM workflow that explicitly separates dataset screening, plot proposal, code synthesis, rendering, visual validation, refinement, description generation, and QA generation.

The main result is a corpus built from UCI tabular datasets containing 1,500 retained charts from 74 datasets, spanning 24 chart families, with 30,003 question-answer pairs. The workflow discarded 725 of 2,228 chart candidates because severe readability or semantic-fit issues could not be fixed within three correction attempts. The authors then used this corpus to evaluate 16 multimodal LLMs on chart-grounded questions and found a clear difficulty stratification: chart-syntax questions are nearly saturated, while value extraction, comparison, and reasoning remain substantially harder. The paper’s value is therefore as much methodological as empirical: it shows that rendering-aware validation can make synthetic chart corpora more reliable and diagnostically useful.

Key findings

- The workflow produced 1,500 retained statistical charts from 74 UCI datasets, paired with 30,003 question-answer pairs, spanning 24 chart families.

- Out of 2,228 chart candidates, 725 were discarded after up to three refinement attempts, i.e. about 33% of candidates failed validation.

- The generation pipeline uses 20 questions per retained chart, split into 7 easy, 6 medium, and 7 hard questions.

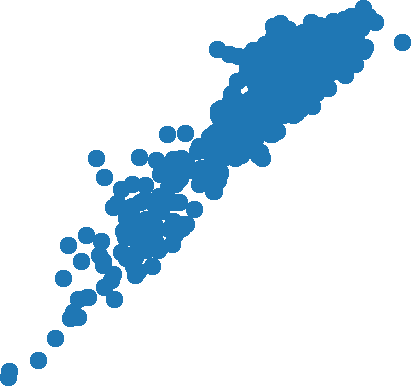

- The authors report that chart-syntax questions are nearly saturated: the strongest model reaches over 99% accuracy on this category (Fig. 3).

- The best overall model reaches about 94% accuracy across all question types in the diagnostic evaluation (Fig. 3).

- Even the strongest models top out at 92% accuracy on value extraction and 90% on reasoning, indicating a large gap between syntax reading and grounded reasoning (Fig. 3).

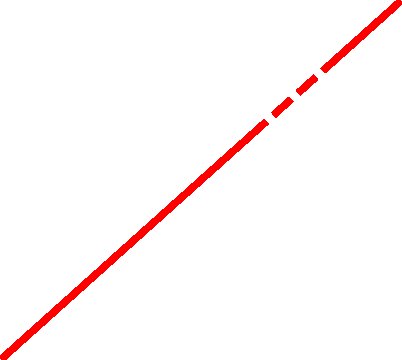

- Common chart families such as scatter plots, bar charts, heatmaps, and pie/donut charts are easier, while radar charts, composition/set diagrams, parallel coordinates, and 3D scatter plots are harder (Fig. 4).

- The refinement loop specifically targets severe readability and semantic-fit failures such as low contrast, overplotting, unreadable axes, and unclear legends; these were the most common unresolved problems.

- The paper uses a chart-level bootstrap to compute 95% confidence intervals for accuracy, resampling whole charts to account for multiple questions per chart.

Threat model

The relevant adversary is not a malicious external attacker but the combination of ambiguous tabular structure and LLM generation failure modes. The workflow assumes an LLM may propose charts that are technically executable yet visually unreadable, semantically mismatched, or poorly grounded in the dataset. It also assumes that a post-render checker can detect many but not all severe problems, while some subtle factual or interpretive errors may survive. The system does not model adversaries with tool access, code injection, or data poisoning; it is concerned with generation quality under benign but noisy inputs.

Methodology — deep read

The threat model is not an adversarial security model in the classic sense; it is a generation-quality model. The implicit “adversary” is failure-prone LLM generation and ambiguous tabular structure: datasets may contain identifiers, opaque codes, weakly documented fields, or variable combinations that lead to unreadable or semantically misleading charts. The workflow assumes access to public tabular datasets and a general-purpose LLM, but no special external tools beyond code execution for plotting and an LLM-based checker/judge. It does not assume the generator can perfectly infer semantics from raw tables, which is exactly why the screening and description-rewrite stages exist.

Data provenance is the UCI Machine Learning Repository. The authors randomly sampled tabular datasets and retained only those with at least 200 instances and at most 2,000 features. They then ran a screening step using dataset metadata, schema, and textual description to reject datasets unlikely to support meaningful comparisons, distributions, or relationships. For retained datasets, the workflow rewrites the raw dataset description into a shorter, data-focused summary and, when possible, rewrites feature names into clearer axis and legend labels. The final corpus contains 1,500 charts from 74 datasets and 30,003 QA pairs. The paper also states that each retained example stores the dataset description, generated chart, final plotting code, intermediate rendered versions, and refinement feedback, but it does not give a public train/test split because this is not a supervised learning benchmark in the usual sense; rather, it is a generated dataset for downstream diagnostic evaluation. The text does not specify a formal manual-labeling process for the chart family labels beyond the workflow’s own chart-type assignment.

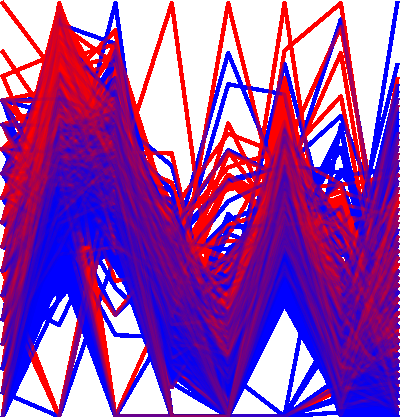

Architecturally, the workflow is a multi-stage pipeline built to keep each step narrow and inspectable. First, a dataset-screening LLM decides whether the sampled table is suitable for statistical charts and produces a shorter semantic summary. Second, a plot-proposal call generates ten candidate chart specifications per dataset, each with a chart type, selected features, and a short intended interpretation; the paper says these proposals are constrained to chart types implementable in a standard scientific Python plotting stack. Third, a code-generation stage writes executable Python for each candidate using only the selected features; the generated code records variables, encodings, transformations, filters, aggregations, and row counts used in the chart. Fourth, after rendering, an MLLM-based checker inspects the image and code for severe readability and semantic-fit problems such as clutter, label collisions, poor scaling, indistinguishable groups, unreadable text, and encoding mismatches. If the checker flags a severe problem, the previous code and feedback are sent back to code generation, preserving the selected chart type and variables whenever possible. This render-check-regenerate loop repeats until either the checker accepts the chart or three correction attempts have been exhausted; unresolved charts are discarded. Finally, for each retained chart, the system generates a chart description from the image, code, and dataset context, then generates 20 chart-grounded questions with an easy/medium/hard mix (7/6/7), and labels each question as syntax, value extraction, comparison, trends, or reasoning.

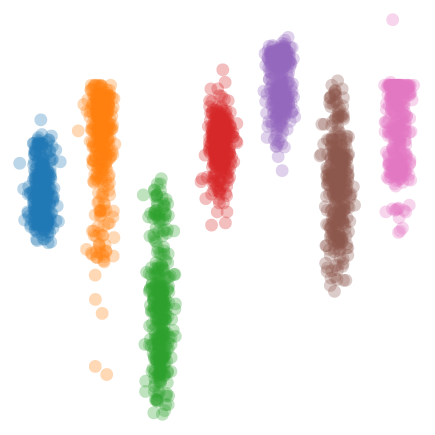

A concrete end-to-end example is shown in Fig. 1. For a dataset about air and sea surface temperature, the workflow generates a chart with those variables, retains the dataset context describing the TAO buoy source, and then produces QA pairs such as identifying the y-axis variable and reading an approximate value from the trendline at a given x-value. The same figure shows that the corpus is meant to pair the rendered visualization with semantically aligned text artifacts, rather than just a chart image. Another example in Fig. 1 shows the Pima Indians Diabetes dataset and a question that requires interpreting overlap between groups, illustrating how the QA layer extends beyond simple extraction into visual reasoning. The paper’s own discussion of spot checks indicates that some generated questions were ambiguous or unsupported by the rendered chart, and that these observations informed prompt revisions and filtering decisions.

Training and generation details are only partially specified, but what is explicit is that the authors use Qwen3.5-27B for all LLM-based generation and checking stages. The paper does not report epochs, batch sizes, learning-rate schedules, or seed strategy, because the system is not trained end-to-end in the conventional sense; it is a staged generation workflow. For downstream evaluation, each multimodal model receives the rendered chart, the dataset description, and one question, and must answer without tool access. A separate Qwen3.5-9B model acts as judge, comparing the candidate answer against the reference answer using the dataset description, question, reference answer, and model response as context. For value-extraction questions, near-numeric answers are accepted when approximately consistent with visual reading. Accuracy is aggregated with 95% confidence intervals via a chart-level bootstrap that resamples whole charts so questions from the same chart do not artificially inflate certainty. The paper explicitly treats these judgements as approximate rather than definitive correctness labels.

Evaluation is diagnostic rather than benchmark-defining. The authors evaluate 16 MLLMs and report accuracy overall and by question type in Fig. 3, plus centered effects by chart family and question type in Fig. 4. The strongest model achieves about 94% overall, chart-syntax questions reach above 99%, and harder categories like value extraction and reasoning remain below that ceiling. Common chart families are easier, while radar charts, parallel coordinates, 3D scatter plots, and composition-oriented diagrams are harder. The evaluation protocol is therefore designed to expose systematic weaknesses in chart-grounded multimodal reasoning, not to establish a single leaderboard. Reproducibility is reasonably good at the workflow level: the implementation is released on GitHub under BSD 3-Clause, and an interactive viewer is available; however, the generated corpus itself is only described through the paper, and the exact prompts and model settings are not fully enumerated in the excerpt provided.

Technical innovations

- A validation-driven table-to-chart workflow that explicitly inserts rendered-output inspection between code synthesis and artifact retention, unlike one-shot chart generation.

- A paired chart corpus where each retained example includes the image, executable plotting code, dataset context, intermediate renders, refinement feedback, description, and typed QA pairs.

- A chart-generation pipeline that separates dataset screening, plot proposal, rendering, and semantic validation so failures can be localized and regenerated rather than silently kept.

- A downstream diagnostic evaluation setup that uses the generated chart families and question types to study multimodal reasoning weaknesses beyond aggregate chart-QA accuracy.

Datasets

- UCI Machine Learning Repository tabular datasets — 74 datasets retained; 2,228 chart candidates screened to 1,500 final charts — public source

- Generated chart-question corpus — 1,500 charts and 30,003 QA pairs — generated from UCI tabular data by the authors

Baselines vs proposed

- Qwen3.5-9B judge vs human spot-checks: not reported quantitatively; used as approximate automatic evaluator for downstream QA

- Best evaluated MLLM overall: accuracy ≈ 94% vs chart-syntax accuracy > 99% (Fig. 3)

- Best evaluated MLLM on value extraction: accuracy ≈ 92% vs overall ≈ 94% (Fig. 3)

- Best evaluated MLLM on reasoning: accuracy ≈ 90% vs overall ≈ 94% (Fig. 3)

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2605.00800.

Fig 1: Three outputs generated by the proposed workflow. Each panel pairs a statistical chart generated from a

Fig 2: Schematic overview of the structured LLM-based generation workflow. The pipeline separates dataset

Fig 3 (page 2).

Fig 4 (page 2).

Fig 3: Evaluation accuracy across all 16 models. The left panel shows overall accuracy for each model, while the

Limitations

- The validation loop checks readability and semantic fit, but it does not guarantee full factual correctness of descriptions or QA pairs; the authors explicitly acknowledge that unsupported or ambiguous claims may remain.

- UCI data bias the corpus toward public machine-learning tables, which may not reflect messy real-world business, telemetry, or product analytics data.

- The paper does not provide full generation hyperparameters, seed strategy, or a detailed prompt appendix in the excerpt, so exact reproducibility of the LLM workflow may be limited.

- The downstream judge is another LLM, so accuracy estimates are approximate and depend on judge behavior, especially for near-numeric answers.

- The evaluation is diagnostic rather than a formal benchmark with a fixed split, so comparisons to prior datasets are informative but not strictly leaderboard-grade.

- The pipeline discards difficult charts after three failed correction attempts, which likely biases the final corpus toward more readable and semantically cooperative examples.

Open questions / follow-ons

- How much of the validation loop can be automated with non-LLM image metrics or rule-based checks without losing semantic sensitivity?

- Would the corpus remain useful if extended beyond UCI-style tables to messier enterprise datasets with missingness, long-tail categories, and time dependence?

- Can the generated aligned artifacts improve training of chart-reasoning models more than chart images alone, and if so which artifact matters most: code, dataset context, or QA pairs?

- What is the best way to audit and repair unsupported statements in generated descriptions and questions at scale?

Why it matters for bot defense

For bot defense and CAPTCHA work, the paper is relevant less as a chart-generation recipe and more as a general lesson in multimodal dataset construction. The authors show that if you want reliable diagnostic data, you should not trust a single generation pass: you need inspectable intermediate artifacts, render-time validation, and a rejection loop for outputs that are technically valid but semantically poor. That pattern maps well to CAPTCHA-like evaluation assets, where small visual defects, ambiguity, or unintended shortcuts can completely change model performance.

A bot-defense engineer could borrow the workflow design to build harder, cleaner multimodal challenges and to analyze failure modes by task subtype. The key takeaway is that downstream model evaluation becomes much more informative when each example is traceable back to source data and generation decisions. Conversely, if you are defending against automation, this paper is a reminder that synthetic corpora can become highly structured and internally aligned; the challenge is no longer just image generation, but ensuring the generated content preserves the intended reasoning bottlenecks and does not leak shortcuts through metadata or layout regularities.

Cite

@article{arxiv2605_00800,

title={ Generating Statistical Charts with Validation-Driven LLM Workflows },

author={ Pavlin G. Poličar and Andraž Pevcin and Blaž Zupan },

journal={arXiv preprint arXiv:2605.00800},

year={ 2026 },

url={https://arxiv.org/abs/2605.00800}

}