TopBench: A Benchmark for Implicit Prediction and Reasoning over Tabular Question Answering

Source: arXiv:2604.28076 · Published 2026-04-30 · By An-Yang Ji, Jun-Peng Jiang, De-Chuan Zhan, Han-Jia Ye

TL;DR

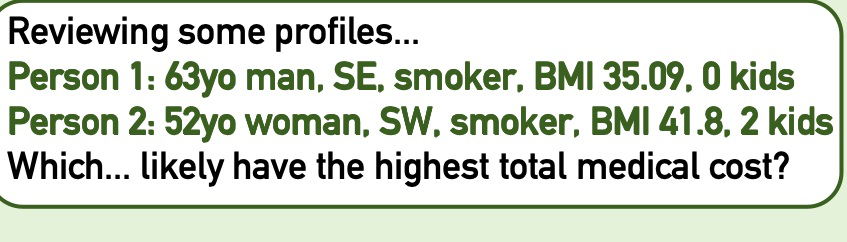

This paper addresses a critical gap in Table Question Answering (TQA) by focusing on implicit predictive reasoning tasks rather than explicit retrieval or aggregation of tabular facts. Existing TQA datasets and benchmarks largely evaluate models on queries answerable by direct lookup or simple statistics within tables. However, many real-world use cases require models to infer unobserved outcomes by recognizing latent predictive intents and performing inference over historical tabular data. To study and quantify this capability, the authors introduce TOPBENCH, a new benchmark containing 779 samples over 35 real tables from healthcare, finance, and consulting domains. TOPBENCH covers four subtasks: Single-Point Prediction, Decision Making, Treatment Effect Analysis, and Ranking and Filtering, each requiring both natural language reasoning and structured outputs. Evaluation of a diverse set of large language models (LLMs) reveals that most struggle to disambiguate predictive intents, often defaulting to retrieval or hallucination instead of rigorous predictive modeling, leading to poor accuracy especially on Decision Making and Treatment Effect tasks. Agentic methods with code execution help in some scenarios but can also exacerbate intent confusion. The study highlights that accurate intent recognition is a prerequisite for successful implicit prediction, and significant architectural or training advances are needed to improve precision. TOPBENCH thus sets a novel standard for tabular implicit predictive reasoning and guides future research towards integrating predictive modeling and reasoning capabilities in TQA systems.

Key findings

- TOPBENCH consists of 779 samples from 35 unique tables across healthcare, finance, and consulting domains, balanced between regression (384) and classification (395) queries.

- Current state-of-the-art general LLMs achieve only ~0.65 accuracy in the easiest Single-Point Prediction task, with significantly worse results (~0.2-0.4 accuracy) in Decision Making and Treatment Effect Analysis tasks.

- Agentic execution with code generation improves some models' performance in Treatment Effect Analysis (GPT-5.2 trend score rises from 0.51 to 0.65) but causes degradations in others (Qwen3-Instruct drops from 0.57 to 0.43 in Single-Point Prediction).

- Reasoning-enhanced LLM variants like Qwen3-Thinking exhibit instability and frequent repetitive loops, reducing effectiveness especially on longer context tables.

- Domain-specialized tabular models underperform general LLMs, failing especially on implicit reasoning and showing nearly zero recall in filtering due to faulty code execution.

- A detailed analysis shows a strong correlation between models that invoke predictive modeling tools (e.g., scikit-learn Random Forest) and higher predictive accuracy, whereas models relying on heuristic data retrieval perform worse.

- Evaluation combines both predictive precision scores and quality of reasoning assessment using an LLM-as-judge framework to verify extracted conclusions and confidence intervals.

- Ranking and Filtering tasks produce the lowest overall precision, with F1 scores for leading models around 0.58 and normalized mean absolute error (NMAE) around 0.30–0.40.

Threat model

The adversary considered is the language model or system expected to perform implicit predictive reasoning from paired queries and historical tabular data. The model has no access to explicit target labels or instructions besides the natural language query and the data. It cannot query external databases or receive human clarifications. The challenge is to correctly infer latent predictive intent and perform accurate prediction without erroneously falling back on simple retrieval or hallucinated answers.

Methodology — deep read

Threat Model & Assumptions: The benchmark targets scenarios where the adversary is essentially the model or system tasked with understanding and reasoning over tabular data to answer natural language questions that implicitly require prediction rather than mere retrieval. The model knows the historical tabular data and a natural language query and must infer a predictive task from the query. It cannot rely on having explicit task instructions or the answer in the table.

Data: TOPBENCH contains 779 curated query-table pairs derived from 35 real-world historical tables sourced primarily from Kaggle datasets in healthcare, finance, and daily consulting. The tables vary from under 1,000 rows to several million rows to test scalability. Labels include both regression and classification targets. Queries are generated through a multi-stage pipeline involving logic-driven sampling to select hard examples and dual-perspective prompting to simulate both non-technical user and data-holder queries. All samples underwent hybrid validation using independent LLM auditors and expert human review to ensure consistency and solvability of implicit predictive intent.

Architecture / Algorithm: Nine models were evaluated across three categories: general LLMs (e.g., GPT-5.2, Claude Sonnet 4.5, Gemini 3 Flash), reasoning-enhanced variants (e.g., DeepSeek-V3.2-Thinking), and domain-specific tabular specialists (e.g., TableLLM based on Llama and CodeLlama). Two inference paradigms were employed: a Text-Based Reasoning mode, where a serialized table snippet is appended to the query and models reason internally without additional tools; and an Agentic Code-Execution mode, where models interact via ReAct-style loops generating Python code executed in a sandbox to iteratively inspect and manipulate data. The agentic mode is used especially for Ranking/Filtering tasks requiring large-scale table processing.

Training Regime: Models evaluated consist of pre-existing proprietary and open-weight LLMs finetuned or instruction-tuned per their original releases; the authors did not train or fine-tune models from scratch. Hyperparameters and evaluation seeds are not explicitly provided as this is a benchmark evaluation paper rather than proposing a new model.

Evaluation Protocol: Evaluation tailored metrics per task and output modality. For predictive reasoning tasks producing text responses, an LLM-as-a-Judge framework extracts predicted values and reasoning and evaluates both numerical accuracy (using regression accuracy combining point estimates and confidence intervals) and reasoning quality on a 5-point logical coherence scale. For Ranking/Filtering tasks producing structured CSV outputs, deterministic metrics like F1-score for filtering subsets, recall of correct top-k items, NDCG for ranked order quality, and NMAE for regression precision were used. The evaluation pipeline also verifies that extracted predicted values are grounded in the response rather than hallucinated using strict fuzzy matching and natural language inference checks.

Reproducibility: The authors release the TOPBENCH benchmark dataset and evaluation pipeline publicly on GitHub, enabling replication and extension. Model weights are not released as proprietary LLMs are used. However, the dataset with paired queries, tables, and ground truths is openly available.

Technical innovations

- Definition and formalization of implicit predictive Tabular Question Answering as a two-stage inference problem combining intent abstraction and predictive modeling over historical data.

- Creation of TOPBENCH, a novel benchmark featuring four implicit predictive tasks (Single-Point Prediction, Decision Making, Treatment Effect Analysis, Ranking and Filtering) with paired natural language queries and large-scale real-world tables.

- Development of a rigorous LLM-as-a-Judge evaluation framework that jointly assesses numeric prediction accuracy including confidence intervals and reasoning quality via logical coherence scoring and hallucination checks.

- Use of dual inference paradigms: Text-based direct reasoning with truncated tabular contexts versus agentic code-execution leveraging Python sandbox iterations, to simulate real-world tabular workflows.

Datasets

- TOPBENCH — 779 queries over 35 historical tables — sourced from Kaggle healthcare, finance, and daily consulting datasets, publicly released by authors

Baselines vs proposed

- GPT-5.2 text-based Single-Point Prediction accuracy: 0.55 vs agentic: 0.60

- Gemini 3 Flash text-based Single-Point Prediction accuracy: 0.65 vs agentic: 0.66

- Qwen3-Instruct text-based Single-Point Prediction accuracy: 0.57 vs agentic: 0.43 (performance drop)

- DeepSeek-V3.2-Instruct Ranking task NMAE: 0.26 (best among general LLMs), F1 not reported

- Tabular specialists TableLLM-Llama3.1-8B Single-Point Regression accuracy: 0.38 text-based, 0.10 agentic, showing poor performance

- Decision Making accuracy for top model Gemini 3 Flash: 0.42 text-based, 0.46 agentic

- Treatment Effect Decision accuracy for GPT-5.2: 0.57 text-based, 0.55 agentic; trend accuracy improves from 0.51 to 0.65 with agentic code execution

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2604.28076.

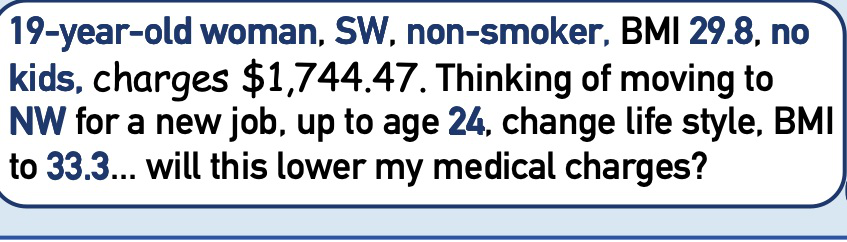

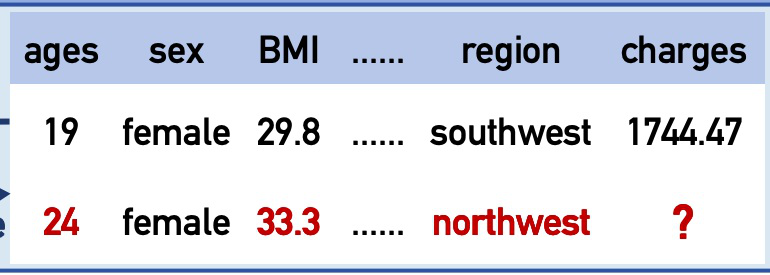

Fig 2: Overview of TOPBENCH. TOPBENCH requires inferring unobserved outcomes from historical data across four tasks:

Fig 1: Comparison between Traditional TQA and Implicit

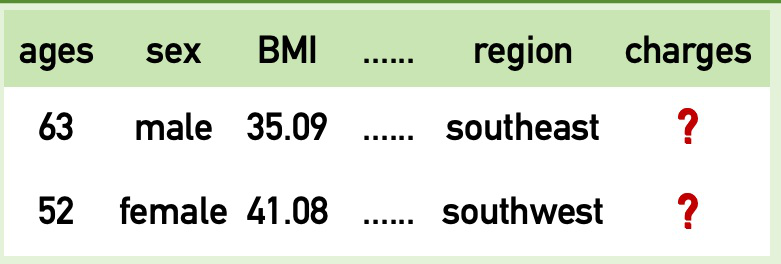

Fig 3 (page 2).

Fig 4 (page 2).

Fig 5 (page 2).

Fig 6 (page 2).

Fig 7 (page 2).

Fig 6: The Multi-Stage Data Synthesis Pipeline. The process begins with Foundation Curation, where logic-driven sampling selects

Limitations

- The benchmark sample size (779 queries) is still modest compared to some large-scale TQA datasets, limiting generalizability.

- Evaluations focus on existing generalist and specialist LLMs without proposing new architectures or training tweaks to improve implicit prediction.

- Agentic mode improvements are model-dependent and inconsistent, sometimes causing performance drops through misaligned code generation.

- Context truncation for text-based reasoning may artificially handicap models on large tables, not fully reflecting real-world context management strategies.

- Treatment Effect Analysis and Decision Making results remain near random baseline, exposing gaps but without extensive adversarial or out-of-distribution robustness testing.

- Tabular specialist models evaluated may not be state-of-the-art, and lack of closed-loop tuning or hybrid retrieval plus generative methods limit potential performance gains.

Open questions / follow-ons

- How can future model architectures better disentangle intent recognition from predictive modeling to reduce hallucinated retrieval in implicit predictive TQA?

- What specialized training regimes or multi-task objectives effectively improve LLM performance on latent intent disambiguation and rigorous predictive reasoning over large tabular data?

- Can hybrid retrieval-generation or multi-modal approaches incorporating tabular and textual evidence improve the robustness and accuracy in decision making and treatment effect analysis tasks?

- How can agentic frameworks be optimized to reduce code execution errors and unstable reasoning loops while scaling to massive industrial tabular datasets?

Why it matters for bot defense

Bot-defense and CAPTCHA practitioners often face scenarios where user intent is implicit or ambiguous, and systems must infer hidden goals or behaviors rather than simply match explicit query strings. TOPBENCH highlights analogous challenges in tabular question answering where models must recognize latent predictive intent behind queries and perform sophisticated reasoning beyond fact retrieval. This is directly relevant for CAPTCHA-related bot detection systems aiming to identify automated actors performing predictive, adversarial, or pattern-extrapolating actions on structured data. The benchmark and evaluation methodologies provide useful templates for assessing how well language models or integrated AI agents can differentiate genuine implicit intents requiring reasoning versus straightforward data lookups, a critical distinction for discerning advanced bot behavior. Moreover, the agentic evaluation framework demonstrates ways to leverage iterative code execution safely in sandboxed environments for large-scale tabular inference—parallels that could inform CAPTCHA systems deploying layered detection logic incorporating logical reasoning and predictive modeling. Overall, TOPBENCH underlines the importance of developing and testing robust latent intent recognition and predictive capabilities that may underpin advanced bot challenges and defense strategies.

Cite

@article{arxiv2604_28076,

title={ TopBench: A Benchmark for Implicit Prediction and Reasoning over Tabular Question Answering },

author={ An-Yang Ji and Jun-Peng Jiang and De-Chuan Zhan and Han-Jia Ye },

journal={arXiv preprint arXiv:2604.28076},

year={ 2026 },

url={https://arxiv.org/abs/2604.28076}

}