RopeDreamer: A Kinematic Recurrent State Space Model for Dynamics of Flexible Deformable Linear Objects

Source: arXiv:2604.28161 · Published 2026-04-30 · By Tim Missal, Lucas Domingues, Berk Guler, Simon Manschitz, Jan Peters, Paula Dornhofer Paro Costa

TL;DR

RopeDreamer tackles long-horizon dynamics prediction for deformable linear objects (DLOs) such as ropes and cables, where standard learned predictors often drift, over-stretch links, or lose track of self-intersections. The key problem is not just forecasting positions one step ahead, but preserving physically plausible geometry and topology over many steps when the object is manipulated by contact-rich pick-and-place actions.

The main idea is to combine a recurrent state space model (RSSM) with a quaternionic kinematic-chain representation. Instead of directly forecasting Cartesian positions for every segment, the model encodes the DLO as a base point plus relative rotations between segments, which enforces fixed link lengths by construction. A dual-decoder design separates reconstruction of the current observation from prediction of the next state, so the latent space is trained both to stay grounded in the observed DLO and to support multi-step latent rollouts. In simulation, the authors report substantially better long-horizon RMSE, better topological consistency under crossings, and faster inference than the strongest baselines they evaluated.

Key findings

- On 50-step open-loop rollouts, the best RopeDreamer variant reduces RMSE by 40.52% versus the best baseline; the paper states the best baseline reaches 64.94 mm at t=50 while RopeDreamer Large reaches 19.05 mm.

- RopeDreamer Large has a single-step inference time of 0.53 ms versus 0.77 ms for the small GA-Net baseline, a 31.17% reduction in computation time per prediction step.

- In Fig. 4a, GA-Net baselines have lower short-term error initially, but their error grows to 64.94 mm by t=50 for GA-Net (S), whereas RopeDreamer’s error growth is described as stable, increasing by only 19.05 mm by t=50 for the best model.

- The topology metric in Fig. 6 shows RopeDreamer maintaining mean Gauss-code match rates between 65% and 38% across the 50-step horizon, while all baselines fall below 10% by step 30.

- The GA-Net + quaternion ablation (GA-Net XS / Quat) drops to 0% topology accuracy by step 15, indicating that quaternion constraints alone do not solve long-horizon topology drift.

- The dataset contains 10,000 simulated trajectories with 100 steps each, totaling 1,000,000 transitions, split 80/10/10 into train/validation/test.

- Training used up to 200 epochs with learning rate 1e-4, batch size 32, and beta=1 for the KL term; the authors say most configurations converged near epoch 50.

Threat model

The relevant adversary is not a malicious actor but the combination of long-horizon compounding error, self-intersections, and physically invalid state predictions in flexible-object dynamics. The model is assumed to receive ground-truth segment states in simulation, or tracked states in a real deployment, plus a grasp index and planar displacement action; it does not need to infer topology from raw pixels. What it cannot do is rely on external corrections during the open-loop horizon beyond the initial warmup, because the evaluation intentionally removes ground-truth state updates after the first few steps.

Methodology — deep read

The threat model is not a cyber-adversarial one; the task is supervised dynamics prediction for robot manipulation. The implicit adversary is a hard physical setting: high-dimensional flexible DLO motion, long-range temporal dependencies, and topological events like self-intersections that break local predictors. The paper’s assumptions are that the DLO is discretized into L equidistant segments, manipulated on a plane by a pick-and-place end-effector, and that the model has access to segment-state observations and the action description (grasp index plus planar displacement). The authors explicitly assume the DLO has already been picked up at planning time, which lets them represent actions as incremental XY displacements and chain them into larger motions by repeating smaller steps.

The data is entirely simulated. They generate 10,000 trajectories in MuJoCo 3.3.7, each 100 steps long, giving 1,000,000 state-action transitions. The simulated DLO is a 70-capsule chain of length 10 mm and thickness 10 mm, with ball joints, capsule friction 0.8, joint bending stiffness 0.005, ground friction 1.0, and damping 0.05. Each trajectory begins by grasping a random segment and lifting it 50 mm along z, then applying 100 random actions; each action is a 50 mm translation in the XY plane with a heading sampled uniformly from [0, 2π), executed via a mocap object welded to the target segment, and the rope is returned to the surface after the final action. The data split is 80% train, 10% validation, 10% test. There is no indication of multiple random seeds, cross-validation, or any real-world dataset in the reported experiments. The paper says code, models, and dataset will be released in a future revision, so reproducibility is not yet fully available.

Architecturally, the paper starts from an RSSM world-model backbone and adapts it to DLO dynamics. Inputs are encoded through an action encoder and a state encoder. The action encoder is link-aware: it embeds the grasped-segment index and separately encodes the displacement vector, which is meant to let the model learn different dynamics for different rope positions. The RSSM then updates a deterministic hidden state h_t with a GRU and a stochastic latent z_t. During training, a posterior q(z_t | h_t, e_t) is computed from the current encoded observation, while a prior p(z_t | h_t) predicts the latent state without seeing the future. The key novelty is the state representation: the first segment is kept as a Cartesian point p_0, and all other links are represented by unit quaternions q_1...q_{L-1} encoding relative rotations in a kinematic chain. The authors claim this preserves link-length constancy by construction and makes the model less prone to non-physical stretching than Cartesian segment regression. Forward kinematics reconstructs all segment positions from the base point and the quaternion chain.

The second main design choice is the dual-decoder training setup. One decoder reconstructs the current observation from the posterior latent state, acting as a grounding mechanism; the other decoder predicts the next state from the prior latent state after a latent “dream” step. The loss is a composite objective L_total = L_recon + L_pred + β L_KL, with β set to 1 in experiments. L_recon is an MSE-style reconstruction loss on the current state, L_pred is an MSE-style loss on the next state, and L_KL regularizes posterior vs prior latent distributions. This differs from a plain ELBO/RSSM setup because the prediction target is made explicit and separated from reconstruction, which the authors argue prevents reconstruction from dominating the long-horizon transition learning. A concrete forward pass is: observe current DLO state and action, encode them, update the RSSM posterior with the observation to reconstruct the current rope, then roll the prior forward one latent step using the future action and decode the predicted next DLO configuration.

Training and evaluation are fairly standard but important for interpreting the results. The authors train all RopeDreamer variants and baselines for up to 200 epochs using Adam-style optimization is not explicitly named in the excerpt, but the learning rate is 1e-4, batch size is 32, beta is 1, and early stopping is triggered after 10 epochs without validation improvement. They select the best checkpoint on validation performance. Three RopeDreamer scales are tested: Small (7.85M params), Medium (15.83M), and Large (47.86M). Baselines are GA-Net variants and IN-BiLSTM variants; GA-Net uses a transformer encoder plus attention network, and IN-BiLSTM uses an interaction network plus LSTM. The main open-loop evaluation uses a 5-step warmup where the model receives ground-truth state-action pairs, then it must roll out 50 future steps using only future actions. They run 500 independent rollouts and report average RMSE over the horizon. Topology is evaluated separately using Gauss codes: predicted and ground-truth crossing sequences are compared stepwise, and exact matches count as topological success. The paper also reports single-step inference latency on an Nvidia 4060Ti GPU; the authors note that RopeDreamer’s compact latent rollout avoids graph construction overhead. One additional ablation replaces GA-Net’s Cartesian state with the quaternionic representation, which improves short-term consistency but not long-horizon stability, supporting the claim that the RSSM contributes more than the coordinate parameterization alone.

Technical innovations

- A quaternionic kinematic-chain state representation for DLOs that replaces per-segment Cartesian prediction with a base point plus relative rotations, enforcing fixed link lengths by construction.

- An RSSM-based latent dynamics model adapted to high-segment deformable objects, using deterministic and stochastic latent components to support long open-loop rollouts.

- A dual-decoder objective that explicitly separates current-state reconstruction from next-state prediction, reducing the chance that reconstruction dominates predictive dynamics learning.

- A link-aware action encoder that embeds the grasped segment index separately from the planar displacement, so the model can learn segment-dependent manipulation effects.

Datasets

- Simulated DLO pick-and-place trajectories — 10,000 trajectories / 1,000,000 transitions — synthetic MuJoCo 3.3.7 simulation (not public at time of writing)

Baselines vs proposed

- GA-Net (best baseline): 50-step open-loop RMSE = 64.94 mm vs proposed = 19.05 mm (best RopeDreamer model)

- GA-Net (S) inference time: 0.77 ms vs proposed Large = 0.53 ms per prediction step

- GA-Net XS / Quat topology accuracy: 0% by step 15 vs proposed maintains 65% to 38% mean success across the horizon

- Baseline models in Fig. 4: short-horizon RMSE lower initially than RopeDreamer, but all show steep growth; RopeDreamer shows stable error growth over 50 steps

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2604.28161.

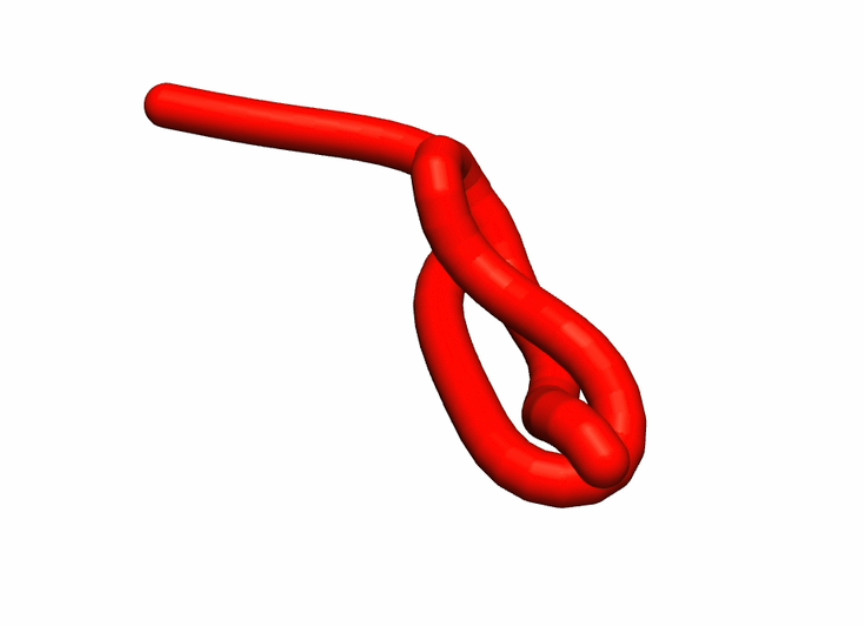

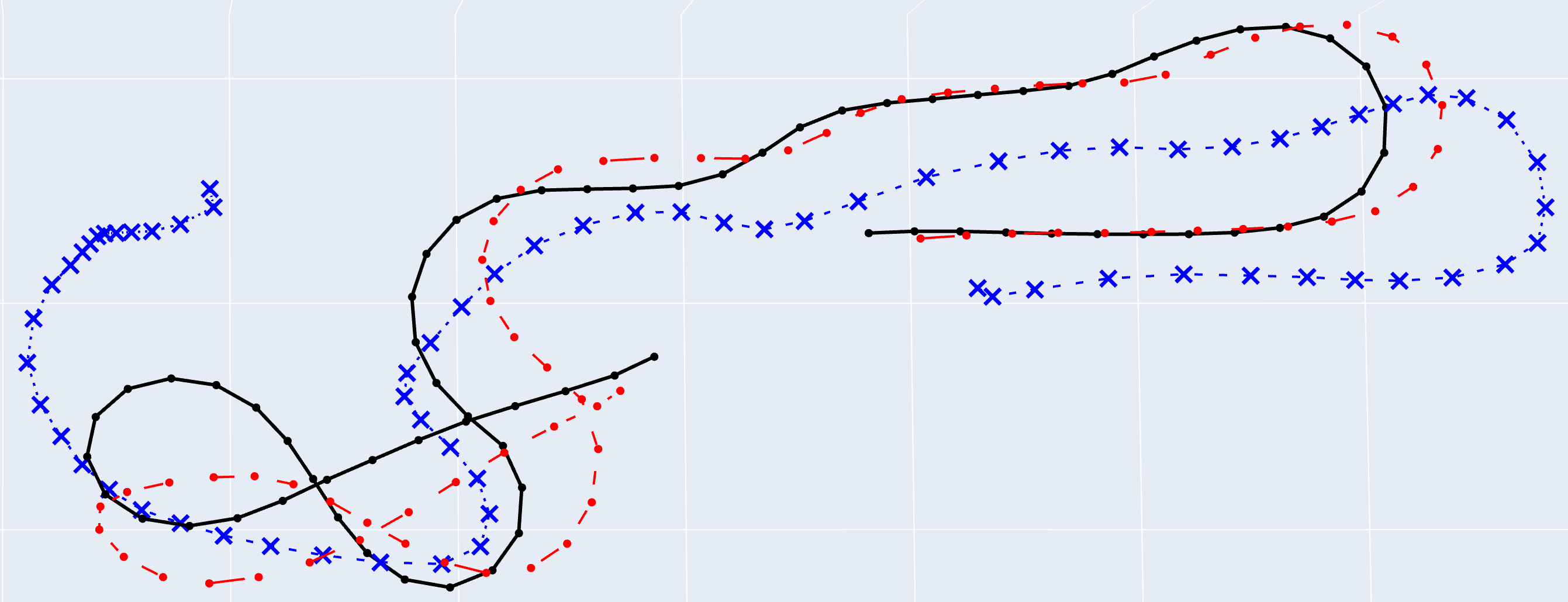

Fig 1: After observing an initial state s0 from simulation, RopeDreamer predicts the following evolution of the Deformable Linear Object given a

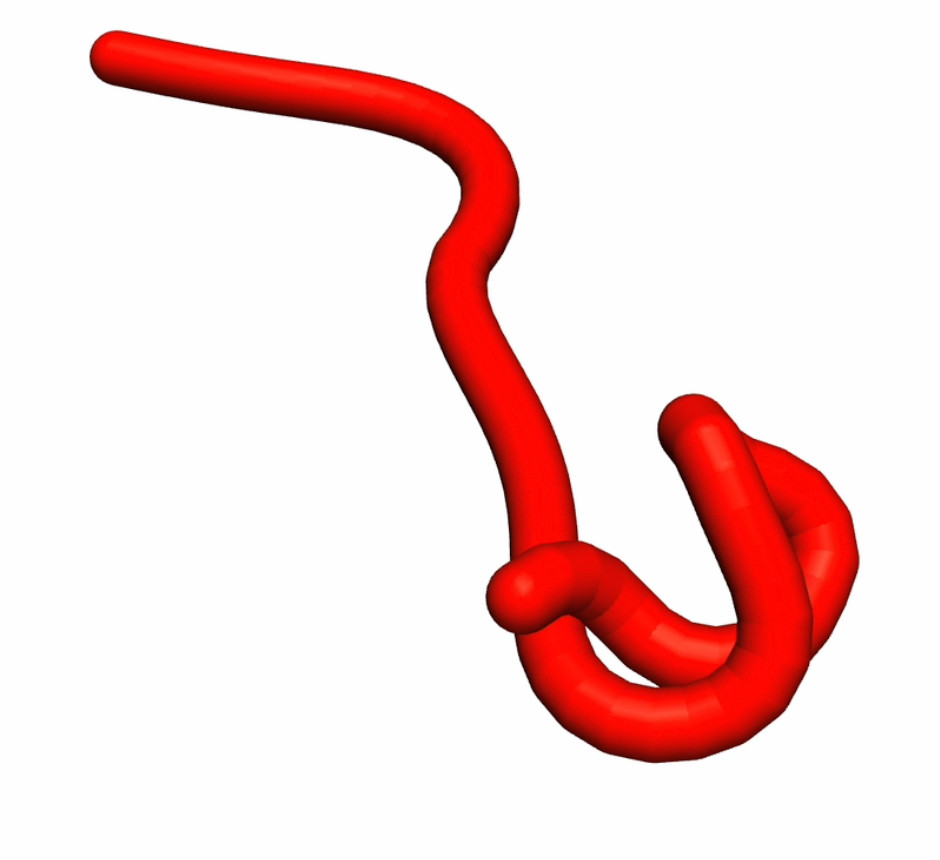

Fig 2: Problem formulation of DLO shape prediction task. a) The DLO

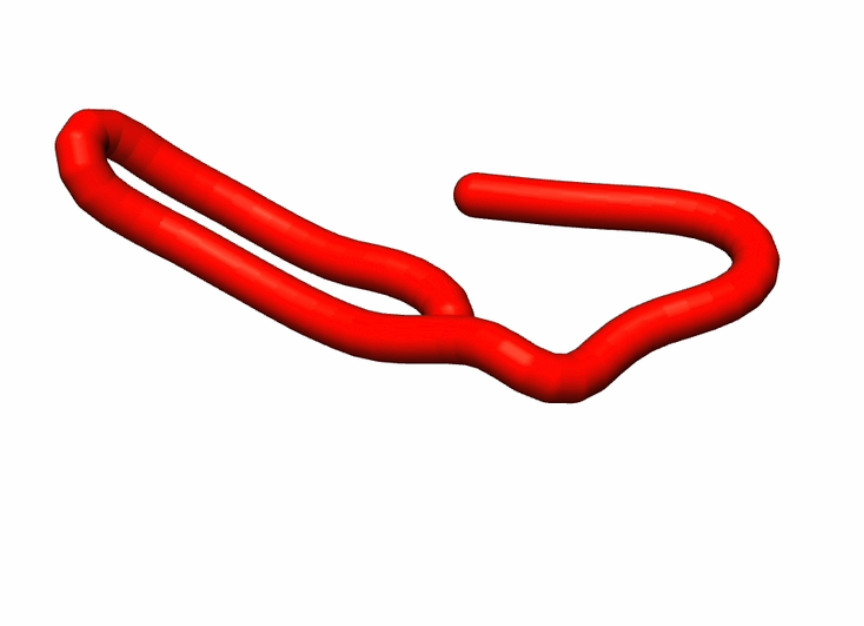

Fig 3 (page 1).

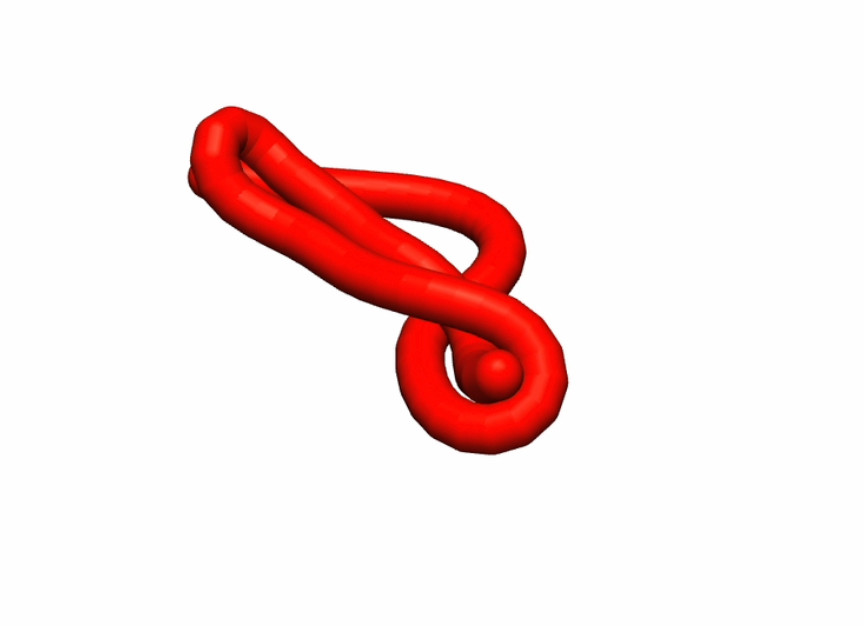

Fig 4 (page 1).

Fig 3: Architecture of the World Model for DLO manipulation. The current DLO state and manipulation actions are first fed into their respective encoders

Fig 5: Comparison of mean single-step inference time over different model

Limitations

- The entire evaluation is in simulation; there is no real-robot or sim-to-real result, so the method’s robustness to sensor noise, unmodeled friction, and tracking errors remains untested.

- The dataset is a single synthetic distribution of 70-capsule ropes with fixed physical parameters and 50 mm actions; generalization to different stiffness, thickness, lengths, or grasping policies is not demonstrated.

- The paper reports 500 rollouts and a validation-selected checkpoint, but does not mention multiple random seeds or statistical significance tests, so the uncertainty in the reported deltas is unclear.

- The quaternionic representation improves physical plausibility, but the paper acknowledges an initial reconstruction penalty; this may matter for tasks needing very accurate short-horizon state estimates.

- Code, models, and dataset are not yet released in the version provided, so exact reproduction of preprocessing and training details is not currently possible.

- The topology metric is based on exact Gauss-code matches; near-miss topological errors or task-level manipulation success are not separately analyzed.

Open questions / follow-ons

- How well does the quaternionic RSSM transfer to real rope/cable data with tracking noise, occlusion, and inaccurate grasp localization?

- Can the latent model be extended to heterogeneous DLOs with varying stiffness, thickness, or branching-like artifacts without retraining from scratch?

- Would a hierarchical latent structure reduce the initial reconstruction penalty while preserving long-horizon stability?

- How should topology metrics be combined with task-success metrics for downstream manipulation planning, rather than reporting them separately?

Why it matters for bot defense

For bot-defense practitioners, the relevant lesson is architectural rather than domain-specific: if the target behavior has hard structural constraints, encoding those constraints into the state representation can materially improve long-horizon stability. In CAPTCHA or bot-detection settings, that suggests treating user/session trajectories as constrained sequences rather than flat feature vectors when there are known invariants, because unconstrained predictors may fit short-term patterns but drift badly under distribution shift or long rollouts.

The paper also illustrates a practical evaluation pattern worth borrowing: measure not only immediate prediction error but also compounding error over many open-loop steps, and include a task-specific structural metric, not just a generic loss. For bot defense, that means testing held-out attacker strategies, multi-step rollout drift, and invariants such as interaction consistency or challenge progression validity. The main caution is that the gains here come from strong simulation assumptions and explicit state access; in a CAPTCHA system, those assumptions may not hold unless the observables and constraints are engineered carefully.

Cite

@article{arxiv2604_28161,

title={ RopeDreamer: A Kinematic Recurrent State Space Model for Dynamics of Flexible Deformable Linear Objects },

author={ Tim Missal and Lucas Domingues and Berk Guler and Simon Manschitz and Jan Peters and Paula Dornhofer Paro Costa },

journal={arXiv preprint arXiv:2604.28161},

year={ 2026 },

url={https://arxiv.org/abs/2604.28161}

}