Neural Aided Kalman Filtering for UAV State Estimation in Degraded Sensing Environments

Source: arXiv:2604.28107 · Published 2026-04-30 · By Akhil Gupta, Erhan Guven

TL;DR

This paper addresses accurate state estimation for nonlinear, agile unmanned aerial vehicles (UAVs) under degraded sensing conditions such as high sensor noise and sparse measurements. Classical nonlinear Kalman filters, namely the Extended Kalman Filter (EKF) and the Unscented Kalman Filter (UKF), degrade significantly under these conditions because they rely on approximations and cannot adapt process noise dynamically. The authors propose a hybrid Bayesian Neural Kalman Filter (BNKF) that couples a variational Bayesian Neural Network (BNN) predictive model with a Kalman filter correction step. The BNN models the nonlinear UAV dynamics and outputs both state estimates and their uncertainty via Monte Carlo weight sampling; that uncertainty is incorporated into the Kalman covariance update. Evaluation on synthetic nonlinear UAV flight trajectories with varying radar noise and sampling rates shows that BNKF substantially improves accuracy, uncertainty quantification, and truth containment compared to EKF and UKF. An ensemble variant, BNKFe, further reduces uncertainty at a slight accuracy cost. Runtime benchmarking shows BNKF is computationally feasible for real-time deployment. The work demonstrates the benefits of combining probabilistic deep learning with classical filters for robust UAV tracking in challenging sensing environments.

Key findings

- BNKF reduces Euclidean distance state estimation error from 35.16m (EKF) and 66.78m (UKF) to 8.63m under high noise conditions (Table II).

- BNKF maintains lower and better calibrated Mahalanobis distance (MD) values (~1.72 to 5.68) compared to EKF (up to 9.35) and UKF (up to 25.17) across all noise regimes.

- The state estimate covariance determinant under BNKF is significantly smaller (e.g., 13.66 m^3 at high noise) than EKF (15740 m^3) and UKF (7262 m^3), indicating improved uncertainty quantification.

- The BNKFe ensemble variant achieves lower state uncertainty determinants (e.g., 8.11 m^3 at high noise) at a slight accuracy cost relative to BNKF (ED of 9.24m vs 8.63m at high noise).

- The standalone BNN without Kalman correction performs substantially worse than BNKF and BNKFe, indicating that the correction step improves both accuracy and uncertainty estimates.

- Inference runtime for BNKF is lower than EKF and UKF for single trajectory CPU predictions (Fig 7), supporting real-time feasibility.

- Performance improvements are most pronounced as measurement noise and data sparsity increase, where classical methods diverge quickly.

- Five-fold cross-validation on ~15,000 synthetic UAV trajectories across noise and sampling conditions confirms consistent performance gains.

Threat model

The threat model assumes an environment with degraded sensing due to high noise levels and sparse, intermittent radar observations simulating real-world adversarial interference, sensor defects, or hostile jamming. The adversary’s capabilities do not explicitly include control input knowledge or altering the model or training data; rather, the challenge is to track agile UAVs with unknown control policies under noisy, incomplete measurements.

Methodology — deep read

Threat Model & Assumptions: The challenge is environmental rather than a modeled attacker: real-time UAV state estimation degrades because radar measurements are noisy and sparse, and because unknown UAV control inputs produce nonlinear, agile trajectories. No explicit adversarial attack (e.g., jamming) is modeled; the sensing degradation stands in for adversarial or real-world noise. The adversary cannot alter the system model or training data.

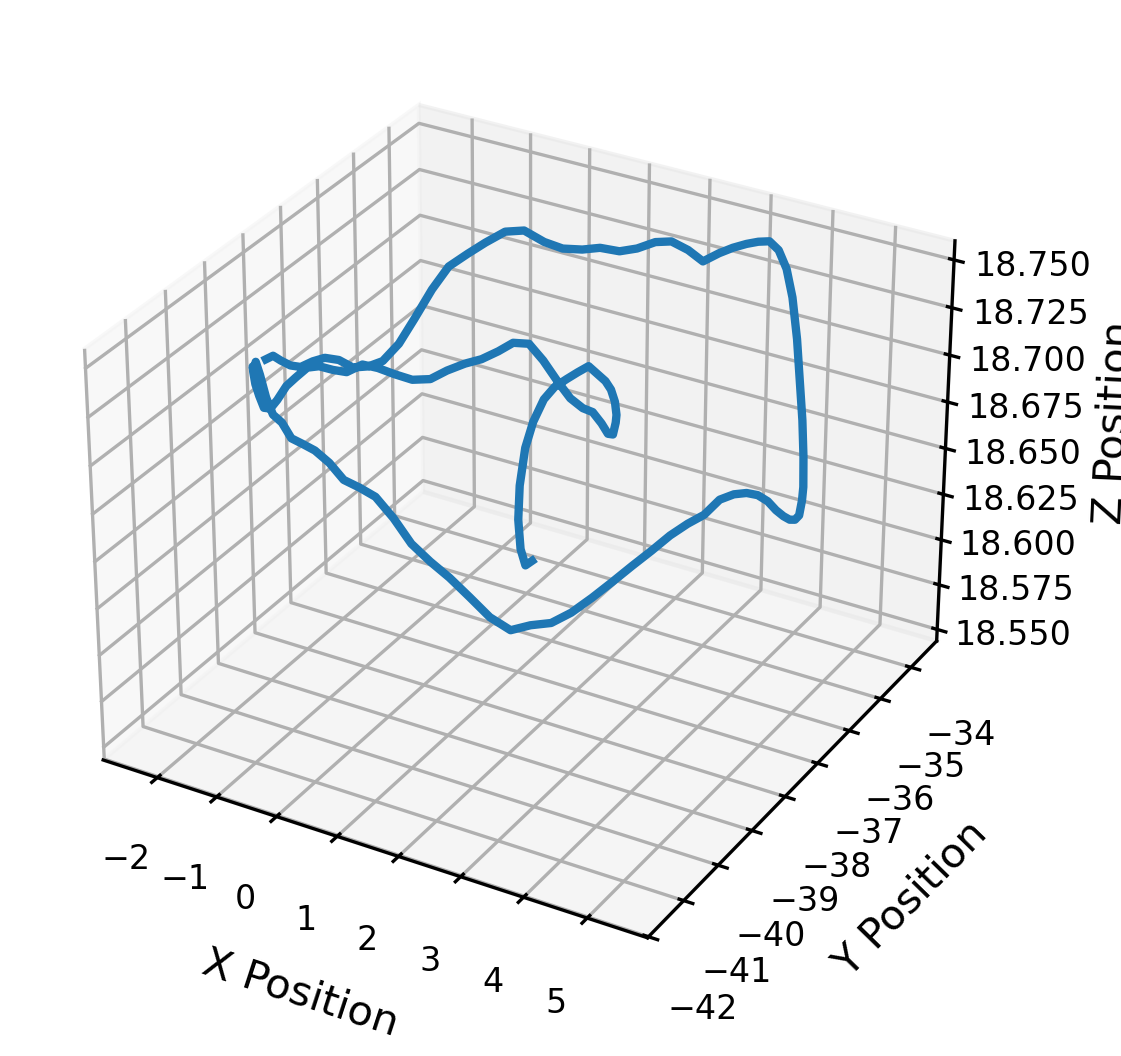

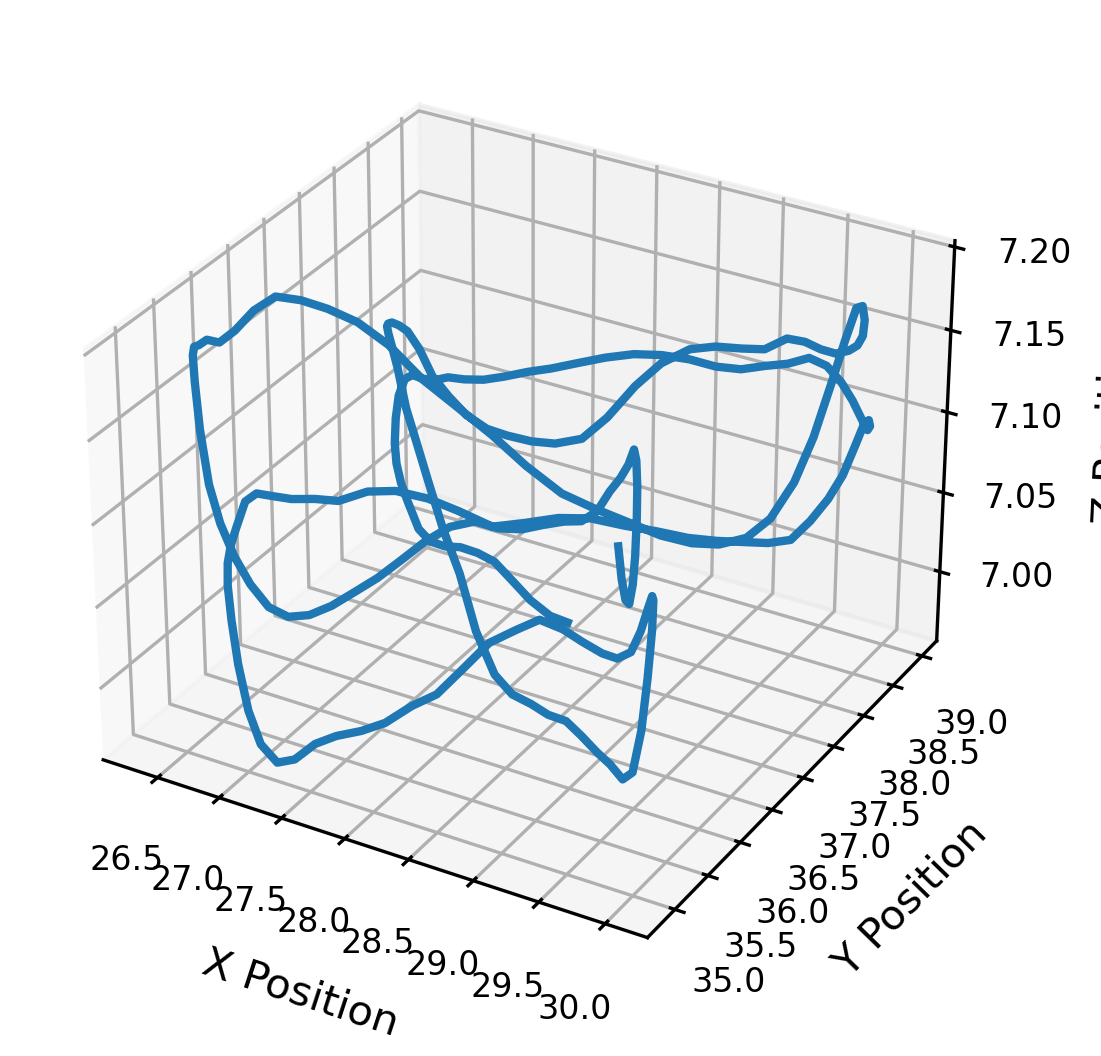

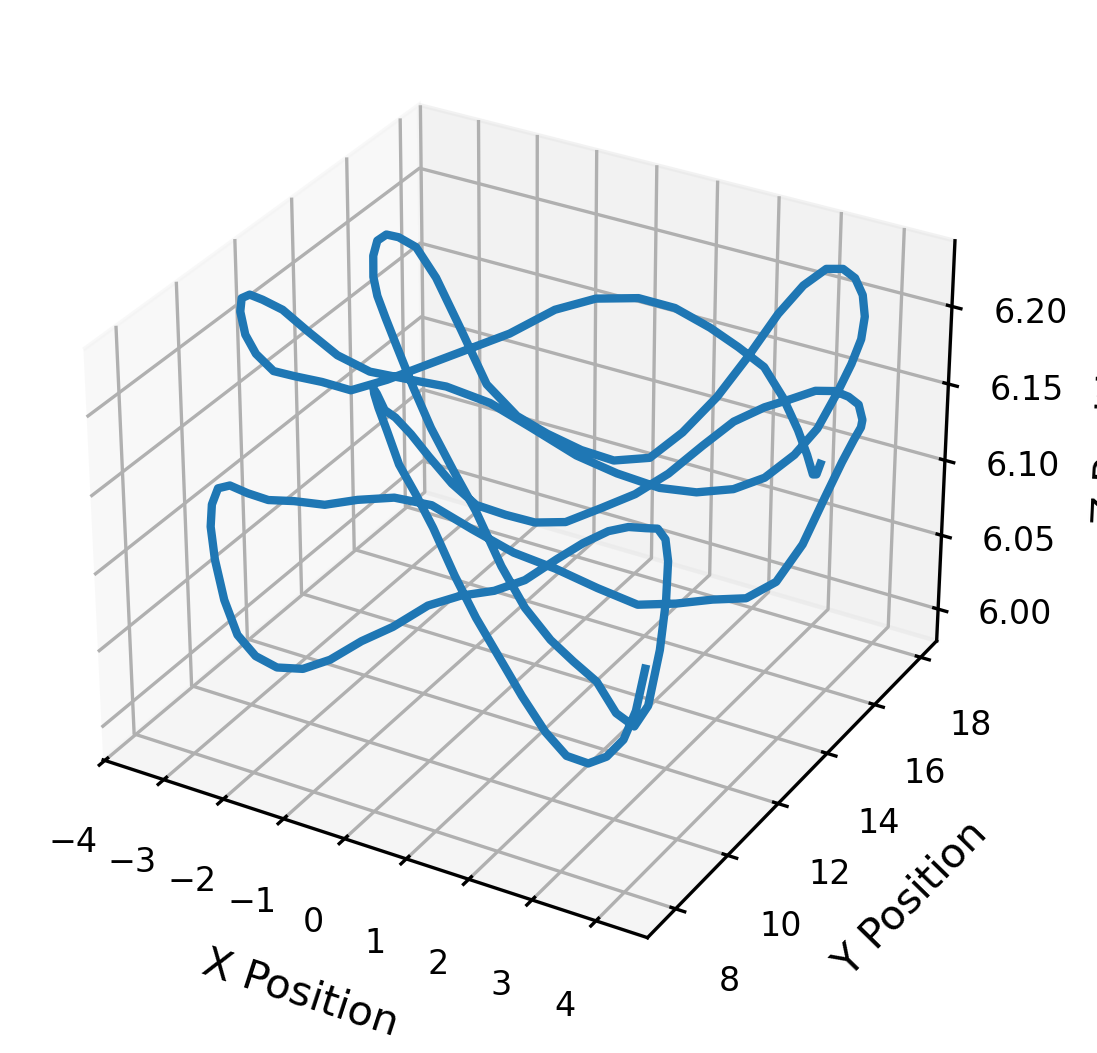

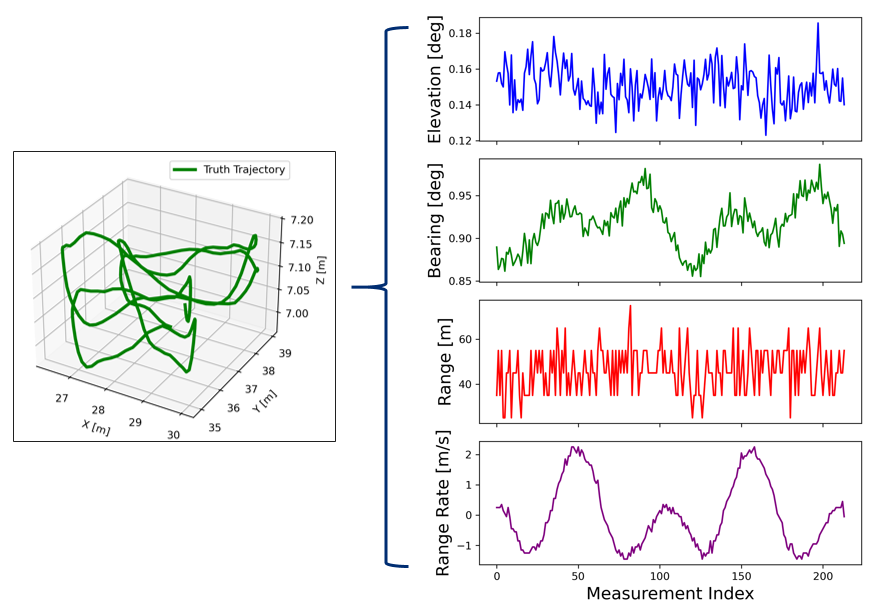

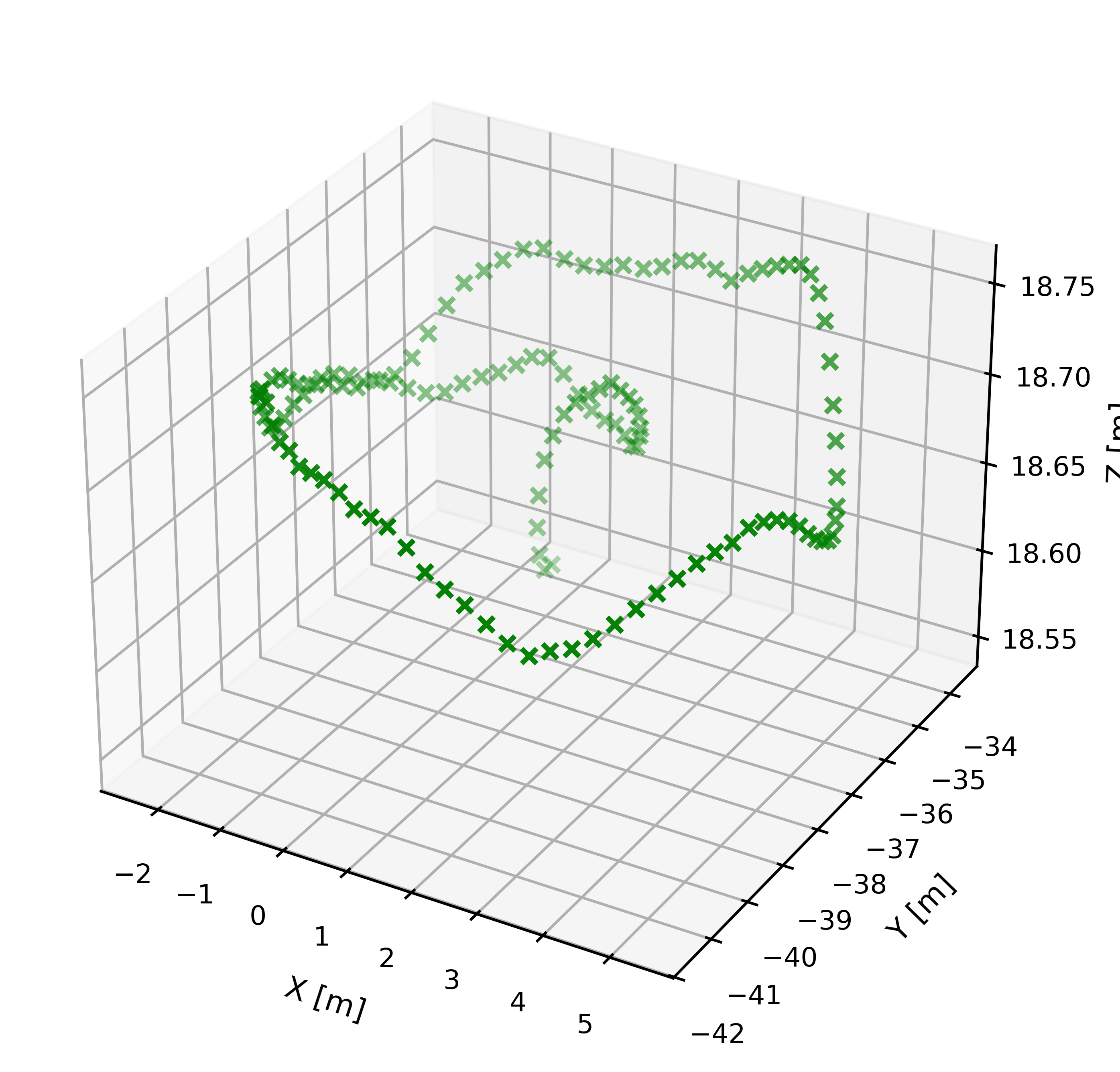

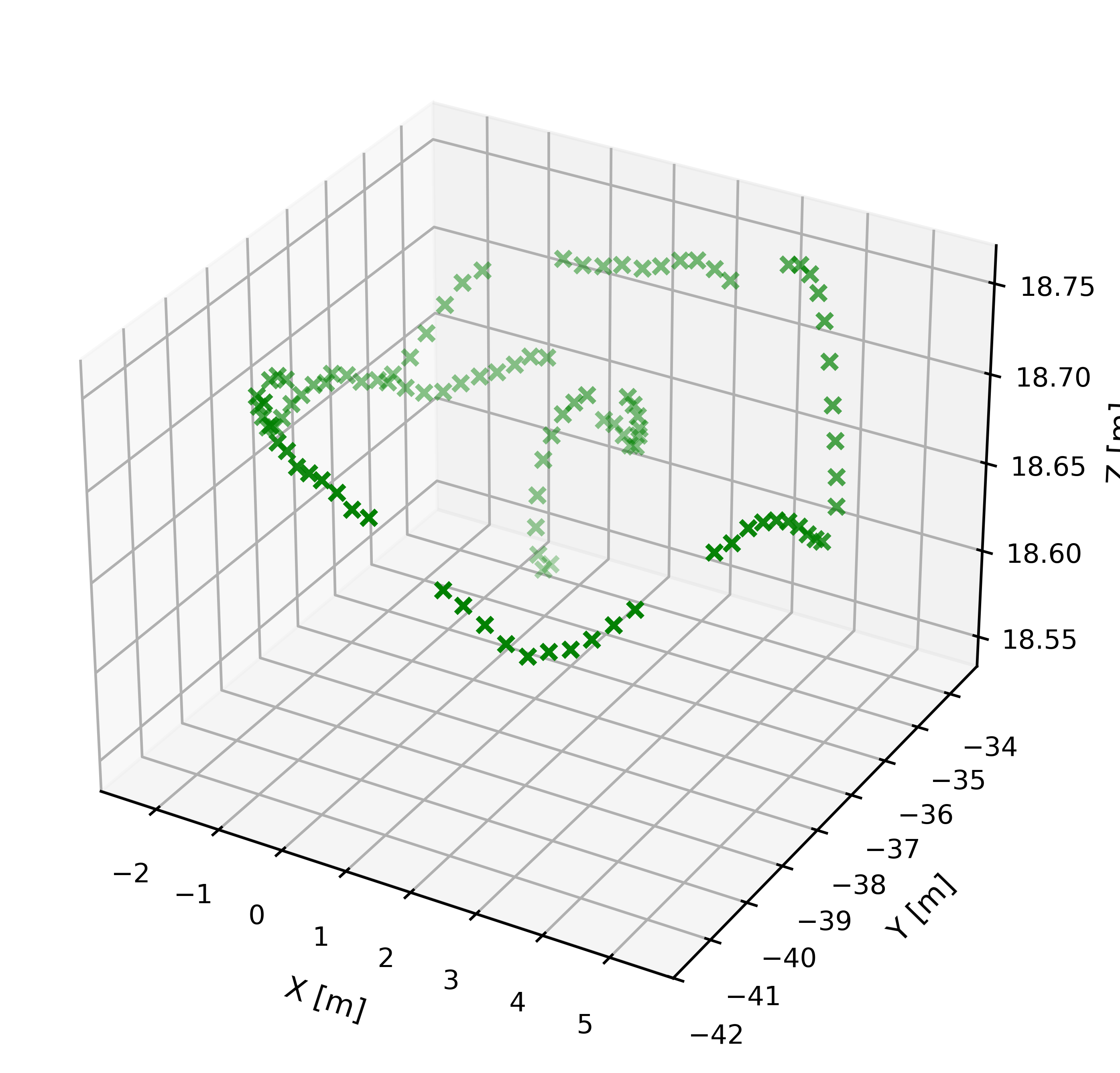

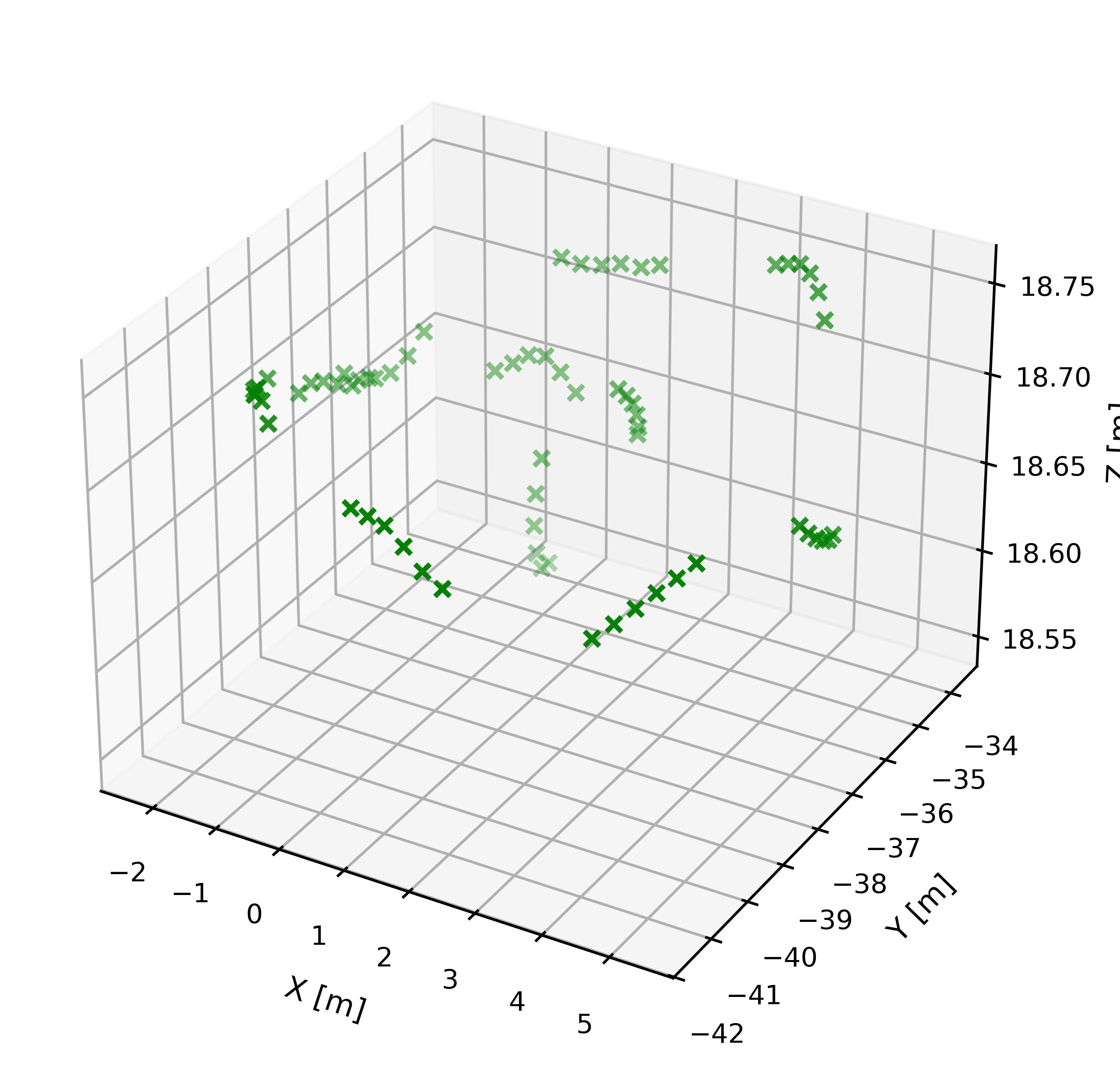

Data: The data is drawn from the publicly available Synthetic-UAV-Flight-Trajectories dataset on Hugging Face, generated with a high-fidelity Gazebo simulator that models nonlinear UAV dynamics and environment effects. It includes over 5,000 randomized 3D UAV trajectories covering 20 hours of simulated flight time, with true position and velocity states. Radar sensor measurements (range, range-rate, elevation, azimuth) are generated synthetically from the true states using the Stone Soup Python library, with Gaussian noise at three standard-deviation levels (low, medium, high). Additional sampling rate conditions (1.0, 0.75, 0.50, the fraction of measurements retained) simulate missing or intermittent measurements. Dataset size per condition is about 15,000 trajectories.
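The measurement generation described above can be illustrated with a minimal standard-library sketch. It does not use Stone Soup and is not the authors' pipeline. The function names, the sensor placement at the origin, the noise values, and the random (rather than regular) dropping of measurements are all assumptions made for illustration.

```python
import math
import random

def radar_measurement(state, sigmas, rng):
    """Map a Cartesian state (x, y, z, vx, vy, vz) to a noisy radar measurement.

    Returns (range, range_rate, elevation, azimuth) with independent Gaussian
    noise. The sensor is assumed to sit at the origin (assumption of this sketch).
    """
    x, y, z, vx, vy, vz = state
    r = math.sqrt(x * x + y * y + z * z)          # slant range, must be > 0
    range_rate = (x * vx + y * vy + z * vz) / r   # radial velocity component
    elevation = math.asin(z / r)
    azimuth = math.atan2(y, x)
    clean = (r, range_rate, elevation, azimuth)
    return tuple(v + rng.gauss(0.0, s) for v, s in zip(clean, sigmas))

def downsample(measurements, keep_fraction, rng):
    """Keep each measurement with probability keep_fraction; dropped ones become None."""
    return [m if rng.random() < keep_fraction else None for m in measurements]

if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical straight-line truth track sampled once per second.
    truth = [(100.0 + 10.0 * t, 50.0, 30.0, 10.0, 0.0, 0.0) for t in range(10)]
    sigmas = (100.0, 1.0, 0.01, 0.01)  # illustrative values, not from the paper
    noisy = [radar_measurement(s, sigmas, rng) for s in truth]
    sparse = downsample(noisy, 0.75, rng)
    print(sum(m is not None for m in sparse), "of", len(sparse), "measurements kept")
```

Representing a dropped measurement as `None` lets a downstream filter skip the correction step at that time index and rely on prediction alone.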

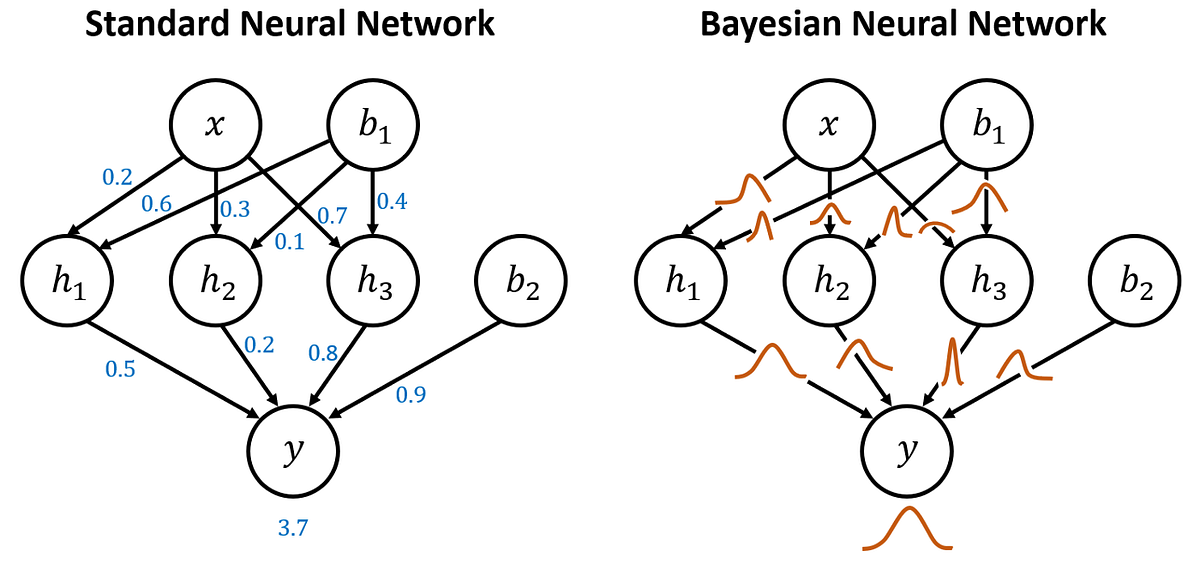

Architecture/Algorithm: The BNKF couples a variational Bayesian Neural Network (BNN) as the nonlinear state predictor with a classical Kalman correction step to fuse new noisy measurements. The BNN outputs the predicted state mean vector and covariance matrix using Monte Carlo dropout-like sampling over learned weight distributions. This predictive uncertainty is explicitly incorporated into Kalman covariance propagation, unlike prior neural-Kalman approaches. The BNN uses five fully connected Bayesian linear layers with 64 neurons each. The loss optimizes mean squared error plus a KL divergence on learned weights for regularization and uncertainty calibration. The Kalman filter uses the standard extended Kalman filter (EKF) correction equations with the BNN prediction plugged into the prediction step.
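The predict-then-correct structure described above might be sketched as follows in NumPy. This is a simplified reading, not the authors' code. `toy_sampler` is a hypothetical stand-in for one stochastic forward pass of the trained BNN. The Monte Carlo sample covariance is used directly as the predicted covariance, which is only one possible way to incorporate it. The measurement model is a linear position observation, whereas an EKF correction on radar measurements would use the Jacobian of the nonlinear measurement function.

```python
import numpy as np

def mc_predict(sample_fn, x_prev, n_samples, rng):
    """Approximate the predictive mean and covariance from Monte Carlo forward passes."""
    draws = np.stack([sample_fn(x_prev, rng) for _ in range(n_samples)])
    return draws.mean(axis=0), np.cov(draws, rowvar=False)

def kalman_correct(x_pred, P_pred, z, H, R):
    """Standard Kalman correction with a linear(ized) measurement matrix H."""
    S = H @ P_pred @ H.T + R                 # innovation covariance
    K = P_pred @ H.T @ np.linalg.inv(S)      # Kalman gain
    x_post = x_pred + K @ (z - H @ x_pred)
    P_post = (np.eye(x_pred.size) - K @ H) @ P_pred
    return x_post, P_post

def toy_sampler(x_prev, rng, dt=1.0):
    """Hypothetical stand-in for one stochastic BNN forward pass.

    Perturbs a constant-velocity transition matrix to mimic sampled weights.
    State ordering assumed here: [x, y, z, vx, vy, vz].
    """
    F = np.eye(6)
    F[:3, 3:] = dt * np.eye(3)
    return (F + 0.01 * rng.standard_normal(F.shape)) @ x_prev

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x_prev = np.array([0.0, 0.0, 100.0, 10.0, 5.0, 0.0])
    x_pred, P_pred = mc_predict(toy_sampler, x_prev, 100, rng)
    H = np.hstack([np.eye(3), np.zeros((3, 3))])  # observe position only (assumption)
    R = 25.0 * np.eye(3)                          # illustrative measurement noise
    z = np.array([11.0, 4.0, 101.0])
    x_post, P_post = kalman_correct(x_pred, P_pred, z, H, R)
    print(x_post)
```

When a measurement is missing at a time step, the correction call can be skipped and the Monte Carlo prediction carried forward unchanged.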

Training regime: The BNNs are trained with the Adam optimizer using batch training; the number of epochs is not specified. At inference, 100 Monte Carlo samples are drawn to approximate uncertainty. Five-fold cross-validation is performed for robustness. The ensemble variant BNKFe trains three separate BNNs, one each for the x, y, and z coordinates, and combines their outputs into full state estimates.
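The loss described above (mean squared error plus a KL term on the learned weights) can be written out for the common case of a diagonal Gaussian weight posterior and a zero-mean Gaussian prior. The summary does not state the prior, the parameterization, or the KL weighting used in the paper, so `prior_sigma`, `kl_weight`, and both function names below are assumptions.

```python
import numpy as np

def gaussian_kl(mu, sigma, prior_sigma=1.0):
    """KL( N(mu, sigma^2) || N(0, prior_sigma^2) ), summed over all weights."""
    return float(np.sum(
        np.log(prior_sigma / sigma)
        + (sigma ** 2 + mu ** 2) / (2.0 * prior_sigma ** 2)
        - 0.5
    ))

def bnn_loss(pred, target, mu, sigma, kl_weight=1e-3):
    """Mean squared error plus a weighted KL regularizer (kl_weight is hypothetical)."""
    mse = float(np.mean((pred - target) ** 2))
    return mse + kl_weight * gaussian_kl(mu, sigma)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    mu = 0.1 * rng.standard_normal(64)   # posterior means for one toy layer
    sigma = np.full(64, 0.5)             # posterior standard deviations
    pred = np.array([1.0, 2.0, 3.0])
    target = np.array([1.1, 1.9, 3.2])
    print(bnn_loss(pred, target, mu, sigma))
```

The KL term is zero when the posterior equals the prior and grows as the weight distributions move away from it, which is what gives the term its regularizing effect.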

Evaluation Protocol: Metrics include Euclidean distance error between estimated and true states, the determinant of the covariance matrix as uncertainty magnitude, and Mahalanobis distance for truth containment calibration. Baselines include EKF, UKF, and standalone BNN without Kalman correction. Experiments vary sensor noise (low, medium, high) and sampling rate (1.0, 0.75, 0.50) on synthetic test sets. Cross-validation reports mean and standard deviation across folds. The evaluation captures robustness under degraded sensing conditions.
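The three metrics named above have standard definitions, sketched here in NumPy. The function names are illustrative, and the paper may differ in which state components each metric uses and in how values are averaged over a trajectory.

```python
import numpy as np

def euclidean_error(est_pos, true_pos):
    """Euclidean distance between estimated and true position."""
    return float(np.linalg.norm(np.asarray(est_pos) - np.asarray(true_pos)))

def covariance_determinant(P):
    """Determinant of the state covariance, used as a scalar uncertainty magnitude."""
    return float(np.linalg.det(P))

def mahalanobis_distance(est, truth, P):
    """Distance from the estimate to the truth, scaled by the estimate covariance P."""
    d = np.asarray(truth) - np.asarray(est)
    return float(np.sqrt(d @ np.linalg.solve(P, d)))

if __name__ == "__main__":
    est = np.array([10.0, 20.0, 30.0])
    truth = np.array([12.0, 19.0, 33.0])
    P = np.diag([4.0, 4.0, 9.0])  # illustrative covariance
    print(euclidean_error(est, truth))
    print(covariance_determinant(P))
    print(mahalanobis_distance(est, truth, P))
```

If the errors are Gaussian and the reported covariance is correct, the squared Mahalanobis distance follows a chi-square distribution with as many degrees of freedom as the state dimension. This is why a large MD signals an overconfident filter and a very small MD signals an inflated covariance.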

Reproducibility: The authors provide source code publicly on GitHub. The dataset used is publicly available Synthetic-UAV-Flight-Trajectories from Hugging Face. Model weights are not explicitly frozen or released but can be retrained from code.

Concrete example: For one high-noise trajectory (100m range standard deviation) downsampled to 75% of its measurements, BNKF achieves an average Euclidean position error of about 9m, compared to over 30m for EKF and UKF. This illustrates its ability to track more accurately through noisy, sparse observations by using learned dynamics and uncertainty propagation.

Technical innovations

- Integration of Bayesian Neural Network predictive mean and epistemic uncertainty estimates directly into Kalman filter covariance propagation.

- Proposed hybrid Bayesian Neural Kalman Filter (BNKF) that couples offline-trained variational BNN with classical Kalman correction step for online UAV state estimation.

- Design of an ensemble BNKF variant (BNKFe) that decomposes Cartesian components into separate smaller BNNs to reduce complexity while improving uncertainty quantification.

- Systematic evaluation across varying sensor noise and sampling rates to demonstrate robust performance under degraded sensing compared to EKF and UKF baselines.
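The per-axis decomposition used by BNKFe, listed above, implies a step that reassembles three per-axis outputs into one full state estimate. A minimal sketch of one way to do that is shown below. It assumes each per-axis model returns a (position, velocity) mean and a 2x2 covariance, and that cross-axis covariances are set to zero. Neither detail is confirmed by the summary, and `combine_axes` is a hypothetical name.

```python
import numpy as np

def combine_axes(axis_means, axis_covs):
    """Stack per-axis means and place per-axis covariances on a block diagonal.

    Resulting state ordering (assumed for this sketch): [x, vx, y, vy, z, vz].
    Cross-axis covariance terms are left at zero.
    """
    mean = np.concatenate(axis_means)
    cov = np.zeros((mean.size, mean.size))
    start = 0
    for block in axis_covs:
        k = block.shape[0]
        cov[start:start + k, start:start + k] = block
        start += k
    return mean, cov

if __name__ == "__main__":
    means = [np.array([10.0, 1.0]), np.array([20.0, 0.5]), np.array([30.0, -0.2])]
    covs = [np.diag([4.0, 0.1]), np.diag([4.0, 0.1]), np.diag([9.0, 0.2])]
    mean, cov = combine_axes(means, covs)
    print(mean)
    print(np.linalg.det(cov))
```

With a block-diagonal covariance the determinant is the product of the per-axis determinants, so dropping cross-axis correlation changes the scalar uncertainty measure compared with a jointly estimated covariance.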

Datasets

- Synthetic-UAV-Flight-Trajectories: 5,000+ trajectories (~20 hours of simulated flight), publicly available on Hugging Face

Baselines vs proposed

- EKF: Euclidean Distance Error (ED) at high noise = 35.16m vs BNKF = 8.63m

- UKF: ED at high noise = 66.78m vs BNKF = 8.63m

- EKF: Mahalanobis Distance (MD) at high noise = 9.35 vs BNKF = 5.68

- UKF: MD at high noise = 25.17 vs BNKF = 5.68

- EKF: Covariance determinant at high noise = 15740 m^3 vs BNKF = 13.66 m^3

- UKF: Covariance determinant at high noise = 7262 m^3 vs BNKF = 13.66 m^3

- Standalone BNN: ED at high noise = 13.13m vs BNKF = 8.63m

- BNKFe ensemble: covariance determinant at high noise = 8.11 m^3 vs BNKF = 13.66 m^3 (uncertainty reduction)

- BNKFe ED at high noise = 9.24m vs BNKF = 8.63m

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2604.28107.

Fig 1: Example Simulated Trajectories.

Fig 2: Radar Sensor Measurement Generation Example

Fig 3: Example Downsampled Trajectories

Fig 4: NN versus BNN [23]

Fig 5: BNKF Process Flow

Fig 6: BNKFe Process Flow

Fig 7: Single Trajectory Inference Time Comparison

Limitations

- Evaluation uses only synthetic UAV trajectories simulated under Gaussian noise assumptions; real-world noise may be non-Gaussian or more complex.

- Fixed sensor platform assumed; results may not generalize to moving or distributed sensor scenarios.

- No adversarial manipulation or jamming is explicitly modeled; evaluation is limited to degraded sensing conditions.

- Training and testing rely on a single dataset with simulated dynamics; external validation on real flight data is missing.

- The BNN training hyperparameters, training duration, and sensitivity to architectures are not fully detailed.

- Mahalanobis distance results show that BNKF's uncertainty is sometimes larger under low noise, which may inflate containment metrics.

Open questions / follow-ons

- How does BNKF perform on real-world UAV datasets with complex, possibly non-Gaussian noise and disturbances?

- Can the approach be adapted or extended for heterogeneous, moving, or networked multi-sensor fusion scenarios?

- What are the robustness limits of the BNN uncertainty calibration under adversarial sensor attacks or jamming?

- How does BNKF scale or adapt to other nonlinear dynamical systems beyond UAVs?

Why it matters for bot defense

This paper’s contributions are relevant to bot-defense and CAPTCHA systems that rely on sensor fusion or state estimation under uncertainty, such as analyzing user device motion or behavior patterns amid noisy or adversarial input data. The BNKF approach shows how neural networks can be combined with classical filters to propagate predictive uncertainty and improve robustness in the degraded sensing scenarios common in bot detection (e.g., spoofed or missing signals). CAPTCHA engineers might use similar hybrid Bayesian neural filtering to estimate user states or activity contexts more reliably, improving confidence in authentication or challenge-response decisions. Uncertainty-aware models also help avoid overconfident predictions, a known risk factor in security-critical inference systems.

Cite

@article{arxiv2604_28107,
  title={Neural Aided Kalman Filtering for UAV State Estimation in Degraded Sensing Environments},
  author={Akhil Gupta and Erhan Guven},
  journal={arXiv preprint arXiv:2604.28107},
  year={2026},
  url={https://arxiv.org/abs/2604.28107}
}