Global Optimality for Constrained Exploration via Penalty Regularization

Source: arXiv:2604.28144 · Published 2026-04-30 · By Florian Wolf, Ilyas Fatkhullin, Niao He

TL;DR

This paper addresses the challenging problem of constrained maximum-entropy exploration in reinforcement learning (RL), where an agent seeks a policy that maximizes the entropy of the induced state-action occupancy while satisfying convex constraints representing safety, resource, or imitation requirements. Existing approaches either rely on model-based methods that do not scale well or policy-gradient methods with weak guarantees limited to ergodic averages rather than the actual final deployed policy. The authors propose the Policy Gradient Penalty (PGP) method, which formulates the constrained problem as an unconstrained quadratic penalty problem directly in policy parameter space, using pseudo-rewards to compute policy gradient estimates compatible with standard policy gradient theorem techniques. They leverage hidden convexity of the occupancy formulation to prove global last-iterate convergence guarantees to an approximately feasible and optimal policy, despite the non-convexity introduced by parameterization. Empirical evaluation on a grid-world benchmark shows robustness to penalty parameter choice and improved constraint satisfaction compared to a previous primal-dual baseline, while experiments on continuous control tasks (PointMass and SafeCartpole) demonstrate scalability and effective navigation of constraint-exploration tradeoffs.

Key findings

- PGP achieves global last-iterate convergence to (ε, ε)-optimal solutions with sample complexity scaling as e^{O(ε^{-6})} trajectory rollouts (Corollary 1).

- PGP enforces convex constraints through a quadratic penalty without dual variables or inner optimization loops, simplifying implementation and hyperparameter tuning.

- In grid-world experiments, PGP constrains violations below threshold robustly across penalty parameters spanning several orders of magnitude (Fig 2).

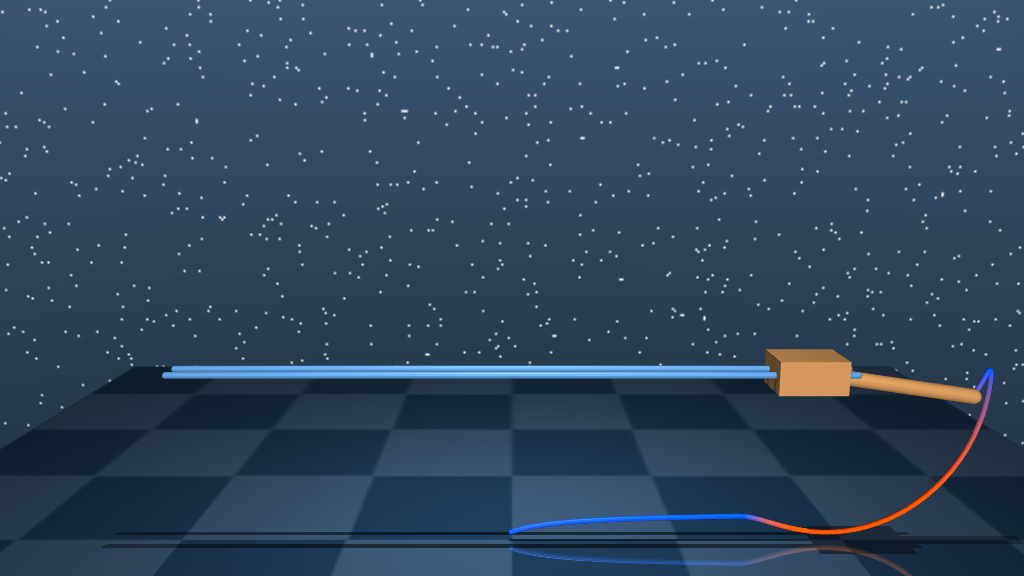

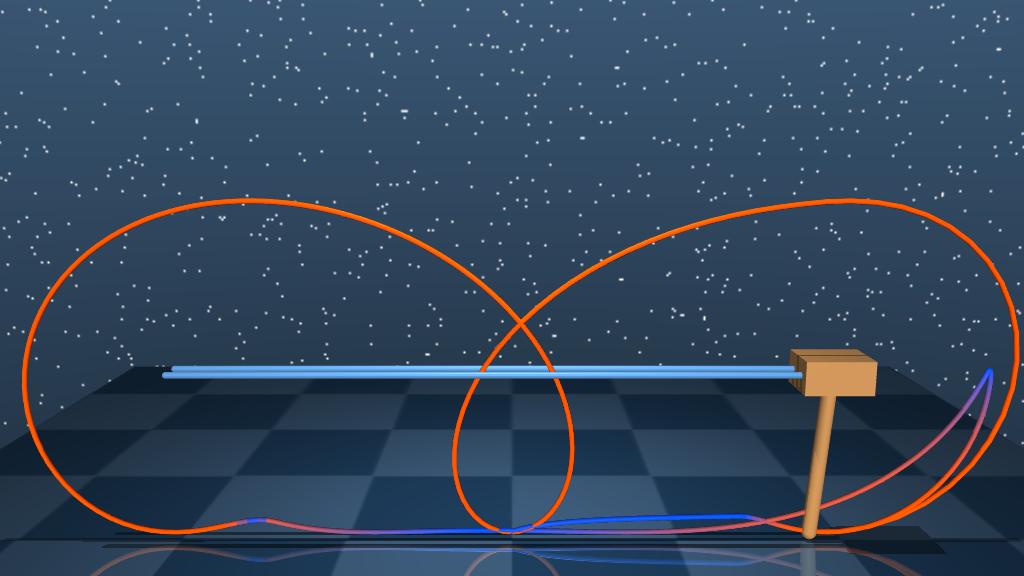

- PGP outperforms the state-of-the-art primal-dual method of Ying et al. (2025) by producing a single near-feasible deployable policy rather than weak ergodic average guarantees (Fig 1).

- In continuous PointMass environment under an imitation (KL divergence) constraint, PGP learns policies with progressively more uniform state-space coverage as the constraint budget increases (Fig 3).

- In SafeCartpole with a box constraint on cart position, PGP successfully balances entropy maximization (exploration) and constraint feasibility through training (Fig 4).

- The theoretical analysis establishes that the penalized objective is L-smooth and that the softmax policy class is dense in stationary policies, validating assumptions for global convergence.

- The batch size required to control bias from occupancy measure estimation scales as B = O(ε^{-4}), which is likely conservative as ablations suggest better practical efficiency.

Threat model

The adversary in this setting is implicit as environmental constraints—such as safety, resource limits, or imitation requirements—that must not be violated by the exploratory policy. The agent samples trajectories under stochastic transitions and does not have perfect model knowledge, working under convex constraints on the state-action occupancy distribution. The agent cannot violate these constraints beyond a small ε margin but must still maximize exploratory entropy when learning the policy.

Methodology — deep read

- Threat Model & Assumptions: The adversary here is implicit in the RL setting as the environment imposing constraints that the exploration policy must satisfy (e.g., safety budgets, imitation closeness). The agent does not have perfect model knowledge and only accesses stochastic rollouts. The work assumes convex constraints on state-action occupancy measures, strong duality holds, and policies are parameterized via softmax functions. Assumptions include smoothness bounds on policy score functions and Lipschitzness/local invertibility of the occupancy-measure mapping.2. Data: Experiments use classic grid-world FrozenLake (discrete finite MDP) and two continuous control tasks (PointMass and SafeCartpole). Trajectories of length H=O(log(1/ε)) are sampled per iteration, with batch size B=O(ε^{-4}). The occupancy measure is estimated via Monte Carlo rollouts or maximum likelihood estimators in continuous spaces. Labels correspond to the reward and constraint pseudo-rewards derived from occupancy gradients.3. Architecture / Algorithm: Policies are parameterized by a softmax over a parametrized score function ψ(s,a;θ). The core algorithm (Algorithm 1) reformulates the constrained max-entropy problem into a quadratic penalty objective P(θ) = -H(λπθ) + β[L(F2(θ))], where L is squared hinge. Pseudo-rewards corresponding to ∇λ[-H] and ∇λ[L∘R] are computed via backprop through the occupancy measure estimate. Using these pseudo-rewards, a single Monte Carlo batch is used to compute unbiased policy gradient estimates for the penalized objective via the policy gradient theorem and REINFORCE estimator, enabling a single-loop stochastic gradient descent in policy parameter space.4. Training Regime: The algorithm runs for N ≥ e^{O(ε^{-2})} iterations with batch size B=O(ε^{-4}), step size η=O(ε), and penalty parameter β=O(ε^{-1}). These parameters trading off accuracy, constraint violation, and sample complexity are derived from theoretical smoothness and strong duality assumptions. Both discrete and continuous state-action spaces are explored, using trajectory truncation and occupancy estimation techniques accordingly.5. Evaluation Protocol: Quantitative metrics include the entropy of the final policy's occupancy measure (objective) and constraint violation value. Baselines include the primal-dual PDPG method from Ying et al. (2025) known for ergodic average guarantees. Experiments report the final iterate's feasibility and optimality rather than ergodic averages, across multiple seeds (10). Ablations test robustness over penalty parameter β and step sizes.6. Reproducibility: The paper provides thorough theoretical proofs in appendices and algorithmic pseudocode; concrete implementation details for occupancy estimation in continuous spaces are deferred to supplemental sections. No mention of public code or pretrained models is made, but the methods rely on standard policy gradient estimators making replication feasible given access to environments. One concrete example end-to-end is the FrozenLake grid experiment where PGP uses batch rollouts to estimate occupancy, computes pseudo-rewards for objective and constraint gradients, performs SGD updates in the softmax policy parameter θ, and converges to a policy that nearly maximizes entropy while respecting the safety constraint.

Technical innovations

- Reformulating constrained maximum-entropy exploration as a single-loop quadratic penalty method directly in policy parameter space without dual variables.

- Constructing pseudo-rewards that yield unbiased stochastic gradient estimates for both the entropy objective and constraint penalties using the policy gradient theorem.

- Exploiting hidden convexity and strong duality in the occupancy-measure space to prove global last-iterate convergence under non-convex parametrized policies.

- Achieving poly(1/ε) sample complexity guarantees for constrained max-entropy exploration with a deployable single policy output rather than ergodic average guarantees.

Datasets

- FrozenLake grid-world — discrete finite MDP — standard OpenAI Gym environment

- PointMass continuous control — environment from Dulac-Arnold et al. (2021)

- SafeCartpole continuous control — environment from Dulac-Arnold et al. (2021)

Baselines vs proposed

- Primal-Dual Policy Gradient (PDPG) Ying et al. (2025): constraint violation oscillates near threshold, ergodic average weak regret guarantees vs PGP: last-iterate nearly feasible policy with lower constraint violation and stable convergence (Fig 1)

- Across penalty parameters β: entropy H remains stable while constraint violation decreases monotonically with β for PGP (Fig 2)

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2604.28144.

Fig 3: PointMass: State-Space Coverage. Relative visit frequencies of the SAC reference policy

Fig 4: (CME) on SafeCartpole. Trajectories illustrate diverse exploration of the full state space,

Fig 1: Solving

Fig 2: Robustness to β. The entropy of the final

Fig 5 (page 9).

Fig 6 (page 9).

Fig 5: Overview of the grid-world with discrete state-action space (left) and the safe cartpole exploration

Fig 6: Ablation study of unconstrained entropy maximization on the environment Figure 5a with

Limitations

- Strong duality assumption needed for global convergence guarantees may not always hold in practice, though it is weaker than Slater’s condition.

- Large batch sizes (B=O(ε^{-4})) and trajectory lengths required for theoretical guarantees lead to potentially high sample complexity.

- The method currently assumes smooth convex constraints and may not directly handle non-convex or hard constraints.

- Theoretical smoothness assumptions for entropy and constraint gradients only hold locally or require smoothing approximations.

- Continuous occupancy measure estimation relies on maximum likelihood estimators whose bias/variance properties are not fully characterized here.

- Empirical evaluation includes limited sets of benchmarks without adversarial or distribution shift robustness analyses.

Open questions / follow-ons

- Can the batch size and sample complexity be reduced via variance reduction or other advanced gradient estimation techniques?

- How to extend the framework to handle non-convex or discrete constraints beyond the smooth convex class considered?

- Can the approach be adapted for online or meta-RL settings with non-stationary constraints or environments?

- What are the robustness properties under adversarial or distributionally shifted constraints and environments?

Why it matters for bot defense

Bot-defense and CAPTCHA systems often require safe, constrained exploration when adapting dynamic challenge policies or reinforcement-learned user interaction strategies under constraints such as user experience, fairness, or resource limits. This paper's approach provides a theoretically justified, scalable method to maximize exploration entropy (diversity of challenge strategies) while respecting convex constraints relevant to safe deployment. The policy gradient penalty framework's single-loop, model-free nature combined with last-iterate guarantees implies practical tractability for continuous, high-dimensional control problems common in adaptive security mechanisms. Additionally, the use of pseudo-rewards for constraint enforcement could inspire novel techniques for integrating behavioral constraints into reinforcement-trained CAPTCHA or bot-detection policies. Practitioners should note, however, the sample complexity and smoothness assumptions that might limit direct application without adaptation to the interactive human-in-the-loop domain.

Cite

@article{arxiv2604_28144,

title={ Global Optimality for Constrained Exploration via Penalty Regularization },

author={ Florian Wolf and Ilyas Fatkhullin and Niao He },

journal={arXiv preprint arXiv:2604.28144},

year={ 2026 },

url={https://arxiv.org/abs/2604.28144}

}