Beyond Project-Based Learning: Conference-Style Writing as Authentic Assessment in Interdisciplinary Quantum Engineering Education

Source: arXiv:2604.27110 · Published 2026-04-29 · By Nischal Binod Gautam, Enrique P. Blair

TL;DR

This paper asks a narrower question than the usual “does project-based learning work?” debate: in an introductory quantum mechanics course for engineers, does requiring students to finish their project as a conference-style paper add educational value beyond the project itself? The authors frame the paper requirement as an instance of authentic assessment — not just an extra writing assignment, but a way to push students into disciplinary communication, research framing, and reflection on limitations. The study is positioned against the broader PBL literature, which strongly supports projects and authentic artifacts but rarely prescribes a specific scholarly genre as the endpoint.

The evidence comes from a post-course survey after a pilot offering of “Quantum Mechanics for Engineers” with 10 students total (6 graduate, 4 undergraduate). Quantitatively, students reported strong positive effects on engagement, confidence, scientific communication, and research readiness, while also indicating that the conference-paper requirement was demanding and would benefit from more scaffolding. The main result is not that students loved the paper requirement; rather, they saw it as worthwhile and pedagogically meaningful, even when it felt burdensome. The authors therefore argue for keeping the conference-style paper in future offerings, especially for graduate students, but with staged checkpoints and better support.

Key findings

- Students reported low prior familiarity with quantum topics but arrived with relevant project and computing experience: mean prior familiarity with quantum mechanics/quantum computing was 2.40/5, while prior project-based learning experience was 4.18/5 and comfort with computational tools was 3.82/5.

- Project design was perceived as clear: project instructions were rated 4.00/5, deliverables 4.18/5, and early project introduction 4.50–4.55/5; agreement with the “often felt lost and needed more guidance” item was low, at about 2.36/5.

- The project improved perceived learning and engagement: motivation to learn quantum mechanics 3.90–4.00/5, more engaged than traditional homework 4.00–4.09/5, and more confident discussing quantum mechanics 4.18–4.20/5.

- Students reported gains in research-adjacent skills: scientific communication 4.30–4.36/5, ability to structure a longer scientific report 4.18–4.20/5, and readiness for future research/independent study 4.09–4.10/5.

- The conference-style paper was viewed as useful but demanding: it helped students better understand how scientific research is communicated (3.70/5), but support for “keep the conference-paper requirement” was only moderate (3.40/5).

- Students distinguished burden from value: the item “simpler report might be more appropriate” received moderate agreement around 3.50/5, and “conference paper increased motivation for high-quality work” was only 3.20/5.

- Open-ended responses favored retention with scaffolding rather than removal: students asked for midpoint check-ins/progress checkpoints, clearer scope calibration by course level, and better alignment between lectures, applications, and paper expectations.
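Several of the means above are quoted as narrow pairs (for example 4.18–4.20). A simple granularity check shows which response counts could produce a given two-decimal mean when every rating is an integer from 1 to 5. The sketch below is illustrative only: the function name `consistent_sample_sizes` and the candidate sizes 10 and 11 are assumptions made here, not something the paper reports.

```python
def consistent_sample_sizes(reported_mean, candidate_ns, scale=(1, 5), decimals=2):
    """Return the candidate n values for which some set of integer ratings
    on the given scale has a mean that rounds to reported_mean."""
    low, high = scale
    matches = []
    for n in candidate_ns:
        # Every achievable mean is total / n for an integer total of n ratings.
        for total in range(low * n, high * n + 1):
            if abs(round(total / n, decimals) - reported_mean) < 1e-9:
                matches.append(n)
                break
    return matches


# Means quoted in the findings above; the candidate sizes are an assumption.
for m in (2.40, 4.18, 4.20, 4.09, 4.10, 4.36, 4.30):
    print(f"{m:.2f} -> n in {consistent_sample_sizes(m, candidate_ns=(10, 11))}")
```

In this run, 4.18, 4.09, and 4.36 are reachable only with eleven integer ratings, while 2.40, 4.20, 4.10, and 4.30 are reachable only with ten. The summary reports 10 students, so this does not establish that any item had eleven responses; it only shows that the quoted pairs are arithmetically compatible with different response counts per item, or with some other cause not visible in the excerpt.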

Methodology — deep read

The threat model here is pedagogical rather than adversarial: the authors are not defending against attackers, but studying how students experience a course design intervention. The relevant “adversary” in the abstract sense is course difficulty itself — especially the combination of unfamiliar quantum content, research-style reading, technical implementation, and formal writing. Assumptions are explicitly classroom-based: a single course offering, mixed graduate/undergraduate enrollment, project-based instruction across the semester, and a final artifact framed as a conference-style paper. The authors assume the survey captures student perception after the course, not objective learning gains, and they do not claim causal identification beyond the intervention context.

Data come from a post-course survey administered to 10 students enrolled in “Quantum Mechanics for Engineers”: 6 graduate students and 4 undergraduate seniors. The course design differed slightly by level: graduate students worked individually, while undergraduates worked in a group. The survey used five-point Likert items (1 = strong disagreement, 5 = strong agreement) spanning prior background, clarity of the project, engagement, conceptual understanding, workload, instructor support, and the conference-paper requirement. It also included open-ended prompts about what to add, remove, or improve. The paper says responses were analyzed descriptively at the item level using averages and response distributions, and open-ended answers were read qualitatively for recurring themes; no inferential statistics, coding scheme, or inter-rater reliability procedure is reported. The authors explicitly keep items separate rather than collapsing them, because their main concern is the writing component’s role relative to the broader project experience.
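The item-level descriptive analysis described above (a mean plus a response distribution per Likert item, with no pooling across items) can be sketched in a few lines. This is a minimal illustration, not the authors' code: the item names and every rating in `responses` are hypothetical placeholders, since the raw survey data are not available in the excerpt.

```python
from collections import Counter
from statistics import mean

# Hypothetical ratings for illustration only; NOT the study's raw data.
# Each list holds one item's 1-5 Likert ratings from ten respondents.
responses = {
    "item_a": [5, 4, 4, 3, 5, 4, 2, 4, 5, 5],
    "item_b": [3, 2, 4, 3, 5, 1, 3, 4, 2, 3],
}


def summarize_item(ratings):
    """Return n, the mean, and the count at each scale point (1-5) for one item."""
    counts = Counter(ratings)
    distribution = {point: counts.get(point, 0) for point in range(1, 6)}
    return {
        "n": len(ratings),
        "mean": round(mean(ratings), 2),
        "distribution": distribution,
    }


# Items are summarized one at a time and never collapsed into a scale score.
summary = {item: summarize_item(ratings) for item, ratings in responses.items()}
for item, stats in summary.items():
    print(item, stats)
```

Keeping the distribution next to the mean matters at this sample size: with ten respondents, a single rating moves an item mean by 0.1, so the spread of answers says more than the average alone.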

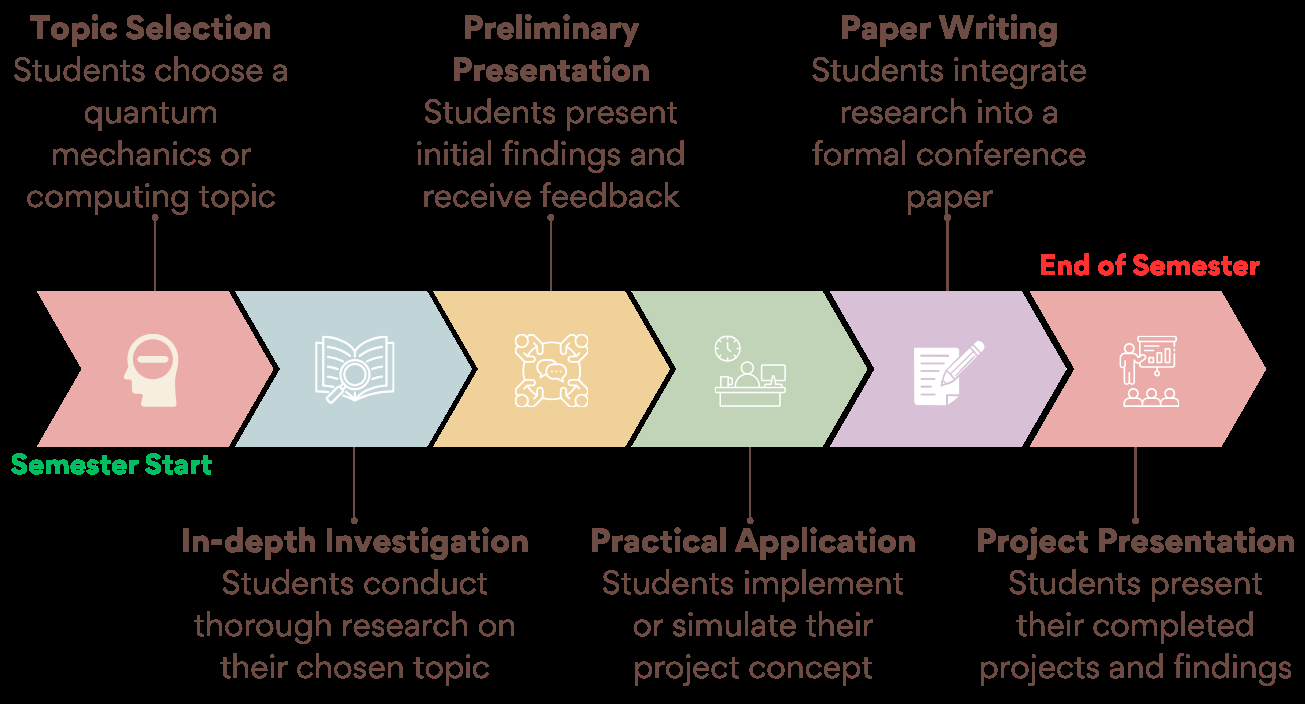

The course/assignment architecture is described as a semester-long sequence rather than a one-shot deliverable. Students were introduced to the project early, selected an application-centered topic related to quantum information sciences, reviewed literature, developed an analytical or computational approach, interpreted results, and presented the work orally before producing a conference-style manuscript. The paper format was intended to include a structured abstract, technical background, methods, results, and discussion. The authors note an alternative: students could submit a comprehensive longer project report to the instructor if needed, though the exact rules for that fallback are not deeply specified. A concrete end-to-end example is only described at a high level in the excerpt: students were expected to connect an application to quantum formalism, justify modeling choices, run an analytical/computational implementation, and then explain limitations and implications in a scholarly narrative. The course also intentionally omitted a traditional final exam so that the project could function as the capstone experience.

Training regime is not applicable in the machine-learning sense, but the instructional regime is clear: early project launch, literature review, midpoint feedback, implementation/simulation, presentation, and final paper. Hardware, epochs, optimizers, and seeds are irrelevant here and not reported. There is also no evidence of randomization, control group, or pre/post testing. The study is exploratory and practice-oriented, so the authors emphasize interpretation over statistical testing. One ambiguity worth noting is that the paper references both “conference-style paper” and “conference submission,” but the excerpt suggests the key requirement was writing in the genre; actual submission logistics appear to have been optional or at least negotiable.

Evaluation is entirely survey-based. The authors report means such as project clarity (~4.0–4.18), early introduction (~4.5), improved communication (~4.3–4.36), and readiness for research (~4.1). They compare broader project items against writing-specific items to determine whether students saw the paper as a distinct value-add or just extra workload. No baseline class without a conference-style paper is reported, no held-out cohort is used, and no statistical significance tests appear in the excerpt. Qualitative responses are summarized into themes: progress checkpoints, scope calibration, submission logistics, preserving the core project model, and strengthening conceptual bridges. Reproducibility is limited: the course design and survey question types are described, but the full instrument is only said to be in Appendix A, and no code, raw data, or frozen materials are mentioned in the excerpt.
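The comparison between broader project items and writing-specific items can be laid out side by side using only the means quoted in this summary. The sketch below is a hedged illustration: the assignment of items to the two groups and the shortened item labels are assumptions made here, and the code reports each group's span of item means rather than pooling items into a single score.

```python
# Item means as quoted in this summary (1-5 scale). Where the summary gives
# a pair of values, both are kept; single values are stored as (x, x).
# The grouping of items is an assumption for illustration.
reported_means = {
    "project": {
        "instructions clear": (4.00, 4.00),
        "deliverables clear": (4.18, 4.18),
        "more engaged than homework": (4.00, 4.09),
        "improved scientific communication": (4.30, 4.36),
        "readiness for future research": (4.09, 4.10),
    },
    "conference_paper": {
        "understand how research is communicated": (3.70, 3.70),
        "keep the conference-paper requirement": (3.40, 3.40),
        "paper increased motivation for quality": (3.20, 3.20),
    },
}


def group_span(items):
    """Lowest and highest quoted item mean in a group (no pooled scale score)."""
    lows = [low for low, _ in items.values()]
    highs = [high for _, high in items.values()]
    return min(lows), max(highs)


spans = {group: group_span(items) for group, items in reported_means.items()}
for group, (low, high) in spans.items():
    print(f"{group}: item means span {low:.2f}-{high:.2f}")
```

For the items listed here, every writing-specific mean falls below every project-level mean, which matches the summary's reading that students valued the project strongly and the paper requirement more cautiously. With no comparison course and no significance testing, the gap is descriptive only.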

Technical innovations

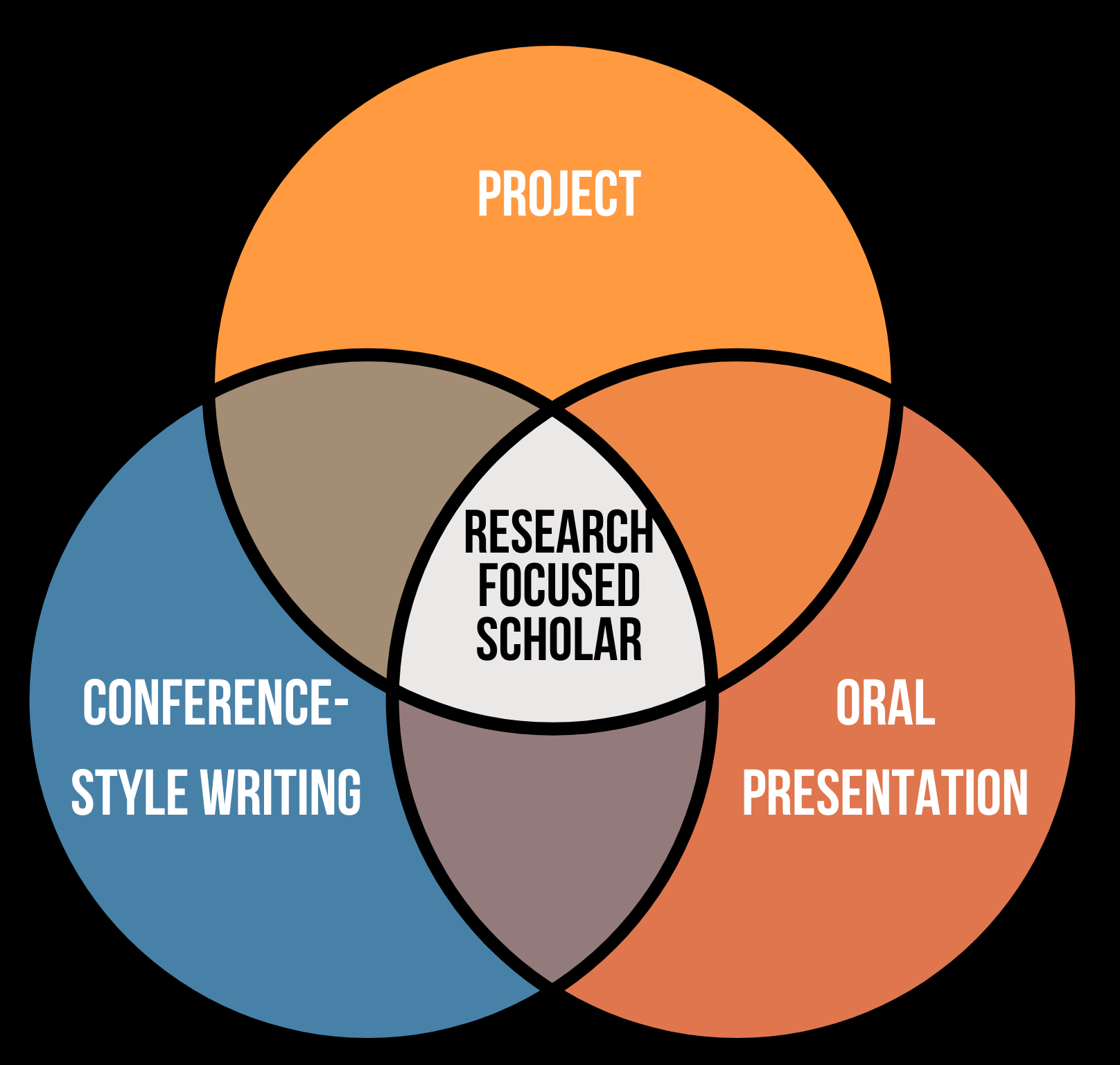

- Extends project-based learning in quantum engineering by making the culminating artifact a conference-style paper rather than a conventional lab report or generic project write-up.

- Treats scholarly writing as authentic assessment: the paper is used to evaluate students’ ability to frame problems, justify methods, interpret results, and communicate like emerging researchers.

- Integrates oral presentation, literature review, implementation, and conference-style manuscript into one semester-long learning arc instead of separate assignments.

Datasets

- Post-course survey responses: n=10 students (6 graduate, 4 undergraduate), collected from a single pilot offering of “Quantum Mechanics for Engineers” at Baylor University

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2604.27110.

Fig 1: The semester-long project timeline shows how topic

Fig 2: The Venn diagram illustrates the complementary roles

Limitations

- Very small sample size (n=10), from one course offering at one institution, so generalizability is limited.

- No control group or comparison course without the conference-paper requirement, so causal claims about the writing assignment are not identified.

- Outcomes are self-reported perceptions rather than direct measures of learning, transfer, or publication-quality writing.

- The analysis is descriptive; no statistical significance tests, effect sizes, or reliability metrics for the survey instrument are reported in the excerpt.

- Graduate and undergraduate students had different project formats (individual vs group), but the excerpt does not disaggregate results by level.

- Open-ended feedback suggests the assignment may be too compressed without stronger scaffolding, so the positive reception depends on implementation details.

Open questions / follow-ons

- Would the same conference-paper requirement work as well in a larger class, or in a course with less instructor support?

- How much of the perceived value comes from the paper genre itself versus the semester-long sequencing and checkpoint structure?

- Do conference-style papers improve objective writing quality, technical reasoning, or research readiness beyond student self-report?

- How should expectations differ for undergraduate versus graduate students when authentic scholarly writing is used as assessment?

Why it matters for bot defense

For bot-defense and CAPTCHA practitioners, the transferable idea is not quantum content, but assessment design: if you want to measure whether someone can do a task authentically, you often need a culminating artifact that resembles the real workflow rather than a shallow proxy. In this paper, the authors argue that the writing format matters because it forces synthesis, justification, and communication under realistic constraints. That same logic applies to evaluation of humans, attackers, or assistants in adversarial settings: a robust assessment should require the subject to produce the kind of reasoning or output the real environment demands, not just answer isolated prompts.

The cautionary lesson is equally relevant. The survey suggests that authentic assessment can be valuable while still feeling burdensome unless it is scaffolded. For bot defense, that maps to a common deployment mistake: making an evaluation or challenge too ambitious without enough intermediate guidance or calibrated difficulty. If you are designing a human verification flow, fraud-detection training exercise, or red-team benchmark, this paper supports using staged checkpoints, clearer scope, and a final artifact that reflects real practice — but also warns that authenticity alone does not guarantee usability or fairness.

Cite

@article{arxiv2604_27110,

title={Beyond Project-Based Learning: Conference-Style Writing as Authentic Assessment in Interdisciplinary Quantum Engineering Education},

author={Nischal Binod Gautam and Enrique P. Blair},

journal={arXiv preprint arXiv:2604.27110},

year={2026},

url={https://arxiv.org/abs/2604.27110}

}