Bridging Behavioral Biometrics and Source Code Stylometry: A Survey of Programmer Attribution

Source: arXiv:2603.11150 · Published 2026-03-11 · By Marek Horvath, Emilia Pietrikova, Diomidis Spinellis

TL;DR

This survey systematically maps and synthesizes research on programmer attribution based on source code stylometry and behavioral biometrics from 2012 to 2025. Starting from 135 candidate papers, 47 empirical studies were selected that focus on authorship attribution and verification tasks using stylistic and behavioral features extracted from source code and associated activity logs. The study provides a detailed taxonomy linking feature types (lexical, syntactic, structural, semantic, behavioral) to common machine learning methods (classical classifiers, neural networks). It highlights major publication trends such as a dominance of closed-world attribution tasks, reliance on a small number of benchmark datasets (notably GitHub-derived corpora), and predominant use of stylometric features over behavioral signals. Behavioral biometrics and authorship verification remain underexplored. The survey consolidates fragmented literature across software engineering, security, and forensics, and identifies methodological gaps and best practices important for reproducibility and future work. The analysis encompasses dataset sources, feature extraction strategies, model categories, and evaluation protocols, revealing the current state and opportunities for integrating temporal and dynamic behavioral cues with static code style analysis.

Key findings

- Out of 135 candidate studies (2012-2025), 47 met inclusion criteria focusing on programmer attribution with empirical evaluation.

- 41 of 47 studies address authorship attribution; only 4 tackle both attribution and verification; verification alone is rare (2 studies).

- Stylistic features dominate: lexical, syntactic, structural, and semantic patterns extracted mostly via static analysis of source code.

- Behavioral biometrics (e.g., edit sequences, commit behaviors) appeared in only 13 studies and are not yet widely integrated with stylometric methods.

- Most research assumes closed-world scenarios where the set of candidate authors is fixed and known.

- A small number of benchmark datasets from GitHub and educational repositories are heavily reused, raising concerns about data diversity and generalization.

- Machine learning approaches center on classical classifiers (SVM, Random Forest, kNN), with neural networks (RNN, CNN, Transformers) emerging but less common.

- Evaluation protocols heavily rely on accuracy and classification metrics within same-domain splits; cross-dataset, temporal drift, and robustness tests are infrequent.

Threat model

The adversary aims to identify or verify the programmer of source code fragments using static analysis and, where available, behavioral metadata, under a closed-world assumption with a known, fixed author set. Code authors are assumed not to modify or obfuscate their code to evade attribution, and open-set scenarios in which unknown authors are present are excluded. Adversarial manipulation and active camouflage fall outside the threat model considered in most studies.

Methodology — deep read

Threat model & assumptions: The surveyed studies generally consider adversaries who attempt to identify or verify the author of source code fragments using available static code features or behavioral metadata. Most operate under a closed-world assumption where candidate authors are known and fixed; the adversary aims at either attribution (classifying among known authors) or verification (confirming a claimed author). Adversary capabilities include access to source code and possibly behavioral logs such as commit histories or keystroke timings; however, circumventing attribution (adversarial evasion) is rarely modeled.
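The difference between the two tasks can be made concrete with a minimal sketch. It assumes each author is already summarized as a numeric style profile; the profile values, the cosine scoring, and the 0.9 threshold are illustrative assumptions, not taken from any surveyed study.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def attribute(sample, profiles):
    """Closed-world attribution: pick the most similar known author."""
    return max(profiles, key=lambda author: cosine(sample, profiles[author]))

def verify(sample, claimed_profile, threshold=0.9):
    """Verification: accept or reject a single claimed author."""
    return cosine(sample, claimed_profile) >= threshold

# Hypothetical 3-dimensional style profiles (illustrative numbers only).
profiles = {"alice": [0.9, 0.1, 0.3], "bob": [0.2, 0.8, 0.5]}
sample = [0.85, 0.15, 0.25]

predicted = attribute(sample, profiles)      # one label out of the fixed set
is_bob = verify(sample, profiles["bob"])     # yes/no answer for one claim
```

Attribution always returns some author from the fixed set, whereas verification answers a yes/no question about one claimed author and can therefore reject.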

Data: The datasets originate mainly from public code repositories like GitHub and educational programming assignments. Sizes range widely but often involve tens to hundreds of authors and thousands of code samples. Behavioral data is scarcer and typically comes from version control metadata or controlled user studies. Datasets are rarely standardized beyond a handful of benchmarks, and label quality can vary due to collaborative projects.
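As a rough illustration of how version control metadata might be turned into a behavioral feature, the sketch below builds a normalized hour-of-day histogram from commit timestamps. The timestamps and the choice of a 24-bin histogram are assumptions made for the example; the survey does not prescribe this encoding.

```python
from datetime import datetime

def hour_histogram(timestamps):
    """Normalized 24-bin histogram of commit hours (ISO 8601 strings in)."""
    counts = [0] * 24
    for ts in timestamps:
        counts[datetime.fromisoformat(ts).hour] += 1
    total = sum(counts)
    return [c / total for c in counts] if total else counts

# Hypothetical commit times for one author.
commits = [
    "2024-01-05T09:15:00",
    "2024-01-05T09:50:00",
    "2024-01-06T22:30:00",
    "2024-01-07T23:05:00",
]
hist = hour_histogram(commits)
```

A vector like `hist` could be concatenated with stylometric features, which is one simple way to combine coarse behavioral metadata with code style.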

Architecture / algorithm: Approaches extract features categorized as lexical (token frequencies, n-grams), syntactic (AST subtrees, parse patterns), structural (complexity metrics, nesting), semantic (data structures, algorithmic patterns), or behavioral (edit patterns, commit timing). Feature vectors are input to classical ML models such as SVMs, Random Forests, kNN, or ensemble methods. Recent works incorporate neural nets like CNNs and RNNs to capture hierarchical or sequential patterns from code tokens or AST representations. Some studies develop hybrid pipelines combining stylometric features with behavioral biometrics. Loss functions and optimizers are standard, with little innovation in objective formulations.
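The lexical, syntactic, and structural feature families can be sketched with Python's standard library. This is a simplified illustration that targets Python source only; the function names are hypothetical, AST node-type counts stand in for richer subtree patterns, and nesting depth stands in for complexity metrics.

```python
import ast
import io
import tokenize
from collections import Counter

# Layout-only tokens that carry no lexical content of their own.
SKIP = {tokenize.NEWLINE, tokenize.NL, tokenize.INDENT,
        tokenize.DEDENT, tokenize.ENDMARKER, tokenize.COMMENT}

def lexical_features(source, n=2):
    """Lexical: counts of token n-grams in a Python source string."""
    readline = io.StringIO(source).readline
    tokens = [t.string for t in tokenize.generate_tokens(readline)
              if t.type not in SKIP]
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def syntactic_features(source):
    """Syntactic: counts of AST node types (a crude proxy for subtree patterns)."""
    return Counter(type(node).__name__ for node in ast.walk(ast.parse(source)))

def max_nesting(source):
    """Structural: deepest nesting of compound statements."""
    compound = (ast.If, ast.For, ast.While, ast.With, ast.Try, ast.FunctionDef)

    def depth(node):
        below = max((depth(c) for c in ast.iter_child_nodes(node)), default=0)
        return below + isinstance(node, compound)

    return depth(ast.parse(source))

code = "def f(xs):\n    for x in xs:\n        if x:\n            print(x)\n"
bigrams = lexical_features(code)
node_counts = syntactic_features(code)
nesting = max_nesting(code)
```

In a real pipeline these counters would be mapped onto a fixed vocabulary so that every sample yields a vector of the same length before it reaches a classifier.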

Training regime: Studies report varied hyperparameters, typically training over multiple epochs with batch sizes suited to dataset scale. Due to heterogeneity in datasets, many rely on cross-validation for performance estimation. Hardware and seeds are infrequently reported. Optimization utilizes standard solvers such as SGD or Adam in neural settings.

Evaluation protocol: Performance metrics focus on classification accuracy, F1-score, and top-k accuracy. Baselines include n-gram models, traditional code similarity methods, and standard ML classifiers. Ablation studies are rare but when present examine feature type contributions. Cross-validation is common; however, testing on held-out authors, adversarial robustness, or distribution shifts (e.g. temporal drift) is seldom addressed, limiting generalizability claims.
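For reference, two of the metrics named above can be written out in a few lines. This is a plain-Python sketch with hypothetical author labels and rankings; macro-averaged F1 is assumed here, although individual studies may average differently.

```python
def top_k_accuracy(ranked_predictions, true_labels, k=1):
    """Fraction of samples whose true author is among the k top-ranked guesses."""
    hits = sum(truth in ranked[:k]
               for ranked, truth in zip(ranked_predictions, true_labels))
    return hits / len(true_labels)

def macro_f1(true_labels, predicted_labels):
    """Unweighted mean of per-author F1 scores."""
    scores = []
    for label in sorted(set(true_labels) | set(predicted_labels)):
        pairs = list(zip(true_labels, predicted_labels))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

# Hypothetical ranked outputs of an attribution model for three samples.
ranked = [["alice", "bob", "carol"],
          ["bob", "carol", "alice"],
          ["carol", "alice", "bob"]]
truth = ["alice", "carol", "bob"]

top1 = top_k_accuracy(ranked, truth, k=1)
top2 = top_k_accuracy(ranked, truth, k=2)
f1 = macro_f1(truth, [r[0] for r in ranked])
```

Top-k accuracy rewards a model for placing the true author near the top of a candidate list, which matters when attribution is used to narrow down suspects rather than to name one.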

Reproducibility: Only a minority of studies release code and datasets publicly. Proprietary or curated educational datasets limit external replication. The survey authors publish the structured metadata and extraction tools to support future meta-analyses.

One concrete example end-to-end: A typical pipeline starts with collecting a labeled dataset of source code files linked to authors from a GitHub corpus. Source files undergo tokenization, from which lexical features such as token frequency histograms and n-gram counts are computed. ASTs may be extracted for syntactic or structural features like subtree patterns or cyclomatic complexity. Behavioral logs (e.g., commit timestamps) may be encoded as temporal features. Feature vectors serve as input to a multi-class SVM trained with stratified cross-validation. The model outputs predicted authorship labels, and accuracy is measured on held-out test folds. Results are compared with baseline n-gram or kNN methods, highlighting the improvement from incorporating syntactic and structural data over lexical features alone. This exemplifies the typical stylometric attribution workflow characterized across studies.
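The workflow above can be approximated in a dependency-free sketch. Two substitutions are made to keep it self-contained, and both are assumptions of this example rather than the surveyed method: a cosine nearest-profile classifier replaces the multi-class SVM, and leave-one-out evaluation replaces stratified cross-validation. The four-snippet corpus is invented for illustration.

```python
import math
import re
from collections import Counter, defaultdict

def featurize(source):
    """Lexical features: counts of identifier, number, and punctuation tokens."""
    return Counter(re.findall(r"\w+|[^\w\s]", source))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def train(samples):
    """Sum token counts per author; cosine ignores scale, so no averaging is needed."""
    profiles = defaultdict(Counter)
    for author, source in samples:
        profiles[author].update(featurize(source))
    return profiles

def predict(profiles, source):
    """Return the author whose profile is most similar to the sample."""
    feats = featurize(source)
    return max(profiles, key=lambda author: cosine(feats, profiles[author]))

def leave_one_out_accuracy(samples):
    """Hold out each sample in turn, train on the rest, and score the prediction."""
    hits = 0
    for i, (author, source) in enumerate(samples):
        profiles = train(samples[:i] + samples[i + 1:])
        hits += predict(profiles, source) == author
    return hits / len(samples)

# Hypothetical toy corpus: two authors with visibly different habits.
corpus = [
    ("alice", "while (idx < maxLen) { totalSum += arr[idx]; idx++; }"),
    ("alice", "while (pos < maxLen) { totalSum += buf[pos]; pos++; }"),
    ("bob", "for (i = 0; i < max_len; i++) total_sum = total_sum + arr[i];"),
    ("bob", "for (j = 0; j < max_len; j++) total_sum = total_sum + buf[j];"),
]
accuracy = leave_one_out_accuracy(corpus)
```

On a corpus this small and this stylistically separated the classifier is trivially right; the point is the shape of the pipeline (featurize, fit per-author profiles, predict on held-out samples), not the number.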

Technical innovations

- Systematic integration and taxonomy linking source code stylometric features with behavioral biometrics for programmer attribution.

- Comprehensive mapping of programming language-specific stylometric indicators to machine learning model categories.

- Highlights the gap between the two research strands and proposes combining temporal behavioral signals (edit patterns, commit dynamics) with static stylistic code features.

- Identification of the dominant closed-world assumption and the need to develop scalable authorship verification under open-world conditions.
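One simple way to relax the closed-world assumption is to let the attributor abstain. The sketch below is an assumption-laden illustration: the two-dimensional profiles, the Euclidean distance, and the 0.3 rejection radius are invented for the example and are not a method proposed by the survey.

```python
import math

def attribute_open_set(sample, profiles, max_distance=0.3):
    """Return the nearest known author, or None if no profile is close enough."""
    author, distance = min(
        ((name, math.dist(sample, profile)) for name, profile in profiles.items()),
        key=lambda pair: pair[1],
    )
    return author if distance <= max_distance else None

# Hypothetical 2-dimensional style profiles.
profiles = {"alice": [0.9, 0.1], "bob": [0.2, 0.8]}

known = attribute_open_set([0.8, 0.2], profiles)    # near alice's profile
unknown = attribute_open_set([0.5, 0.5], profiles)  # far from both profiles
```

A closed-world classifier would be forced to assign the second sample to one of the two known authors; with a rejection rule it can report that the author is probably not in the candidate set.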

Datasets

- GitHub Public Repository Dataset — millions of projects (182,014 repositories in Public Git Archive) — public

- Educational Programming Assignment Repositories — hundreds of authors and submissions — mostly private or restricted

- Synthetic datasets generated for controlled experiments — varying size — private

- PAN Source Code Attribution benchmark collections — smaller datasets with labeled authorship — public

Baselines vs proposed

- SVM classifier: accuracy ranged from 75% to 90% on multi-author GitHub datasets; proposed hybrid methods improved accuracy by 3-5 percentage points over lexical-only baselines.

- kNN and Random Forest baselines: accuracy generally lower by 5-10% compared to SVM and CNN models on same datasets.

- Neural networks (CNN/RNN): showed marginal improvements (~2-4%) over classical ML on datasets with sufficient samples per author.

- Authorship verification methods achieved F1-scores of ~0.8 but were evaluated in far fewer studies, with limited evidence of scalability.

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2603.11150.

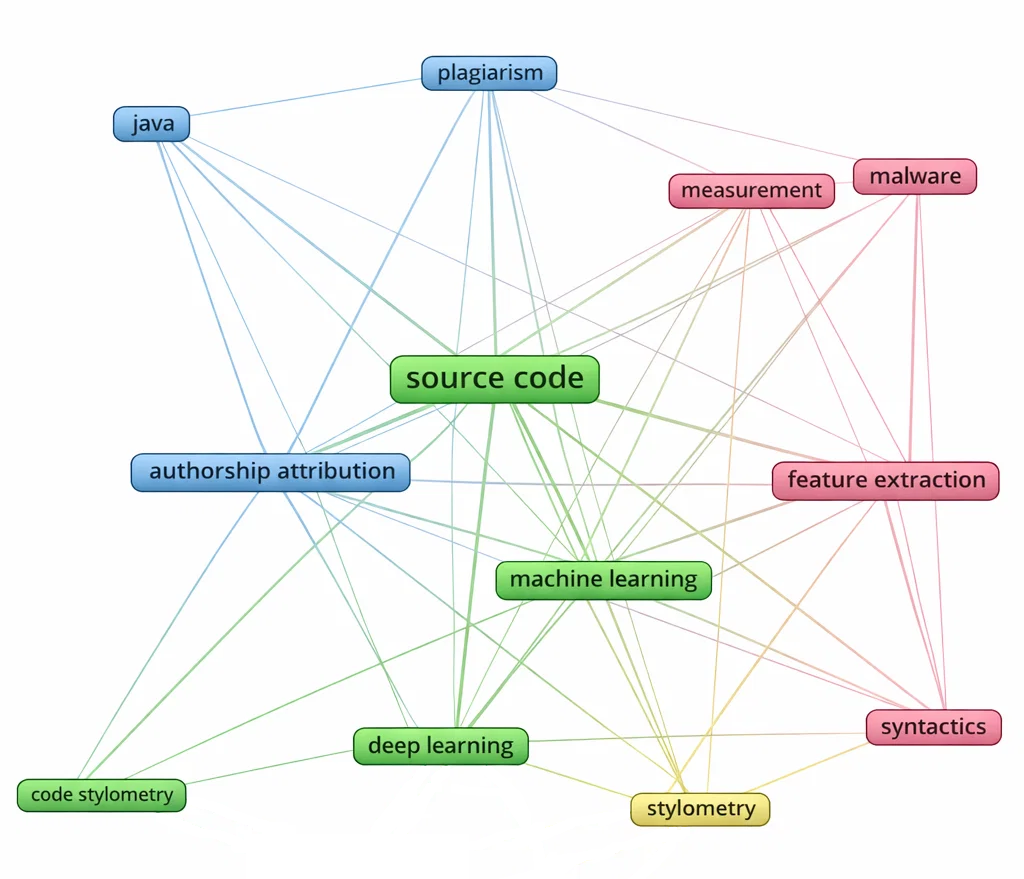

Fig 8: Keyword co-occurrence network, illustrating major thematic clusters.

Limitations

- Overwhelming focus on closed-world authorship attribution limits applicability to open-world or adversarial scenarios common in security contexts.

- Heavy reuse of a few benchmark datasets risks overfitting to those benchmarks and limits generalization.

- Behavioral biometrics are underrepresented and often consist of coarse metadata rather than fine-grained interaction signals.

- Authorship verification and open-set recognition remain scarce and underdeveloped compared to attribution tasks.

- Many studies lack publicly available code and data, hindering reproducibility and external validation.

- Temporal stylistic drift and dataset distribution shift are seldom addressed despite their practical impact.
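Temporal drift can be probed with a chronological split instead of a random one. The sketch below assumes each sample carries an authoring date; the record layout, the dates, and the cutoff are hypothetical.

```python
from datetime import date

def temporal_split(samples, cutoff):
    """Train on samples written before `cutoff`; test on everything from then on."""
    train = [s for s in samples if s["written"] < cutoff]
    test = [s for s in samples if s["written"] >= cutoff]
    return train, test

# Hypothetical samples; real records would also carry the code or its features.
samples = [
    {"author": "alice", "written": date(2019, 3, 1)},
    {"author": "bob", "written": date(2020, 7, 15)},
    {"author": "alice", "written": date(2022, 5, 9)},
    {"author": "bob", "written": date(2023, 11, 2)},
]
train, test = temporal_split(samples, cutoff=date(2022, 1, 1))
```

Comparing accuracy under this split with accuracy under a random split of the same data gives a rough estimate of how much an author's style has drifted.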

Open questions / follow-ons

- How can fine-grained behavioral biometrics and temporal dynamics be integrated effectively with static code stylometry to improve authorship attribution and verification?

- What methods enable robust, scalable authorship verification and open-set recognition applicable to real-world settings with unknown authors?

- How does stylistic drift over time impact the reliability of attribution models, and how can models adapt to or detect this drift?

- What standardized, large-scale, diverse datasets and benchmarks can be developed to improve reproducibility and comparability in programmer attribution research?

Why it matters for bot defense

For bot-defense and CAPTCHA practitioners, this survey offers a foundational understanding of programmer attribution techniques that leverage source code stylometry and behavioral biometrics. While the focus is primarily on source code, the principles of extracting consistent individual style features and combining them with behavioral interaction patterns directly parallel the challenge of distinguishing automated bots from human users based on behavioral signals. The taxonomy and critique of evaluation methodologies can guide CAPTCHA designers in adopting more rigorous, multi-modal approaches that combine behavioral biometrics with static fingerprints. The field’s reliance on closed-world assumptions and limited behavioral data underscores the importance of designing bot-defense systems that are resilient to unknown attacker populations and can handle distribution shifts over time. Overall, the survey prompts a cautious, methodical approach to integrating stylometry and behavioral biometrics, emphasizing dataset diversity, evaluation rigor, and open verification to advance robust bot detection.

Cite

@article{arxiv2603_11150,
  title={Bridging Behavioral Biometrics and Source Code Stylometry: A Survey of Programmer Attribution},
  author={Marek Horvath and Emilia Pietrikova and Diomidis Spinellis},
  journal={arXiv preprint arXiv:2603.11150},
  year={2026},
  url={https://arxiv.org/abs/2603.11150}
}