RABot: Reinforcement-Guided Graph Augmentation for Imbalanced and Noisy Social Bot Detection

Source: arXiv:2602.21749 · Published 2026-02-25 · By Longlong Zhang, Xi Wang, Haotong Du, Yangyi Xu, Zhuo Liu, Yang Liu

TL;DR

This work addresses two challenges in social bot detection: severe class imbalance between bots and humans, and noisy graph topologies in which deceptive bot connections degrade Graph Neural Network (GNN) performance. The authors propose RABot, a multi-granularity framework that combines neighborhood-aware oversampling, which synthesizes minority-class embeddings, with reinforcement-learning-based adaptive edge filtering, which removes spurious edges from the social graph. This joint augmentation and denoising approach is architecture-agnostic and can be integrated with various GNN backbones.

Extensive experiments on three real-world social bot detection benchmarks (Cresci-15, Twibot-20, and MGTAB) and four different GNN architectures demonstrate that RABot consistently outperforms state-of-the-art baselines in both accuracy and F1-score. Notably, RABot boosts classification accuracy by over 2 percentage points on average when added to existing backbones, with gains especially pronounced on large noisy graphs like MGTAB. Ablation studies confirm the complementary contributions of the oversampling and edge-filtering modules. Further analyses reveal improved stability, data efficiency, and computational resource usage compared to prior methods.

Key findings

- RABot (RGT backbone) achieves 99.14±0.21% accuracy and 98.94±0.34% F1-score on Cresci-15, surpassing previous best (BECE) by 0.41 and 0.37 points respectively.

- On Twibot-20, RABot (RGT) reaches 87.92±0.12% accuracy and 88.40±0.28% F1-score, improving over the strongest baseline SEBot by 0.66 and 0.34 points.

- On the large, noisy MGTAB dataset, RABot (RGCN) obtains 91.16±0.31% accuracy and 89.03±0.28% F1-score, a gain of 0.85 and 0.93 points over prior SOTA.

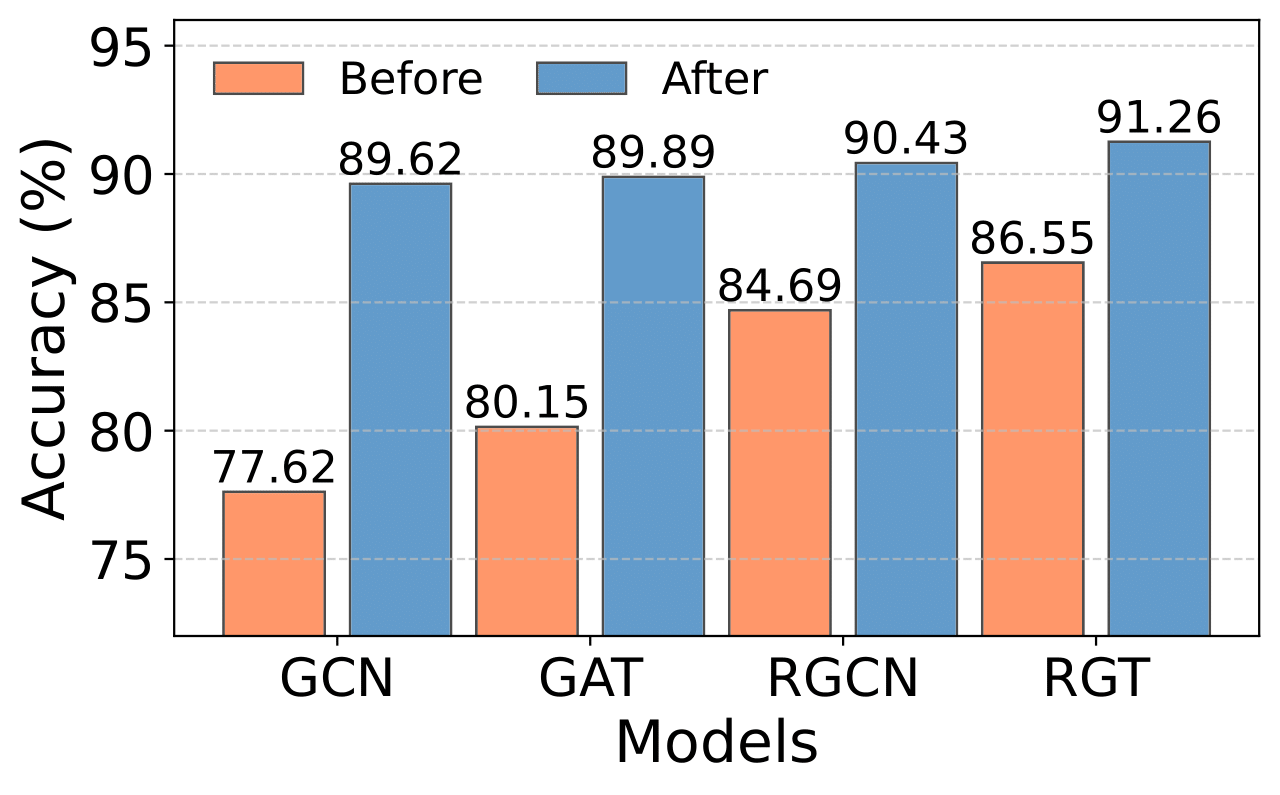

- RABot consistently improves all four GNN backbones, raising accuracy by an average of +2.99 (GCN), +2.74 (GAT), +1.51 (RGT), and +2.25 (RGCN) percentage points across datasets.

- Ablation shows that removing the edge-filtering module causes the largest single-component drop, up to −1.43% accuracy on MGTAB; feature augmentation is also crucial for class balance.

- Dynamic threshold adaptation via reinforcement learning outperforms fixed thresholds by ~0.6% accuracy on Twibot-20 and MGTAB.

- RABot trains about 4x faster than the best competitor (LMBot) on Twibot-20 and uses only 14% of its peak memory, even though RABot runs 300 epochs versus fewer for the others.

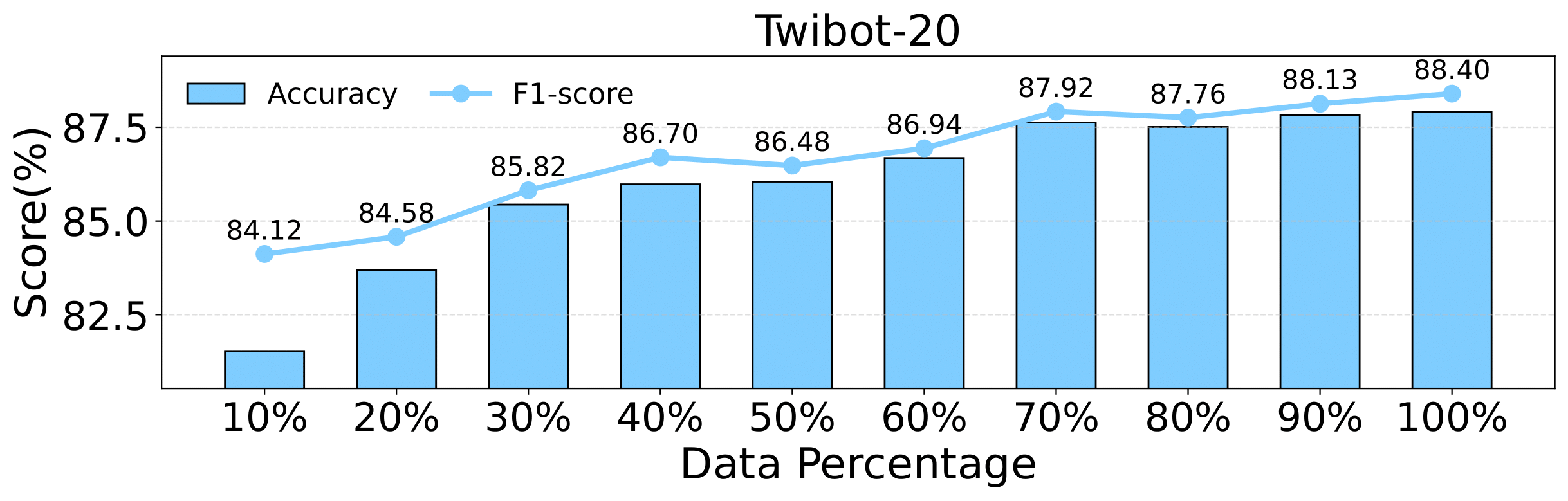

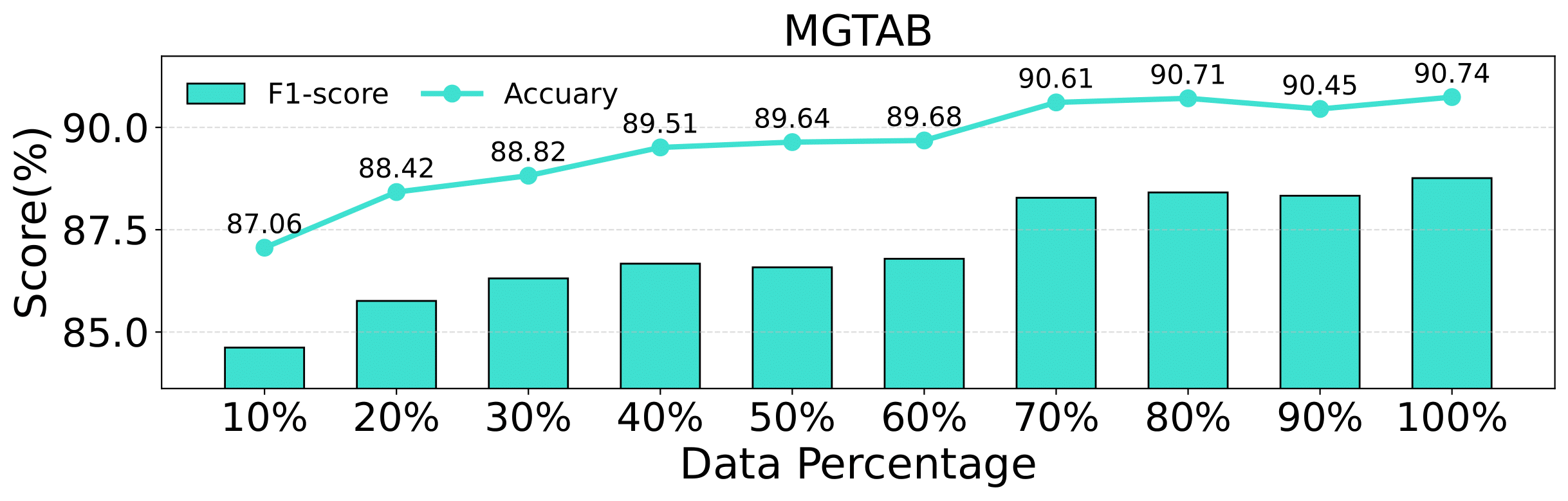

- RABot maintains strong performance under low supervision: trained on as little as 50-80% of the training data, it still surpasses the baselines on Twibot-20 and MGTAB.

Threat model

The adversary controls a minority subset of social network nodes representing bots with the capability to mimic human behavior and engineer deceptive edges to confuse detection. The adversary cannot fully control or modify majority nodes' behavior or the global graph topology. The defender aims to detect bots by learning from graph structure and node features, coping with noisy and imbalanced data.

Methodology — deep read

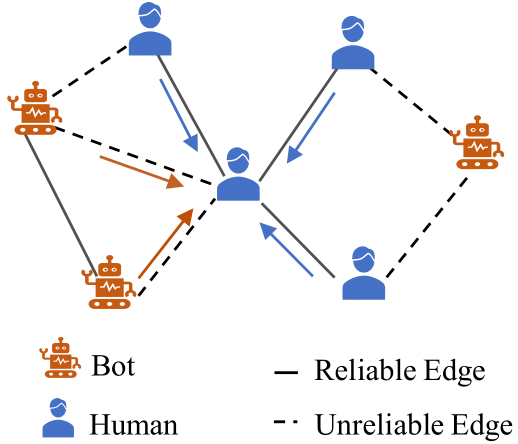

The threat model assumes an adversary controls a minority subset of nodes representing social bots, with capabilities to camouflage behavior and create deceptive edges in social graphs, making detection challenging due to severe class imbalance and noisy graph topology. The defender (RABot) aims to robustly differentiate bot and human nodes using graph-structured data.

Data: The method is evaluated on three public social bot detection benchmarks: Cresci-15 (~7K nodes), Twibot-20 (~229K nodes), and MGTAB (1.7M edges). Each contains user metadata, textual content (profile descriptions and tweets), and follower/friend relationships modeled as edges. 70%/20%/10% train/val/test splits are standard.

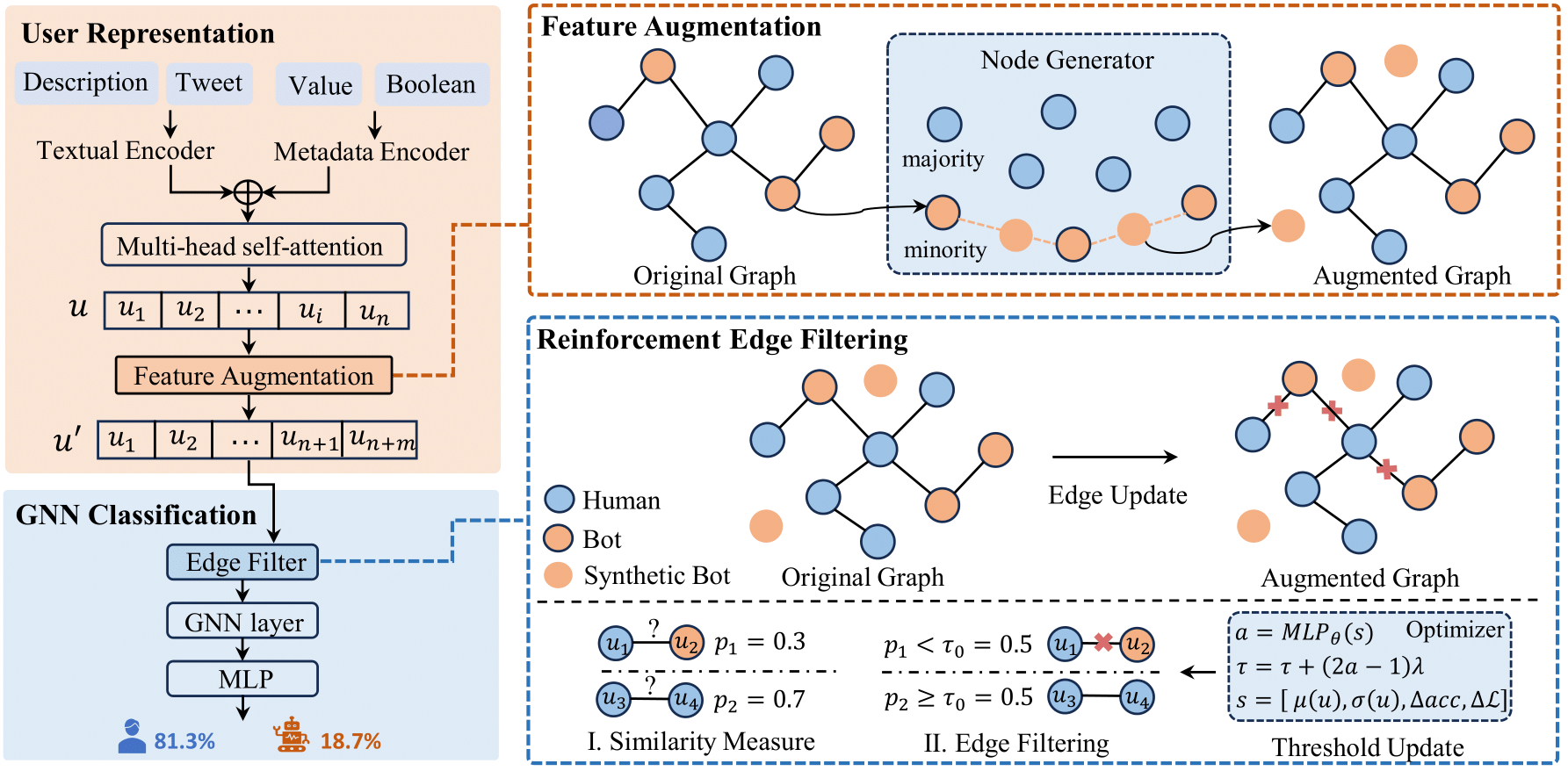

Architecture and Novel Components: RABot consists of four modules:

User Information Representation Module encodes heterogeneous features (numerical/boolean profile attributes plus textual content) into unified latent embeddings using MLPs and pretrained language models, fused through multi-head self-attention.

Feature Augmentation (Oversampling) Module synthesizes new minority-class node embeddings by linear interpolation in local latent subgraphs (neighbor-aware oversampling), balancing class proportions without noisy edge construction.

Reinforcement Edge Filtering dynamically prunes noisy or spurious edges during message passing. Similarity scores between connected nodes are computed from the ℓ1 distance between their label predictions, which are produced by small MLPs. A trainable threshold τ decides which edges to keep or drop. τ is adapted every T epochs using reinforcement learning: a small MLP policy network observes node feature means and variances together with recent changes in accuracy and loss, and adjusts τ accordingly.

GNN Classification Module applies a generic message-passing GNN (e.g., GCN, GAT, RGT, RGCN) to refined features and edges, outputting node-level probabilities trained via binary cross-entropy loss.
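To make the Reinforcement Edge Filtering step described above more concrete, here is a minimal sketch in plain Python. It rests on several assumptions that the summary does not confirm: an edge is kept when the ℓ1 distance between its endpoints' predicted label distributions is at most τ, and the threshold update is a simple hill-climbing stand-in rather than the paper's MLP policy network. The names `filter_edges` and `adapt_threshold` are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Edge = Tuple[int, int]


def l1_distance(p: Sequence[float], q: Sequence[float]) -> float:
    """l1 distance between two predicted label distributions."""
    return sum(abs(a - b) for a, b in zip(p, q))


def filter_edges(
    edges: List[Edge], preds: Dict[int, Sequence[float]], tau: float
) -> List[Edge]:
    """Keep an edge only if its endpoints' predictions are close.

    Assumption: "close" means l1 distance <= tau; the paper may use a
    different similarity transform or comparison direction.
    """
    return [(u, v) for u, v in edges if l1_distance(preds[u], preds[v]) <= tau]


def adapt_threshold(
    tau: float, step: float, acc_delta: float, lo: float = 0.0, hi: float = 2.0
) -> Tuple[float, float]:
    """Hill-climbing stand-in for the RL policy (NOT the paper's method).

    If accuracy dropped since the last update, reverse the direction of
    the step; then move tau by the step and clip it to [lo, hi]. The
    upper bound 2.0 is the largest possible l1 distance between two
    probability vectors.
    """
    if acc_delta < 0:
        step = -step
    new_tau = min(hi, max(lo, tau + step))
    return new_tau, step
```

In a training loop, `adapt_threshold` would be called once every T epochs with the change in validation accuracy, and `filter_edges` would be applied before each message-passing pass. A learned policy could replace the fixed step with an action chosen from the observed feature statistics.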

Training uses a multi-objective loss that combines classification losses on the original and augmented nodes with an edge-filtering loss, weighted by hyperparameters λs and λe. The Adam optimizer trains for 300 epochs at a learning rate of 0.001 on RTX A800 GPUs.
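One plausible reading of this objective is a plain weighted sum, sketched below. The exact form is not given in this summary, so treat the pairing of λs with the synthetic-node term and λe with the edge-filtering term as an assumption. The function names are hypothetical.

```python
import math
from typing import Sequence


def bce(y_true: Sequence[int], y_prob: Sequence[float], eps: float = 1e-12) -> float:
    """Mean binary cross-entropy over predicted bot probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


def total_loss(
    loss_orig: float,
    loss_syn: float,
    loss_edge: float,
    lambda_s: float,
    lambda_e: float,
) -> float:
    """Assumed combination: L = L_orig + lambda_s * L_syn + lambda_e * L_edge."""
    return loss_orig + lambda_s * loss_syn + lambda_e * loss_edge
```

Under this reading, setting `lambda_s` or `lambda_e` to zero switches off the corresponding auxiliary term, which is one simple way to run the kind of ablation the paper reports.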

Evaluation uses accuracy and F1-score averaged over 5 runs with different seeds. Ablation experiments disable components like attention, augmentation, edge filtering, or GNN classification. Comparisons include feature-based and graph-based state-of-the-art baselines. Data efficiency is tested by varying labeled training size from 10% to 100%. Threshold adaptation is compared to fixed values.
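The mean±std figures quoted throughout this summary can be produced from per-seed scores as sketched below. Whether the paper reports the sample or the population standard deviation is not stated; this sketch assumes the sample version, and the helper names are hypothetical.

```python
import statistics
from typing import Sequence, Tuple


def summarize_runs(scores: Sequence[float]) -> Tuple[float, float]:
    """Return (mean, sample standard deviation) over repeated runs."""
    mean = statistics.mean(scores)
    # stdev needs at least two values; a single run has no spread.
    std = statistics.stdev(scores) if len(scores) > 1 else 0.0
    return mean, std


def format_result(scores: Sequence[float], digits: int = 2) -> str:
    """Format scores in the 'mean±std' style used in result tables."""
    mean, std = summarize_runs(scores)
    return f"{mean:.{digits}f}±{std:.{digits}f}"
```

With only five seeds, the choice between `statistics.stdev` and `statistics.pstdev` changes the reported spread noticeably, so it is worth stating which one is used when comparing against the numbers above.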

Reproducibility: The paper does not mention public code release or frozen weights. Datasets are publicly available benchmarks.

Technical innovations

- Neighborhood-aware oversampling performing linear interpolation of minority class embeddings within local subgraphs to generate synthetic nodes, avoiding noisy edge construction.

- Reinforcement learning-guided adaptive thresholding for dynamic edge filtering, adjusting pruning strength based on observed node feature statistics and recent task metrics.

- Integration of similarity-based edge reliability estimation derived from label-prediction consistency rather than raw embedding distance, to better handle camouflage.

- Modular framework orthogonal to any GNN backbone, allowing seamless plug-in of augmentation and filtering modules to enhance diverse message-passing models.
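The neighborhood-aware oversampling in the first bullet resembles SMOTE restricted to graph neighbors. The sketch below is one way to realize it, under assumptions the summary does not confirm: the interpolation partner is a same-class neighbor of the anchor node, and the mixing coefficient is drawn uniformly from [0, 1). The function name and arguments are hypothetical.

```python
import random
from typing import Dict, List


def neighborhood_oversample(
    emb: Dict[int, List[float]],
    labels: Dict[int, int],
    adj: Dict[int, List[int]],
    n_new: int,
    minority: int = 1,
    seed: int = 0,
) -> List[List[float]]:
    """Synthesize minority embeddings by interpolating along graph edges.

    Each synthetic embedding lies on the segment between a minority node
    and one of its minority-class neighbors. No edges are created for
    the synthetic nodes.
    """
    rng = random.Random(seed)
    # Minority nodes mapped to their minority-class neighbors.
    partners = {
        u: [v for v in adj.get(u, []) if labels[v] == minority]
        for u in emb
        if labels[u] == minority
    }
    partners = {u: nb for u, nb in partners.items() if nb}
    if not partners:
        return []
    anchors = sorted(partners)
    synthetic = []
    for _ in range(n_new):
        u = rng.choice(anchors)
        v = rng.choice(partners[u])
        alpha = rng.random()  # interpolation coefficient in [0, 1)
        synthetic.append([a + alpha * (b - a) for a, b in zip(emb[u], emb[v])])
    return synthetic
```

Restricting partners to graph neighbors keeps synthetic points inside a local region of the embedding space, which is the intuition behind the "local subgraph" wording; a global SMOTE would pair any two minority nodes regardless of connectivity.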

Datasets

- Cresci-15 — ~7,000 nodes, social bot detection benchmark — publicly available

- Twibot-20 — ~229,000 nodes — publicly available

- MGTAB — large-scale dataset with 1.7 million edges — publicly available

Baselines vs proposed

- BECE on Cresci-15: Accuracy = 98.73%, F1 = 98.57% vs RABot (RGT): Accuracy = 99.14%, F1 = 98.94%

- SEBot on Twibot-20: Accuracy = 87.26%, F1 = 88.06% vs RABot (RGT): Accuracy = 87.92%, F1 = 88.40%

- BotRGCN on MGTAB: Accuracy = 89.27%, F1 = 86.07% vs RABot (RGCN): Accuracy = 91.16%, F1 = 89.03%

- GCN baseline overall: ~77-83% accuracy vs RABot (GCN): +2.81 to +4.73% accuracy gains across datasets

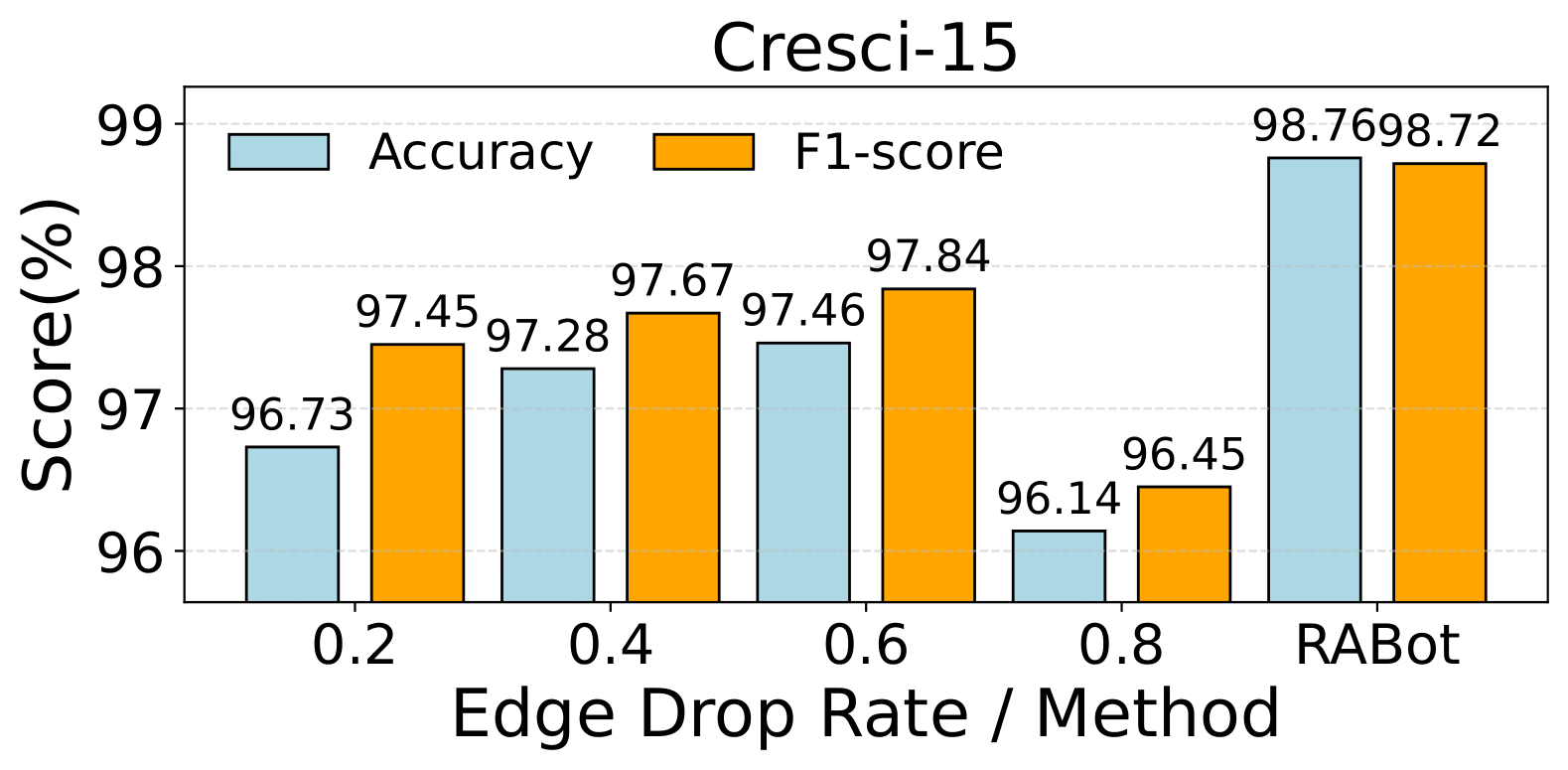

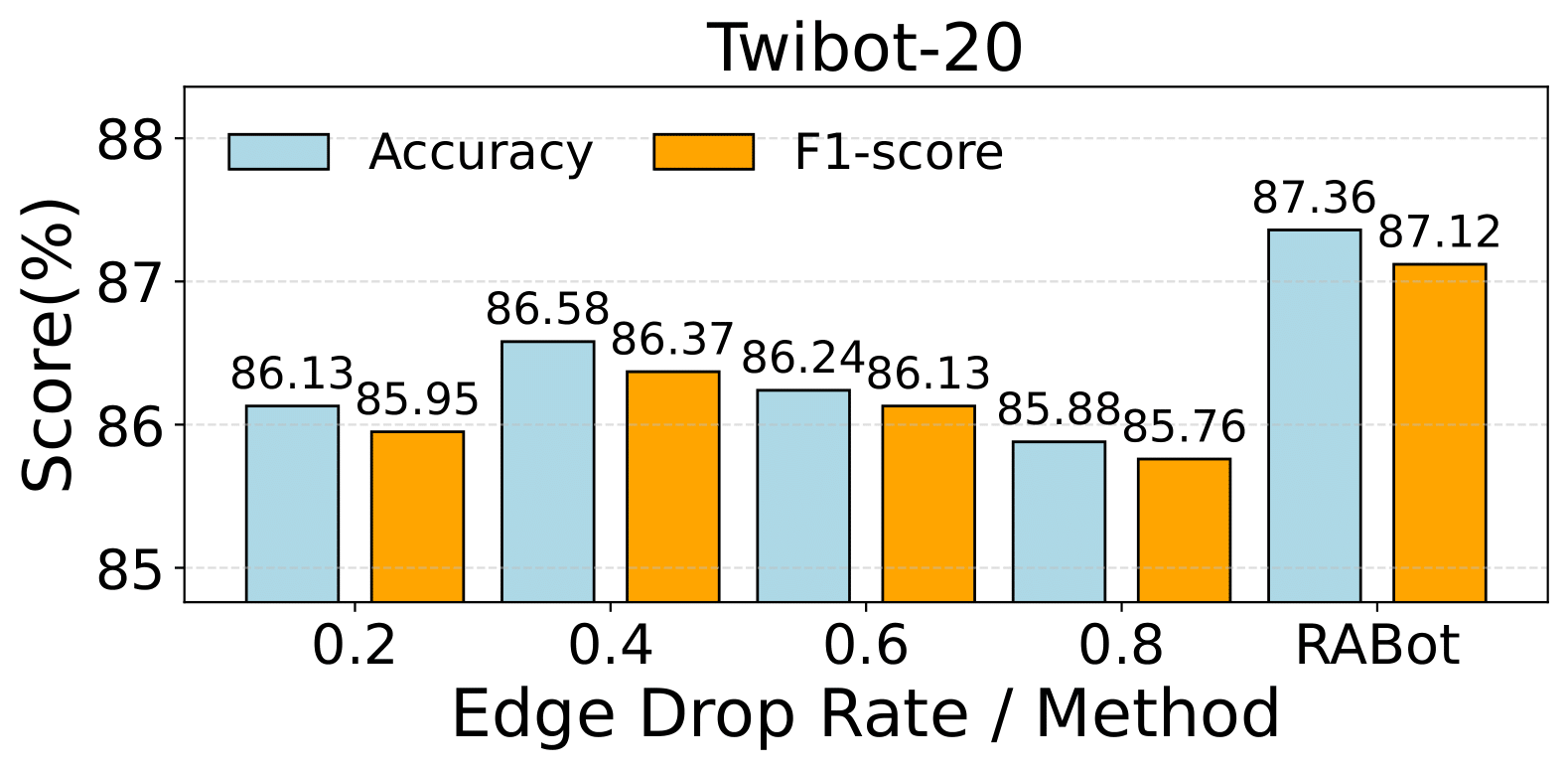

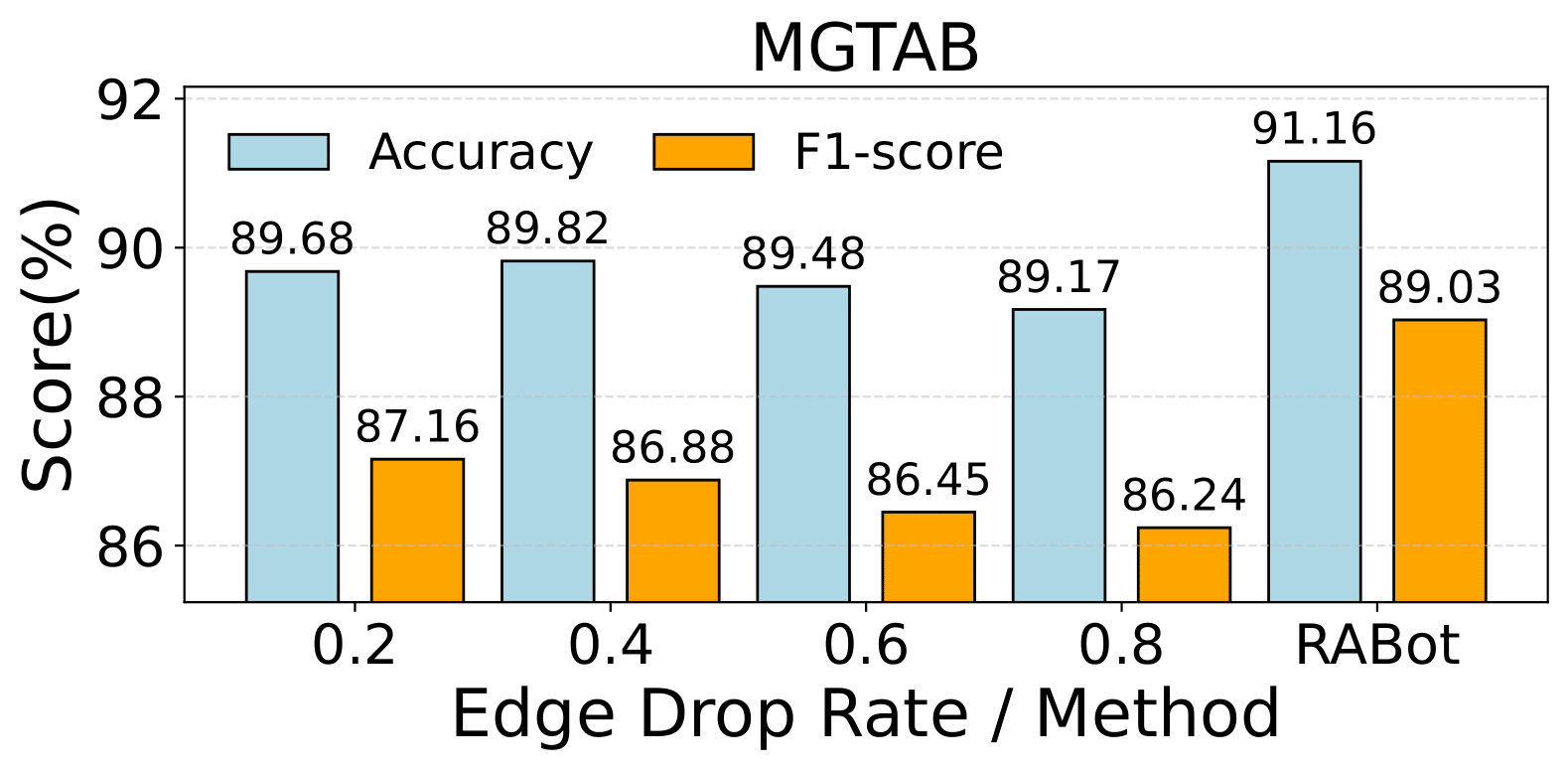

- Random edge removal baseline vs RABot edge-filtering: RABot consistently higher accuracy by ~1-2%

- RABot training time on Twibot-20: 5 min vs LMBot: 21 min, peak memory 2614M vs 18636M
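As a quick arithmetic check, the derived figures quoted in the key findings (about 4x faster, 14% of the memory, and the per-dataset gains) can be recomputed from the numbers in the list above. The variable names are illustrative; the values are copied from this summary.

```python
# Efficiency figures on Twibot-20, as reported above.
rabot_minutes, lmbot_minutes = 5, 21
rabot_mem, lmbot_mem = 2614, 18636  # peak memory, in the unit reported (M)

speedup = lmbot_minutes / rabot_minutes    # 4.2
memory_share = 100 * rabot_mem / lmbot_mem  # about 14.0 percent

# (baseline accuracy, baseline F1, RABot accuracy, RABot F1)
reported = {
    "Cresci-15 vs BECE": (98.73, 98.57, 99.14, 98.94),
    "Twibot-20 vs SEBot": (87.26, 88.06, 87.92, 88.40),
    "MGTAB vs BotRGCN": (89.27, 86.07, 91.16, 89.03),
}

# Gain in percentage points for accuracy and F1.
gains = {
    name: (round(r_acc - b_acc, 2), round(r_f1 - b_f1, 2))
    for name, (b_acc, b_f1, r_acc, r_f1) in reported.items()
}
```

The Cresci-15 and Twibot-20 gains reproduce the 0.41/0.37 and 0.66/0.34 points quoted earlier. The MGTAB gap over BotRGCN comes out at 1.89 and 2.96 points, which suggests that the 0.85 and 0.93 figures in the key findings are measured against a different, stronger baseline.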

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2602.21749.

Fig 1: Unreliable aggregation in social networks (left).

Fig 2: Overall structure of RABot model, which consists of four modules: user information representation module, feature

Fig 3 (page 3).

Fig 3: Performance of RABot with a RGCN backbone versus random edge removal of different drop rates on the Cresci-15,

Fig 4: Data-efficiency study. The model is trained on ran-

Fig 5: Sensitivity of RABot with a GAT backbone to the

Fig 7 (page 6).

Fig 8 (page 6).

Limitations

- No explicit adversarial evaluation; robustness against adaptive bot strategies evolving to circumvent augmentation or edge filtering remains untested.

- No cross-platform or temporal distribution shift analysis, so generalization to evolving social networks is unknown.

- Datasets focus primarily on Twitter or Twitter-like platforms; applicability to other social media or heterogeneous graphs is not shown.

- No code or model weights released, limiting reproducibility by third parties.

- Reliance on feature quality for neighborhood interpolation; embedding space interpolation quality depends on initial representation fidelity.

- Reinforcement learning threshold adaptation adds complexity; ablation does not isolate overhead or convergence challenges.

Open questions / follow-ons

- How robust is RABot against adaptive bot attacks that specifically target augmentation or edge filtering mechanisms?

- Can the reinforcement learning-based threshold adaptation be further optimized for faster convergence or reduced complexity?

- How does RABot generalize across social networks with different structural properties or on unseen platforms?

- Could the framework extend to multi-class or dynamic bot detection scenarios where bot behavior changes over time?

Why it matters for bot defense

For bot-defense engineers and CAPTCHA practitioners, RABot presents a principled approach to improving graph-based detection of automated accounts by addressing two key real-world challenges: class imbalance and noisy graph topology caused by sophisticated bots. The neighborhood-aware oversampling could inspire data augmentation strategies for minority attacker classes in interaction graphs, while RL-driven adaptive edge filtering demonstrates a viable path to dynamically pruning spurious signals that impede classifier learning.

Because RABot is architecture-agnostic and integrates as a modular enhancement to existing GNN pipelines, similar augmentation and filtering modules could improve resilience in CAPTCHA or bot detection systems that use interaction graphs or behavioral networks. Its gains in data efficiency and training speed may also help in production settings with large-scale social graphs. However, robustness under adversarial conditions and the potential overhead of the RL components need careful consideration.

Cite

@article{arxiv2602_21749,

title={RABot: Reinforcement-Guided Graph Augmentation for Imbalanced and Noisy Social Bot Detection},

author={Longlong Zhang and Xi Wang and Haotong Du and Yangyi Xu and Zhuo Liu and Yang Liu},

journal={arXiv preprint arXiv:2602.21749},

year={2026},

url={https://arxiv.org/abs/2602.21749}

}