Turing Test on Screen: A Benchmark for Mobile GUI Agent Humanization

Source: arXiv:2604.09574 · Published 2026-02-24 · By Jiachen Zhu, Lingyu Yang, Rong Shan, Congmin Zheng, Zeyu Zheng, Weiwen Liu et al.

TL;DR

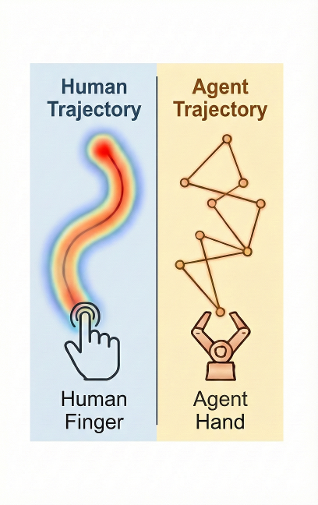

This paper addresses the emerging adversarial dynamic between autonomous GUI agents (software that interacts with mobile device interfaces) and platform defenses that seek to detect and block them. Existing research has emphasized utility (task success) and robustness against platform perturbations, yet has largely ignored anti-detection, that is, the human-likeness of agent behavior. The authors propose extending the classical Turing Test into the "Turing Test on Screen," where the core challenge is discriminating human from agent touch and sensor event sequences on mobile GUIs. They formalize this as a Min-Max game in which the detector tries to separate the two behaviors and the agent tries to minimize its behavioral divergence from humans.

To study this, the authors collect a high-fidelity dataset of touch and sensor dynamics from real humans and multiple state-of-the-art GUI agents across 21 apps and diverse user demographics. Through detailed feature analysis, they identify precisely why vanilla LMM agents fail the humanization test: overly linear trajectories, unrealistic tap duration distributions, and unnatural timing intervals. They establish the Agent Humanization Benchmark (AHB) with new detection metrics quantifying the imitability-utility trade-off. Then, they propose four humanization strategies—from heuristic B-spline noise smoothing to data-driven trajectory history matching and temporal behavior adjustments—that substantially reduce detectability while preserving task performance.

Their experiments show that raw agents can be detected by XGBoost and SVM with >98% accuracy, whereas humanized agents reduce detection accuracy to near-random on some feature dimensions. Data-driven history matching outperforms heuristic smoothing on imitability. Fake action injection masks temporal patterns but may degrade utility, highlighting complex trade-offs. Overall, this paper shifts the focus from pure agent functionality to how agents perform within adversarial human-centric GUIs, laying theoretical and empirical groundwork for seamless agent coexistence in hostile digital ecosystems.

Key findings

- Vanilla LMM-based GUI agents are trivially detectable by SVM and XGBoost classifiers with detection accuracies >98% across multiple app domains.

- Human touch and sensor event dynamics differ fundamentally from agents in trajectory curvature, tap durations (Gaussian 0.05-0.10s for humans vs near-zero spikes for agents), and action intervals (long-tail human distribution vs fixed agent latencies up to 50-80s).

- The Agent Humanization Benchmark (AHB) quantifies imitability as inverse detection accuracy and utility as task success rate, enabling trade-off evaluation.

- The History Matching humanization strategy reduces XGBoost detection accuracy from 0.9817 (B-spline) to 0.9450 in the Social Media cluster, a drop of about 3.7 percentage points, suggesting that data-driven behavioral mimicking is more effective than heuristic smoothing.

- Fake Action injection neutralizes temporal interval rules, reducing detection accuracy to ∼0.52 (random guess), but degrades online task utility significantly (e.g. down to 15% success in trip planning).

- Long Press humanization reduces tap duration detection accuracy from 0.9840 to 0.6133 by fitting tap times to human Gaussian distribution.

- Certain features like endpoint coordinate precision (endY) and action intervals remain hardest to humanize due to direct conflict between imitability and task utility.

- Detection accuracy plateaus at 5-10 features for raw agents but remains significantly higher for humanized agents across 24 features, indicating persistent stylistic differences even after humanization.
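The Long Press finding in the list above rests on sampling tap hold times from a Gaussian fitted to human taps. A minimal sketch of that idea follows. The mean, standard deviation, and floor are illustrative assumptions chosen so that roughly two standard deviations span the 0.05-0.10 s range quoted above; they are not the paper's fitted values, and the function names are hypothetical.

```python
import random

# Assumed parameters (not from the paper): mean +/- 2 sigma covers 0.05-0.10 s.
HUMAN_TAP_MEAN_S = 0.075
HUMAN_TAP_STD_S = 0.0125
MIN_TAP_S = 0.02  # assumed floor so a rare low draw never yields a near-zero tap


def sample_tap_duration(rng: random.Random) -> float:
    """Draw one tap hold time in seconds from a Gaussian, clamped at a floor."""
    return max(MIN_TAP_S, rng.gauss(HUMAN_TAP_MEAN_S, HUMAN_TAP_STD_S))


def humanize_taps(n_taps: int, seed: int = 0) -> list[float]:
    """Return n_taps sampled hold times using a seeded generator."""
    rng = random.Random(seed)
    return [sample_tap_duration(rng) for _ in range(n_taps)]


if __name__ == "__main__":
    durations = humanize_taps(5)
    print([round(d, 3) for d in durations])
```

A wrapper would hold the touch down for the sampled duration before releasing, replacing the near-zero spikes that make raw agent taps easy to flag.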

Threat model

The adversary is the platform operator who possesses the ability to monitor all GUI event streams including precise motion and sensor data generated by users or agents. They deploy rule-based or machine learning detectors to classify interaction patterns as human or agent outputs. The adversary cannot directly modify or access the internal model parameters of the agent but can react by blocking, imposing login challenges, or injecting noise to thwart agent activity once detected. The agent’s goal is to evade detection by mimicking subtle human biomechanics while maintaining functional utility. Attacks focused on altering underlying UI semantics or causing denial-of-service are out of scope.

Methodology — deep read

Threat Model & Assumptions: The adversary is the platform deploying detector models (rule-based heuristics and ML classifiers) to distinguish human from agent interaction traces on mobile GUIs. The agent is an autonomous GUI agent based on Large Multimodal Models (LMMs) that generate sequences of logical UI commands triggering physical event streams (touch and sensor events). The platform cannot directly access the agent internals but observes the event stream $E_{1:T}$. The agent aims to minimize detection probability while maintaining task success.

Data: The authors collect a large-scale dataset spanning 21 popular mobile apps categorized into 5 domains such as social media, shopping, trip planning. The dataset contains granular MotionEvent (e.g., touch coordinates, pressure) and SensorEvent (e.g., gyroscope, magnetometer) sequences from both humans (4 demographic subgroups) and multiple state-of-the-art LMM-based agents (UI-TARS, MobileAgent variants, AgentCPM, AutoGLM). 24 statistical biomechanical features are extracted per sequence, covering kinematics, geometry, and temporal dynamics. Data splits and preprocessing details are referenced (Appendix), with comprehensive exploratory analysis presented.
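To make the feature extraction step concrete, here is a small sketch that computes a few trajectory and timing statistics of the kind described above (geometry, kinematics, temporal dynamics). The three features and all names (`TouchPoint`, `extract_features`, `action_intervals`) are illustrative assumptions; the paper's actual 24 features are defined in its appendix and are not reproduced here.

```python
import math
from dataclasses import dataclass


@dataclass
class TouchPoint:
    t: float  # timestamp in seconds
    x: float  # screen x in pixels
    y: float  # screen y in pixels


def path_length(points: list[TouchPoint]) -> float:
    """Sum of distances between consecutive samples."""
    return sum(math.hypot(b.x - a.x, b.y - a.y) for a, b in zip(points, points[1:]))


def extract_features(points: list[TouchPoint]) -> dict[str, float]:
    """Compute a few illustrative per-gesture features."""
    length = path_length(points)
    chord = math.hypot(points[-1].x - points[0].x, points[-1].y - points[0].y)
    duration = points[-1].t - points[0].t
    return {
        # 1.0 means a perfectly straight swipe, typical of raw agents
        "straightness": chord / length if length > 0 else 1.0,
        "duration_s": duration,
        "mean_speed_px_s": length / duration if duration > 0 else 0.0,
    }


def action_intervals(action_start_times: list[float]) -> list[float]:
    """Gaps between consecutive actions, the basis of interval features."""
    return [b - a for a, b in zip(action_start_times, action_start_times[1:])]
```

A detector would stack such values into one fixed-length vector per gesture or session before classification.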

Architecture/Algorithm: They formalize the "Turing Test on Screen" as a Min-Max game between detector $D_\Theta$ and agent $G_\Phi$. The detector models are rule-based heuristics and ML classifiers (SVM, XGBoost) trained on the 24-dimensional feature vectors. The agent is abstracted as a policy generating logical actions which map to physical event streams. Humanization is achieved via an External Wrapper module H that post-processes raw agent actions into humanized sequences, independent of the underlying agent model. Four strategies are proposed: (a) heuristic noise injection via B-spline smoothing of linear swipes, (b) data-driven history matching by affine-transforming real human trajectories matched by task vector, (c) injection of fake micro-actions to mask long inter-action intervals, and (d) sampling tap durations from a Gaussian fitted to human taps. The paper includes theoretical bounds linking Jensen-Shannon divergence minimization to effective humanization.
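One common way to write such a detector-versus-agent game is the GAN-style objective below. This is a sketch under the assumption that the paper's formulation resembles the standard one; its exact objective and bounds may differ. The symbols $p_H$ (distribution of human event streams) and $p_{G_\Phi}$ (distribution of event streams emitted by the agent, including any wrapper) are notation introduced here for illustration.

```latex
\min_{\Phi}\,\max_{\Theta}\; V(D_\Theta, G_\Phi)
  = \mathbb{E}_{e \sim p_H}\big[\log D_\Theta(e)\big]
  + \mathbb{E}_{e \sim p_{G_\Phi}}\big[\log\big(1 - D_\Theta(e)\big)\big]

% For a fixed agent, the optimal detector and the resulting value are
D^{*}(e) = \frac{p_H(e)}{p_H(e) + p_{G_\Phi}(e)}, \qquad
V(D^{*}, G_\Phi) = 2\,\mathrm{JSD}\big(p_H \,\|\, p_{G_\Phi}\big) - \log 4
```

Under this standard form, an agent facing the best possible detector lowers the game value only by shrinking the Jensen-Shannon divergence between its event distribution and the human one, which is the intuition behind tying humanization to divergence minimization.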

Training Regime: Detectors are trained using supervised learning on labeled human vs agent data with 24 motion features. Standard SVM and XGBoost optimization are used; hyperparameters and training epochs are not explicitly detailed. Humanization wrappers operate at runtime or offline post-processing. Experiments consider both online (real-time) and offline (post-hoc) evaluation modes.

Evaluation Protocol: Detection accuracy is the main metric for imitability, with 1.0 meaning perfect distinguishability and 0.5 meaning random guessing (successful humanization). Utility is measured as task success rate per app domain. They evaluate against a hierarchy of detectors: rule-based checks, SVM, and XGBoost classifiers. Ablations vary humanization strategies singly and in combination. Feature-level analysis identifies the easiest and hardest features to humanize, supported by ROC thresholding and controlled experiments (e.g., Fig 4 and 5). Distribution shifts across five application clusters are tested.
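The metric described above can be illustrated with a short sketch. `detection_accuracy` is the plain fraction of correct human/agent predictions. `imitability_score` is a hypothetical normalization introduced here (1.0 at chance level, 0.0 at perfect detection); the paper's exact imitability definition may differ.

```python
def detection_accuracy(labels: list[int], predictions: list[int]) -> float:
    """Fraction of traces whose human (0) / agent (1) label was predicted correctly."""
    if len(labels) != len(predictions) or not labels:
        raise ValueError("labels and predictions must be non-empty and equal length")
    correct = sum(1 for y, p in zip(labels, predictions) if y == p)
    return correct / len(labels)


def imitability_score(accuracy: float) -> float:
    """Assumed normalization: 1.0 when the detector is at chance (0.5),
    0.0 when it separates perfectly. Accuracy far below 0.5 also counts as
    distinguishable, since flipping the detector's output would recover it."""
    return 1.0 - 2.0 * abs(accuracy - 0.5)


if __name__ == "__main__":
    # Values quoted in this summary for tap-duration detection
    for name, acc in [("raw", 0.9840), ("long press", 0.6133)]:
        print(name, round(imitability_score(acc), 4))
```

Reporting this score next to task success rate per app domain gives the two axes of the imitability-utility trade-off.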

Reproducibility: The authors provide public code and dataset (links included). Full details of feature extraction, detector implementation, and humanization algorithms are documented in appendices. Some implementation details of LMM agents are from referenced prior work. The dataset includes 21 apps, 4 human demographics, and multiple agent models.

Concrete Example: For one social media app cluster, raw agent swipes are detected by XGBoost at 98.17% accuracy. Applying the history matching humanization strategy offline reduces detection accuracy to 94.50%, a reduction of about 3.7 percentage points that leaves the agent still highly detectable by this classifier. Concurrently, injecting fake actions shifts interval distributions closer to the human distribution but lowers online task utility to about 45-60%, illustrating the imitability-utility trade-off. Combining multiple humanization strategies requires careful balance to avoid introducing new detection artifacts.
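The timing side of fake action injection can be sketched as follows: whenever the gap between two real actions exceeds a threshold, filler events are inserted so that no observed interval stays suspiciously long. The threshold, the uniform spacing rule, and the function name are assumptions made for illustration. The sketch does not decide which fake action is safe to perform on the current screen, which is the part that affects task utility.

```python
import random


def inject_fake_actions(
    timestamps: list[float], max_gap_s: float = 3.0, seed: int = 0
) -> list[tuple[float, bool]]:
    """Return (timestamp, is_fake) pairs with fillers added inside long gaps.

    Real actions keep their original timestamps; fillers are spaced by a
    random step in [0.5 * max_gap_s, max_gap_s] (an assumed spacing rule).
    """
    if not timestamps:
        return []
    rng = random.Random(seed)
    out: list[tuple[float, bool]] = []
    for prev, nxt in zip(timestamps, timestamps[1:]):
        out.append((prev, False))
        t = prev
        # Keep inserting until the remaining gap is within the threshold
        while nxt - t > max_gap_s:
            t += rng.uniform(0.5 * max_gap_s, max_gap_s)
            out.append((t, True))
    out.append((timestamps[-1], False))
    return out


if __name__ == "__main__":
    # A 60 s inference pause between the second and third real action
    events = inject_fake_actions([0.0, 1.0, 61.0])
    print(sum(1 for _, fake in events if fake), "filler events inserted")
```

Because fillers are real touches, each one risks changing UI state, which is consistent with the utility drop reported for this strategy.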

Technical innovations

- Formalization of GUI agent detection as a Min-Max adversarial game modeling the "Turing Test on Screen" interaction between platform detector and agent.

- Development of the Agent Humanization Benchmark (AHB) providing standardized imitability and utility metrics quantifying the trade-off between successful human likeness and task performance.

- A novel corpus of high-fidelity user interaction data capturing MotionEvent and SensorEvent sequences from diverse humans and state-of-the-art LMM agents across 21 mobile applications.

- Introduction of data-driven history matching humanization strategy that aligns agent trajectories with recorded human trajectories via affine transformations, improving imitability beyond prior heuristic noise methods.

- Comprehensive feature-level analysis revealing distinct biomechanical and temporal touch dynamics that differentiate raw and humanized agent behavior, highlighting persistent detection bottlenecks.
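The history matching idea listed above can be sketched in a few lines: pick a recorded human swipe whose displacement is close to the one the agent needs, then map it onto the required start and end points. The sketch uses a similarity transform (rotation, uniform scale, translation), which is one special case of an affine transform, and a nearest-displacement lookup as a stand-in for the paper's task-vector matching. Both choices and all function names are assumptions, not the authors' implementation.

```python
Point = tuple[float, float]


def nearest_by_displacement(library: list[list[Point]], start: Point, end: Point) -> list[Point]:
    """Pick the recorded path whose start-to-end vector is closest to the target's."""
    target = complex(*end) - complex(*start)

    def mismatch(path: list[Point]) -> float:
        return abs((complex(*path[-1]) - complex(*path[0])) - target)

    return min(library, key=mismatch)


def fit_to_endpoints(human_path: list[Point], start: Point, end: Point) -> list[Point]:
    """Map a human path so its first and last points land on start and end.

    Points are treated as complex numbers z; the map z -> a * z + b rotates,
    scales, and translates while preserving the path's shape.
    """
    h0, h1 = complex(*human_path[0]), complex(*human_path[-1])
    if h0 == h1:
        raise ValueError("human path has zero displacement")
    a = (complex(*end) - complex(*start)) / (h1 - h0)
    b = complex(*start) - a * h0
    mapped = [a * complex(x, y) + b for x, y in human_path]
    return [(z.real, z.imag) for z in mapped]


if __name__ == "__main__":
    recorded = [[(0.0, 0.0), (10.0, 40.0), (0.0, 100.0)],
                [(0.0, 0.0), (50.0, 5.0), (100.0, 0.0)]]
    start, end = (100.0, 200.0), (100.0, 400.0)
    path = fit_to_endpoints(nearest_by_displacement(recorded, start, end), start, end)
    print(path)
```

Reusing a real recording preserves its curvature and jitter, which is the property that hand-tuned noise struggles to reproduce.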

Datasets

- Agent Humanization Benchmark dataset: exact size unspecified; described as large-scale, covering 21 applications, multiple user demographics, and multiple agent models. Publicly released at https://huggingface.co/datasets/lyyang2766/Passing-the-Turing-Test-on-Screen-Agent-Humanization-Benchmark/tree/main

Baselines vs proposed

- XGBoost detection accuracy (Social Media cluster): raw agent (RAW) = 0.9817 vs History Matching (offline) = 0.9450

- SVM detection accuracy (Social Media cluster): raw agent (RAW) = 0.9969 vs History Matching (offline) = 0.7190

- XGBoost detection accuracy (Social Media cluster): B-spline smoothing (heuristic) = 0.9817 vs History Matching = 0.9450

- Interval-rule detection accuracy: raw agent = 1.0 vs Fake Action injection ≈ 0.52

- Tap-duration detection accuracy (Shopping cluster): raw agent = 0.9840 vs Long Press humanization = 0.6133

- Utility (task success rate) drops from 0.75 to 0.15 in Trip Planning cluster when applying Fake Action humanization

- Combined humanization strategies often reduce detection accuracy but may increase detectability in other feature dimensions due to uncoordinated artifacts
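For reference, the heuristic B-spline baseline compared in the list above can be sketched as follows. Interior control points are placed on the straight swipe and pushed sideways by random offsets, then a clamped uniform cubic B-spline is sampled through them. The number of control points, the jitter size, and the function names are illustrative assumptions rather than the paper's settings.

```python
import math
import random

Point = tuple[float, float]


def _bspline_point(p0: Point, p1: Point, p2: Point, p3: Point, u: float) -> Point:
    """Evaluate one uniform cubic B-spline segment at u in [0, 1]."""
    b0 = (1 - u) ** 3 / 6
    b1 = (3 * u**3 - 6 * u**2 + 4) / 6
    b2 = (-3 * u**3 + 3 * u**2 + 3 * u + 1) / 6
    b3 = u**3 / 6
    return (b0 * p0[0] + b1 * p1[0] + b2 * p2[0] + b3 * p3[0],
            b0 * p0[1] + b1 * p1[1] + b2 * p2[1] + b3 * p3[1])


def smooth_swipe(start: Point, end: Point, n_interior: int = 3,
                 jitter_px: float = 20.0, samples_per_segment: int = 8,
                 seed: int = 0) -> list[Point]:
    """Turn a straight swipe into a gently curved one that keeps its endpoints."""
    dx, dy = end[0] - start[0], end[1] - start[1]
    length = math.hypot(dx, dy)
    if length == 0:
        return [start]
    nx, ny = -dy / length, dx / length  # unit normal to the swipe direction
    rng = random.Random(seed)
    ctrl = [start]
    for i in range(1, n_interior + 1):
        f = i / (n_interior + 1)
        off = rng.uniform(-jitter_px, jitter_px)
        ctrl.append((start[0] + f * dx + off * nx, start[1] + f * dy + off * ny))
    ctrl.append(end)
    # Repeating each end control point three times clamps the curve to it
    padded = [ctrl[0]] * 2 + ctrl + [ctrl[-1]] * 2
    path = []
    for i in range(len(padded) - 3):
        for s in range(samples_per_segment):
            path.append(_bspline_point(*padded[i:i + 4], s / samples_per_segment))
    path.append(_bspline_point(*padded[-4:], 1.0))
    return path
```

Because the curve stays inside the convex hull of its control points, the sideways deviation never exceeds `jitter_px`, which keeps the swipe on target but also limits how human the shape can look.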

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2604.09574.

Fig 1: The adversarial landscape between GUI Agents and Mobile Platforms. The figure illustrates three key stages:

Figs 2-8 (page 2).

Limitations

- Humanization strategies induce trade-offs between reducing detectability and preserving task utility, with some methods (e.g., Fake Action) causing significant performance degradation.

- Temporal behavior modeling remains challenging due to persistent agent inference latencies and lack of UI contextual awareness for safe fake action injection.

- Endpoint precision and coordinate noise remain hard to humanize without risking task failure, indicating fundamental constraints tied to GUI layout.

- How well the detectors and humanization strategies generalize to unseen apps or user demographics is unclear; distribution shifts beyond the tested clusters are not exhaustively evaluated.

- Detection models rely on 24 heuristic features; more complex or future detectors using other behavioral modalities may require different humanization adaptations.

- The agent models tested are state-of-the-art but relatively few; further evaluation with diversified agent architectures is needed to validate universality.

Open questions / follow-ons

- How to design lightweight, UI-aware guard agents capable of safely inserting fake actions without incurring latency or task disruption?

- Can end-to-end agent architectures be trained jointly for task utility and human-like behavior to natively reduce detectability?

- What are the limits of temporal synchronization in humanization given inherent LMM inference overheads?

- How will detection and humanization strategies evolve under future multi-modal sensor fusion detectors beyond touch dynamics?

Why it matters for bot defense

This work is highly relevant to bot-defense and CAPTCHA practitioners tasked with discriminating human users from sophisticated autonomous agents operating on mobile interfaces. The formalization of the detection problem as a Min-Max game and introduction of a detailed benchmark with fine-grained behavioral features provides a rigorous framework for evaluation. Practitioners can leverage the proposed detection metrics and feature analyses to harden their systems against emerging LMM-powered bots exhibiting humanization attempts. Conversely, understanding the proposed humanization techniques can inform the design of more robust detectors that consider multi-dimensional biomechanical and temporal signatures. Finally, the observed imitability-utility trade-offs highlight the importance of multi-faceted detection strategies combining heuristic and learned features to prevent stealthy agent infiltration in user-facing GUIs.

Cite

@article{arxiv2604_09574,
  title={Turing Test on Screen: A Benchmark for Mobile GUI Agent Humanization},
  author={Jiachen Zhu and Lingyu Yang and Rong Shan and Congmin Zheng and Zeyu Zheng and Weiwen Liu and Yong Yu and Weinan Zhang and Jianghao Lin},
  journal={arXiv preprint arXiv:2604.09574},
  year={2026},
  url={https://arxiv.org/abs/2604.09574}
}