Next-Gen CAPTCHAs: Leveraging the Cognitive Gap for Scalable and Diverse GUI-Agent Defense

Source: arXiv:2602.09012 · Published 2026-02-09 · By Jiacheng Liu, Yaxin Luo, Jiacheng Cui, Xinyi Shang, Xiaohan Zhao, Zhiqiang Shen

TL;DR

Next-Gen CAPTCHAs is a defense-oriented CAPTCHA benchmark and generation framework for GUI agents, motivated by the claim that modern multimodal, computer-use agents have already broken many current logic-style CAPTCHAs. The paper’s core idea is not to make existing puzzles harder in the usual “more reasoning” sense, but to design tasks that exploit persistent human–agent gaps in interactive perception, latent-state maintenance, and low-level action execution. The authors frame this as a cognitive-gap problem inside an extended POMDP for GUI interaction, where the agent must ground observations, maintain workspace state across steps, and execute browser actions correctly under partial observability.

The paper contributes 27 newly designed CAPTCHA families, a procedural generation and automatic-verification pipeline, and a real-web evaluation platform. The benchmark is a sampled snapshot of a larger continuously generative system: the main test set contains 519 puzzles, plus a smaller 135-puzzle subset for low-budget testing. In their experiments, humans reportedly solve the tasks at near ceiling, while several frontier MLLM-backed GUI-agent stacks stay in the low single digits on average Pass@1. The main result is that these CAPTCHA families appear to preserve a substantial human–agent gap even for reasoning-heavy models that already solve prior logic CAPTCHAs effectively.

Key findings

- Humans achieved 98.8% Avg Pass@1 on the benchmark, with an average completion time of 31 s/CAPTCHA, while the best evaluated agent backbone (GPT-5.2-xHigh) reached only 5.9% Avg Pass@1.

- On the 519-puzzle main benchmark under Browser-Use, GPT-5.2-xHigh scored 5.9% Pass@1, Gemini-3-Flash-High 3.2%, Claude-Opus4.5-Extended-ThinkingHigh 3.0%, Gemini-3-Pro-High 1.3%, Doubao-Seed-1.8-Thinking-HighEffort 1.3%, and Qwen3-VL-Plus-ThinkingHigh 0.9%.

- A lightweight filter retained CAPTCHA families only if Gemini 3 Flash stayed below 30% Pass@1 on a 20-instance pilot set while humans stayed above 90% success on 10 sampled pilot instances; this yielded 27 families.

- The authors say 25 of the 27 families are fully script-generated with automatic checking; 2 families use curated human-crafted instances.

- On the 135-puzzle subset, framework ablation with Claude-Opus4.5 as backbone/protocol reported CrewAI at 0.00% overall Pass@1, Browser-Use at 1.48%, and Claude Cowork at 4.44%.

- Thinking-mode ablation on Gemini-3-Flash improved Pass@1 only modestly: 0.6% (Minimal), 1.9% (Low), 2.5% (Medium), and 3.2% (High), while reasoning-token usage increased from none to 5.3K/12.9K/18.5K tokens.

- The paper reports GPT-5.2-xHigh incurred about $3,122 total API cost on the evaluation set while still only reaching 5.9% Pass@1, which the authors use to argue for unfavorable attacker economics.

- For current CAPTCHA families, Fig. 4 reports non-trivial correlations between success and trajectory metrics, but for Next-Gen CAPTCHAs these correlations are near zero, suggesting success is not explained by longer deliberation or more steps.

Threat model

The adversary is a GUI-enabled automated agent, typically a frontier multimodal model wrapped in a browser-control framework, attempting to solve live CAPTCHA challenges via the same visual and interaction channels a human user sees. It can screenshot, read limited DOM metadata, reason over the task, and issue click/scroll/type/drag actions, potentially with additional internal thinking budget and API spending. It cannot access hidden ground truth, the verifier logic except through observable behavior, or privileged annotations beyond the page’s exposed interface. The defender assumes the attacker may be strong at static perception and textual reasoning but remains brittle in spatial grounding, memory across steps, and low-level action selection under UI constraints.

Methodology — deep read

The threat model is a GUI-enabled agent trying to solve live web CAPTCHAs through the same browser surface a human would use. The paper’s extended POMDP formulation makes the assumptions explicit: the agent observes a webpage state through a limited observation channel, maintains an internal workspace, and chooses both browser actions (click/scroll/type/drag) and internal deliberation. The defender’s goal is the usual CAPTCHA objective—easy for humans, hard for automated solvers—but the paper sharpens that into exploiting a persistent cognitive gap in perception, memory, decision-making, and action. The attacker is assumed to have strong multimodal perception and reasoning, including frontier MLLMs and GUI agents, but not privileged access to hidden ground truth or backend-only verifier internals beyond what the interface exposes. The main experiments use Browser-Use as the reference integration, with the page observation restricted to a screenshot, lightweight metadata, and a filtered DOM-derived interaction view; the authors explicitly say they do not provide extra overlay/SoM annotation channels.

The data pipeline starts from a set of candidate CAPTCHA task families designed around specific human–agent gaps: scene-structure inference, temporal integration, numerosity/discrete invariants, latent-state tracking, and perception-to-action alignment. For each family they implemented a full task end-to-end, including a web interface and, where applicable, a procedural instance generator whose puzzle rules and solution are encoded directly into generation so that correctness is guaranteed by construction. Before model filtering, they manually checked that the tasks were interpretable, human-friendly, and usable as CAPTCHAs, then produced a small pilot set: 20 generated instances per generative family, and comparable curated examples for the few non-generated ones. They then used Gemini 3 Flash as a stress-test model and retained only families where the model stayed below 30% Pass@1 on the pilot set while a sampled human subset exceeded 90% success. This curation produced 27 vision-language CAPTCHA families, of which 25 are script-generated with automatic checking and 2 are curated. From those families they sampled a main benchmark of 519 puzzles and a budget subset of 135 puzzles (5 per family).

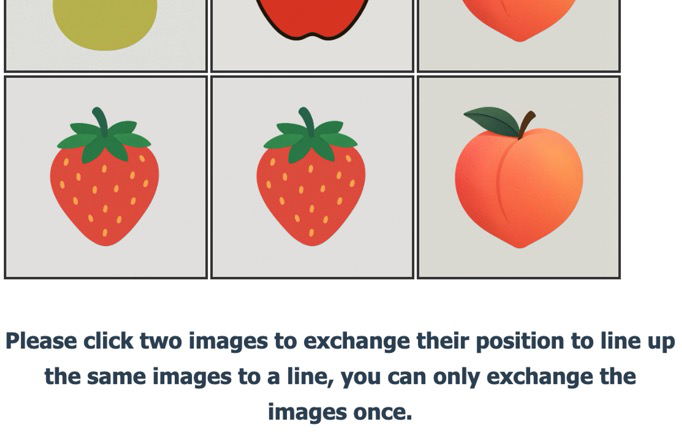

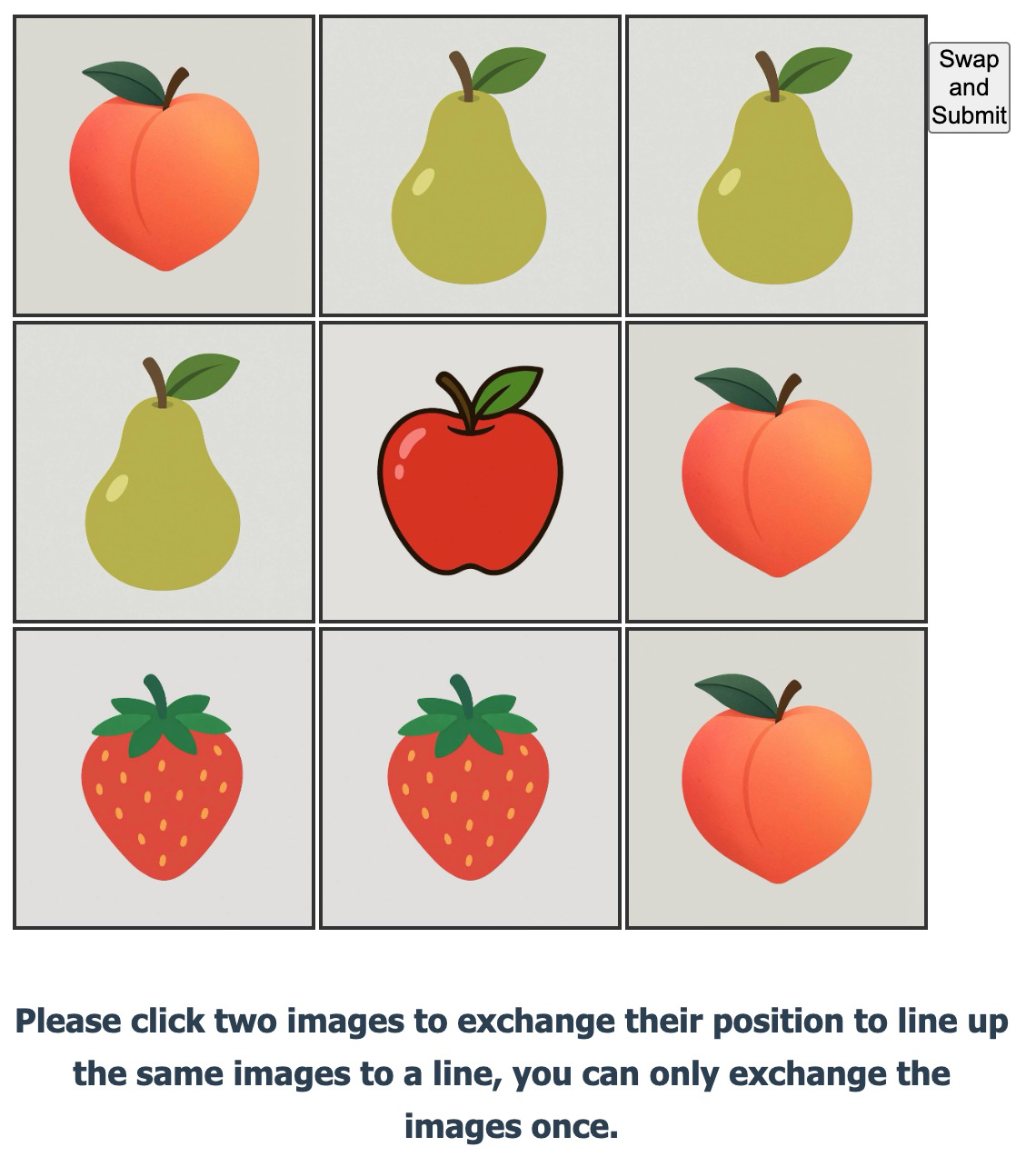

Architecturally, the paper does not propose a neural model; the novelty is the CAPTCHA design and generation framework. The tasks are grouped around five gap types: G1 scene-structure inference (e.g., mirror or depth/occlusion cases), G2 temporal integration (motion/sequence-reveal tasks), G3 numerosity and discrete invariants (counts, parity, path endpoints), G4 latent-state tracking (intermediate variables that must be carried across steps), and G5 perception-to-action alignment (tasks where the right decision must be translated into the right browser action such as drag-and-drop rather than click-through). The key mechanism is to avoid decomposable “solve in one snapshot, then perform a few obvious actions” puzzles. Instead, the design aims to create tasks where an agent can stare at the right region and still fail because it does not reliably maintain state or execute the correct action primitive. A concrete example in the paper is the Bingo-style task path from Fig. 2: an agent screenshots the page, parses a grid, enumerates candidate swaps, then clicks two cells and submits. The authors use that trace to argue that current CAPTCHAs are workflow-friendly, while Next-Gen CAPTCHAs are not.

The evaluation protocol is a live-browser Pass@1 test with state reset between puzzles. At each step, the agent receives a screenshot, DOM-derived interaction elements, and metadata, then outputs structured browser actions executed by Playwright in visible mode. Success is determined by automatic verification on the platform. The main results are reported on the 519-puzzle set, with the browser agent framework held fixed unless the paper is specifically comparing frameworks. They compare multiple MLLM backbones under the Browser-Use agent, and additionally analyze cost, latency, and thinking-mode effects. For ablation, they also compare agent frameworks on the 135-puzzle subset, holding the backbone MLLM constant. Fig. 7 analyzes the cost–accuracy–latency trade-off across models, and Fig. 8 shows the effect of increasing reasoning budget on Gemini-3-Flash. The paper also reports correlation analysis between success and logged trajectory metrics in Fig. 4, finding that on current CAPTCHAs success correlates with trajectory features, but on Next-Gen CAPTCHAs those correlations are near zero. I do not see any mention of cross-validation, confidence intervals, or statistical significance testing beyond the correlation plot’s significance markers, and the excerpt does not specify seeds, optimizer settings, or training because there is no model training in the usual sense.

Reproducibility is partial. The authors say they release an open-source real-web evaluation platform and a benchmark sampled from the broader defense system, and they mention a project page. However, the benchmark is explicitly only a curated sample from a continuously generative system, and the excerpt does not specify whether all 27 family generators, all verification code, or all human-study materials are fully released. Because the paper is mostly a systems/benchmark contribution rather than a learned model paper, reproducibility depends on whether the procedural generators and browser integration are available and whether the live APIs used in the experiments remain accessible. The excerpt also does not give enough detail to independently reconstruct the human study beyond “small-scale” and “high success rates and low completion times.”

Technical innovations

- A CAPTCHA design framework based on an extended POMDP view of GUI-agent interaction, explicitly targeting observation grounding, latent-state maintenance, and action execution rather than static visual recognition.

- Twenty-seven newly designed CAPTCHA families engineered around five human–agent cognitive-gap categories, with 25 families procedurally generated and automatically verifiable.

- A curation pipeline that filters candidate families using a pilot human test plus a strong model stress test (Gemini 3 Flash <30% Pass@1, humans >90% on sampled pilot items).

- A real-web, GUI-framework-agnostic evaluation platform that standardizes browser interaction and logging for MLLM-backed agents.

- A benchmark snapshot sampled from a larger generative defense system, including a 519-puzzle main set and a 135-puzzle low-budget subset.

Datasets

- Next-Gen CAPTCHA main test set — 519 puzzles — sampled from the authors’ continuously generative CAPTCHA defense system

- Next-Gen CAPTCHA budget subset — 135 puzzles — sampled from the authors’ continuously generative CAPTCHA defense system

- Pilot family sets — 20 generated instances per generative family plus comparable curated examples for non-generated families — authors’ internal generation pipeline

- Human pilot sample — 10 sampled pilot instances per retained family for filtering — authors’ internal human evaluation

- Human study sample — small-scale, size not specified — authors’ internal human evaluation

Baselines vs proposed

- GPT-5.2-xHigh (Browser-Use): Avg Pass@1 = 5.9% vs proposed: 98.8% human baseline

- Gemini-3-Flash-High (Browser-Use): Avg Pass@1 = 3.2% vs proposed: 98.8% human baseline

- Claude-Opus4.5-Extended-ThinkingHigh (Browser-Use): Avg Pass@1 = 3.0% vs proposed: 98.8% human baseline

- Gemini-3-Pro-High (Browser-Use): Avg Pass@1 = 1.3% vs proposed: 98.8% human baseline

- Doubao-Seed-1.8-Thinking-HighEffort (Browser-Use): Avg Pass@1 = 1.3% vs proposed: 98.8% human baseline

- Qwen3-VL-Plus-ThinkingHigh (Browser-Use): Avg Pass@1 = 0.9% vs proposed: 98.8% human baseline

- CrewAI (Claude-Opus4.5 backbone, 135-puzzle subset): Overall Pass@1 = 0.00% vs proposed: 4.44% (Claude Cowork)

- Browser-Use (Claude-Opus4.5 backbone, 135-puzzle subset): Overall Pass@1 = 1.48% vs proposed: 4.44% (Claude Cowork)

- Gemini-3-Flash Minimal thinking: Pass@1 = 0.6% vs proposed (High thinking): 3.2%

- Gemini-3-Flash Low thinking: Pass@1 = 1.9% vs proposed (High thinking): 3.2%

- Gemini-3-Flash Medium thinking: Pass@1 = 2.5% vs proposed (High thinking): 3.2%

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2602.09012.

Fig 1: Frontier Models as GUI Agent Backbones’ Pass@1 on

Fig 2: With the enhanced Computer Use abilities like taking

Fig 3: Current CAPTCHAs System Fails. Recent Advanced

Fig 4: Success–trajectory correlation differs between current and Next-Gen CAPTCHAs. Left & Middle: Spearman ρ between

Fig 5: Next-Gen CAPTCHA family examples. Representative instances from Next-Gen CAPTCHA task families. The full family

Fig 6 (page 3).

Fig 7 (page 3).

Fig 6: Next-Gen CAPTCHA data curation pipeline.

Limitations

- The paper’s strongest claims rely on a preprint benchmark with a curated sample, not a fully exhaustive public test of the underlying continuously generative system.

- The excerpt does not provide detailed seeds, hyperparameters, or statistical confidence intervals for the main pass-rate comparisons.

- Human-study details are thin in the excerpt: sample size, participant demographics, and task-by-task variance are not specified.

- Evaluation is mostly on Browser-Use plus a few auxiliary frameworks; broader agent stacks or bespoke attack orchestration may behave differently.

- The benchmark is designed around the authors’ hypothesized human–agent gaps, so there is a risk of overfitting to current agent weaknesses rather than establishing a durable security margin.

- The paper emphasizes low Pass@1, but does not fully quantify usability friction, accessibility impact, or failure recovery for legitimate users in real deployment.

Open questions / follow-ons

- How stable are these CAPTCHA families against future GUI agents that improve action grounding and long-horizon memory, not just reasoning tokens?

- Which of the five cognitive-gap categories contributes most to the security margin, and can they be combined or replaced with cleaner, more accessible designs?

- How well do the generated tasks hold up under accessibility tools, alternate input modalities, or non-browser agent stacks?

- Can the continuously generative system be deployed operationally without creating unacceptable false positives, latency, or maintenance burden for real users?

Why it matters for bot defense

For bot-defense practitioners, the important takeaway is that the paper is arguing for a shift from static puzzle design to generative, interaction-heavy challenges that exploit limitations of agentic workflows. If this result holds up, defenders should think less about making a puzzle “hard to recognize” and more about whether the task requires stable latent-state tracking, correct manipulation primitives, and rapid human-style intuition under partial observability. That said, the benchmark also signals a moving target: the same class of tasks that frustrates today’s browser agents may become less effective as agents improve their perception-to-action stack.

For a CAPTCHA/abuse-defense engineer, the practical reaction is to treat this as evidence that current logic CAPTCHAs are fragile against top-tier agents, but also to be cautious about adopting any single family wholesale. The design direction is likely useful as one layer in a broader abuse stack, especially where the goal is to raise attacker cost and induce friction. But deployment would need careful accessibility review, localization testing, and monitoring for agent adaptation, because the paper’s own framing implies these are not permanent solutions—just a better use of the current human–machine gap.

Cite

@article{arxiv2602_09012,

title={ Next-Gen CAPTCHAs: Leveraging the Cognitive Gap for Scalable and Diverse GUI-Agent Defense },

author={ Jiacheng Liu and Yaxin Luo and Jiacheng Cui and Xinyi Shang and Xiaohan Zhao and Zhiqiang Shen },

journal={arXiv preprint arXiv:2602.09012},

year={ 2026 },

url={https://arxiv.org/abs/2602.09012}

}