A Hybrid CAPTCHA Combining Generative AI with Keystroke Dynamics for Enhanced Bot Detection

Source: arXiv:2510.02374 · Published 2025-09-29 · By Ayda Aghaei Nia

TL;DR

This paper addresses the longstanding tension in CAPTCHA design between usability and resilience against modern AI-powered bots. Traditional CAPTCHAs have become increasingly vulnerable to machine learning advances, prompting exploration of alternative defenses. The author proposes a novel hybrid CAPTCHA system that combines dynamically generated natural-language challenges from a Large Language Model (Google's Gemini) with behavioral biometric analysis focused on keystroke dynamics (typing rhythm). By generating simple, non-repetitive cognitive puzzles and analyzing subtle human typing patterns such as latency variability, the system aims to distinguish genuine human users from bots, including paste-based and scripted typing attacks.

The paper details a client-server architecture that generates and verifies challenges without exposing plaintext answers to the client. Behavioral keystroke features such as total typing time, mean latency, and standard deviation of inter-keystroke timings are extracted client-side. A heuristic classifier applies thresholds to these features to detect bot-like uniformity or unnaturally fast typing. Experimental evaluation with 15 human users and two bot scripts (paste and scripted typing) shows a 100% bot detection rate while maintaining high human usability (87% first-attempt success, 100% within two attempts). The results suggest that combining cognitive and behavioral layers can enhance CAPTCHA security without degrading user experience.

Key findings

- Hybrid CAPTCHA achieved 100% detection of paste-based bots and typing-simulation bots over 50 trials each.

- Human participants (n=15) solved 45 trials with 87% first-attempt success rate and 100% within two attempts.

- Requiring a latency standard deviation σF > 20 ms and a total typing time Ttotal > 150 ms proved an effective heuristic: inputs that failed a threshold were flagged as bots.

- Paste events were reliably detected and blocked to foil direct answer insertion.

- Typing-simulation bot had near zero latency variation (σF ≈ 0), triggering behavioral anomaly detection.

- LLM-generated questions prevented replay attacks by varying challenge content dynamically.

- Typing data captured with sub-millisecond precision (performance.now()) on client-side ensured reliable feature extraction.

- System architecture securely hashed answers with SHA-256 to avoid exposing plaintext solutions to client.

Threat model

Adversary is an automated bot attempting to bypass CAPTCHA by submitting correct answers either via clipboard pasting or scripted typing with fixed timing delays. The attacker cannot predict or replay dynamic LLM-generated questions and cannot perfectly replicate human typing variability. The adversary is limited to automated input methods on a single session and cannot compromise server-side components or modify the client interface.

Methodology — deep read

The paper’s threat model assumes adversaries attempting to bypass CAPTCHA via automated bots that either paste answers directly or simulate typing with fixed delays. Attackers lack knowledge of dynamic challenges and cannot forge realistic human typing patterns.

Data comprised responses from two groups: 15 human volunteers answering 3 LLM-generated questions each (45 trials in total) and two bot scripts, each run for 50 trials. The bots were a paste-based insertion script and a typing-simulation bot that typed characters sequentially with a fixed 50 ms latency.
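To make the typing-simulation bot concrete, the sketch below generates the keystroke timestamps such a script would produce. The function name `scripted_timestamps` and the starting time of 0 ms are illustrative assumptions; only the fixed 50 ms delay comes from the paper.

```python
from statistics import pstdev


def scripted_timestamps(answer: str, delay_ms: float = 50.0) -> list:
    """Timestamps (ms) for a bot that presses one key every `delay_ms`.

    Hypothetical helper for illustration; the clock starts at 0 ms.
    """
    return [i * delay_ms for i in range(len(answer))]


stamps = scripted_timestamps("blue")
# Gaps between consecutive keystrokes are all identical for this bot.
gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
gap_spread = pstdev(gaps)          # population standard deviation of the gaps
total_ms = stamps[-1] - stamps[0]  # time from first to last keystroke
```

For the four-character answer "blue" the gaps are all 50 ms, so their spread is exactly zero and the total duration is 150 ms, which is the uniformity the behavioral checks are designed to catch.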

The hybrid CAPTCHA system consists of a client-side interface that renders the question and captures keystroke timestamps with JavaScript’s high-precision performance.now() API. The backend securely calls Google's Gemini LLM via API to generate simple common-sense questions using prompt engineering enforcing JSON output with a question and answer. The backend hashes the correct answer with SHA-256 and sends only the question text and hash to the client.
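The backend step described above might look roughly like the following sketch. It assumes the LLM reply has already been received as a JSON string with `question` and `answer` keys; the call to Gemini itself is omitted. The helper names, the `answer_hash` field name, and the lowercase-and-strip normalization are assumptions made for illustration, not details confirmed by the paper.

```python
import hashlib
import json


def normalize(answer: str) -> str:
    # Assumption: answers are compared case-insensitively, ignoring outer whitespace.
    return answer.strip().lower()


def sha256_hex(text: str) -> str:
    """Hex-encoded SHA-256 digest of a UTF-8 string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_client_payload(llm_json: str) -> dict:
    """Keep the question, replace the plaintext answer with its hash."""
    challenge = json.loads(llm_json)
    return {
        "question": challenge["question"],
        "answer_hash": sha256_hex(normalize(challenge["answer"])),
    }


# Example reply in the JSON shape the prompt is said to enforce.
llm_reply = '{"question": "What color is the sky on a clear day?", "answer": "Blue"}'
payload = build_client_payload(llm_reply)
```

The payload contains only the question text and a 64-character hex digest, so the plaintext answer never leaves the server.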

From the raw keystroke timestamp sequence T = {t_1, ..., t_n} for an n-character input, the client computes flight times F_i = t_{i+1} − t_i (for i = 1, ..., n−1) and extracts three behavioral features: total typing duration Ttotal = t_n − t_1, mean flight time μF, and standard deviation of flight times σF. These statistics capture the rhythm of typing; humans are expected to show variability, whereas scripted bots are not.
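The feature extraction can be sketched in a few lines. The paper performs this step client-side in JavaScript; Python is used here only for illustration. The `KeystrokeFeatures` container and function name are hypothetical, and the use of the population standard deviation is an assumption, since the summary does not say which variant the paper uses.

```python
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import Sequence


@dataclass
class KeystrokeFeatures:
    total_ms: float        # Ttotal: last timestamp minus first
    mean_flight_ms: float  # μF: mean gap between consecutive keystrokes
    std_flight_ms: float   # σF: spread of those gaps


def extract_features(timestamps_ms: Sequence[float]) -> KeystrokeFeatures:
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two keystrokes to compute flight times")
    # Flight time: difference between each timestamp and the one before it.
    flights = [later - earlier for earlier, later in zip(timestamps_ms, timestamps_ms[1:])]
    return KeystrokeFeatures(
        total_ms=timestamps_ms[-1] - timestamps_ms[0],
        mean_flight_ms=mean(flights),
        # Assumption: population standard deviation (divides by the number of flights).
        std_flight_ms=pstdev(flights),
    )


# Made-up timestamps for a four-character answer typed with an uneven rhythm.
features = extract_features([0.0, 120.0, 310.0, 400.0])
```

With these made-up timestamps the flight times are 120, 190, and 90 ms, giving a total duration of 400 ms and a mean flight time of about 133 ms.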

Classification is a conjunction of heuristic checks: the hash of the typed answer matches the target hash, no paste event was detected, σF > 20 ms, and Ttotal > 150 ms for inputs longer than three characters. An input that passes every check is classified as human; otherwise it is flagged as a bot.
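A minimal sketch of this decision rule follows. The two thresholds are taken from the text; everything else is an assumption made for illustration: the function and constant names, the answer normalization, the population standard deviation, rejecting inputs with fewer than two keystrokes, and reading the "longer than three characters" condition as applying only to the duration check.

```python
import hashlib
from statistics import pstdev
from typing import Sequence

SIGMA_MIN_MS = 20.0   # σF must exceed this
TOTAL_MIN_MS = 150.0  # Ttotal must exceed this for inputs longer than three characters


def is_human(typed: str, answer_hash: str,
             timestamps_ms: Sequence[float], pasted: bool) -> bool:
    if pasted:
        return False  # any paste event fails the check outright
    # Assumption: input is normalized (strip + lowercase) before hashing.
    typed_hash = hashlib.sha256(typed.strip().lower().encode("utf-8")).hexdigest()
    if typed_hash != answer_hash:
        return False
    if len(timestamps_ms) < 2:
        return False  # assumption: no rhythm to measure, so reject
    flights = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    if pstdev(flights) <= SIGMA_MIN_MS:
        return False  # rhythm too uniform
    total_ms = timestamps_ms[-1] - timestamps_ms[0]
    if len(typed) > 3 and total_ms <= TOTAL_MIN_MS:
        return False  # typed too quickly
    return True


target = hashlib.sha256(b"blue").hexdigest()
uneven = is_human("blue", target, [0.0, 120.0, 310.0, 400.0], pasted=False)
fixed_delay = is_human("blue", target, [0.0, 50.0, 100.0, 150.0], pasted=False)
```

Here the unevenly timed input is accepted, while the fixed 50 ms sequence is rejected because its flight times have zero spread.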

The system was evaluated in Google Chrome with sub-millisecond timing precision. Thresholds derived empirically from initial testing were applied uniformly. Performance metrics captured included success rates for humans and detection rates for bots. The evaluation did not include adversarial tuning or distributional shifts.

The paper provides code-level detail on feature calculation and hashing but does not release code or dataset publicly. The bot scripts were simple but representative of two common attack modes.

An example end-to-end flow: upon page load, the client requests a CAPTCHA question from the backend, which calls the LLM to generate, e.g., "What color is the sky on a clear day?" and hashes the answer "blue". The question and hash are sent to the client. The user types "blue" while keystroke timestamps are recorded and flight-time metrics are calculated. The client hashes the input and compares it with the target hash, while also checking that no paste event occurred and that the behavioral features exceed the thresholds. If all checks pass, the user is accepted as human; otherwise the input is classified as a bot.

Technical innovations

- Integration of LLM-generated dynamic cognitive questions with keystroke dynamics behavioral biometrics in a single unified CAPTCHA system.

- SHA-256 hashing of answers so that plaintext solutions are never exposed to the client: the backend hashes the correct answer, and the client hashes the typed input for comparison.

- Simple heuristics based on timing feature thresholds (latency std deviation and total duration) to classify human vs bot input in real-time.

- Client-side capture of keystroke timing data with sub-millisecond precision using performance.now() API to extract robust behavioral features.

Datasets

- Human typing data — 45 trials from 15 volunteers — collected internally for evaluation

- Bot simulation data — 100 trials (50 paste-based, 50 typing simulation) — developed internally

Baselines vs proposed

- Paste-based bot: bypass success rate = 0%; the hybrid CAPTCHA blocked 100% of 50 trials

- Typing-simulation bot (fixed 50 ms delay): bypass success rate = 0%; the hybrid CAPTCHA blocked 100% of 50 trials

- Human users: first-attempt success = 87%; overall success within two attempts = 100%

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2510.02374.

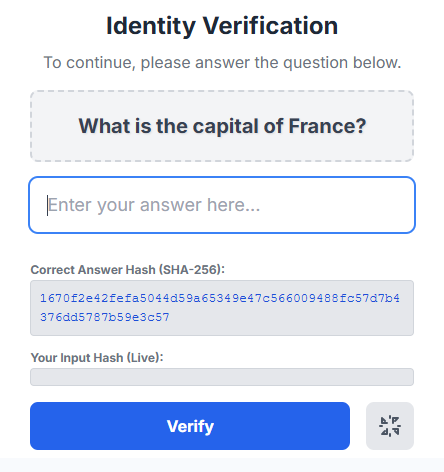

Fig 1: A general knowledge question generated by the LLM.

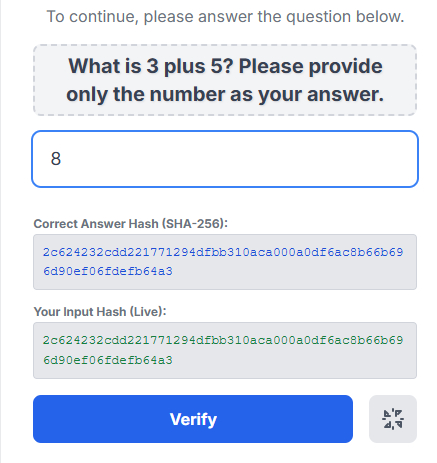

Fig 2: A mathematical question to demonstrate challenge variety.

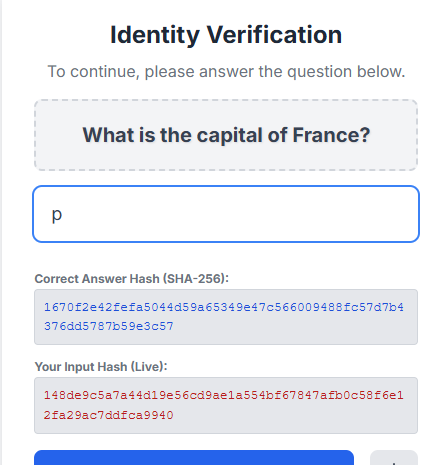

Fig 3: Real-time hashing shows the user’s input hash (bottom) does not yet match the correct answer hash (top).

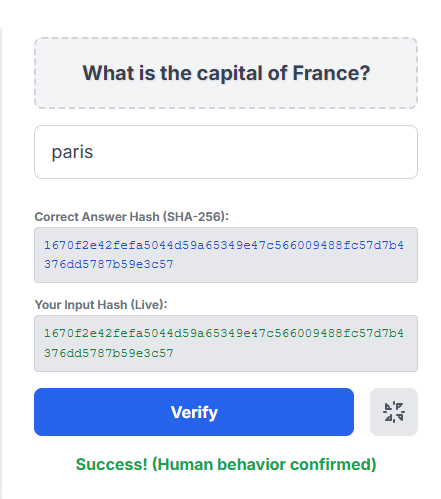

Fig 4: A successful verification message is displayed after the correct answer is typed with human-like behavior.

Limitations

- The bot adversaries were simplistic; the evaluation did not test advanced bots that inject randomized, human-like typing delays.

- Small sample size for human users (n=15) limits generalizability of threshold parameters.

- Heuristic classifier, while effective here, lacks robustness against adaptive adversaries; no ML-based model used.

- Dependency on external LLM API introduces latency and availability concerns for production deployment.

- No evaluation of long-term user experience and potential accessibility issues with keystroke behavioral biometrics.

- No analysis of resistance to advanced replay or mimicry attacks beyond simple paste and scripted typing.
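The first limitation can be illustrated with a small sketch. This is not an experiment from the paper: it only shows, under the stated thresholds, that a script which varies its delays would clear the two timing checks. A fixed alternating pattern of 60 ms and 140 ms stands in for a random draw so the example stays deterministic; the function names and the pattern are hypothetical.

```python
from itertools import accumulate, cycle, islice
from statistics import pstdev
from typing import Sequence


def varied_timestamps(answer: str, pattern_ms: Sequence[float] = (60.0, 140.0)) -> list:
    """Timestamps (ms) for a script that cycles through uneven delays."""
    gaps = list(islice(cycle(pattern_ms), len(answer) - 1))
    return [0.0] + list(accumulate(gaps))


def passes_timing_checks(timestamps_ms: Sequence[float], answer_len: int) -> bool:
    """Timing-only part of the heuristic: σF > 20 ms and Ttotal > 150 ms."""
    flights = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    if not flights:
        return False
    total_ms = timestamps_ms[-1] - timestamps_ms[0]
    # Assumption: the duration rule applies only to inputs longer than three characters.
    duration_ok = answer_len <= 3 or total_ms > 150.0
    return pstdev(flights) > 20.0 and duration_ok


varied = varied_timestamps("blue")
varied_passes = passes_timing_checks(varied, len("blue"))
fixed_passes = passes_timing_checks([0.0, 50.0, 100.0, 150.0], len("blue"))
```

The varied sequence clears both thresholds while the fixed 50 ms sequence does not, which suggests why threshold tuning against adaptive scripts is listed as untested.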

Open questions / follow-ons

- How effective would a more sophisticated adversary be who randomizes typing delays to mimic human keystroke variability?

- Can machine learning models trained on a large corpus of human typing patterns improve classification beyond heuristic thresholds?

- Would adding multi-modal biometrics like mouse movement or touchscreen gestures enhance robustness without harming usability?

- What is the system’s performance under high load or in diverse client environments including mobile devices?

Why it matters for bot defense

This work highlights a promising direction for bot defense practitioners interested in hybrid CAPTCHA designs combining cognitive and behavioral methods. By leveraging LLMs for dynamic, semantically novel questions, it addresses replay attack vulnerabilities common in static CAPTCHAs. The incorporation of keystroke dynamics as an on-device behavioral biometric provides an interpretable and lightweight secondary verification layer without extensive user tracking or privacy trade-offs.

For CAPTCHA engineers, these findings encourage exploration of integrating behavioral timing features to detect automated typing scripts that easily evade answer-only checks. The heuristic thresholds and feature extraction methods offer a practical baseline, though practitioners should anticipate evolving bots that spoof behavior and consider adopting ML-based behavioral classifiers. Finally, reliance on external LLM APIs underscores the need to evaluate latency and availability impacts when deploying generative AI-powered challenges at scale.

Cite

@article{arxiv2510_02374,

title={ A Hybrid CAPTCHA Combining Generative AI with Keystroke Dynamics for Enhanced Bot Detection },

author={ Ayda Aghaei Nia },

journal={arXiv preprint arXiv:2510.02374},

year={ 2025 },

url={https://arxiv.org/abs/2510.02374}

}