Hybrid Generative Fusion for Efficient and Privacy-Preserving Face Recognition Dataset Generation

Source: arXiv:2508.10672 · Published 2025-08-14 · By Feiran Li, Qianqian Xu, Shilong Bao, Boyu Han, Zhiyong Yang, Qingming Huang

TL;DR

This paper addresses the challenge of constructing a large-scale, high-quality synthetic face recognition dataset that contains no real-world identities, as required by the DataCV ICCV Face Recognition Dataset Construction Challenge. The authors introduce a hybrid generative fusion approach that combines cleaning of the baseline dataset (HSFace) with synthetic identity generation using diffusion (Stable Diffusion) and GAN-style methods (Vec2Face). Cleaning improves identity consistency by removing noisy and mislabeled images through face embedding clustering and GPT-4o-assisted visual verification. Synthetic identities are generated with Stable Diffusion, using prompt engineering for identity diversity, and then expanded with Vec2Face to create many identity-consistent variants at low computational cost. A curriculum learning approach orders training from easy synthetic samples to harder cleaned HSFace samples for better generalization and convergence.

Evaluated on training sets with 10K, 20K, and 100K identities and 50 images per identity, the approach took first place on the challenge leaderboard. Experiments show that this dataset construction strategy improves recognition accuracy consistently across multiple test splits and dataset scales. The work demonstrates that combining generative models, cleaning, and curriculum training can overcome common weaknesses of synthetic data in privacy-preserving face recognition.

The authors also verify zero identity leakage to any public dataset, fulfilling strict privacy constraints. Their code and datasets are publicly available for reproducibility, making this a meaningful advancement for synthetic face data generation under constrained ethical and computational scenarios.

Key findings

- DBSCAN clustering on HSFace embeddings, keeping only the largest cluster per identity, removes label noise; identities that retain fewer than 20% of their samples are discarded.

- GPT-4o multimodal validation effectively identifies and removes ambiguous or inconsistent face samples from identity clusters beyond clustering alone.

- Stable Diffusion XL 1 is used with prompt engineering to generate one high-quality reference image per synthetic identity with diverse controlled attributes.

- Vec2Face expands each Stable Diffusion reference image by generating 49 identity-consistent variants, enabling efficient augmentation while preserving identity features.

- Curriculum learning by placing synthetic, visually consistent identities early in training leads to faster convergence and improved recognition accuracy compared to random mixing.

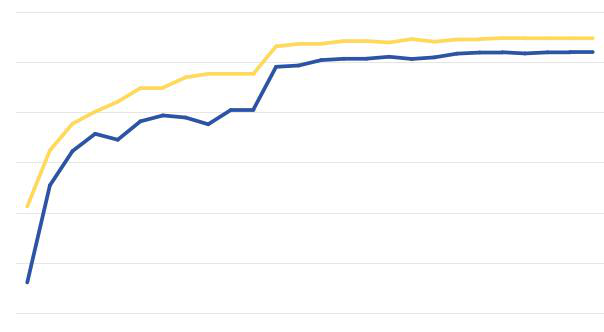

- The final datasets of 10K, 20K, and 100K identities with 50 images each achieve leading average accuracies of 84.37%, 85.43%, and 86.78%, respectively, outperforming all other challenge participants.

- Synthetic identities were verified against mainstream real face datasets to confirm zero identity leakage, meeting strict privacy requirements.

- Data augmentation after cleaning restores samples to 50 per identity using random flips, color jitter, grayscale, affine transforms, rotation, Gaussian blur, and downsampling.

Threat model

The adversary is an entity attempting to extract or recognize real individual identities from the training dataset, which is prohibited by privacy constraints. The defenders assume that access is limited to the dataset and models trained on it, with no side channels or oracle access to the generative model internals. The approach mitigates identity leakage by thorough cleaning and verification steps, and by generating synthetic faces that do not match any known real identity. However, it does not consider adversaries performing direct attacks on the synthesis pipeline or training process.
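The summary does not say how the leakage verification was carried out. One common way to run such a check, shown here as a minimal sketch, is to compare each synthetic identity's embedding against a gallery of embeddings from real datasets and flag any pair whose cosine similarity reaches a threshold. The function names, the toy 3-D vectors, and the `threshold=0.6` value are all assumptions for illustration and do not come from the paper.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def find_leaks(synthetic, real_gallery, threshold=0.6):
    """Return (synthetic_id, real_id, similarity) for pairs at or above threshold.

    `threshold` is a hypothetical value; the source does not state one.
    """
    leaks = []
    for syn_id, syn_vec in synthetic.items():
        for real_id, real_vec in real_gallery.items():
            sim = cosine_similarity(syn_vec, real_vec)
            if sim >= threshold:
                leaks.append((syn_id, real_id, sim))
    return leaks

# Toy 3-D embeddings standing in for face recognition features.
synthetic = {"syn_0": [1.0, 0.0, 0.0], "syn_1": [0.0, 1.0, 0.0]}
real_gallery = {"real_a": [0.9, 0.1, 0.0], "real_b": [0.0, 0.0, 1.0]}
leaks = find_leaks(synthetic, real_gallery)
```

Under this sketch, "zero identity leakage" corresponds to `find_leaks` returning an empty list; in the toy data above, `syn_0` is deliberately placed close to `real_a` so that it is flagged.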

Methodology — deep read

Threat Model & Assumptions: The paper does not define an explicit adversary; the governing constraint is the challenge's privacy requirement that no real identities appear in the dataset. The method assumes access to a baseline dataset (HSFace) that may contain noisy or inconsistent labels. The goal is a high-quality synthetic dataset without privacy leakage, so that no real identity can be recovered from the training set.

Data: The starting point is the HSFace dataset at three scales (10K, 20K, 100K identities), with up to 50 images per identity. After cleaning, data augmentation is applied so that every identity has exactly 50 samples. Synthetic identities are generated from scratch with the Stable Diffusion XL 1 text-to-image model and expanded with Vec2Face to maintain identity consistency. A pool of hundreds of identity prompts controls the major facial attributes.

Architecture/Algorithm: Dataset cleaning uses a Mixture-of-Experts (MoE) strategy combining two modules: (1) Feature embedding extraction using a pretrained face recognition model (hsface300K.pth), followed by DBSCAN clustering with cosine similarity to isolate intra-identity image clusters. (2) GPT-4o multimodal validation where composite grids of identity images are input to GPT-4o with a specially designed prompt to identify inconsistent or mislabeled faces. The largest consistent cluster is retained per identity; those retaining under 20% of samples are discarded.
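The clustering half of the cleaning step can be sketched as follows. This is a minimal pure-Python DBSCAN over cosine distance, followed by the "keep the largest cluster, discard the identity if under 20% remains" rule described above. The `eps` and `min_samples` values and the toy 2-D embeddings are assumptions chosen for the example; only the 20% retention rule comes from the summary, and the GPT-4o validation stage is not modeled here.

```python
import math
from collections import Counter

def cosine_distance(a, b):
    """1 minus cosine similarity."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (na * nb)

def dbscan(points, eps, min_samples):
    """Minimal DBSCAN; returns one label per point, -1 meaning noise."""
    n = len(points)
    neighbors = [
        [j for j in range(n) if cosine_distance(points[i], points[j]) <= eps]
        for i in range(n)
    ]
    labels = [None] * n
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbors[i]) < min_samples:
            labels[i] = -1  # provisionally noise; may become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = list(neighbors[i])
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # noise point reached from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            if len(neighbors[j]) >= min_samples:
                queue.extend(neighbors[j])  # j is a core point, keep expanding
    return labels

def clean_identity(embeddings, eps=0.3, min_samples=3, min_keep_ratio=0.2):
    """Indices of the largest cluster, or [] if the identity is discarded."""
    labels = dbscan(embeddings, eps, min_samples)
    counts = Counter(label for label in labels if label != -1)
    if not counts:
        return []
    best, size = counts.most_common(1)[0]
    if size / len(embeddings) < min_keep_ratio:
        return []
    return [i for i, label in enumerate(labels) if label == best]

# Five consistent embeddings plus two mislabeled outliers (toy 2-D vectors).
embeddings = [
    [1.0, 0.0], [0.98, 0.05], [0.95, 0.1], [0.99, -0.03], [0.97, 0.08],
    [0.05, 1.0], [0.0, 1.0],
]
kept = clean_identity(embeddings)
```

In this toy identity the two outliers have too few neighbors to form a cluster, so they are labeled noise and only the five consistent images are kept.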

Synthetic identity generation uses Stable Diffusion XL 1 with carefully engineered text prompts that specify attributes such as age, gender, ethnicity, pose, expression, lighting, angle, background, and accessories, producing one canonical image per identity. The lightweight Vec2Face decoder then takes this reference image and generates 49 diverse but identity-consistent images by varying pose, illumination, and expression, which greatly reduces the cost of diffusion inference.
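The prompt-pool idea can be illustrated with a small sketch that samples distinct attribute combinations and renders them into text prompts, one per synthetic identity. The attribute values, the template wording, and the `sample_prompts` helper are hypothetical; the paper's actual prompt pool is not reproduced in this summary.

```python
import math
import random

# Hypothetical attribute pool; the categories follow the summary, the values do not.
ATTRIBUTE_POOL = {
    "age": ["young adult", "middle-aged", "elderly"],
    "gender": ["man", "woman"],
    "ethnicity": ["East Asian", "South Asian", "Black", "White"],
    "expression": ["neutral", "smiling", "serious"],
    "pose": ["frontal view", "slight left turn", "slight right turn"],
    "lighting": ["soft daylight", "studio lighting", "dim indoor light"],
    "background": ["plain", "office", "outdoor"],
    "accessories": ["no accessories", "wearing glasses", "wearing a hat"],
}

TEMPLATE = (
    "portrait photo, {age} {ethnicity} {gender}, {expression} expression, "
    "{pose}, {lighting}, {background} background, {accessories}"
)

def sample_prompts(n, seed=0):
    """Return n prompts, each built from a distinct attribute combination."""
    total = math.prod(len(values) for values in ATTRIBUTE_POOL.values())
    if n > total:
        raise ValueError(f"only {total} distinct combinations available")
    rng = random.Random(seed)
    seen = set()
    prompts = []
    while len(prompts) < n:
        combo = tuple(rng.choice(values) for values in ATTRIBUTE_POOL.values())
        if combo in seen:
            continue  # reject duplicates so every identity gets a unique prompt
        seen.add(combo)
        prompts.append(TEMPLATE.format(**dict(zip(ATTRIBUTE_POOL, combo))))
    return prompts

prompts = sample_prompts(10)
```

Each prompt would yield one reference image from the diffusion model; the remaining 49 images per identity come from Vec2Face rather than from further diffusion runs.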

Curriculum Learning: Synthetic identities with low intra-class variance and strong identity coherence are placed at the beginning of the training schedule as 'easy' samples, followed by the harder, more variable cleaned HSFace samples. The training dataset groups identities, preserves order (no shuffling), and replaces discarded HSFace identities with synthetic ones to preserve dataset size.
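The ordering described above can be sketched as a simple list construction: synthetic identities fill the slots left by discarded HSFace identities and come first, cleaned HSFace identities follow in their original order, and nothing is shuffled. The function name and the identity labels are illustrative assumptions.

```python
def build_curriculum(hsface_ids, kept_hsface_ids, synthetic_ids):
    """Order identities as synthetic first, then cleaned HSFace, with no shuffling.

    The total number of identities matches the original HSFace split, so each
    discarded identity is replaced by exactly one synthetic identity.
    """
    kept = [i for i in hsface_ids if i in kept_hsface_ids]
    n_needed = len(hsface_ids) - len(kept)
    if n_needed > len(synthetic_ids):
        raise ValueError("not enough synthetic identities to fill the gaps")
    easy = [("synthetic", s) for s in synthetic_ids[:n_needed]]
    hard = [("hsface", h) for h in kept]
    return easy + hard

# One of four HSFace identities was discarded during cleaning.
order = build_curriculum(
    hsface_ids=["h0", "h1", "h2", "h3"],
    kept_hsface_ids={"h0", "h2", "h3"},
    synthetic_ids=["s0", "s1"],
)
# Class labels follow the curriculum order.
labels = {identity: idx for idx, (_, identity) in enumerate(order)}
```

Because the order only takes effect if the data loader iterates sequentially, a training setup that reshuffles every epoch would cancel the curriculum.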

Training Regime: Experiments run on a single NVIDIA RTX 4090 GPU using PyTorch. The pretrained hsface300.pth model serves as feature extractor during cleaning. Data augmentation includes random horizontal flips, color jitter, grayscale, affine and in-plane rotation, Gaussian blur, and downsampling, applied offline to restore 50 images per identity.
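As a rough illustration of the offline top-up step, the sketch below plans which source images to augment, and with which transforms, so that an identity reaches 50 images. It only builds a plan and does not touch pixel data. The transform names mirror the list above, while the per-transform probability `p=0.5`, the round-robin choice of source image, and the helper name are assumptions.

```python
import random

TRANSFORMS = [
    "horizontal_flip", "color_jitter", "grayscale", "affine",
    "rotation", "gaussian_blur", "downsample",
]

def plan_augmentations(image_names, target=50, p=0.5, seed=0):
    """Return (source_image, [transforms]) pairs needed to reach `target` images."""
    if not image_names:
        raise ValueError("identity has no images left after cleaning")
    rng = random.Random(seed)
    missing = max(0, target - len(image_names))
    plan = []
    for k in range(missing):
        source = image_names[k % len(image_names)]  # cycle through survivors
        ops = [t for t in TRANSFORMS if rng.random() < p]
        if not ops:
            ops = [rng.choice(TRANSFORMS)]  # always apply at least one transform
        plan.append((source, ops))
    return plan

# An identity that kept 32 of its 50 images after cleaning needs 18 more.
survivors = [f"img_{i}.jpg" for i in range(32)]
plan = plan_augmentations(survivors)
```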

Evaluation Protocol: The dataset is evaluated by the DataCV ICCV Challenge organizers on three test subsets (ACC 1, ACC 2, ACC 3) across 10K, 20K, and 100K identity scales with fixed 50 images per identity training sets. Metrics are average accuracy (ACC) over the subsets. Their method achieves first place on the leaderboard across all dataset sizes, demonstrating scalability and effectiveness.

Reproducibility: The authors release code at https://github.com/Ferry-Li/datacv_fr and use publicly provided pretrained weights and datasets from the Vec2Face baseline and HSFace sources. Hyperparameters such as the DBSCAN similarity thresholds and the data augmentation probabilities are disclosed. The training code for the final face recognition model is not described in detail, presumably because the competition rules fix it. Overall, the description is thorough enough to reproduce the dataset generation pipeline.

Technical innovations

- Mixture-of-Experts cleaning that combines embedding-based clustering with GPT-4o multimodal validation significantly improves identity purity over clustering alone.

- Hybrid generative pipeline using Stable Diffusion for high-fidelity reference image synthesis combined with Vec2Face for computationally efficient identity-consistent sample expansion.

- Prompt engineering strategies inject controlled variations in facial attributes during diffusion-based synthesis to maximize inter-identity diversity.

- Curriculum-style data ordering places easy synthetic identities before the cleaned HSFace data to improve model convergence and generalization under a fixed training regime.

Datasets

- HSFace-10K — 10,000 identities, up to 50 images each — provided by competition organizers

- HSFace-20K — 20,000 identities, up to 50 images each — provided by competition organizers

- HSFace-100K — 100,000 identities, up to 50 images each — provided by competition organizers

- Synthetic fusion datasets at 10K, 20K, and 100K identity scales combining cleaned HSFace and generated identities — constructed by authors

Baselines vs proposed

- nicolo.didomenico: Average ACC = 77.26% (10K) vs Proposed: 84.37%

- mnogolikomus: Average ACC = 82.83% (10K) vs Proposed: 84.37%

- f10942093: Average ACC = 83.25% (10K) vs Proposed: 84.37%

- anjith2006: Average ACC = 83.66% (10K), 85.13% (20K), 85.74% (100K) vs Proposed: 84.37% (10K), 85.43% (20K), 86.78% (100K)

- Proposed: Average ACC = 84.37% (10K), 85.43% (20K), 86.78% (100K); first place across all test subsets
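To make the size of the lead concrete, the margins over the strongest listed competitor (anjith2006) can be computed directly from the figures above. The snippet is plain arithmetic on the reported averages; the variable names are illustrative.

```python
# Average ACC (%) as listed above.
proposed = {"10K": 84.37, "20K": 85.43, "100K": 86.78}
best_listed_competitor = {"10K": 83.66, "20K": 85.13, "100K": 85.74}

# Margin in percentage points at each scale.
margins = {
    scale: round(proposed[scale] - best_listed_competitor[scale], 2)
    for scale in proposed
}
for scale, margin in margins.items():
    print(f"{scale}: +{margin:.2f} points")
```

The margins are 0.71, 0.30, and 1.04 percentage points at the 10K, 20K, and 100K scales, so the lead is narrowest at 20K and widest at 100K.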

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2508.10672.

Fig 1: An overview of our method. We first perform data cleaning (Step 1) using a face recognition model and GPT-4o validation,

Limitations

- The reliance on a pretrained feature extractor and DBSCAN threshold tuning may limit robustness if underlying model biases exist.

- The GPT-4o assisted cleaning is heuristic and depends on prompt design and model behavior, which can vary and might misclassify ambiguous samples.

- No explicit adversarial attack evaluation or robustness testing against identity spoofing within synthetic data was performed.

- Visual similarity among synthetic identities remains a challenge as intra-class variation is lower than in real data, potentially limiting model generalization without curriculum learning.

- Although code and dataset generation pipelines are released, the final trained face recognition models and training code are not detailed, limiting full reproducibility of performance.

- Computational resource requirements for diffusion model inference, while mitigated by Vec2Face, may still be non-trivial for extremely large-scale dataset synthesis.

Open questions / follow-ons

- How can generative models be improved to simultaneously increase intra-class variation while preserving identity consistency in synthetic face datasets?

- Can automated multimodal validation tools like GPT-4o be extended to provide more fine-grained quality and privacy guarantees at scale?

- What are the impacts of different curriculum learning schedules or mixing strategies on convergence and model robustness?

- How do synthetic datasets generated by this hybrid approach perform under adversarial attacks or domain shifts compared to real-world datasets?

Why it matters for bot defense

For practitioners in bot-defense and CAPTCHA systems, this paper provides valuable insights into generating large-scale synthetic face datasets that are privacy-preserving and diverse enough to train robust face recognition models. The hybrid generative fusion approach balances computational cost with sample quality and includes a rigorous cleaning pipeline to prevent identity leakage, a critical concern in sensitive applications. Its curriculum learning strategy for dataset structuring also suggests practical benefits in improving model training stability and performance.

While CAPTCHA systems typically require bot-detection mechanisms rather than face identification, techniques here can inform synthetic sample creation and quality assurance in other biometric or bot-resistance domains. The verification approach combining clustering and multimodal language model reasoning highlights an innovative direction for dataset validation. However, the limited intra-class variance in synthetic faces remains a cautionary point, as such over-homogeneity could limit generalization when deployed in adversarial real-world environments prone to spoofing or impersonation attacks.

Cite

@article{arxiv2508_10672,
  title={Hybrid Generative Fusion for Efficient and Privacy-Preserving Face Recognition Dataset Generation},
  author={Feiran Li and Qianqian Xu and Shilong Bao and Boyu Han and Zhiyong Yang and Qingming Huang},
  journal={arXiv preprint arXiv:2508.10672},
  year={2025},
  url={https://arxiv.org/abs/2508.10672}
}