AirSignatureDB: Exploring In-Air Signature Biometrics in the Wild and its Privacy Concerns

Source: arXiv:2508.08502 · Published 2025-08-11 · By Marta Robledo-Moreno, Ruben Vera-Rodriguez, Ruben Tolosana, Javier Ortega-Garcia, Andres Huergo, Julian Fierrez

TL;DR

This work introduces AirSignatureDB, a novel, publicly accessible dataset of in-air signature biometrics collected from 108 users in unconstrained real-world conditions using 83 different smartphone models across four sessions. Unlike prior datasets, AirSignatureDB includes both genuine samples and skilled forgeries, enabling robust evaluation of verification systems under realistic attack scenarios and temporal variability. The paper benchmarks traditional Dynamic Time Warping (DTW) alongside multiple deep learning models (FCN, ResNet, InceptionTime, RNN) on this challenging dataset, revealing that DTW and CNN-based models outperform RNNs, with the best Equal Error Rates (EER) reaching 2.3% in skilled forgery scenarios using sensor fusion.

Beyond verification, the authors present a first-of-its-kind method to reconstruct three-dimensional in-air signature trajectories purely from the inertial sensors (accelerometer and gyroscope) embedded in smartphones. Orientation is estimated with a Madgwick filter, and the acceleration signals are then double-integrated. This challenges the assumption that in-air gestures are traceless: it gives them a forensic traceability property, but also raises serious privacy concerns about behavioral biometrics derived from inertial data. The results highlight real-world behavioral variability, device heterogeneity, and privacy implications rarely addressed in the literature.

Key findings

- AirSignatureDB contains in-air signature data from 108 users collected over 4 sessions at least 2 days apart, using 83 different smartphone models.

- Dynamic Time Warping (DTW) achieves 9.0% EER in 1vs1 random impostor trials and 4.7% EER in 4vs1 random impostor trials using linear accelerometer data.

- In skilled forgery 1vs1 verification, the best EER is 8.9%, achieved both by FCN (accelerometer and linear accelerometer) and by ResNet (gyroscope).

- In skilled forgery 4vs1 verification, ResNet with sensor fusion (accelerometer + linear accelerometer + gyroscope) achieves a low EER of 2.3%.

- Convolutional models (FCN, ResNet, InceptionTime) significantly outperform RNNs, whose best EER is 11.3%.

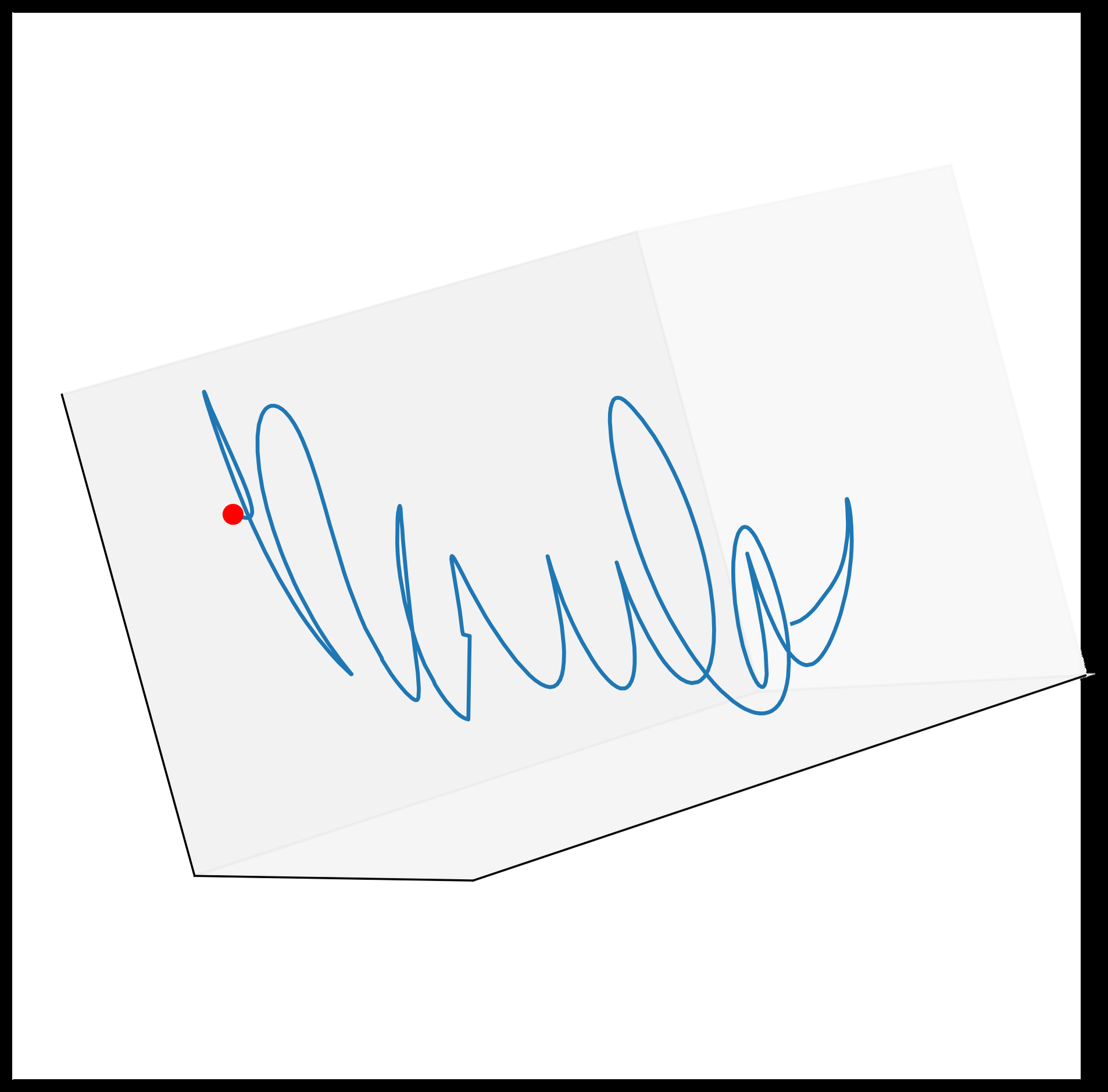

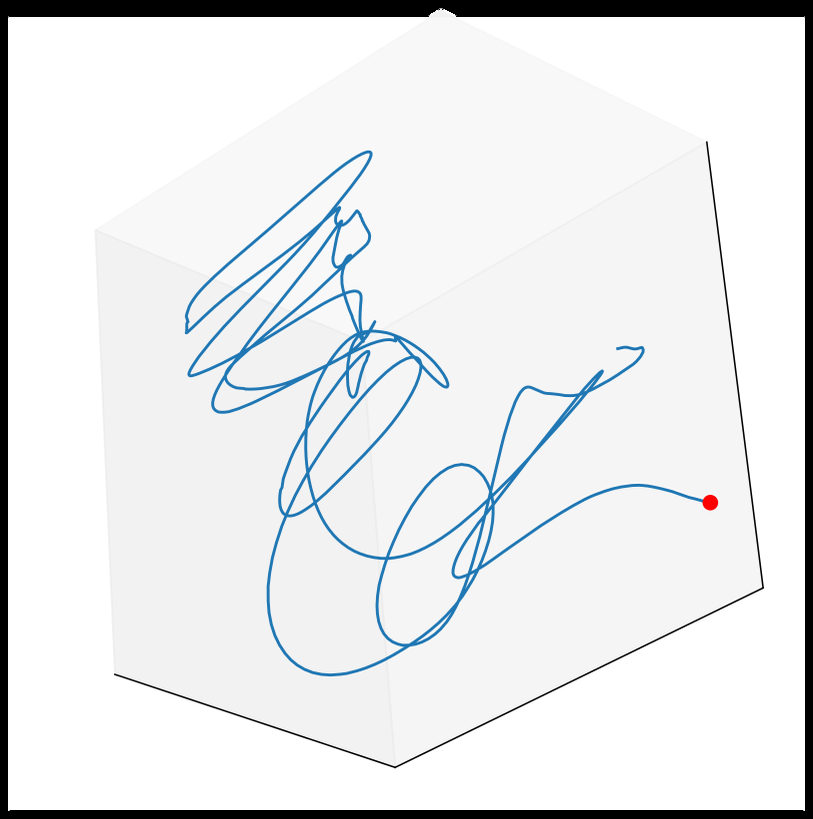

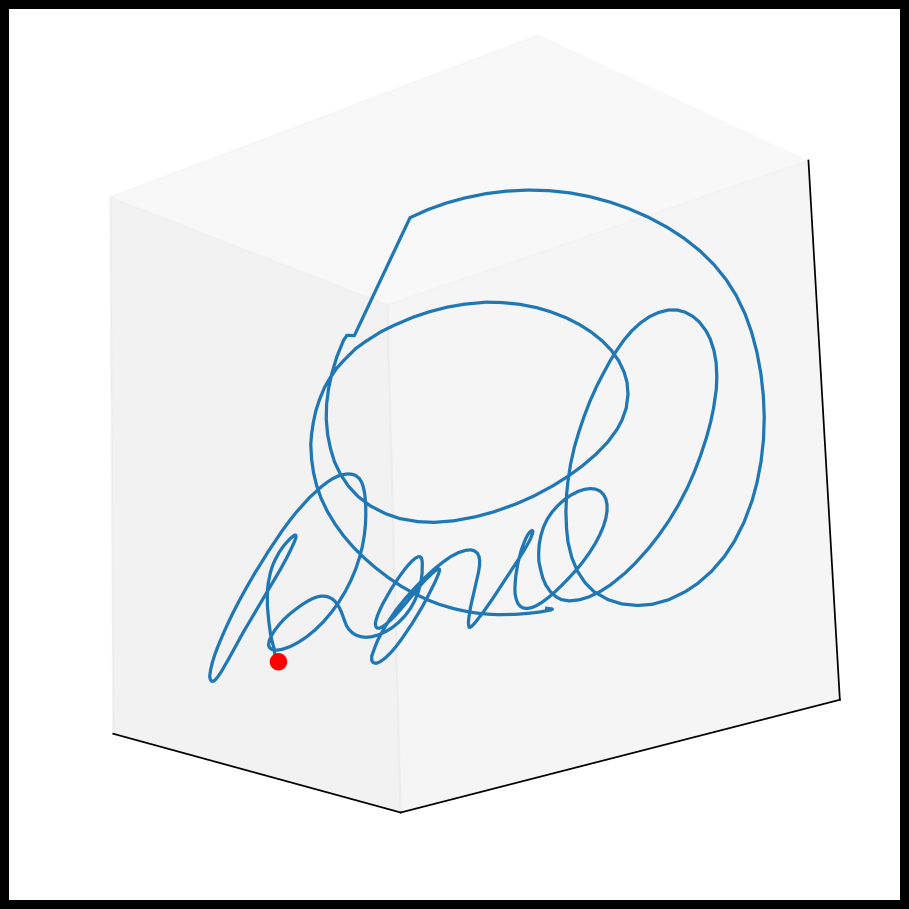

- The study demonstrates 3D in-air signature trajectory reconstruction from smartphone inertial sensors by estimating orientation with a Madgwick filter and double-integrating the accelerations, with filtering to remove drift.

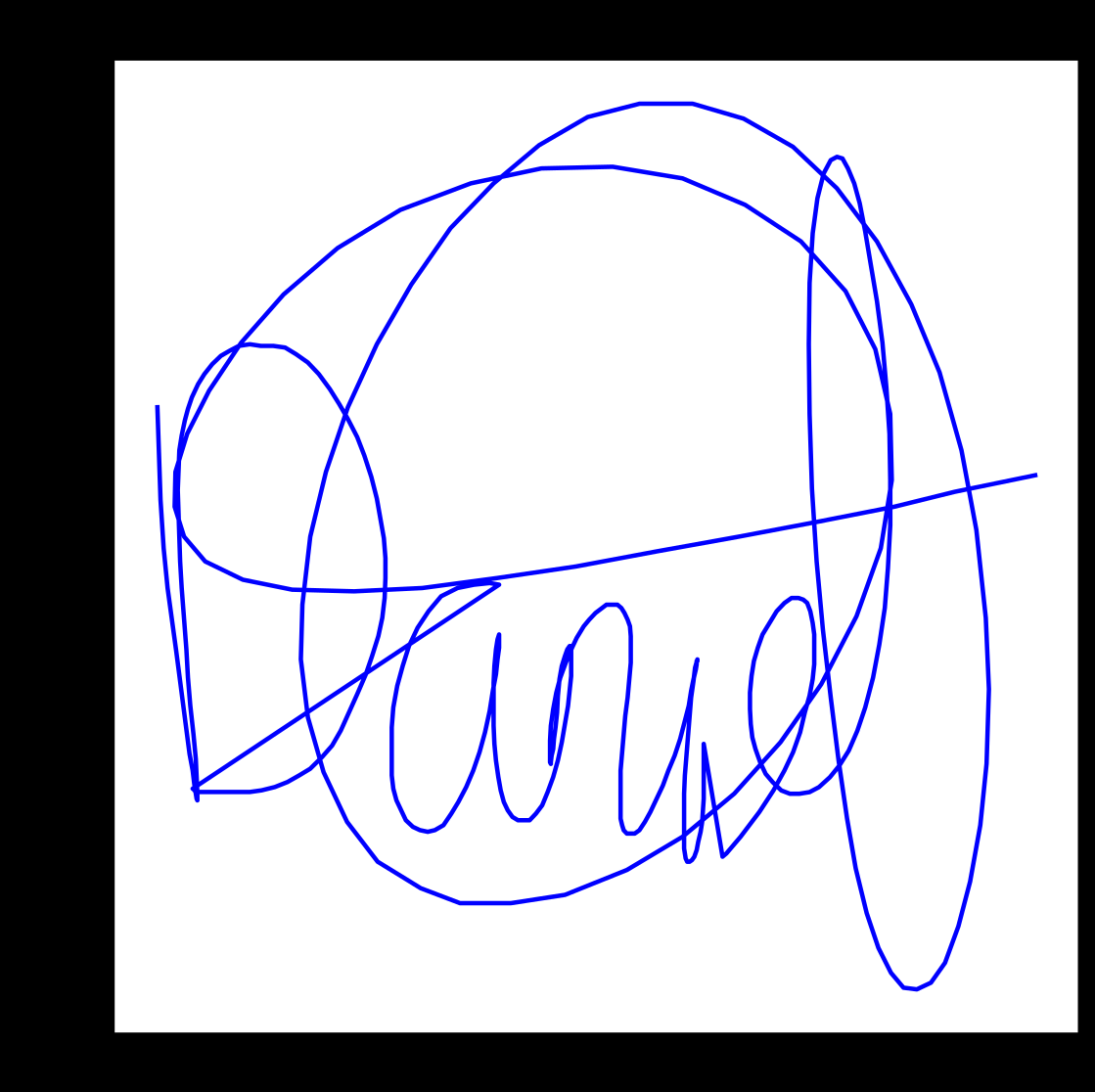

- Reconstructed trajectories are assessed qualitatively against paired on-screen handwritten signatures, which serve as ground-truth references.

- The ability to reconstruct 3D trajectories reveals that in-air gestures are not inherently traceless, raising privacy and forensic traceability concerns.
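The orientation-estimation step behind the reconstruction finding can be illustrated with a minimal sketch of one IMU-only Madgwick update (gyroscope plus accelerometer, no magnetometer) in Python with NumPy. It follows the gradient-descent formulation of Madgwick's original report; the `[w, x, y, z]` quaternion convention, the function names and the `beta` gain are assumptions for illustration, not the authors' code.

```python
import numpy as np


def madgwick_imu_update(q, gyr, acc, dt, beta=0.1):
    """One IMU-only Madgwick step (illustrative sketch, not the authors' code).

    q: unit quaternion [w, x, y, z]; gyr: angular rate in rad/s (sensor frame);
    acc: accelerometer reading (any unit, it is normalized); dt: step in s.
    """
    q0, q1, q2, q3 = q
    gx, gy, gz = gyr
    # Quaternion derivative from the gyroscope alone: 0.5 * q ⊗ [0, gx, gy, gz]
    q_dot = 0.5 * np.array([
        -q1 * gx - q2 * gy - q3 * gz,
        q0 * gx + q2 * gz - q3 * gy,
        q0 * gy - q1 * gz + q3 * gx,
        q0 * gz + q1 * gy - q2 * gx,
    ])
    norm_a = np.linalg.norm(acc)
    if norm_a > 0:
        ax, ay, az = np.asarray(acc, dtype=float) / norm_a
        # Objective: gravity direction predicted by q (in sensor frame) minus measured one
        f = np.array([
            2 * (q1 * q3 - q0 * q2) - ax,
            2 * (q0 * q1 + q2 * q3) - ay,
            2 * (0.5 - q1 * q1 - q2 * q2) - az,
        ])
        # Jacobian of f with respect to (q0, q1, q2, q3)
        J = np.array([
            [-2 * q2, 2 * q3, -2 * q0, 2 * q1],
            [2 * q1, 2 * q0, 2 * q3, 2 * q2],
            [0.0, -4 * q1, -4 * q2, 0.0],
        ])
        step = J.T @ f
        norm_s = np.linalg.norm(step)
        if norm_s > 1e-12:
            # Normalized gradient-descent correction pulls the gyro estimate toward gravity
            q_dot = q_dot - beta * step / norm_s
    q_new = np.asarray(q, dtype=float) + q_dot * dt
    return q_new / np.linalg.norm(q_new)


def gravity_in_sensor_frame(q):
    """Unit gravity direction (Earth z-axis) expressed in the sensor frame for quaternion q."""
    w, x, y, z = q
    return np.array([2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)])
```

Because gravity constrains only pitch and roll, heading in this IMU-only form comes from gyroscope integration alone and drifts slowly; `beta` trades trust in the gyroscope against the accelerometer's correction.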

Threat model

The adversary has visual access to static images and videos of the user's signatures, which enables skilled forgery attempts imitating both the handwritten and the in-air signatures. However, the adversary has no access to inertial sensor data and cannot perfectly replicate the wrist and grip dynamics that the motion sensors capture. The attacker's goal is to impersonate a genuine user and bypass the biometric verification system with these forged signatures.

Methodology — deep read

The study targets a threat model in which attackers perform skilled forgery attacks, imitating both the shape and the dynamics of in-air signatures, but cannot fully reproduce the inertial patterns produced by the genuine user's grip and wrist motion. The adversary has access to static images and videos of the genuine signatures but not to the underlying sensor data.

Data was collected via a custom Android app from 108 users over 4 sessions, each spaced at least two days apart (average 4.12 days), using 83 different Android smartphone models to encompass natural device variability. For each session, users provided two on-screen handwritten signatures and two in-air signatures captured by accelerometer, linear accelerometer, and gyroscope sensors. Skilled forgeries were collected by two trained impostors imitating the genuine users’ signatures based on static images and videos to replicate both visual and dynamic aspects.

Raw inertial signals were preprocessed by resampling all data to 100 Hz to handle the diverse device sampling rates, followed by Z-score normalization and moving-average smoothing (window size 5) to reduce noise. Movement activity detection (MAD) isolated motion segments by thresholding signal energy derived from the linear accelerometer, which was chosen because it excludes gravity.
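A minimal sketch of this preprocessing chain in Python with NumPy follows. Linear interpolation as the resampling method, the 0.1 s energy window and the 10%-of-peak threshold used for movement detection are assumptions; the summary only states the 100 Hz target rate, Z-score normalization, a smoothing window of 5, and that the energy comes from the linear accelerometer.

```python
import numpy as np

TARGET_FS = 100.0  # Hz, target rate stated in the summary


def resample(timestamps, values, fs=TARGET_FS):
    """Linearly interpolate (n, d) samples at irregular timestamps (s) onto a uniform grid."""
    t_new = np.arange(timestamps[0], timestamps[-1], 1.0 / fs)
    out = np.column_stack(
        [np.interp(t_new, timestamps, values[:, k]) for k in range(values.shape[1])]
    )
    return t_new, out


def zscore(x):
    """Per-channel Z-score normalization (constant channels are only centered)."""
    std = x.std(axis=0)
    return (x - x.mean(axis=0)) / np.where(std > 0, std, 1.0)


def moving_average(x, window=5):
    """Per-channel moving-average smoothing; output keeps the input length."""
    kernel = np.ones(window) / window
    return np.column_stack(
        [np.convolve(x[:, k], kernel, mode="same") for k in range(x.shape[1])]
    )


def active_segment(lin_acc, fs=TARGET_FS, win_s=0.1, rel_thresh=0.1):
    """Hypothetical MAD rule: [start, end) where short-time energy exceeds a fraction of its peak."""
    win = max(1, int(round(win_s * fs)))
    energy = np.convolve(np.sum(lin_acc ** 2, axis=1), np.ones(win) / win, mode="same")
    active = np.flatnonzero(energy > rel_thresh * energy.max())
    if active.size == 0:
        return 0, len(lin_acc)  # no motion detected: keep everything
    return int(active[0]), int(active[-1]) + 1
```

The linear accelerometer is the natural input for the energy test because a device held still still reads about 9.81 m/s² on the raw accelerometer, which would otherwise dominate the energy.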

The verification models evaluated were Dynamic Time Warping (DTW) for direct sequence alignment and comparison; a bidirectional two-layer LSTM recurrent neural network generating 32-dimensional embeddings aggregated with a fully connected layer; and several convolutional networks adapted for time series: Fully Convolutional Network (FCN), Residual Network (ResNet), and InceptionTime. Each CNN maps its input to a 128-dimensional embedding. Verification was framed as a Siamese network task, using cosine similarity and a contrastive loss to separate genuine and impostor pairs.
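To make the two verification styles concrete, here is a sketch of classic DTW (applied directly to the signals) and of a cosine-similarity contrastive loss of the kind used to train Siamese networks. The Euclidean local cost, the absence of a warping-window constraint, and the specific loss form with a margin of 0.5 are assumptions; contrastive losses over cosine similarity come in several variants, and the summary does not say which one the authors used.

```python
import numpy as np


def dtw_distance(a, b):
    """Classic DTW between (n, d) and (m, d) sequences with Euclidean local cost."""
    n, m = len(a), len(b)
    cost = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)
    acc = np.full((n + 1, m + 1), np.inf)
    acc[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            # Extend the cheapest of the three admissible predecessor paths
            acc[i, j] = cost[i - 1, j - 1] + min(acc[i - 1, j], acc[i, j - 1], acc[i - 1, j - 1])
    return float(acc[n, m])


def cosine_similarity(u, v):
    """Cosine similarity between two embedding vectors."""
    u, v = np.asarray(u, dtype=float), np.asarray(v, dtype=float)
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))


def contrastive_loss(sim, is_genuine, margin=0.5):
    """Assumed variant: pull genuine pairs toward sim = 1, push impostor pairs below the margin."""
    if is_genuine:
        return (1.0 - sim) ** 2
    return max(0.0, sim - margin) ** 2
```

DTW needs no training but compares every sample pair, so its cost grows with the product of the sequence lengths; the Siamese models shift that cost to training and compare fixed-size embeddings at verification time.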

Training used an 80/20 train-test split of the 108 users, with a further 80/20 split within the training users for validation and hyperparameter tuning. Sessions 2 and 3 provided enrollment samples and session 4 provided test samples. Models were trained on both random and skilled forgery negative samples, reflecting realistic attack exposure. Evaluation reported the Equal Error Rate (EER) for both 1vs1 (single enrollment sample) and 4vs1 (four enrollment samples, combined by averaging) matching scenarios.
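The Equal Error Rate is the operating point where the false acceptance rate (impostors accepted) equals the false rejection rate (genuine attempts rejected). The sketch below estimates it by sweeping thresholds over similarity scores. The 4vs1 helper assumes the probe's similarities to the four enrollment samples are averaged into one score; that is one plausible reading of "averaged", not a confirmed detail.

```python
import numpy as np


def eer(genuine, impostor):
    """Estimate EER from similarity scores (higher = more likely genuine) by threshold sweep."""
    genuine = np.asarray(genuine, dtype=float)
    impostor = np.asarray(impostor, dtype=float)
    best_gap, best_eer = np.inf, 1.0
    for t in np.unique(np.concatenate([genuine, impostor])):
        frr = np.mean(genuine < t)    # genuine attempts rejected at threshold t
        far = np.mean(impostor >= t)  # impostor attempts accepted at threshold t
        if abs(far - frr) < best_gap:
            best_gap, best_eer = abs(far - frr), (far + frr) / 2
    return float(best_eer)


def score_4vs1(enrol_scores):
    """Hypothetical 4vs1 fusion: mean similarity of one probe to the four enrollment samples."""
    return float(np.mean(enrol_scores))
```

In 1vs1 each probe is scored against a single enrollment sample; in 4vs1 the fused score is passed to `eer` instead, and smoothing over several enrollment samples is one reason 4vs1 error rates can be lower.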

For 3D trajectory reconstruction, the authors estimated device orientation by integrating gyroscope data in a Madgwick filter, which uses the accelerometer's gravity reference to correct drift. Accelerometer data was then rotated into the Earth (inertial) frame, compensated for gravity, and double-integrated to recover velocity and position trajectories. Cutoff frequencies for the Butterworth high-pass filters applied to the velocity and position signals were adapted per user using frequency-domain analysis (FFT) to reduce drift. Paired on-screen handwritten signatures served as 2D ground-truth references, enabling a qualitative accuracy assessment of the reconstructed 3D in-air signature paths.
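The rotation, gravity removal and drift-filtered double integration can be sketched as follows. This minimal illustration assumes per-sample orientation quaternions are already available (for example from a Madgwick filter) in `[w, x, y, z]` form, mapping sensor-frame vectors to an Earth frame whose z-axis points up. The summary does not specify how the per-user cutoff is derived from the FFT, so the rule here (a quarter of the dominant frequency, clipped to 0.1 to 2 Hz) is a hypothetical stand-in, as are the filter order and the rectangular (cumulative-sum) integration.

```python
import numpy as np
from scipy.signal import butter, filtfilt

G = 9.81  # m/s^2, assumed standard gravity


def quat_to_matrix(q):
    """Rotation matrix taking sensor-frame vectors to the Earth frame, q = [w, x, y, z]."""
    w, x, y, z = q
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])


def dominant_frequency(sig, fs):
    """Strongest non-DC frequency of an (n, 3) signal, summing spectral power over axes."""
    power = (np.abs(np.fft.rfft(sig - sig.mean(axis=0), axis=0)) ** 2).sum(axis=1)
    freqs = np.fft.rfftfreq(sig.shape[0], d=1.0 / fs)
    return freqs[1:][np.argmax(power[1:])]


def highpass(sig, cutoff, fs, order=2):
    """Zero-phase Butterworth high-pass along the time axis."""
    b, a = butter(order, cutoff, btype="highpass", fs=fs)
    return filtfilt(b, a, sig, axis=0)


def reconstruct_trajectory(acc, quats, fs=100.0):
    """acc: (n, 3) raw accelerometer in sensor frame (m/s^2); quats: (n, 4) orientations."""
    # 1) Rotate every sample into the Earth frame, then remove gravity
    rot = np.stack([quat_to_matrix(q) for q in quats])
    lin = np.einsum("nij,nj->ni", rot, acc) - np.array([0.0, 0.0, G])
    # 2) Per-recording cutoff from the spectrum (hypothetical rule)
    cutoff = float(np.clip(0.25 * dominant_frequency(lin, fs), 0.1, 2.0))
    # 3) Integrate twice, high-passing after each integration to suppress drift
    vel = highpass(np.cumsum(lin, axis=0) / fs, cutoff, fs)
    pos = highpass(np.cumsum(vel, axis=0) / fs, cutoff, fs)
    return pos, cutoff
```

High-pass filtering after each integration removes the constant offsets and slow drift that integration accumulates, at the cost of also discarding any genuine motion below the cutoff; this is why the cutoff has to be tuned per recording.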

Code and the AirSignatureDB dataset are publicly available upon request, facilitating reproducibility.

Technical innovations

- Introduction of AirSignatureDB, the largest multi-session, multi-device, real-world dataset of in-air signatures including skilled forgeries for robust biometric evaluation.

- Benchmarking of both traditional DTW and state-of-the-art deep learning time-series models for in-air signature verification in challenging realistic conditions.

- Novel demonstration of feasible 3D trajectory reconstruction of in-air signatures using only smartphone inertial sensors and orientation estimation with a Madgwick filter, validated with paired on-screen handwritten signatures.

- Analysis revealing the privacy risks arising from the non-traceless nature of in-air gestures when sensor data enables reconstruction of behavioral biometrics.

Datasets

- AirSignatureDB — 108 users, 4 sessions, 83 smartphone models — collected by authors, publicly available upon request

Baselines vs proposed

- DTW with linear accelerometer: random impostor EER = 9.0% (1vs1) and 4.7% (4vs1)

- FCN with linear accelerometer: 1vs1 skilled forgery EER = 8.9%

- ResNet with gyroscope: 1vs1 skilled forgery EER = 8.9%

- ResNet with sensor fusion (acc + lin acc + gyro): 4vs1 skilled forgery EER = 2.3%

- RNN: best EER 11.3% in skilled forgery 1vs1, significantly worse than CNNs

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2508.08502.

Fig 2: Example of 3D trajectory reconstruction from our

Fig 3: Two in-air signatures from the same user reconstructed

Fig 3 (page 8).

Fig 4 (page 8).

Fig 5 (page 8).

Limitations

- Performance remains modest compared to controlled datasets, indicating real-world collection introduces significant variability and noise.

- Reconstruction of 3D trajectories depends on careful sensor fusion and drift mitigation, and its evaluation is mostly qualitative, with few quantitative accuracy metrics.

- RNN models underperform compared to CNNs, suggesting sequence modeling can be improved with additional temporal alignment or preprocessing.

- Skilled forgeries come from only two impostors; scalability and robustness against a larger, more diverse attacker population remain untested.

- Privacy risk discussion is conceptual; no formal privacy-preserving mechanisms or mitigation strategies are evaluated.

- Dataset demographics skew toward right-handed users with moderate age diversity, and certain subpopulations are underrepresented.

Open questions / follow-ons

- How can privacy-preserving methods be integrated effectively to protect against trajectory reconstruction from inertial biometrics?

- What approaches can improve robustness and accuracy of RNN and sequential models in highly variable, real-world signature data?

- How generalizable are the results to other behavioral biometrics captured by inertial sensors with varying device characteristics?

- Can trajectory reconstruction be made quantitatively precise enough to serve as reliable forensic evidence?

Why it matters for bot defense

For bot-defense and CAPTCHA practitioners, this work underlines the relevance of behavioral biometrics captured by ubiquitous inertial sensors under unconstrained conditions, and shows that they can be robust against skilled forgery attacks when multiple sensor modalities are fused and several enrollment samples are used. AirSignatureDB fills a critical need for real-world datasets that capture device heterogeneity and attacker modeling, enabling more realistic evaluation of behavioral biometric security systems.

The trajectory reconstruction findings are a privacy warning: biometrics thought to be ephemeral or traceless may in fact leak detailed, user-specific movement patterns that can be exploited for identity tracing or profiling. Designers of CAPTCHA or bot-detection systems that incorporate inertial biometric signals must therefore consider threat models that include sensor data leakage and reconstruction of behavioral data. This underscores the importance of privacy-aware mechanisms and of evaluating system vulnerability beyond classical spoofing, including side-channel and data inversion attacks.

Cite

@article{arxiv2508_08502,
  title={AirSignatureDB: Exploring In-Air Signature Biometrics in the Wild and its Privacy Concerns},
  author={Marta Robledo-Moreno and Ruben Vera-Rodriguez and Ruben Tolosana and Javier Ortega-Garcia and Andres Huergo and Julian Fierrez},
  journal={arXiv preprint arXiv:2508.08502},
  year={2025},
  url={https://arxiv.org/abs/2508.08502}
}