SoftPUF: a Software-Based Blockchain Framework using PUF and Machine Learning

Source: arXiv:2508.02438 · Published 2025-08-04 · By S M Mostaq Hossain, Sheikh Ghafoor, Kumar Yelamarthi, Venkata Prasanth Yanambaka

TL;DR

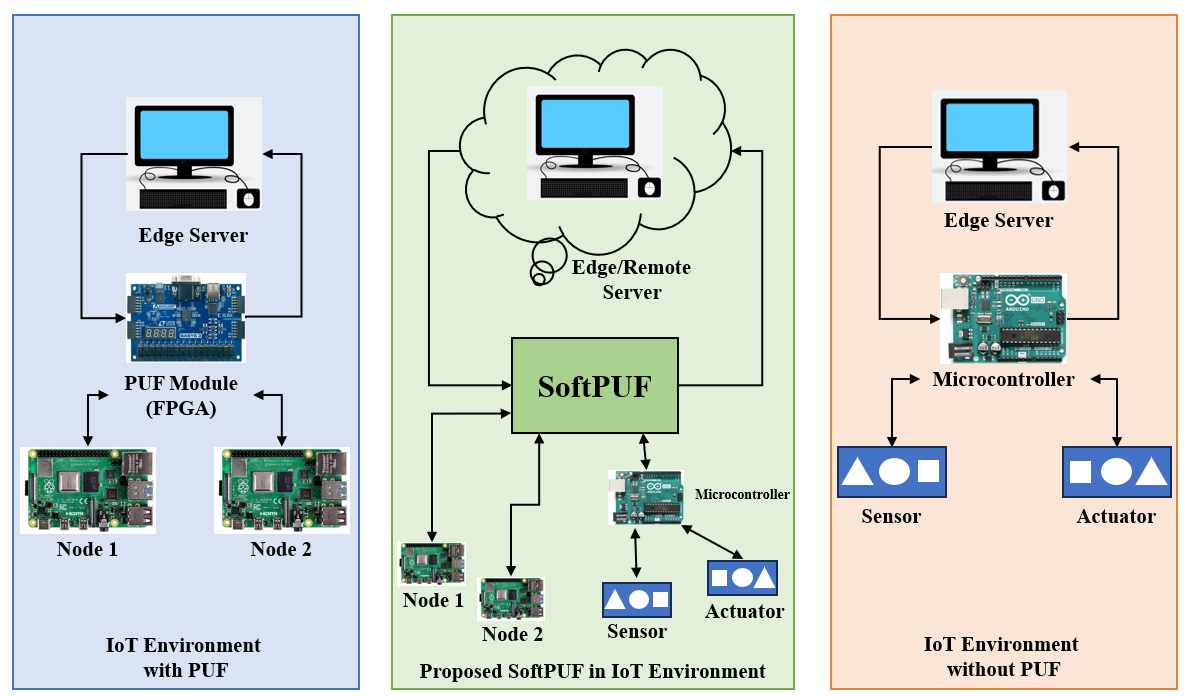

This paper addresses the challenge of deploying Physically Unclonable Functions (PUFs) for secure authentication in blockchain systems without relying on specialized hardware. Traditional hardware PUFs are lightweight and secure, but their dependence on dedicated hardware hinders adoption across diverse and resource-limited devices. The authors propose SoftPUF, a software-based framework that uses machine learning models trained on PUF challenge-response pairs to generate unique software keys mimicking hardware PUF fingerprints. These keys then serve as secure identifiers embedded in a blockchain network for device authentication, eliminating the need for physical PUF modules.

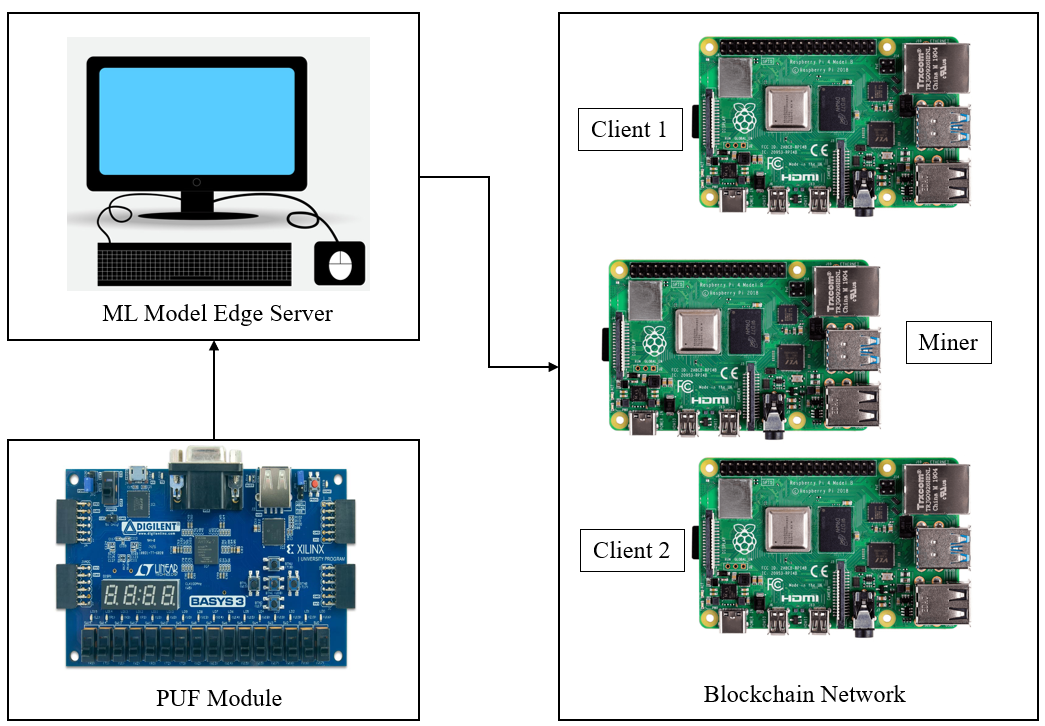

The framework integrates the SoftPUF key generation model with a blockchain system secured against common attacks such as 51%, phishing, routing, and Sybil attacks. In the experiments, a 1-million-sample PUF dataset is used to train a custom linear-regression-based model on an edge server (Google Colab Pro); the authors argue this model balances accuracy and computational efficiency better than the other regressors they tested. The end-to-end pipeline, covering key generation, encryption, blockchain transaction, and trusted-node authentication, completes in an average of 50.77 ms, which the authors present as evidence of practical suitability for IoT and legacy devices. By replacing the hardware PUF with ML-based emulation, the approach extends PUF-based authentication to a wider range of devices and blockchain applications.

Key findings

- SoftPUF applies machine learning to a 1,000,000 × 8 PUF challenge-response dataset to generate unique keys with PUF characteristics, without dedicated hardware.

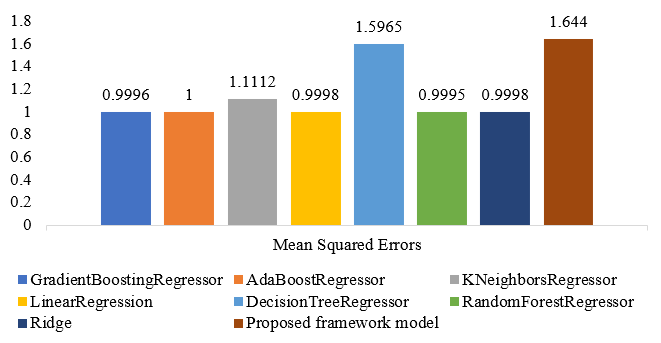

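- GradientBoostingRegressor is reported as the strongest baseline (MAE 0.9927, MSE 0.9996), although the RandomForestRegressor scores in the baseline comparison (MAE 0.9926, MSE 0.9995) are marginally lower. The proposed custom linear regression model scores worse on both metrics (MAE 0.9946, MSE 1.6440), and the authors justify it as a balance between accuracy and efficiency.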

- The complete blockchain transaction (key generation, mining, validation) is reported to take 50.77 ms in total, with the block generation/model prediction phase dominating. That phase is reported as 37.8 s, which cannot fit inside a 50.77 ms total; it is likely a unit typo or a separately measured quantity.

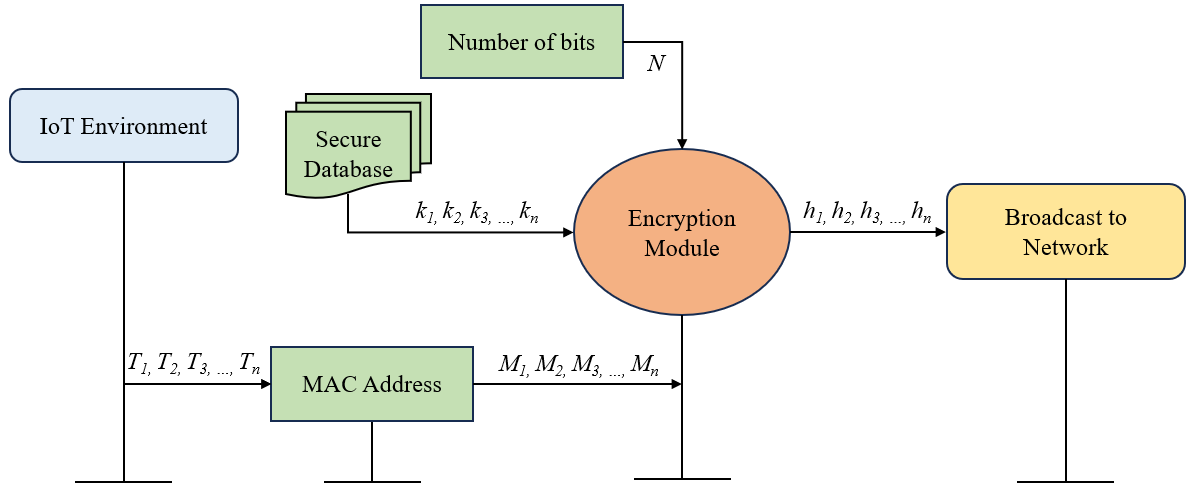

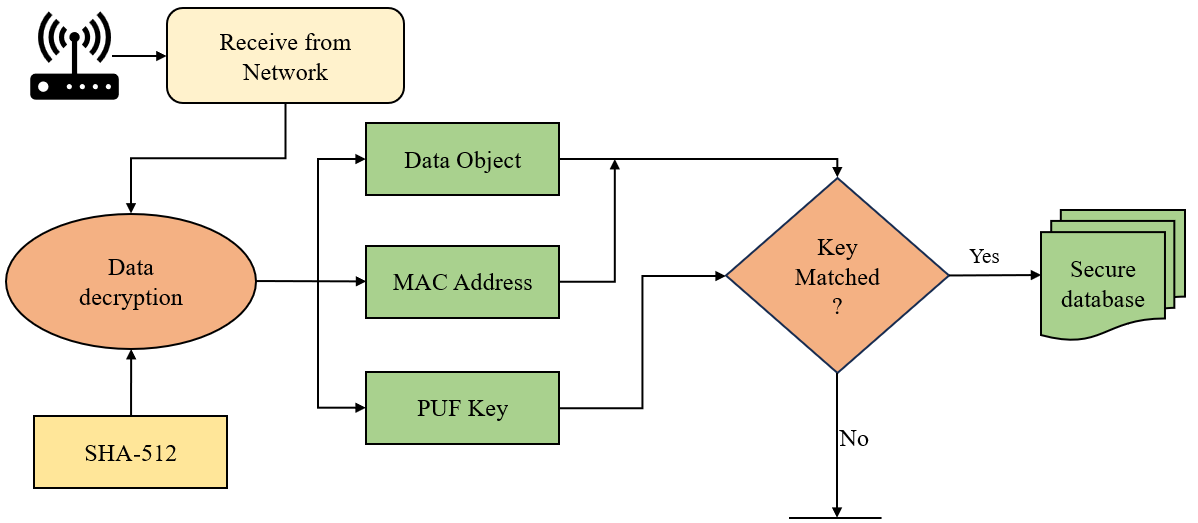

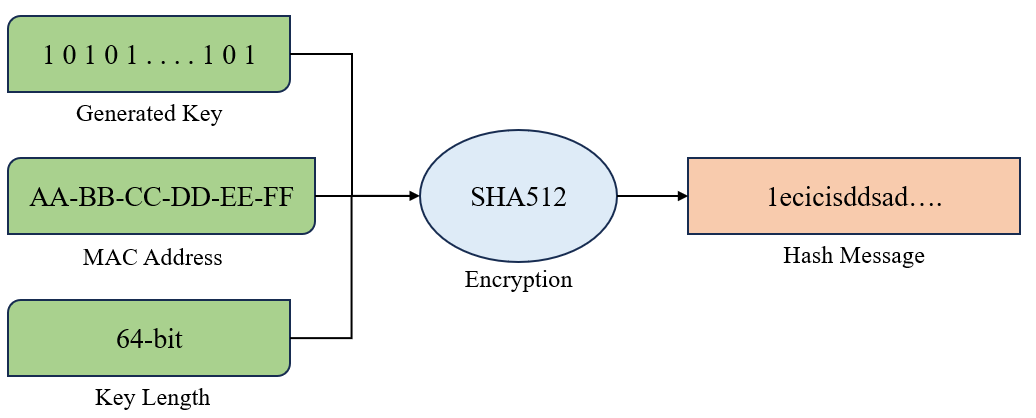

- SoftPUF keys are encrypted with the device's MAC address and hashed with SHA-512 before being broadcast to blockchain nodes for authentication.

- Integrated defense mechanisms protect against 51% attacks (adaptive hash rate), phishing (email domain whitelisting and DNS MX record checks), routing attacks (encryption and IP validation), and Sybil attacks (node validation and reputation scores).

- The ML model uses the Adam optimizer, hinge loss, ReLU and tanh activations, and He-uniform kernel initialization, without dropout or z-score normalization.

- The framework supports seamless onboarding (enrollment) of legacy devices as miners or clients using software-generated PUF keys in a distributed IoT blockchain network.

- Offloading model training to cloud-based edge infrastructure (Google Colab) removes the computational burden from the devices themselves, which the authors argue enables scaling across diverse environments.
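The key-binding step in the findings above can be sketched as follows. The summary does not say how the key is "encrypted with the device MAC", so this sketch assumes a toy scheme: XOR the key with a SHA-512-derived keystream seeded by the MAC, then publish the SHA-512 digest of the result. All function names and the sample key and MAC are hypothetical. Because MAC addresses are observable on the network, this binds the key to the device identity but does not make it confidential.

```python
import hashlib
import hmac

def normalize_mac(mac: str) -> bytes:
    """Convert 'AA:BB:CC:DD:EE:FF' (or '-'-separated) into 6 raw bytes."""
    hex_digits = mac.replace(":", "").replace("-", "").lower()
    if len(hex_digits) != 12:
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    return bytes.fromhex(hex_digits)

def mask_with_mac(soft_key: bytes, mac: str) -> bytes:
    """ASSUMED stand-in for 'encryption with the device MAC': XOR the key with a
    SHA-512 keystream derived from the MAC. Applying it twice restores the key.
    Not confidential, since MAC addresses are observable."""
    mac_bytes = normalize_mac(mac)
    stream, counter = b"", 0
    while len(stream) < len(soft_key):
        stream += hashlib.sha512(mac_bytes + counter.to_bytes(4, "big")).digest()
        counter += 1
    return bytes(k ^ s for k, s in zip(soft_key, stream))

def broadcast_digest(soft_key: bytes, mac: str) -> str:
    """SHA-512 hex digest of the MAC-masked key: the value sent to nodes."""
    return hashlib.sha512(mask_with_mac(soft_key, mac)).hexdigest()

def verify(soft_key: bytes, mac: str, received_digest: str) -> bool:
    """Trusted-node check: recompute the digest and compare in constant time."""
    return hmac.compare_digest(broadcast_digest(soft_key, mac), received_digest)

if __name__ == "__main__":
    key = bytes.fromhex("3f9a6c01d2e47b88")  # hypothetical 64-bit soft PUF key
    mac = "00:1A:2B:3C:4D:5E"                # hypothetical device MAC
    digest = broadcast_digest(key, mac)
    print(len(digest), verify(key, mac, digest), verify(key, "00:1A:2B:3C:4D:5F", digest))
```

A digest computed under a different MAC fails verification, which is the device-binding property the pipeline relies on.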

Threat model

The adversary aims to impersonate legitimate devices or compromise blockchain consensus through 51% attacks, phishing, routing manipulation, or Sybil attacks. They do not possess physical access to device hardware PUFs or the ability to clone soft PUF keys generated by the ML model. The adversary cannot break the cryptographic protections of key hashing or encryption or fully control trusted nodes without detection.

Methodology — deep read

The threat model considers adversaries capable of launching 51%, phishing, routing-manipulation, and Sybil attacks on the blockchain network, but without physical access to, or the ability to tamper with, the devices' internal PUF data. The framework assumes that adversaries cannot clone a device's soft PUF key, relying on its uniqueness and the complexity of the ML model that generates it.

The data come from a 1,000,000 × 8 challenge-response pair dataset produced by a 64-bit arbiter PUF implemented on a Xilinx Artix-7 FPGA. Each entry pairs a challenge (binary input) with a device-specific response. The dataset is preprocessed and transmitted securely to a cloud edge server (Google Colab Pro, 12.7 GB RAM) for model training.
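To make the challenge-response data concrete, the sketch below uses the standard additive delay model of an n-stage arbiter PUF, in which the response is the sign of a weighted sum of parity features of the challenge. It simulates PUF instances in software with random Gaussian weights; it is not how the authors' FPGA dataset was produced, and the class and function names are hypothetical. This linear structure is also why plain arbiter PUFs are known to be learnable by ML models, which is what a soft PUF emulator exploits.

```python
import random

def parity_features(challenge):
    """Map a 0/1 challenge of length n to the n+1 parity features of the
    additive delay model: phi_i = prod_{j>=i} (1 - 2*c_j), plus a bias term 1."""
    n = len(challenge)
    phi = [0] * (n + 1)
    phi[n] = 1
    acc = 1
    for i in range(n - 1, -1, -1):
        acc *= 1 - 2 * challenge[i]
        phi[i] = acc
    return phi

class SimulatedArbiterPUF:
    """Hypothetical software model of one arbiter PUF instance; the Gaussian
    stage-delay weights stand in for manufacturing variation."""
    def __init__(self, n_stages=64, seed=None):
        rng = random.Random(seed)
        self.n = n_stages
        self.weights = [rng.gauss(0.0, 1.0) for _ in range(n_stages + 1)]

    def response(self, challenge):
        delay_diff = sum(w * p for w, p in zip(self.weights, parity_features(challenge)))
        return 1 if delay_diff > 0 else 0

def generate_crps(puf, count, seed=0):
    """Sample uniformly random challenges and record (challenge, response) pairs."""
    rng = random.Random(seed)
    crps = []
    for _ in range(count):
        c = [rng.randint(0, 1) for _ in range(puf.n)]
        crps.append((c, puf.response(c)))
    return crps

if __name__ == "__main__":
    puf_a, puf_b = SimulatedArbiterPUF(seed=1), SimulatedArbiterPUF(seed=2)
    crps = generate_crps(puf_a, 1000)
    agree = sum(puf_b.response(c) == r for c, r in crps)
    print(f"device B matches device A on {agree}/1000 challenges")
```

Two independently seeded instances should agree on roughly half of the challenges, which is the uniqueness property a PUF fingerprint relies on.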

The architecture is described as a custom linear regression model that learns a mapping from challenges to responses in order to mimic PUF behavior, generating unique device keys without dedicated PUF hardware. As described, though, the model is a multilayer network rather than a plain linear regressor: an input layer followed by several fully connected layers with ReLU and tanh activations, with widths of 512-512-128-128-64-32-8. It is trained with the Adam optimizer on a hinge loss, which is designed for binary classification. Dropout and z-score normalization are omitted, so the model is fit directly to the raw dataset characteristics.
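The reported layer widths can be turned into a rough size estimate. The sketch assumes a 64-dimensional input (one feature per challenge bit), which the summary does not state, and treats the final width of 8 as the output layer. It also shows the He-uniform bound sqrt(6 / fan_in) and the hinge loss with 0/1 labels mapped to -1/+1, mirroring Keras's `hinge` convention. How ReLU and tanh are distributed across the layers is not specified, so activations are left out.

```python
import math

LAYER_WIDTHS = [512, 512, 128, 128, 64, 32, 8]  # widths reported in the summary
INPUT_DIM = 64  # ASSUMPTION: one input feature per arbiter-PUF challenge bit

def dense_param_count(input_dim, widths):
    """Count weights plus biases for a stack of fully connected layers."""
    total, fan_in = 0, input_dim
    for width in widths:
        total += fan_in * width + width
        fan_in = width
    return total

def he_uniform_limit(fan_in):
    """He-uniform initialization draws weights from U(-limit, +limit)."""
    return math.sqrt(6.0 / fan_in)

def hinge_loss(labels01, scores):
    """Mean hinge loss max(0, 1 - y*s), with 0/1 labels mapped to -1/+1."""
    losses = [max(0.0, 1.0 - (2 * y - 1) * s) for y, s in zip(labels01, scores)]
    return sum(losses) / len(losses)

if __name__ == "__main__":
    print("trainable parameters:", dense_param_count(INPUT_DIM, LAYER_WIDTHS))
    print("first-layer He-uniform limit:", round(he_uniform_limit(INPUT_DIM), 4))
    print("hinge loss:", hinge_loss([1, 0, 1], [0.8, -2.0, -0.5]))
```

Under the 64-input assumption the stack has 388,712 trainable weights and biases, most of them in the first two 512-wide layers.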

Training uses the Adam optimizer over multiple epochs; the number of epochs and the batch size are not stated. Training runs in a cloud-hosted environment, which provides scalable computation and removes training overhead from the devices. Random seeds and train/validation/test splits are not reported, which limits reproducibility.
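Because the split and seeds are not reported, the sketch below shows one way to make them explicit: a seeded shuffle that yields the same disjoint train/validation/test indices on every run. The 70/10/20 proportions and the seed value are arbitrary assumptions, and deep learning frameworks need their own seeds set separately.

```python
import random

def seeded_split(n_samples, test_fraction=0.2, val_fraction=0.1, seed=42):
    """Return disjoint train/val/test index lists that are identical on every
    run with the same seed, so results can be reproduced and audited."""
    if not 0 < test_fraction + val_fraction < 1:
        raise ValueError("fractions must leave a non-empty training set")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # private RNG: no global state touched
    n_test = int(n_samples * test_fraction)
    n_val = int(n_samples * val_fraction)
    test = indices[:n_test]
    val = indices[n_test:n_test + n_val]
    train = indices[n_test + n_val:]
    return train, val, test
```

For a 1,000,000-row dataset, `seeded_split(1_000_000)` returns 700,000 / 100,000 / 200,000 indices.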

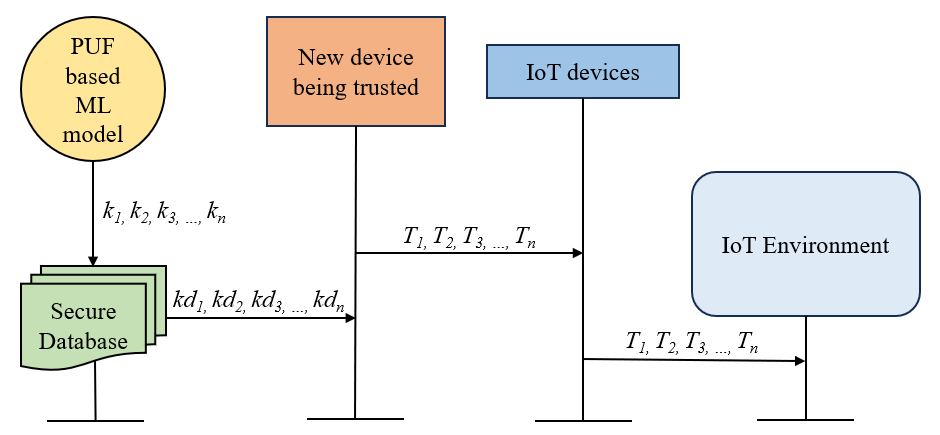

Evaluation uses regression metrics, including Mean Absolute Error (MAE), Mean Squared Error (MSE), R-squared, and Relative Mean Absolute Percentage Error (RMAPE), to compare the proposed model with GradientBoostingRegressor, AdaBoost, KNeighbors, DecisionTree, RandomForest, LinearRegression, and Ridge regressors. The proposed model's MAE is close to that of the listed baselines, but its MSE is noticeably higher. An end-to-end example generates a soft PUF key for a device, encrypts it with the device's MAC address, hashes it with SHA-512, broadcasts it to blockchain miners, and has trusted nodes validate its authenticity via private-key decryption before adding blocks.
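MAE, MSE, and R-squared have standard definitions, sketched below in plain Python; the summary does not define RMAPE, so it is omitted. These scores depend on the scale of the target, which the summary also does not state, so the absolute MAE and MSE values reported for the models are best read comparatively.

```python
def regression_metrics(y_true, y_pred):
    """Return MAE, MSE and R-squared for two equal-length numeric sequences."""
    n = len(y_true)
    if n == 0 or n != len(y_pred):
        raise ValueError("need two non-empty sequences of equal length")
    errors = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)                  # residual sum of squares
    mae = sum(abs(e) for e in errors) / n
    mse = ss_res / n
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    return {"MAE": mae, "MSE": mse, "R2": r2}
```

An R-squared near 0 means the model does no better than always predicting the mean of the targets.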

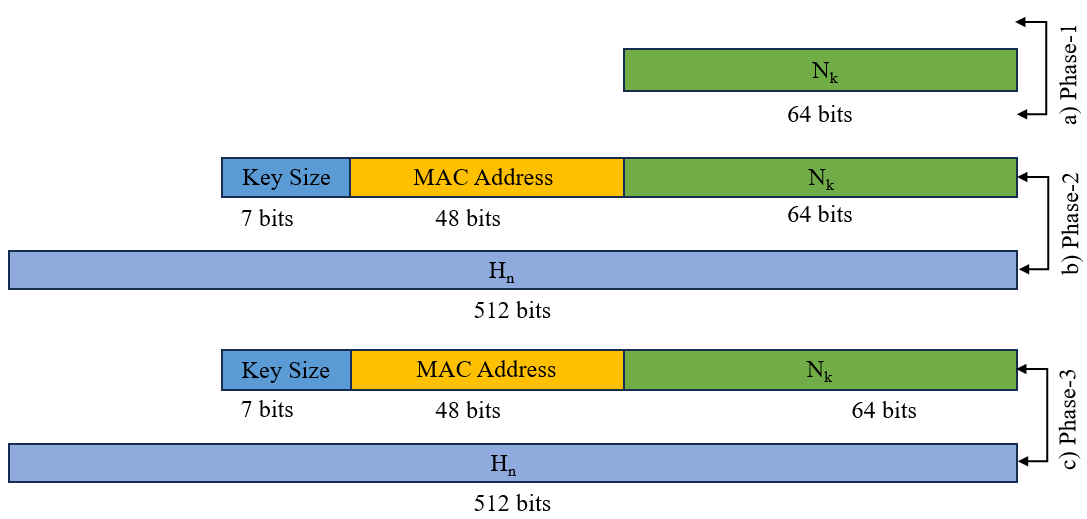

Performance benchmarks put the total blockchain transaction time (enrollment to block addition) at 50.77 ms, split into three phases: (1) model prediction and block generation, which dominates; (2) mining and key verification; and (3) block addition. The paper also reports message flow sizes and describes secure encapsulation intended to preserve communication integrity.
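The three phases can be illustrated with a toy hash-linked chain. The sketch assumes SHA-512 proof of work with a fixed `difficulty` (number of leading zero hex digits) and stores only the key digest in each block; the paper's actual block format and consensus rules are not given here, and model prediction is not modeled. Timing each phase with `time.perf_counter` makes the phases add up to the total by construction, which is exactly the consistency check the reported 37.8 s phase fails against the 50.77 ms total.

```python
import hashlib
import json
import time

GENESIS_PREV = "0" * 128  # placeholder "previous hash" for the first block

def block_hash(body: dict) -> str:
    """SHA-512 over a canonical JSON encoding of the block body."""
    return hashlib.sha512(json.dumps(body, sort_keys=True).encode()).hexdigest()

def mine(body: dict, difficulty: int):
    """Toy proof of work: increment the nonce until the digest has
    `difficulty` leading zero hex digits."""
    candidate = dict(body, nonce=0)
    prefix = "0" * difficulty
    while True:
        digest = block_hash(candidate)
        if digest.startswith(prefix):
            return candidate, digest
        candidate["nonce"] += 1

def add_key_block(chain: list, key_digest: str, difficulty: int = 2) -> dict:
    """Generate, mine/verify, and append one block; return per-phase times in ms."""
    t0 = time.perf_counter()
    prev = chain[-1]["hash"] if chain else GENESIS_PREV
    body = {"index": len(chain), "prev": prev, "key_digest": key_digest}  # phase 1
    t1 = time.perf_counter()
    mined, digest = mine(body, difficulty)                                  # phase 2
    # Re-check the proof of work as a receiving node would.
    if block_hash(mined) != digest or not digest.startswith("0" * difficulty):
        raise RuntimeError("block failed verification")
    t2 = time.perf_counter()
    chain.append(dict(mined, hash=digest))                                  # phase 3
    t3 = time.perf_counter()
    return {
        "generate": (t1 - t0) * 1000.0,
        "mine_and_verify": (t2 - t1) * 1000.0,
        "append": (t3 - t2) * 1000.0,
        "total": (t3 - t0) * 1000.0,
    }

def verify_chain(chain: list) -> bool:
    """Recompute every hash and check each block links to its predecessor."""
    for i, block in enumerate(chain):
        body = {k: v for k, v in block.items() if k != "hash"}
        expected_prev = chain[i - 1]["hash"] if i else GENESIS_PREV
        if block_hash(body) != block["hash"] or block["prev"] != expected_prev:
            return False
    return True
```

Raising `difficulty` by one multiplies the expected mining work by 16, so the mining phase is the natural knob when comparing latency across configurations.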

Defense mechanisms for blockchain security incorporate adaptive hash rate escalation for 51% attack mitigation, domain/IP whitelisting and DNS verification for phishing protection, encrypted routing with IP validation against routing attacks, and node identity plus reputation scoring against Sybil attacks.
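Two of these defenses can be sketched in a few lines. Everything here is hypothetical: the whitelist contents, the exponential-moving-average reputation update, the neutral starting score of 0.5, and the 0.5 admission threshold are assumptions, and the DNS MX check is omitted because it needs a resolver. The Sybil check requires both a registered identity (for example, an enrolled SoftPUF digest) and a sufficient reputation.

```python
from dataclasses import dataclass, field

ALLOWED_SENDER_DOMAINS = {"example.org"}  # hypothetical whitelist

def sender_domain_allowed(email: str, allowed=ALLOWED_SENDER_DOMAINS) -> bool:
    """Phishing filter sketch: accept only senders whose exact domain is whitelisted."""
    local, sep, domain = email.rpartition("@")
    return bool(sep and local) and domain.lower() in allowed

@dataclass
class ReputationTable:
    """Sybil-resistance sketch: scores move toward 1 on good behavior and toward
    0 on bad behavior; low-scoring nodes may not validate."""
    threshold: float = 0.5
    alpha: float = 0.2     # weight given to the newest observation
    initial: float = 0.5   # ASSUMPTION: newcomers start neutral
    scores: dict = field(default_factory=dict)

    def record(self, node_id: str, behaved_well: bool) -> float:
        prev = self.scores.get(node_id, self.initial)
        new = (1 - self.alpha) * prev + self.alpha * (1.0 if behaved_well else 0.0)
        self.scores[node_id] = new
        return new

    def may_validate(self, node_id: str, registered_ids: set) -> bool:
        """A node must be registered AND have a score at or above the threshold."""
        score = self.scores.get(node_id, self.initial)
        return node_id in registered_ids and score >= self.threshold
```

Requiring registration blocks cheaply created identities, while the reputation score demotes registered nodes that misbehave.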

Reproducibility details are limited: the dataset is proprietary (from the FPGA PUF module), training is done on Google Colab, and code or trained model weights are not explicitly released. Thus exact replication may be challenging without access to the original data and training pipeline.

Technical innovations

- Use of a software-emulated PUF generator based on machine learning trained on real PUF challenge-response pairs, removing hardware dependency.

- Integration of SoftPUF-generated unique keys as authentication tokens within a blockchain network supporting legacy and resource-constrained devices.

- A combined security framework embedding defense mechanisms against 51%, phishing, routing, and Sybil attacks in the blockchain environment complementing soft PUF authentication.

- Implementation of a lightweight blockchain transaction pipeline that hashes software keys with SHA-512 and encrypts them using device MAC addresses for secure peer validation.

Datasets

- PUF dataset — approximately 1,000,000 challenge-response pairs — sourced from 64-bit arbiter PUF on Xilinx Artix-7 FPGA

Baselines vs proposed

- GradientBoostingRegressor: MAE = 0.9927, MSE = 0.9996 vs Proposed Linear Regression Model: MAE = 0.9946, MSE = 1.6440

- AdaBoostRegressor: MAE = 0.9930, MSE = 1.0000 vs Proposed Linear Regression Model: MAE = 0.9946, MSE = 1.6440

- RandomForestRegressor: MAE = 0.9926, MSE = 0.9995 vs Proposed Linear Regression Model: MAE = 0.9946, MSE = 1.6440

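- KNeighborsRegressor: MAE = 0.9927, MSE = 1.1112 vs Proposed Linear Regression Model: MAE = 0.9946, MSE = 1.6440 (higher MSE than the other baselines, but still lower than the proposed model's)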
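The listed baseline numbers can be ranked directly. Assuming lower MAE and MSE are better, the sketch below sorts the reported values and computes the proposed model's MSE gap relative to GradientBoostingRegressor; the figures are copied from the comparison list and nothing else is assumed.

```python
# (MAE, MSE) as reported in the baseline comparison
REPORTED = {
    "GradientBoostingRegressor": (0.9927, 0.9996),
    "AdaBoostRegressor": (0.9930, 1.0000),
    "RandomForestRegressor": (0.9926, 0.9995),
    "KNeighborsRegressor": (0.9927, 1.1112),
    "Proposed model": (0.9946, 1.6440),
}
MAE, MSE = 0, 1  # tuple positions

def rank_by(metric):
    """Model names from best to worst on a lower-is-better metric (stable on ties)."""
    return sorted(REPORTED, key=lambda name: REPORTED[name][metric])

def relative_gap(name, reference, metric):
    """Percentage by which `name` exceeds `reference` on the given metric."""
    a, b = REPORTED[name][metric], REPORTED[reference][metric]
    return 100.0 * (a - b) / b
```

On these numbers the proposed model ranks last on both MAE and MSE, with an MSE roughly 64% higher than GradientBoostingRegressor's, so the "balance" argument rests on efficiency figures that this summary does not list.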

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2508.02438.

Fig 1: Overview diagram of SoftPUF.

Fig 2: Device enrollment steps.

Fig 3: Initial transaction steps.

Fig 4: Device authentication for the trusted nodes.

Fig 5: Experimental Setup of the Testbed.

Fig 6: MSE comparison of different regressor models.

Fig 7: Hash generation process.

Fig 8: Message flow architecture, and mes…

Limitations

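- The model is labeled a linear regression model, which would limit how well it captures nonlinear PUF characteristics compared with ensemble methods like GradientBoosting; however, the described architecture includes ReLU and tanh layers, so its actual capacity is unclear.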

- Lack of explicit details on train/test split or cross-validation limits assessment of overfitting and generalization.

- No adversarial evaluation reported for ML model spoofing or cloning attempts against the soft PUF keys.

- Scalability claims rest on offloading to a cloud edge server, but network overhead with large numbers of heterogeneous devices is not analyzed.

- The reported 37.8 s model-prediction phase contradicts the overall transaction time of 50.77 ms, indicating a measurement or reporting inconsistency.

- No code or dataset is released, which hinders independent verification.

- Defense mechanisms against blockchain attacks are described, but no quantitative metrics or attacker models are provided to validate them.

Open questions / follow-ons

- How resilient is the soft PUF ML model against adversarial attacks aiming to reverse-engineer or clone challenge-response behavior?

- What impact do different blockchain consensus protocols and scales have on SoftPUF key management latency and throughput in varied environments?

- Can reinforcement learning or federated learning further optimize the ML-based key generation for adaptability and security?

- How do software-generated PUF keys perform in real-world deployment scenarios with device aging, software updates, and dynamic network conditions?

Why it matters for bot defense

This framework offers bot-defense practitioners an innovative direction whereby unique hardware fingerprints used in device authentication can be emulated purely via software and machine learning, facilitating broader applicability. For CAPTCHA and bot-detection, such software-based PUFs embedded on client devices could strengthen identity certainty without needing specialized hardware, ideally resisting spoofing or mass compromise.

Integrating these SoftPUF keys with blockchain-backed authentication adds a decentralized, tamper-evident ledger of device identities and transactions, enhancing traceability and trust in automated interaction contexts. Furthermore, the layered blockchain defense measures against classical attacks improve robustness in potentially adversarial environments. However, the approach relies heavily on trusted ML models and secure deployment pipelines, which developers must carefully validate before adoption in production bot-defense systems.

Cite

@article{arxiv2508_02438,
  title={SoftPUF: a Software-Based Blockchain Framework using PUF and Machine Learning},
  author={S M Mostaq Hossain and Sheikh Ghafoor and Kumar Yelamarthi and Venkata Prasanth Yanambaka},
  journal={arXiv preprint arXiv:2508.02438},
  year={2025},
  url={https://arxiv.org/abs/2508.02438}
}