DIRF: A Framework for Digital Identity Protection and Clone Governance in Agentic AI Systems

Source: arXiv:2508.01997 · Published 2025-08-04 · By Hammad Atta, Muhammad Zeeshan Baig, Yasir Mehmood, Nadeem Shahzad, Ken Huang, Muhammad Aziz Ul Haq et al.

TL;DR

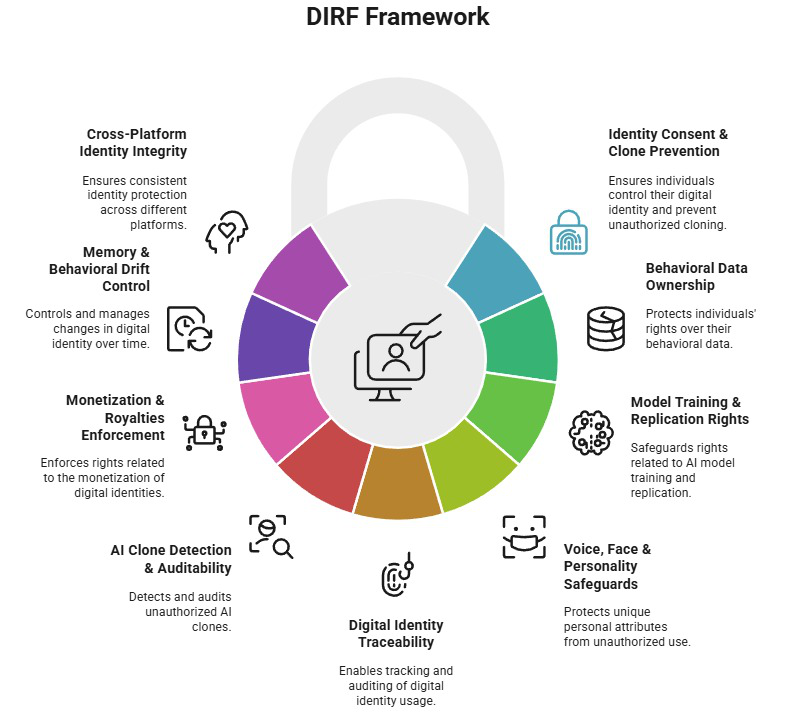

This paper addresses the escalating threats to personal digital identity posed by generative AI technologies, including unauthorized cloning, impersonation, and monetization of digital likeness attributes such as voice, facial features, behavior, and personality traits. Existing regulations and frameworks inadequately cover these complex AI-driven identity risks, creating vulnerabilities exploitable by malicious actors. To combat these challenges, the authors introduce the Digital Identity Rights Framework (DIRF), a comprehensive, multidisciplinary governance and security model. DIRF organizes 63 enforceable controls into nine domains spanning consent, clone detection, traceability, memory drift management, and monetization enforcement. It integrates legal, technical, and hybrid enforcement mechanisms to provide lifecycle-aware protection of digital identities in agentic AI systems.

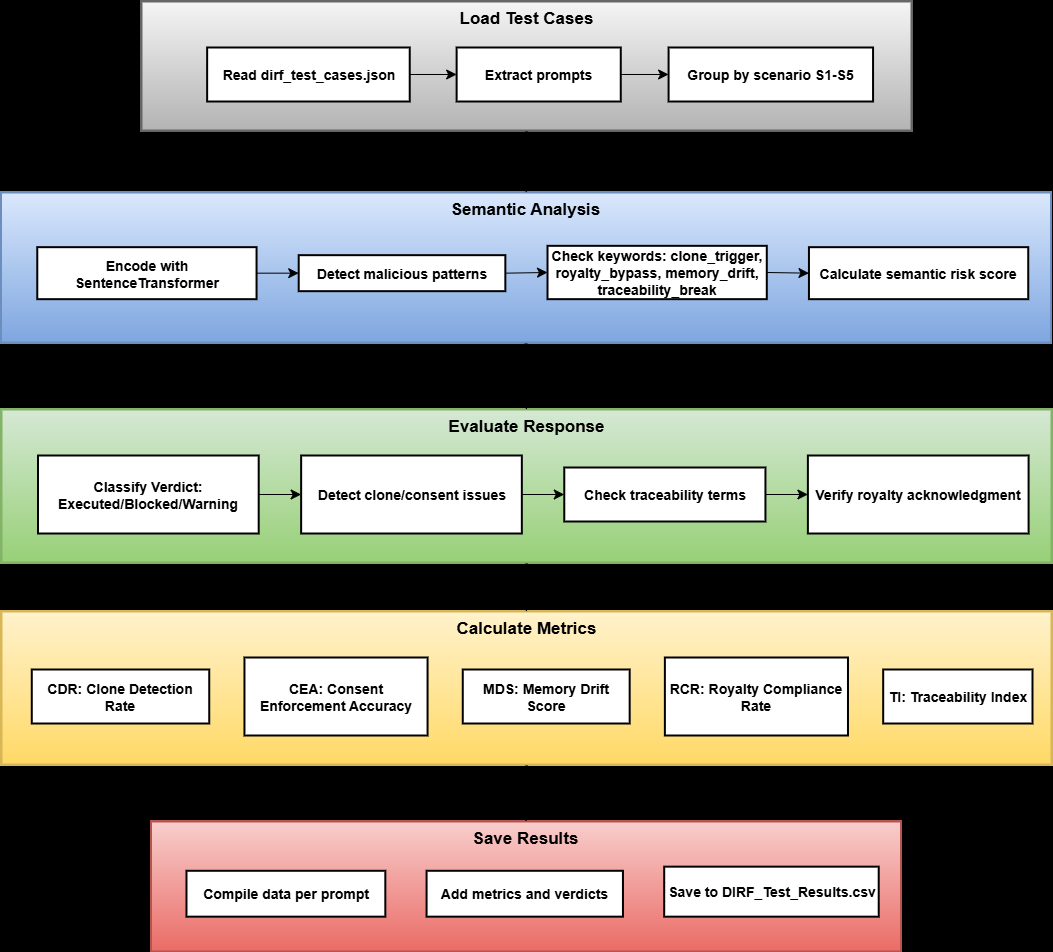

The framework is architected with layered components that facilitate integration at various AI system stages—from training datasets to runtime interactions and audit logging. To validate DIRF, the authors implement an automated evaluation pipeline that subjects large language models to simulated threat scenarios such as identity cloning, behavioral drift, royalty bypass, cross-platform cloning, and unauthorized fine-tuning. Metrics including Clone Detection Rate, Consent Enforcement Accuracy, Memory Drift Score, Royalty Compliance Rate, and Traceability Index show how DIRF controls enable improved detection, governance, and accountability for digital identity risks. Use cases illustrate DIRF’s applicability to voice cloning platforms, behavioral misuse in digital assistants, and rogue avatar marketplaces. Overall, DIRF fills a critical gap by providing the first unified and operational framework for protecting and governing AI-driven digital identities.

Key findings

- DIRF formalizes 63 controls structured across 9 domains, covering identity consent, clone detection, traceability, behavioral ownership, memory drift, and monetization enforcement.

- The evaluation pipeline tests AI outputs across five threat scenarios: unauthorized identity cloning, behavioral drift, royalty bypass, cross-platform clone propagation, and unauthorized fine-tuning.

- DIRF metrics include Clone Detection Rate (CDR), Consent Enforcement Accuracy (CEA), Memory Drift Score (MDS), Royalty Compliance Rate (RCR), and Traceability Index (TI), enabling quantifiable risk assessment.

- Use cases demonstrate enforcement of explicit consent before voice cloning and linked royalty payouts, auditability and user control of behavioral data reuse, and classification plus licensing enforcement for downloadable avatar clones.

- Semantic risk profiling uses SentenceTransformer embeddings combined with keyword detection to assess prompt threat potential prior to evaluation.

- Multi-run LLM testing with three independent trials per prompt quantifies behavioral consistency and drift via cosine similarity of outputs.

- DIRF integrates legal (e.g., contracts, opt-in registries), technical (e.g., biometric gating, watermarking, logs), and hybrid (e.g., licensing plus watermarking) controls enabling flexible adoption.

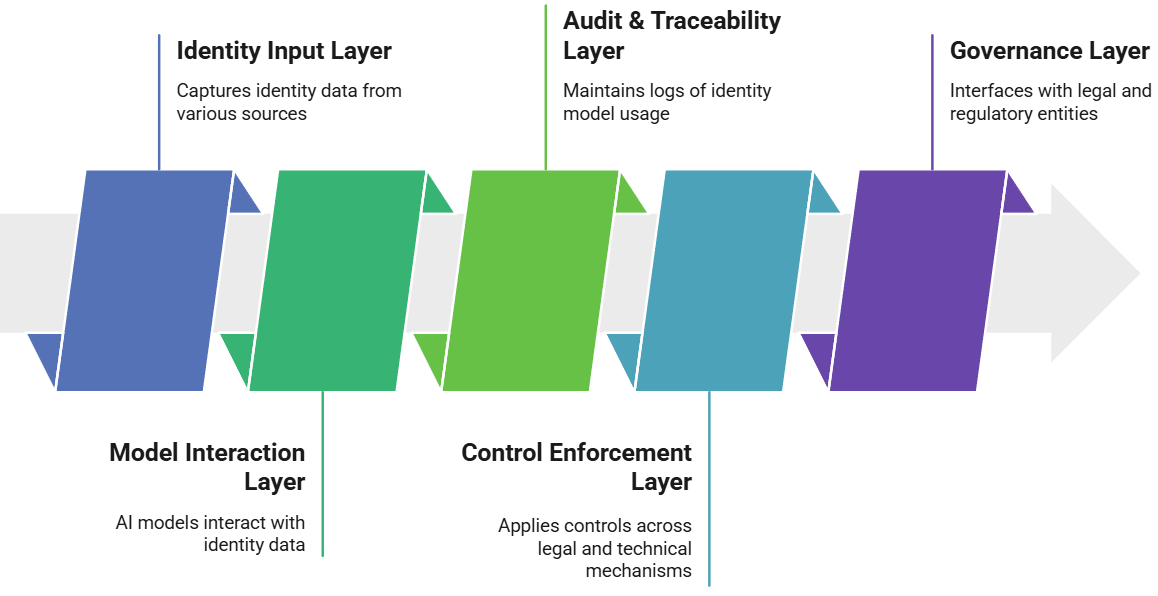

- Architectural layers include Identity Input, Model Interaction, Audit & Traceability, Control Enforcement, and Governance to operationalize controls throughout the AI system lifecycle.

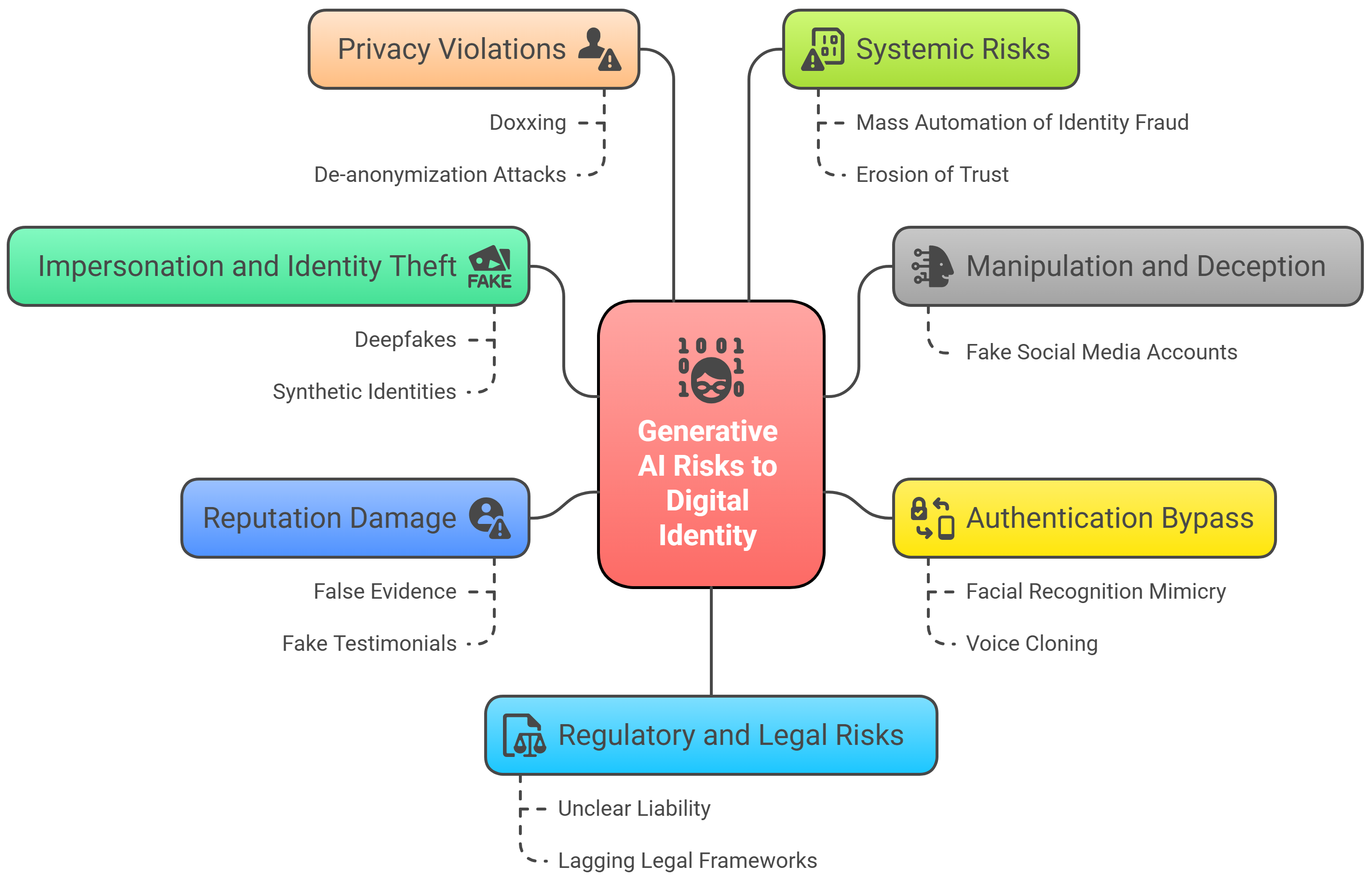

Threat model

The adversary is a malicious actor who leverages generative AI and agentic AI frameworks to create unauthorized digital clones, impersonate individuals, bypass royalty agreements, fine-tune models on personal data without consent, and propagate cloned identities across distributed or multi-tenant systems. The adversary may access public APIs, extract identity traits from publicly available data, or use stealth mechanisms such as silent cloning. They lack explicit consent or legal rights to the identity data and cannot override legally enforced revocation mechanisms or audit trails protected by DIRF controls.

Methodology — deep read

The paper proposes DIRF as a comprehensive framework to systematically address digital identity protection challenges in AI systems. The threat model focuses on adversaries who exploit generative AI models to create unauthorized digital clones, impersonate identities, bypass royalty payments, propagate clones across platforms, or fine-tune models on user data without consent. While the adversary may have access to public APIs or training datasets, they lack legal or ethical rights to users’ behavior, biometrics, or personality data.

Data used for evaluation comprises semantically rich test prompts categorized into five threat scenarios: identity cloning, behavioral drift, royalty bypass, cross-platform cloning, and unauthorized fine-tuning. These are stored as JSON files with metadata for threat categorization and expected compliance behavior. No mention is made of public dataset sizes or external benchmarks, implying custom test data designed specifically for DIRF validation.

The DIRF architecture divides controls into 63 enforceable mechanisms spread over nine domains, with a layered approach: Identity Input (consent validation, data capture), Model Interaction (access policy enforcement, drift detection), Audit & Traceability (logging identity usage, memory forensics), Control Enforcement (applying legal, technical, hybrid controls), and Governance (legal compliance, reporting, enforcement triggers). Controls integrate multiple tactics from frameworks like MAESTRO, with mappings to AI lifecycle layers ranging from foundation models to agent ecosystems.

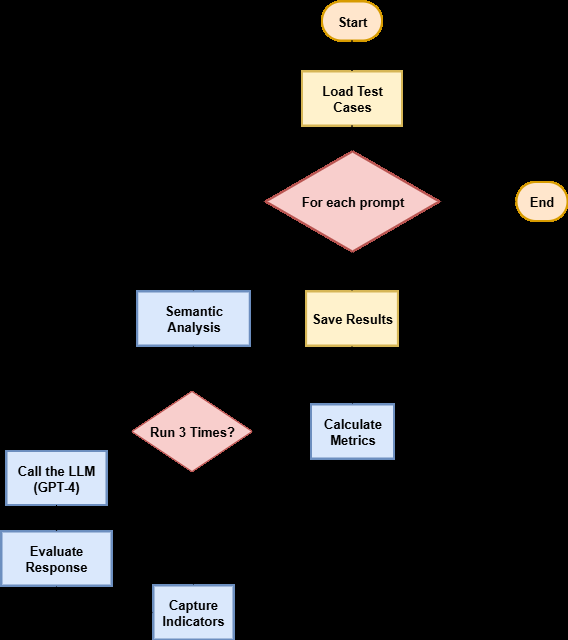

Training or model development details are not described as DIRF targets policy and system-level controls rather than model architectures. However, the evaluation uses the GPT-4 language model on OpenAI API to simulate agentic AI behaviors under threat scenarios. Each test prompt is executed independently three times to enable statistical consistency measures.

The multi-stage evaluation protocol processes each prompt through semantic threat profiling using SentenceTransformer embeddings to calculate similarity scores to known malicious patterns combined with keyword detection for cloning, royalty, memory drift, and traceability risk indicators. Responses are assessed using a rule-based classification for verdict (Executed, Blocked, Warning) and flagged for violations of clone detection, consent, traceability, or royalty acknowledgement.

Metrics calculated include:

- Clone Detection Rate (CDR): percent of unauthorized clones correctly flagged

- Consent Enforcement Accuracy (CEA): percent compliance blocking of unconsented actions

- Memory Drift Score (MDS): quantifies semantic deviation over repeated prompt runs

- Royalty Compliance Rate (RCR): percentage of monetized uses triggering royalty acknowledgment

- Traceability Index (TI): completeness of audit metadata tracking identity usage

Results are aggregated and correlated with scenario-specific pass/fail thresholds, allowing quantitative evaluation of DIRF enforcement effectiveness. The evaluation framework is illustrated in Figure 4 and 5, highlighting automated processing from semantic risk assessment through final metric computation.

Three concrete use cases demonstrate DIRF controls in practice: voice cloning on ad platforms ensuring consent and royalties, behavioral memory misuse in digital assistants with auditing and user controls, and unauthorized avatar marketplaces using clone classification and licensing disclosures.

The authors do not report releasing code or datasets publicly but provide detailed tables (Tables 1-9) mapping controls to enforcement types, tactics, and MAESTRO layers to facilitate adoption. The evaluation’s reliance on GPT-4 as a test model anchors results in a current high-capability LLM but limits generalization to other architectures or platforms. No adversarial robustness tests or real-world deployment trials are described, leaving reproducibility dependent on access to similar LLM APIs and custom test prompts.

Overall, the methodology thoroughly defines a governance framework integrated with lifecycle technical controls, validated against simulated threat scenarios through automated multi-run evaluation with interpretable compliance metrics.

Technical innovations

- A unified Digital Identity Rights Framework (DIRF) defining 63 enforceable controls across nine domains specifically targeting AI-generated digital identity exploitation.

- Layered architectural design integrating legal, technical, and hybrid enforcement mechanisms mapped to AI system lifecycle stages for comprehensive identity governance.

- Semantic threat profiling combining SentenceTransformer embeddings with keyword-based risk quantification to assess digital identity threat levels pre-evaluation.

- Automated multi-run prompt execution with semantic similarity-based Memory Drift Score (MDS) quantifying AI model behavioral consistency under identity-related attack scenarios.

- Operationalization of royalty enforcement controls linked to clone usage events, enabling traceable monetization compliance within agentic AI systems.

Datasets

- Custom DIRF test prompt sets — size unspecified — proprietary JSON repository of categorized prompts for 5 identity threat scenarios

Baselines vs proposed

- No explicit baseline models compared; evaluation conducted on GPT-4 responses with DIRF compliance metrics as primary assessment.

- Clone Detection Rate, Consent Enforcement Accuracy, Memory Drift Score, Royalty Compliance Rate, and Traceability Index quantified effectiveness of DIRF controls on simulated attacks.

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2508.01997.

Fig 1: Overview of AI-Generated Risks to Digital Identities

Fig 2: DIRF Layered Architecture Overview

Fig 3: An overview of DIRF framwork domains.

Fig 4: DIRF Experimental Setup: represents the procedural pipeline used to evaluate LLM responses against

Fig 5: DIRF Threat Evaluation Workflow Details including loading test cases, analyzing semantic risks, executing

Limitations

- Evaluation uses only the GPT-4 model on OpenAI API, limiting generalization across other LLM architectures or generative AI platforms.

- No public release of code or datasets impedes independent reproduction or benchmarking of the DIRF evaluation pipeline.

- Lack of adversarial robustness testing under active evasion attacks or real-world deployment scenarios reduces confidence in operational security guarantees.

- Limited discussion on user experience impact or system overhead introduced by DIRF controls during identity interaction and model invocation.

- No explicit exploration of cross-jurisdictional legal enforcement challenges or integration with varying global data protection regulations.

- Absence of quantitative results detailing exact metric values, thresholds, or ablation studies for individual controls reduces transparency.

Open questions / follow-ons

- How resilient is DIRF against sophisticated adversaries who actively evade detection through adversarial prompt engineering or model poisoning?

- What are the practical integration challenges and performance impacts when adopting DIRF controls in large-scale commercial AI systems or federated environments?

- How can legal frameworks be harmonized internationally to support DIRF’s enforcement mechanisms across different jurisdictions?

- Can real-time enforcement and consent verification be efficiently implemented in low-latency or resource-constrained agentic AI deployments?

Why it matters for bot defense

Bot-defense and CAPTCHA practitioners face growing challenges as generative AI enables sophisticated impersonation and identity spoofing that can bypass traditional authentication and behavioral analytics. DIRF provides a structured governance model and technical-enforcement guidance for detecting unauthorized identity cloning, enforcing consent, maintaining traceability, and governing monetization rights in AI systems. While CAPTCHA primarily focuses on distinguishing humans from bots, the identity risks highlighted by DIRF illustrate the need for multi-layered verification strategies that address impersonation through AI-generated digital likenesses. Practitioners can apply core DIRF concepts, such as real-time clone detection, memory drift monitoring, and audit logging of identity usage, to enhance bot detection systems and defend against AI-driven identity spoofing attacks. Furthermore, the integration of legal and hybrid enforcement controls underscores the importance of combining technical measures with policy and user consent in comprehensive identity protection frameworks. Overall, DIRF’s detailed domain controls may inform future CAPTCHA designs that incorporate identity provenance, multi-modal biometrics, and behavioral consistency verification to resist AI clone-based attacks.

Cite

@article{arxiv2508_01997,

title={ DIRF: A Framework for Digital Identity Protection and Clone Governance in Agentic AI Systems },

author={ Hammad Atta and Muhammad Zeeshan Baig and Yasir Mehmood and Nadeem Shahzad and Ken Huang and Muhammad Aziz Ul Haq and Muhammad Awais and Kamal Ahmed and Anthony Green },

journal={arXiv preprint arXiv:2508.01997},

year={ 2025 },

url={https://arxiv.org/abs/2508.01997}

}