LLM-Assisted Cheating Detection in Korean Language via Keystrokes

Source: arXiv:2507.22956 · Published 2025-07-29 · By Dong Hyun Roh, Rajesh Kumar, An Ngo

TL;DR

This paper tackles a practical but underexplored anti-cheating problem: detecting LLM-assisted writing in Korean from typing behavior rather than submitted text. The motivation is that text-based plagiarism detectors miss paraphrasing and transcription of LLM output, while video proctoring and browser lockdowns are intrusive or bypassable. The authors frame keystrokes as a low-intrusion behavioral signal that can expose how a response was produced, not just what the response says.

What is new is the combination of three dimensions that prior keystroke-cheating work had mostly not combined: Korean-language data, a three-way cheating taxonomy (bona fide, paraphrased ChatGPT response, transcribed ChatGPT response), and explicit control for cognitive load using six Bloom’s taxonomy prompts. They collected a new dataset from 69 Korean university students, built interpretable temporal and rhythmic feature sets, and evaluated MLP/SVM/XGBoost under user-independent splits plus cognition-aware and cognition-unaware settings. The headline result is that the models generally separated transcribed writing best, paraphrasing was the hardest class, temporal features were strongest when train/test cognition matched, and rhythmic features were relatively more robust when cognition shifted across conditions.

Key findings

- Dataset size: 69 university students (33 female, 36 male; mean age ≈23) completed Korean writing tasks under three conditions: bona fide, paraphrasing ChatGPT responses, and transcribing ChatGPT responses.

- Each writing scenario used six prompts, one per Bloom’s taxonomy level: remember, understand, apply, analyze, evaluate, create. Only first-phase data are used in the paper; 50 of the 69 participants also completed a second phase.

- Temporal features outperformed rhythmic features in the cognition-unaware setting; with XGBoost, temporal accuracy rose from about 87% at 30% training data to about 93% at 70%, while rhythmic accuracy stayed mostly in the mid/high-80s.

- Under cognition-unaware evaluation, transcribed responses were easiest to detect: temporal XGBoost reached 100% recall for the transcribed class, while temporal MLP and SVM each reached 99.05%.

- Paraphrased responses were the hardest class across models: in cognition-unaware evaluation, temporal recalls were 86.67% (MLP), 82.86% (XGBoost), and 76.19% (SVM), and rhythmic recalls were lower for every classifier (78.10%, 80.00%, and 71.43% respectively).

- In cognition-aware evaluation, temporal models benefited from high-load/high-load (H→H) training/testing, with XGBoost surpassing 93% accuracy beyond the 60% training mark; rhythmic models were more stable under low-load/low-load (L→L) than H→H.

- The paper states that model performance significantly exceeded human evaluators for distinguishing bona fide and transcribed responses, but the excerpt does not provide the exact human accuracy numbers.

- The authors report 105 user-independent train/test splits: the training proportion varies from 30% to 70% in 2% increments (21 proportions), with 5 random trials per proportion, and accuracy is averaged over the trials at each proportion.
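
The split count in the last finding can be checked with a short sketch. This is an illustration under stated assumptions, not the authors' code: the function names, the seeding scheme, and the participant IDs are hypothetical, and the partition is done over users rather than samples to keep the split user-independent.

```python
import random


def split_schedule(start=30, stop=70, step=2, trials=5):
    """Return (train_percent, trial_index) pairs for every split setting."""
    return [(pct, t) for pct in range(start, stop + 1, step) for t in range(trials)]


def user_independent_split(user_ids, train_percent, seed):
    """Partition users (not samples) so no user appears on both sides."""
    users = sorted(set(user_ids))
    rng = random.Random(seed)  # hypothetical seeding; the summary gives no seeds
    rng.shuffle(users)
    n_train = round(len(users) * train_percent / 100)
    return set(users[:n_train]), set(users[n_train:])


schedule = split_schedule()
users = [f"u{i:02d}" for i in range(69)]  # hypothetical IDs for 69 participants
train_users, test_users = user_independent_split(users, train_percent=70, seed=0)
print(len(schedule), len(train_users), len(test_users))
```

With 21 training proportions and 5 trials each, the schedule has 105 entries, which matches the count reported above.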

Threat model

The adversary is a student using ChatGPT to produce or assist with Korean writing in an academic setting, including both direct transcription of model output and paraphrasing to disguise assistance. The detector sees only keystroke dynamics and task context, not the prompt content beyond the fixed writing prompt, and the evaluation assumes the attacker cannot tamper with the logging system. The attacker can choose between writing unaided, paraphrasing, or transcribing, but cannot evade the in-person collection protocol or the copy-paste restrictions in normal input fields. The study does not model a sophisticated attacker who intentionally mimics bona fide keystroke rhythms over long periods or who changes devices/input methods to evade detection.

Methodology — deep read

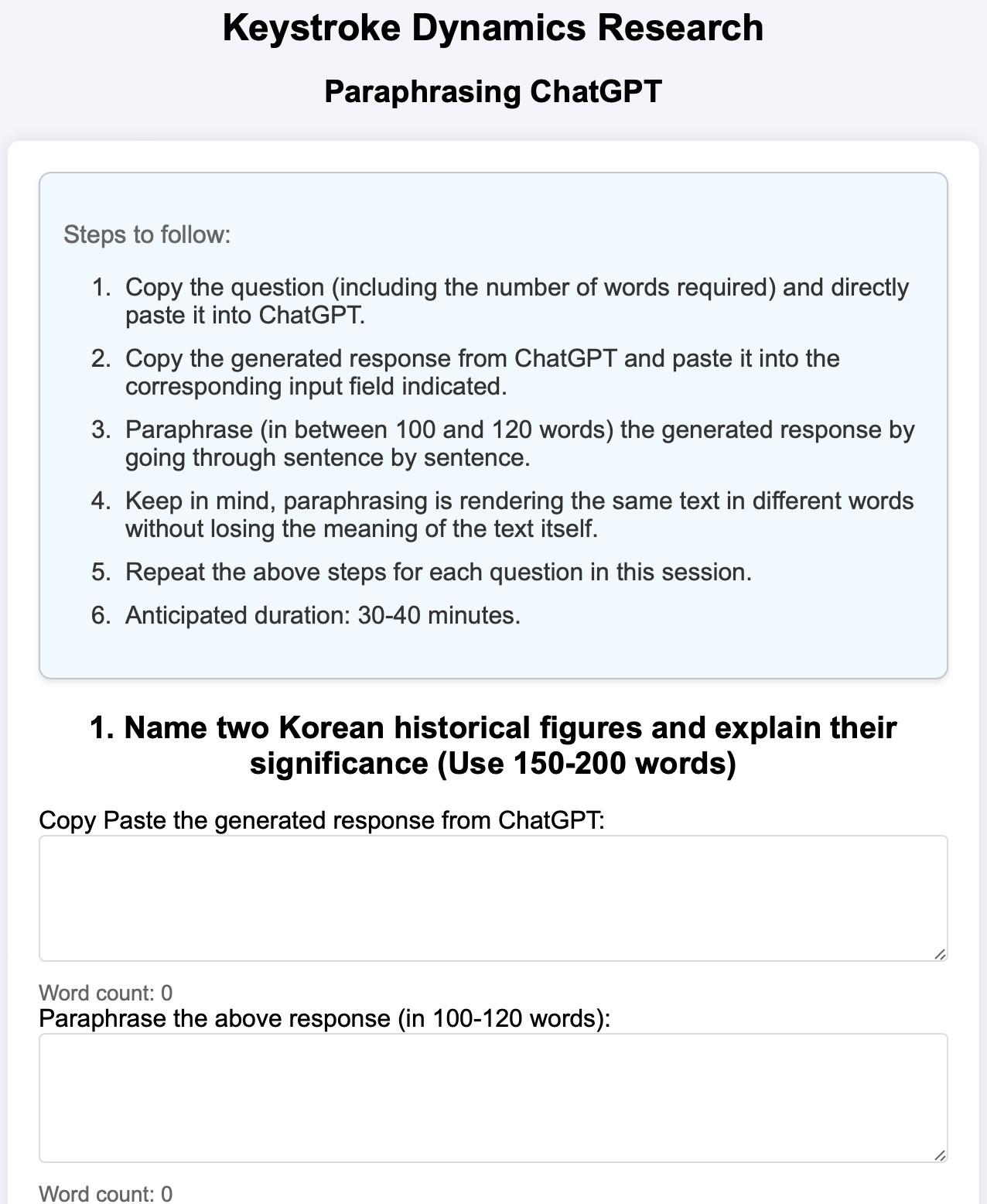

Threat model and experimental framing: the adversary is a student who uses ChatGPT during writing and may either paraphrase the model output or copy it verbatim/transcribe it, with the goal of evading detection in an academic setting. The detector is not trying to recover the prompt or text source; it tries to classify the writing process from keystrokes. The authors explicitly aim for user-independent generalization, so the test users are unseen at training time. They also model a second axis of variability: cognitive load. Their assumption is that typing dynamics contain stable process signatures of planning, revision, and transcription that persist even when the final text is well edited or when the content is Korean. What the attacker cannot do in this setup is alter the keystroke log itself, and the collection environment disables copy-paste in ordinary input fields to prevent easy shortcutting; however, the study does allow pasting into a dedicated field for ChatGPT output during assisted conditions.

Data collection and labels: the dataset was gathered in person under IRB approval from 69 Korean university students recruited through a university community platform. The sample is roughly gender-balanced (33 female, 36 male), with a mean age of about 23. Each participant completed writing tasks in three conditions: bona fide writing without external assistance, paraphrasing ChatGPT-generated Korean responses, and transcribing ChatGPT-generated Korean responses word-for-word. For each condition, there were six prompts corresponding to Bloom’s taxonomy levels. The prompts are described in Table 1 and are deliberately general, culturally relevant questions for Korean university students to reduce topic-specific confounds. Participants typed responses on a custom web portal that logged KeyType, KeyEvent (Down/Up), and timestamps. In paraphrase/transcribe sessions, a second input area let participants paste the ChatGPT answer they had obtained and reference it while producing the final response. The authors also recorded user text and ChatGPT text so they could validate adherence to the assigned condition. The paper says the full dataset and code are public in a GitHub repository, but the excerpt does not give the dataset size in terms of individual keystrokes or total sessions beyond the participant counts and task structure. A second phase with different questions was run for 50 participants, but the paper uses only first-phase data here to emphasize user-independent evaluation; the second phase is reserved for future user-dependent work.

Preprocessing and feature engineering: the raw log stream required several cleanup steps before feature extraction. First, they removed rare but systematic "Unidentified" key events that consistently followed a CapsLock Down event; participants pressed CapsLock to switch into English input for loanwords. Second, they normalized prolonged key holds, such as a held Backspace or Arrow key that logs repeated KeyDown events followed by a single KeyUp, by retaining only the first KeyDown and the final KeyUp, which preserves hold duration and interval structure. Third, they corrected base-character versus Shift-modified-character misclassifications that occurred when Shift was pressed during a key press sequence; Table 2 shows an example where a base consonant could be logged as its doubled/shifted form or vice versa, and they fixed these by inspecting context around Shift sequences. This matters because Korean input method behavior can create noisy logs that would otherwise contaminate hold-time and interval features.
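
The second cleanup step (collapsing repeated KeyDown events from a held key) might look roughly like the sketch below. It assumes a hypothetical log format of `(key, event, timestamp_ms)` tuples; the function name and the sample log are illustrative and not taken from the paper's code.

```python
def collapse_key_repeats(events):
    """Drop auto-repeat KeyDown events emitted while a key is held.

    `events` is a time-ordered list of (key, event, timestamp_ms) tuples,
    where event is "Down" or "Up" (an assumed log format). For each hold,
    only the first Down and the closing Up are kept, so the hold duration
    is preserved.
    """
    held = set()  # keys currently pressed
    cleaned = []
    for key, event, ts in events:
        if event == "Down":
            if key in held:
                continue  # repeated Down while still held: skip it
            held.add(key)
        elif event == "Up":
            held.discard(key)
        cleaned.append((key, event, ts))
    return cleaned


# Hypothetical log: Backspace held for 600 ms, then one ordinary key press.
log = [
    ("Backspace", "Down", 1000),
    ("Backspace", "Down", 1500),
    ("Backspace", "Down", 1533),
    ("Backspace", "Up", 1600),
    ("r", "Down", 1800),
    ("r", "Up", 1890),
]
cleaned = collapse_key_repeats(log)
```

After this pass the Backspace hold is a single Down/Up pair spanning 1000 to 1600 ms, so its hold time is computed once instead of being split by the auto-repeat events.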

Architecture / algorithm: the authors intentionally stayed with interpretable, hand-crafted features rather than end-to-end sequence models. They extracted two families of features. Temporal features consisted of key hold times (KHT) and key interval times (KIT). For each user/task pair they computed a fixed-length vector from these timing distributions using five summary statistics: first quartile, median, third quartile, mean, and standard deviation. They also removed implausibly short durations for Backspace/Arrow keys (0–2 ms) and filtered feature-level outliers using the 0.5th and 99.5th percentiles; missing values were imputed with feature-wise medians. Rhythmic features were designed to capture higher-level writing process structure: pause bins, P-bursts, R-bursts, delete bursts, inter-word pauses, inter-sentence pauses, and pause-before-delete. Unlike prior work that used only coarse pause thresholds, they binned pause durations in 300 ms intervals from 3–300 ms up to 2400+ ms, aiming to represent fine-grained pause dynamics and reduce mimicry. For each rhythmic feature list, they computed seven statistics: mean, standard deviation, total duration, count, median, first quartile, and third quartile, and added entropy for pause durations and P-burst lengths, yielding 107 rhythmic features total. The novel point is not a new neural architecture but a carefully designed feature space that tries to separate motor execution from cognitive planning/revision.
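
As a rough illustration of the temporal feature family, the sketch below derives KHT and KIT from a cleaned log and summarizes each with the five statistics named above. Several details are assumptions rather than facts from the paper: the `(key, event, timestamp_ms)` log format, the definition of KIT as release-to-next-press, pooling across all keys, and the quartile interpolation method.

```python
import statistics


def hold_and_interval_times(events):
    """Compute key hold times (KHT) and key interval times (KIT) in ms.

    `events` is a cleaned, time-ordered list of (key, event, timestamp_ms).
    KIT here is the gap from one key's release to the next key's press,
    an assumed definition that can be negative when presses overlap.
    """
    down_at = {}
    presses = []  # (down_ts, up_ts) for each completed key press
    for key, event, ts in events:
        if event == "Down":
            down_at[key] = ts
        elif event == "Up" and key in down_at:
            presses.append((down_at.pop(key), ts))
    presses.sort()
    kht = [up - down for down, up in presses]
    kit = [nxt[0] - cur[1] for cur, nxt in zip(presses, presses[1:])]
    return kht, kit


def five_stats(values):
    """First quartile, median, third quartile, mean, standard deviation."""
    q1, med, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return [q1, med, q3, statistics.fmean(values), statistics.stdev(values)]


# Hypothetical cleaned log of five key presses.
events = [
    ("a", "Down", 0), ("a", "Up", 100),
    ("b", "Down", 150), ("b", "Up", 230),
    ("c", "Down", 300), ("c", "Up", 420),
    ("d", "Down", 500), ("d", "Up", 560),
    ("e", "Down", 600), ("e", "Up", 700),
]
kht, kit = hold_and_interval_times(events)
vector = five_stats(kht) + five_stats(kit)  # fixed-length temporal summary
```

The summary does not say whether the statistics are computed per key, per key pair, or pooled, so the 10-element vector here only shows the shape of the computation, not the paper's actual feature count.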

Training regime and evaluation: the paper evaluates three classifiers—MLP, SVM, and XGBoost—chosen based on prior keystroke literature. Hyperparameters were optimized with a genetic algorithm, where each candidate configuration was assessed using 5-fold stratified cross-validation and accuracy as the fitness score. Feature selection was done separately for each train/test split using mutual information on the training set, keeping the top 50% of features and applying the same subset to the test set. The evaluation is explicitly user-independent: train and test users do not overlap. To probe robustness, the authors generated 105 train/test splits by varying train size from 30% to 70% in 2% increments and repeating five random trials at each proportion. They report accuracy, recall, and confusion matrices. They also run two cognition settings. In Cognition-Unaware mode, all prompts are pooled per user, ignoring Bloom level. In Cognition-Aware mode, prompts are split into low-load (questions 1–3) and high-load (questions 4–6), and they evaluate H→H, H→L, L→H, and L→L combinations; the excerpted results focus mainly on H→H and L→L. A concrete example of the workflow is: a participant answers the "Analyze" prompt, either on their own or by paraphrasing/transcribing a ChatGPT Korean answer; the keystroke log is cleaned, KHT/KIT and rhythmic summaries are computed for that question, features are selected on the training partition only, and the resulting vector is fed to XGBoost/MLP/SVM to predict bona fide vs paraphrase vs transcription.
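
The cognition-aware settings can be sketched as a filter over samples by user and by prompt load. This is a minimal illustration with hypothetical sample records and function names; it assumes the prompts are numbered 1 to 6 in Bloom order, which is how the low-load and high-load groups are described above.

```python
from itertools import product

# Assumption: prompts are numbered 1-6 in Bloom order, so questions 1-3
# form the low-load group and questions 4-6 the high-load group.
LOW_LOAD = {1, 2, 3}
HIGH_LOAD = {4, 5, 6}


def load_level(question_no):
    """Map a prompt number to 'L' (low load) or 'H' (high load)."""
    if question_no in LOW_LOAD:
        return "L"
    if question_no in HIGH_LOAD:
        return "H"
    raise ValueError(f"unknown question number: {question_no}")


def cognition_aware_split(samples, train_users, test_users, train_load, test_load):
    """Select training and test samples for one train-load/test-load setting.

    Users are kept disjoint by the caller, so the split stays user-independent.
    """
    train = [s for s in samples
             if s["user"] in train_users and load_level(s["question"]) == train_load]
    test = [s for s in samples
            if s["user"] in test_users and load_level(s["question"]) == test_load]
    return train, test


# The four settings: H->H, H->L, L->H, L->L.
SETTINGS = list(product("HL", repeat=2))

# Hypothetical records: 4 users x 6 prompts x 3 writing conditions.
CONDITIONS = ["bona_fide", "paraphrased", "transcribed"]
samples = [
    {"user": u, "question": q, "label": c}
    for u in ["u1", "u2", "u3", "u4"]
    for q in range(1, 7)
    for c in CONDITIONS
]
train, test = cognition_aware_split(samples, {"u1", "u2", "u3"}, {"u4"}, "H", "L")
```

In the cognition-unaware setting the load filter would simply be dropped, so each user's six prompts are pooled regardless of Bloom level.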

Reproducibility and reporting: the authors claim the code and dataset are publicly available on GitHub. The paper provides enough procedural detail to reproduce the data pipeline at a high level, including the logging fields, feature definitions, split strategy, and tuning method. However, some implementation details are still underspecified in the excerpt: exact MLP/SVM/XGBoost hyperparameter ranges, genetic algorithm settings, random seeds, and whether class balancing was used are not provided here. The excerpt also does not report significance tests for the model-vs-human comparison, even though it says the model significantly outperformed humans. The final confusion matrices shown in Figs. 3 and 5 are aggregated from the five 70/30 splits, which is useful but means the full uncertainty across all 105 split settings is not reflected in the displayed matrices.

Technical innovations

- A Korean-language keystroke dataset for LLM-assisted cheating detection, extending the literature beyond the English-only settings of prior work.

- A three-way cheating taxonomy that separates bona fide writing from ChatGPT paraphrasing and ChatGPT transcription, rather than treating LLM assistance as a binary label.

- A cognition-aware evaluation design grounded in Bloom’s taxonomy, with low-load versus high-load prompt groupings and train/test cognition-matched versus cognition-shifted settings.

- A rhythmic feature representation with 300 ms pause bins plus entropy over pause and burst lengths, intended to capture planning/revision structure more finely than coarse pause thresholds used in earlier work.

- A fully user-independent evaluation protocol over 105 random train/test splits, aimed at testing cross-user generalization rather than memorization of individual typing styles.
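
The pause-bin and entropy idea from the rhythmic feature representation listed above can be sketched as follows. The bin edges, the 3 ms lower cutoff, and the choice to compute entropy over bin occupancy are assumptions made for illustration; the summary does not specify how the paper computes entropy or handles bin boundaries.

```python
import math

BIN_WIDTH_MS = 300    # bin width stated in the summary
LAST_EDGE_MS = 2400   # pauses at or above this go to the open-ended last bin
MIN_PAUSE_MS = 3      # assumed lower cutoff; shorter gaps are ignored


def pause_bin(pause_ms):
    """Return the bin index for a pause, or None if it is below the cutoff."""
    if pause_ms < MIN_PAUSE_MS:
        return None
    if pause_ms >= LAST_EDGE_MS:
        return LAST_EDGE_MS // BIN_WIDTH_MS
    return int(pause_ms // BIN_WIDTH_MS)


def bin_pauses(pauses_ms):
    """Group pause durations into 300 ms bins plus one 2400+ ms bin."""
    n_bins = LAST_EDGE_MS // BIN_WIDTH_MS + 1
    bins = [[] for _ in range(n_bins)]
    for p in pauses_ms:
        idx = pause_bin(p)
        if idx is not None:
            bins[idx].append(p)
    return bins


def shannon_entropy(counts):
    """Shannon entropy (bits) of a histogram given as a list of counts."""
    total = sum(counts)
    if total == 0:
        return 0.0
    probs = [c / total for c in counts if c > 0]
    return -sum(p * math.log2(p) for p in probs)


# Hypothetical pauses in milliseconds.
pauses = [120, 250, 310, 900, 2500, 2600, 1]
bins = bin_pauses(pauses)
counts = [len(b) for b in bins]
entropy = shannon_entropy(counts)
```

Each bin's list of durations could then be summarized with the per-feature statistics described in the methodology, while the entropy gives one number for how evenly pauses spread across bins.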

Datasets

- Korean LLM-assisted cheating keystroke dataset — 69 participants; first phase used in paper, second phase collected from 50 participants — collected in person by the authors; public GitHub repository linked in paper

- ChatGPT-generated reference responses — paired with participant responses across paraphrasing/transcribing conditions — generated during the experiment from the same six Bloom-taxonomy prompts

Baselines vs proposed

- Temporal XGBoost vs Temporal MLP/SVM (cognition-unaware): accuracy ≈93% at 70% train for XGBoost vs ≈90% for MLP and ≈89% for SVM

- Rhythmic XGBoost vs Rhythmic MLP/SVM (cognition-unaware): accuracy ≈89% at 70% train for XGBoost vs ≈87-88% for MLP/SVM

- Temporal XGBoost (cognition-unaware): transcribed recall = 100.0% vs Temporal MLP = 99.05% and Temporal SVM = 99.05%

- Temporal MLP/SVM/XGB (cognition-unaware): paraphrased recall = 86.67% / 76.19% / 82.86%

- Rhythmic MLP/SVM/XGB (cognition-unaware): paraphrased recall = 78.10% / 71.43% / 80.00%

- Temporal XGBoost (cognition-aware H→H): accuracy surpasses 93% beyond 60% training, outperforming L→L in the same model family

- Rhythmic models (cognition-aware): L→L outperforms H→H across classifiers, but the excerpt does not provide a single unified accuracy number for all cases

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2507.22956.

Fig 1: Overview of the data collection setup.

Fig 2 (page 3).

Limitations

- The excerpt does not provide exact human-evaluator accuracy numbers, only that the models significantly outperformed humans.

- Only first-phase data are used in the reported experiments; the second phase is reserved for future user-dependent evaluation.

- The paper emphasizes user-independent evaluation, but it does not test cross-device, cross-session, or longitudinal drift, so stability over time remains unknown.

- Although the authors argue rhythmic features generalize better under cross-cognition conditions, the gains are described qualitatively and some settings are noisy; no statistical test for the interaction is shown in the excerpt.

- The study is limited to Korean university students and Korean input behavior, so generalization to other age groups, educational contexts, keyboards, or languages is unproven.

- The methodology relies on prompt-controlled ChatGPT outputs and in-person collection, which is good for validity but may not reflect fully natural cheating behavior in uncontrolled environments.

Open questions / follow-ons

- Would a hybrid model combining text features with keystrokes materially improve paraphrase detection, especially in the hardest bona fide-vs-paraphrase boundary?

- How well do these feature sets transfer across keyboards, operating systems, input methods, or remote/proctored testing environments?

- Can a model trained on one set of Bloom-taxonomy prompts generalize to unseen prompts with different topical or rhetorical demands?

- Would longitudinal user-specific calibration improve performance enough to justify a two-stage deployment (general model plus per-user adaptation)?

Why it matters for bot defense

For bot-defense and CAPTCHA practitioners, the key takeaway is that process signals can carry more information than final text alone. This paper shows that even when the output text is human-authored or human-edited, the typing process can reveal assistance patterns, especially for linear transcription versus more effortful paraphrasing. That suggests a useful design pattern: if you are building abuse detection around user-generated text, consider adding lightweight behavioral telemetry rather than relying exclusively on semantic or textual similarity checks.

The work also warns that the strongest signal is not a single pause threshold or a one-size-fits-all model. Performance depends on the cognitive context of the task, and the same feature family can behave differently when the writing prompt is easier or harder. In practice, that means a deployed detector should be calibrated per task type, language, and input modality, and should be evaluated against subtle evasion behaviors like paraphrasing, not just obvious copy-paste. For CAPTCHA-like defenses, the broader lesson is that human effort patterns are measurable, but they are not universal; they vary with task difficulty and user population, so robust systems need explicit distribution-shift testing before deployment.

Cite

@article{arxiv2507_22956,
  title={LLM-Assisted Cheating Detection in Korean Language via Keystrokes},
  author={Dong Hyun Roh and Rajesh Kumar and An Ngo},
  journal={arXiv preprint arXiv:2507.22956},
  year={2025},
  url={https://arxiv.org/abs/2507.22956}
}