MFAz: Historical Access Based Multi-Factor Authorization

Source: arXiv:2507.16060 · Published 2025-07-21 · By Eyasu Getahun Chekole, Howard Halim, Jianying Zhou

TL;DR

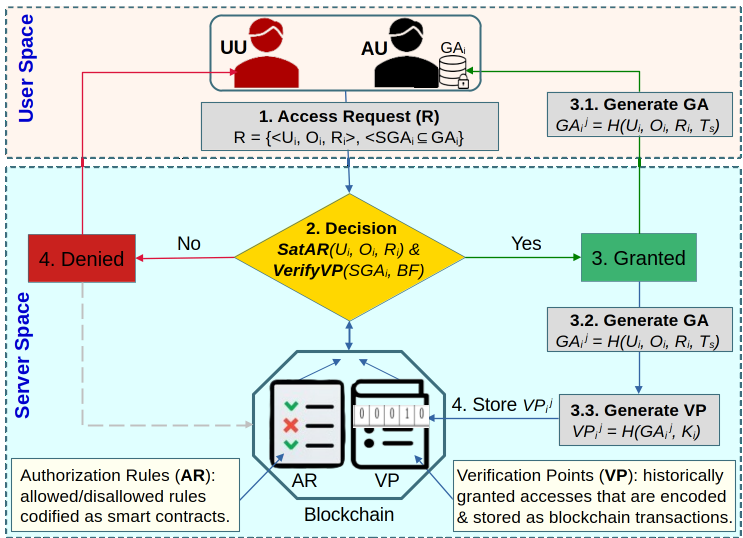

MFAz tackles a gap that standard access control and MFA do not address: once a session is already established, an attacker who steals or reuses the session identifier can often bypass the original authorization checks. The paper’s central claim is that authorization itself should be multi-factor, not just authentication, so it introduces a two-stage scheme where a normal access-control rule check is followed by a second factor derived from historically granted accesses and nonces. That second factor is intended to make a stolen session token insufficient on its own.

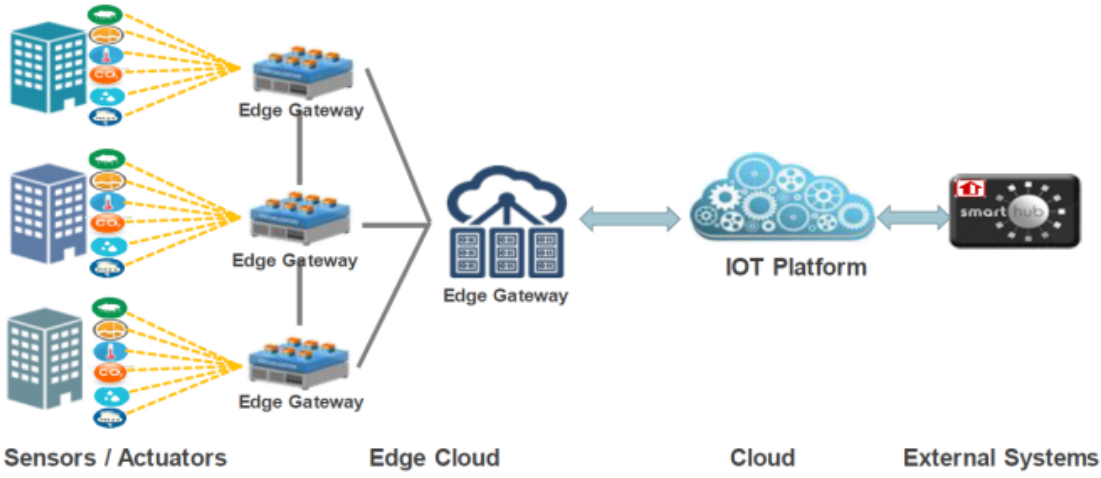

The new pieces are the generation of fine-grained access rules (ARs) plus verification points (VPs) that are derived from past granted accesses, and then the implementation choices to make this practical: VPs are stored and queried with a Bloom filter, while ARs and VPs are persisted through blockchain smart contracts. The evaluation uses an IIoT/smart-city testbed with heterogeneous devices (gateway, PC, Raspberry Pi) to argue that the scheme is both feasible and efficient. The abstract and the available excerpt claim good performance and security properties, but the excerpt is truncated before the numeric results, so no exact figures are quoted here.

Key findings

- The paper formalizes what it calls a multi-factor authorization (MFAz) scheme, and argues it is orthogonal to MFA because the second factor is checked at authorization time, not login time.

- Access is granted only if both the access-control rule check (AR) and the verification-point check (VP) succeed; a stolen SID alone is therefore insufficient under the proposed model.

- Verification points are generated from historically granted accesses plus nonces/timestamps, so the second factor is tied to prior legitimate access history rather than a static secret.

- Bloom filters are used specifically to reduce runtime and storage overhead for VP membership checks, making the scheme plausible on constrained devices such as Raspberry Pi-class nodes.

- Blockchain/smart contracts are used to store ARs and VPs immutably and in a decentralized way, removing a single point of failure for authorization state.

- The evaluation is performed on a smart-city / IIoT testbed with heterogeneous devices, including edge gateways, PCs, and Raspberry Pi (RPI) units, rather than on a purely synthetic benchmark.

- The excerpt provided here does not include the paper’s numeric latency or throughput results, so no exact performance delta can be reliably quoted from the source text available.

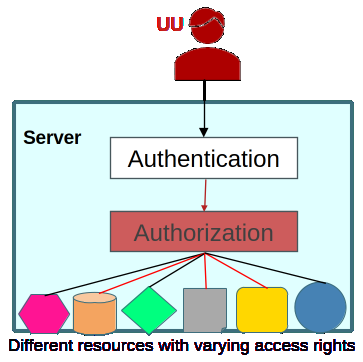

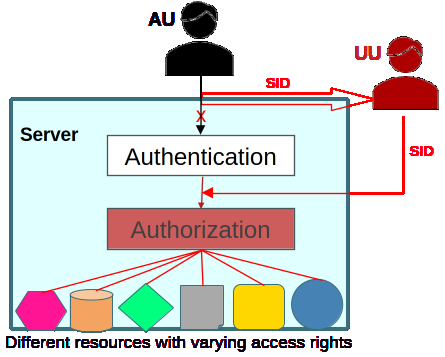

Threat model

The adversary may be either a conventional unauthorized user exploiting weak access-control policies or a session hijacker who steals a valid session identifier and replays it after authentication has completed. The attacker is assumed unable to read the legitimate user’s locally stored historical granted accesses, unable to sniff the protected channel, and unable to tamper with the blockchain-backed AR/VP state. The attacker may, however, use session fixation, XSS, brute forcing, or similar methods to obtain the SID, and may try to terminate the user’s real session to avoid concurrent-use detection.

Methodology — deep read

Threat model: the paper considers two classes of adversaries. First, conventional unauthorized users who exploit weak or misconfigured access-control policies to bypass authorization. Second, session-hijacking attackers who obtain a valid session identifier after authentication and then replay it to impersonate the legitimate user. The authors explicitly assume the attacker cannot read the historical granted-access tokens (GAs) stored on the legitimate user’s device, and cannot sniff the authenticated channel because communication is protected by SSL/TLS. They do allow the attacker to steal the SID through techniques like session fixation, XSS, or brute forcing, and even to terminate the real user’s session to avoid dual-use suspicion. In other words, the model is aimed at post-authentication takeover, but not at a fully compromised endpoint or a compromised blockchain ledger.

Data / state used by the scheme: there is no public dataset in the ML sense. Instead, the scheme uses per-user historical access state. During enrollment, the server provisions a user’s long-term key Ki and then bootstraps dummy historical granted accesses and verification points so the user has an initial history to reference. A historical granted access GAj_i is formed from user attributes Ui, operation Oi, resource Ri, and timestamp Ts. The corresponding verification point VPj_i is derived by hashing the GA together with Ki (the text and pseudocode show H(GAj_i, Ki), with SHA-256 named in implementation). The user stores GAs locally on their device; the server stores ARs and VPs on blockchain, with the VP representation also inserted into a Bloom filter for compact membership testing. The text does not describe any train/validation/test split because this is not a learned model.
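The GA and VP construction described above can be sketched in a few lines. This is a minimal illustration, not the paper's C/C++ code: the `GrantedAccess` class, the length-prefixed field encoding, the order in which the GA and Ki are concatenated, and the sample attribute strings are all assumptions made here. Only the tuple (Ui, Oi, Ri, Ts), the form H(GA, Ki), and the use of SHA-256 come from the source.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class GrantedAccess:
    """One historical granted access: (U_i, O_i, R_i, T_s)."""
    user_attrs: str   # U_i, user attributes
    operation: str    # O_i, requested operation
    resource: str     # R_i, target resource
    timestamp: int    # T_s, time of the grant

    def encode(self) -> bytes:
        # Assumed encoding: length-prefix every field so that two different
        # tuples can never serialize to the same byte string.
        out = b""
        for field in (self.user_attrs, self.operation, self.resource, str(self.timestamp)):
            raw = field.encode("utf-8")
            out += len(raw).to_bytes(4, "big") + raw
        return out


def gen_vp(ga: GrantedAccess, key: bytes) -> bytes:
    """VP = H(GA, K_i) with H = SHA-256 (concatenation order is an assumption)."""
    return hashlib.sha256(ga.encode() + key).digest()


# Hypothetical enrollment values for illustration only.
user_key = bytes(range(32))  # stands in for the long-term key K_i
ga = GrantedAccess("role=operator", "read", "sensor/42", 1753056000)
vp = gen_vp(ga, user_key)
```

Because the key enters the hash, a party that knows a GA but not Ki cannot compute the matching VP itself, and changing any field of the GA (including the timestamp) yields an unrelated VP.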

Architecture / algorithm: the authorization pipeline has two gates. First, SatAR(Ui, Oi, Ri) checks whether the requested subject/action/resource combination satisfies the access rules. These ARs are described as attribute-based, fine-grained access-control rules and are stored as smart contracts on-chain. If the ARs pass, the server fetches the latest Bloom filter from the blockchain and runs VerifyVP: it reconstructs a temporary VP from the user-presented SGAi list via GenVP(SGAi, Ki), then checks membership in the Bloom filter. If both checks succeed, access is granted; the server then generates the new GA for this session, derives the new VP, inserts the VP into the Bloom filter, and stores the updated filter back on-chain. The core novelty is that the second factor is not an OTP or biometric but a history-derived authorization token. One concrete end-to-end example: a user requests read access to resource Ri and presents their attribute bundle plus a randomly selected subset SGAi of prior granted-access tokens. The server confirms that the request matches policy, recomputes the expected VP from SGAi and Ki, verifies that this VP exists in the Bloom filter, and only then allows the session to continue and updates the historical state for the next access.
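The two-gate flow can be sketched as follows. This is a simplified, in-memory illustration under several assumptions: ARs are modeled as a plain set of (user, operation, resource) tuples rather than smart contracts, the Bloom filter lives in memory rather than on-chain, GAs are opaque byte strings, and each GA in the presented subset is hashed individually and must be found in the filter (the source does not spell out how a subset is combined). The names `BloomFilter`, `sat_ar`, `gen_vp`, and `authorize` are hypothetical stand-ins for SatAR, GenVP, and VerifyVP.

```python
import hashlib


class BloomFilter:
    """Tiny Bloom filter; m_bits and k_hashes are illustrative values."""

    def __init__(self, m_bits=4096, k_hashes=4):
        self.m, self.k = m_bits, k_hashes
        self.bits = bytearray((m_bits + 7) // 8)

    def _positions(self, item):
        # Derive k bit positions by salting SHA-256 with the hash index.
        for i in range(self.k):
            digest = hashlib.sha256(i.to_bytes(4, "big") + item).digest()
            yield int.from_bytes(digest[:8], "big") % self.m

    def add(self, item):
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def __contains__(self, item):
        return all(self.bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(item))


def gen_vp(ga, key):
    """VP = SHA-256(GA || K_i); the concatenation order is an assumption."""
    return hashlib.sha256(ga + key).digest()


def sat_ar(rules, user, op, resource):
    """Gate 1: does the request satisfy an access rule?"""
    return (user, op, resource) in rules


def authorize(rules, bloom, key, user, op, resource, sga, timestamp):
    """Return the new GA on success, or None if either gate fails."""
    if not sat_ar(rules, user, op, resource):
        return None
    # Gate 2: every presented historical GA must map to a known VP.
    if not sga or not all(gen_vp(ga, key) in bloom for ga in sga):
        return None
    # Record this grant so it can serve as history for later requests.
    new_ga = "|".join([user, op, resource, str(timestamp)]).encode()
    bloom.add(gen_vp(new_ga, key))
    return new_ga


# Enrollment with dummy history, then three requests.
key = b"\x01" * 32
rules = {("operator", "read", "sensor/42")}
bloom = BloomFilter()
history = [b"dummy-ga-0", b"dummy-ga-1"]
for ga in history:
    bloom.add(gen_vp(ga, key))

granted = authorize(rules, bloom, key, "operator", "read", "sensor/42", history[:1], 1753056000)
hijacked = authorize(rules, bloom, key, "operator", "read", "sensor/42", [b"forged-ga"], 1753056001)
denied = authorize(rules, bloom, key, "operator", "write", "sensor/42", history, 1753056002)
```

In this sketch the legitimate request yields a new GA, the request with a forged history token fails the second gate even though it passes the rule check, and the request for an operation outside the rules fails the first gate regardless of history.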

Training regime / implementation: there is no training loop, epoch count, optimizer, or seed strategy because the system is a deterministic security mechanism rather than a learned model. The implementation is in C/C++ using the MIRACL cryptography library, with SHA-256 as the hash function. Bloom filters are preconfigured with a fixed capacity and false-positive rate, though the excerpt says these parameters may be adjusted in the future depending on usage and device capability. The blockchain is prototyped with Ganache as a local testnet. The excerpt does not provide hardware frequencies, memory sizes, or software version pins beyond those components.
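To make the "fixed capacity and false-positive rate" configuration concrete, the standard Bloom filter sizing formulas can be applied: for n expected items and target false-positive rate p, the bit-array size is m = -n ln p / (ln 2)^2 and the number of hash functions is k = (m / n) ln 2. The sketch below uses these textbook formulas; the capacity of 10,000 VPs and the 1% target are illustrative assumptions, not values reported in the excerpt.

```python
import math
from typing import Tuple


def bloom_parameters(capacity: int, fp_rate: float) -> Tuple[int, int]:
    """Return (m_bits, k_hashes) for the given capacity and target FP rate."""
    m_bits = math.ceil(-capacity * math.log(fp_rate) / (math.log(2) ** 2))
    k_hashes = max(1, round(m_bits / capacity * math.log(2)))
    return m_bits, k_hashes


def expected_fp_rate(m_bits: int, k_hashes: int, inserted: int) -> float:
    """Approximate FP rate after `inserted` items: (1 - e^(-k*n/m))^k."""
    return (1.0 - math.exp(-k_hashes * inserted / m_bits)) ** k_hashes


# Illustrative configuration (assumed, not from the paper).
m_bits, k_hashes = bloom_parameters(capacity=10_000, fp_rate=0.01)
storage_kib = m_bits / 8 / 1024
at_capacity = expected_fp_rate(m_bits, k_hashes, 10_000)
overfilled = expected_fp_rate(m_bits, k_hashes, 20_000)
```

The comparison between `at_capacity` and `overfilled` shows why a fixed configuration may need adjusting: because each granted access inserts a new VP, the false-positive rate rises once the number of stored VPs exceeds the capacity the filter was sized for.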

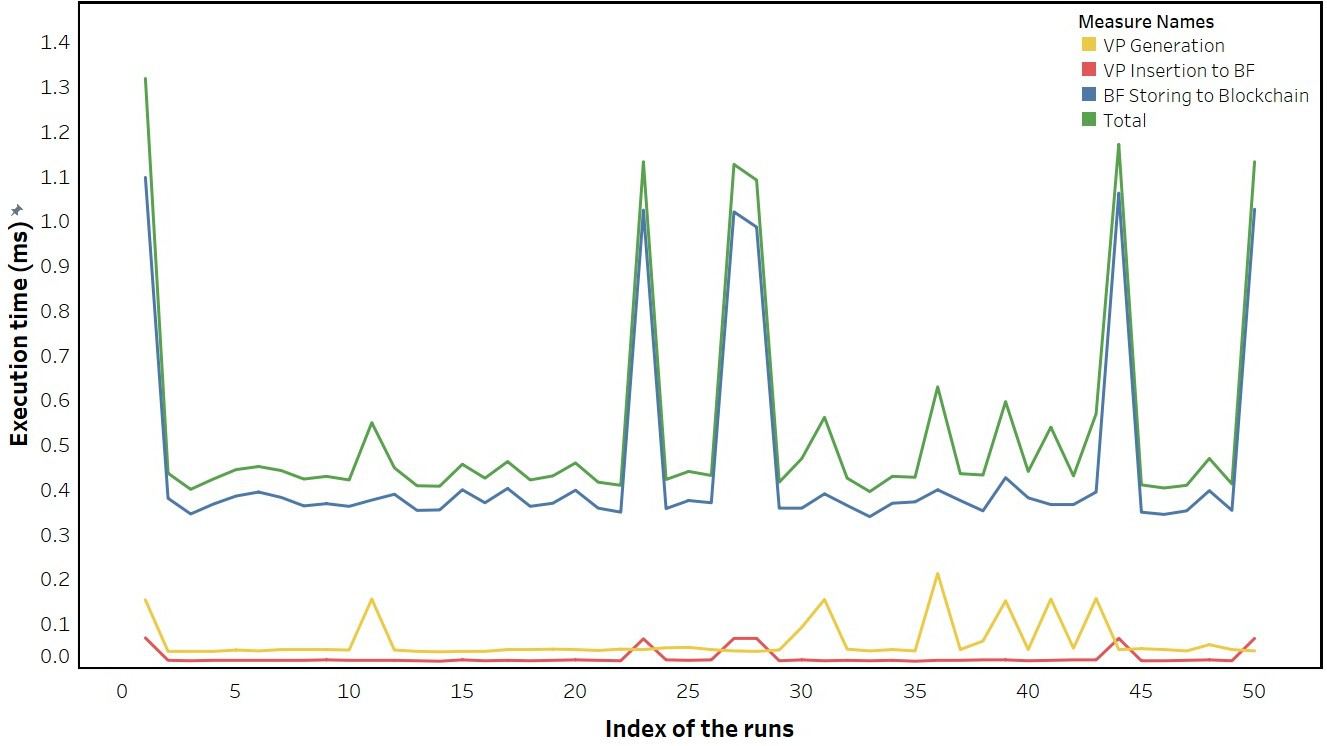

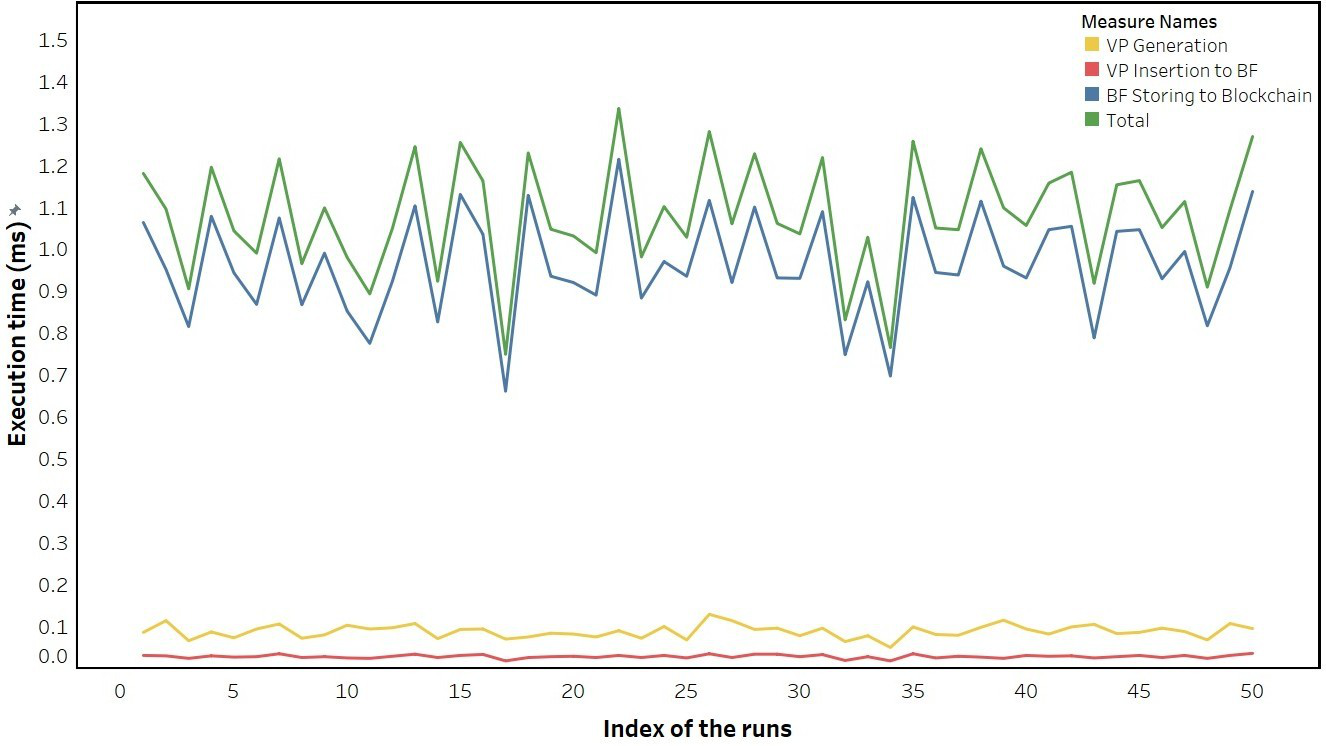

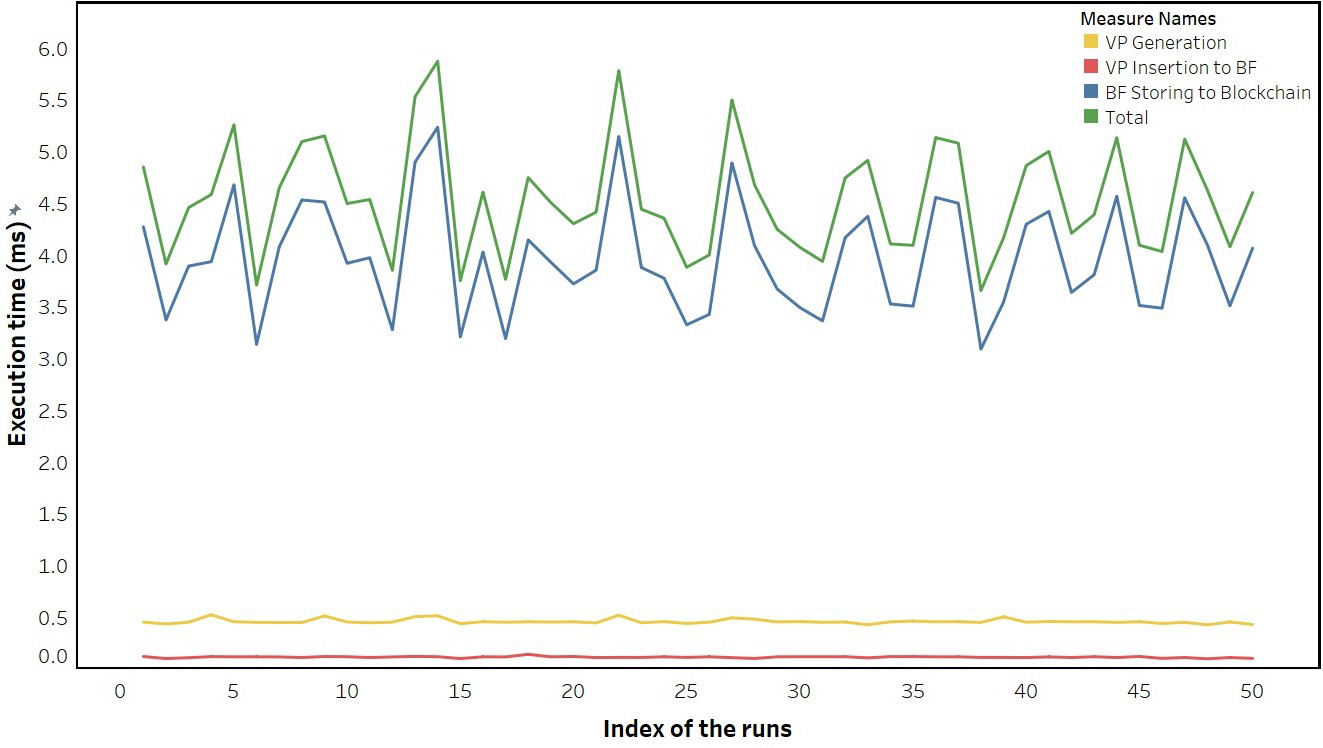

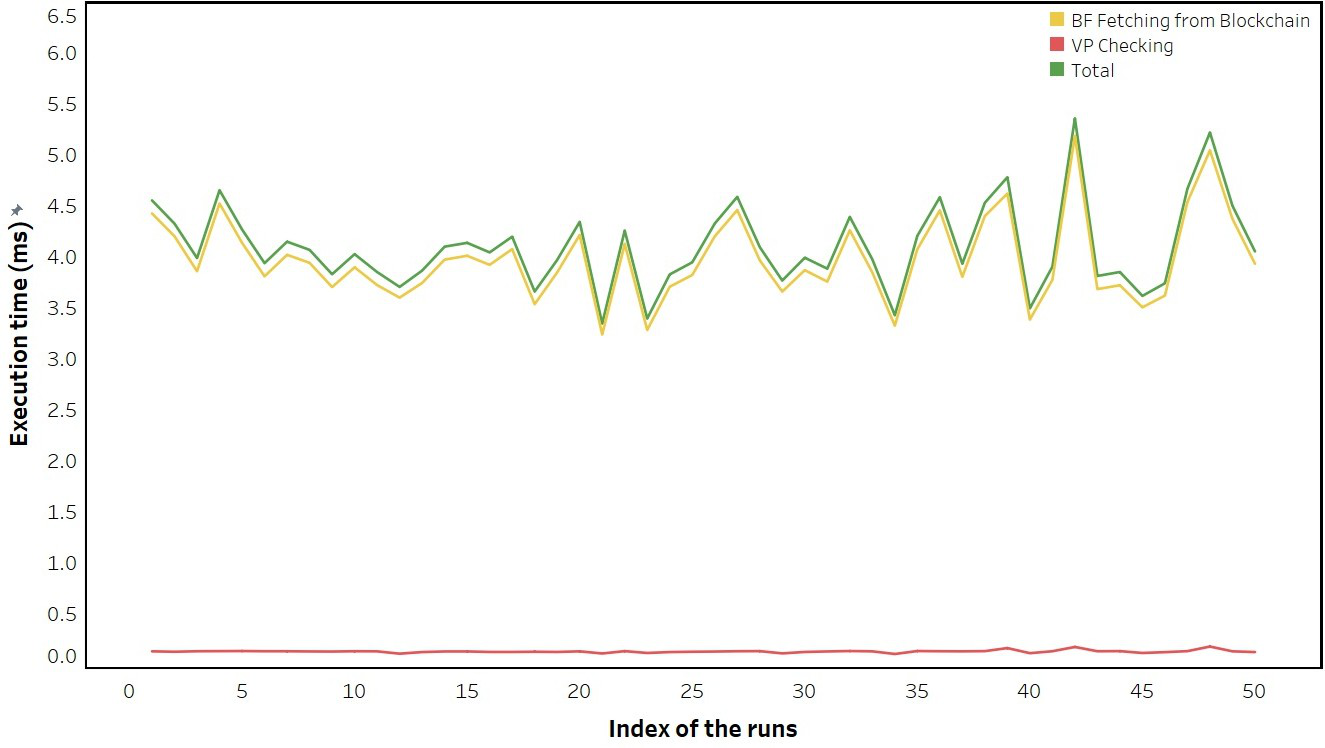

Evaluation protocol: the paper evaluates on an IIoT smart-city testbed with devices of varying compute power, including gateways, PCs, and Raspberry Pi units, and uses common communication protocols such as HTTP/HTTPS, TCP, UDP, and MQTT. Figure captions in the available text indicate separate measurements of VP generation time and VP verification time on the gateway, PC, and RPI, each over 50 runs, so the timings come from repeated runs rather than a single measurement. However, the available excerpt is truncated before the numerical results and before any ablation table, baseline comparison table, or statistical test discussion. The paper clearly measures runtime and storage efficiency, but exact values, confidence intervals, and baseline deltas cannot be stated from the available source text. Reproducibility is partial: the implementation stack is named, but the excerpt does not mention a code release, frozen artifacts, or public datasets.
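A repeated-run timing measurement of the kind the figure captions describe could look like the following. This is a generic harness, not the authors' benchmark code: the function names, the choice of microseconds, and the SHA-256 workload standing in for VP generation are assumptions, and the numbers it produces on any given machine say nothing about the paper's results.

```python
import hashlib
import statistics
import time


def time_runs(fn, runs=50):
    """Call fn() `runs` times and return per-call durations in microseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1e6)
    return samples


def summarize(samples):
    """Basic descriptive statistics for one device/operation pair."""
    return {
        "mean_us": statistics.mean(samples),
        "median_us": statistics.median(samples),
        "stdev_us": statistics.stdev(samples),
        "max_us": max(samples),
    }


# Stand-in workload: one SHA-256 over a GA-sized message plus a 32-byte key.
ga_bytes = b"operator|read|sensor/42|1753056000"
key = b"\x01" * 32

gen_samples = time_runs(lambda: hashlib.sha256(ga_bytes + key).digest())
summary = summarize(gen_samples)
```

Reporting the median and standard deviation alongside the mean helps separate steady-state cost from one-off outliers such as cache warm-up on the first run.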

Technical innovations

- Introduces multi-factor authorization (MFAz) as a distinct security primitive from MFA, with the second factor checked at authorization time rather than authentication time.

- Derives verification points from historically granted accesses plus nonce-like values, making authorization depend on prior legitimate access history rather than a static credential.

- Uses a Bloom filter to compress and verify verification points efficiently, trading controlled false positives for low storage and fast membership checks.

- Uses blockchain smart contracts to store authorization state immutably and decentralize decision support across devices in a distributed environment.

Datasets

- Historical granted-access tokens / verification points — not a public dataset; per-user state generated during enrollment and runtime

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2507.16060.

Fig 1: Threat model: (a) weak access control threats (b) session hijacking threats

Fig 2: An illustration of the authorization process in MFAz

Fig 3: Architecture of the IoT-based smart city testbed

Fig 4: VP generation time of (a) Gateway (b) PC and (c) RPI, for 50 runs

Fig 5: VP verification time of (a) Gateway (b) PC and (c) RPI, for 50 runs

Fig 7 (page 15).

Fig 8 (page 16).

Limitations

- The excerpt does not provide the numeric experimental results, so claims of effectiveness cannot be independently checked from the supplied text.

- Bloom filters introduce false positives; the security impact of false-positive VP matches is not quantified in the excerpt.

- The threat model assumes the attacker cannot access the user’s stored historical granted accesses and cannot compromise the secure channel; a compromised endpoint would likely break those assumptions.

- The design depends on blockchain availability and correctness, but the excerpt does not analyze smart-contract bugs, chain reorganization, or on-chain scalability overhead in depth.

- The scheme is evaluated on a smart-city testbed, but the excerpt does not show cross-domain generalization or stress under large-scale distributed load.

Open questions / follow-ons

- How does MFAz behave under Bloom-filter false positives, especially if an attacker can iteratively probe for a colliding VP?

- What is the end-to-end latency and scalability cost when AR/VP updates must be written through blockchain in a high-throughput environment?

- How robust is the scheme if the user device storing historical granted accesses is partially compromised or cloned?

- Can the historical-access mechanism be adapted to federated or cross-organization authorization without leaking access patterns?
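The first question above can be given a rough scale with a simple probability model. Assume an attacker who holds a stolen SID submits arbitrary forged history tokens, that each forged token passes the Bloom filter check independently with probability p (the filter's false-positive rate), that s tokens must all pass in one request, and that there is no rate limiting or lockout. None of these parameters come from the excerpt; they are illustrative assumptions.

```python
import math


def success_after(probes: int, fp_rate: float, subset_size: int = 1) -> float:
    """P(at least one forged request passes) after `probes` independent tries."""
    per_probe = fp_rate ** subset_size
    return 1.0 - (1.0 - per_probe) ** probes


def probes_needed(fp_rate: float, target: float, subset_size: int = 1) -> int:
    """Smallest number of probes whose success probability reaches `target`."""
    per_probe = fp_rate ** subset_size
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - per_probe))


# Illustrative values: a 1% false-positive rate, 50% attacker success target.
single = probes_needed(fp_rate=0.01, target=0.5, subset_size=1)
triple = probes_needed(fp_rate=0.01, target=0.5, subset_size=3)
```

Under this model, requiring several history tokens per request raises the expected probing cost sharply, since the per-request success probability falls from p to p^s. A lockout after a small number of failed VP checks would bound the attacker's total success probability in the same way.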

Why it matters for bot defense

For bot-defense practitioners, the paper is less about CAPTCHA and more about a broader design pattern: authorization can be made context/history-aware so that a stolen token is not enough. That is relevant anywhere attackers can replay or piggyback on a legitimate session after passing the front-door challenge. In a CAPTCHA context, this suggests pairing challenge completion with a second authorization check that depends on legitimate prior interaction history or device-bound state, rather than treating the challenge result as a one-time proof of humanity.

Operationally, the strongest takeaway is the defensive value of binding post-login actions to an evolving trust state. A bot defense system could apply the same principle to sensitive actions like account recovery, payout, or session continuation: after the initial challenge, require a server-verifiable history token that the attacker would not possess even if they hijack the session cookie. The main caution is complexity and failure modes: Bloom-filter-like compact state and decentralized decision storage can help scale, but they also introduce false positives, state-synchronization issues, and extra latency that matter in real bot-detection pipelines.

Cite

@article{arxiv2507_16060,

title={MFAz: Historical Access Based Multi-Factor Authorization},

author={Eyasu Getahun Chekole and Howard Halim and Jianying Zhou},

journal={arXiv preprint arXiv:2507.16060},

year={2025},

url={https://arxiv.org/abs/2507.16060}

}