Defensive Adversarial CAPTCHA: A Semantics-Driven Framework for Natural Adversarial Example Generation

Source: arXiv:2506.10685 · Published 2025-06-12 · By Xia Du, Xiaoyuan Liu, Jizhe Zhou, Zheng Lin, Chi-man Pun, Cong Wu et al.

TL;DR

This paper addresses the growing vulnerability of traditional CAPTCHA systems to automated attacks that use deep neural networks (DNNs). Existing adversarial CAPTCHA methods typically perturb original images, which limits diversity and image naturalness and rules them out when no original images are available. The authors propose Defensive Adversarial CAPTCHA (DAC), a semantics-driven framework whose input is an attacker-specified semantic prompt rather than a pixel-level perturbation of an existing image. DAC uses Large Language Models (LLMs) to enrich prompt diversity and to guide diffusion-model-based adversarial example generation. The framework supports two settings: white-box targeted attacks, which use paired latent noise optimization and EDICT coupling for robust inversion, and black-box untargeted attacks, handled by an enhanced Bi-Path DAC (BP-DAC) approach that integrates multi-model gradient guidance and bidirectional optimization to improve transferability. Experiments on ImageNet categories across eight DNN classifiers show higher Attack Success Rates (ASR) than existing methods, reaching about 99.4% ASR on unknown black-box models, while producing CAPTCHAs that are reported to be visually indistinguishable from clean images and semantically consistent. Overall, the method expands the space of synthetic adversarial CAPTCHAs beyond those derived from original images, improving robustness against automated breakers while preserving human-usable semantics and naturalness.

Key findings

- DAC achieves an average targeted attack success rate of 81.9% against black-box models, outperforming ten baseline attack methods (best baseline averaged 67.2%) on ImageNet classes (Table III).

- BP-DAC attains near 99.4% ASR against unknown black-box models by integrating gradients from three proxy models and optimizing across targeted and secondary class losses.

- Using LLM-augmented detailed prompts improves semantic accuracy and diversity over using simple labels, reducing generation errors and enriching CAPTCHA complexity.

- Latent pairwise diffusion optimization with EDICT coupling reduces error accumulation and enables stable, high-quality adversarial diffusion sample generation.

- CLIP-guided initialization in BP-DAC improves the naturalness and distribution alignment of generated CAPTCHAs, aiding stealth against human and model detection.

- BP-DAC's bi-path optimization balances losses from target and second-highest class probabilities, helping exploit decision boundary vulnerabilities for better transferability.

- The approach does not depend on any original clean images, overcoming copyright and dataset limitations that constrain traditional adversarial CAPTCHA generation.

Threat model

The adversary is assumed to have either white-box access to the target model (knowledge of its architecture and parameters) for targeted adversarial CAPTCHA generation, or black-box access, where only queries or proxy models are available. The attacker specifies target or undesired categories via semantic input prompts and aims to produce adversarial CAPTCHAs that fool DNN classifiers while remaining natural and human-interpretable. The adversary cannot modify the training dataset or the model training process and, in some scenarios, has no direct access to the original benign CAPTCHA images.

Methodology — deep read

Threat Model and Assumptions: The authors consider two attack settings relevant to CAPTCHA security: white-box targeted attacks, where the adversary knows the target model's details, and black-box untargeted attacks, where the model structure and parameters are unknown. The attacker only generates adversarial CAPTCHA images guided by class prompts, with no involvement in model training and no access to original image data. The goal is to cause model misclassification while preserving human interpretability.

Data: Experiments reference ImageNet's 1000-class taxonomy as the space of semantic categories for CAPTCHA generation and attack targets but do not rely on clean images from ImageNet. No explicit dataset of CAPTCHA images is used since DAC synthesizes examples from semantic prompts. Model architectures used as targets and proxies include ResNet50/152, MobileNetV2, DenseNet161, EfficientNet-B7, InceptionV3, AlexNet, GoogLeNet, and SE-ResNeXt101.

Architecture and Algorithm: DAC uses a diffusion model generator G conditioned on semantic prompts to produce adversarial CAPTCHA images starting from noise. The prompt P is expanded via an LLM to form a rich text prompt P'. Latent variables (x_t, y_t) in the diffusion process are coupled and optimized jointly using EDICT. Gradient guidance from the target model's loss with respect to the latent variables improves generation towards the target adversarial class. Latent pairs are merged dynamically with weighting alpha to yield robust inversion.
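The coupling idea can be illustrated with the mixing step used in EDICT-style coupled diffusion. The following is a minimal NumPy sketch, not the authors' implementation: the function names `mix` and `unmix`, the latent shape, and the value `alpha = 0.93` are assumptions chosen for illustration. It shows why a sequential weighted merge of two latents can be undone exactly, which is the property that makes inversion robust.

```python
import numpy as np

def mix(x, y, alpha):
    """Sequentially blend each latent toward the other (EDICT-style mixing)."""
    x_new = alpha * x + (1.0 - alpha) * y
    y_new = alpha * y + (1.0 - alpha) * x_new  # uses the already-updated x
    return x_new, y_new

def unmix(x_new, y_new, alpha):
    """Exact algebraic inverse of mix(); requires alpha != 0."""
    y = (y_new - (1.0 - alpha) * x_new) / alpha
    x = (x_new - (1.0 - alpha) * y) / alpha
    return x, y

# Hypothetical latent pair: two nearby noise tensors (shape is an assumption).
rng = np.random.default_rng(0)
alpha = 0.93
x0 = rng.standard_normal((4, 8, 8))
y0 = x0 + 0.1 * rng.standard_normal((4, 8, 8))

x1, y1 = mix(x0, y0, alpha)
x_rec, y_rec = unmix(x1, y1, alpha)

print("recovered exactly:", np.allclose(x_rec, x0) and np.allclose(y_rec, y0))
print("gap before:", np.linalg.norm(x0 - y0), "gap after:", np.linalg.norm(x1 - y1))
```

In this sketch the gap between the two latents shrinks by a factor of `alpha**2` per mixing step, so the pair stays aligned while the step remains invertible.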

For black-box attacks, BP-DAC introduces a bi-path optimization strategy integrating predicted class probabilities and gradients from multiple proxy models (f1,f2,f3). It balances loss terms targeting both the main target class and second-highest class to exploit decision boundary weaknesses and improve adversarial transferability. CLIP gradients guide initialization to ensure semantic consistency and naturalness.
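One way to picture the bi-path objective is a loss that lowers the probability of the prompted class while raising the strongest competing class, averaged over the proxy models. The sketch below is an assumption-laden illustration, not the paper's exact loss: the function `bi_path_loss`, the `weight` parameter, and the example logits are all hypothetical.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()

def bi_path_loss(logits_per_proxy, label, weight=0.5):
    """Average over proxies of weight*log p[label] - (1-weight)*log p[runner_up].

    Minimizing this pushes probability away from `label` and toward the
    strongest competing class of each proxy (hypothetical formulation).
    """
    total = 0.0
    for logits in logits_per_proxy:
        p = softmax(np.asarray(logits, dtype=float))
        masked = p.copy()
        masked[label] = -np.inf            # exclude the prompted class
        runner_up = int(np.argmax(masked)) # strongest competing class
        total += weight * np.log(p[label]) - (1.0 - weight) * np.log(p[runner_up])
    return total / len(logits_per_proxy)

# Made-up logits from three proxy models (f1, f2, f3) for a 3-class toy problem.
clean_logits = [[4.0, 2.0, 0.0], [3.5, 2.5, 0.5], [5.0, 1.0, 1.0]]
shifted_logits = [[2.0, 4.0, 0.0], [2.5, 3.5, 0.5], [1.0, 5.0, 1.0]]

loss_clean = bi_path_loss(clean_logits, label=0)
loss_shifted = bi_path_loss(shifted_logits, label=0)
print(loss_clean, loss_shifted)
```

The loss is lower for the shifted logits, where every proxy already prefers the competing class, which is the direction an untargeted attack would want to move in.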

Training Regime: The diffusion model generates images through iterative denoising steps, alternately updating latent variables with gradients computed on model prediction loss. Learning rates for latent variables are tuned (eta_x, eta_y). The process continues until the generated CAPTCHA fools the model or maximum iterations are reached. Experiments run on NVIDIA A40 GPUs with 48 GB memory. Exact hyperparameter settings for epochs and batch sizes are not fully specified.
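The stopping rule and alternating updates described above can be sketched on a toy problem. This is a simplified stand-in, not the paper's pipeline: a random linear softmax classifier `W` replaces the target DNN, the weighted merge of the two latents replaces the decoded image, and `toy_latent_attack` and all parameter values are hypothetical.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()

def toy_latent_attack(W, x, y, target, alpha=0.5, eta_x=1.0, eta_y=1.0, max_iters=500):
    """Alternately update latents x and y until the classifier predicts `target`."""
    x, y = x.copy(), y.copy()
    for step in range(max_iters + 1):
        merged = alpha * x + (1.0 - alpha) * y   # stand-in for the generated image
        probs = softmax(W @ merged)
        if int(np.argmax(probs)) == target:
            return x, y, step, True              # classifier is fooled: stop early
        if step == max_iters:
            break
        onehot = np.zeros_like(probs)
        onehot[target] = 1.0
        # Gradient of cross-entropy(target) with respect to the merged latent.
        grad_merged = W.T @ (probs - onehot)
        if step % 2 == 0:
            x -= eta_x * alpha * grad_merged           # chain rule: d merged / dx = alpha
        else:
            y -= eta_y * (1.0 - alpha) * grad_merged   # chain rule: d merged / dy = 1 - alpha
    return x, y, max_iters, False

rng = np.random.default_rng(0)
W = rng.standard_normal((5, 16)) / 4.0           # toy 5-class linear classifier
x0, y0 = rng.standard_normal(16), rng.standard_normal(16)
target = int(np.argmin(W @ (0.5 * x0 + 0.5 * y0)))  # hardest class to reach initially

x_adv, y_adv, steps, fooled = toy_latent_attack(W, x0, y0, target)
print("fooled:", fooled, "after", steps, "steps")
```

In the real setting each gradient would be backpropagated through the diffusion denoising steps, which is far more expensive than this linear toy.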

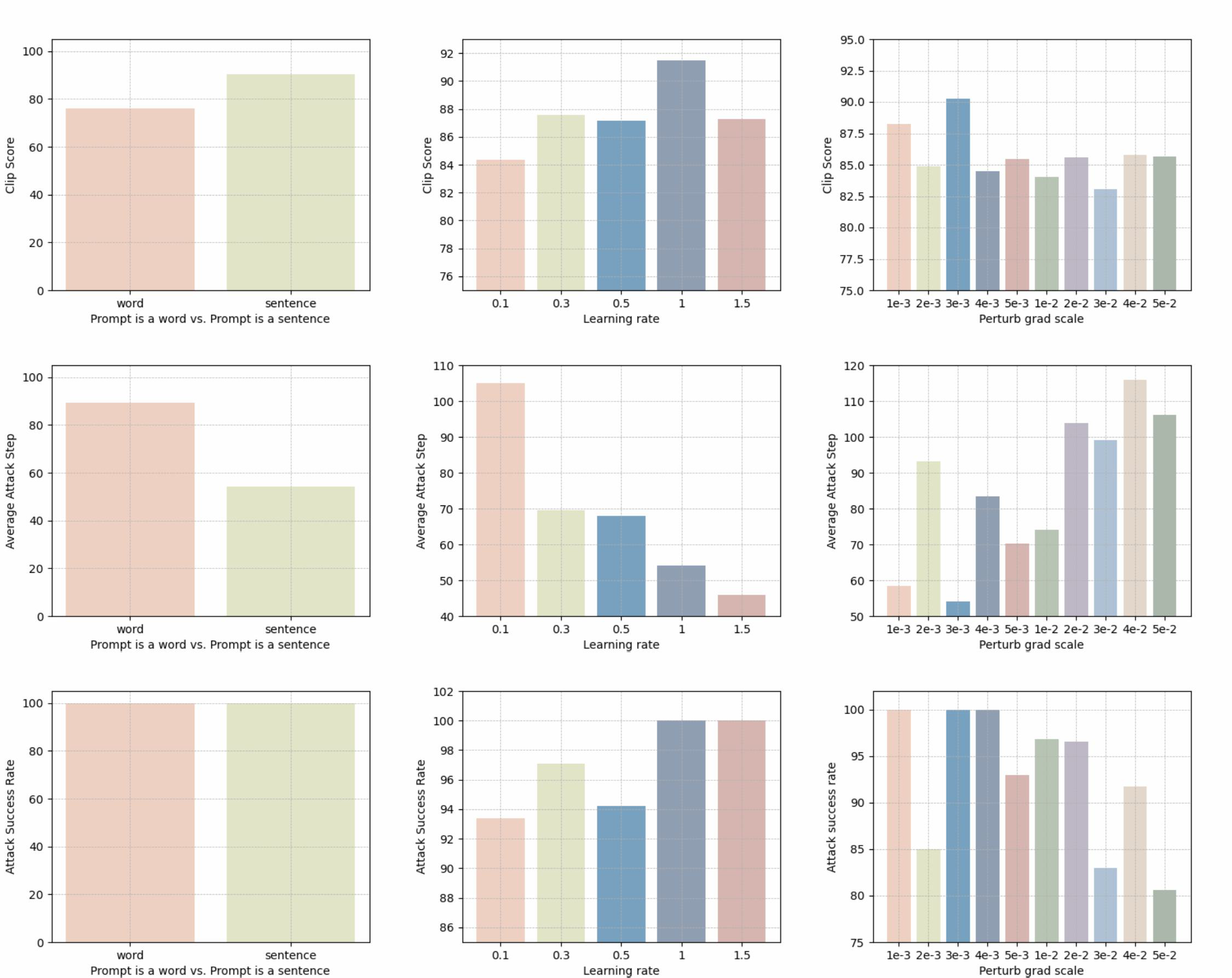

Evaluation Protocol: Attack effectiveness is measured by Attack Success Rate (ASR) against known and unknown models in both white-box and black-box settings. CLIP Score is used to assess semantic alignment and image quality. Baselines include multiple contemporary adversarial attack and CAPTCHA defense methods (e.g., SAE, ADer, ReColorADV, ACA). Ablations study prompt complexity, latent noise coupling, and bi-path optimization components. Transfer attacks test model generalization. Fig. 4 and Table III detail these comparisons.
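For reference, ASR is typically computed as the percentage of generated examples that achieve the attack goal. The sketch below assumes the usual conventions (targeted: prediction equals the target label; untargeted: prediction differs from the true label); the function name and the sample labels are made up for illustration.

```python
import numpy as np

def attack_success_rate(preds, true_labels, target_labels=None):
    """Return ASR in percent.

    Untargeted (target_labels is None): success when the prediction differs
    from the true label. Targeted: success when it equals the target label.
    """
    preds = np.asarray(preds)
    if target_labels is None:
        success = preds != np.asarray(true_labels)
    else:
        success = preds == np.asarray(target_labels)
    return 100.0 * float(success.mean())

# Made-up predictions for four generated CAPTCHAs.
preds = [1, 2, 3, 0]
true_labels = [1, 5, 6, 7]
target_labels = [2, 2, 3, 9]

untargeted_asr = attack_success_rate(preds, true_labels)
targeted_asr = attack_success_rate(preds, true_labels, target_labels)
print(untargeted_asr, targeted_asr)  # 75.0 50.0
```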

Reproducibility: The paper does not explicitly mention public release of code or pretrained models. The use of well-known architectures and ImageNet categories aids replicability. The datasets and proxy models are publicly known, but some core proprietary components such as custom diffusion model checkpoints or LLM prompt templates are not fully disclosed. The paper leaves some implementation details (exact learning rates, total training steps) partially unspecified.

Concrete example: In the white-box targeted attack process (Algorithm 1), given an initial prompt like "A goldfish swims in the bowl", the LLM extends this to a richer description. The diffusion model generates latent variables (x_t, y_t) which are iteratively updated with gradient guidance from the target classifier's loss w.r.t. the target label (e.g., 'goldfish'). EDICT's coupling steps between x_t and y_t reduce error in backpropagation through diffusion. Latents are merged with weighting parameter alpha to refine the current state each step. This produces a final adversarial CAPTCHA image that humans perceive as a realistic goldfish scene but that the target model misclassifies.
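The prompt-expansion step at the start of this example can be sketched as a thin wrapper around any text-generation callable. Everything here is hypothetical: the instruction template, the `expand_prompt` helper, the word limit, and the `fake_llm` stand-in are assumptions, since the paper's actual prompt templates are not disclosed.

```python
from typing import Callable

# Hypothetical instruction template; the paper's real template is not disclosed.
INSTRUCTION = (
    "Rewrite the following image caption as one detailed, photorealistic scene "
    "description. Keep the main object unchanged. Caption: {caption}"
)

def expand_prompt(caption: str, llm: Callable[[str], str], max_words: int = 60) -> str:
    """Ask an LLM for a richer prompt; fall back to the caption if the reply is empty."""
    reply = llm(INSTRUCTION.format(caption=caption)).strip()
    words = reply.split()
    if not words:
        return caption
    return " ".join(words[:max_words])  # keep the prompt within a fixed word budget

def fake_llm(request: str) -> str:
    # Stand-in for a real LLM call so the sketch runs offline.
    return ("A bright orange goldfish glides through clear water in a round "
            "glass bowl on a wooden table.")

rich_prompt = expand_prompt("A goldfish swims in the bowl", fake_llm)
print(rich_prompt)
```

Passing the LLM as a callable keeps the sketch independent of any particular provider or client library.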

Technical innovations

- Introduction of a semantics-driven framework (DAC) that generates adversarial CAPTCHAs directly from attacker-specified semantic prompts using LLM-augmented diffusion models, removing reliance on original image inputs.

- Design of parameter-shared coupled latent noise variable pairs with bidirectional calibration via EDICT during the diffusion process, enabling robust inversion and stable, high-fidelity adversarial example generation.

- Bi-path unsourced adversarial CAPTCHA (BP-DAC) method that integrates multi-model gradients and optimizes jointly on target and second-highest class losses to significantly improve attack success and transferability in black-box scenarios.

- Use of CLIP model gradients to semantically guide the initial latent state in diffusion generation, enhancing visual naturalness and maintaining CAPTCHA stealth against both human inspection and model detection.

Datasets

- ImageNet — reference to 1000 classification categories — publicly available

Baselines vs proposed

- SAE: average targeted attack success rate (ASR) 49.05% vs DAC: 81.9% (Table III)

- ADer: ASR 17.75% vs DAC: 81.9%

- ReColorADV: ASR 39.25% vs DAC: 81.9%

- cAdv: ASR 45.9% vs DAC: 81.9%

- ACA: ASR 67.2% vs DAC: 81.9%

- BP-DAC (black-box): about 99.4% ASR on unknown models, reported as higher than typical black-box methods; the specific black-box baselines are not itemized in this summary.
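The margins implied by the targeted-attack figures above can be computed directly. This short sketch only restates the listed numbers as percentage-point gaps; the variable names are arbitrary.

```python
# Average targeted ASR values (percent) as listed above.
dac_asr = 81.9
baseline_asr = {
    "SAE": 49.05,
    "ADer": 17.75,
    "ReColorADV": 39.25,
    "cAdv": 45.9,
    "ACA": 67.2,
}

# Gap in percentage points between DAC and each baseline.
gaps = {name: round(dac_asr - asr, 2) for name, asr in baseline_asr.items()}

for name, gap in sorted(gaps.items(), key=lambda kv: kv[1]):
    print(f"{name}: DAC is ahead by {gap:.2f} percentage points")
```

The smallest gap is against ACA, the strongest listed baseline, at 14.7 percentage points.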

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2506.10685.

Fig 1: Practical application scenarios of adversarial examples in CAPTCHA

Fig 2: Our proposed bi-path unsourced adversarial CAPTCHA (BP-DAC) attack framework.

Fig 3: The adversarial examples generated by our proposed method.

Fig 4: Attack success rate of transfer attacks based on Resnet50 (left) and

Fig 5: Attack success rate, average attack steps, and Clip Score of different settings.

Fig 6: Comparison plot of adversarial samples generated using detailed prompt versus using labels. It is easy to conclude that a more detailed prompt will

Limitations

- The framework relies on proxy white-box models for gradient guidance in black-box attacks, which may not generalize to all real-world unknown models.

- No explicit adversarial robustness evaluation against adaptive defenders or adversarial training was reported; effectiveness under such defenses is unclear.

- Image quality and human usability were primarily assessed via CLIP Score and visual indistinguishability claims; no extensive user studies quantifying human difficulty or accessibility were presented.

- Details on training hyperparameters and convergence criteria are partly underspecified, which may impact reproducibility and performance consistency.

- The approach is computationally expensive due to iterative diffusion steps and latent pair optimizations, potentially limiting real-time applicability.

- The semantic prompting depends on LLM quality and domain generality; noisy or ambiguous prompts may degrade CAPTCHA generation quality.

Open questions / follow-ons

- How do CAPTCHAs generated by DAC and BP-DAC fare against adaptive solvers, including adversarially trained models and detection-based mitigations?

- Can the approach generalize to multi-modal (e.g., audio, text-based) CAPTCHAs or CAPTCHA variants beyond single-class semantic prompts?

- What are the computational trade-offs and scalability of the diffusion-based framework for high-throughput real-world CAPTCHA generation?

- How might user studies validate human perceptual indistinguishability versus automated detection in large-scale deployment scenarios?

Why it matters for bot defense

From a bot-defense engineering perspective, this paper introduces an approach to adversarial CAPTCHA generation that is semantically guided and does not rely on perturbing existing images. This expands the design space for CAPTCHA challenges, enabling adversarial yet natural-looking tests that are harder for state-of-the-art recognition models to solve. DAC's use of LLMs for semantic richness and diffusion models for natural image synthesis suggests practical ways to improve CAPTCHA diversity and robustness while maintaining human usability. BP-DAC's combination of multi-model gradients and bi-path optimization is a promising basis for testing defenses in the black-box setting, which reflects realistic scenarios where the target model's internals are not accessible. Practitioners should weigh the computational overhead and the currently limited evaluation against adaptive defenses before applying these techniques. Overall, the study shows how advances in generative modeling and adversarial machine learning can reshape CAPTCHA security design, and it warrants further work on practical deployment and robustness evaluation.

Cite

@article{arxiv2506_10685,
  title={Defensive Adversarial CAPTCHA: A Semantics-Driven Framework for Natural Adversarial Example Generation},
  author={Xia Du and Xiaoyuan Liu and Jizhe Zhou and Zheng Lin and Chi-man Pun and Cong Wu and Tao Li and Zhe Chen and Wei Ni and Jun Luo},
  journal={arXiv preprint arXiv:2506.10685},
  year={2025},
  url={https://arxiv.org/abs/2506.10685}
}