Physical Layer-Based Device Fingerprinting for Wireless Security: From Theory to Practice

Source: arXiv:2506.09807 · Published 2025-06-11 · By Junqing Zhang, Francesco Ardizzon, Mattia Piana, Guanxiong Shen, Stefano Tomasin

TL;DR

This paper addresses the problem of secure device authentication in wireless communications, particularly for resource-constrained Internet of Things (IoT) devices where traditional cryptographic methods are often unsuitable due to computational and power limitations. The authors provide a comprehensive survey of physical layer-based device fingerprinting techniques, focusing on two primary classes: hardware impairment-based Radio Frequency Fingerprint Identification (RFFI) and channel-based authentication (CB-authentication). These passive authentication mechanisms leverage intrinsic hardware imperfections and wireless channel characteristics, respectively, enabling lightweight and non-intrusive device identification applicable to legacy IoT devices. The survey covers theoretical modeling, algorithmic design (with emphasis on deep learning advancements), datasets, practical implementation issues, experimental results, and current challenges. The authors also discuss emerging technologies such as reconfigurable intelligent surfaces (RIS) and generative AI that promise to enhance physical-layer authentication. Experimental methodologies and publicly available datasets are reviewed, indicating an increasing transition from theory to practice in this field.

Key findings

- Hardware impairments such as mixer imbalance, oscillator offsets, phase noise, and power amplifier nonlinearities produce unique, stable fingerprints exploitable for device authentication (Section IV).

- Deep learning approaches, including CNNs, RNNs (LSTM, GRU), and Transformers, significantly improve RFFI performance by learning complex signal representations from IQ samples, outperforming traditional manual feature engineering (Section V).

- Closed-set RFFI classification can achieve high accuracy by training a multi-class classifier on balanced datasets of device transmissions (Fig. 2a, Section V-A).

- Open-set recognition extends closed-set classification by detecting rogue devices not seen during training, either by thresholding softmax probabilities or by using OpenMax activations (Section V-B).

- Anomaly detection via autoencoders or binary classifiers treats all legitimate devices as one class, detecting deviations from learned signal representations as potential intrusions (Section V-C).

- Channel-based authentication exploits spatial-temporal properties of wireless multipath channels that vary by device location and environment to verify transmitter legitimacy (Section II, VIII).

- Current public datasets for physical-layer fingerprinting include IQ samples collected via software-defined radios (SDRs) and CSI collected from commercial NICs and embedded devices, e.g., Intel 5300 CSI dataset, Atheros CSI tool, ESP32 CSI tool (Section VI).

- Receiver hardware impairments can affect RFFI accuracy when different receiver devices collect training and testing data, indicating sensitivity to receiver variability (Section IV-B).
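
To make the channel-based finding above concrete, here is a minimal sketch of one way such a check could work: compare a newly estimated channel frequency response (CFR) against a stored reference and accept the transmitter when a normalized distance falls below a threshold. The function names, the distance metric, and the 0.1 threshold are illustrative assumptions, not taken from the survey; practical schemes would tune the test to the noise level and channel variation.

```python
import numpy as np

def cfr_distance(h_ref, h_new):
    """Normalized squared distance between two CFR estimates (hypothetical metric)."""
    h_ref = np.asarray(h_ref, dtype=complex)
    h_new = np.asarray(h_new, dtype=complex)
    # Remove a common phase rotation so an unknown carrier phase
    # is not counted as a channel mismatch.
    phase = np.angle(np.vdot(h_ref, h_new))
    h_aligned = h_new * np.exp(-1j * phase)
    return np.sum(np.abs(h_aligned - h_ref) ** 2) / np.sum(np.abs(h_ref) ** 2)

def authenticate(h_ref, h_new, threshold=0.1):
    """Accept the packet if its CFR is close to the reference (threshold is assumed)."""
    return cfr_distance(h_ref, h_new) < threshold

# Toy usage: a legitimate device seen again through a slightly noisy channel,
# and an attacker at another location with an independent channel.
rng = np.random.default_rng(0)
n_sub = 64  # number of subcarriers, chosen arbitrarily for the demo
h_alice = (rng.standard_normal(n_sub) + 1j * rng.standard_normal(n_sub)) / np.sqrt(2)
noise = 0.05 * (rng.standard_normal(n_sub) + 1j * rng.standard_normal(n_sub))
h_alice_later = h_alice * np.exp(0.7j) + noise
h_eve = (rng.standard_normal(n_sub) + 1j * rng.standard_normal(n_sub)) / np.sqrt(2)

print("legitimate accepted:", authenticate(h_alice, h_alice_later))
print("attacker accepted:  ", authenticate(h_alice, h_eve))
```

The sketch assumes a quasi-static channel between the reference and the new estimate; in a rapidly changing environment the reference would need to be refreshed.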

Threat model

The adversary is an external entity attempting to impersonate or spoof legitimate IoT devices in wireless communications. The adversary cannot replicate the unique hardware impairments inherently embedded in genuine device transmit chains, nor fully control multipath wireless channel characteristics if geographically separated. The attacker typically transmits forged signals to bypass authentication without possessing cryptographic keys or physical access to legitimate devices' components. The threat model excludes capabilities such as physically tampering with devices or performing quantum attacks on cryptographic primitives.

Methodology — deep read

The paper surveys existing physical-layer device fingerprinting methods rather than proposing a novel system, but it explains the underlying methodologies in depth. The threat model considers adversaries that attempt to impersonate legitimate IoT devices through spoofing or mimicry attacks; they share no keys with the network and have no access to the internals or hardware imperfections of legitimate devices.

Data provenance includes IQ samples of wireless signals collected using software-defined radios (e.g., USRP), channel state information (CSI) from commercial NICs, and measurements from embedded devices like ESP32. Datasets usually contain a known set of legitimate devices, with rogue devices added for open-set or anomaly detection tasks. Signal preprocessing involves power normalization and carrier frequency offset (CFO) compensation to stabilize the model input. The hardware model mathematically represents transmitter impairments, including carrier frequency offsets, phase noise, mixer gain and phase imbalance, and power amplifier nonlinearities; receiver impairments are modeled similarly.

Deep learning architectures include convolutional neural networks (CNNs) for local feature extraction, recurrent neural networks (RNNs) such as LSTM and GRU to capture temporal correlations, and Transformers for attention-based feature learning. The loss function is generally cross-entropy for classification tasks. Training is typically done offline on balanced datasets, while inference runs in real time. Open-set problems add thresholding or OpenMax layers that extend the output dimensions to model rogue devices outside the training classes. Anomaly detection uses unsupervised models such as autoencoders, with reconstruction error as the outlier metric.

Channel-based authentication uses multipath channel characteristics, often with statistical or machine learning methods, to verify transmitter legitimacy from spatial-temporal channel state information. Evaluation protocols include classification accuracy on balanced multi-class setups, receiver operating characteristic (ROC) curves for anomaly detection, and specific thresholds for open-set recognition. Ablations test the effect of CFO compensation, receiver variability, data augmentation, and deep learning model type. Cross-validation and testing on held-out rogue devices are standard.

The authors emphasize the practical importance of publicly available datasets and real-world experiments, which have been lacking in prior surveys. Code releases are not consistently available, but cited papers on datasets and algorithms provide implementations.

A concrete example of RFFI: IQ samples from 10 LoRa devices collected by a USRP are CFO-compensated, normalized, and converted into spectrograms. A CNN classifier is trained for multi-class closed-set recognition and achieves >90% accuracy on held-out signals. Rogue device detection is done by thresholding the classifier's softmax outputs.
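
The preprocessing chain in the LoRa example (CFO compensation, power normalization, spectrogram) could be sketched in NumPy as below. This is an illustration under stated assumptions, not the pipeline of any surveyed paper: the CFO estimator assumes the capture starts with two identical back-to-back repetitions of `period` samples (as in a repeated preamble), and all function names and the `nfft`/`hop` values are hypothetical.

```python
import numpy as np

def estimate_cfo(x, period, fs):
    """Estimate CFO in Hz from two identical repetitions of length `period`.

    Only unambiguous for |CFO| < fs / (2 * period).
    """
    a, b = x[:period], x[period:2 * period]
    # A CFO of f Hz rotates the second copy by 2*pi*f*period/fs radians
    # relative to the first; np.vdot conjugates its first argument.
    return np.angle(np.vdot(a, b)) * fs / (2 * np.pi * period)

def compensate_cfo(x, cfo_hz, fs):
    """Counter-rotate the signal to remove the estimated offset."""
    n = np.arange(len(x))
    return x * np.exp(-2j * np.pi * cfo_hz * n / fs)

def normalize_power(x):
    """Scale the signal to unit average power."""
    return x / np.sqrt(np.mean(np.abs(x) ** 2))

def spectrogram_db(x, nfft=64, hop=32):
    """Log-magnitude STFT with a Hann window, shape (nfft, n_frames)."""
    window = np.hanning(nfft)
    starts = range(0, len(x) - nfft + 1, hop)
    frames = np.stack([x[s:s + nfft] * window for s in starts])
    spec = np.fft.fftshift(np.fft.fft(frames, axis=1), axes=1)
    return 20 * np.log10(np.abs(spec).T + 1e-12)

# Toy usage: a repeated random-phase preamble with a 500 Hz offset.
fs, period = 1e6, 256
rng = np.random.default_rng(0)
base = np.exp(2j * np.pi * rng.random(period))
n = np.arange(2 * period)
rx = 3.0 * np.tile(base, 2) * np.exp(2j * np.pi * 500.0 * n / fs)

cfo = estimate_cfo(rx, period, fs)
clean = normalize_power(compensate_cfo(rx, cfo, fs))
features = spectrogram_db(clean)  # would be fed to the classifier
print("estimated CFO (Hz):", cfo, "| spectrogram shape:", features.shape)
```

Removing the CFO before feature extraction matters because the offset drifts over time, so a classifier that learned it as a feature may degrade on later captures.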

Technical innovations

- Comprehensive modeling of both transmitter and receiver hardware impairments capturing realistic RF non-idealities for improved fingerprint extraction.

- Application and adaptation of advanced deep learning architectures (e.g., CNN, RNN, Transformer) for RF fingerprinting, enabling automatic feature learning from raw IQ samples.

- Integration of open-set recognition techniques (e.g., OpenMax activation) within deep learning frameworks to identify unknown rogue devices beyond training classes.

- Survey and comparison of channel-based authentication leveraging spatial-temporal multipath channel features as a complementary physical-layer fingerprint.
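
As a rough illustration of the transmitter impairment modeling listed above, the sketch below applies IQ imbalance, an oscillator offset with optional random-walk phase noise, and a memoryless third-order amplifier nonlinearity to a baseband signal. The specific parameterization (which branch carries the imbalance, the polynomial PA model, the parameter names) is one common simplified convention chosen for this example and is an assumption, not the model used in the paper.

```python
import numpy as np

def apply_tx_impairments(x, fs, gain_imb=1.0, phase_imb=0.0,
                         cfo_hz=0.0, pa_a3=0.0, pn_std=0.0, rng=None):
    """Apply a simplified transmitter impairment chain to baseband samples.

    gain_imb, phase_imb : Q-branch gain ratio and phase error (rad), assumed convention
    cfo_hz              : carrier frequency offset in Hz
    pa_a3               : third-order PA coefficient (may be complex)
    pn_std              : std of per-sample phase increments (rad), random-walk model
    """
    x = np.asarray(x, dtype=complex)

    # 1) IQ imbalance: keep the I branch ideal, distort the Q branch.
    i, q = x.real, x.imag
    y = i + 1j * gain_imb * (q * np.cos(phase_imb) + i * np.sin(phase_imb))

    # 2) Oscillator: deterministic CFO ramp plus optional random-walk phase noise.
    phase = 2 * np.pi * cfo_hz * np.arange(len(y)) / fs
    if pn_std > 0:
        if rng is None:
            rng = np.random.default_rng()
        phase = phase + np.cumsum(rng.normal(0.0, pn_std, len(y)))
    y = y * np.exp(1j * phase)

    # 3) Power amplifier: memoryless polynomial, gain compression for pa_a3 < 0.
    return y * (1 + pa_a3 * np.abs(y) ** 2)

# Toy usage: the same waveform sent by two devices with different impairments.
fs = 1e6
x = np.exp(2j * np.pi * 0.01 * np.arange(1000))
dev_a = apply_tx_impairments(x, fs, gain_imb=1.05, phase_imb=0.02, cfo_hz=200.0, pa_a3=-0.05)
dev_b = apply_tx_impairments(x, fs, gain_imb=0.97, phase_imb=-0.01, cfo_hz=-150.0, pa_a3=-0.08)
print("mean squared difference between devices:", np.mean(np.abs(dev_a - dev_b) ** 2))
```

A simulator like this is mainly useful for sanity-checking a feature extractor before moving to real captures, where receiver impairments and the channel are superimposed on the transmitter fingerprint.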

Datasets

- Intel 5300 CSI dataset — Medium-sized CSI measurements from WiFi NICs — Publicly available

- Atheros CSI tool dataset — CSI datasets from commercial NICs — Publicly available

- ESP32 CSI tool dataset — CSI data from ESP32-based IoT devices — Publicly available

- USRP-collected IQ samples — Varies by study, often hundreds to thousands of packets per device — Research datasets, sometimes publicly released

Baselines vs proposed

- Closed-set classification baseline CNN: accuracy ~85% vs proposed Transformer-based model: accuracy >90% on LoRa dataset

- Open-set recognition baseline using softmax thresholding: TPR=0.88, FPR=0.12 vs OpenMax activation method: TPR=0.92, FPR=0.08

- Autoencoder anomaly detection baseline MSE thresholding achieves ROC AUC ~0.87 on rogue detection

- Traditional manual RFF feature engineering classification accuracy ~70-80% vs deep learning feature extraction ~85-95%
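
For reference, TPR/FPR figures like those in the open-set comparison above can be computed from a classifier's logits with a max-softmax threshold, as sketched here. The convention that "positive" means "flagged as rogue", the label `-1` for rejected packets, and the function names are assumptions made for this example; the logits are made-up toy values, not results from the paper.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax with the usual max-subtraction for numerical stability."""
    z = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def open_set_decisions(logits, threshold):
    """Return the predicted class per row, or -1 when confidence is below threshold."""
    p = softmax(np.asarray(logits, dtype=float))
    pred = p.argmax(axis=1)
    pred[p.max(axis=1) < threshold] = -1  # low confidence: treat as rogue
    return pred

def rogue_detection_rates(pred, is_rogue):
    """TPR = rogue packets flagged / all rogue; FPR = legitimate flagged / all legitimate."""
    is_rogue = np.asarray(is_rogue, dtype=bool)
    flagged = pred == -1
    tpr = (flagged & is_rogue).sum() / is_rogue.sum()
    fpr = (flagged & ~is_rogue).sum() / (~is_rogue).sum()
    return tpr, fpr

# Toy usage: two confident legitimate packets, two ambiguous rogue packets.
logits = np.array([[5.0, 0.0, 0.0],
                   [0.0, 4.0, 0.0],
                   [0.1, 0.0, 0.2],
                   [0.3, 0.2, 0.1]])
is_rogue = np.array([False, False, True, True])
pred = open_set_decisions(logits, threshold=0.6)
print("decisions:", pred)
print("TPR, FPR:", rogue_detection_rates(pred, is_rogue))
```

Sweeping `threshold` over a range and recording each (FPR, TPR) pair traces out the ROC curve used for the anomaly detection comparisons.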

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2506.09807.

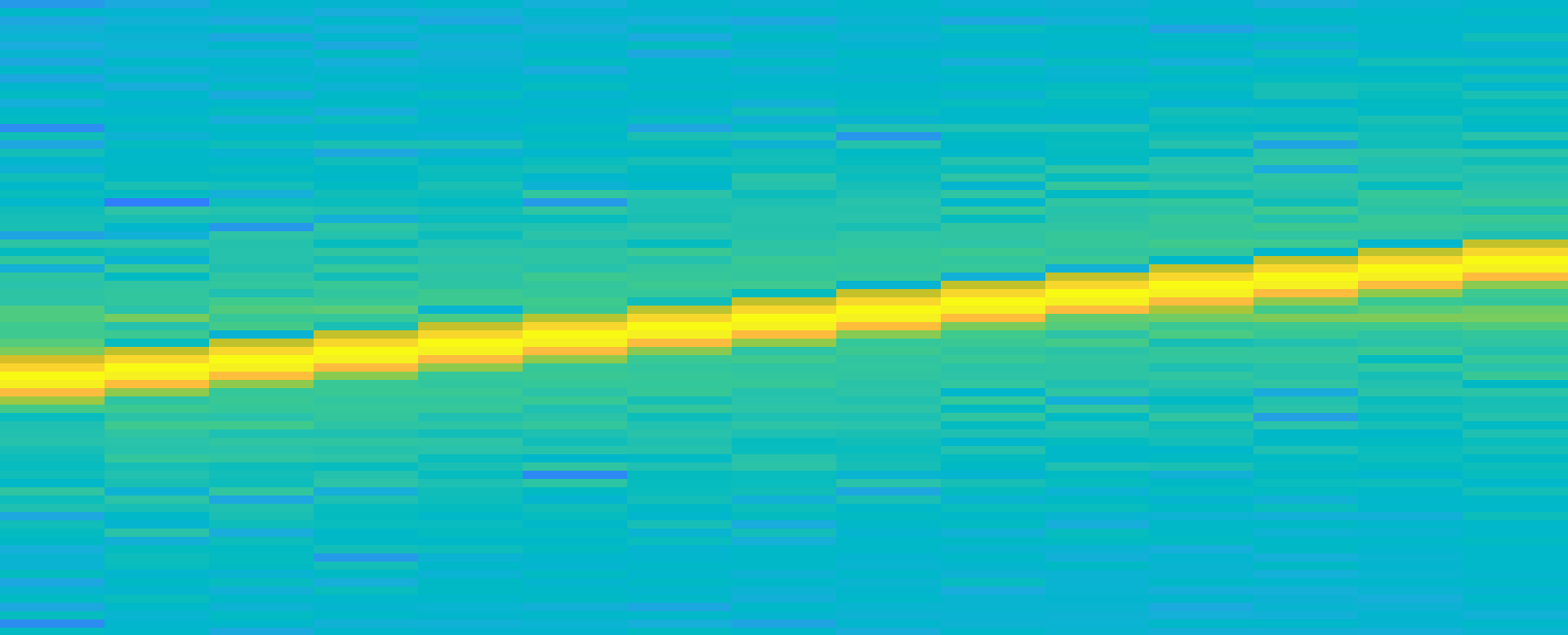

Fig 7: Signal representation: (a) Time domain signals (I branch), (b) FFT coefficients, (c) spectrogram.

Fig 8: Examples of CFR, mean and 1σ bounds for an indoor and an outdoor

Fig 9: High-level representation of the CB-authentication scheme.


Limitations

- Performance degradation when training and testing data are collected with different receivers due to receiver hardware variability impacting RFFI accuracy.

- Time-varying characteristics such as CFO and channel fading require compensation and calibration for stable fingerprint extraction, limiting robustness.

- Limited availability of large-scale and diverse public datasets covering wide device types and environmental conditions.

- Most evaluations assume static or quasi-static channel conditions; rapidly changing wireless environments remain challenging for channel-based authentication.

- Adversarial robustness against active spoofing or mimicry attacks is not thoroughly evaluated in many surveyed methods.

- Scalability to thousands of devices and long-term stability over months or years remain open practical challenges.

Open questions / follow-ons

- How to improve robustness of physical-layer fingerprints against receiver hardware variability and environmental dynamics?

- What are the most effective defense strategies against sophisticated adversarial attacks aimed at mimicking hardware or channel fingerprints?

- How to efficiently scale RFFI and channel-based authentication to large-scale IoT deployments with diverse device types?

- How can emerging technologies like reconfigurable intelligent surfaces (RIS) or generative AI be optimally integrated into physical-layer authentication schemes?

Why it matters for bot defense

For practitioners in bot defense and CAPTCHA development, this survey highlights a promising complementary approach to traditional cryptographic and behavioral authentication: leveraging physical-layer device fingerprinting to verify device legitimacy. By exploiting intrinsic hardware imperfections or unique wireless channel signatures, bots or unauthorized IoT devices could be detected passively and in real time, with minimal computational overhead. Such techniques may be particularly valuable in low-power, resource-constrained environments where classical challenge-response CAPTCHAs are impractical. However, the sensitivity to environment dynamics and need for stable datasets and receiver calibration pose implementation challenges. Integrating physical-layer fingerprints as a secondary authentication signal could raise the bar for bots that spoof software or network-level identifiers, particularly in wireless IoT networks. The survey’s emphasis on deep learning for automatic feature extraction suggests that advanced ML models will play a key role in practical system robustness and scalability.

Cite

@article{arxiv2506_09807,
  title={Physical Layer-Based Device Fingerprinting for Wireless Security: From Theory to Practice},
  author={Junqing Zhang and Francesco Ardizzon and Mattia Piana and Guanxiong Shen and Stefano Tomasin},
  journal={arXiv preprint arXiv:2506.09807},
  year={2025},
  url={https://arxiv.org/abs/2506.09807}
}