Got Ya! -- Sensors for Identity Management Specific Security Situational Awareness

Source: arXiv:2503.04274 · Published 2025-03-06 · By Daniela Pöhn, Heiner Lüken

TL;DR

This paper argues that conventional logging and network-centric security situational awareness miss an important gap: they can tell you that an identity logged in or a token was used, but not whether the action was legitimate for the person behind the account. The authors therefore propose an identity-management-specific situational awareness model that treats identities, identity-management systems (IdMS), federation partners, external services, and protocol-specific traces as first-class sensors. The goal is to improve perception, comprehension, and projection for attacks that blend into normal authentication and authorization traffic, such as account takeover, token theft, phishing-driven OAuth abuse, and misuse of service accounts.

The contribution is not a new classifier or detector but a sensor-and-context framework for identity management, plus a proof-of-concept in OAuth. The paper derives layers for internal identity management, external identity, threats, and detection, and then maps concrete sources like IdMS logs, password-leak services, OSINT, HaaSS, NetFlow, and contextual data (device, IP, location, OS version, role concept) to those layers. In the OAuth example, the authors build a minimal test environment and show rule-based anomaly detection on logged requests, flagging repeated token use and suspicious user agents. The result is a conceptual first step rather than a validated detector: the paper claims novelty in IdM-specific situational awareness, but does not report large-scale accuracy or robustness numbers.

Key findings

- The paper proposes what the authors describe as the first identity-management-specific security situational awareness concept, extending Endsley’s perception/comprehension/projection model to IdM layers and protocol-specific traces.

- Table 1 aggregates sensors/sources across internal, organizational, and external inputs, including log files, AV/IPS/IDS, leak detection, CERT warnings, OSINT/IdM honeypots, NetFlow, role concepts, and other documentation.

- Table 2 reduces several IdM attack classes to minimum observable features: e.g., password stuffing needs password (hashed) plus IP address(es), while session hijacking needs different IP address and device/browser fingerprint.

- In the OAuth proof-of-concept, the test environment uses a minimal stack with one authorization server, one resource server, and one client, implemented in Python/Flask with authlib and MySQL.

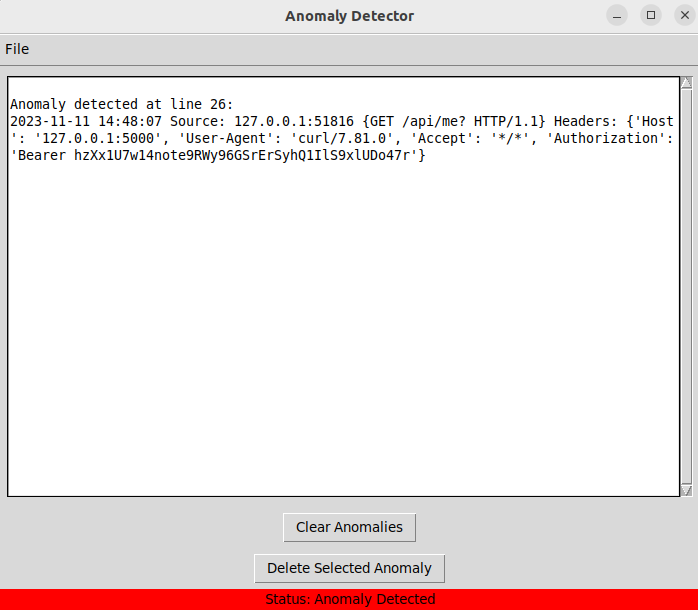

- The OAuth demo’s anomaly detector flags reused tokens as suspicious; the paper states that if a token from the authorization code grant is reused to request protected resources, the request is displayed as an anomaly in the GUI (Figure 2).

- The authors explicitly state they did not evaluate efficiency or report real-world performance because they could not use pseudonymized production logs; the detection is described as real-time inside the test environment.

- The paper notes that the number and quality of features available to rules depend on log detail, and that “other sensors add valuable data,” implying the demo’s rule set is intentionally incomplete.

- The paper positions OAuth 2.1 as a security-hardening direction because insecure grants like implicit and resource owner password credentials are removed, but the proof-of-concept still compares implicit versus authorization code grants under the current OAuth 2.0-style workflow.
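The minimum-feature idea from Table 2 can be illustrated with a small sketch. It is not the authors' code: the `AuthEvent` fields, the function names, and the `min_accounts` threshold are all hypothetical, and the two heuristics are only one possible reading of "different IP address and device/browser fingerprint" and "password (hashed) plus IP address(es)".

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthEvent:
    """One authentication-related event; every field name is illustrative."""
    account: str
    session_id: str
    ip: str
    fingerprint: str          # device/browser fingerprint
    password_hash: str = ""   # set only for login attempts that logged a hash


def hijacked_sessions(events):
    """Session IDs later seen with BOTH a different IP and a different fingerprint."""
    first_seen = {}
    flagged = set()
    for ev in events:
        origin = first_seen.setdefault(ev.session_id, ev)
        if ev.ip != origin.ip and ev.fingerprint != origin.fingerprint:
            flagged.add(ev.session_id)
    return flagged


def stuffing_sources(events, min_accounts=3):
    """IPs that submitted hashed passwords for at least `min_accounts` accounts.

    The threshold is an assumption, not a value from the paper.
    """
    accounts_by_ip = defaultdict(set)
    for ev in events:
        if ev.password_hash:
            accounts_by_ip[ev.ip].add(ev.account)
    return {ip for ip, accounts in accounts_by_ip.items()
            if len(accounts) >= min_accounts}
```

Requiring both the IP and the fingerprint to change keeps a roaming laptop (new IP, same fingerprint) from being flagged, which is the kind of false positive the paper's contextual data is meant to reduce.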

Threat model

The adversary is a malicious actor targeting identities, identity-management systems, or federated OAuth/OIDC-style protocols via phishing, credential stuffing, token theft, session hijacking, malware, MITM, or abuse of service accounts and misconfigurations. Their actions can resemble normal authentication and authorization flows, and they may operate inside or outside the organization, including through federation partners and external services. The defender can observe IdMS, application, and network logs plus some contextual or external-intelligence sources, but cannot assume full visibility into user intent or access to all private data. The authors also assume that PII and privacy constraints may limit real-world log collection.

Methodology — deep read

The threat model is broad and operational rather than formal: the adversary is a malicious actor who can obtain credentials, tokens, or session context via phishing, credential stuffing, password spraying, malware, man-in-the-middle interception, session hijacking, or takeover of service accounts or the IdMS itself. The paper assumes attackers often try to blend into normal identity-management traffic, so purely protocol-agnostic or network-only monitoring is insufficient. The authors also assume defenders can observe logs, context data, and some external intelligence sources, but may not have access to complete production traces because of privacy constraints. They explicitly frame identity management as a cross-organizational problem, so a federation partner or external service may also be part of the sensing surface.

On the data side, there is no large public dataset. The only concrete empirical setup is a toy OAuth test environment with one authorization server, one resource server, and one client per grant type. The stack is Python with Flask and authlib, with MySQL used for persistent state. Logging is configured to write request metadata to header_logs.log in the format “TIMESTAMP - Source-IP: PORT - Request-Line - Header”. The paper does not provide train/test splits, an annotation procedure, or a corpus size beyond the handful of generated tokens and scripted requests in the demo. It also does not describe preprocessing beyond reading the log file in a loop and matching events against hand-written rules.
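A line in the log format quoted above could be parsed roughly as follows. This is a sketch under assumptions: the summary gives only the field order, so the timestamp layout, the `" - "` separator handling, and the choice to serialize headers as a JSON object are guesses, and `LogRecord` and `parse_line` are hypothetical names.

```python
import json
from typing import NamedTuple, Optional


class LogRecord(NamedTuple):
    timestamp: str
    source_ip: str
    port: int
    method: str
    path: str
    headers: dict


def parse_line(line: str) -> Optional[LogRecord]:
    """Parse 'TIMESTAMP - Source-IP: PORT - Request-Line - Header'.

    Returns None for lines that do not match the assumed layout.
    """
    # Split at most three times so " - " inside the header blob is preserved.
    parts = line.rstrip("\n").split(" - ", 3)
    if len(parts) != 4:
        return None
    timestamp, source, request_line, raw_headers = parts

    ip, sep, port = source.rpartition(":")
    request_parts = request_line.split()
    if not sep or not port.strip().isdigit() or len(request_parts) < 2:
        return None

    try:
        headers = json.loads(raw_headers)  # assumption: headers logged as JSON
    except json.JSONDecodeError:
        return None
    if not isinstance(headers, dict):
        return None

    return LogRecord(timestamp, ip.strip(), int(port.strip()),
                     request_parts[0], request_parts[1], headers)
```

Returning `None` for malformed lines rather than raising lets a loop over the log file skip noise and keep going.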

Architecturally, the main contribution is a layered sensor model for identity management. The authors define four layers: internal identity management, external identity, threat, and detection. Internal identity management covers digital identities, authentication/authorization, and logged authentication actions across systems, databases, applications, web servers, and end-user applications such as SSI wallets. External identity covers federation partners, external services, password-leak services, OSINT, and human/surveys. The threat layer distinguishes end-user, service-account, and IdMS targets. The detection layer then maps these layers to sensors and contextual data such as IP address, device/browser fingerprint, session-related identifiers, location, OS version, software version, services, vulnerabilities, role concept, network plan, and documentation. The novelty is the explicit claim that these are not just generic security sensors but identity-specific indicators that should feed situational awareness.
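One way to make the layered model concrete is to tag each observation with the layer its source belongs to. The sketch below is an illustration only: the source identifiers, the `Observation` class, and `layers_per_identity` are hypothetical, and the mapping covers just a few of the sources named above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Layer(Enum):
    INTERNAL_IDM = "internal identity management"
    EXTERNAL_IDENTITY = "external identity"
    THREAT = "threat"
    DETECTION = "detection"


# Partial, illustrative mapping of sources to the layer that produces them.
SOURCE_LAYER = {
    "idms_log": Layer.INTERNAL_IDM,
    "web_server_log": Layer.INTERNAL_IDM,
    "ssi_wallet": Layer.INTERNAL_IDM,
    "federation_partner": Layer.EXTERNAL_IDENTITY,
    "password_leak_service": Layer.EXTERNAL_IDENTITY,
    "osint": Layer.EXTERNAL_IDENTITY,
}

# Targets distinguished by the threat layer.
THREAT_TARGETS = ("end_user", "service_account", "idms")


@dataclass
class Observation:
    source: str
    identity: str
    target: str
    context: dict = field(default_factory=dict)  # e.g. ip, fingerprint, role

    def __post_init__(self):
        if self.source not in SOURCE_LAYER:
            raise ValueError(f"unknown source: {self.source}")
        if self.target not in THREAT_TARGETS:
            raise ValueError(f"unknown target: {self.target}")

    @property
    def layer(self) -> Layer:
        return SOURCE_LAYER[self.source]


def layers_per_identity(observations):
    """For each identity, collect which layers have reported something."""
    view = {}
    for obs in observations:
        view.setdefault(obs.identity, set()).add(obs.layer)
    return view
```

An identity that shows up in both internal and external layers, for example a login event plus a password-leak hit, is the sort of cross-layer correlation the detection layer is meant to surface.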

The OAuth proof-of-concept is rule-based. The authors choose two grant types: implicit and authorization code, explicitly to contrast an insecure grant with a more secure one. They say they use threats from RFC 6819 and general web-application threats, then encode rules for token reuse/replay, cross-site scripting, and man-in-the-middle patterns. The demo flow is: create a client, authenticate a user, create a session, obtain an authorization code, exchange it for a token, then access a protected resource using the access token. The anomaly detector scans header_logs.log and marks repeated token use as anomalous; it also treats certain user agents, such as curl, as suspicious. A concrete end-to-end example is described where a legitimate OAuth interaction produces one token per grant, and then a scripted malicious request that reuses an access token is detected and shown in the GUI (Figure 2). The paper mentions that more advanced methods like isolation forest and hidden Markov models may fit live environments better, but they are not implemented or evaluated.
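The two rules described above, repeated token use and a suspicious user agent, can be sketched in a few lines. This is not the authors' implementation: the function names, the finding labels, and the assumption that each request is available as a dict of headers carrying a bearer token in `Authorization` are all illustrative.

```python
# Assumption: only curl is listed because it is the one agent the summary names.
SUSPICIOUS_AGENT_PREFIXES = ("curl/",)


def bearer_token(headers):
    """Extract the token from a lower-cased 'authorization: Bearer <token>' header."""
    scheme, _, token = headers.get("authorization", "").partition(" ")
    token = token.strip()
    return token if scheme.lower() == "bearer" and token else None


def detect(requests):
    """Return (request_index, reason) pairs for requests matching a rule."""
    seen_tokens = set()
    findings = []
    for index, raw_headers in enumerate(requests):
        # HTTP header names are case-insensitive, so normalize them first.
        headers = {name.lower(): value for name, value in raw_headers.items()}

        agent = headers.get("user-agent", "").lower()
        if agent.startswith(SUSPICIOUS_AGENT_PREFIXES):
            findings.append((index, "suspicious user agent"))

        token = bearer_token(headers)
        if token is not None:
            if token in seen_tokens:
                findings.append((index, "token reuse"))
            seen_tokens.add(token)
    return findings
```

Flagging every second use of a token only works in a demo where each token is expected to appear once; bearer access tokens are normally presented many times before they expire, so a live deployment would need extra context such as IP or fingerprint changes.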

Evaluation is qualitative and scenario-based. There are no reported precision/recall/F1 numbers, no ROC curves, no statistical tests, and no ablation study. The only “results” are the successful creation of tokens for the two grants and the real-time recognition of repeated-token use as an anomaly in the test environment. The authors also note that the authorization code flow is safer than implicit and that OAuth 2.1 removes insecure grants, but they do not quantify how detection differs between the two grants. Reproducibility is limited: the paper names the software stack and log format, but does not provide code or a public dataset. The authors say they could not use real-world logs because of PII constraints and plan to extend the test environment in future work.

Technical innovations

- Defines a four-layer identity-management-specific situational awareness model: internal identity management, external identity, threat, and detection.

- Maps IdM attack classes to minimum observable characteristics, e.g. session hijacking to differing IP address plus device/browser fingerprint.

- Extends situational awareness inputs beyond standard logs to identity-specific sources such as password-leak detection, OSINT, federation partners, HaaSS, and IdM honeypots.

- Provides a proof-of-concept OAuth monitoring setup that flags token reuse and suspicious request patterns from request-header logs.

- Highlights protocol-specific sensing as the key differentiator from prior cyber situational awareness work that is mostly network-centric.

Datasets

- No public dataset — toy OAuth test environment with one authorization server, one resource server, and one client; scripted requests logged to header_logs.log; source not public.

- MySQL-stored OAuth metadata — small test-environment state; source not public.

Baselines vs proposed

- Implicit grant vs authorization code grant: no quantitative metric reported; proposed detector flags token reuse as anomalous in both test cases.

- Rule-based log detection vs isolation forest / HMM: no head-to-head results reported; the latter are only suggested as future alternatives for live environments.

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2503.04274.

Fig 2: As a second aspect, the user-agent curl

Limitations

- No real-world dataset or production logs; the authors explicitly say privacy constraints prevented use of pseudonymized operational data.

- No quantitative evaluation: there are no accuracy, precision, recall, false-positive, latency, or throughput numbers.

- The detection logic is hand-written and demo-scale, so robustness against adaptive attackers, noisy logs, and distribution shift is unknown.

- The OAuth example focuses on token reuse and a few obvious suspicious patterns, leaving many protocol attacks untested.

- The framework is conceptual and sensor-centric; the paper does not validate which sensors actually improve detection most.

- Cross-organizational awareness is discussed only conceptually and not implemented or evaluated.

Open questions / follow-ons

- Which identity-specific sensors actually provide the best marginal detection value once privacy cost and operational overhead are accounted for?

- How well does the proposed layer model transfer from OAuth to SAML, OIDC, and enterprise IdMS implementations like Active Directory?

- Can contextual data such as role, device, and location be fused into a calibrated risk score without creating unacceptable false positives for legitimate travel, device changes, or automation?

- What would cross-organizational sharing of IdM situational awareness look like in practice, and what data-minimization scheme would make it viable?

Why it matters for bot defense

For bot-defense and CAPTCHA practitioners, the main takeaway is that identity telemetry should be treated as a richer signal space than login outcomes alone. If you only look at whether a login succeeded, you miss the difference between a legitimate user, a session hijack, a replayed token, and a scripted OAuth abuse flow. The paper’s sensor list suggests concrete features that can complement CAPTCHA and risk engines: device/browser fingerprints, IP and ASN changes, token reuse, session-linked identifiers, password-leak exposure, and external intelligence about the account. In practice, this argues for tying CAPTCHA or step-up challenges to identity-context anomalies rather than just raw request volume.
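Tying a challenge to identity-context anomalies could look like the sketch below. Nothing here comes from the paper: the signal names, weights, and thresholds are hypothetical placeholders that a real deployment would have to calibrate against its own false-positive budget.

```python
# Hypothetical weights for identity-context signals; not values from the paper.
SIGNAL_WEIGHTS = {
    "ip_changed": 1,
    "new_device_fingerprint": 2,
    "password_in_leak": 2,
    "token_reuse": 3,
}


def decide(signals, challenge_at=2, block_at=5):
    """Map observed signals to 'allow', 'challenge' (e.g. CAPTCHA), or 'block'."""
    observed = set(signals)  # count each signal once, however often it fired
    unknown = observed - SIGNAL_WEIGHTS.keys()
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")

    score = sum(SIGNAL_WEIGHTS[name] for name in observed)
    if score >= block_at:
        return "block"
    if score >= challenge_at:
        return "challenge"
    return "allow"
```

With these placeholder weights, an IP change alone is allowed, an IP change combined with a new device fingerprint triggers a challenge, and token reuse on a leaked-password account is blocked.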

The OAuth demo is also a reminder that some abuse is not “solved” by stronger front-door verification. A bot that already has a valid token, or a human-in-the-middle replaying a session, may pass standard authentication but still be detectable through protocol-specific traces. For a bot-defense team, the right reaction is to expand monitoring around authorization code exchange, token replay, suspicious client behavior, and federation edge cases, while keeping false positives in mind for legitimate automation and device churn. The paper is not a mature detector, but it is useful as a design checklist for what to instrument in an identity-aware defense pipeline.

Cite

@article{arxiv2503_04274,
  title={Got Ya! -- Sensors for Identity Management Specific Security Situational Awareness},
  author={Daniela Pöhn and Heiner Lüken},
  journal={arXiv preprint arXiv:2503.04274},
  year={2025},
  url={https://arxiv.org/abs/2503.04274}
}