A multimodal dataset for understanding the impact of mobile phones on remote online virtual education

Source: arXiv:2412.14195 · Published 2024-12-13 · By Roberto Daza, Alvaro Becerra, Ruth Cobos, Julian Fierrez, Aythami Morales

TL;DR

The paper introduces the IMPROVE dataset, a novel, large-scale multimodal resource for investigating how mobile phone usage affects learners in remote online education. By collecting behavioral, biometric, physiological, and academic performance data from 120 participants divided into three groups with different levels of phone access and interaction, the dataset enables holistic analysis of distraction effects, cognitive load, and phenomena such as nomophobia. Data were captured with 16 synchronized sensors (including EEG, eye tracking, multiple cameras, smartwatches, and keystroke dynamics) during 30-minute learning sessions on an HTML course delivered through the edX MOOC platform. Manual and semi-supervised labeling of mobile phone interruptions distinguishes controlled interventions from spontaneous interactions.

Technical validation confirms the high fidelity and synchronization of the multimodal signals, while initial statistical analyses reveal measurable biometric shifts (e.g., heart rate, EEG attention levels) linked to mobile phone presence and usage. The dataset totals 2.83 terabytes and is publicly available in standardized formats, with extensive documentation and tools for visualization and analysis. It represents a significant advance for research into mobile distractions, learner engagement, and secure certification in remote virtual education.

Key findings

- The dataset contains 120 learners divided into 3 groups: Group 1 allowed mobile phone use, Group 2 allowed possession but prohibited use, Group 3 had phones confiscated.

- Each learner session lasted approx. 30 minutes and included videos, document reading, and a 16-question multiple choice test.

- A total of 16 synchronized sensors provided multimodal streams, including EEG (1 Hz, 5 frequency bands), eye tracking (120 Hz), 3 RGB + 2 NIR cameras, smartwatches measuring heart rate and EDA (100 Hz), keyboard and mouse dynamics, and audio recordings at 8 kHz.

- 145 labeled mobile phone interaction events were logged, including controlled supervisor interventions and uncontrolled spontaneous interactions.

- Filtering and signal processing methods (median filtering for EEG, moving average for heart rate) improved signal quality while preserving important biometric features.

- Statistical analyses indicated biometric changes associated with phone usage, such as variations in heart rate and attention estimates derived from EEG.

- Semi-supervised relabeling of interruption events improved annotation consistency beyond initial manual labels.

- Learners who used their phones reported more distraction, while those deprived of their phones reported higher stress, illustrating the complex trade-offs of phone presence.

Threat model

This is not a security-focused paper per se, so the adversary model is implicit. The 'adversary' is the distraction caused by mobile phone use, or the anxiety induced by phone unavailability, that degrades learner focus and performance. No active hostile adversary or attacker is considered. The threat assessed is the degradation of learners' cognitive states, leading to poorer learning outcomes or compromised examination integrity.

Methodology — deep read

Threat Model & Assumptions: The study assumes typical remote online learners who may or may not interact with their mobile phones during educational sessions. The key adversarial factor is distraction caused by phone use, or anxiety caused by phone unavailability (nomophobia). There are no active malicious adversaries; rather, the dataset aims to study naturalistic behavioral and biometric responses to phone presence and interruptions.

Data Collection: The dataset covers 120 engineering students from Universidad Autónoma de Madrid, balanced by gender, degree program, and HTML proficiency. Each session was a ~30-minute online HTML lesson from an edX MOOC. Participants were assigned to one of three groups that controlled mobile phone accessibility and interaction.

Sensors & Architecture: A total of 16 sensors were used: an EEG headband (NeuroSky) producing power spectral density at 1 Hz across 5 EEG bands plus attention and meditation estimates; an eye tracker (Tobii Pro Fusion) at 120 Hz recording gaze, fixations, and saccades; three RGB cameras and two near-infrared cameras capturing video at 20–30 Hz; two smartwatches (Huawei Watch 2 and Fitbit Sense) measuring heart rate, electrodermal activity (EDA), temperature, and inertial data at 100 Hz; keyboard and mouse logging at up to 895 Hz; microphone audio at 8 kHz; and screen capture at 1 Hz. The edBB platform handled sensor synchronization, and edX delivered the course.
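Because the streams run at very different rates (1 Hz EEG, 120 Hz eye tracking, up to 895 Hz input logging), any joint analysis needs them on a common timeline. The edBB platform handles synchronization during capture; the sketch below only illustrates how already-timestamped streams might be joined afterwards, assuming all timestamps are sorted, in seconds, and on one shared clock. The helper names `align_latest` and `window_mean` are hypothetical and not part of the IMPROVE tooling.

```python
from bisect import bisect_left, bisect_right
from typing import List, Optional, Sequence


def align_latest(
    ref_t: Sequence[float],
    src_t: Sequence[float],
    src_v: Sequence[float],
    max_gap: float,
) -> List[Optional[float]]:
    """For each reference time, return the latest source value at or before it.

    Returns None where no source sample falls within max_gap seconds before
    the reference time. Both timestamp lists must be sorted ascending and
    expressed in seconds on the same clock.
    """
    out: List[Optional[float]] = []
    for t in ref_t:
        k = bisect_right(src_t, t) - 1  # index of the last source sample <= t
        if k >= 0 and t - src_t[k] <= max_gap:
            out.append(src_v[k])
        else:
            out.append(None)
    return out


def window_mean(
    ref_t: Sequence[float],
    src_t: Sequence[float],
    src_v: Sequence[float],
    width: float,
) -> List[Optional[float]]:
    """Mean of source samples in the half-open window [t - width, t) for each reference time."""
    out: List[Optional[float]] = []
    for t in ref_t:
        lo = bisect_left(src_t, t - width)  # first sample at or after t - width
        hi = bisect_left(src_t, t)  # first sample at or after t (excluded)
        seg = src_v[lo:hi]
        out.append(sum(seg) / len(seg) if seg else None)
    return out
```

For example, `window_mean` with `width=1.0` reduces a 120 Hz gaze-derived signal to one value per 1 Hz EEG timestamp, while `align_latest` suits slowly varying signals such as smoothed heart rate.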

Protocol: Participants first underwent calibration, a pre-session EDA stress test, and an HTML pretest. The learning session consisted of watching two videos (~2 min in total) and reading two documents, followed by an evaluation test with 16 multiple-choice questions. Mobile phone conditions were controlled per group: Group 1 could use their phones freely; Group 2 kept their phones face down with use prohibited, although the phones were still allowed to ring and vibrate; and Group 3 had their phones confiscated. Supervisor interventions sent standardized messages at two fixed points in the session, and these were labeled alongside uncontrolled interactions. After the session, participants completed forms rating their anxiety, distraction, perceived difficulty, and performance.

Signal Processing: Raw biometric signals were filtered—median filtering (window=5) for EEG, moving average filtering (window=15s) for heart rate, and Butterworth low-pass filters for accelerometer data to reduce noise and artifacts. Facial video was processed using MediaPipe BlazeFace and WHENet CNNs to extract 2D/3D facial landmarks and head pose angles. Eye tracking data were processed with a velocity-based I-VT fixation filter.
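The three filters named above can be sketched in pure Python. The 15 s heart-rate window follows the text; reading the EEG median window of 5 as 5 samples, the Butterworth order (2), the cutoff frequency, and the edge handling are all assumptions, since they are not specified here. The helpers are hypothetical and not taken from the IMPROVE code.

```python
import math
import statistics
from typing import List, Sequence


def median_filter(x: Sequence[float], window: int = 5) -> List[float]:
    """Centered running median; near the edges the window shrinks instead of padding."""
    half = window // 2
    return [statistics.median(x[max(0, i - half): i + half + 1]) for i in range(len(x))]


def moving_average(x: Sequence[float], fs: float, window_s: float = 15.0) -> List[float]:
    """Centered moving average spanning about window_s seconds of a signal sampled at fs Hz."""
    half = max(1, round(window_s * fs)) // 2
    out: List[float] = []
    for i in range(len(x)):
        seg = x[max(0, i - half): i + half + 1]
        out.append(sum(seg) / len(seg))
    return out


def butter2_lowpass(x: Sequence[float], fs: float, cutoff: float) -> List[float]:
    """Causal 2nd-order Butterworth low-pass (bilinear transform, biquad with Q = 1/sqrt(2))."""
    if not 0 < cutoff < fs / 2:
        raise ValueError("cutoff must lie between 0 and the Nyquist frequency")
    w0 = 2 * math.pi * cutoff / fs
    q = 1 / math.sqrt(2)  # this Q makes the biquad maximally flat (Butterworth)
    alpha = math.sin(w0) / (2 * q)
    cos_w0 = math.cos(w0)
    a0 = 1 + alpha
    b0 = b2 = (1 - cos_w0) / 2 / a0
    b1 = (1 - cos_w0) / a0
    a1 = -2 * cos_w0 / a0
    a2 = (1 - alpha) / a0
    y: List[float] = []
    x1 = x2 = y1 = y2 = 0.0  # filter state starts at zero
    for xn in x:
        yn = b0 * xn + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1 = x1, xn
        y2, y1 = y1, yn
        y.append(yn)
    return y
```

The Butterworth stage here runs causally in a single pass, which introduces phase lag; an offline pipeline would more likely apply a zero-phase forward-backward filter such as SciPy's `filtfilt`.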

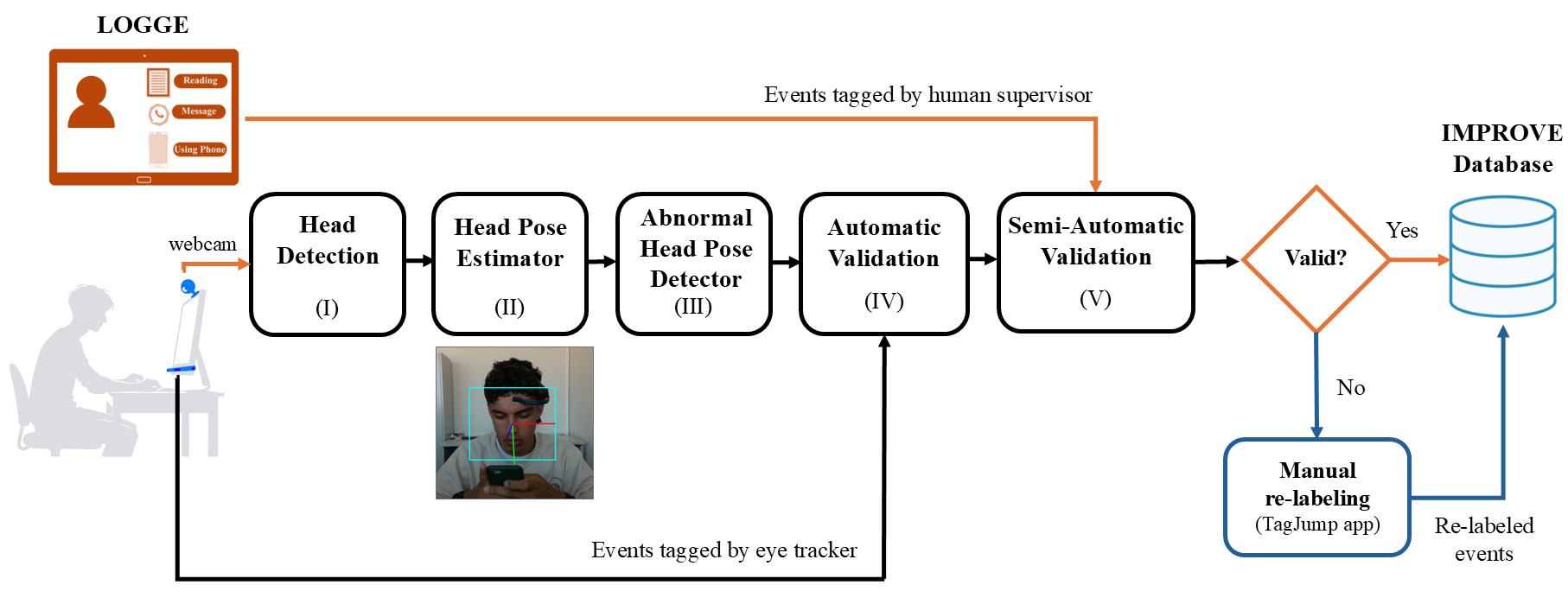
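The velocity-threshold (I-VT) fixation filter used for the eye-tracking data classifies each gaze sample as fixation or saccade by comparing its angular velocity with a threshold, then merges consecutive fixation samples. A minimal sketch, assuming gaze has already been converted to degrees of visual angle; the 30 deg/s threshold and 60 ms minimum duration are commonly used defaults and are assumptions here, not values reported for IMPROVE. The function name is hypothetical, and the sketch omits the gap-filling, noise-reduction, and fixation-merging steps that production I-VT implementations typically add.

```python
import math
from typing import List, Sequence, Tuple

Fixation = Tuple[float, float, float, float]  # (start_s, end_s, mean_x_deg, mean_y_deg)


def ivt_fixations(
    t: Sequence[float],
    x_deg: Sequence[float],
    y_deg: Sequence[float],
    velocity_threshold: float = 30.0,  # deg/s, assumed default
    min_duration: float = 0.06,  # s, assumed minimum fixation length
) -> List[Fixation]:
    """Velocity-threshold (I-VT) fixation detection on gaze in degrees of visual angle."""
    n = len(t)
    is_fix = [False] * n
    for i in range(1, n):
        dt = t[i] - t[i - 1]
        if dt <= 0:
            continue  # skip duplicate or out-of-order timestamps
        # Euclidean distance in degree space approximates angular displacement.
        speed = math.hypot(x_deg[i] - x_deg[i - 1], y_deg[i] - y_deg[i - 1]) / dt
        is_fix[i] = speed < velocity_threshold
    if n > 1:
        is_fix[0] = is_fix[1]  # first sample has no velocity; copy its neighbour

    fixations: List[Fixation] = []
    i = 0
    while i < n:
        if not is_fix[i]:
            i += 1
            continue
        j = i
        while j + 1 < n and is_fix[j + 1]:
            j += 1  # extend the run of consecutive fixation samples
        if t[j] - t[i] >= min_duration:
            xs, ys = x_deg[i:j + 1], y_deg[i:j + 1]
            fixations.append((t[i], t[j], sum(xs) / len(xs), sum(ys) / len(ys)))
        i = j + 1
    return fixations
```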

Labeling and Annotations: Phone interaction events were manually labeled by supervisors with the LOGGE tool and further refined using a semi-supervised machine learning approach to improve consistency and accuracy of interruption event timing.
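The semi-supervised refinement itself is not detailed here, and the sketch below does not reproduce it. Since inter-rater reliability is not reported, one hypothetical way to quantify how much refinement changes the labels is to match each manual event to its best-overlapping refined event by temporal intersection-over-union (IoU) and report the match rate and the mean onset shift. Events are assumed to be (start, end) pairs in seconds; `label_agreement` and the 0.5 IoU threshold are illustrative choices.

```python
from typing import List, Sequence, Tuple

Event = Tuple[float, float]  # (start_s, end_s)


def interval_iou(a: Event, b: Event) -> float:
    """Temporal intersection-over-union of two intervals."""
    inter = max(0.0, min(a[1], b[1]) - max(a[0], b[0]))
    union = (a[1] - a[0]) + (b[1] - b[0]) - inter
    return inter / union if union > 0 else 0.0


def label_agreement(
    manual: Sequence[Event],
    refined: Sequence[Event],
    min_iou: float = 0.5,
) -> Tuple[float, float]:
    """Fraction of manual events matched by a refined event, and mean |onset shift| of matches."""
    shifts: List[float] = []
    for m in manual:
        best = max(refined, key=lambda r: interval_iou(m, r), default=None)
        if best is not None and interval_iou(m, best) >= min_iou:
            shifts.append(abs(best[0] - m[0]))
    matched = len(shifts) / len(manual) if manual else 0.0
    mean_shift = sum(shifts) / len(shifts) if shifts else 0.0
    return matched, mean_shift
```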

Evaluation: Technical validation included signal quality checks, synchronization correctness, and verification of biometric signal consistency across different acquisition methods (e.g., two smartwatches). Statistical analyses compared biometric and academic performance metrics across groups and related them to phone usage events. Data visualizations and synchronization were enabled via the M2LADS platform. The paper does not report adversarial testing or distribution shift evaluations.
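Cross-device consistency (for example, heart rate from the two smartwatches) can be summarized with a correlation and an absolute-error measure once the streams are aligned. A minimal sketch, assuming both heart-rate series are already resampled to the same timestamps with `None` marking missing samples; the paper's exact consistency checks may differ, and `device_agreement` is a hypothetical helper.

```python
import math
from typing import Optional, Sequence, Tuple


def device_agreement(
    a: Sequence[Optional[float]],
    b: Sequence[Optional[float]],
) -> Tuple[float, float, int]:
    """Pearson r, mean absolute difference, and sample count over jointly present samples."""
    pairs = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
    n = len(pairs)
    if n < 2:
        raise ValueError("need at least two jointly present samples")
    mean_a = sum(x for x, _ in pairs) / n
    mean_b = sum(y for _, y in pairs) / n
    sab = sum((x - mean_a) * (y - mean_b) for x, y in pairs)
    saa = sum((x - mean_a) ** 2 for x, _ in pairs)
    sbb = sum((y - mean_b) ** 2 for _, y in pairs)
    if saa == 0 or sbb == 0:
        raise ValueError("a constant stream has undefined correlation")
    r = sab / math.sqrt(saa * sbb)
    mad = sum(abs(x - y) for x, y in pairs) / n
    return r, mad, n
```

Reporting both numbers matters: a high r with a small constant offset suggests the devices agree on trends but differ in calibration.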

Reproducibility: The full IMPROVE dataset is publicly available via GitHub and Science Data Bank, with detailed guides, raw and processed signals, annotations, and associated code for visualization and analysis. However, some code (e.g., for semi-supervised labeling) may require local adaptation. The data are anonymized, except for the videos, which are released non-anonymized with participant consent.

Technical innovations

- A first multimodal dataset combining synchronized behavioral, biometric, physiological, and academic performance data to study mobile phone effects on remote learners.

- Integration of 16 diverse sensors including EEG, eye tracking, multi-view RGB and NIR cameras, smartwatches, and input dynamics in a unified acquisition framework.

- Novel semi-supervised method for refining mobile phone usage event annotations to improve labeling consistency beyond manual supervisor labeling.

- Use of CNN-based real-time facial landmark and 3D head pose detection pipelines (MediaPipe BlazeFace and WHENet) to quantify abnormal head poses correlated with phone interruptions.
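A minimal sketch of an abnormal head pose detector in the spirit described above: frames whose yaw or pitch deviate strongly from the participant's own median pose are flagged, consecutive flagged frames become candidate events, and event-level sensitivity is the fraction of labeled phone events overlapped by at least one detection. The per-session median baseline, the 25 and 20 degree thresholds, and the 1 s minimum duration are assumptions; this is not the authors' detector, and the Euler angles are assumed to come from a head pose estimator such as WHENet.

```python
import statistics
from typing import List, Optional, Sequence, Tuple

Event = Tuple[float, float]  # (start_s, end_s)


def abnormal_pose_events(
    yaw: Sequence[float],
    pitch: Sequence[float],
    fps: float,
    yaw_thr: float = 25.0,  # degrees from the session median, assumed
    pitch_thr: float = 20.0,  # degrees from the session median, assumed
    min_dur: float = 1.0,  # seconds, assumed
) -> List[Event]:
    """Flag frames whose head pose deviates from the session median and merge them into events."""
    yaw0, pitch0 = statistics.median(yaw), statistics.median(pitch)
    flags = [abs(y - yaw0) > yaw_thr or abs(p - pitch0) > pitch_thr for y, p in zip(yaw, pitch)]
    events: List[Event] = []
    start: Optional[int] = None
    for i, flagged in enumerate(flags + [False]):  # trailing False closes an open run
        if flagged and start is None:
            start = i
        elif not flagged and start is not None:
            if (i - start) / fps >= min_dur:
                events.append((start / fps, i / fps))
            start = None
    return events


def event_sensitivity(truth: Sequence[Event], detected: Sequence[Event]) -> float:
    """Fraction of labeled events overlapped by at least one detected event."""
    if not truth:
        return 0.0
    hits = sum(1 for t0, t1 in truth if any(d0 < t1 and t0 < d1 for d0, d1 in detected))
    return hits / len(truth)
```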

Datasets

- IMPROVE — 120 learners — Universidad Autónoma de Madrid (public release via GitHub and Science Data Bank)

Baselines vs proposed

- Group 1 (phone use allowed): 42.11% of learners reported medium-to-high performance (self-reported; academic improvement is measured separately as the pretest-to-posttest change).

- Group 3 (phone confiscated): 95% reported medium-to-high stress due to not having their phone, versus 26.32% of Group 1 reporting medium-to-high anxiety at the start of the session.

- EEG-derived attention levels were significantly lower in Group 1 during phone interruptions than during intervals without interruptions (details in the paper, Fig. 5; exact numbers unclear).

- Heart rate (smoothed with a moving average) varied significantly more during phone interaction events in Group 1 than during baseline periods.

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2412.14195.

Fig 1: Acquisition setup used during data capture with the edBB platform, illustrating all sensors and devices

Fig 2: Protocol followed during the learning session to evaluate the impact of mobile phone usage on learners’ academic


Fig 3: Overview of the platforms and tools used to capture the IMPROVE dataset. The edBB setup was used to monitor

Fig 4: Example of filtered signals in IMPROVE: The left graph displays the raw heart rate signal and the same signal

Fig 5: Diagram of the proposed semi-supervised method for curating mobile phone usage event data. The participant

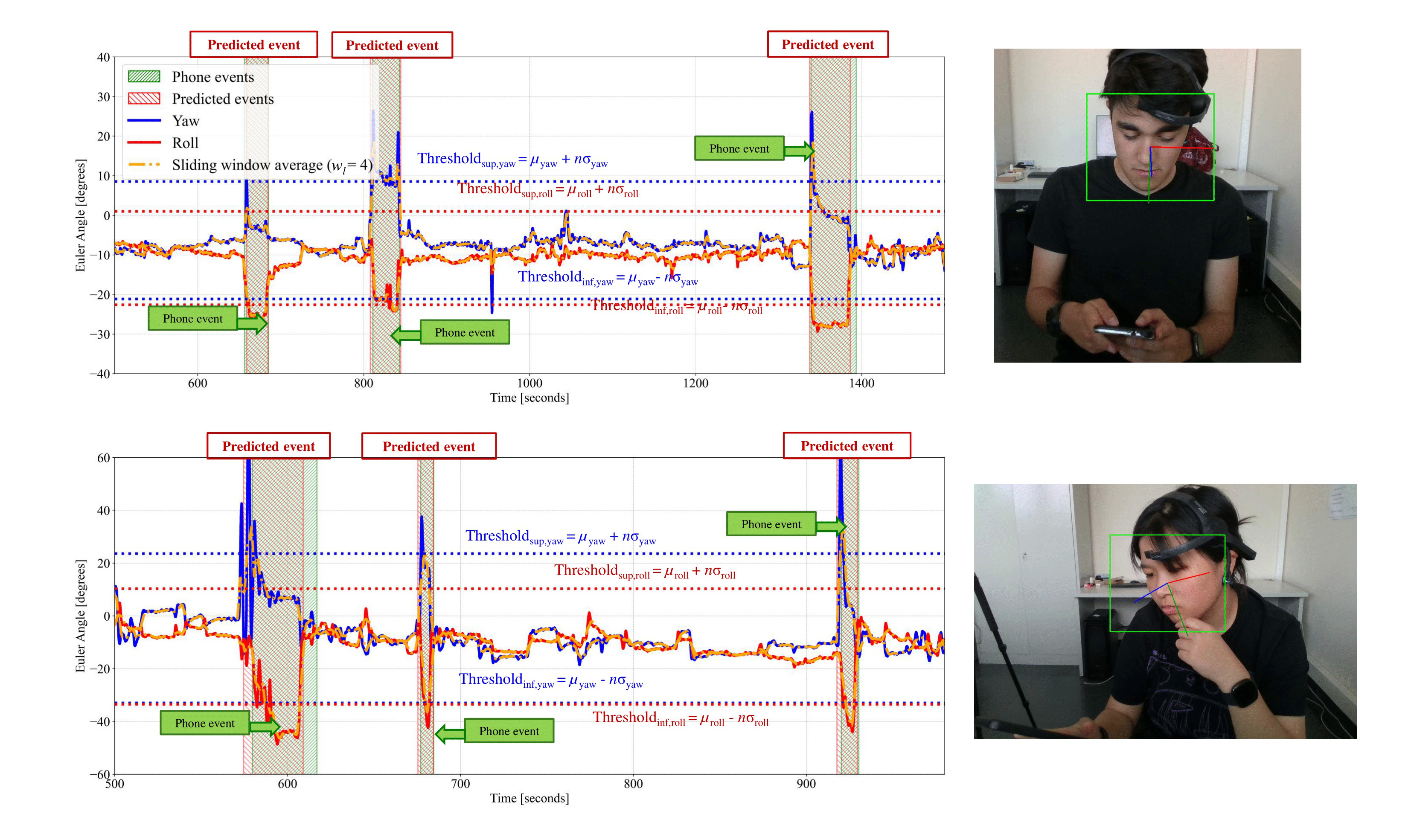

Fig 6: Example of the abnormal head pose detector for two students. The graphs show the Euler angles obtained during the

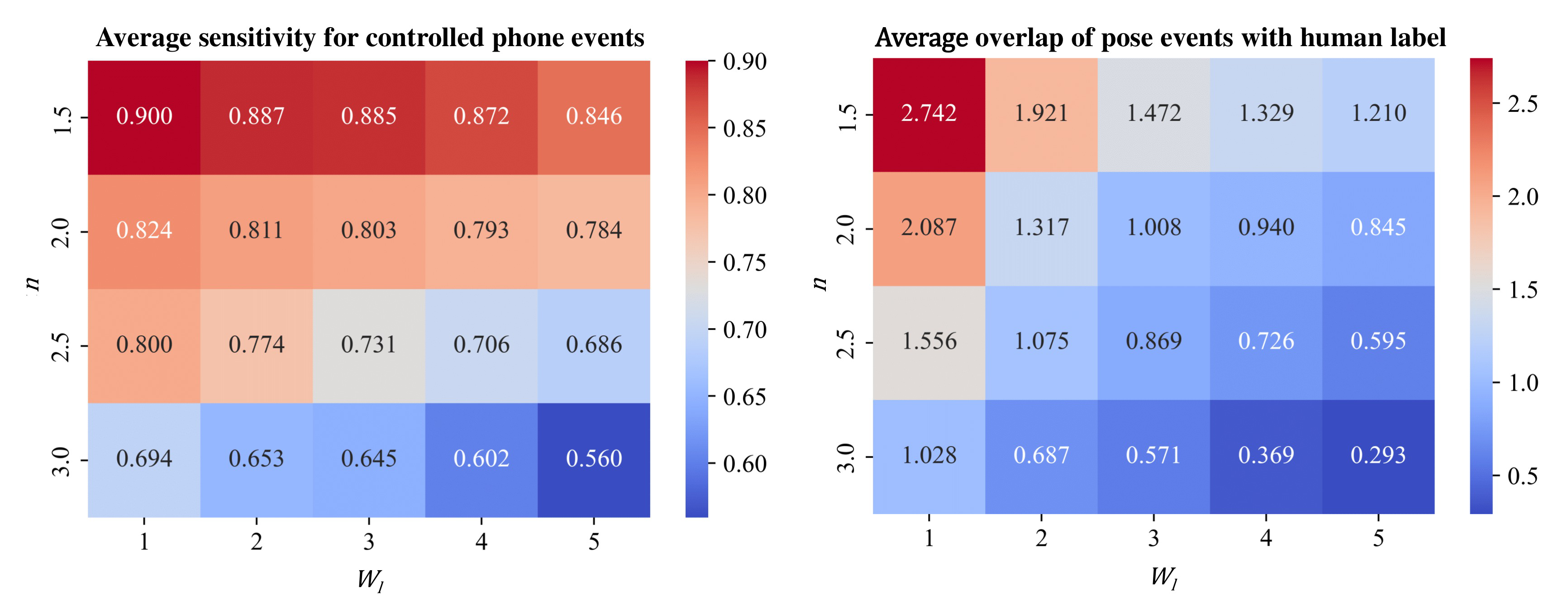

Fig 7: Average event detection sensitivity obtained by the abnormal head pose detection approach to detect mobile phone

Limitations

- Sample limited to 120 engineering students from a single university; results may not generalize to broader populations or disciplines.

- No adversarial testing or robustness evaluation against deliberate manipulation of biometric signals was performed.

- The controlled environment and supervisor presence may affect the naturalistic behavior of participants (Hawthorne effect).

- Labeling errors may remain despite semi-supervised refinement, and inter-rater reliability metrics are not reported.

- Only one type of learning content (HTML MOOC) was used; additional subjects or longer-term studies could yield different results.

- Some sensors (e.g., EEG headset) may have limitations in signal quality or susceptibility to motion artifacts despite filtering.

Open questions / follow-ons

- How robust are the observed biometric changes and distraction effects across different curricula, age groups, and cultural contexts?

- Can machine learning models trained on IMPROVE be developed to automatically detect and mitigate distraction in real-time during remote education?

- What is the longitudinal impact of phone deprivation or unrestricted phone use on learner mental health and academic performance?

- How might integration with anti-cheating or authentication mechanisms leverage biometric and behavioral data to securely certify online exam sessions?

Why it matters for bot defense

For practitioners of bot defense and CAPTCHA in remote education, the IMPROVE dataset provides a rich multimodal benchmark to study human attention and distraction patterns related to mobile phone presence. Understanding biometric signatures associated with distraction and nomophobia can inform the design of more adaptive, context-aware proctoring and challenge systems that detect genuine human engagement versus automated or inattentive behavior. Moreover, the high-fidelity synchronized signals including EEG, eye tracking, and input dynamics offer novel modalities for continuous user verification and behavioral biometrics in online learning scenarios. The dataset also opens avenues for detecting subtle lapses in focus or attempts to circumvent exam integrity through mobile phone interactions, helping to improve secure certification technologies. However, the data collection focuses on natural distraction rather than adversarial cheating attempts, so further work is needed to evaluate attack resilience.
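Keystroke dynamics are typically summarized by dwell time (how long a key is held) and flight time (the gap between releasing one key and pressing the next). A minimal sketch of extracting these from key events and scoring a session against an enrolled profile with a mean absolute z-score; the event format, the two summary features, and the scoring rule are illustrative assumptions, not a method from the paper or a production verification scheme.

```python
from typing import List, Sequence, Tuple

KeyEvent = Tuple[str, float, float]  # (key, press_s, release_s), hypothetical format


def dwell_and_flight(events: Sequence[KeyEvent]) -> Tuple[List[float], List[float]]:
    """Dwell = release - press per key; flight = next press - current release (may be negative)."""
    ordered = sorted(events, key=lambda e: e[1])
    dwell = [release - press for _, press, release in ordered]
    flight = [ordered[i + 1][1] - ordered[i][2] for i in range(len(ordered) - 1)]
    return dwell, flight


def session_summary(events: Sequence[KeyEvent]) -> Tuple[float, float]:
    """Mean dwell and mean flight time for one session."""
    dwell, flight = dwell_and_flight(events)
    if not dwell:
        raise ValueError("no key events")
    mean_flight = sum(flight) / len(flight) if flight else 0.0
    return sum(dwell) / len(dwell), mean_flight


def zscore_distance(x: Sequence[float], mean: Sequence[float], std: Sequence[float]) -> float:
    """Mean absolute z-score of session features against an enrolled profile."""
    return sum(abs(v - m) / s for v, m, s in zip(x, mean, std)) / len(x)
```

A larger distance suggests the typing rhythm deviates from the enrolled profile; any decision threshold would have to be chosen from data, and richer per-key-pair features are common in practice.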

Cite

@article{arxiv2412_14195,
  title={A multimodal dataset for understanding the impact of mobile phones on remote online virtual education},
  author={Roberto Daza and Alvaro Becerra and Ruth Cobos and Julian Fierrez and Aythami Morales},
  journal={arXiv preprint arXiv:2412.14195},
  year={2024},
  url={https://arxiv.org/abs/2412.14195}
}