In-Application Defense Against Evasive Web Scans through Behavioral Analysis

Source: arXiv:2412.07005 · Published 2024-12-09 · By Behzad Ousat, Mahshad Shariatnasab, Esteban Schafir, Farhad Shirani Chaharsooghi, Amin Kharraz

TL;DR

This paper addresses the escalating threat of automated web scanners that perform malicious activities like credential stuffing, command injection, and account hijacking at web scale, causing tens of billions of dollars in losses annually. The authors introduce WebGuard, a lightweight, in-application behavioral forensics engine designed for seamless integration into web applications without infrastructure changes. WebGuard collects rich multi-modal behavioral data (spatio-temporal event traces, browser events) with low communication overhead (under 10 KB/s) by attaching event listeners to 44 major event types, enabling near real-time detection on the order of hundreds of milliseconds.

The core novelty lies in combining this multi-modal continuous data collection with machine learning frameworks for both supervised real-time classification of incoming sessions and unsupervised offline clustering for attribution and trend discovery. Evaluation on real-world human and automated traffic datasets (including emulated scanner bots like ZAP, HLISA, Gremlins.js, Puppeteer random bots) demonstrates significant improvements in detection accuracy and speed versus prior uni-modal approaches based on mouse dynamics alone. The multi-class framework also enables differentiation between various scanner types, not just binary human-vs-bot detection. Overall, WebGuard represents a scalable, extensible approach to behavioral bot detection and attribution that can adapt to evolving automated threats while minimizing user disruption.

Key findings

- WebGuard collects 44 event types including mousemove, click, keypress, scroll with spatio-temporal and DOM metadata, enabling richer multi-modal behavioral analysis than prior single-modality methods.

- The average communication overhead of WebGuard is below 10 KB per second, with event listener activation times around 10 ms.

- The empirical evaluation used 46 human sessions (825,701 events) and 1,704 bot sessions: Gremlins.js (219,524 events), HLISA (472,665 events), ZAP (58,014 events), and Puppeteer bots (approx. 305,000 events).

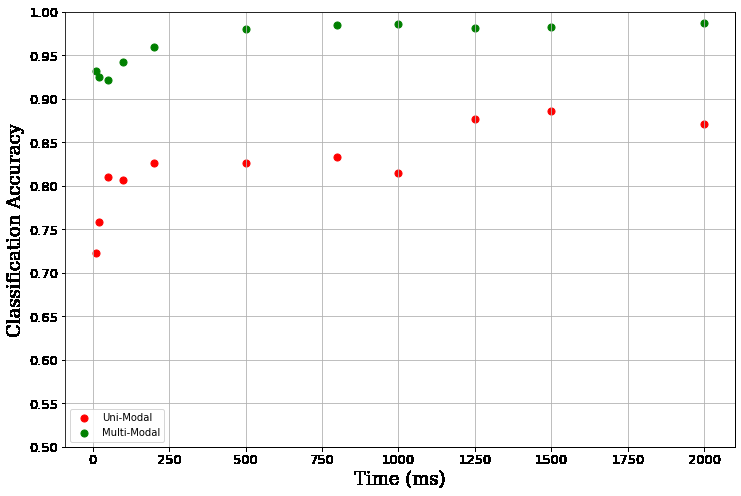

- Multi-modal data analysis significantly reduces time to detection compared to uni-modal mouse movement data, exhibiting a roughly inverse linear relationship between number of modalities and detection latency.

- LSTM-based classifiers for multi-class detection achieve higher accuracy than HMM and uni-modal baselines, enabling classification of human users versus specific scanner classes within hundreds of milliseconds.

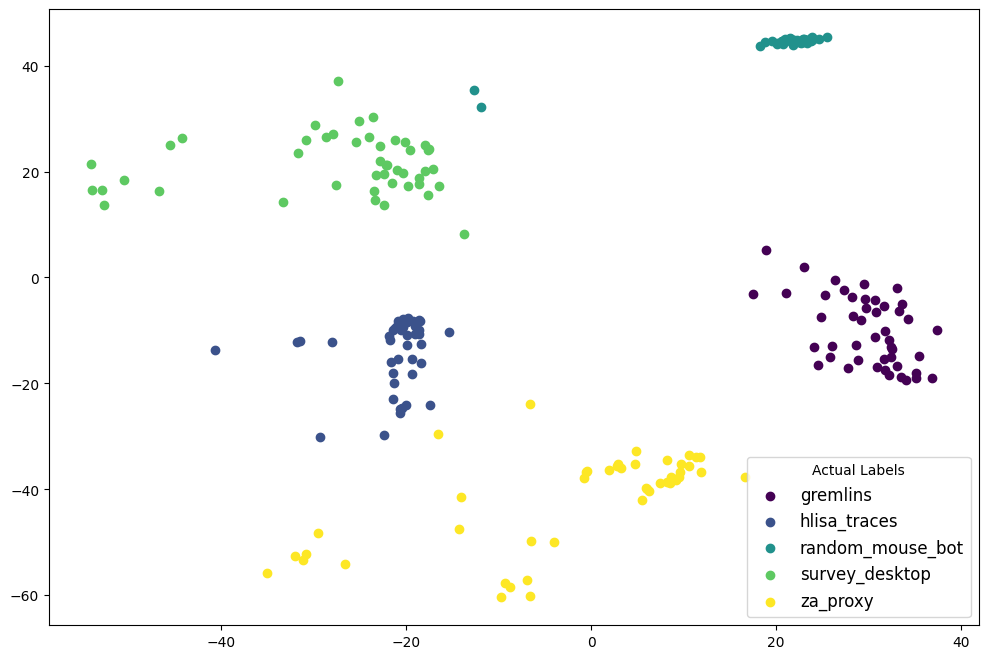

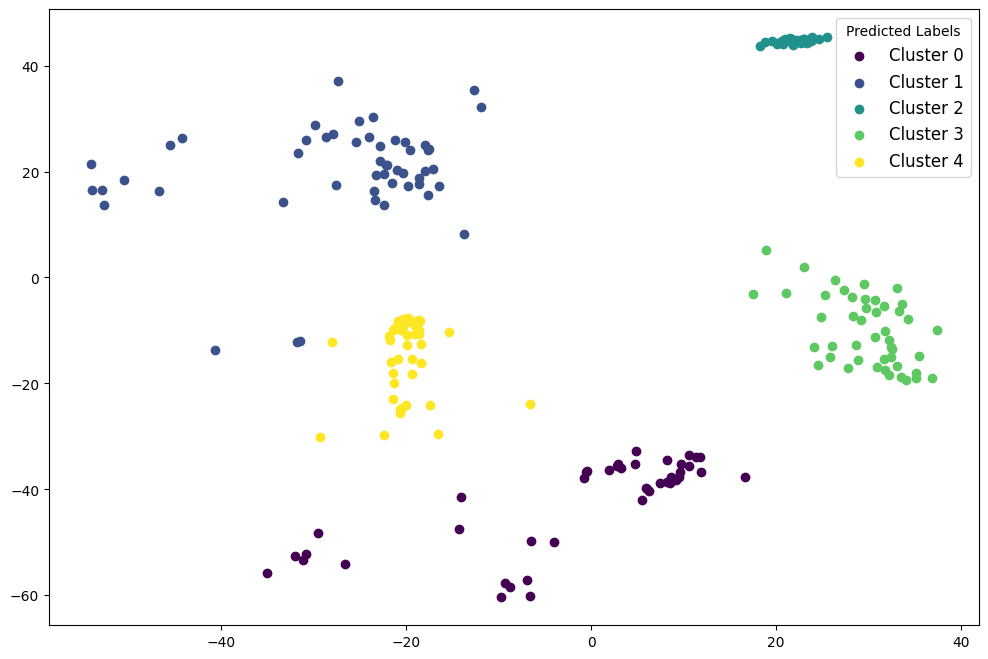

- Offline unsupervised clustering via spectral and agglomerative methods can identify new behavioral clusters, supporting ongoing attribution and discovery of emerging bot behaviors.

- WebSocket-based real-time communication is more efficient than HTTP polling, reducing network overhead during monitoring.

- The system successfully detects evasive scanners using proxies, artificial delays, simulated user interactions, and full browsers spoofing identities.

Threat model

The adversary is a determined automated web scanning agent with capabilities including simulating full browser sessions (not headless), reporting arbitrary identities, employing network proxies to evade IP-based reputation defenses, simulating authentic user interactions (mouse moves, clicks, scrolls), inserting artificial delays to evade anomaly detectors, and submitting adversarial inputs such as injections or fuzzing attempts. However, the adversary cannot directly modify or block WebGuard's JavaScript event listeners or the communication channel to the server, nor can it stealthily prevent behavioral data collection within a standard browser environment.

Methodology — deep read

Threat model and assumptions: The adversary is a malicious automated web scanner aiming to evade detection by simulating human-like browsing behavior. They can use full browsers, arbitrary reported identities, network proxies, artificial delays, and simulate interactions such as mouse movements and clicks. They attempt to bypass conventional fingerprinting and threshold-based anomaly detection.

Data collection and provenance: WebGuard is integrated via a JavaScript tag into a real-world survey website protected by password-based access to ensure human participation. The human data consists of 46 sessions of about 35 minutes each, totalling 825,701 event artifacts collected between January and February 2023. Automated agent data was collected by running multiple instances of Gremlins.js (500 sessions, 219k artifacts), HLISA crawler bots (500 sessions, 472k artifacts), OWASP ZAP scanners (104 sessions, 58k artifacts), and Puppeteer-based random mouse bots with naive and artificially delayed movements (100 and 500 sessions respectively, totalling ~305k artifacts). Each session produces a time-ordered trace of events with spatial coordinates, timestamps, DOM target elements, and event types.
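The per-session trace described above can be pictured as a list of small records. The following is a minimal Python sketch, assuming hypothetical field names (`event_type`, `t_ms`, `x`, `y`, `target`) that are not taken from the WebGuard code. The dataset figures are the ones quoted above, and the 35-minute session length is approximate, so the derived rates are rough.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    """One recorded browser event (field names are illustrative assumptions)."""
    event_type: str          # e.g. "mousemove", "click", "scroll"
    t_ms: float              # timestamp in milliseconds
    x: Optional[float]       # pointer coordinates; None for non-pointer events
    y: Optional[float]
    target: str              # DOM target, e.g. a CSS selector


def is_time_ordered(trace: list[Event]) -> bool:
    """A session trace is expected to be sorted by timestamp."""
    return all(a.t_ms <= b.t_ms for a, b in zip(trace, trace[1:]))


# Human dataset figures quoted in the text.
HUMAN_SESSIONS = 46
HUMAN_EVENTS = 825_701
SESSION_SECONDS = 35 * 60    # "about 35 minutes", so this is approximate

events_per_session = HUMAN_EVENTS / HUMAN_SESSIONS
events_per_second = events_per_session / SESSION_SECONDS

trace = [
    Event("mousemove", 0.0, 10.0, 20.0, "body"),
    Event("mousemove", 16.0, 12.0, 21.0, "body"),
    Event("click", 480.0, 12.0, 21.0, "#submit"),
]
print(is_time_ordered(trace))
print(round(events_per_session), round(events_per_second, 1))
```

Under these assumptions a human session averages roughly 18,000 events, or about 8.5 events per second.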

Architecture and algorithms: WebGuard attaches event listeners to 44 browser event types, capturing spatio-temporal event sequences rich in user interaction features. For real-time detection, a supervised learning pipeline uses Long Short-Term Memory (LSTM) recurrent neural networks trained on labeled data generated from offline clustering. The offline attribution system employs unsupervised methods such as spectral clustering and agglomerative clustering applied to Hidden Markov Model (HMM) parameters extracted from session data. Multi-class hypothesis testing methods assign new sessions to known classes or flag new behaviors.
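To make the offline attribution step more concrete, here is a minimal Python sketch under simplifying assumptions. Instead of fitting an HMM, it estimates a first-order Markov transition matrix over observed event types for each session (no hidden states), then groups sessions with a plain average-linkage agglomerative clustering. The event-type subset, the toy sessions, and all function names are hypothetical; spectral clustering is omitted. It illustrates the idea of clustering sessions by sequence-model parameters and is not the authors' pipeline.

```python
from itertools import combinations

EVENT_TYPES = ["mousemove", "click", "keypress", "scroll"]  # small illustrative subset


def transition_vector(seq):
    """Row-normalised first-order transition counts, flattened to a vector."""
    idx = {e: i for i, e in enumerate(EVENT_TYPES)}
    n = len(EVENT_TYPES)
    counts = [[0.0] * n for _ in range(n)]
    for a, b in zip(seq, seq[1:]):
        counts[idx[a]][idx[b]] += 1.0
    vec = []
    for row in counts:
        total = sum(row)
        vec.extend(c / total if total else 0.0 for c in row)
    return vec


def distance(u, v):
    """Euclidean distance between two parameter vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v)) ** 0.5


def agglomerative(vectors, k):
    """Average-linkage agglomerative clustering, merging until k clusters remain."""
    clusters = [[i] for i in range(len(vectors))]

    def linkage(c1, c2):
        total = sum(distance(vectors[i], vectors[j]) for i in c1 for j in c2)
        return total / (len(c1) * len(c2))

    while len(clusters) > k:
        a, b = min(combinations(range(len(clusters)), 2),
                   key=lambda p: linkage(clusters[p[0]], clusters[p[1]]))
        clusters[a] = clusters[a] + clusters[b]   # a < b, so deleting b is safe
        del clusters[b]
    return [sorted(c) for c in clusters]


# Toy sessions: two mouse-heavy traces and two scripted click/keypress traces.
sessions = [
    ["mousemove"] * 8 + ["click"] + ["mousemove"] * 5 + ["scroll"] * 2,
    ["mousemove"] * 6 + ["click"] + ["mousemove"] * 4 + ["scroll"] * 3,
    ["click", "keypress"] * 8,
    ["click", "keypress"] * 6 + ["click", "click"],
]
clusters = agglomerative([transition_vector(s) for s in sessions], k=2)
print(clusters)
```

The cluster indices produced this way could then serve as labels for a supervised model, which is the role the text assigns to offline clustering.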

Training regime: The paper details supervised model training using the annotated datasets derived from offline clustering, though specific hyperparameters, epochs, batch sizes, or hardware details are not fully specified in the excerpt. LSTMs are chosen based on their memory capacity for sequential data. The unsupervised clustering runs on aggregated session statistics over multiple days.

Evaluation protocol: The system is evaluated on multi-class classification accuracy, detection latency (time-to-detection within hundreds of milliseconds), and communication overhead below 10 KB/s. Comparisons between uni-modal (mouse movement only) and multi-modal data modalities demonstrate improved accuracy and speed. Different adversarial agent classes are tested, showing the system's capability to distinguish benign humans from evasive automated agents. Communication protocols (HTTP polling vs WebSocket) are analyzed for overhead.
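Time to detection can be illustrated with a simple sequential test. The Python sketch below swaps the paper's LSTM for a much simpler stand-in: each class is modelled as an independent distribution over event types, per-class log-likelihoods are accumulated event by event, and a decision is made once the leading class is ahead of the runner-up by a fixed margin. The class models, the probabilities, and the threshold are made-up values for illustration only.

```python
import math

# Hypothetical per-class event-type probabilities (made-up numbers).
CLASS_MODELS = {
    "human": {"mousemove": 0.80, "click": 0.05, "keypress": 0.10, "scroll": 0.05},
    "scanner": {"mousemove": 0.05, "click": 0.45, "keypress": 0.45, "scroll": 0.05},
}


def detect(events, threshold=math.log(100)):
    """events: iterable of (t_ms, event_type) pairs in time order.

    Accumulates per-class log-likelihoods and stops once the best class
    leads the second-best by `threshold` nats. Returns (label, t_ms), or
    (None, None) if the trace ends before a decision is reached.
    """
    scores = {c: 0.0 for c in CLASS_MODELS}
    for t_ms, etype in events:
        for c, model in CLASS_MODELS.items():
            scores[c] += math.log(model[etype])
        ranked = sorted(scores, key=scores.get, reverse=True)
        if scores[ranked[0]] - scores[ranked[1]] >= threshold:
            return ranked[0], t_ms
    return None, None


# A scripted trace with one event every 50 ms.
trace = [(i * 50.0, e) for i, e in enumerate(["click", "keypress"] * 5)]
label, latency_ms = detect(trace)
print(label, latency_ms)
```

The timestamp returned at the stopping point is the detection latency for that session; averaging it over labelled sessions gives a time-to-detection figure comparable across uni-modal and multi-modal inputs.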

Reproducibility: The WebGuard codebase, including the client-side JavaScript engine and the server-side ML framework, is open-sourced at https://github.com/multimodalforensics/webguard. The data is partially private due to IRB restrictions but includes interaction traces collected under IRB approval with privacy safeguards. Some details of the LSTM architecture and clustering pipelines are described, but more would be needed for full reproduction.

End-to-end example: When a visitor loads the protected survey page, the WebGuard script attaches listeners for the 44 event types. Each event (e.g., a mousemove with coordinates and a timestamp) is streamed over WebSocket with <10 KB/s overhead to a backend server. The server aggregates event sequences for the session, extracts features such as velocity, direction, event types, and temporal deltas, and passes these to the trained LSTM model. Within hundreds of milliseconds, the model classifies the session as human or a specific class of automated bot, enabling immediate defense actions or further attribution logging. Over days, offline clustering aggregates many such sessions, discovers emerging bot behaviors, and produces updated labels for retraining the real-time detector.
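The feature-extraction step in this walk-through can be sketched as follows. This is a minimal Python example, assuming pointer events arrive as `(t_ms, x, y)` tuples; the function name, the tuple layout, and the units (pixels per millisecond, radians) are illustrative choices rather than details confirmed by the source.

```python
import math


def motion_features(points):
    """points: list of (t_ms, x, y) tuples from consecutive pointer events.

    Returns one dict per consecutive pair with the time delta (ms),
    the speed (px/ms), and the direction of travel (radians, via atan2).
    """
    feats = []
    for (t0, x0, y0), (t1, x1, y1) in zip(points, points[1:]):
        dt = t1 - t0
        dx, dy = x1 - x0, y1 - y0
        dist = math.hypot(dx, dy)
        feats.append({
            "dt_ms": dt,
            "speed": dist / dt if dt > 0 else 0.0,  # guard against equal timestamps
            "direction": math.atan2(dy, dx),
        })
    return feats


points = [(0.0, 0.0, 0.0), (10.0, 3.0, 4.0), (30.0, 3.0, 14.0)]
features = motion_features(points)
for f in features:
    print(f)
```

A sequence of such per-step feature dicts, together with the event types, is the kind of input a sequence model would consume.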

While the methodology is comprehensive regarding event collection and analysis, explicit hyperparameter settings, training epochs, and statistical significance tests are not fully detailed in the source text provided. The framework is positioned as extensible to incorporate new behavioral modalities and evolving adversarial strategies.

Technical innovations

- A multi-modal behavioral forensics engine (WebGuard) that collects 44 types of browser events with spatio-temporal and DOM metadata to enable richer behavioral signatures than prior mouse-dynamics-only methods.

- Combines supervised LSTM-based real-time multi-class classification with unsupervised spectral and agglomerative clustering on HMM parameters for offline attribution and trend discovery.

- Demonstrates a practical, lightweight integration via a JavaScript tag with WebSocket communication to achieve detection latencies under one second and minimal network overhead (<10 KB/s).

- Develops a multi-class hypothesis testing framework that simultaneously detects bots and distinguishes among multiple automated scanner types in real time.

- Shows theoretical and empirical evidence that adding behavioral modalities linearly reduces detection latency, improving robustness against evasive, generative ML-based bot attacks.
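The latency claim in the last item can be motivated by a standard sequential-testing argument. The sketch below is an illustration under stated assumptions, not the paper's derivation: there are $m$ modalities, modality $i$ produces observations $x_{i,t}$ that are i.i.d. over time and independent across modalities, with density $p_i$ for a bot and $q_i$ for a human, and a session is flagged once the cumulative log-likelihood ratio $\Lambda_n$ exceeds $\log(1/\alpha)$ for a small target false-alarm rate $\alpha$.

$$
\Lambda_n = \sum_{t=1}^{n} \sum_{i=1}^{m} \log \frac{p_i(x_{i,t})}{q_i(x_{i,t})},
\qquad
\mathbb{E}_{P}[\Lambda_n] = n \sum_{i=1}^{m} D(P_i \,\|\, Q_i)
$$

$$
\mathbb{E}_{P}[N] \approx \frac{\log(1/\alpha)}{\sum_{i=1}^{m} D(P_i \,\|\, Q_i)}
= \frac{\log(1/\alpha)}{m\,D}
\quad \text{when } D(P_i \,\|\, Q_i) = D \text{ for all } i
$$

Here $N$ is the number of observation steps until detection and $D(\cdot\,\|\,\cdot)$ is the Kullback-Leibler divergence. Because divergences of independent modalities add, the expected time to detection under these assumptions falls in inverse proportion to the number of equally informative modalities.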

Datasets

- Human Survey Data — 46 sessions, 825,701 events — collected from live survey website under IRB protocol

- Gremlins.js Bot Data — 500 sessions, 219,524 events — emulated with Gremlins.js UI testing library

- HLISA Crawler Bot Data — 500 sessions, 472,665 events — using Human-Like Interaction Selenium API

- OWASP ZAP Scanner Data — 104 sessions, 58,014 events — running ZAP automated security scanner

- Random Bots with Puppeteer — 600 sessions total (100 naive, 500 delayed), ~305,464 events — custom bots implemented with Puppeteer

Baselines vs proposed

- Uni-modal (mouse movement only) HMM detection vs multi-modal LSTM detection: the multi-modal LSTM is reported as more accurate and faster, with detection latency under 1 second. Specific accuracy and latency figures for either approach are not given in this summary.

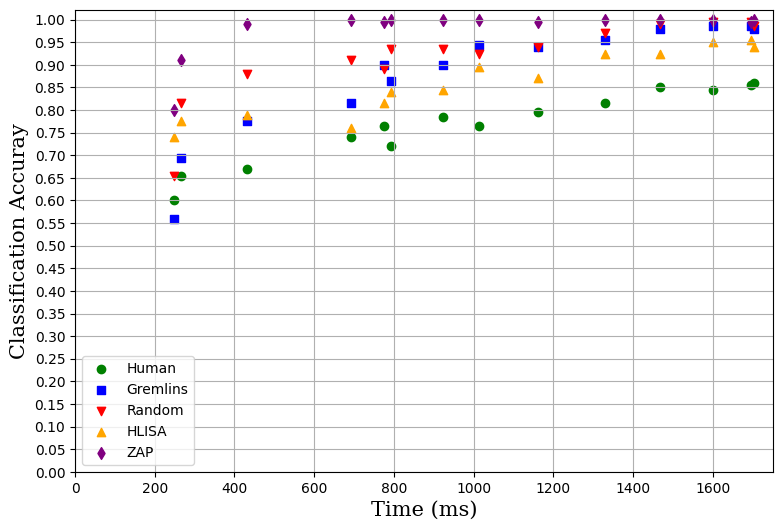

- HMM-based classification baseline: performs well on uni-modal input but inferior when using multi-modal high-dimensional data compared to LSTM (Fig 5 and 6)

- HTTP Polling Communication Overhead: higher due to repetitive header exchanges vs WebSocket: average 46 bytes per packet, reducing overhead to <10 KB/s
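The overhead figures in the last item allow a quick back-of-the-envelope check. This Python sketch assumes one event per WebSocket packet and 1 KB = 1000 bytes; neither assumption is stated in the source, and the 60 events/s rate is a hypothetical pointer-event rate chosen for illustration.

```python
AVG_PACKET_BYTES = 46          # average WebSocket packet size quoted above
BUDGET_BYTES_PER_S = 10_000    # "<10 KB/s", taking 1 KB = 1000 bytes (assumption)


def bandwidth_bytes_per_s(events_per_s, bytes_per_event=AVG_PACKET_BYTES):
    """Bandwidth used if every event is sent as one average-sized packet."""
    return events_per_s * bytes_per_event


# Highest sustained event rate that stays within the budget.
max_events_per_s = BUDGET_BYTES_PER_S // AVG_PACKET_BYTES

print(max_events_per_s)
print(bandwidth_bytes_per_s(60))   # hypothetical 60 events/s pointer stream
```

Under these assumptions the budget accommodates a little over 200 events per second, so a pointer stream at typical display refresh rates would use well under the stated ceiling.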

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2412.07005.

Fig 1: Sample Trace of the Recorded Events based on a

Fig 2 (page 5).

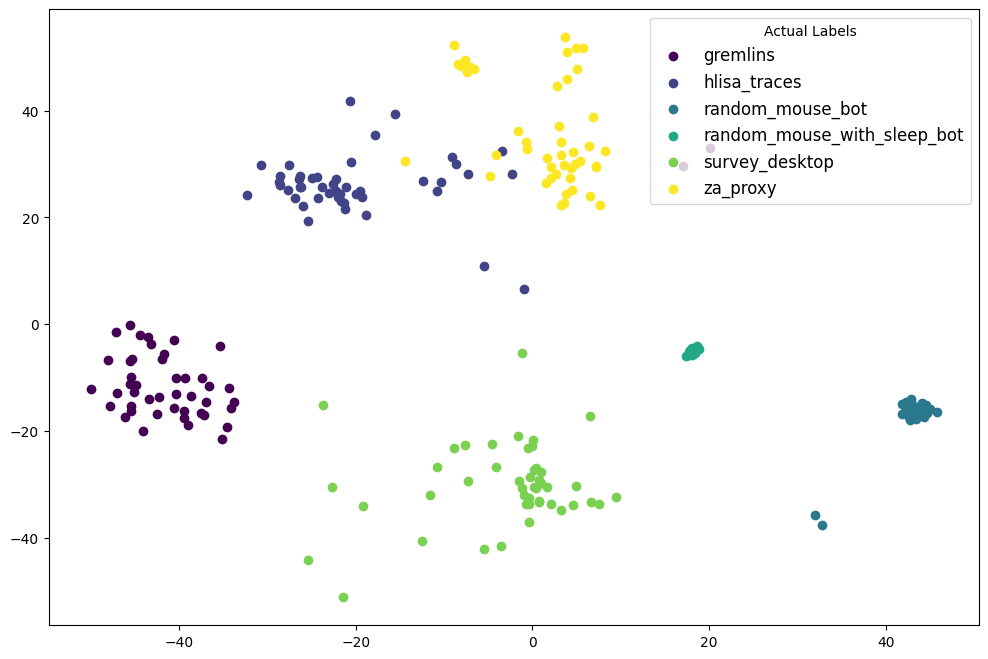

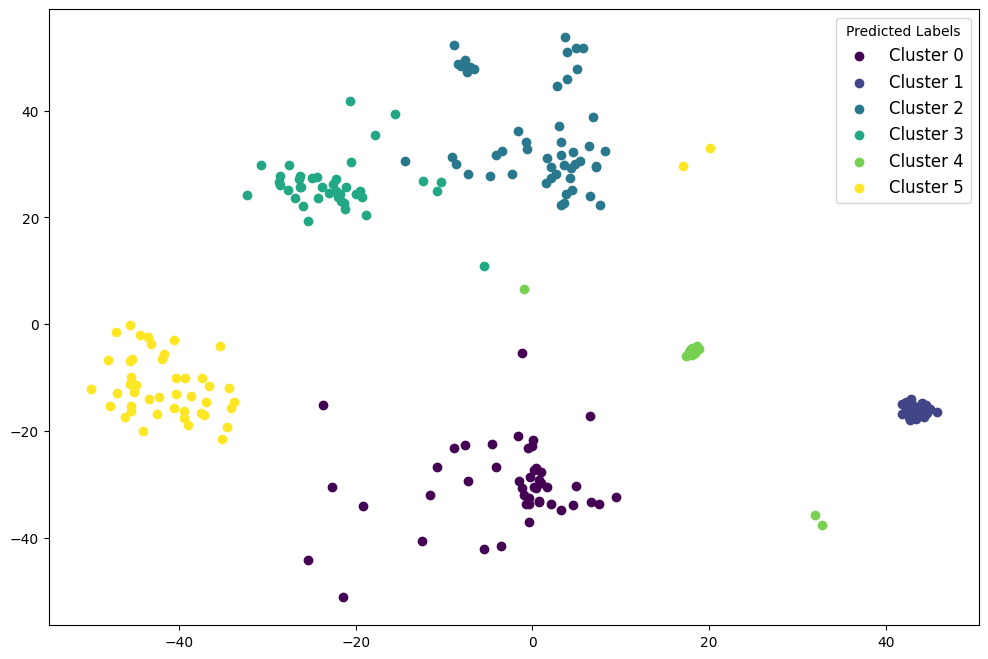

Fig 2: Left: the actual labels of the input traces. Right: the output estimated labels using Algorithm 1.

Fig 3: Detecting New Behavioral Trends via Clustering. Left: actual labels. Right: output estimated labels.

Fig 4: LSTM Accuracy of Classifying Different Agents

Fig 5: LSTM Classification Uni-Modal vs Multi-Modal

Fig 7 (page 10).

Fig 8 (page 11).

Limitations

- Exact hyperparameters, training epochs, and hardware used for machine learning training are not fully detailed, limiting reproducibility of model training.

- The human dataset is relatively small (46 sessions), which may limit generalizability of detection results across broader populations or demographics.

- No explicit adversarial ML robustness evaluation is shown beyond theoretical claims; real-world evasive attacks using latest generative models remain a challenge.

- The offline attribution pipeline relies on unsupervised clustering but validation of cluster interpretability or correspondence to real bot families is limited.

- Evaluation covers specific emulated scanners and bots; novel or sophisticated future web scanners not covered may behave differently.

- The paper does not present user experience impact evaluations beyond communication overhead; real-world deployment effects on latency or usability are not quantified.

Open questions / follow-ons

- How does WebGuard perform against next-generation generative adversarial networks and reinforcement learning-driven bots that optimize for behavioral mimicry?

- Can the machine learning models be continuously adapted or fine-tuned online to deal with newly emerging bot behaviors without manual relabeling?

- What is the impact of WebGuard deployment on real user latency, browser resource consumption, and overall website performance at massive scale?

- How might integrating additional behavioral modalities (e.g., keystroke dynamics, touch pressure on mobile) further enhance detection accuracy or robustness?

Why it matters for bot defense

The paper’s approach is highly relevant to bot-defense and CAPTCHA practitioners seeking alternatives to traditional challenge-response mechanisms, which suffer from usability issues and growing vulnerability to ML-based solvers. WebGuard provides a framework for continuous, low-overhead, real-time behavioral monitoring that can augment or partially replace CAPTCHAs by detecting and attributing automated agents based on richer multi-modal behavioral signatures rather than static puzzles.

Moreover, the multi-class detection and offline attribution capabilities offer insights into the evolving bot ecosystem and allow differentiated mitigation beyond binary human/bot decisions. CAPTCHA engineers could integrate such behavioral telemetry as a complementary risk signal feeding adaptive challenge issuance, potentially improving accuracy and reducing user friction while maintaining resilience against increasingly sophisticated evasion techniques.

Cite

@article{arxiv2412_07005,
  title={In-Application Defense Against Evasive Web Scans through Behavioral Analysis},
  author={Behzad Ousat and Mahshad Shariatnasab and Esteban Schafir and Farhad Shirani Chaharsooghi and Amin Kharraz},
  journal={arXiv preprint arXiv:2412.07005},
  year={2024},
  url={https://arxiv.org/abs/2412.07005}
}