Towards Understanding Emotions for Engaged Mental Health Conversations

Source: arXiv:2406.11135 · Published 2024-06-17 · By Kellie Yu Hui Sim, Kohleen Tijing Fortuno, Kenny Tsu Wei Choo

TL;DR

This paper addresses the critical challenge of timely and effective emotional support in text-based mental health conversations, particularly for youth who increasingly rely on text messaging platforms. The authors propose a novel passive emotion-sensing system that combines keystroke dynamics (KD)—the behavioral biometrics of typing patterns—with sentiment analysis derived from the text content. Unlike previous work that predominantly focused on either physiological signals or text, this fusion-based approach aims to unobtrusively capture emotional states in real time within synchronous chat interactions common to mental health helplines. Through an in-person user study with 31 participants exposed to emotionally evocative videos, the authors collected synchronized keystroke and message data to train machine learning models predicting emotional valence, arousal, and discrete categories based on Ekman’s emotions. The findings demonstrate that while sentiment analysis via LLM-based text models yields strong performance, integrating KD features provides modest but consistent improvements, especially for high-arousal emotions such as anger, surprise, and fear where fusion models achieved F1 scores up to 0.985. The paper discusses design considerations for how this emotion-aware technology could augment human responders in mental health platforms without replacing human empathy, alongside challenges around privacy, personalized modeling, and the inclusion of modern text inputs.

Key findings

- Fusion models combining text-based sentiment and keystroke dynamics achieved highest F1 scores for anger (0.985), surprise (0.967), and fear (0.956), outperforming single modality models.

- Categorical emotion classification outperformed dimensional valence-arousal models overall, with lowest categorical emotion F1 = 0.756 vs dimensional arousal F1 = 0.746.

- Keystroke-only models achieved an average precision of 0.796 and F1 score of 0.791 using random forest classifiers.

- Fusion models showed small but consistent F1 score improvements over text-only models in happiness, surprise, fear, anger, and valence classification (mean ΔF1 = 0.007).

- The chat application prototype successfully integrated a real-time KD-only model capable of predicting valence/arousal from typing features immediately after message send.

- Manual annotation reliability yielded Krippendorff's alpha of 0.75 for valence, 0.56 for arousal, and an average of 0.76 for categorical emotions, indicating moderate agreement.

- The study excluded participants with clinical diagnoses and excluded modern text input features (auto-correct, emojis) to control the data; the authors acknowledge that this limits generalizability.

- Early fusion of KD and LLM-text features demonstrated feasibility but suggested KD’s incremental contribution requires further enhancement via deeper features or modeling.
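
The inter-annotator reliability figures above are Krippendorff's alpha values. As a rough illustration of how such a figure is computed, here is a minimal sketch for two annotators with no missing labels. It assumes the nominal distance metric (labels either match or they do not); the summary does not say which metric the authors used, and the function name is hypothetical.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(labels_a, labels_b):
    """Krippendorff's alpha for two annotators, nominal metric, no missing data.

    Assumption: the nominal metric is an illustrative choice, not a detail
    confirmed by the source.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of units")

    # Coincidence matrix: each unit contributes both ordered pairs of its two labels.
    coincidences = Counter()
    for a, b in zip(labels_a, labels_b):
        coincidences[(a, b)] += 1
        coincidences[(b, a)] += 1

    # Marginal totals n_c and the grand total n (2 * number of units).
    totals = Counter()
    for (c, _k), count in coincidences.items():
        totals[c] += count
    n = sum(totals.values())

    # Sums over all ordered pairs of *different* labels.
    observed = sum(count for (c, k), count in coincidences.items() if c != k)
    expected = sum(totals[c] * totals[k] for c, k in permutations(totals, 2))
    if expected == 0:
        raise ValueError("alpha is undefined when only one label value is used")

    # alpha = 1 - D_o / D_e, simplified for the nominal metric.
    return 1.0 - (n - 1) * observed / expected
```

For example, `krippendorff_alpha_nominal([1, 0, -1, 1], [1, 0, -1, 1])` gives 1.0 for perfect agreement, and values fall as disagreements accumulate.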

Threat model

Not a security-focused paper; the study assumes honest participants engaging in mental health conversations with no adversarial manipulation or evasion of emotion detection. The system is designed to unobtrusively sense emotional states from keystroke and text patterns without considering adversarial attacks or privacy breaches in this initial work.

Methodology — deep read

1. Threat Model and Assumptions: No adversary is explicitly defined. This is primarily a study of unobtrusive emotion detection in text-based mental health conversations, with no adversarial manipulation assumed. The system assumes honest user engagement and valid keystroke data.

2. Data Collection: Thirty-one participants (18 female) aged 18-50, fluent in English and without clinical mental health diagnoses, engaged in synchronous chats via a purpose-built desktop messaging app. Each participant viewed three emotionally evocative short videos, drawn from a pool of eight videos from the OpenLAV dataset and designed to span the valence and arousal dimensions. After each video, they conversed with a research team moderator about their emotional response. All keystroke timings and typed messages were logged. Two annotators manually labeled each message for valence (-1/0/1) and arousal (-1/0/1), plus up to 3 categorical emotions from Ekman's 7 basic emotions + neutral, and Krippendorff's alpha was computed to assess reliability.

3. Features and Models: Keystroke dynamics features include timing intervals between keypress/release events, backspace frequency, and enter key usage, used to infer corrections and pacing. Content features cover punctuation, capitalization, and sentence structure. For text analysis, GPT-4 Turbo (gpt-4-0125-preview) was used to generate valence, arousal, and emotion labels from entire conversation logs. The machine learning models were Random Forest (RF), Support Vector Machine, and multinomial logistic regression classifiers, with RF achieving the best performance. Fusion models took KD features together with LLM-generated text features as input.

4. Training Regime: The experimental setup is not fully detailed. Models were presumably trained on the 31-participant dataset, but the use of cross-validation or held-out splits is not explicitly stated. Hyperparameters and random seeds were not specified, which leaves some uncertainty about reproducibility.

5. Evaluation Protocol: Models were evaluated on precision, recall, F1 score, and accuracy per emotion class and per valence/arousal dimension. Text-only, KD-only, and fusion feature models were compared. Inter-annotator reliability was measured to validate the ground truth labels. A prototype integration with real-time KD-only emotion prediction was demonstrated in the chat application.

6. Reproducibility: Code or data releases are not mentioned. The data was collected under controlled, consented conditions but may not be publicly available. Use of a proprietary LLM (GPT-4 Turbo) can limit replication. No frozen weights are described.

Overall, the study provides a proof-of-concept experimental framework combining keystroke behavioral biometrics and large language model sentiment analysis for emotion detection in engaged mental health chat conversations, but more detail is needed for full reproducibility.

Concrete end-to-end example: A participant watches an emotionally loaded video and then chats with the moderator about their feelings while the app logs every keypress with millisecond timing. The typed messages are annotated manually and analyzed by GPT-4 for sentiment labels. The timing patterns and text features are extracted and fed into random forest classifiers to predict discrete emotions and valence/arousal scores. Fusion of these modalities marginally improves classification, especially for strong emotions like anger and fear.
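
To make the keystroke features described above more concrete, the following is a minimal sketch of per-message feature extraction. The `KeyEvent` record, the feature names, and the key labels `"Backspace"` and `"Enter"` are all assumptions made for illustration; the paper's actual logging format and feature set are not given in this summary.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class KeyEvent:
    """One logged keystroke (hypothetical record layout)."""
    key: str
    press_ms: float
    release_ms: float


def extract_kd_features(events):
    """Summarise one message's keystrokes into a small feature dictionary."""
    if not events:
        raise ValueError("need at least one key event")
    ordered = sorted(events, key=lambda e: e.press_ms)

    # Dwell (hold) time: how long each key stays pressed.
    dwell = [e.release_ms - e.press_ms for e in ordered]
    # Flight time: gap between releasing one key and pressing the next.
    flight = [nxt.press_ms - cur.release_ms for cur, nxt in zip(ordered, ordered[1:])]

    return {
        "mean_dwell_ms": mean(dwell),
        "mean_flight_ms": mean(flight) if flight else 0.0,
        # Share of keystrokes that are corrections.
        "backspace_rate": sum(e.key == "Backspace" for e in ordered) / len(ordered),
        # Enter presses as a coarse pacing signal.
        "enter_count": sum(e.key == "Enter" for e in ordered),
    }


sample = [
    KeyEvent("h", 0, 80),
    KeyEvent("i", 200, 260),
    KeyEvent("Backspace", 500, 560),
    KeyEvent("Enter", 900, 1000),
]
features = extract_kd_features(sample)
```

Aggregating per message keeps the features aligned with the per-message annotations, though a real system would likely add variance and percentile statistics as well.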

Technical innovations

- Novel fusion of keystroke dynamics with LLM-derived sentiment features to improve emotion detection in synchronous text chat contexts.

- Use of GPT-4 Turbo to generate nuanced valence, arousal, and discrete emotion labels on short user messages to complement behavioral biometrics.

- Development of an unobtrusive real-time emotion sensing prototype embedded in a mental health messaging application using KD-only models.

- Application of combined dimensional (valence/arousal) and categorical (Ekman) emotion models for richer affective state classification in mental health support chats.
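
The early-fusion idea in the list above can be sketched as plain feature concatenation: numeric KD features followed by encoded LLM labels. This is an illustrative sketch only. The KD feature names, the emotion label set (including the choice of contempt as the seventh Ekman emotion), and the one-hot encoding are assumptions, not details confirmed by the source.

```python
# Assumed label vocabularies; the source only states valence/arousal in {-1, 0, 1}
# and "Ekman's 7 basic emotions + neutral".
LEVELS = (-1, 0, 1)
EMOTIONS = ("anger", "contempt", "disgust", "fear",
            "happiness", "sadness", "surprise", "neutral")
# Hypothetical KD feature names, fixed so every vector has the same column order.
KD_KEYS = ("mean_dwell_ms", "mean_flight_ms", "backspace_rate", "enter_count")


def one_hot(value, vocabulary):
    """Encode a single label as a 0/1 vector over the vocabulary."""
    if value not in vocabulary:
        raise ValueError(f"unknown label: {value!r}")
    return [1.0 if value == item else 0.0 for item in vocabulary]


def multi_hot(values, vocabulary):
    """Encode a set of labels (up to three emotions per message) as a 0/1 vector."""
    unknown = set(values) - set(vocabulary)
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")
    return [1.0 if item in values else 0.0 for item in vocabulary]


def fuse_features(kd, llm_valence, llm_arousal, llm_emotions):
    """Early fusion: concatenate KD features with encoded LLM labels."""
    kd_part = [float(kd[key]) for key in KD_KEYS]
    return (kd_part
            + one_hot(llm_valence, LEVELS)
            + one_hot(llm_arousal, LEVELS)
            + multi_hot(llm_emotions, EMOTIONS))


vector = fuse_features(
    {"mean_dwell_ms": 75, "mean_flight_ms": 233.3, "backspace_rate": 0.25, "enter_count": 1},
    llm_valence=-1,
    llm_arousal=1,
    llm_emotions=["anger", "fear"],
)
```

Vectors built this way could then be passed to any standard classifier, such as the random forest the summary reports as the best performer.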

Datasets

- OpenLAV — 8 short videos (42-62s each) — public dataset for affective video stimuli

- In-house keystroke and chat logs — 31 participants, synchronous text conversations — controlled study data (not public)

Baselines vs proposed

- Text-only model (LLM sentiment): F1 score on anger = approx. 0.978 vs Fusion model: 0.985

- Text-only model (LLM sentiment): F1 score on surprise = approx. 0.960 vs Fusion model: 0.967

- Text-only model: valence prediction F1 approx. 0.653 vs Fusion model: 0.660

- Keystroke-only model: average precision = 0.796, F1 = 0.791 using random forest
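
As a quick check on the comparisons above, the gain from fusion can be computed directly from the listed F1 scores. The text-only values are approximate in this summary, so the deltas are rounded to three decimals; the function names are hypothetical.

```python
# (text-only F1, fusion F1) as listed in the comparison above.
REPORTED_F1 = {
    "anger": (0.978, 0.985),
    "surprise": (0.960, 0.967),
    "valence": (0.653, 0.660),
}


def f1_deltas(pairs):
    """Fusion minus text-only F1 for each task, rounded to three decimals."""
    return {name: round(fusion - text_only, 3)
            for name, (text_only, fusion) in pairs.items()}


def mean_delta(pairs):
    """Average F1 gain across the listed tasks."""
    deltas = f1_deltas(pairs)
    return round(sum(deltas.values()) / len(deltas), 3)


deltas = f1_deltas(REPORTED_F1)
average_gain = mean_delta(REPORTED_F1)
```

Each of the three listed tasks shows a gain of 0.007, which matches the mean ΔF1 of 0.007 reported in the key findings, although that mean covers five tasks rather than the three listed here.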

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2406.11135.

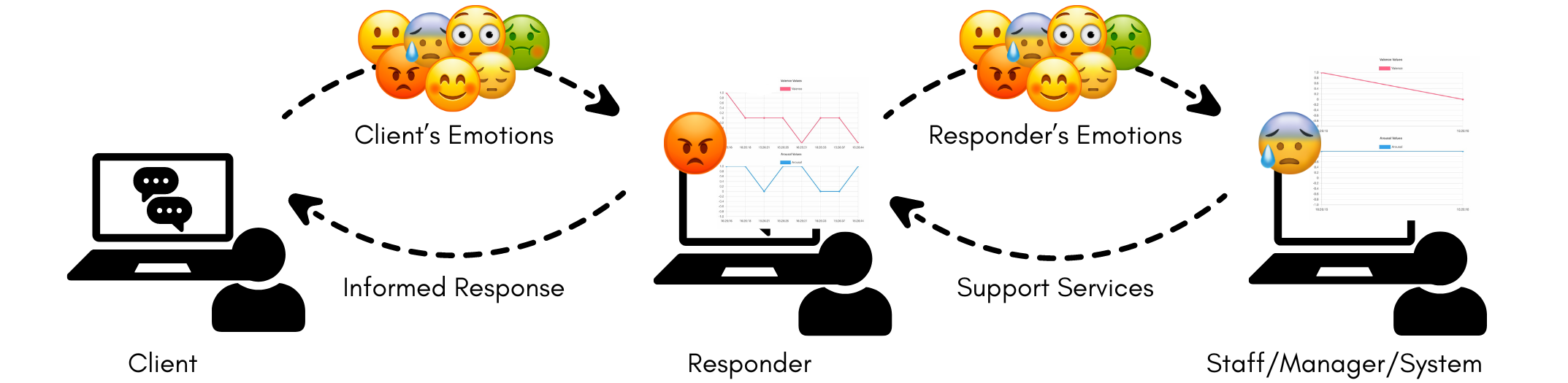

Fig 1: Concept of an emotion-aware platform for mental health conversations ©Kellie Yu Hui Sim; Kohleen Tijing Fortuno;

Limitations

- Small sample size (31 participants) limits generalizability and ability to build personalized models.

- Exclusion of participants with clinical mental health conditions reduces applicability to target clinical populations.

- Study performed in controlled desktop setting without modern texting features like auto-correct, emojis, or mobile device inputs.

- Manual annotation disagreement especially on arousal dimension implies noisy ground truth labels.

- Proprietary LLM (GPT-4 Turbo) usage and lack of code/data release hinder reproducibility.

- KD contribution to emotion detection showed only minimal improvements over text-only models, indicating need for richer KD features or deeper modeling.

- No adversarial evaluation or deployment in real crisis helpline environments yet.

Open questions / follow-ons

- How can keystroke dynamics feature extraction and modeling be improved to meaningfully boost emotion detection beyond text-only analysis?

- What is the impact of incorporating modern text input modalities (auto-correct, emojis, mobile typing) on KD-based emotion sensing?

- How can additional physiological measures (facial expressions, heart rate) be integrated with KD and text to form more robust multi-modal emotion detection in mental health settings?

- What are the best practices for presenting emotion-sensing outputs to human responders to maximize support without causing alert fatigue or overreliance on automated suggestions?

Why it matters for bot defense

For bot-defense and CAPTCHA practitioners, this paper exemplifies how keystroke behavioral biometrics can extend beyond authentication to infer affective states in human-computer interactions, emphasizing the subtle information embedded in typing dynamics. While the KD features here provide only incremental gains over text analysis for emotion detection, the approach of fusing multiple passive signals points toward robust human state sensing without intrusive sensors. For CAPTCHAs, adapting keystroke dynamics to detect emotional or engagement signals could enrich user interaction models or help distinguish bots, which exert minimal cognitive or emotional load. However, the paper also underscores the domain-specific challenges of deploying KD models reliably: variability, privacy concerns, and the need for multi-modal fusion, all of which CAPTCHA systems should heed when considering behavioral biometrics for nuanced user state inference. Finally, the privacy discussion carries over directly: CAPTCHA and bot-defense applications must carefully balance behavioral data collection, real-time inference, and user consent.

Cite

@article{arxiv2406_11135,
  title={Towards Understanding Emotions for Engaged Mental Health Conversations},
  author={Kellie Yu Hui Sim and Kohleen Tijing Fortuno and Kenny Tsu Wei Choo},
  journal={arXiv preprint arXiv:2406.11135},
  year={2024},
  url={https://arxiv.org/abs/2406.11135}
}