Dazed & Confused: A Large-Scale Real-World User Study of reCAPTCHAv2

Source: arXiv:2311.10911 · Published 2023-11-17 · By Andrew Searles, Renascence Tarafder Prapty, Gene Tsudik

TL;DR

This paper presents a large-scale, real-world user study of Google's reCAPTCHAv2, conducted over 13 months with more than 3,600 distinct university users who encountered it in live account creation and password recovery web services. Rather than recruiting volunteers, the study observes participants who were unaware of it while they used a real service, which strengthens ecological validity. It combines quantitative timing data with qualitative feedback from a survey that included System Usability Scale (SUS) ratings. Key findings include faster checkbox solving as users gain experience, significant differences in solving times between services (account creation vs password recovery), and correlations between solving performance and both education level and academic major. Users consistently perceive image puzzles as annoying and checkbox challenges as easy, with SUS scores rating image tasks “OK” and checkbox tasks “good.” A cost and security analysis finds that reCAPTCHAv2 provides negligible security benefit while consuming enormous amounts of human labor and energy and raising privacy concerns. The authors conclude that reCAPTCHAv2 is obsolete and recommend deprecating it.

Key findings

- Checkbox challenges solved 35% faster on the 10th attempt compared to the 1st (Tables 7 and 8).

- Account creation users took about 17% longer to solve checkbox captchas than password recovery users (mean 1.96s vs 1.67s, p = 1.1e-115) (Tables 4, 5, 6).

- Mean solving time for behavioral (checkbox-only) captchas was 1.85 seconds, while image challenges took 10.3 seconds on average, about 5.6 times as long, i.e. 557% of the checkbox time (Table 3).

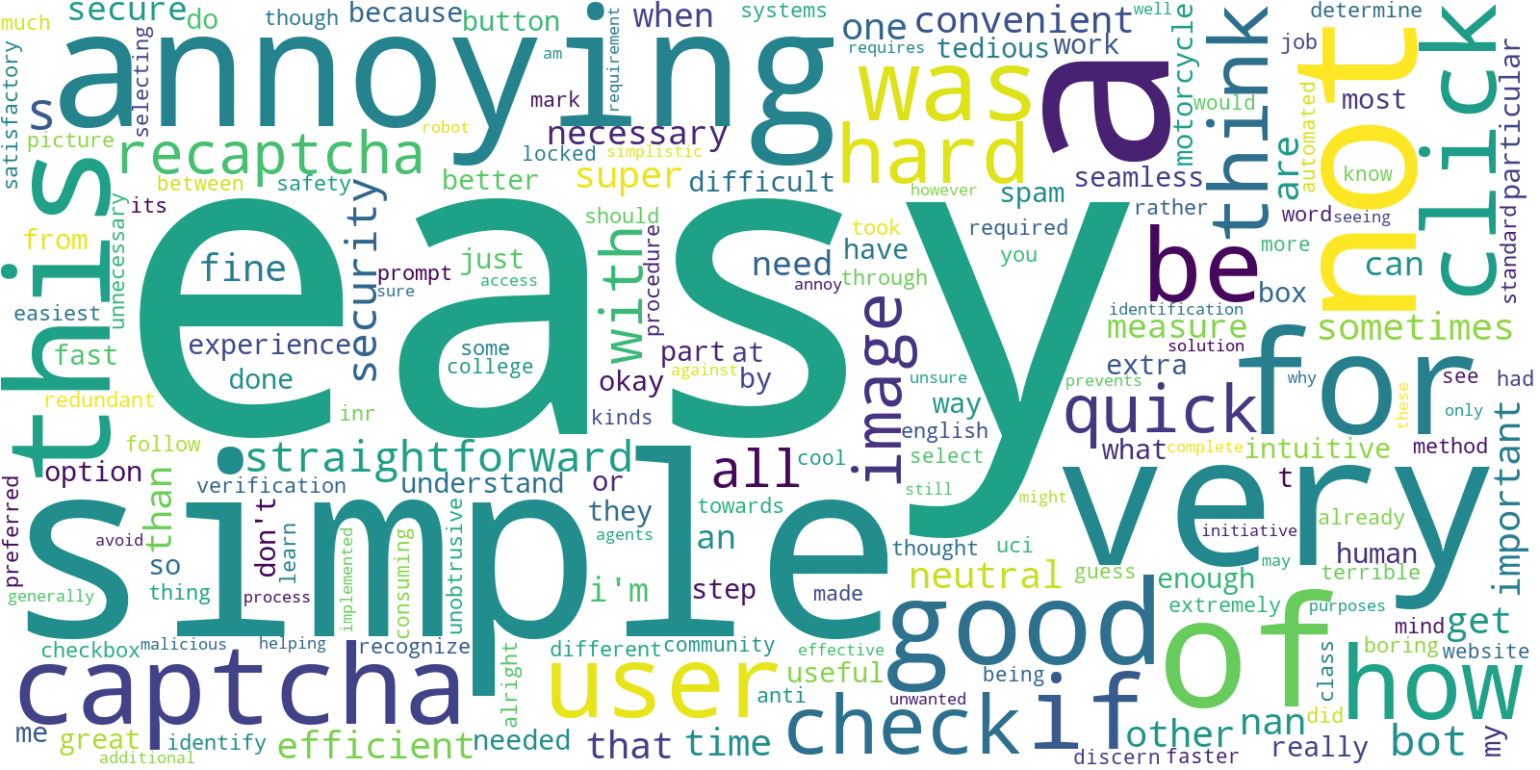

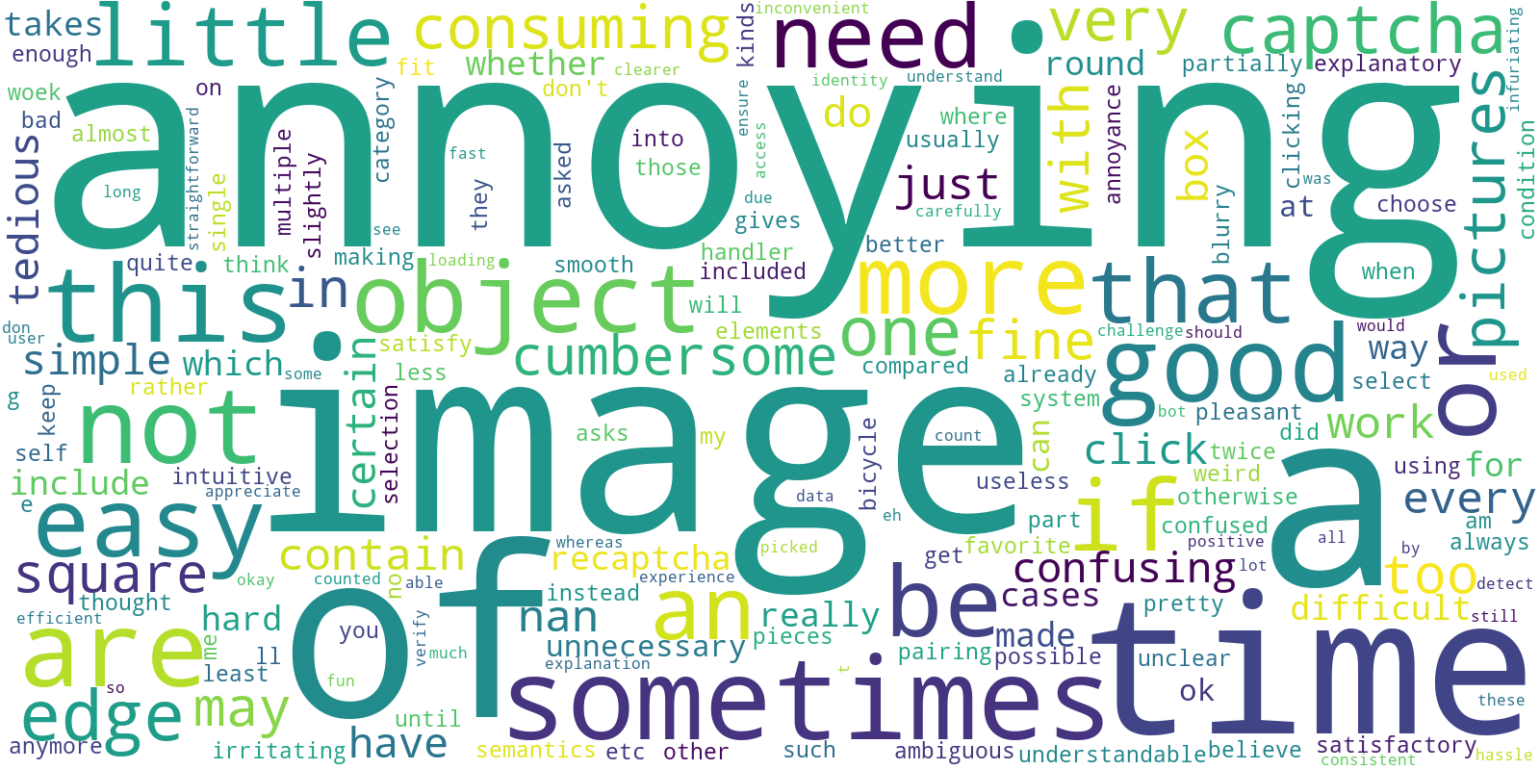

- System Usability Scale (SUS) scores averaged 78 ('good') for checkbox tasks and 58 ('OK') for image tasks, with 40% of users describing image challenges as annoying versus <10% for checkbox.

- Technical STEM majors solved captchas statistically significantly faster than non-technical majors (Tables 12, 13, 14).

- Education level correlates with solving performance: freshmen were slowest, seniors fastest (Tables 9, 10, 11).

- ReCAPTCHAv2’s cumulative human solving time estimated at 819 million hours globally, equating to approximately $6.1 billion USD in unpaid labor.

- ReCAPTCHAv2 consumes approximately 134 PB of bandwidth and 7.5 million kWh of electricity, causing about 7.5 million pounds of CO2 pollution.
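
As a rough consistency check on the cost figures above, the following sketch derives the per-unit rates those totals imply. It uses only the totals quoted in this summary; the variable names are illustrative, the decimal petabyte conversion is an assumption, and the paper's own derivation may rely on different inputs or rounding.

```python
# Totals as quoted in the key findings above.
HUMAN_HOURS = 819e6       # cumulative human solving time, in hours
LABOR_COST_USD = 6.1e9    # estimated value of that unpaid labor, in USD
BANDWIDTH_PB = 134        # bandwidth consumed, in petabytes
ENERGY_KWH = 7.5e6        # electricity consumed, in kWh
CO2_POUNDS = 7.5e6        # resulting emissions, in pounds of CO2

# Assumption: decimal units, so 1 PB = 1e6 GB.
GB_PER_PB = 1e6

# Per-unit rates implied by the quoted totals.
implied_hourly_wage = LABOR_COST_USD / HUMAN_HOURS
implied_kwh_per_gb = ENERGY_KWH / (BANDWIDTH_PB * GB_PER_PB)
implied_lb_co2_per_kwh = CO2_POUNDS / ENERGY_KWH

print(f"Implied wage: ${implied_hourly_wage:.2f} per hour")
print(f"Implied energy intensity: {implied_kwh_per_gb:.3f} kWh per GB")
print(f"Implied emissions factor: {implied_lb_co2_per_kwh:.1f} lb CO2 per kWh")
```

Under these totals the implied wage is roughly $7.45 per hour, the implied energy intensity roughly 0.056 kWh per GB, and the implied emissions factor one pound of CO2 per kWh.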

Threat model

This paper implicitly assumes an adversary motivated to bypass reCAPTCHAv2 captchas with automated bots capable of solving behavioral analysis and image classification challenges at high success rates, as shown in prior works. The adversary can automate solving processes but cannot completely evade human verification in all cases. The focus is on human usability effects rather than adversarial robustness evaluation. The adversary also does not have direct access to Google’s internal captcha scoring or risk analysis beyond normal client-side observations.

Methodology — deep read

Threat Model & Assumptions: The study assumes a real-world deployment in which users (university students) are unaware that they are participating in a captcha evaluation study. The adversary model is implicit: bots attempting to bypass reCAPTCHAv2. This work focuses primarily on human usability and performance rather than on bot detection or attack resistance. Users do not prepare for the captcha or attempt to game the system, and the authors assume users do not anticipate encountering captchas in this specific workflow.

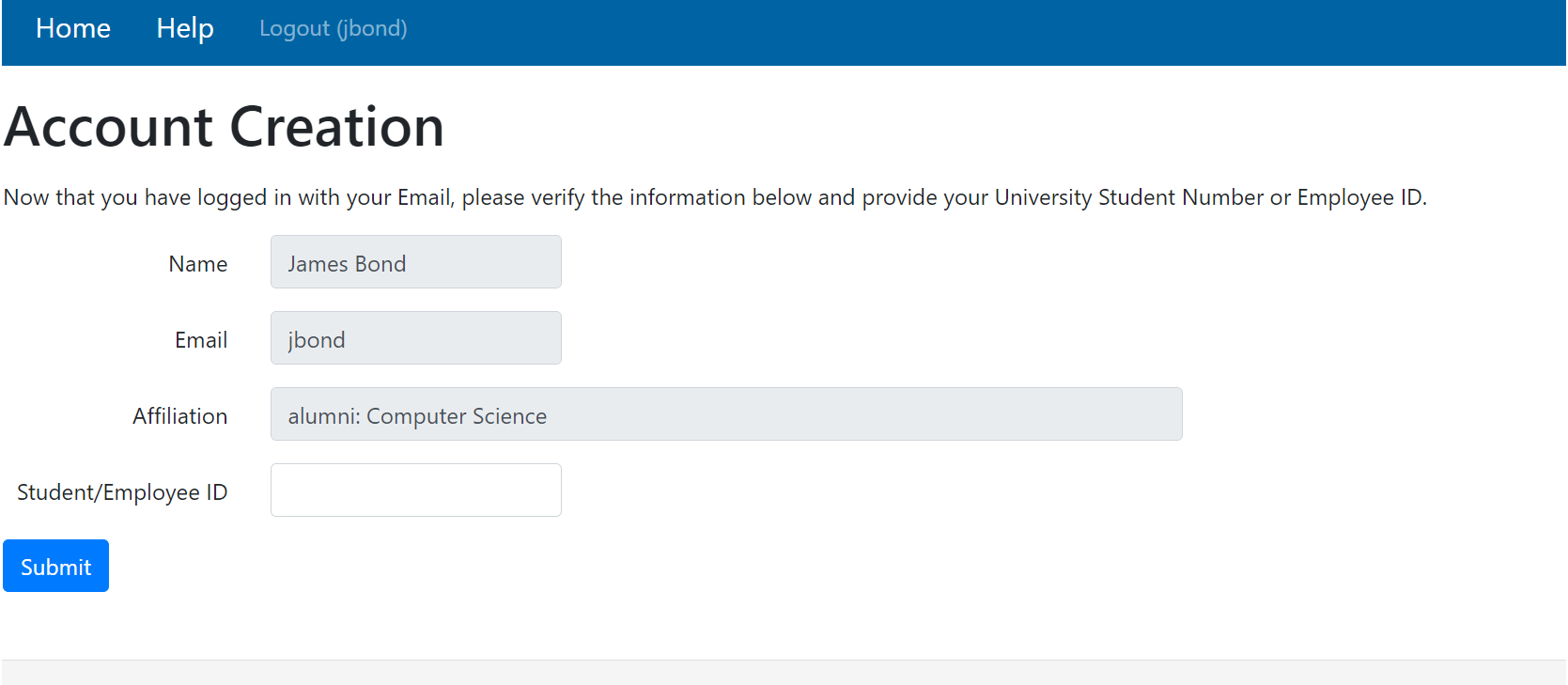

Data: Data was collected from a live web service for account creation and password recovery within the School of Information & Computer Sciences (SICS) at the University of California Irvine. Over 13 months, 9,169 form submissions with captcha solving times were logged; after filtering incomplete or erroneous data and excluding non-student users, 8,915 valid sessions from 3,625 unique participants remained. Demographic attributes (major and education level) were harvested post-hoc using a JavaScript directory crawler querying the university directory. A subset of participants (108) completed a post-study survey covering detailed SUS usability questions and qualitative feedback.

Architecture / Algorithm: reCAPTCHAv2 combines behavioral analysis (the "I'm not a robot" checkbox) with a fallback image classification challenge. The captcha is triggered after form submission; the client records one timestamp when the captcha renders and another when Google’s servers return a successful validation response. Solving time therefore covers the entire user interaction: clicking the checkbox, solving any image challenge, and any retries. The deployment uses Google’s “easy” difficulty setting.

Training Regime: Not applicable. The authors trained no models; the study is purely observational with respect to the deployed Google reCAPTCHAv2 service.

Evaluation Protocol: Solving times are analyzed with descriptive statistics (mean, median, standard deviation) and statistical tests: Shapiro-Wilk, skewness, and kurtosis tests for normality; Brown-Forsythe for equality of variances; and Kruskal-Wallis with Holm-Bonferroni correction for comparisons across groups (e.g., by service type, attempt number, education level, major). Usability was assessed with the standard SUS questionnaire and open-ended survey responses. Data from the Google reCAPTCHAv2 admin console was used to validate the split between behavioral and image challenges.
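
To make the multiple-comparison step concrete, here is a minimal pure-Python sketch of the Holm-Bonferroni step-down adjustment. It is not the authors' code. The function name and the example p-values are hypothetical; in practice the raw p-values might come from a rank-based test such as `scipy.stats.kruskal`, and a Brown-Forsythe test is available as `scipy.stats.levene(..., center="median")`.

```python
def holm_bonferroni(p_values, alpha=0.05):
    """Return (adjusted_p_values, reject_flags) in the input order."""
    m = len(p_values)
    # Indices of the p-values, smallest first.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The k-th smallest p-value (k = rank + 1) is scaled by (m - k + 1).
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity: adjusted values never decrease with rank.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    reject = [p <= alpha for p in adjusted]
    return adjusted, reject


# Hypothetical raw p-values from four pairwise group comparisons.
raw = [0.01, 0.04, 0.03, 0.005]
adjusted, reject = holm_bonferroni(raw)
for p, a, r in zip(raw, adjusted, reject):
    print(f"raw={p:.3f} adjusted={a:.3f} reject={r}")
```

The adjustment is less conservative than a plain Bonferroni correction while still controlling the family-wise error rate, which matters when many demographic groups are compared pairwise.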

Reproducibility: The dataset and code are not publicly released due to privacy and university data restrictions. The methodology is described in detail to facilitate replication in similar institutional contexts.

Concrete Example: A new user attempts account creation and submits a form. Upon submission, the reCAPTCHAv2 checkbox challenge is rendered (timestamp recorded). The user clicks the checkbox; if failed, an image task is presented. Upon successful captcha verification (potentially multiple attempts), the timestamp is logged. The difference between timestamps yields total solving time, which is then associated with user demographics harvested separately. This data is aggregated over thousands of users and statistically analyzed to identify performance and usability trends.
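
The flow above can be sketched as a small data model. This is an illustrative reconstruction, not the authors' logging code: the class, field names, service labels, and sample timestamps are all hypothetical, and timestamps are assumed to be client-side milliseconds.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass(frozen=True)
class CaptchaSession:
    user_id: str
    service: str          # e.g. "account_creation" or "password_recovery"
    rendered_at_ms: int   # when the captcha widget was rendered
    verified_at_ms: int   # when successful verification came back

    @property
    def solving_time_s(self) -> float:
        # Total time includes the checkbox click, any image task, and retries.
        return (self.verified_at_ms - self.rendered_at_ms) / 1000.0


def summarize(sessions):
    """Group solving times by service and compute basic statistics."""
    by_service = {}
    for s in sessions:
        by_service.setdefault(s.service, []).append(s.solving_time_s)
    return {
        service: {"n": len(times), "mean": mean(times), "median": median(times)}
        for service, times in by_service.items()
    }


# Made-up sessions for illustration only.
sessions = [
    CaptchaSession("u1", "account_creation", 1_000, 3_000),     # 2.0 s
    CaptchaSession("u2", "account_creation", 5_000, 16_000),    # 11.0 s
    CaptchaSession("u1", "password_recovery", 20_000, 21_500),  # 1.5 s
]
summary = summarize(sessions)
print(summary)
```

Keeping `user_id` on each session is what allows the later join against separately harvested demographics and the per-user, per-attempt analysis.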

Technical innovations

- Deployment of a large-scale, real-world reCAPTCHAv2 study with over 3,600 unaware participants over 13 months, exceeding prior studies that were smaller or conducted in artificial settings.

- Use of a university directory crawler to automatically obtain detailed participant demographic data (major, education level) without disrupting natural captcha interactions.

- Per-user measurements across repeated attempts, showing a 35% solving-time speedup from the first to the tenth attempt on checkbox challenges.

- Comprehensive cost and environmental impact analysis quantifying human labor, bandwidth, electricity, and carbon footprint of global reCAPTCHAv2 use.

Datasets

- Live SICS reCAPTCHAv2 account creation and password recovery logs — 9,169 raw sessions, filtered to 8,915 valid sessions from 3,625 unique participants — University of California Irvine (private dataset)

- Post-study survey responses — 108 participants — collected via online Google Form
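
For readers unfamiliar with how survey answers become the SUS scores reported in this summary, the sketch below applies the standard SUS scoring rule. It assumes the survey used the conventional ten-item questionnaire with 1 to 5 Likert responses; the function name is illustrative and the summary does not state the exact instrument wording.

```python
def sus_score(responses):
    """Compute a 0-100 SUS score from ten Likert responses (each 1..5)."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for item_number, r in enumerate(responses, start=1):
        if item_number % 2 == 1:
            # Odd items are positively worded: contribution is (response - 1).
            total += r - 1
        else:
            # Even items are negatively worded: contribution is (5 - response).
            total += 5 - r
    # The summed contributions (0..40) are scaled to 0..100.
    return total * 2.5


print(sus_score([5, 1, 5, 1, 5, 1, 5, 1, 5, 1]))  # best possible answers
print(sus_score([3] * 10))                        # neutral answers
```

Per-participant scores computed this way are then aggregated (for example by mean or median) for each scenario, such as checkbox-only versus checkbox plus image.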

Baselines vs proposed

- Google reCAPTCHAv2 behavioral accuracy (checkbox) = 79.98% vs image challenge accuracy = 92.96% (Google dashboard, Table 2)

- Mean solving time checkbox (behavioral) = 1.85s vs image mean solving time = 10.3s (557% of the checkbox time, about 5.6 times as long) (Table 3)

- Password recovery checkbox solving time mean = 1.67s vs account creation checkbox solving time mean = 1.96s (17% slower) (p=1.1e-115) (Table 4)

- Checkbox SUS median score = 78 (good) vs image SUS median score = 58 (OK) (Survey Section 4)
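
Percentage comparisons like those above depend on which value is taken as the baseline. The following sketch recomputes them from the means quoted in this list; the helper name is illustrative and the results are simple arithmetic on the quoted values, not additional findings from the paper.

```python
def relative_change(baseline, value):
    """Percent change of `value` relative to `baseline`."""
    return (value - baseline) / baseline * 100.0


# Mean solving times in seconds, as quoted above.
CHECKBOX_MEAN_S = 1.85
IMAGE_MEAN_S = 10.3
RECOVERY_MEAN_S = 1.67
CREATION_MEAN_S = 1.96

# Image challenges relative to checkbox challenges.
image_ratio = IMAGE_MEAN_S / CHECKBOX_MEAN_S
image_increase_pct = relative_change(CHECKBOX_MEAN_S, IMAGE_MEAN_S)

# Account creation vs password recovery, in both directions.
creation_slower_pct = relative_change(RECOVERY_MEAN_S, CREATION_MEAN_S)
recovery_faster_pct = -relative_change(CREATION_MEAN_S, RECOVERY_MEAN_S)

print(f"Image mean is {image_ratio:.2f}x the checkbox mean "
      f"(+{image_increase_pct:.0f}%)")
print(f"Account creation is {creation_slower_pct:.1f}% slower than recovery")
print(f"Password recovery is {recovery_faster_pct:.1f}% faster than creation")
```

With these means, the image time is about 5.57 times the checkbox time (roughly 557% of it, or a 457% increase), and the 17% figure corresponds to account creation being slower with password recovery as the baseline.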

Figures from the paper

Figures are reproduced from the source paper for academic discussion. Original copyright: the paper authors. See arXiv:2311.10911.

Fig 1: reCAPTCHAv1 distorted text captcha [21]

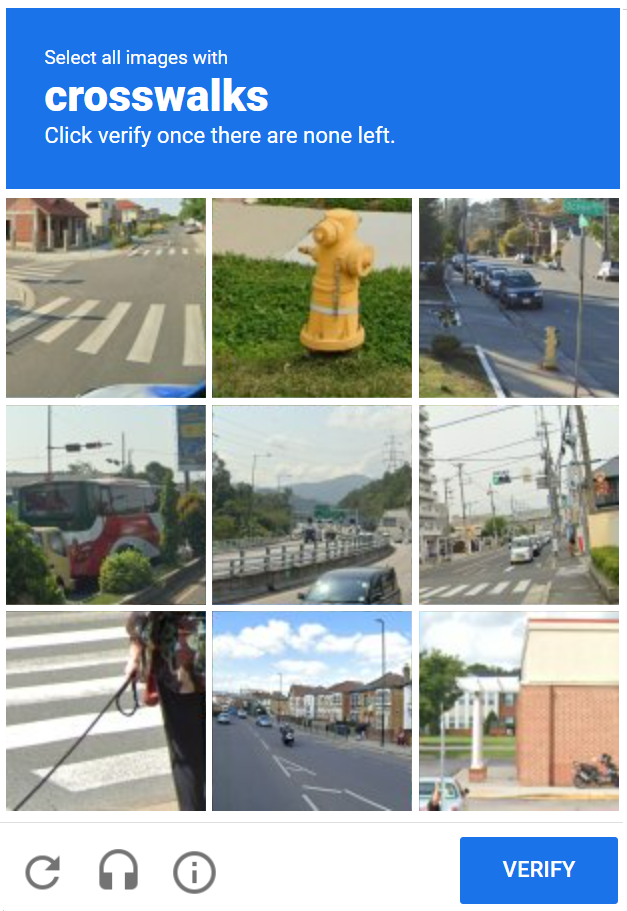

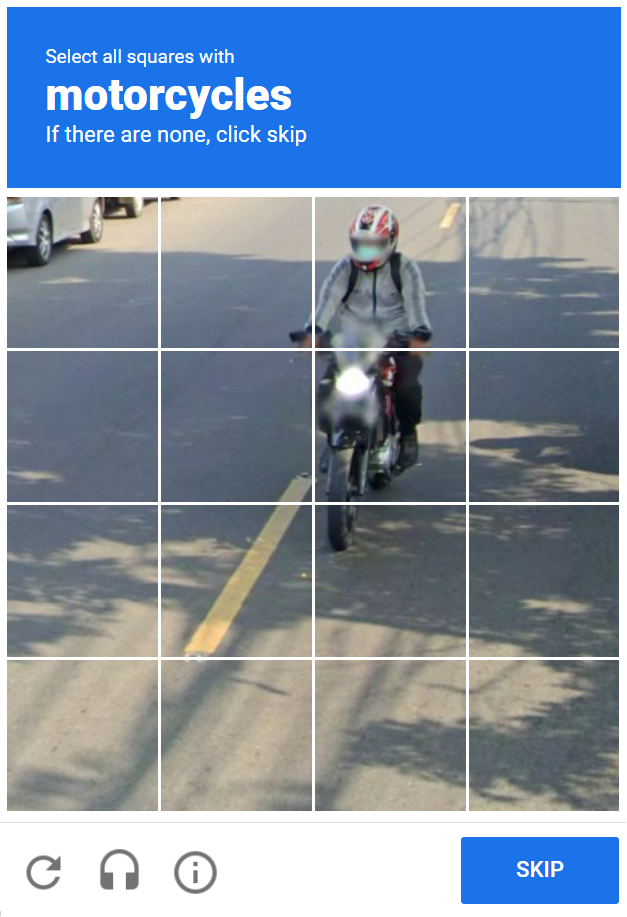

Fig 2: Image Labeling Task captcha [21]

Fig 5: hCAPTCHA [19]

Fig 14: Preference score for checkbox only scenario

Fig 15: Preference score for checkbox+image scenario

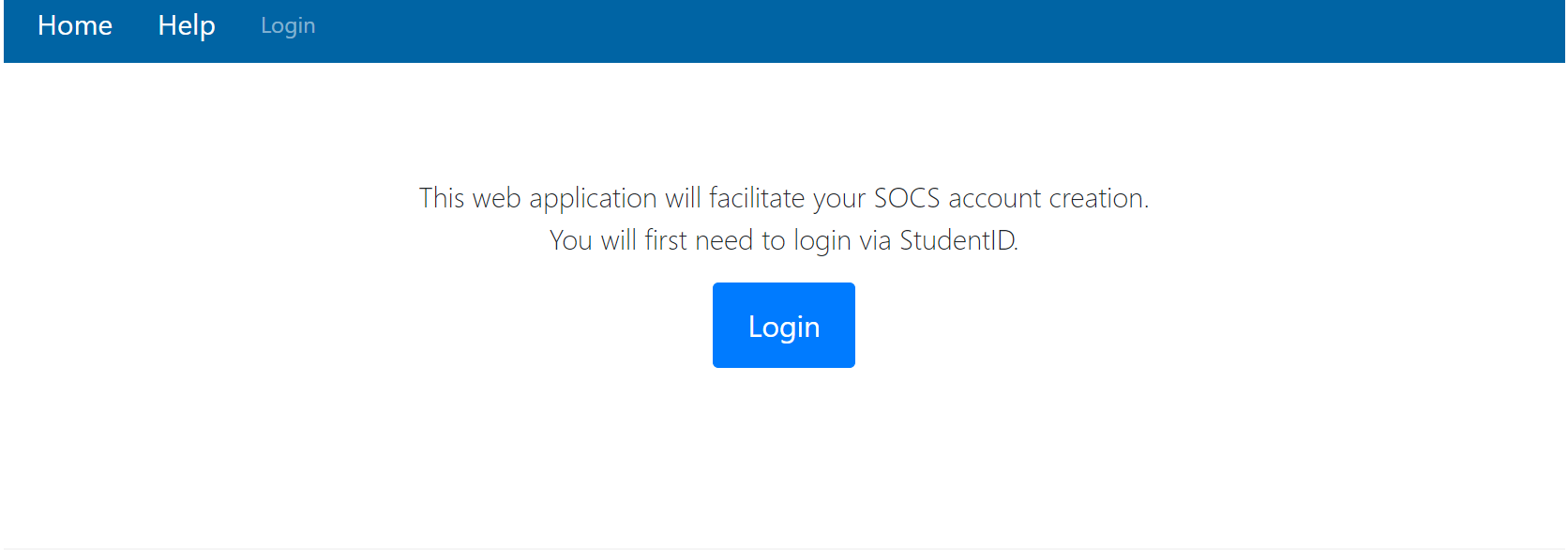

Fig 18: Initial login page

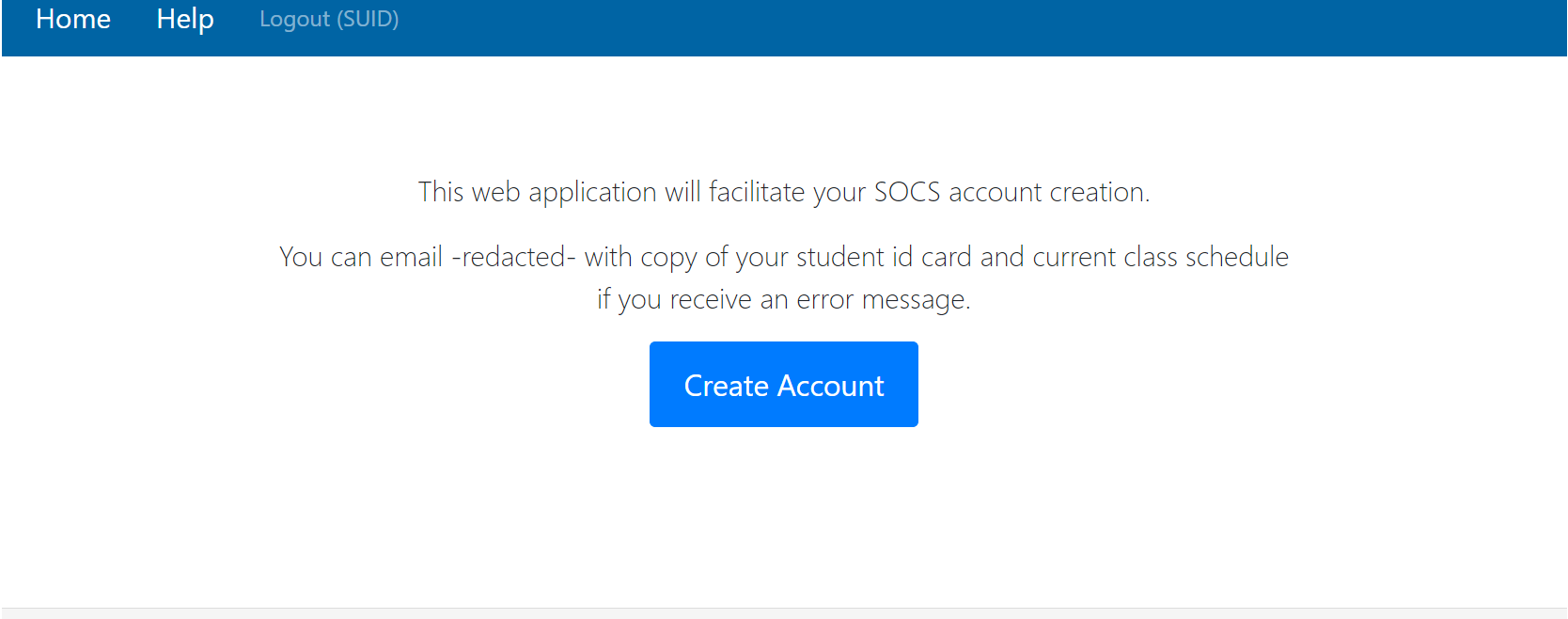

Fig 19: Initial Account Creation Page

Fig 20: Account creation form

Limitations

- Participant population limited to a single US public university’s School of Information and Computer Sciences; results may not generalize globally or to less tech-savvy populations.

- Study only includes users already logged into campus VPN and enrolled in SICS courses, potentially less representative of casual internet users.

- No direct adversarial or automated bot attack evaluations were performed on reCAPTCHAv2 in this study, limiting security conclusions to existing literature and authors’ analyses.

- Data and methodology cannot be fully independently replicated due to privacy constraints and lack of public dataset/code release.

- The study setting gave the authors no control over captcha difficulty tuning or the behavior of the reCAPTCHAv2 provider, beyond selecting the “easy” setting.

- Survey participation was limited (108 respondents), possibly introducing some response bias in usability measures.

Open questions / follow-ons

- How do different user populations (e.g., older, less tech-savvy, or non-English speakers) perform and perceive reCAPTCHAv2?

- What is the impact of alternate captcha difficulty settings or newer versions (reCAPTCHA v3, hCAPTCHA) on usability and security tradeoffs?

- Can improved privacy-preserving bot detection systems be designed that avoid the large human cost and annoyance identified here?

- What automated bot-resistance mechanisms might effectively replace or supplement reCAPTCHAv2 given these low-security, high-cost findings?

Why it matters for bot defense

This study offers bot-defense practitioners detailed empirical evidence that reCAPTCHAv2, while still widespread, imposes significant human burden and annoyance with minimal security gain. Its behavioral checkbox component is relatively efficient for users but offers negligible bot resistance, and the fallback image challenges are slow and disliked. The statistically significant contextual effects on solving time highlight that captcha deployment context (account creation vs password reset) influences user experience and should be considered carefully. Additionally, demographic correlations indicate that educational and domain expertise factors may affect captcha solving performance. From a bot defense perspective, this work reinforces the notion that solely relying on reCAPTCHAv2 is insufficient and costly. It motivates exploration of alternative or supplemental approaches that reduce human labor, improve accessibility, and increase bot resilience without privacy-invasive tracking. Finally, the extensive cost analysis informs practitioners about the environmental and human resource impact of captcha reliance at scale, potentially shaping future captcha lifecycle decisions.

Cite

@article{arxiv2311_10911,
  title={Dazed \& Confused: A Large-Scale Real-World User Study of reCAPTCHAv2},
  author={Andrew Searles and Renascence Tarafder Prapty and Gene Tsudik},
  journal={arXiv preprint arXiv:2311.10911},
  year={2023},
  url={https://arxiv.org/abs/2311.10911}
}