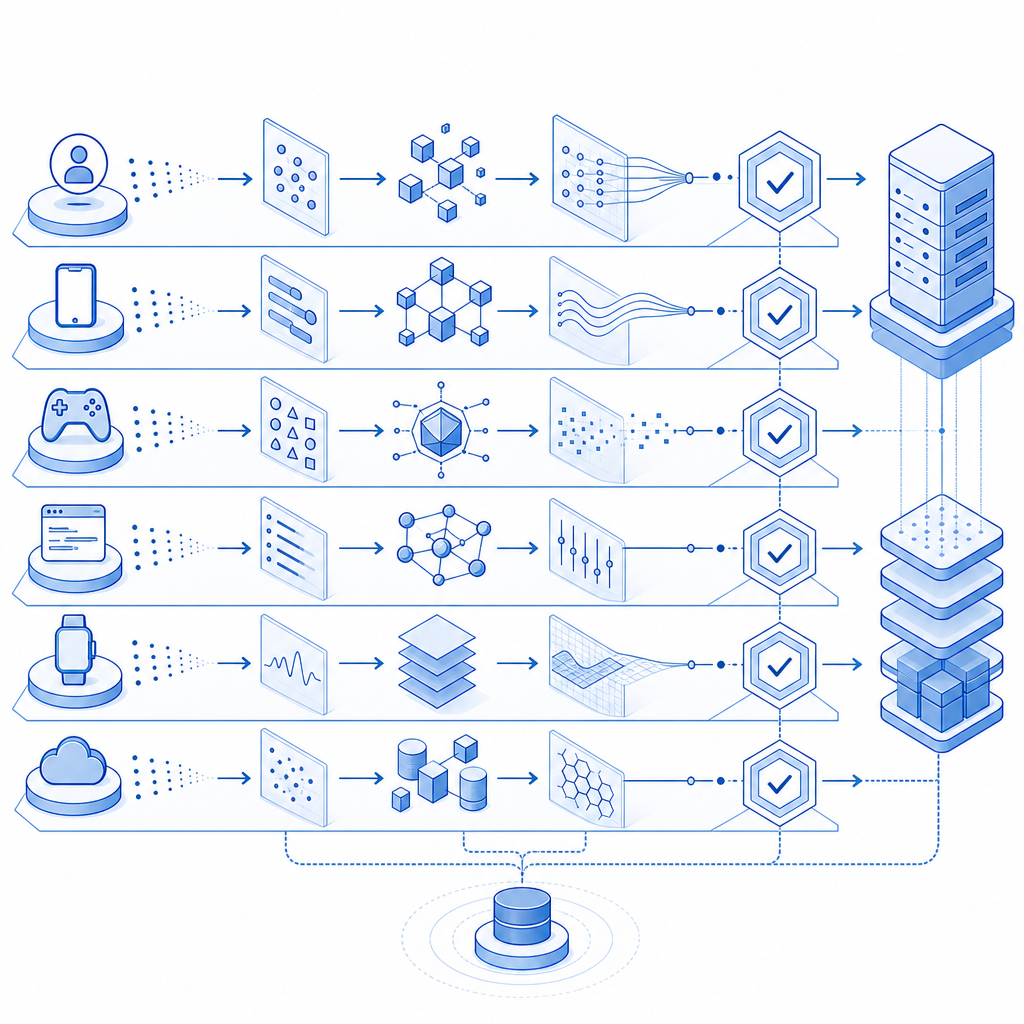

Bot detection techniques work best when they combine multiple weak signals into a stronger decision. No single check reliably separates humans from automation anymore; instead, modern defenses use behavior patterns, browser integrity, network reputation, challenge results, and server-side validation to reduce abuse without blocking too many real users.

That matters because bots are not all the same. Some are high-volume credential stuffers, some scrape content, some try account takeover, and some look almost normal until a specific action tips them off. A useful defense stack should therefore be layered: observe, score, challenge, and verify. If you build it that way, you can adapt as traffic changes instead of depending on one brittle gate.

What bot detection techniques actually look for

The most effective bot detection techniques focus on deviations from normal interaction, not just obvious automation markers. A defender’s goal is to answer a few questions quickly:

- Does this session behave like a real browser or app?

- Does the interaction pace fit a human user?

- Does the request path make sense for a legitimate flow?

- Is the device or IP reputation suspicious?

- Can the server verify a challenge outcome independently?

A single flag rarely proves anything. For example, a headless browser is not automatically malicious, and a mobile automation framework is not always fraud. The better approach is to accumulate evidence. A login attempt from a residential IP, with realistic mouse movement, can still be a bot if it submits dozens of credential pairs in a minute. Likewise, a scraper might pass a basic CAPTCHA challenge but fail when you correlate token validation, timing, and session continuity.

Common signal categories

- Behavioral signals: pointer movement, focus changes, typing cadence, scroll depth, and time-to-action.

- Technical signals: browser fingerprints, JavaScript execution, cookie persistence, WebGL or canvas consistency, and header order.

- Network signals: IP reputation, ASN patterns, geographic drift, proxy/VPN likelihood, and request burstiness.

- Flow signals: missing referers, impossible navigation paths, repeated form resets, or API calls without prior page visits.

- Challenge signals: token validity, challenge completion time, and server-side consistency checks.

The important part is not collecting everything. It is collecting enough to make a confident decision while keeping latency and false positives under control.

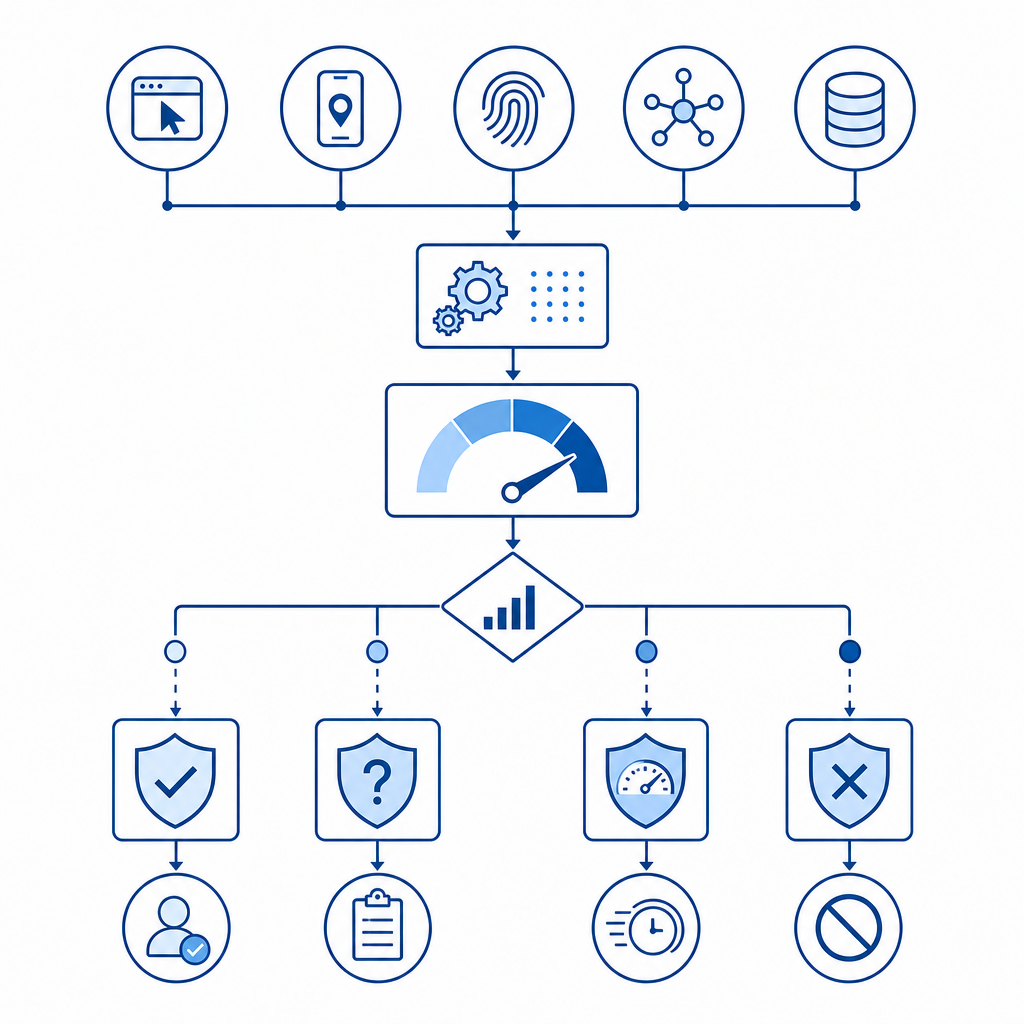

A practical defense stack: detect, challenge, verify

A good implementation usually has three stages. First, detect suspicious sessions. Second, decide whether to challenge, throttle, or allow. Third, verify the outcome on the server so the client cannot simply claim success.

Here is a simple mental model:

| Stage | What it does | Typical inputs | Output |

|---|---|---|---|

| Detection | Scores the request | interaction timing, headers, device hints, IP reputation | risk score |

| Challenge | Tests active behavior | CAPTCHA, proof-of-work, email step-up, pass token | pass/fail signal |

| Verification | Confirms trust server-side | token, client IP, secret key | allow/deny decision |

This is where products like CaptchaLa fit naturally: they let you pair a client-side challenge with server-side verification, so the browser alone cannot decide its own fate. That separation is important. Any client-visible check can be copied, replayed, or spoofed unless the server validates the result.

A typical verification flow looks like this:

# Client completes challenge and receives a pass token

# Client sends token to your backend with the request

# Backend validates token before processing the action

POST https://apiv1.captcha.la/v1/validate

Body: { pass_token, client_ip }

Headers: X-App-Key, X-App-SecretOn the issuance side, you can create a server token for the challenge flow via:

POST https://apiv1.captcha.la/v1/server/challenge/issueThat pattern keeps trust anchored on your backend, which is where it belongs.

Techniques worth using, and where they fit

Not every method deserves the same weight. Some are great as low-friction hints; others are stronger but should be used sparingly. Below are the techniques I see as most useful from a defender perspective.

1) Behavioral analysis

Humans are inconsistent in ways bots often are not. They pause, backtrack, change cursor direction, and hesitate before submission. Automated flows often become suspicious when they move too quickly, too evenly, or too perfectly.

Useful specifics:

- Measure median time between page load and first interaction.

- Track variance in keypress intervals rather than just total form time.

- Flag repeated identical navigation sequences across many sessions.

- Compare pointer movement entropy against known-good baselines.

Behavioral analysis is strongest when combined with page context. A user spending 2 seconds on a login form may be fine if they use password autofill. The same timing may be odd on a long checkout page.

2) Device and browser integrity checks

A lot of bot detection techniques depend on whether the client environment behaves like a normal browser. This can include JavaScript execution, cookie support, storage consistency, and tamper-resistant challenge loading.

CaptchaLa, for example, provides a loader at https://cdn.captcha-cdn.net/captchala-loader.js and SDK coverage across Web, iOS, Android, Flutter, and Electron, which helps you implement the same trust model across surfaces rather than inventing one-off logic per app. The broader ecosystem matters too: reCAPTCHA, hCaptcha, and Cloudflare Turnstile each make different tradeoffs around friction, privacy posture, and integration style. The right choice depends on your traffic mix and operational needs.

3) Network and reputation checks

Network metadata is rarely enough on its own, but it is excellent for prioritization. A request from a known proxy cluster or a data center ASN might deserve more scrutiny than a long-lived residential session with normal browsing behavior.

Good practice:

- Score IP reputation before expensive work.

- Watch for impossible travel or rapid geolocation drift.

- Treat bursts from many fresh accounts as a coordination signal.

- Compare request cadence across IPs, user agents, and session IDs.

These checks are especially useful for abuse patterns like signup flooding and credential stuffing, where the actor can vary superficial details but still leaves statistical fingerprints.

4) Server-side validation and session binding

Client-side checks are only half the story. A pass token should be validated on the server, ideally bound to the current session and client IP where appropriate. That way, replaying a token from another device or using it outside the intended flow becomes much harder.

If you are implementing this in production, keep the validation logic close to the action you are protecting:

- login

- signup

- password reset

- checkout

- contact form submission

- API write operations

This is also where first-party data matters. You should make decisions using signals you collect directly from your own product and infrastructure, not by relying on opaque third-party assumptions. That keeps the model more auditable and easier to tune.

Implementation notes that reduce false positives

A bot defense that blocks real users is just a different kind of outage. The best bot detection techniques are therefore careful about context, fallback, and observability.

A few implementation rules help a lot:

- Start with risk scoring, not hard blocks, for borderline sessions.

- Use step-up challenges on sensitive actions instead of every page load.

- Exempt known good traffic patterns where possible, such as internal testers or trusted enterprise integrations.

- Log both the score and the reason codes so you can tune thresholds later.

- Re-evaluate the model periodically as attack patterns change.

If you want a multilingual UX, it helps to know that CaptchaLa supports 8 UI languages. That reduces friction when you need to challenge global traffic without creating a confusing experience for legitimate users.

For mobile and cross-platform apps, native SDK support can matter more than a web-only widget. CaptchaLa’s SDKs cover Web (JS, Vue, React), iOS, Android, Flutter, and Electron, with published packages including Maven la.captcha:captchala:1.0.2, CocoaPods Captchala 1.0.2, pub.dev captchala 1.3.2, plus server SDKs like captchala-php and captchala-go. That mix makes it easier to keep one verification strategy across your product surface area.

The nice thing about this approach is that it scales with your abuse patterns. If bots start behaving more like humans, you lean harder on server validation and session integrity. If they become noisier, behavioral and network scoring catches more of them early. Either way, you are not depending on a single brittle rule.

Conclusion: build a layered system, not a single gate

The strongest bot detection techniques are layered, explainable, and measured. They combine behavioral clues, browser integrity, network reputation, challenge outcomes, and server-side validation into a single decision process that you can tune over time. That is far more durable than trying to guess “bot or human” from one field or one widget.

If you are comparing providers or planning an implementation, docs is the best place to review integration details, and pricing shows the tiers from free usage through higher-volume plans. For teams building practical abuse defenses, the next step is usually to instrument your highest-risk flows first, then expand from there.