Bot detection on Reddit starts with one idea: you usually do not want to “block bots” everywhere; you want to make risky actions expensive, measurable, and reversible. Reddit-like products are especially attractive to automation because account creation, voting, commenting, scraping, and reward abuse all look like normal user activity unless you model behavior across time, device, and network signals.

That makes the problem less about one magic CAPTCHA and more about layered detection. For a community platform, the right question is: which actions deserve friction, which deserve silent scoring, and which deserve hard verification only when the risk is high?

What makes Reddit-style abuse hard to detect

Reddit’s interaction model creates a lot of ambiguity. A person can post rapidly during breaking news, a new user can legitimately comment several times in a row, and a mobile app can generate patterns that look “bot-like” if you only inspect timing. So the goal is not to find perfect bot fingerprints; it is to separate normal bursts from coordinated automation.

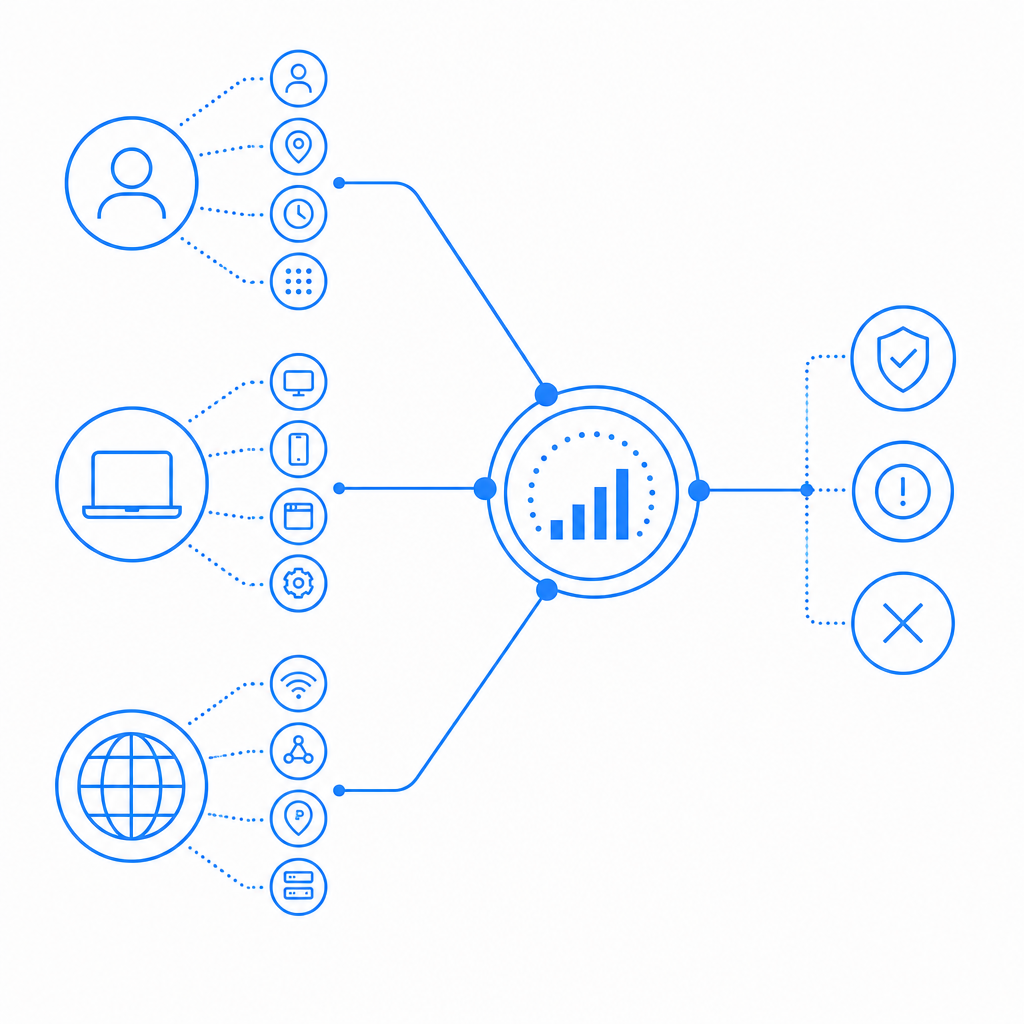

The most useful signals are usually behavioral and relational, not just technical:

- Account age, karma history, and moderation outcomes.

- Request rhythm: inter-arrival times, burstiness, and time-of-day consistency.

- Device continuity: cookie reuse, session stability, and SDK integrity.

- Network patterns: IP reputation, ASN clustering, proxy churn, and geo drift.

- Graph signals: many accounts acting on the same submissions, subreddits, or URLs.

A common mistake is over-weighting a single signal. For example, a residential IP does not mean “human,” and a fast sequence of actions does not mean “bot.” Better systems score combinations. If a user is new, has little history, switches IPs often, and repeatedly triggers the same action across multiple communities, that is much more meaningful than any one datapoint alone.
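The idea of scoring combinations rather than single datapoints can be sketched as follows. This is a minimal illustration, not a production model: the `Signals` fields, the cutoffs, and the squaring step are all assumptions chosen for the example, and real thresholds would need tuning against labeled abuse data.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    account_age_days: float   # age of the account
    prior_actions: int        # earlier accepted posts, comments, or votes
    distinct_ips_24h: int     # IPs seen for this account in the last day
    communities_hit_1h: int   # communities where the same action repeated in the last hour


def risk_score(s: Signals) -> float:
    """Return a score in [0, 1]; higher means more signals align.

    All cutoffs below are hypothetical placeholders.
    """
    flags = [
        s.account_age_days < 7,      # new account
        s.prior_actions < 5,         # little history
        s.distinct_ips_24h >= 4,     # frequent IP switching
        s.communities_hit_1h >= 5,   # same action across many communities
    ]
    hits = sum(flags)
    # Squaring the fraction keeps a single flag nearly harmless
    # while letting aligned flags dominate the score.
    return (hits / len(flags)) ** 2
```

With these placeholder cutoffs, an established account that trips only one flag scores 0.0625, while an account that trips all four scores 1.0.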

A practical detection stack for Reddit-like platforms

A strong stack usually has three layers: passive observation, adaptive friction, and verification.

1) Passive observation

Collect signals without interrupting the user. This includes:

- session duration and navigation paths

- mouse, touch, and typing cadence

- account linkage patterns

- content similarity across accounts

- repeated failed actions or rejected submissions

This layer is ideal for building risk scores and trend analysis. It also helps with moderation workflows, because you can flag accounts for review without forcing everyone through a challenge.
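One passive signal that is cheap to compute is request rhythm. The sketch below measures how regular the gaps between an account's actions are, using the coefficient of variation of inter-arrival times. The function name and the minimum-sample rule are assumptions for illustration; a low value only suggests timer-driven behavior and should feed a score rather than trigger a block by itself.

```python
from statistics import mean, pstdev
from typing import Optional, Sequence


def inter_arrival_cv(timestamps: Sequence[float]) -> Optional[float]:
    """Coefficient of variation of the gaps between consecutive events.

    Values near 0 mean metronome-like spacing; irregular activity
    produces larger values. Returns None when there are fewer than
    three events, since two events yield only one gap.
    """
    if len(timestamps) < 3:
        return None
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    average_gap = mean(gaps)
    if average_gap == 0:
        return 0.0  # all events share one timestamp
    return pstdev(gaps) / average_gap
```

Events at exactly 0, 10, 20, and 30 seconds give a value of 0.0, while an irregular sequence such as 0, 1, 20, 22, 60 gives a value close to 1.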

2) Adaptive friction

When risk crosses a threshold, increase the cost of automation:

- rate-limit high-value actions like voting, posting, or messaging

- require email or phone verification for suspicious signups

- temporarily slow down repeated actions

- add proof-of-work style delays or lightweight challenge flows

This is where a service like CaptchaLa can fit naturally: not as a wall for everyone, but as a step-up check when your own scoring indicates elevated risk. The advantage of a step-up model is that it preserves the normal experience for trusted users while forcing questionable sessions to prove continuity.
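Rate limiting and risk scoring can be combined so that riskier sessions burn through their allowance faster. The following is a minimal token-bucket sketch under that assumption; the class name, the 1-to-5 token cost range, and the optional `now` parameter (included so the behavior can be checked without real clocks) are illustrative choices, not part of any particular product.

```python
import time
from typing import Optional


class TokenBucket:
    """Per-account bucket: actions spend tokens, and tokens refill over time."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 now: Optional[float] = None):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.tokens = capacity
        self.updated = time.monotonic() if now is None else now

    def allow(self, risk: float, now: Optional[float] = None) -> bool:
        """Spend 1 token for a trusted request, up to 5 for a risky one.

        `risk` is expected in [0, 1] and is clamped to that range.
        """
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        # Refill based on elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.updated = now
        cost = 1.0 + 4.0 * min(max(risk, 0.0), 1.0)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

With a capacity of 10, a trusted session gets ten immediate actions, while a session scored at maximum risk gets two before it has to wait for a refill or pass a step-up check.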

3) Verification

For the riskiest actions, verify server-side. A typical flow is:

- client receives a challenge token or pass token

- client submits the protected action

- backend validates the token before completing the action

If you are using CaptchaLa, the server-side validation endpoint is POST https://apiv1.captcha.la/v1/validate with {pass_token, client_ip} in the body and X-App-Key plus X-App-Secret in headers. That kind of validation should happen only on your backend, never in the browser.
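A backend call to that endpoint might look roughly like the sketch below, using only the Python standard library. The URL, body fields, and header names come from the description above; everything else is an assumption: the function names are hypothetical, the body is assumed to be JSON-encoded, and because the response format is not described here, the sketch returns the raw status and body instead of parsing them.

```python
import json
import urllib.request

VALIDATE_URL = "https://apiv1.captcha.la/v1/validate"


def build_validate_request(pass_token: str, client_ip: str,
                           app_key: str, app_secret: str) -> urllib.request.Request:
    """Build the server-side validation request without sending it."""
    body = json.dumps({"pass_token": pass_token, "client_ip": client_ip})
    return urllib.request.Request(
        VALIDATE_URL,
        data=body.encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",  # assumption: JSON body
            "X-App-Key": app_key,
            "X-App-Secret": app_secret,
        },
    )


def validate(pass_token: str, client_ip: str,
             app_key: str, app_secret: str, timeout: float = 5.0):
    """Send the request and return (status, raw_body).

    urlopen raises on network failures and on HTTP error statuses;
    callers should treat any exception as "not verified".
    """
    request = build_validate_request(pass_token, client_ip, app_key, app_secret)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status, response.read()
```

Keeping the request builder separate from the network call makes the credentials handling easy to unit-test and keeps the secret on the server.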

Here is a simple defender-side example of how the logic is often structured:

    # Pseudocode: score_request, the thresholds, and the handlers are placeholders.
    if user_action in high_risk_actions:
        risk = score_request(user, session, ip, history)
        if risk >= hard_challenge_threshold:
            # Highest risk: require a challenge before the action completes.
            require_challenge()
        elif risk >= soft_challenge_threshold:
            # Medium risk: add friction and keep a record for moderators.
            slow_down_request()
            log_for_review()
        else:
            allow_action()

The key is that the score is contextual. A brand-new account posting a first comment in a busy subreddit is not the same as an established contributor replying in their usual community.

Comparing CAPTCHA and bot-defense options

Different products solve different parts of the problem. For Reddit-style abuse, the question is not “which one is universally best,” but “which one fits the trust model and operational constraints you have.”

| Tool | Strengths | Tradeoffs | Best fit |
|---|---|---|---|
| reCAPTCHA | widely recognized, easy to deploy | can feel intrusive, less control over the full workflow | generic login/signup friction |
| hCaptcha | flexible challenge options, familiar to many teams | still mostly a challenge layer, not a full abuse stack | sites that want stronger manual verification |
| Cloudflare Turnstile | low-friction experience, simple integration | works best inside Cloudflare-centric setups | lightweight web verification |
| CaptchaLa | server validation, native SDKs, first-party data only | like any CAPTCHA, it works best when paired with risk scoring | teams that want custom step-up flows |

There is no universal winner here. If your product needs a very low-friction public-facing gate, Turnstile may be attractive. If you want challenge-centric protection, reCAPTCHA or hCaptcha may be familiar choices. If you want to keep control of the workflow and combine verification with your own detection logic, CaptchaLa's docs and pricing are worth a look.

Implementation details that matter more than the CAPTCHA brand

A lot of abuse prevention success comes from implementation discipline.

First, challenge at the action boundary, not just at signup. On Reddit-like systems, bots often look harmless until they start voting, posting links, or mass-commenting. If you only protect account creation, attackers simply wait until after onboarding.

Second, bind verification to context. A pass token should be useful for a specific action window and request context, not reusable forever. That is one reason server-side validation matters so much.
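One way to bind a successful verification to context on your own backend is to mint a short-lived, signed grant for a single session and action once the challenge has validated. The sketch below uses an HMAC for that. It is an illustration under assumptions: the function names, the `SECRET` placeholder, the grant format, and the 120-second default are all hypothetical, and this is separate from however the CAPTCHA provider scopes its own pass tokens.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical placeholder; load a real secret from server-side configuration.
SECRET = b"replace-with-a-server-side-secret"


def _sign(session_id: str, action: str, expires: int) -> str:
    payload = f"{session_id}|{action}|{expires}".encode("utf-8")
    return hmac.new(SECRET, payload, hashlib.sha256).hexdigest()


def issue_grant(session_id: str, action: str, ttl_s: int = 120,
                now: Optional[float] = None) -> str:
    """Mint a grant that is only valid for this session, action, and time window."""
    current = time.time() if now is None else now
    expires = int(current + ttl_s)
    return f"{expires}.{_sign(session_id, action, expires)}"


def check_grant(grant: str, session_id: str, action: str,
                now: Optional[float] = None) -> bool:
    """Accept the grant only if it is unexpired and matches the context."""
    try:
        expires_text, signature = grant.split(".", 1)
        expires = int(expires_text)
    except ValueError:
        return False  # malformed grant
    current = time.time() if now is None else now
    if current > expires:
        return False
    expected = _sign(session_id, action, expires)
    # Constant-time comparison on bytes avoids timing leaks.
    return hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8"))
```

A grant issued for a vote fails when presented for a link post, for a different session, or after expiry. This sketch does not prevent replay inside the validity window; enforcing single use would need a server-side record of grants already spent.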

Third, keep client and server responsibilities separate. A challenge loader belongs on the client side, while the decision to trust the result belongs on the server. CaptchaLa’s loader, for example, is served from https://cdn.captcha-cdn.net/captchala-loader.js, while validation happens with your backend calling the API.

Fourth, instrument for false positives. If you are protecting a community platform, a false positive can block a legitimate moderator, a power user, or a fast typist during a live event. Track:

- challenge presentation rate

- completion rate

- post-challenge success rate

- appeal or retry rate

- action-level conversion after verification

Those metrics tell you whether your friction is helping or just irritating people.
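The metrics listed above reduce to a few ratios over event counters. The sketch below shows one possible set of definitions; the counter names and the choice of denominators are assumptions, so adapt them to whatever your analytics pipeline actually records.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ChallengeCounts:
    protected_requests: int  # requests to actions that can trigger a challenge
    challenges_shown: int
    challenges_passed: int
    actions_completed: int   # protected actions that succeeded after a pass
    retries: int             # challenges the user had to attempt again


def _ratio(numerator: int, denominator: int) -> float:
    """Safe division: an empty denominator yields 0.0 instead of raising."""
    return numerator / denominator if denominator else 0.0


def summarize(c: ChallengeCounts) -> Dict[str, float]:
    return {
        "presentation_rate": _ratio(c.challenges_shown, c.protected_requests),
        "completion_rate": _ratio(c.challenges_passed, c.challenges_shown),
        "post_challenge_success_rate": _ratio(c.actions_completed, c.challenges_passed),
        "retry_rate": _ratio(c.retries, c.challenges_shown),
    }
```

A falling completion rate alongside a steady presentation rate is a hint that the challenge itself, not the traffic, has become the problem.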

Where the SDKs fit

If your stack spans multiple clients, native SDK coverage can simplify rollout. CaptchaLa supports Web SDKs for JS, Vue, and React, plus iOS, Android, Flutter, and Electron. It also has server SDKs (captchala-php and captchala-go), which is useful if your Reddit-style app has mixed frontend and backend services.

For mobile apps especially, SDK consistency matters. If a bot operator can exploit one platform but not another, your abuse surface becomes uneven. The cleaner your integration is across clients, the easier it is to keep risk logic consistent.

A realistic policy for Reddit-style moderation

The best policy is usually specific, not broad. For example:

- Allow low-risk browsing with no friction.

- Score new sessions on signup, login, and first content submission.

- Add step-up verification for first vote, first link post, or repeated DMs.

- Require stronger checks when accounts cluster by IP, device, or behavior.

- Escalate to moderation review when multiple signals align.

This approach works because it mirrors how abuse actually happens. Most automation is opportunistic: it looks for the cheapest route through your system. Even if you add cost only to the high-value actions, many campaigns stop there.
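A policy like the one above can be written as a small decision function so that every client hits the same rules. This is a sketch only: the action names, the `Decision` values, and the numeric cutoffs are hypothetical, and silent scoring is assumed to happen elsewhere before this function is called.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up"   # require a challenge before the action completes
    REVIEW = "review"     # escalate to the moderation queue


# Actions that get a step-up check the first time an account performs them.
STEP_UP_ON_FIRST = {"vote", "link_post"}


def decide(action: str, is_first: bool, clustered: bool,
           aligned_signals: int, dm_count_1h: int = 0) -> Decision:
    """Map one request to a decision; all cutoffs are placeholders."""
    if action == "browse":
        return Decision.ALLOW      # low-risk browsing stays frictionless
    if aligned_signals >= 3:
        return Decision.REVIEW     # multiple signals align
    if clustered:
        return Decision.STEP_UP    # accounts cluster by IP, device, or behavior
    if action in STEP_UP_ON_FIRST and is_first:
        return Decision.STEP_UP
    if action == "dm" and dm_count_1h >= 5:
        return Decision.STEP_UP    # repeated DMs
    return Decision.ALLOW
```

Keeping the rules in one function on the backend also makes policy changes auditable, since each change is a diff to a single place.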

CaptchaLa’s free tier can be enough for early-stage communities, with 1,000 verifications per month. If your product grows, Pro covers 50K-200K, and Business supports 1M. The important part is not the tier name; it is whether your abuse controls grow with your traffic and moderation load.

Final take

Bot detection on Reddit is really about shaping incentives. You do that by combining behavioral scoring, session continuity checks, rate limits, and server-validated challenges at the moments that matter most. The better your signals, the less often you need to challenge real users.

If you are mapping out a rollout, start with your highest-value actions and design from the backend outward. Then compare how your verification layer interacts with your own scoring, moderation queue, and analytics.

Where to go next: review the CaptchaLa docs for integration details, or compare plans on the pricing page if you expect verification volume to grow.