Bot detection in social media is the practice of spotting automated or coordinated abuse before it distorts signups, follows, likes, comments, DMs, and ad interactions. The goal is not to “catch bots” in the abstract; it’s to protect trust signals, keep moderation costs manageable, and preserve the user experience for real people.

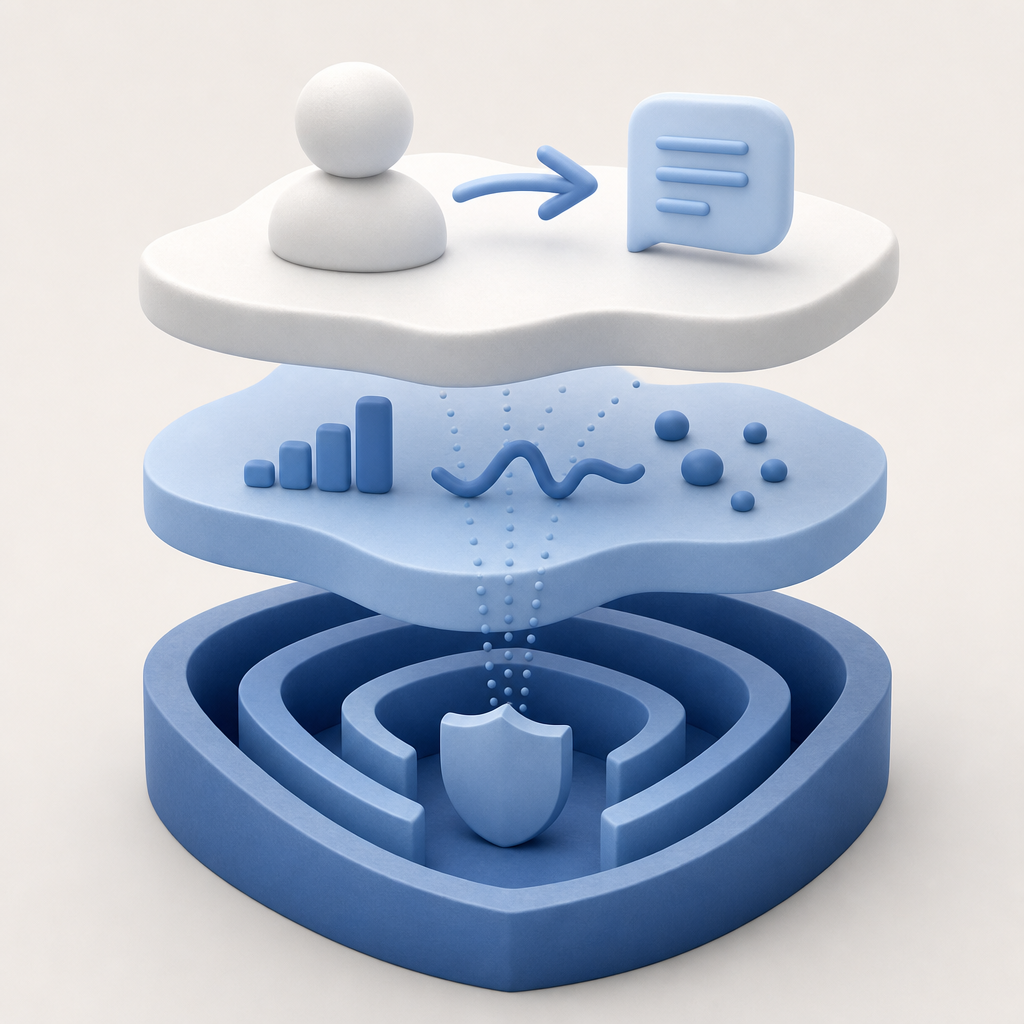

The tricky part is that social platforms rarely face one kind of bot. You may see low-quality account farms, scripted engagement, credential-stuffing attempts, spam DMs, or “gray” automation that stays just below obvious thresholds. A good defense treats bot detection as a layered system: start with request integrity, add behavioral signals, and then escalate only when risk rises.

What bot detection in social media actually needs to stop

A social product has a few especially attractive abuse surfaces:

- Account creation and onboarding

- Login and password reset

- Posting, commenting, and reacting

- Private messaging and invite flows

- API endpoints that power mobile and web clients

Each surface has different abuse goals. A spammer trying to flood comments leaves a different trail than an operator creating 10,000 disposable accounts. That means you should not rely on one universal score or one static CAPTCHA challenge.

Instead, think in terms of risk per action. For example:

- New account registration may deserve tighter friction than liking a post.

- A burst of follows from a fresh device may need step-up checks.

- A message containing links plus repeated delivery failures may warrant throttling.

- A login from a new IP, new device, and impossible travel pattern may need an additional challenge.
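The impossible-travel signal in the last bullet can be approximated with a great-circle distance check. This is a minimal sketch, assuming you already have approximate coordinates for each login (for example from IP geolocation, which is noisy); the function names and the 900 km/h cutoff are illustrative assumptions, not part of any product API.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    # Clamp to 1.0 to guard against floating-point overshoot near antipodes.
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


def impossible_travel(prev, curr, max_kmh=900.0):
    """prev and curr are (lat, lon, unix_seconds) tuples for two logins."""
    dist = haversine_km(prev[0], prev[1], curr[0], curr[1])
    # Treat gaps under one second as one second to avoid dividing by zero.
    hours = max(curr[2] - prev[2], 1) / 3600.0
    return dist / hours > max_kmh
```

A positive result is best treated as one input to a step-up decision rather than a block, since geolocation errors and VPN use can produce the same pattern for real users.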

This is where bot detection becomes more than “show a puzzle.” The challenge is just one signal. What matters is whether the browser, app, and server all agree that the event is coming from a legitimate client.

Signals that matter most

Strong bot detection in social media usually combines several signal classes. None of these is perfect on its own, but together they raise the cost of abuse.

1) Request authenticity

You want to know whether a request came from your real frontend or a script replaying your endpoints. A server-validated token helps here because the backend can verify that the client obtained a fresh proof from your challenge flow.

With CaptchaLa, validation happens server-side with:

    POST https://apiv1.captcha.la/v1/validate
    # Body: { pass_token, client_ip }
    # Headers: X-App-Key, X-App-Secret

That pattern keeps the trust decision on your server instead of in the browser.
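A minimal Python sketch of that server-side call, using only the standard library. The endpoint, body fields, and header names come from the snippet above; the JSON encoding of the body, the `Content-Type` header, and the helper name `build_validate_request` are assumptions. The response format is not described here, so the sketch stops at building the request.

```python
import json
import urllib.request

VALIDATE_URL = "https://apiv1.captcha.la/v1/validate"


def build_validate_request(pass_token: str, client_ip: str,
                           app_key: str, app_secret: str) -> urllib.request.Request:
    """Build (but do not send) the server-side validation request."""
    # Assumption: the body is sent as JSON; check the API docs to confirm.
    body = json.dumps({"pass_token": pass_token, "client_ip": client_ip}).encode("utf-8")
    return urllib.request.Request(
        VALIDATE_URL,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "X-App-Key": app_key,
            "X-App-Secret": app_secret,
        },
    )
```

The request could then be sent with `urllib.request.urlopen(req, timeout=5)`. How to interpret the response depends on the API documentation, so treat any failure or timeout as "not validated" until you know otherwise.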

2) Client and device consistency

A legitimate user usually has some continuity: cookie state, app version, OS/browser fingerprint trends, and realistic timing between actions. Bots often have one or more of the following:

- Rapid repetition with little variance

- Fresh sessions for every action

- Mismatched locale, timezone, and IP geography

- Excessive parallelism from the same subnet or ASN

- Automation artifacts in navigation timing

You do not need to collect everything. In many cases, a small set of stable signals plus rate limits and a server-side token check is enough to separate normal use from abuse.
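As one example of a small, stable signal, the "excessive parallelism from the same subnet" check can be sketched by counting distinct accounts per IPv4 /24. The function names, the /24 grouping, and the threshold of three accounts are illustrative assumptions; real limits depend on your traffic (shared campus or carrier networks legitimately host many users).

```python
import ipaddress
from collections import defaultdict


def accounts_per_subnet(events, prefix_len=24):
    """Map each IPv4 subnet to the set of account IDs seen from it.

    events is an iterable of (account_id, ip_string) pairs.
    """
    seen = defaultdict(set)
    for account_id, ip in events:
        # strict=False accepts a host address and returns its enclosing network.
        net = ipaddress.ip_network(f"{ip}/{prefix_len}", strict=False)
        seen[str(net)].add(account_id)
    return seen


def crowded_subnets(events, max_accounts=3, prefix_len=24):
    """Return {subnet: distinct account count} for subnets above max_accounts."""
    counts = accounts_per_subnet(events, prefix_len)
    return {net: len(ids) for net, ids in counts.items() if len(ids) > max_accounts}
```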

3) Behavioral rhythm

Social interactions are noisy, but they are not random. Humans pause, scroll, backtrack, and make mistakes. Automated systems often create patterns like:

- Perfectly even intervals between actions

- Identical post/comment templates

- Repeated submission after identical delays

- Unnatural navigation from landing page to final action

A practical defense uses these rhythms to decide when to step up friction, not to block immediately. That keeps false positives lower.
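One way to quantify "perfectly even intervals" is the coefficient of variation of the gaps between actions: values near zero mean the timing is suspiciously regular. This is a sketch under assumptions; the function names and the 0.1 cutoff are hypothetical and would need tuning against real traffic.

```python
import statistics


def interval_cv(timestamps):
    """Coefficient of variation of gaps between consecutive timestamps (seconds)."""
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2:
        return None  # not enough data to judge rhythm
    mean = statistics.fmean(gaps)
    if mean <= 0:
        return None  # unordered or identical timestamps; skip rather than guess
    return statistics.pstdev(gaps) / mean


def looks_scripted(timestamps, min_cv=0.1):
    """True when action timing is more regular than min_cv allows."""
    cv = interval_cv(timestamps)
    return cv is not None and cv < min_cv
```

In line with the paragraph above, a `True` result is a reason to step up friction for that session, not to block it outright.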

4) Action sensitivity

A “view” is cheap; a “DM with a link” is expensive. Different actions should carry different protection weights. If you only protect signups, bots may shift to spam comments or fake reactions. If you only protect posting, bots may farm follower counts instead.

A useful rule: protect the actions that change trust, visibility, or cost.
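A simple way to encode that rule is a per-action weight multiplied by a session risk score. The weights, the 0.5 threshold, and the `needs_challenge` helper below are illustrative assumptions, not recommended values.

```python
# Illustrative weights only: higher means the action changes more trust,
# visibility, or cost.
ACTION_WEIGHTS = {
    "view": 0.0,
    "like": 0.2,
    "follow": 0.4,
    "comment": 0.6,
    "register": 0.8,
    "dm_with_link": 1.0,
}


def needs_challenge(action, session_risk, threshold=0.5):
    """Challenge when action weight times session risk (0.0-1.0) reaches the threshold."""
    # Unknown actions are treated as maximally sensitive until classified.
    weight = ACTION_WEIGHTS.get(action, 1.0)
    return weight * session_risk >= threshold
```

With these numbers a risky session can still view content freely, while the same session sending a DM with a link is challenged.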

How to design the defense layer

A good implementation is usually a chain, not a single gate.

1. Issue a client challenge when risk is non-zero. For web, mobile, and desktop apps, use native SDKs where available. CaptchaLa supports Web (JS/Vue/React), iOS, Android, Flutter, and Electron, plus native SDKs for integration across those surfaces.

2. Return a short-lived proof token. The frontend obtains a `pass_token` only after the challenge is satisfied.

3. Validate on your backend. Your API should verify the token with the client IP and your app credentials before allowing the sensitive action.

4. Escalate only when needed. If the token is missing, expired, duplicated, or tied to suspicious behavior, raise friction: rate limit, additional verification, or temporary hold.

5. Log outcomes for tuning. Store the action, risk reason, outcome, and false-positive reviews so you can refine thresholds over time.
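The "duplicated token" case in the escalation step can be handled with a small replay guard on your own backend. This is a sketch under assumptions: the class name, the 120-second TTL, and the in-memory dictionary are hypothetical, and whether the validation service itself rejects reused tokens is not stated here.

```python
import time


class TokenReplayGuard:
    """Remember recently used tokens so a duplicate can be rejected."""

    def __init__(self, ttl_seconds=120.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable for testing
        self._seen = {}  # token -> time after which it can be forgotten

    def first_use(self, token):
        """Return True the first time a token is seen within the TTL."""
        now = self.clock()
        # Drop entries whose TTL has passed so the cache stays bounded.
        self._seen = {t: exp for t, exp in self._seen.items() if exp > now}
        if token in self._seen:
            return False
        self._seen[token] = now + self.ttl
        return True
```

The TTL should be at least as long as the token lifetime, so that a token forgotten by the guard has already expired. A multi-instance deployment would need a shared store instead of a per-process dictionary.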

A simple flow can look like this:

    User action -> risk check -> challenge if needed -> server validate -> allow / rate limit / escalate

And if you need a server-triggered proof step for certain workflows, CaptchaLa also provides a server-token issuance endpoint:

    POST https://apiv1.captcha.la/v1/server/challenge/issue

That is useful when the backend wants to initiate a challenge as part of a protected flow rather than waiting for the client to request one first.

Example decision rules

    if action == "register" and ip_reputation is low:
        require_challenge()

    if action == "comment" and burst_rate > threshold:
        require_challenge()

    if action == "dm" and contains_link and account_age < 1 day:
        step_up_review()

    if token_valid and behavior_normal:
        allow()

The key is to keep the system understandable. When the logic is too opaque, support teams struggle to explain why a legitimate creator, moderator, or power user got blocked.
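The rules above can be turned into a runnable Python sketch that also returns a human-readable reason, which helps with the explainability concern. The `Event` fields, the default burst threshold, the rule ordering, and the fallbacks (rate limiting on abnormal behavior, allowing when no rule matches) are assumptions added for illustration; the original rules do not specify them.

```python
from dataclasses import dataclass


@dataclass
class Event:
    action: str
    ip_reputation_low: bool = False
    burst_rate: float = 0.0        # actions per minute (assumed unit)
    contains_link: bool = False
    account_age_days: float = 365.0
    token_valid: bool = False
    behavior_normal: bool = True


def decide(e, burst_threshold=5.0):
    """Return (decision, reason) so support staff can explain an outcome."""
    if e.action == "dm" and e.contains_link and e.account_age_days < 1:
        return "step_up_review", "link in DM from account younger than 1 day"
    if not e.behavior_normal:
        # Assumption: abnormal behavior is throttled even with a valid token.
        return "rate_limit", "behavior flagged as abnormal"
    if e.token_valid:
        return "allow", "valid token and normal behavior"
    if e.action == "register" and e.ip_reputation_low:
        return "require_challenge", "registration from low-reputation IP"
    if e.action == "comment" and e.burst_rate > burst_threshold:
        return "require_challenge", "comment burst above threshold"
    # Assumption: low-risk actions proceed without a challenge.
    return "allow", "no risk rule matched"
```

Logging the reason string alongside the decision gives moderators and support a direct answer when a user asks why they were challenged.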

How major CAPTCHA options compare

Different platforms solve different parts of the problem. Here’s a practical, defender-focused comparison:

| Tool | Typical strength | Tradeoff | Best fit |
|---|---|---|---|
| reCAPTCHA | Broad familiarity, strong ecosystem | Can add friction; integration choices vary | General web abuse checks |
| hCaptcha | Flexible and widely used | May still require tuning for UX | Public-facing forms and signups |
| Cloudflare Turnstile | Low-friction web challenge | Best when you already use Cloudflare | Web traffic with edge protection |
| CaptchaLa | Multi-platform support, server validation, first-party data only | Requires your backend to validate flows | Social apps spanning web, mobile, and desktop |

There is no single winner for every product. If your social app is web-only and already sits behind Cloudflare, Turnstile can be convenient. If you need multi-platform support or a tighter app-server validation loop, look at the implementation details closely. CaptchaLa is one option that fits teams wanting a compact API surface and native SDK support across several clients.

Practical deployment tips for social apps

A few choices make a big difference in production:

- Protect the highest-risk actions first. Registration, login, password reset, invite creation, and messaging usually matter more than passive engagement.

- Use progressive friction. Start with silent checks, then challenge, then rate limit, then temporary restriction.

- Keep tokens short-lived. A stale proof should not unlock a fresh sensitive action.

- Tie validation to the server. Client-side checks alone are easy to replay.

- Review false positives weekly. Social apps change fast; your thresholds should too.

- Avoid over-collecting data. CaptchaLa’s approach uses first-party data only, which helps teams stay disciplined about what they store and why.
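The progressive-friction tip can be sketched as a ladder indexed by how many recent risk flags an account has accumulated. The rung names follow the bullet above; the `friction_for` helper and the idea of counting "strikes" are assumptions for illustration.

```python
FRICTION_LADDER = ["silent_check", "challenge", "rate_limit", "temporary_restriction"]


def friction_for(strikes):
    """Pick a rung from the number of recent risk flags on an account."""
    # Clamp so negative counts map to the first rung and large counts to the last.
    index = min(max(strikes, 0), len(FRICTION_LADDER) - 1)
    return FRICTION_LADDER[index]
```

Letting strikes decay over time (not shown) keeps a one-off false positive from following a legitimate user indefinitely.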

For teams that want concrete implementation guidance, the docs cover the validation flow, SDK setup, and backend integration patterns. If you’re planning usage tiers, the pricing page shows a free tier of 1,000 requests per month, with Pro plans aimed at roughly 50K-200K requests and Business at around 1M.

A sensible starting architecture

If you are building bot detection in social media from scratch, start with this sequence:

- Add rate limits to registration, login, comments, and DMs.

- Instrument device, session, and action timing signals.

- Add a challenge only when risk crosses a threshold.

- Validate every token on the server.

- Review abuse samples and tune thresholds monthly.

- Expand coverage to mobile and desktop clients once web is stable.
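For the first step in that sequence, a sliding-window rate limiter is often enough to start with. This is a minimal in-memory sketch; the class name, the key shape, and the limits used in the usage note are assumptions, and a production deployment would typically back it with a shared store.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_events per key within any window_seconds span."""

    def __init__(self, max_events, window_seconds, clock=time.monotonic):
        self.max_events = max_events
        self.window = window_seconds
        self.clock = clock  # injectable for testing
        self._hits = defaultdict(deque)  # key -> timestamps of allowed events

    def allow(self, key):
        now = self.clock()
        hits = self._hits[key]
        # Discard timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.max_events:
            return False
        hits.append(now)
        return True
```

A key such as `("register", client_ip)` or `("dm", account_id)` lets each protected action carry its own limit, for example `SlidingWindowLimiter(max_events=3, window_seconds=3600)` for registrations per IP.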

That sequence is intentionally boring. Boring is good here. Social abuse often wins when defenses are too complex, too brittle, or too expensive to maintain.

Bot detection in social media works best when it is invisible to normal users and expensive for automated abuse. If you keep the focus on request authenticity, behavior, and action sensitivity, you can reduce spam without turning the platform into a maze.

Where to go next: if you want to compare setup options or see how validation works in practice, start with the docs or review pricing.