A bot detection challenge is an interactive or invisible verification step that helps a site decide whether a visitor is likely a human or an automated script. Used well, it blocks abuse like credential stuffing, signup spam, scraping, and ticket fraud without forcing every legitimate user through a painful hurdle.

The key idea is simple: don’t wait until abuse has already damaged your systems. Put a challenge in front of risky actions, evaluate signals quickly, and only escalate when the traffic looks suspicious. That keeps friction low for real users while making automation more expensive and less reliable.

What a bot detection challenge actually does

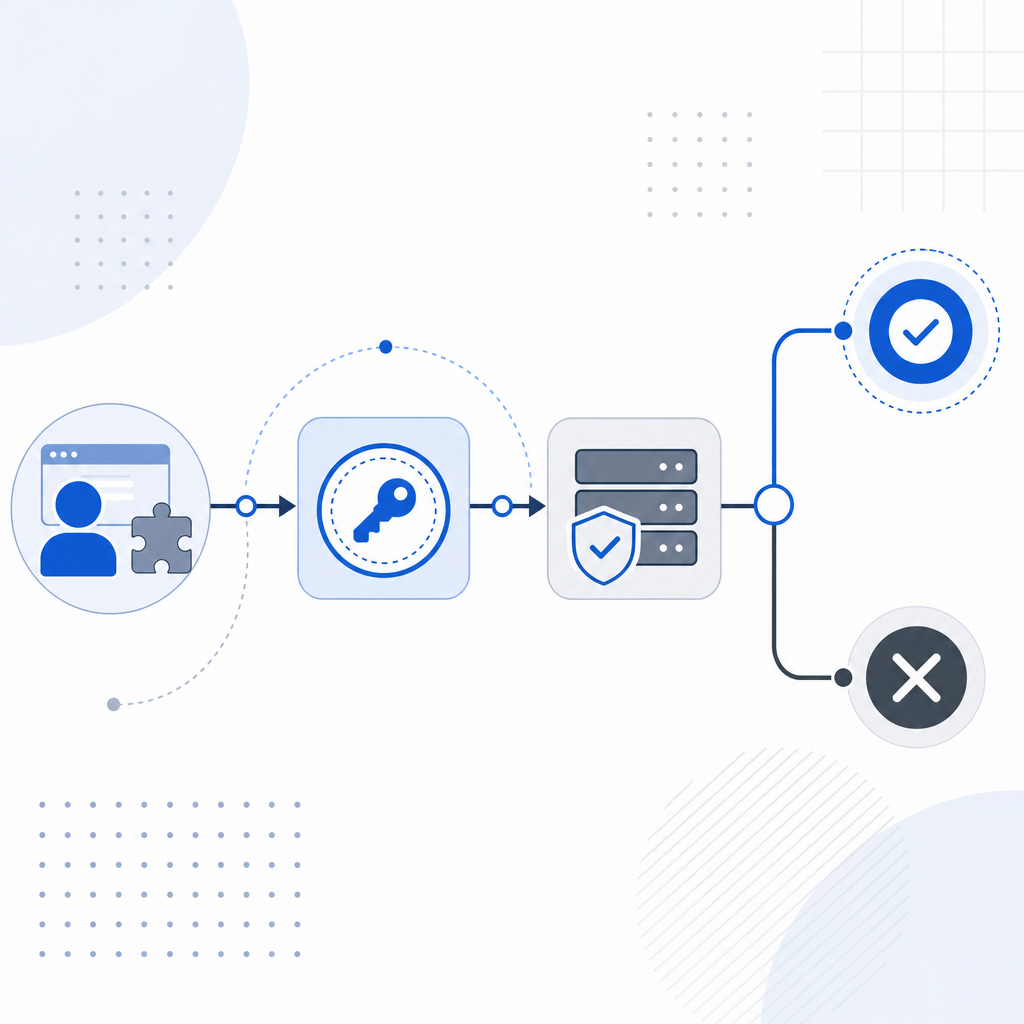

A bot detection challenge sits between a visitor’s request and your protected action. Depending on the product and risk level, it may be visible, invisible, or adaptive. Some challenges ask the user to complete a puzzle or checkbox; others rely on device, network, and behavioral signals; others do both and only show a challenge when the score is uncertain.

At a practical level, the workflow usually looks like this:

- The client loads a challenge widget or script.

- The visitor interacts with it, or the system evaluates signals in the background.

- A short-lived token is issued if the visitor passes.

- Your backend validates that token before allowing the action.

- If the token is missing, expired, or suspicious, the request is blocked or escalated.

For example, CaptchaLa uses a client-side loader and server-side validation flow that fits this pattern well. The client can load from https://cdn.captcha-cdn.net/captchala-loader.js, then your server validates the returned pass token with POST https://apiv1.captcha.la/v1/validate using X-App-Key and X-App-Secret. That separation matters because the real decision should happen on the server, not just in the browser.

Why this matters for defenders

If you’re only checking IP reputation or rate limits, modern automation can still slip through by rotating infrastructure, mimicking browsers, or staying just under thresholds. A bot detection challenge adds a proof step: the request has to demonstrate something that is harder to fake at scale.

That doesn’t mean every visitor should be challenged. The best systems use risk-based logic. Low-risk users glide through; suspicious traffic gets probed more aggressively. This is especially useful on signup forms, password reset endpoints, login pages, checkout flows, and content submission forms.
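To make the risk-based idea concrete, here is a minimal sketch of an escalation decision. The 0 to 1 score range, both thresholds, and the `decideAction` name are illustrative assumptions rather than part of any vendor API; a real system would derive the score from its own signals and tune the cutoffs against observed traffic.

```javascript
// Hypothetical thresholds on a 0..1 risk score (higher = more suspicious).
// These values are placeholders to tune against your own traffic.
const CHALLENGE_THRESHOLD = 0.3;
const BLOCK_THRESHOLD = 0.9;

// Map a risk score to one of three outcomes: "allow", "challenge", or "block".
function decideAction(riskScore) {
  if (typeof riskScore !== "number" || Number.isNaN(riskScore)) {
    // Missing or malformed score: be cautious and ask for proof.
    return "challenge";
  }
  if (riskScore >= BLOCK_THRESHOLD) return "block";
  if (riskScore >= CHALLENGE_THRESHOLD) return "challenge";
  return "allow";
}
```

With these placeholder values, most visitors pass untouched, a middle band is asked to prove itself, and only the most suspicious traffic is refused outright.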

Where challenges fit in your security stack

A bot detection challenge should not be your only defense. It works best as one layer in a broader abuse-prevention strategy that includes rate limiting, IP and ASN reputation, session validation, device fingerprinting where appropriate, and application-level anomaly detection.

Here’s a useful way to think about placement:

| Control | What it stops | Strength | Limitation |
|---|---|---|---|
| Rate limiting | High-volume floods | Simple and cheap | Easy to evade with distributed traffic |
| Bot detection challenge | Automated abuse and scripted form submits | Adds proof of interaction | Needs careful UX tuning |
| Session checks | Cookie replay and session abuse | Strong for authenticated flows | Doesn’t help much pre-login |
| Behavioral signals | Headless and scripted activity | Good at nuanced detection | Requires tuning and telemetry |
| Manual review | High-value edge cases | Very accurate | Slow and expensive |

For many teams, the decision is not “challenge or not,” but “where should the challenge appear?” The most effective placements are usually high-risk actions with business impact:

- account creation

- login and password reset

- checkout and payment initiation

- promo code redemption

- form submission on public endpoints

- API endpoints that are not meant for unattended automation
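One way to express placement decisions like these is a small per-action policy table. The sketch below is only an illustration: the action names, the `always` / `on_risk` modes, and the `requiresChallenge` helper are hypothetical, and the `suspicious` flag stands in for whatever your own risk logic produces.

```javascript
// Hypothetical per-action policy table; action names and modes are illustrative.
const CHALLENGE_POLICY = new Map([
  ["signup", "always"],
  ["password_reset", "always"],
  ["login", "on_risk"],
  ["checkout", "on_risk"],
  ["promo_redeem", "on_risk"],
]);

// Decide whether a request for `action` should see a challenge.
// Actions missing from the table are never challenged in this sketch.
function requiresChallenge(action, suspicious) {
  const mode = CHALLENGE_POLICY.get(action) ?? "never";
  if (mode === "always") return true;
  if (mode === "on_risk") return suspicious === true;
  return false;
}
```

Keeping the policy in one table makes it easier to review which actions are protected and to change a mode without touching handler code.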

If you use CaptchaLa, it can be integrated across web and mobile surfaces: Web with JS, Vue, and React; iOS and Android native SDKs; Flutter; and Electron. That matters when abuse crosses platforms and you need the same policy everywhere, not a different solution for each client type.

Objective comparison with common options

Different products lean toward different tradeoffs:

- reCAPTCHA is widely deployed and familiar to most users, but many teams want tighter control over data handling and user experience.

- hCaptcha is often chosen as a CAPTCHA-style alternative with its own risk model and challenge format.

- Cloudflare Turnstile emphasizes low-friction verification and can be attractive if you already rely on Cloudflare infrastructure.

The right choice depends on your constraints: UX tolerance, integration depth, policy requirements, privacy posture, and how much first-party control you want over the verification path.

Implementation details that matter

A bot detection challenge is only as good as its implementation. Weak integration can turn a strong anti-abuse idea into a bypassable checkbox. Strong integration makes the challenge part of your backend authorization logic.

Here are the technical details that deserve attention:

- Use short-lived verification tokens. Treat pass tokens as one-time or very short-lived artifacts. If a token can be replayed for too long, automation can reuse it.

- Validate on the server. The browser can be tampered with. Your backend should call the validation endpoint and decide whether the action is allowed.

- Bind validation to request context. Include the client IP when appropriate, and compare it against session metadata or other request signals.

- Escalate only on risk. Don’t challenge every user at every step. Trigger challenges when behavior crosses a threshold.

- Log outcomes carefully. Store pass/fail results, action type, and risk context so you can tune policy later.

- Respect regional and product constraints. If you need a first-party data posture, choose a provider and integration path that aligns with it.
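As one way to act on the short-lived token advice above, the sketch below keeps a small in-memory record of tokens that have already been redeemed, so a replayed token is rejected locally. The `redeemOnce` name, the two-minute window, and the in-memory `Map` are all assumptions for illustration. The provider may already enforce single use on its side, the sketch relies on the provider rejecting tokens older than the window, and a multi-server deployment would need a shared store instead.

```javascript
// In-memory record of redeemed tokens: token -> time it was first seen (ms).
// Assumption: a single process; multiple servers would need a shared store.
const seenTokens = new Map();
const TOKEN_TTL_MS = 2 * 60 * 1000; // hypothetical 2-minute window

// Returns true the first time a token is presented,
// and false for an empty token or a replay within the window.
function redeemOnce(token, now = Date.now()) {
  // Drop entries older than the window so the map does not grow forever.
  for (const [seen, at] of seenTokens) {
    if (now - at > TOKEN_TTL_MS) seenTokens.delete(seen);
  }
  if (typeof token !== "string" || token.length === 0) return false;
  if (seenTokens.has(token)) return false;
  seenTokens.set(token, now);
  return true;
}
```

A handler would typically call `redeemOnce(passToken)` alongside the provider's validation call and reject the request when either check fails.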

A minimal server-side flow can look like this:

```javascript
// Example: verify a challenge token before allowing sign-up.
// Resolves to true only when the validation endpoint confirms the token.
async function verifyChallenge(passToken, clientIp) {
  const response = await fetch("https://apiv1.captcha.la/v1/validate", {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      "X-App-Key": process.env.CAPTCHALA_APP_KEY,
      "X-App-Secret": process.env.CAPTCHALA_APP_SECRET
    },
    body: JSON.stringify({
      pass_token: passToken,
      client_ip: clientIp
    })
  });

  // Treat any non-2xx response as a failed verification.
  if (!response.ok) return false;

  const data = await response.json();
  return data?.success === true;
}
```

CaptchaLa also supports server-token issuance through POST https://apiv1.captcha.la/v1/server/challenge/issue, which is useful when your backend needs to initiate or coordinate the challenge flow directly.

If you want to see the integration details, the docs are the right place to start. They’re especially useful when you’re deciding which SDK fits your stack: Maven la.captcha:captchala:1.0.2, CocoaPods Captchala 1.0.2, pub.dev captchala 1.3.2, plus server SDKs captchala-php and captchala-go.

Common mistakes teams make

The most common mistake is treating the challenge as a UI decoration instead of a security control. If the backend accepts the request even when validation fails, you don’t actually have protection.
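A fail-closed gate is one way to avoid that mistake. In the sketch below, `guardedAction`, the injected `verify` function, and the response shape are hypothetical names chosen for illustration; `verify` stands for any async check that resolves to true only for a valid token, such as a wrapper around your provider's validation call.

```javascript
// Run `action` only when `verify` confirms the token.
// Any failure, including a thrown error, results in a refusal.
async function guardedAction(passToken, verify, action) {
  let ok = false;
  try {
    ok = (await verify(passToken)) === true;
  } catch {
    // Network or provider errors count as a failed check (fail closed).
    ok = false;
  }
  if (!ok) return { status: 403, body: "challenge required" };
  return { status: 200, body: await action() };
}
```

The point of the design is that the protected action is unreachable unless verification positively succeeds; whether an outage should fail closed or fall back to another control is a policy choice for each team.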

Other mistakes include:

- making the challenge too frequent, which hurts conversions and frustrates legitimate users

- relying only on visible puzzles, which can be noisier than necessary

- failing to refresh expired tokens

- not separating anonymous and authenticated policies

- ignoring mobile and desktop app surfaces where abuse also occurs

- choosing a solution without checking how it handles your data and verification requirements

A good rule of thumb: if you can remove the challenge and your abuse rate barely changes, the challenge is probably not wired deeply enough into the control plane. If you add it everywhere and conversions collapse, the policy is too blunt. The goal is a measured middle ground.

Teams that need a modest starting point often begin with a free tier and expand as traffic grows. CaptchaLa’s published tiers include 1000/month on free, 50K-200K on Pro, and 1M on Business, which gives teams room to start small and tune policy before widening deployment. You can compare options on pricing.

How to decide if you need one

A bot detection challenge is worth adding when abuse is creating real cost: support burden, fake accounts, credential attacks, spam, inventory pressure, or API overuse. It is less useful when your traffic is already trusted and the protected action is low risk.

Ask these questions:

- Is the endpoint public or reachable before authentication?

- Would a scripted request cause measurable harm?

- Can rate limits alone stop the abuse?

- Do you need a better human-vs-bot proof step than a simple checkbox?

- Will a small amount of friction be acceptable at the point of risk?

If the answer to the first two is yes and rate limits alone cannot stop the abuse, a challenge is usually a good candidate. If friction at the point of risk is not acceptable, you may need a more invisible approach or a challenge only for suspicious sessions.

Where to go next: review the docs for implementation guidance, or check pricing if you’re evaluating the right tier for your traffic and risk profile.