Bot detection in Akamai is usually about combining traffic intelligence, behavior signals, and edge-based rules to separate real users from automated abuse. If you’re evaluating it, the practical question is less “does it detect bots?” and more “how much control do we have over false positives, integration depth, and data privacy?”

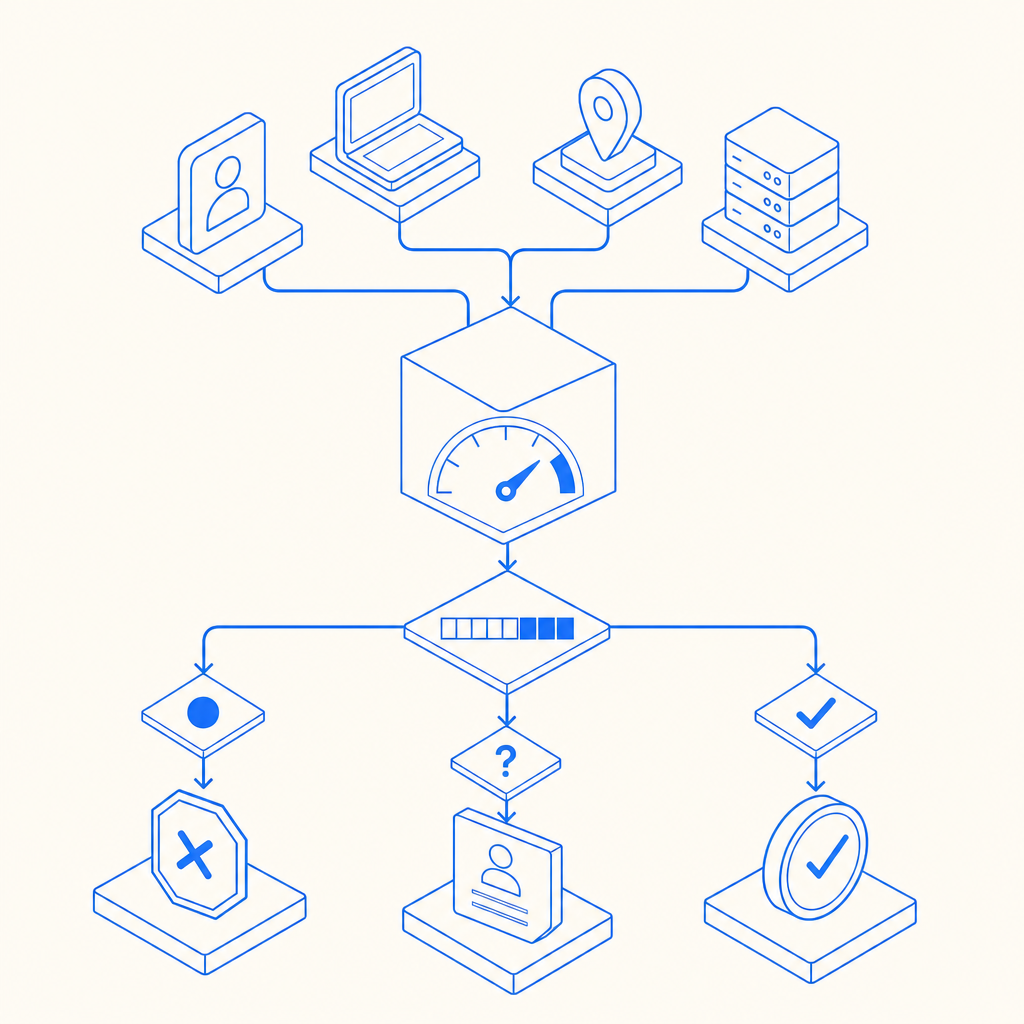

That framing matters because bot traffic rarely looks like one thing. You may be dealing with credential stuffing, signup abuse, carding, scraping, or scripted checkout fraud, and each one needs a slightly different defensive posture. A good evaluation starts with how the system sees traffic, how it scores risk, and how easy it is to act on that decision without slowing real users down.

What “bot detection Akamai” usually means

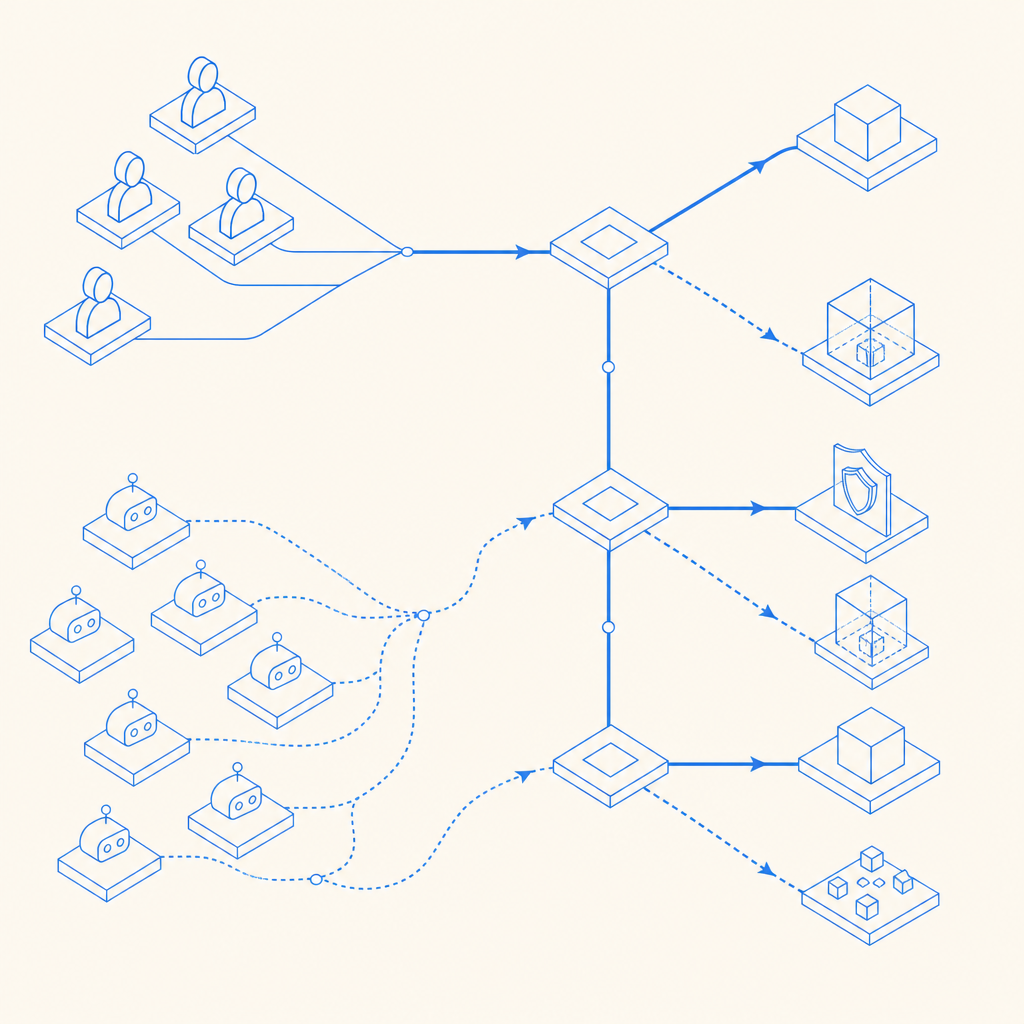

When teams say “bot detection Akamai,” they’re often referring to a layered defense model at the CDN or edge: requests are observed before they reach application logic, then scored or challenged based on patterns such as request cadence, IP reputation, header consistency, browser fingerprints, and interaction anomalies. That can be effective because the earlier you stop abuse, the less load it creates downstream.
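Request cadence is the easiest of these signals to illustrate. Below is a minimal, hypothetical sliding-window counter in Python. It is not Akamai's implementation. The CadenceTracker name and the default of 10 requests per second are assumptions chosen only for illustration.

```python
from collections import defaultdict, deque


class CadenceTracker:
    """Flags a client that sends more than max_requests within window_seconds.

    Hypothetical sketch: thresholds are illustrative, not vendor defaults.
    """

    def __init__(self, max_requests: int = 10, window_seconds: float = 1.0) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # One timestamp log per client key (for example an IP or session ID).
        self._hits: dict[str, deque[float]] = defaultdict(deque)

    def record(self, client_id: str, timestamp: float) -> bool:
        """Record one request; return True if the client is over the limit."""
        hits = self._hits[client_id]
        hits.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while hits and timestamp - hits[0] > self.window_seconds:
            hits.popleft()
        return len(hits) > self.max_requests


tracker = CadenceTracker(max_requests=3, window_seconds=1.0)
flags = [tracker.record("203.0.113.10", t) for t in (0.0, 0.1, 0.2, 0.3)]
# flags == [False, False, False, True]
```

A process-local log like this grows with the number of active clients. A real edge deployment would need bounded, shared storage for the same idea.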

The main advantage of edge-oriented detection is scale. It can absorb noisy, high-volume attacks and make decisions close to the source. The tradeoff is that any system relying heavily on edge telemetry can become opaque if you need to understand exactly why a session was flagged. For product teams, that opacity can make tuning and incident response harder than expected.
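One way to reduce that opacity on your own side is to store the reasons next to the score. The following sketch is hypothetical. The signal fields and point weights are illustrative assumptions, not values any vendor publishes.

```python
from dataclasses import dataclass, field


@dataclass
class RequestSignals:
    # Hypothetical signal set; real edge products expose their own fields.
    requests_per_second: float
    ip_on_denylist: bool
    user_agent_matches_client_hints: bool
    has_pointer_events: bool


@dataclass
class RiskResult:
    score: int = 0  # 0-100 risk points
    reasons: list[str] = field(default_factory=list)


def score_request(s: RequestSignals) -> RiskResult:
    """Additive score that records why each point was added."""
    result = RiskResult()
    if s.requests_per_second > 5:
        result.score += 30
        result.reasons.append("high request cadence")
    if s.ip_on_denylist:
        result.score += 40
        result.reasons.append("IP reputation")
    if not s.user_agent_matches_client_hints:
        result.score += 20
        result.reasons.append("inconsistent headers")
    if not s.has_pointer_events:
        result.score += 10
        result.reasons.append("no interaction events")
    return result


result = score_request(RequestSignals(
    requests_per_second=12.0,
    ip_on_denylist=False,
    user_agent_matches_client_hints=False,
    has_pointer_events=True,
))
# result.score == 50
# result.reasons == ["high request cadence", "inconsistent headers"]
```

When a session is flagged, the reasons list tells support and security staff which signal fired.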

A practical way to think about bot defense is by outcome:

- Block: stop obviously abusive traffic immediately.

- Challenge: ask for proof of humanity when confidence is medium.

- Monitor: log suspicious behavior for later review.

- Score: feed a risk value into login, signup, or checkout logic.

The right mix depends on your risk tolerance. For example, a banking login flow may prefer aggressive challenge logic, while a publishing site may prioritize low friction and only intervene when scraping patterns emerge.
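To make the outcome model concrete, here is a minimal sketch that maps a 0-100 risk score to an outcome per flow. The flow names and thresholds are assumptions for illustration. The login policy challenges early, and the article policy intervenes only at high scores.

```python
from enum import Enum


class Outcome(Enum):
    ALLOW = "allow"
    MONITOR = "monitor"
    CHALLENGE = "challenge"
    BLOCK = "block"


# Hypothetical thresholds on a 0-100 risk score: (monitor_at, challenge_at, block_at).
POLICIES = {
    "banking_login": (10, 30, 80),
    "article_page": (40, 85, 95),
}


def decide(flow: str, risk_score: int) -> Outcome:
    """Pick the strongest outcome whose threshold the score reaches."""
    monitor_at, challenge_at, block_at = POLICIES[flow]
    if risk_score >= block_at:
        return Outcome.BLOCK
    if risk_score >= challenge_at:
        return Outcome.CHALLENGE
    if risk_score >= monitor_at:
        return Outcome.MONITOR
    return Outcome.ALLOW
```

With these illustrative numbers, a score of 35 triggers a challenge on banking_login but passes untouched on article_page. That gap reflects the difference in risk tolerance between the two flows.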

How to compare it with CAPTCHA-based approaches

Akamai-style bot management and CAPTCHA systems solve overlapping problems, but they aren’t interchangeable. Bot management tries to infer automation from many signals; CAPTCHA asks a user to prove they can complete a task that is hard to automate reliably. In practice, many teams use both: bot detection to decide when to intervene, and CAPTCHA as one of the intervention methods.

Here’s a simple comparison:

| Capability | Edge bot detection | CAPTCHA challenge |
|---|---|---|
| Passive traffic scoring | Yes | Limited |
| User proof-of-humanity | Indirect | Yes |
| Protection before app logic | Yes | Sometimes, if loaded early |
| Ease of UX tuning | Moderate | Moderate to high |
| Works well for login/signup abuse | Yes | Yes |
| Helps reduce scraping | Yes | Sometimes |
| Requires strong frontend integration | Sometimes | Usually |
| Useful as a fallback | Yes | Yes |

For teams evaluating CaptchaLa, the key question is whether you want a challenge layer that plugs cleanly into your existing application decisioning. CaptchaLa supports 8 UI languages and native SDKs for Web (JS, Vue, React), iOS, Android, Flutter, and Electron, which makes it easier to keep the challenge experience consistent across platforms. That consistency matters when you want to challenge suspicious sessions without fragmenting your UX.

A second difference is data handling. Some teams want broad behavioral telemetry; others want a narrower model based on first-party data only. If your privacy posture is strict, that boundary is worth paying attention to before you decide how much external signal you want in the loop.

What to evaluate before you commit

If you are comparing Akamai-style bot detection with a CAPTCHA layer or another bot-defense tool, don’t start with pricing alone. Start with the abuse cases you need to stop and the evidence you need to trust the decision.

1) Signal quality and false positives

Ask how the system behaves under real user diversity: older browsers, assistive technologies, mobile networks, corporate proxies, and international traffic. A strong detector is not just aggressive; it is calibrated. False positives become expensive fast when they hit signup, password reset, or checkout.
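Calibration is easier to discuss with per-segment numbers. This sketch assumes you have a labeled sample of sessions, each recorded as (segment, risk score, whether it was a bot). It computes the share of human sessions in each segment that a given threshold would flag. The segment names and sample data are toy values, not measurements.

```python
from collections import defaultdict
from collections.abc import Iterable


def false_positive_rate_by_segment(
    samples: Iterable[tuple[str, int, bool]], threshold: int
) -> dict[str, float]:
    """Share of known-human sessions per segment whose score reaches threshold."""
    flagged: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for segment, score, is_bot in samples:
        if is_bot:
            continue  # only human sessions can produce false positives
        total[segment] += 1
        if score >= threshold:
            flagged[segment] += 1
    return {seg: flagged[seg] / total[seg] for seg in total}


# Toy labeled sample, for illustration only.
samples = [
    ("desktop", 10, False), ("desktop", 20, False), ("desktop", 90, True),
    ("corporate_proxy", 45, False), ("corporate_proxy", 15, False),
    ("screen_reader", 60, False), ("screen_reader", 5, False),
]
rates = false_positive_rate_by_segment(samples, threshold=40)
# rates == {"desktop": 0.0, "corporate_proxy": 0.5, "screen_reader": 0.5}
```

A single global false-positive rate can look acceptable while one segment, such as assistive-technology users, carries most of the errors. Breaking the rate out by segment exposes that.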

2) Integration path

You want to know how quickly the product can be wired into your stack. With CaptchaLa, the server-side validation pattern is straightforward. Your backend sends a POST request to https://apiv1.captcha.la/v1/validate with a JSON body containing pass_token and client_ip, and it authenticates with the X-App-Key and X-App-Secret headers. If you need a server-issued challenge flow, there is also a POST https://apiv1.captcha.la/v1/server/challenge/issue endpoint.

That sort of API clarity matters because it lets engineering teams place verification exactly where they need it: on login, registration, checkout, or any sensitive endpoint that should not trust the browser alone.
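Here is a minimal Python sketch of the validation call described above, using only the standard library. The endpoint, headers, and body fields come from the description. The Content-Type header, the function names, and the timeout are assumptions. The response schema is not described here, so the sketch returns the decoded JSON and leaves the verdict check to you. Consult the docs for the actual field.

```python
import json
import urllib.request

VALIDATE_URL = "https://apiv1.captcha.la/v1/validate"


def build_validate_request(pass_token: str, client_ip: str,
                           app_key: str, app_secret: str) -> urllib.request.Request:
    """Build the POST request; credentials should come from server-side config."""
    body = json.dumps({"pass_token": pass_token, "client_ip": client_ip}).encode("utf-8")
    return urllib.request.Request(
        VALIDATE_URL,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",  # assumption: JSON request body
            "X-App-Key": app_key,
            "X-App-Secret": app_secret,
        },
    )


def validate(pass_token: str, client_ip: str, app_key: str, app_secret: str,
             timeout: float = 5.0) -> dict:
    """Send the request and return the decoded JSON response (schema not assumed)."""
    req = build_validate_request(pass_token, client_ip, app_key, app_secret)
    # urlopen raises on network errors and non-2xx responses.
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)
```

Errors and timeouts propagate as exceptions. This lets the caller choose to fail closed on sensitive actions such as password reset or checkout, rather than silently treating an unverified token as valid.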

Example server-side validation flow:

1. Receive pass_token from the client.
2. Collect the client's IP address.
3. POST both to the validation endpoint.
4. Check the verdict before allowing the action.

```http
POST /v1/validate HTTP/1.1
Host: apiv1.captcha.la
Content-Type: application/json
X-App-Key: your_key
X-App-Secret: your_secret

{
  "pass_token": "token_from_client",
  "client_ip": "203.0.113.10"
}
```

3) Platform coverage

If your application spans web and mobile, you should not be forced into separate security experiences. CaptchaLa’s native SDK support covers Web, iOS, Android, Flutter, and Electron, with package options such as Maven la.captcha:captchala:1.0.2, CocoaPods Captchala 1.0.2, and pub.dev captchala 1.3.2. For backend teams, server SDKs are available as captchala-php and captchala-go.

4) Operational transparency

A defense system should be measurable. You need to know how often users are challenged, how often those challenges are passed, and where friction is concentrated. If a vendor cannot explain the decision path in business terms, you may end up with security that is hard to tune and even harder to defend internally.
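Your own event logs can supply those numbers. The sketch below assumes a hypothetical log of (endpoint, result) pairs, where result is one of the illustrative labels "allowed", "challenged_passed", or "challenged_failed". For each endpoint, it computes how often users are challenged and how often challenged users pass.

```python
from collections import Counter
from collections.abc import Iterable
from typing import Optional


def challenge_metrics(
    events: Iterable[tuple[str, str]]
) -> dict[str, dict[str, Optional[float]]]:
    """Per-endpoint challenge rate and pass rate from (endpoint, result) events."""
    counts: dict[str, Counter] = {}
    for endpoint, result in events:
        counts.setdefault(endpoint, Counter())[result] += 1
    report: dict[str, dict[str, Optional[float]]] = {}
    for endpoint, c in counts.items():
        total = sum(c.values())
        challenged = c["challenged_passed"] + c["challenged_failed"]
        report[endpoint] = {
            "challenge_rate": challenged / total,
            # None when nothing was challenged, so "no data" is not read as 0%.
            "pass_rate": c["challenged_passed"] / challenged if challenged else None,
        }
    return report
```

A high challenge rate combined with a high pass rate on one endpoint suggests that real users are absorbing the friction there and the threshold may need tuning.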

A practical decision framework

If you’re choosing between Akamai-style bot detection controls and a CAPTCHA-centric layer, use the problem you are solving to guide the architecture:

- If abuse is mostly volumetric or highly automated, edge detection is valuable because it can suppress traffic early.

- If abuse targets specific app actions, a challenge system placed at the action boundary can be easier to reason about.

- If you need both, use scoring upstream and challenge downstream.

- If you care about rollout speed, look for SDKs and APIs that fit your existing stack without a redesign.

- If you need privacy restraint, prioritize first-party data and clear validation boundaries.
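The "scoring upstream, challenge downstream" pattern can be sketched as a single gate at the action boundary. This sketch assumes your edge layer forwards a numeric risk score to the origin; how it does so is product-specific and not shown. The token check is injected as a callable, so it can wrap the /v1/validate request or a test stub. The thresholds and return labels are hypothetical.

```python
from typing import Callable, Optional


def gate_sensitive_action(edge_score: int, pass_token: Optional[str], client_ip: str,
                          verify: Callable[[str, str], bool],
                          challenge_at: int = 30, block_at: int = 90) -> str:
    """Combine an upstream risk score with a downstream challenge.

    Returns "allow", "challenge" (ask the client to solve one), or "deny".
    """
    if edge_score >= block_at:
        return "deny"       # clearly abusive: stop before app logic
    if edge_score < challenge_at:
        return "allow"      # low risk: no added friction
    if pass_token is None:
        return "challenge"  # medium risk and no proof yet
    # Medium risk with a token: trust only a server-side verification.
    return "allow" if verify(pass_token, client_ip) else "deny"
```

Keeping verification on the server means the browser's claim of having passed a challenge is never trusted on its own. Most low-risk traffic reaches the action without seeing a challenge.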

This is also where other options fit. reCAPTCHA, hCaptcha, and Cloudflare Turnstile are all widely used, and each has different tradeoffs around UX, data collection, and deployment style. The right choice depends on whether your main constraint is friction, visibility, privacy, or operational overhead.

For teams that want a smaller, more direct challenge layer rather than a broad network-security suite, a product like CaptchaLa can be a straightforward fit. Its docs cover implementation details and integration patterns, and they are the best place to compare web and server-side flows before you wire anything into production.

Where to go next: if you’re mapping out bot defense for login, signup, or checkout, start with the implementation guide in the docs, then review pricing to match your traffic volume and rollout plan.