Bot detection is the practice of deciding whether a request, session, or account interaction is likely human or automated, then applying the right response before abuse turns into fraud, scraping, signup spam, or inventory loss. The goal is not to “spot bots” in the abstract; it is to reduce risk with enough confidence that real users still move quickly.

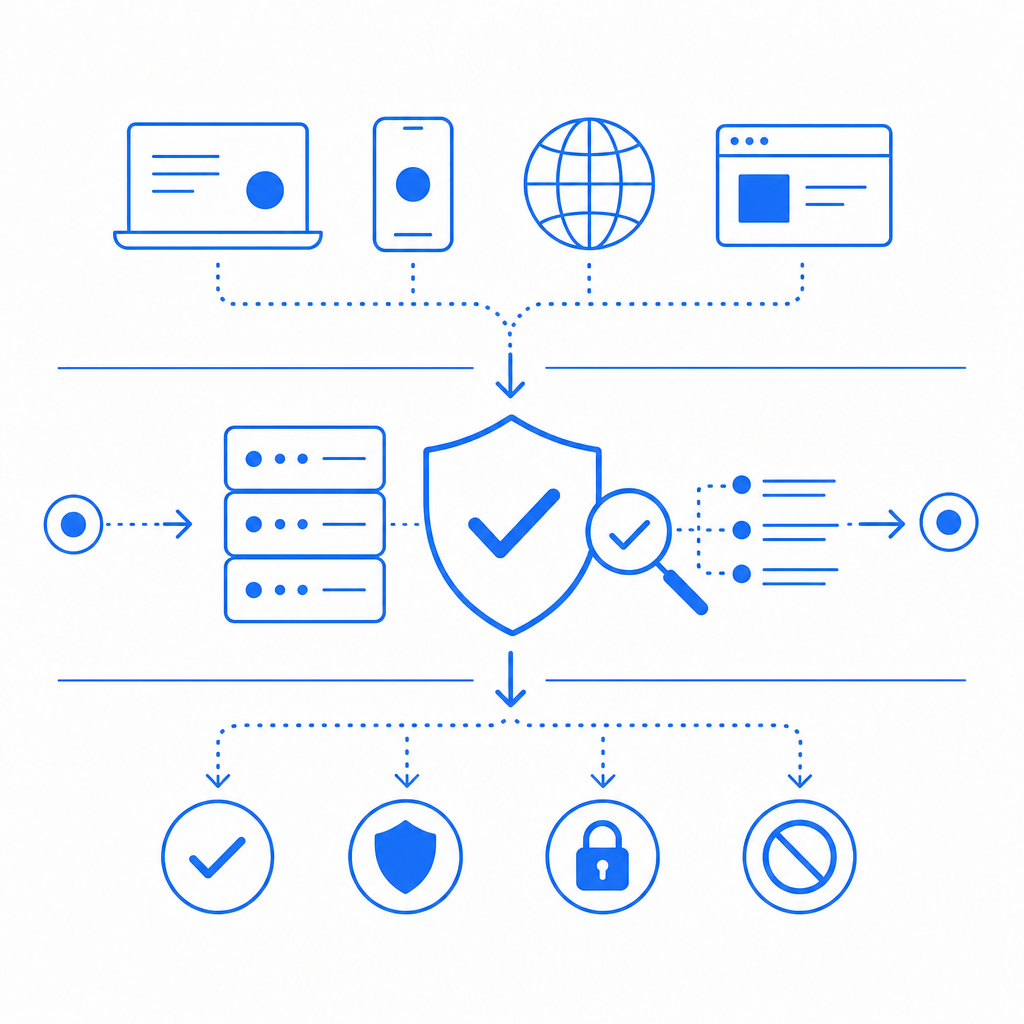

That means good bot detection is usually a layered system: client-side signals, server-side validation, rate and behavior checks, and a response policy that ranges from silent allow to step-up challenge to block. If you only use one signal, you will miss something. If you use too many signals without care, you will frustrate legitimate users. The balance is the whole game.

What bot detection actually looks at

A defender’s job is to distinguish normal human variability from automation. The strongest systems do not depend on a single fingerprint or a single “score.” They combine signals that are hard to fake at scale.

Common signal groups include:

Request integrity

- Are headers, cookies, and tokens consistent?

- Does the request follow the expected flow?

- Is the token fresh and tied to a real session?

Device and environment cues

- Browser capabilities, execution context, and storage support

- Mobile app attestation or SDK-backed session checks

- Whether the request originated from a known automation environment

Behavioral patterns

- Typing cadence, click timing, navigation sequence

- Repeated high-speed actions that exceed normal human throughput

- Unnatural retries across many accounts or IPs

Network and reputation context

- IP range, ASN, datacenter vs. residential patterns

- Velocity from a source over time

- Correlation with known abuse routes

Outcome and feedback

- Did the user complete the action after challenge?

- Did downstream abuse metrics improve or stay flat?

- Are false positives concentrated in a specific geography, device class, or browser?
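One way to picture how these signal groups combine is a weighted score. The sketch below is illustrative only: the group names, the weights, and the 0.5 default for missing signals are all assumptions made for this example, not values from any particular product.

```python
# Hypothetical weights per signal group. Real values would come from tuning
# against your own labeled traffic, not from this sketch.
WEIGHTS = {
    "request_integrity": 0.30,
    "device_environment": 0.20,
    "behavior": 0.25,
    "network_reputation": 0.25,
}


def risk_score(signals: dict) -> float:
    """Combine per-group suspicion values into one weighted score.

    Each value is expected in [0.0, 1.0], where 0.0 means "looks clean" and
    1.0 means "looks automated". A missing group counts as 0.5 (unknown),
    and out-of-range values are clamped.
    """
    total = 0.0
    for group, weight in WEIGHTS.items():
        value = signals.get(group, 0.5)
        value = min(1.0, max(0.0, value))  # clamp to [0, 1]
        total += weight * value
    return total
```

Because the weights sum to 1.0, the result stays in the same 0-to-1 range as the inputs, which makes it easier to set thresholds per endpoint later.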

A practical point: bot detection is strongest when it is integrated into your application flow, not bolted on as an afterthought. For example, a signup form, password reset, ticket checkout, and API endpoint may need different thresholds and different responses.

Client-side checks are useful, but never enough

Client-side checks help you establish that a browser or app instance participated in the interaction. They can verify freshness, continuity, and some execution context. But client-side signals alone should never be treated as absolute proof, because attackers can replay traffic, script browsers, or route through compromised infrastructure.

That is why most production setups pair a client signal with a server-side validation step. A typical flow looks like this:

1. User visits the protected page

2. Your app loads the challenge/loader

3. The client receives a pass token after completing the check

4. Your backend validates that token with the provider

5. Your app allows, challenges again, or blocks based on the response

If you are using CaptchaLa, the loader is served from https://cdn.captcha-cdn.net/captchala-loader.js, and the server validates the result by POSTing to https://apiv1.captcha.la/v1/validate with {pass_token, client_ip} plus X-App-Key and X-App-Secret. That split matters because the browser can present evidence, but the server should make the trust decision.
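A minimal sketch of the server-side validation call, using only the Python standard library. The URL, the two body fields, and the two header names come from the description above; the JSON encoding of the body, the Content-Type header, and the assumption that the response is JSON are guesses for illustration, so check the provider's documentation before relying on them.

```python
import json
import urllib.request

VALIDATE_URL = "https://apiv1.captcha.la/v1/validate"


def build_validate_request(pass_token: str, client_ip: str,
                           app_key: str, app_secret: str) -> urllib.request.Request:
    """Build (but do not send) the POST request for token validation."""
    # Assumption: the endpoint accepts a JSON body with these two fields.
    body = json.dumps({"pass_token": pass_token, "client_ip": client_ip}).encode("utf-8")
    return urllib.request.Request(
        VALIDATE_URL,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",  # assumption, not confirmed
            "X-App-Key": app_key,
            "X-App-Secret": app_secret,
        },
    )


def validate(pass_token: str, client_ip: str,
             app_key: str, app_secret: str, timeout: float = 5.0) -> dict:
    """Send the request and return the decoded response.

    Assumption: the response body is JSON. Its fields are not described
    here, so the caller has to interpret the result.
    """
    request = build_validate_request(pass_token, client_ip, app_key, app_secret)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping request construction separate from sending makes the call easy to unit test without network access, and keeps the app secret on the server where it belongs.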

CaptchaLa also supports a server-token flow via POST https://apiv1.captcha.la/v1/server/challenge/issue, which is useful when you want to trigger a challenge from backend logic rather than purely from the client path.

Comparing common bot-defense options

Different tools solve different parts of the problem. It helps to compare them on integration style, user friction, and how much control you get over policy.

| Solution | Typical strength | Typical tradeoff | Best fit |
|---|---|---|---|
| reCAPTCHA | Broad recognition and familiar patterns | Can feel familiar to attackers and sometimes adds UX friction | Simple website protection and form abuse reduction |
| hCaptcha | Flexible challenge model and strong abuse focus | May require tuning for UX and accessibility goals | High-risk forms and abuse-prone traffic |
| Cloudflare Turnstile | Low-friction checks and easy deployment in Cloudflare-heavy stacks | Best experience when your stack already aligns with Cloudflare | General web protection with minimal user interruption |
| CaptchaLa | Flexible bot-defense flows with app and server validation options | You still need to tune policy to your app’s risk | Products that want control over challenge placement and validation |

The right choice depends less on brand and more on fit: where the check happens, what you need to validate, and how much control your team wants over behavior at the edge or in the app. For example, if you need SDK coverage across web and mobile, CaptchaLa offers native SDKs for Web (JS/Vue/React), iOS, Android, Flutter, and Electron, plus server SDKs like captchala-php and captchala-go.

How to deploy bot detection without annoying users

The best bot detection systems are selective. They do not challenge every visit. They reserve stronger checks for high-risk moments and suspicious behavior.

A sensible rollout usually follows this order:

Protect the most abused endpoints first

- Signup, login, password reset, OTP requests, checkout, search, and scrape-sensitive APIs

- Start with the paths that already generate support tickets or fraud losses

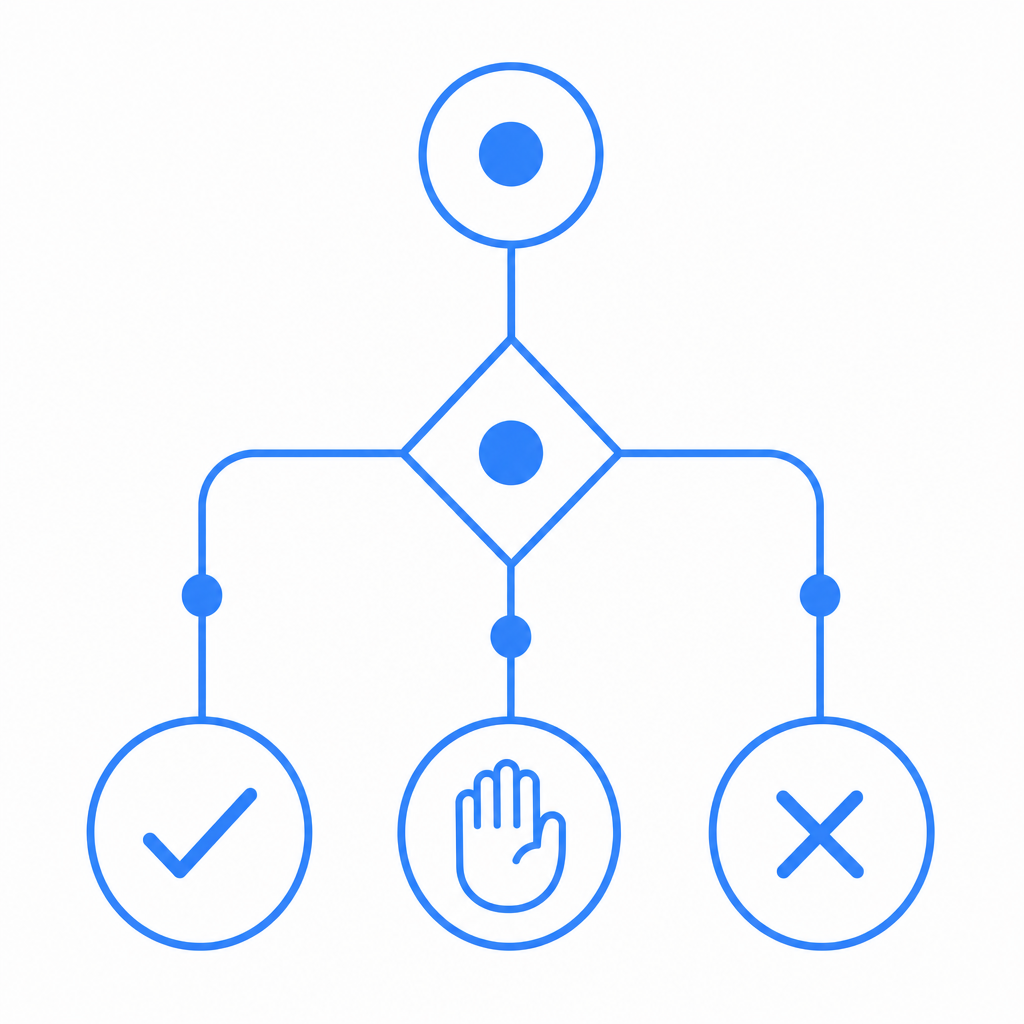

Define a response ladder

- Allow: low-risk traffic

- Challenge: uncertain traffic

- Block: clearly abusive traffic

- Review/log: borderline patterns you want to study

Validate on the server

- Never trust a client result by itself

- Verify token freshness and session continuity

- Tie the token to the request IP when appropriate

Track false positives

- Watch for regional issues, mobile network patterns, and browser-specific problems

- Inspect drop-offs after challenge introduction

- Compare challenged vs. unchallenged conversion

Tune thresholds by endpoint

- Login and password reset may need tighter policy than blog comments

- API write actions usually deserve more scrutiny than read-only requests

Keep the UX short

- One clear step is better than a multi-screen maze

- Make sure the check completes quickly on low-end devices and flaky networks
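The response ladder and per-endpoint thresholds above can be sketched as a small policy function. Everything specific here is hypothetical: the endpoint names, the threshold numbers, the review margin, and the idea that an upstream step has already produced a risk score between 0 and 1.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    CHALLENGE = "challenge"
    BLOCK = "block"


# Hypothetical (challenge_at, block_at) thresholds per endpoint.
# Sensitive flows escalate earlier than low-stakes ones.
THRESHOLDS = {
    "login": (0.30, 0.70),
    "password_reset": (0.30, 0.70),
    "blog_comment": (0.50, 0.90),
}
DEFAULT_THRESHOLDS = (0.40, 0.80)

# Scores this close to a boundary are flagged for later review.
REVIEW_MARGIN = 0.05


def decide(endpoint: str, score: float):
    """Return (action, needs_review) for a risk score in [0.0, 1.0]."""
    challenge_at, block_at = THRESHOLDS.get(endpoint, DEFAULT_THRESHOLDS)
    if score >= block_at:
        action = Action.BLOCK
    elif score >= challenge_at:
        action = Action.CHALLENGE
    else:
        action = Action.ALLOW
    # Borderline traffic is logged for study regardless of the action taken.
    distance = min(abs(score - challenge_at), abs(score - block_at))
    return action, distance <= REVIEW_MARGIN
```

For example, a score of 0.5 leads to a challenge on login but sits right on the boundary for blog comments, so the same evidence produces a proportionate response per endpoint and a review flag where the call is close.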

A small operational detail can save a lot of debugging later: log the validation result, request path, client IP, and downstream action. If you ever need to investigate abuse spikes, that record is more valuable than a generic “failed challenge” message.
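As a rough illustration of that logging advice, the sketch below emits one structured JSON line per validation. The field names and the logger name are assumptions chosen for this example; adapt them to whatever your log pipeline expects.

```python
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("bot_detection")  # hypothetical logger name


def validation_log_record(path: str, client_ip: str,
                          validation_ok: bool, action: str) -> str:
    """Serialize one validation outcome as a JSON line."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "path": path,                    # request path that was protected
        "client_ip": client_ip,          # IP the request arrived from
        "validation_ok": validation_ok,  # result of server-side validation
        "action": action,                # downstream action: allow/challenge/block
    }
    return json.dumps(record, sort_keys=True)


def log_validation(path: str, client_ip: str,
                   validation_ok: bool, action: str) -> None:
    """Write the record through the standard logging module."""
    logger.info(validation_log_record(path, client_ip, validation_ok, action))
```

Structured lines like these can be filtered by path or action during an abuse spike, which is hard to do with free-text messages.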

Implementation details that matter in production

Bot detection often fails not because the detection idea is bad, but because the deployment is sloppy. A few specifics make a real difference:

- Prefer first-party data where possible, especially for trust decisions tied to your own application flow.

- Keep tokens short-lived and validate them close to the action you are protecting.

- Separate challenge issuance from validation so your backend can decide when to escalate.

- Instrument outcomes so you can measure abuse reduction, friction, and conversion impact.

- Support your stack where you already work: CaptchaLa publishes platform support across web and mobile, including Maven la.captcha:captchala:1.0.2, CocoaPods Captchala 1.0.2, and pub.dev captchala 1.3.2.
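To make the "short-lived tokens" point concrete, here is a minimal application-side guard that rejects stale or replayed tokens. It is a sketch under stated assumptions: the TokenGuard class, the 120-second default, and the idea that your backend knows when each token was issued are all hypothetical, and this check sits alongside, not instead of, whatever expiry the provider enforces. The single-use rule is an addition motivated by the replay risk mentioned earlier.

```python
import time
from typing import Optional


class TokenGuard:
    """In-memory sketch: accept each token once, and only while it is fresh.

    A real deployment would likely use a shared store with expiry so that
    all application instances see the same state.
    """

    def __init__(self, max_age_seconds: float = 120.0):
        self.max_age = max_age_seconds
        self._seen = {}  # token -> time after which the entry can be dropped

    def accept(self, token: str, issued_at: float,
               now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        # Forget tokens whose freshness window has passed.
        self._seen = {t: exp for t, exp in self._seen.items() if exp > now}
        # Reject tokens that are too old or claim a future issue time.
        if issued_at > now or now - issued_at > self.max_age:
            return False
        # Reject replays of a token that was already accepted.
        if token in self._seen:
            return False
        self._seen[token] = issued_at + self.max_age
        return True
```

Calling accept right before the protected action, rather than at page load, keeps validation close to the thing you are protecting.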

If you want a concrete setup, the docs are the right place to start. You can wire the client, validate on your server, and then tune policy from there rather than guessing at protection levels.

For teams comparing cost and scale, it also helps to map bot-defense spend to actual traffic and risk. CaptchaLa’s public tiers include a Free tier at 1,000 validations per month, Pro at 50K–200K, and Business at 1M, which is a useful framing for teams rolling protection out by endpoint rather than all at once. See pricing if you want to estimate a staged rollout.

The practical takeaway

Bot detection is not a single technology; it is a decision system. The winning setup combines evidence, validation, and policy so you can stop abuse without turning your product into a gauntlet for real users. Start with your highest-risk flows, validate server-side, keep responses proportional, and measure the impact.

If you are mapping out your own implementation, CaptchaLa can fit into a client-plus-server workflow without forcing you into a one-size-fits-all policy. Where to go next: read the docs or review pricing to plan a staged rollout.