“Auto read captcha code” usually means extracting or interpreting a CAPTCHA challenge automatically. From a defender’s point of view, though, the safer goal is not to “read” the challenge at all; it’s to verify users with minimal friction while making automation expensive. If you’re building a site, the practical question is how to detect bots reliably without turning every login, signup, or checkout into a puzzle box.

That distinction matters because “auto read” can be used to describe very different things: accessibility support, internal QA automation, or outright challenge-solving by bots. For legitimate product teams, the right approach is to design the flow so the server can validate a pass token, score risk, and degrade gracefully when needed. That keeps your users moving and gives you a clean control point on the backend.

What people usually mean by auto read captcha code

When someone says auto read captcha code, they often mean one of three things:

- Human-assisted access: a user or accessibility tool helps interpret the challenge because the visual prompt is hard to solve.

- Test automation: QA scripts need to get through a CAPTCHA in staging without manual steps.

- Bot solving: an automated system tries to defeat the challenge at scale.

Only the first two are legitimate operational concerns for a product team. The third is a security problem. If you’re defending a login or form, you want to make sure your implementation doesn’t reward challenge scraping, replay attacks, or token sharing. That means the meaningful control is on the server side, not in a hidden client-side trick.

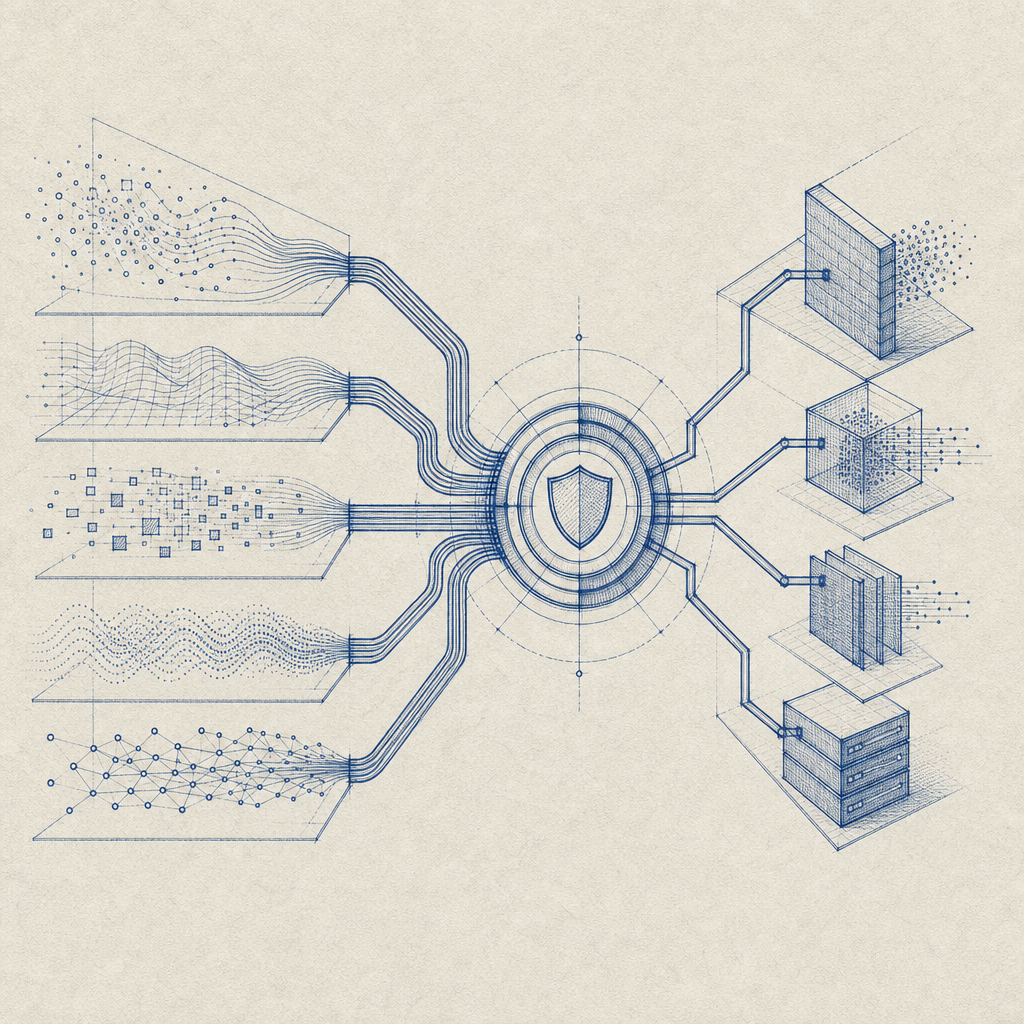

A useful mental model is this: CAPTCHA is not the defense. It is one signal in a wider decision system. Rate limits, device reputation, behavioral checks, IP risk, and server-side validation all matter. Products like reCAPTCHA, hCaptcha, and Cloudflare Turnstile all work in that broader space, though they differ in UX, privacy posture, and integration style.
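To make the “one signal among many” idea concrete, here is a minimal sketch that folds several signals into a single risk score. The signal names and weights are hypothetical placeholders chosen for illustration, not values from any vendor; you would tune them against your own traffic.

```javascript
// Hypothetical signal weights; they sum to 1 so the score stays in 0..1.
const WEIGHTS = {
  rateLimitExceeded: 0.35, // too many recent requests from this client
  badIpReputation: 0.25,   // IP appears on a risk list you maintain or buy
  newDevice: 0.15,         // device not previously seen for this account
  captchaFailed: 0.25      // server-side CAPTCHA validation did not pass
};

// Combine boolean signals into a single risk score between 0 and 1.
// Names not listed in WEIGHTS are ignored.
function riskScore(signals) {
  let score = 0;
  for (const [name, present] of Object.entries(signals)) {
    if (present && Object.prototype.hasOwnProperty.call(WEIGHTS, name)) {
      score += WEIGHTS[name];
    }
  }
  // Clamp to guard against floating-point drift above 1.
  return Math.min(score, 1);
}
```

For example, `riskScore({ captchaFailed: true, newDevice: true })` comes out at about 0.4: a failed check raises risk without deciding the outcome on its own.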

Safer architectures for verification

If you are trying to improve automation resistance without making the experience brittle, focus on a validation pipeline that is simple, explicit, and server-driven. A clean flow looks like this:

- The browser or app loads the challenge widget or loader.

- The user completes the verification step.

- Your client receives a short-lived pass_token.

- Your backend verifies that token with your secret.

- Your app decides whether to allow the request, add friction, or step up auth.
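The last step in that flow is a policy decision rather than a CAPTCHA decision. A minimal sketch, assuming the backend has already validated the pass token and computed a risk score between 0 and 1; the action names and the threshold value are hypothetical.

```javascript
// Possible outcomes for a protected request.
const Action = Object.freeze({
  ALLOW: "allow",
  CHALLENGE: "challenge", // ask the user to complete (or retry) verification
  STEP_UP: "step_up"      // require stronger auth, such as an emailed one-time code
});

// Hypothetical threshold; calibrate it against real traffic.
const STEP_UP_THRESHOLD = 0.6;

// tokenValid: result of the server-to-server validation call
// risk: a 0..1 score from your other signals (rate limits, IP reputation, ...)
function decide(tokenValid, risk) {
  if (!tokenValid) {
    // Missing, expired, or rejected token: send the user back through verification.
    return Action.CHALLENGE;
  }
  if (risk >= STEP_UP_THRESHOLD) {
    // Token passed, but other signals look suspicious: add friction instead of blocking.
    return Action.STEP_UP;
  }
  return Action.ALLOW;
}
```

Keeping the token check and the risk threshold separate means you can tighten one without touching the other.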

For CaptchaLa, the client loader is served from https://cdn.captcha-cdn.net/captchala-loader.js, and validation happens server-to-server: your backend sends POST https://apiv1.captcha.la/v1/validate with pass_token and client_ip in the body and X-App-Key and X-App-Secret as headers. That structure is valuable because the browser never becomes the source of truth.
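The validate call takes a client_ip, and behind a load balancer or CDN the socket address usually belongs to a proxy, not the user. A minimal sketch of picking the right address, assuming your proxies append to X-Forwarded-For and you know how many trusted proxies sit in front of the app; the helper name and its parameters are hypothetical.

```javascript
// Pick the client IP from an X-Forwarded-For header value.
// Each trusted proxy appends the address of the peer that connected to it,
// so with N trusted proxies the real client is the Nth entry from the right.
// Entries further left can be forged by the client and are ignored.
function clientIpFrom(forwardedFor, socketAddress, trustedProxyCount = 1) {
  if (!forwardedFor || trustedProxyCount < 1) {
    return socketAddress;
  }
  const hops = forwardedFor
    .split(",")
    .map((part) => part.trim())
    .filter((part) => part.length > 0);
  const index = hops.length - trustedProxyCount;
  // Header shorter than expected: fall back to the socket address.
  return index >= 0 ? hops[index] : socketAddress;
}
```

Getting this wrong in either direction hurts: trusting the leftmost entry lets attackers choose their own client_ip, and using the proxy address makes every user look like the same client.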

A simple backend check might look like this:

```javascript
// Example: server-side validation flow (Node.js 18+ provides a global fetch)
async function verifyCaptcha(passToken, clientIp) {
  // Keep credentials on the server; never ship them to the browser
  const appKey = process.env.CAPTCHA_APP_KEY;
  const appSecret = process.env.CAPTCHA_APP_SECRET;
  if (!appKey || !appSecret) {
    throw new Error("Missing CAPTCHA_APP_KEY or CAPTCHA_APP_SECRET");
  }

  const response = await fetch("https://apiv1.captcha.la/v1/validate", {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      "X-App-Key": appKey,
      "X-App-Secret": appSecret
    },
    body: JSON.stringify({
      pass_token: passToken,
      client_ip: clientIp
    })
  });

  if (!response.ok) {
    throw new Error(`Captcha validation request failed with HTTP ${response.status}`);
  }

  const result = await response.json();

  // Check the server response before continuing; confirm the exact
  // response fields in the CaptchaLa docs
  return result.valid === true;
}
```

That pattern helps you avoid a common mistake: treating the front end as the gatekeeper. The client can request a challenge and present a token, but the backend should decide whether that token is trustworthy.
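A validation call can also fail for reasons unrelated to the user: the service may be slow or unreachable. A minimal sketch of handling that explicitly, assuming a hypothetical per-endpoint failOpen flag and a 3-second default timeout; it wraps any async verifier, such as verifyCaptcha, so an outage follows a policy you chose instead of surfacing as a raw exception.

```javascript
// Wrap an async verifier with a timeout and an explicit outage policy.
// verify: (passToken, clientIp) => Promise<boolean>, e.g. verifyCaptcha
// failOpen: true lets requests through during an outage (low-risk endpoints only);
//           false treats an outage as a failed check (the safer default).
// Note: the underlying request is not cancelled on timeout.
async function verifyWithFallback(verify, passToken, clientIp, { timeoutMs = 3000, failOpen = false } = {}) {
  let timer;
  const timeout = new Promise((_, reject) => {
    timer = setTimeout(() => reject(new Error("validation timed out")), timeoutMs);
  });
  try {
    const valid = await Promise.race([verify(passToken, clientIp), timeout]);
    return { valid: valid === true, degraded: false };
  } catch (err) {
    // Network error, non-2xx response, or timeout: apply the outage policy
    // and flag the result so it can be logged and alerted on.
    return { valid: failOpen, degraded: true, reason: err.message };
  } finally {
    clearTimeout(timer);
  }
}
```

Returning a degraded flag keeps outages visible in your metrics even when failOpen quietly lets traffic through.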

Where defenders get the most value

A token-based design is especially useful when you need to protect:

- account creation

- password reset

- checkout or coupon abuse

- scraping-sensitive search endpoints

- free trial signup flows

The best implementations do not make every user solve a challenge. They trigger verification based on risk and keep the fallback path predictable. That reduces abandonment while still making abuse more expensive.
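Risk-based triggering can be as simple as a per-endpoint threshold: sensitive flows challenge earlier, low-value ones rarely. A minimal sketch; the endpoint keys mirror the list above, but the threshold values are hypothetical and would come from your own abuse data.

```javascript
// Hypothetical per-endpoint thresholds: challenge when risk >= threshold.
// A lower threshold means more users see a challenge on that endpoint.
const CHALLENGE_THRESHOLDS = {
  "account-creation": 0.3,
  "password-reset": 0.2,
  "checkout": 0.5,
  "search": 0.7,
  "trial-signup": 0.3
};

// Endpoints not listed fall back to a middle-of-the-road default.
const DEFAULT_THRESHOLD = 0.5;

function shouldChallenge(endpoint, risk) {
  // hasOwnProperty avoids matching inherited keys such as "constructor".
  const threshold = Object.prototype.hasOwnProperty.call(CHALLENGE_THRESHOLDS, endpoint)
    ? CHALLENGE_THRESHOLDS[endpoint]
    : DEFAULT_THRESHOLD;
  return risk >= threshold;
}
```

Most users on most endpoints never see a challenge; only the requests that cross their endpoint's threshold pay the friction cost.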

How this differs from solver-style thinking

It’s tempting to think about CAPTCHA as a text-reading problem, but defenders should think about it as a policy problem. If you optimize only for “can the image be interpreted automatically,” you’ll miss the bigger issue: can an attacker reuse one success across many requests?

That’s why short-lived tokens, IP-aware validation, and server-side challenge issuance matter. CaptchaLa also supports server-token issuance via POST https://apiv1.captcha.la/v1/server/challenge/issue, which can be useful when your backend needs to create or manage challenge state directly. In practice, the challenge is only one piece of the flow.
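Replay resistance comes from refusing to honor the same success twice. As defense in depth, independent of what the validation service enforces, a backend can remember which pass tokens it has already accepted. A minimal in-memory sketch, assuming a single app instance and a hypothetical 5-minute lifetime; with several instances you would use a shared store with key expiry instead.

```javascript
// Remembers accepted pass tokens so each one is honored only once.
// Assumes ttlMs is at least the real token lifetime, so a token forgotten
// after the TTL would already be rejected upstream as expired.
class ReplayGuard {
  constructor(ttlMs = 5 * 60 * 1000, now = () => Date.now()) {
    this.ttlMs = ttlMs;    // hypothetical lifetime
    this.now = now;        // injectable clock, handy for tests
    this.seen = new Map(); // token -> time it was first accepted
  }

  // Returns true the first time a token is presented, false on any reuse.
  claim(token) {
    this.prune();
    if (this.seen.has(token)) {
      return false;
    }
    this.seen.set(token, this.now());
    return true;
  }

  // Drop entries older than the TTL so memory stays bounded.
  prune() {
    const cutoff = this.now() - this.ttlMs;
    for (const [token, acceptedAt] of this.seen) {
      if (acceptedAt <= cutoff) {
        this.seen.delete(token);
      }
    }
  }
}
```

Call `claim(passToken)` right after a successful validation and reject the request when it returns false. Because `claim` is synchronous, two concurrent submissions of the same token cannot both succeed within one process.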

Here’s a quick comparison of common approaches from a defender’s perspective:

| Approach | Main strength | Main tradeoff | Good fit |
|---|---|---|---|
| reCAPTCHA | Familiar ecosystem, broad adoption | Can feel heavy depending on the flow | General web forms |
| hCaptcha | Strong abuse focus, flexible deployment | Some users may perceive more friction | High-risk public endpoints |
| Cloudflare Turnstile | Lower-friction verification | Usually tied into Cloudflare’s stack | Cloudflare-centered sites |
| CaptchaLa | Lightweight integration with server validation and SDK coverage | Requires your own backend validation setup | Apps that want explicit control |

The point is not that one option is universally superior. The point is that the validation model should match your threat model. If your product is being scraped, credential-stuffed, or trial-abused, then “auto read captcha code” is the wrong optimization target. You want a system that resists automation and still lets real people through.

Implementation details that actually matter

A robust setup usually depends on the basics more than the widget itself. The following specifics matter a lot in production:

- Use short-lived pass tokens: a token should be useless once it expires or has been validated once.

- Bind validation to request context: include the client IP when appropriate, so a token replayed from a different IP address is easier to reject.

- Keep secrets server-side: never expose your app secret in the browser, a mobile bundle, or public logs.

- Instrument failure modes: distinguish between invalid tokens, expired tokens, network failures, and abandoned challenges.

- Step up only when needed: if the score is borderline, require an additional check instead of blocking everyone.

- Localize the experience: CaptchaLa supports 8 UI languages, which helps reduce unnecessary friction in international flows.
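Instrumenting failure modes starts with giving each outcome its own label, so a dashboard can tell an attack from an outage. A minimal sketch; the `attempt` shape is hypothetical, and the `valid` and `reason: "expired"` response fields are assumptions rather than a documented schema. Abandonment cannot be seen on a single request, so it is estimated from counts instead.

```javascript
// Distinct labels for each server-observable verification outcome.
const Outcome = Object.freeze({
  PASSED: "passed",
  MISSING_TOKEN: "missing_token",      // request arrived without a pass token
  NETWORK_FAILURE: "network_failure",  // the validation call itself failed
  EXPIRED: "expired",
  INVALID: "invalid"
});

// attempt (hypothetical shape):
//   passToken: token sent by the client, if any
//   error: error thrown by the validation call, if any
//   result: parsed validation response; `valid` and `reason` are assumed fields
function classify(attempt) {
  if (!attempt.passToken) return Outcome.MISSING_TOKEN;
  if (attempt.error) return Outcome.NETWORK_FAILURE;
  if (attempt.result && attempt.result.valid === true) return Outcome.PASSED;
  if (attempt.result && attempt.result.reason === "expired") return Outcome.EXPIRED;
  return Outcome.INVALID;
}

// Abandonment: challenges shown (a client-side event) that never produced a token.
function abandonmentRate(challengesShown, tokensSubmitted) {
  if (challengesShown === 0) return 0;
  return Math.max(0, challengesShown - tokensSubmitted) / challengesShown;
}
```

A spike in network failures points at your infrastructure or the provider, while a spike in invalid tokens points at abuse; lumping them together hides both.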

If you’re integrating across platforms, the SDK coverage also matters. CaptchaLa offers native SDKs for Web (JS, Vue, React), iOS, Android, Flutter, and Electron, plus server SDKs for PHP and Go. The published package names and versions are straightforward: Maven la.captcha:captchala:1.0.2, CocoaPods Captchala 1.0.2, and pub.dev captchala 1.3.2. That makes it easier to keep client and server behavior aligned instead of improvising different verification paths per platform.

For implementation guidance, check the docs before wiring the flow into production. If you’re planning usage by volume, the pricing page is the quickest place to compare tiers, from the free tier (1,000 per month) to larger plans that support higher request volumes.

Putting it together without overcomplicating the UX

The safest answer to “how do I auto read captcha code?” is usually: don’t try to automate the challenge itself on the defender side. Automate the verification workflow instead. Let the client render the challenge, let the server validate the token, and let your risk engine decide when to ask for more proof.

That approach preserves user trust better than a hard block-everything strategy. It also gives your team room to tune thresholds over time as abuse patterns change. If you’re comparing solutions, evaluate them on server validation, token design, SDK coverage, and how well they fit your existing architecture—not on how easy they are to “read.”

Where to go next: read the docs for integration details, or review pricing if you want to estimate traffic tiers before rollout.