Anti-scraping systems are the controls that detect, slow down, or block automated collection of data from websites and APIs. They work by combining client-side signals, server-side verification, challenge flows, and rate controls so legitimate users can keep moving while bulk extractors are filtered out.

That sounds simple, but the practical job is trickier: modern automation can mimic browsers, rotate IPs, reuse headless runtimes, and distribute requests across many nodes. A useful anti-scraping system does not rely on a single signal. It correlates behavior, context, and proof-of-interaction, then makes a decision that is fast enough for real traffic and strict enough for abuse.

What anti-scraping systems actually do

At a high level, anti-scraping systems try to answer one question: is this request coming from a real user or from an automated collector?

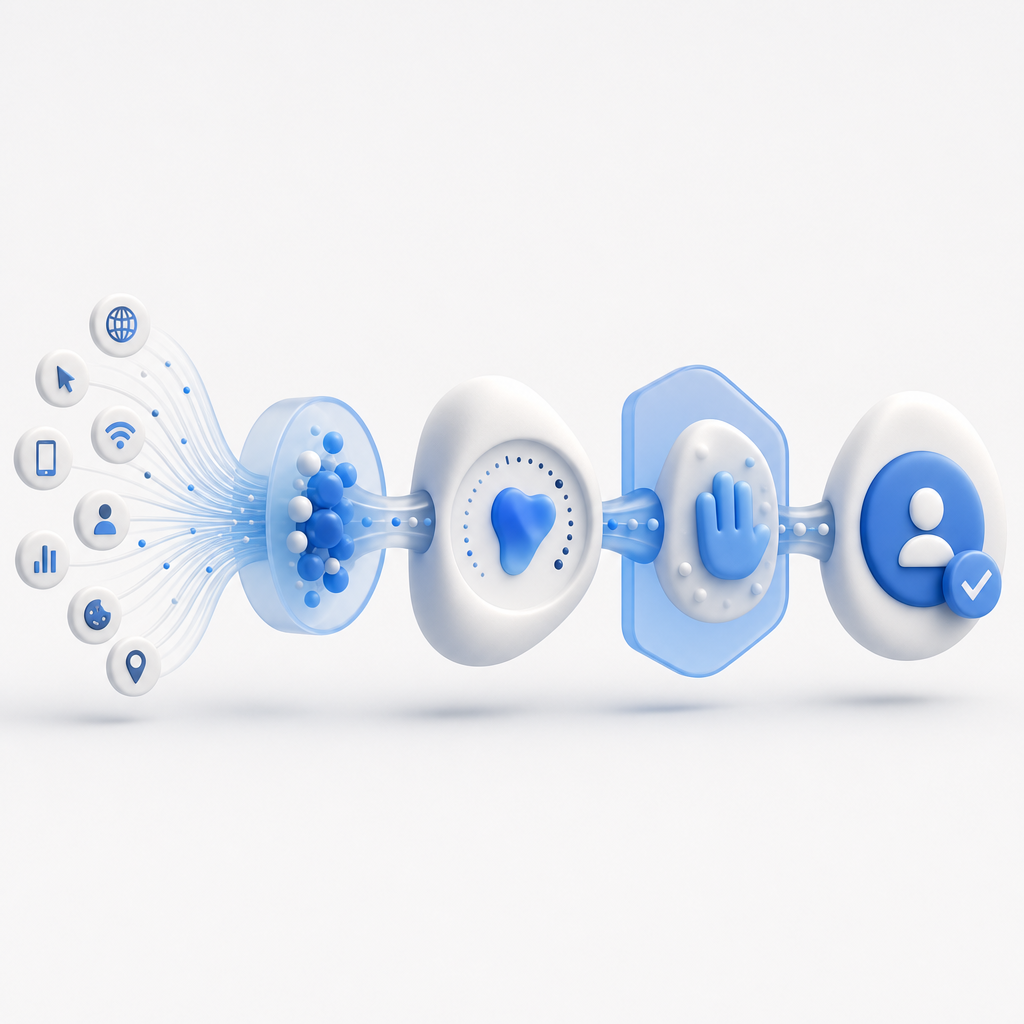

They usually operate in a sequence:

- Observe request patterns, client behavior, and session context.

- Score risk using heuristics, reputation, and anomaly detection.

- Challenge uncertain traffic with friction appropriate to the risk.

- Verify the challenge result server-side before allowing the action.

- Enforce a policy: allow, rate-limit, deny, or step up verification.
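The final enforcement step can be sketched as a small function that maps a risk score to one of the actions listed above. This is a minimal illustration, not a recommended configuration: the `Action` enum, the `decide` function, and every threshold are hypothetical names and values chosen for the example.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    RATE_LIMIT = "rate_limit"
    CHALLENGE = "challenge"  # step up verification
    DENY = "deny"


def decide(risk_score: float, challenge_passed: bool = False) -> Action:
    """Map a risk score in [0, 1] to an enforcement action.

    The thresholds are illustrative assumptions; tune them on real traffic.
    """
    if risk_score < 0.3:
        return Action.ALLOW
    if risk_score < 0.6:
        return Action.RATE_LIMIT
    if risk_score < 0.9:
        # Uncertain traffic: allow only after a server-verified challenge.
        return Action.ALLOW if challenge_passed else Action.CHALLENGE
    return Action.DENY
```

Keeping the decision in one place like this makes the thresholds easy to audit and adjust as abuse patterns change.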

That sequence matters because scraping is rarely obvious from one signal alone. A single IP address can serve many users behind NAT. A single browser fingerprint can be shared by a fleet of headless clients. Even user agents can be copied perfectly. Strong anti-scraping systems therefore use multiple layers rather than a single gate.

A practical way to think about it is as a control plane for trust. Low-risk users should barely notice it. High-risk traffic should encounter increasing friction. And the middle, where uncertainty lives, should be handled carefully so you do not punish real customers.

Core signals defenders should combine

Good anti-scraping systems usually blend client, network, and behavioral data. No single signal is reliable on its own, but together they build a much stronger picture.

1) Request and network behavior

This includes:

- Request rate per IP, ASN, or account

- Burstiness over short windows

- Geographic inconsistency

- IP reputation and proxy/VPN indicators

- Session reuse across many endpoints

Scrapers often move across URLs faster and more regularly than a human visitor would. They may also hit high-value pages in a fixed sequence or repeatedly call search, pricing, or listing endpoints.
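Request rate and burstiness can both be measured with a sliding-window counter keyed by IP, ASN, or account. The sketch below is one simple way to do it in memory; the class name `SlidingWindowCounter` and the window and limit values are assumptions for illustration, and a multi-server deployment would need a shared store instead of a local dictionary.

```python
from collections import defaultdict, deque


class SlidingWindowCounter:
    """Track request timestamps per key over a fixed trailing window."""

    def __init__(self, window_seconds: float, limit: int):
        self.window_seconds = window_seconds
        self.limit = limit
        self._hits = defaultdict(deque)

    def hit(self, key: str, now: float) -> bool:
        """Record a request; return True if the key is over the limit."""
        hits = self._hits[key]
        hits.append(now)
        # Drop timestamps that have fallen out of the window.
        while hits and hits[0] <= now - self.window_seconds:
            hits.popleft()
        return len(hits) > self.limit


# Example: at most 3 requests per 10 seconds for one IP.
counter = SlidingWindowCounter(window_seconds=10, limit=3)
flags = [counter.hit("203.0.113.7", now=t) for t in (0, 1, 2, 3)]
```

Running a short window (seconds) alongside a long one (minutes) is a common way to catch bursts without penalizing steady, moderate use.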

2) Browser and device context

Signals can include:

- Whether JavaScript executed as expected

- Cookie persistence

- Local storage continuity

- Browser feature availability

- Headless or automation artifacts

None of these should be treated as absolute proof, but they are valuable when combined with behavior. For example, if a client submits requests too quickly and shows weak browser continuity, the risk score should rise.
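One way to combine these signals is a simple additive score, where each weak signal nudges the total up and no single one decides the outcome. The sketch below assumes a hypothetical `ClientSignals` record and made-up weights; real weights would come from measuring your own traffic.

```python
from dataclasses import dataclass


@dataclass
class ClientSignals:
    js_executed: bool
    cookie_persisted: bool
    storage_continuity: bool
    automation_artifacts: bool
    requests_per_minute: float


def risk_score(s: ClientSignals) -> float:
    """Return a score in [0, 1]; higher means more likely automated.

    All weights are illustrative assumptions, not calibrated values.
    """
    score = 0.0
    if not s.js_executed:
        score += 0.25
    if not s.cookie_persisted:
        score += 0.15
    if not s.storage_continuity:
        score += 0.10
    if s.automation_artifacts:
        score += 0.30
    # Request speed contributes up to 0.20, saturating at 120 requests/minute.
    score += 0.20 * min(s.requests_per_minute / 120.0, 1.0)
    return min(score, 1.0)
```

A client that is merely fast, or merely missing cookies, stays in the low range here; the score only climbs when several signals agree.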

3) Interaction quality

Legitimate users usually do not behave like scripts. They scroll, hesitate, move the pointer, switch tabs, or pause before submission. Anti-scraping systems can measure coarse interaction patterns without collecting invasive personal data.

The trick is to keep this proportional. You want to distinguish a rushed human from an automated crawler, not build a surveillance system.

4) Server-side proof

The most reliable systems verify a client-generated token on the server before completing sensitive actions. That server check should be mandatory for anything involving protected data, account creation, checkout, high-volume search, or repeated form submission.

CaptchaLa’s validation flow is a straightforward example of this pattern: the server receives a pass_token and client_ip, then validates them with X-App-Key and X-App-Secret via POST https://apiv1.captcha.la/v1/validate. For challenge issuance, you can use POST https://apiv1.captcha.la/v1/server/challenge/issue. That server-side step is where a lot of weak implementations fail; if the token is not checked authoritatively, client-side friction is easy to bypass.
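As a rough sketch, the validation call described above could be built with Python's standard library as follows. The endpoint URL and the `X-App-Key` / `X-App-Secret` headers come from the text; the JSON body layout and the boolean `success` field in the response are assumptions made for this example, so check the official docs for the real request and response format. The function names are hypothetical.

```python
import json
import urllib.request

VALIDATE_URL = "https://apiv1.captcha.la/v1/validate"


def build_validate_request(pass_token, client_ip, app_key, app_secret):
    """Build the POST request for the validation endpoint."""
    # ASSUMPTION: the API accepts a JSON body with these field names.
    body = json.dumps({"pass_token": pass_token, "client_ip": client_ip})
    return urllib.request.Request(
        VALIDATE_URL,
        data=body.encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            "X-App-Key": app_key,
            "X-App-Secret": app_secret,
        },
    )


def http_send(request, timeout=3.0):
    """Send the request and decode the JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


def is_token_valid(pass_token, client_ip, app_key, app_secret, send=http_send):
    """Return True only if the validation API confirms the token."""
    request = build_validate_request(pass_token, client_ip, app_key, app_secret)
    try:
        result = send(request)
    except Exception:
        # Fail closed: a network or parsing error never grants access.
        return False
    # ASSUMPTION: a successful response is a JSON object with "success": true.
    return isinstance(result, dict) and result.get("success") is True
```

The `send` parameter exists so the transport can be swapped out in tests. Failing closed is a design choice for sensitive actions; lower-risk pages may prefer a softer fallback when the validation service is unreachable.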

Choosing the right defense for the risk level

Not every page needs the same level of protection. A login form, a public product catalog, and a private API endpoint should not share identical controls.

| Risk area | Typical scraping goal | Best-fit controls | Notes |
|---|---|---|---|
| Search / catalog pages | Bulk extraction of listings | Rate limits, token validation, behavioral scoring | Keep latency low for real shoppers |
| Signup / login | Account abuse, credential attacks | Challenge flows, device continuity, server verification | Step up only when risk rises |
| Pricing / availability APIs | Price monitoring, competitive scraping | Signed requests, short token TTLs, IP and session correlation | Log suspicious spikes carefully |
| Content endpoints | Full-page copying | Access controls, anomaly detection, challenge-on-burst | Protect first-party data and dynamic content |

A useful anti-scraping strategy is to place the least friction at the edge and the strongest validation closer to the protected action. That way you avoid turning the whole site into a maze.

If you are implementing a new defense stack, also think about operational detail:

- Token lifetime should be short enough to reduce replay.

- Validation endpoints should be highly available and monitored.

- Fallback paths should exist for accessibility and degraded-network scenarios.

- Logging should preserve enough detail to investigate abuse without overcollecting personal data.
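To make the token-lifetime point concrete, here is a generic sketch of a short-lived, single-use token signed with HMAC. It is not CaptchaLa's token format; `issue_token`, `ReplayGuard`, the 120-second TTL, and the in-memory store are all assumptions for illustration. A production setup would keep used nonces in a shared store so every server sees the same state.

```python
import hashlib
import hmac
import secrets


def _sign(secret: bytes, payload: str) -> str:
    return hmac.new(secret, payload.encode("utf-8"), hashlib.sha256).hexdigest()


def issue_token(secret: bytes, now: float, ttl_seconds: int = 120) -> str:
    """Create a token of the form nonce.expiry.signature."""
    payload = f"{secrets.token_hex(8)}.{int(now) + ttl_seconds}"
    return f"{payload}.{_sign(secret, payload)}"


class ReplayGuard:
    """Accept each valid, unexpired token at most once."""

    def __init__(self, secret: bytes):
        self.secret = secret
        self._used = {}  # nonce -> expiry, so old entries can be pruned

    def redeem(self, token: str, now: float) -> bool:
        try:
            nonce, expires_str, sig = token.split(".")
            expires = int(expires_str)
        except ValueError:
            return False  # malformed token
        expected = _sign(self.secret, f"{nonce}.{expires_str}")
        if not hmac.compare_digest(sig.encode("utf-8"), expected.encode("utf-8")):
            return False  # signature mismatch or tampering
        if now >= expires or nonce in self._used:
            return False  # expired or already redeemed
        # Forget nonces whose tokens have expired anyway.
        self._used = {n: e for n, e in self._used.items() if e > now}
        self._used[nonce] = expires
        return True
```

Because expired tokens are rejected by the timestamp check, the used-nonce store only needs to remember entries for one TTL, which keeps it small.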

For teams that want to ship quickly without building everything from scratch, CaptchaLa supports web and native apps with SDKs for Web (JS/Vue/React), iOS, Android, Flutter, and Electron, plus the server SDKs captchala-php and captchala-go. It also ships with 8 UI languages, which helps if you operate internationally.

How anti-scraping systems compare with common CAPTCHA vendors

CAPTCHA is only one component of an anti-scraping system, but it is often the visible one. Different vendors trade off usability, control, and deployment complexity.

| Provider | Typical strength | Typical tradeoff | Good fit for |
|---|---|---|---|
| reCAPTCHA | Familiar deployment, broad recognition | Can feel opaque; privacy and UX considerations vary by setup | Sites that want a widely known option |
| hCaptcha | Strong anti-bot focus | User friction can be noticeable in some flows | Risk-heavy forms and abuse-prone pages |
| Cloudflare Turnstile | Low-friction challenge experience | Best when you are already in Cloudflare’s ecosystem | Teams wanting lightweight verification |
| CaptchaLa | Flexible CAPTCHA / bot-defense tooling with first-party-data focus | Requires your own policy design, like any serious defense stack | Products that want to tune defense around their own traffic |

That comparison is intentionally practical rather than ideological. The right choice depends on your traffic, geography, threat model, and tolerance for friction. If you serve a lot of mobile users, for instance, native SDK support matters. If you have mixed web and app traffic, server-side verification consistency matters even more. If your organization is careful about data handling, a first-party-data-only approach may be a stronger fit than a system that encourages broad third-party dependency.

A defender’s implementation pattern that holds up

Here is a simple architecture pattern that works well for many teams:

- Add client instrumentation on high-value pages and actions.

- Send risk-relevant metadata to your backend along with the user action.

- Issue a short-lived challenge only when policy says the request is uncertain.

- Validate the pass token on the server before granting access.

- Record outcomes so your policy can adapt to abuse trends.

A minimal server-side check might look like this:

// Receive pass_token and client_ip from the application layer

// Send them to the validation API with your app credentials

// Allow the action only if validation returns success

// Otherwise return a challenge or deny the request

This is deliberately simple because complexity should live in policy, not in fragile glue code. The more you can centralize trust decisions, the easier it is to tune thresholds, audit incidents, and maintain parity across web and mobile clients.
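The commented steps above could translate into a gate function along these lines. This is a sketch under stated assumptions: `guard_action` is a hypothetical name, `validate` stands in for whatever function wraps your validation API call, and the three-attempt cutoff before denying is an arbitrary example value.

```python
from typing import Callable, Optional


def guard_action(
    pass_token: Optional[str],
    client_ip: str,
    validate: Callable[[str, str], bool],
    failed_attempts: int = 0,
    max_attempts: int = 3,
) -> str:
    """Return "allow", "challenge", or "deny" for a protected action."""
    if failed_attempts >= max_attempts:
        return "deny"
    if not pass_token:
        # No proof presented yet: ask the client to complete a challenge.
        return "challenge"
    if validate(pass_token, client_ip):
        return "allow"
    # Validation failed: deny once the attempt budget is used up.
    return "deny" if failed_attempts + 1 >= max_attempts else "challenge"
```

The same function can sit behind web and mobile routes, so both clients go through one trust decision instead of two diverging copies.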

CaptchaLa’s published pricing tiers also make it easier to plan capacity around real traffic rather than guessing: Free tier at 1,000/month, Pro at 50K–200K, and Business at 1M. That range is useful if you want to start with a narrow rollout, then expand protection to more endpoints as you learn where abuse concentrates. You can review details on the pricing page, and implementation notes in the docs.

The main goal: protect data without punishing users

The best anti-scraping systems do not try to block every bot at any cost. They aim to preserve product quality, keep first-party data from being harvested at scale, and minimize unnecessary friction for real users. That usually means accepting that some traffic is ambiguous, then making small, reversible decisions based on evidence.

If you are starting from scratch, begin with the endpoints whose data is easiest for a scraper to monetize or repurpose: search, listing pages, pricing, account creation, and API routes that expose valuable content. Build a policy that escalates gradually, verify on the server, and review logs often enough to catch new automation patterns before they become routine.

Where to go next: if you want to see how this fits into your stack, start with the docs or compare plans on the pricing page.