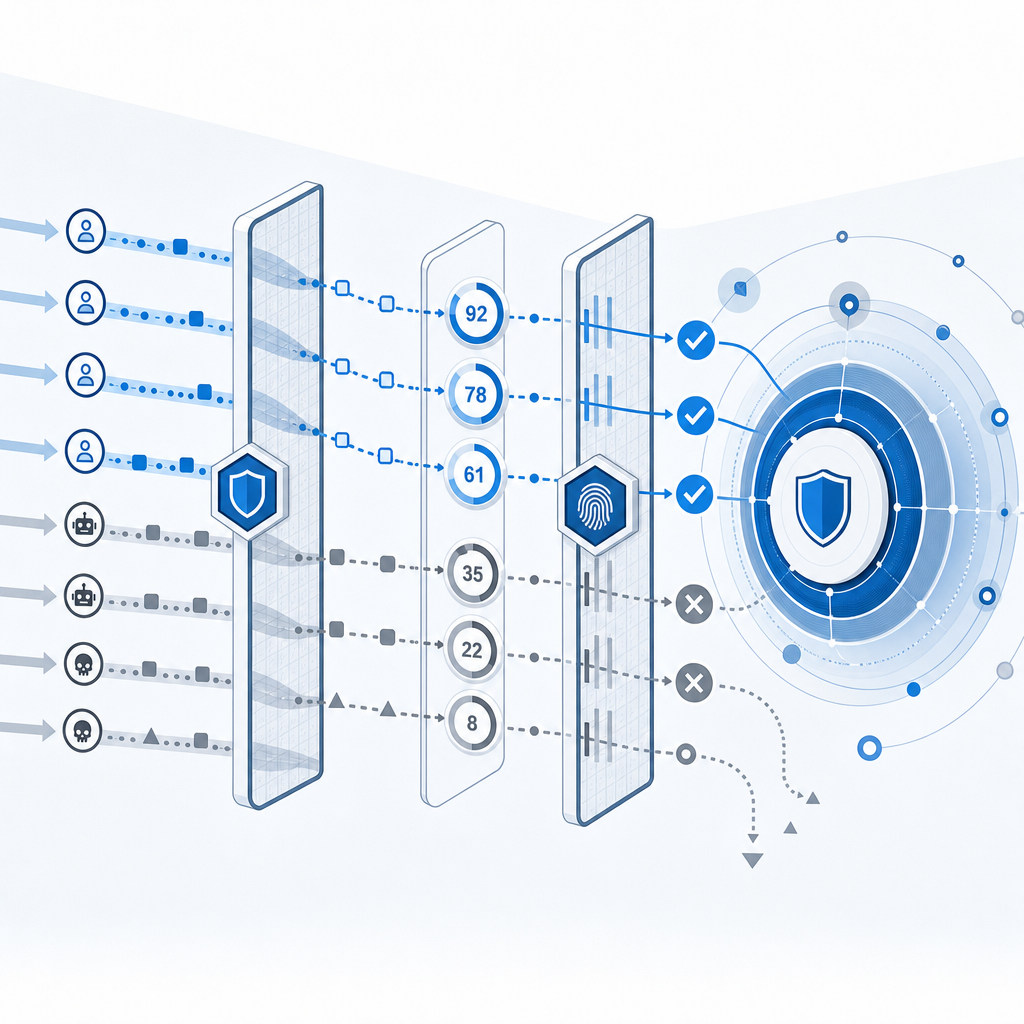

Anti scraping methods work best when they combine detection, rate control, and challenge-response checks instead of relying on a single hurdle. If you only add one layer, determined automation will usually route around it; if you stack a few lightweight controls, you can make large-scale scraping expensive without punishing normal users.

The right approach depends on what you’re protecting: public content, pricing pages, account data, or APIs. For most teams, the goal is not to “stop all bots” — that’s unrealistic — but to reduce abuse, preserve capacity, and make extraction noisy enough that it stops being worth the effort.

What anti scraping methods should do

A strong anti-scraping strategy should do four things:

- Identify abnormal automation patterns using signals like request rate, session reuse, header consistency, and navigation behavior.

- Apply friction proportionally so normal users barely notice, while suspicious sessions face stronger checks.

- Protect sensitive paths more aggressively than public pages.

- Feed outcomes back into policy so you can tune thresholds after observing real traffic.

That last point matters. Scraping is adaptive. If you only look at one signal — say, IP rate — attackers can rotate proxies. If you only rely on browser fingerprints, they can vary those too. The most durable defenses combine server-side logic, client-side checks, and challenge workflows.

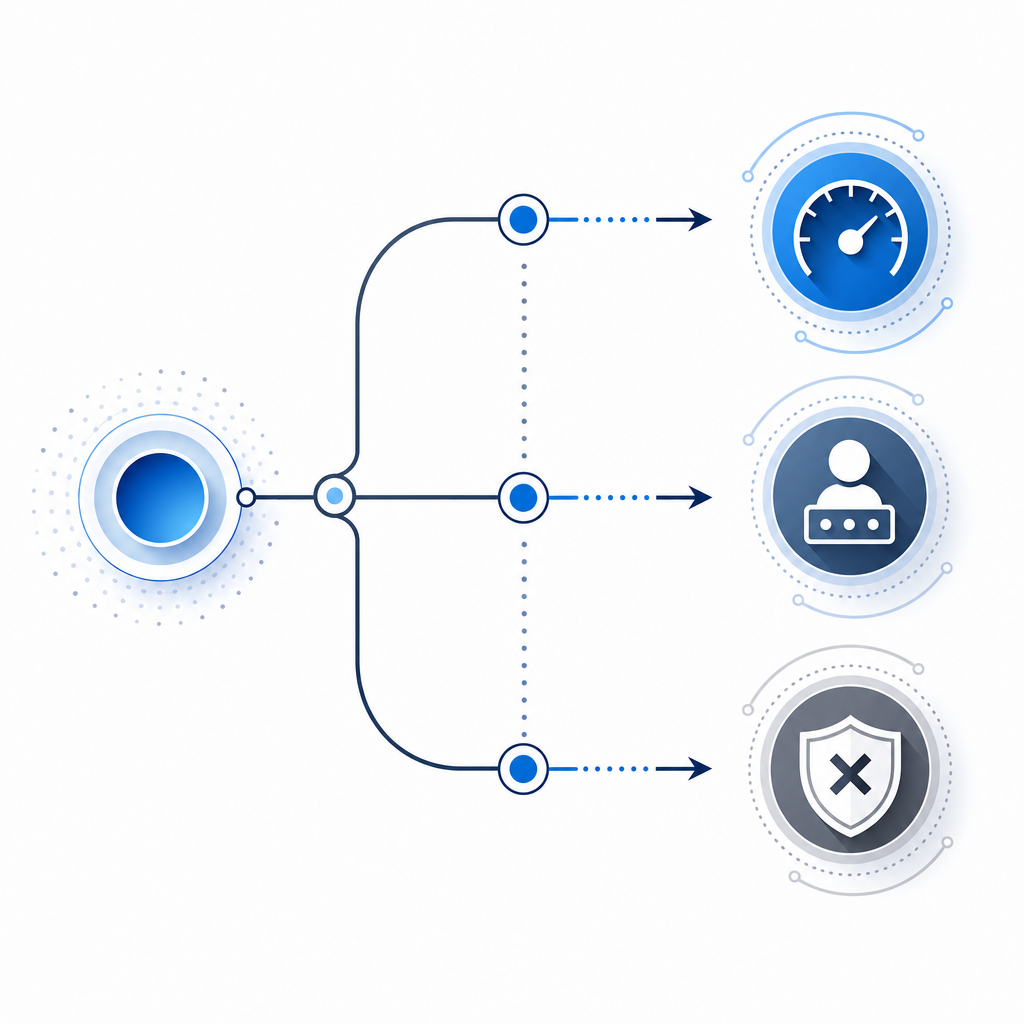

A useful mental model is:

- low risk: allow

- medium risk: step up with lightweight validation

- high risk: challenge, delay, or block

- repeated abuse: escalate to account or network-level controls

That tiered model is where tools like CaptchaLa fit naturally, because they let you add a verification step without turning every visit into a nuisance.

The main anti scraping methods, compared

Not all defenses solve the same problem. Some are better for burst traffic, others for data extraction, and some are mostly for account abuse.

| Method | What it catches | Strengths | Limitations |

|---|---|---|---|

| Rate limiting | High-frequency requests | Simple, effective, server-side | Weak against distributed scraping |

| IP reputation / ASN filtering | Known abusive infrastructure | Good early signal | Can affect shared networks or mobile users |

| Behavioral analysis | Non-human navigation patterns | Harder to mimic at scale | Needs tuning and telemetry |

| Device / browser fingerprinting | Scripted or repeated clients | Helpful for correlation | Can be brittle if overused |

| Challenge-response checks | Automated traffic that lacks real interaction | Strong friction for bots | Adds user experience cost |

| Token validation | Replays and forged submissions | Useful for sensitive flows | Must be implemented correctly |

| Edge/CDN rules | Volume and geo anomalies | Reduces origin load | Limited context on app logic |

A good deployment often uses several of these together. For example, rate limiting can dampen obvious floods, while a challenge is only triggered once a session crosses a risk threshold.

Where CAPTCHA-like challenges help most

Challenges are most useful when the cost of abuse is high, such as:

- signup and account creation

- login and password reset

- search, pricing, and inventory pages

- content export or bulk download endpoints

- form submissions that trigger downstream cost

They are less useful as your only defense on public pages, because hard blocks can push legitimate readers away. That’s why many teams reserve challenges for suspicious sessions rather than every visitor.

A practical defense stack

If you’re building anti scraping methods into an app, start with the infrastructure closest to the abuse source and work inward.

Set per-route rate limits

- Use different thresholds for homepage, search, auth, and export endpoints.

- Prefer token buckets or sliding windows over fixed windows for smoother behavior.

- Track by IP, session, account, and API key where relevant.

Add request normalization and sanity checks

- Reject malformed headers, impossible user agents, and invalid content types.

- Ensure methods and payloads match the route.

- Require CSRF protections on browser-based mutations.

Score sessions with multiple signals

- request cadence

- referer consistency

- cookie persistence

- navigation depth

- challenge pass/fail history

- region and ASN anomalies

Challenge suspicious flows

- Step up only when a session crosses your threshold.

- For browser apps, a loader-based challenge can be less intrusive than redirect-based flows.

- For APIs, validate a server-issued token before allowing the action.

Log and review outcomes

- False positives are usually more expensive than a small amount of friction.

- Track challenge rate, pass rate, abandonment, and abuse reduction.

- Revisit thresholds after traffic changes or launches.

If you want a concrete implementation path, CaptchaLa supports web, mobile, and desktop clients with native SDKs for Web (JS, Vue, React), iOS, Android, Flutter, and Electron, plus server SDKs for captchala-php and captchala-go. It also supports 8 UI languages, which matters if your audience is global and you don’t want security prompts to feel awkward or confusing.

Implementation details that matter

The difference between a useful defense and a fragile one is usually in the integration details.

// Example: validate a passed challenge on the server

// English comments only

async function validateChallenge(passToken, clientIp) {

const response = await fetch("https://apiv1.captcha.la/v1/validate", {

method: "POST",

headers: {

"Content-Type": "application/json",

"X-App-Key": process.env.CAPTCHALA_APP_KEY,

"X-App-Secret": process.env.CAPTCHALA_APP_SECRET

},

body: JSON.stringify({

pass_token: passToken,

client_ip: clientIp

})

});

if (!response.ok) {

return false;

}

const result = await response.json();

return result.valid === true;

}A few technical notes to keep in mind:

- Validate server-side, not only in the browser. Client-side checks can be observed and replayed.

- Bind the token to the client IP when appropriate. That makes simple replay less useful.

- Treat challenge tokens as short-lived. The shorter the validity window, the harder they are to reuse.

- Protect secret keys carefully. App keys and app secrets should never live in public code.

- Issue server tokens only when needed. For risk-based flows, the challenge can be triggered by a server call such as

POST https://apiv1.captcha.la/v1/server/challenge/issue.

For frontend delivery, the loader is served from https://cdn.captcha-cdn.net/captchala-loader.js, which keeps integration straightforward. If you’re evaluating plans, pricing includes a free tier at 1000 validations per month, with Pro at 50K-200K and Business at 1M, all using first-party data only.

When to choose specific competitors

It’s normal to compare multiple options. reCAPTCHA, hCaptcha, and Cloudflare Turnstile all solve parts of the problem, but they differ in experience, ecosystem, and privacy tradeoffs.

- reCAPTCHA is widely recognized and easy to find documentation for.

- hCaptcha is often considered when teams want an alternative challenge provider.

- Cloudflare Turnstile is attractive for teams already using Cloudflare’s edge stack.

The practical question is not which brand is “better” in the abstract, but which one fits your traffic, stack, and UX constraints. If you’re already running custom server logic and want a cleaner path for mobile, web, and desktop clients, it can be useful to compare those options against docs and your own operational requirements.

Measuring whether your anti scraping methods work

A defense that feels strict is not necessarily effective. You need metrics.

Track these after rollout:

- Abuse rate on protected routes: failed login spikes, duplicated requests, or abnormal exports

- Challenge pass rate: high pass rates may mean your threshold is too low or the challenge is too easy

- False positive rate: legitimate users hitting friction

- Origin load reduction: how much traffic you’ve absorbed or filtered upstream

- Revenue or cost impact: especially for endpoints tied to inventory, pricing, or scraping-heavy content

It also helps to segment by route and cohort. A threshold that is fine for a search endpoint may be too aggressive on signup. Likewise, some regions or enterprise networks can look unusual but still be legitimate, so don’t overfit on a single signal.

A measured rollout usually looks like this:

- observe traffic for a baseline period

- enable soft detection

- challenge only the highest-risk sessions

- review false positives

- tighten or loosen controls based on evidence

If you keep this loop running, your anti-scraping stack becomes less reactive and more adaptive.

Conclusion

The most effective anti scraping methods don’t try to win with a single wall. They layer rate limits, session scoring, token validation, and selective challenges so abuse becomes harder while real users keep moving. That approach is especially important if you protect high-value pages or endpoints that attract automation.

Where to go next: review the implementation patterns in docs or compare tiers at pricing to see which setup matches your traffic profile.