Anti scraping is vital for protecting websites from automated bots that steal data or overload servers. On GitHub, developers can find a variety of open-source tools and frameworks specifically designed to detect and block scraping activity. These “anti scraping GitHub” resources enable site owners to implement defensive measures ranging from request rate limiting to more advanced interaction verification. Understanding what options exist and how to integrate them effectively is key to keeping your data and services secure.

What Is Anti Scraping and Why Use GitHub Tools?

Anti scraping involves techniques that prevent unwanted automated bots from extracting information from your webpages. These bots often ignore robots.txt, use proxy servers to evade IP blocking, and mimic human behavior to avoid detection. Anti scraping solutions aim to differentiate between genuine users and these scripted clients by analyzing behavior, traffic patterns, and interaction authenticity.

GitHub hosts a wealth of open-source anti scraping repositories, making it easier for developers to customize and extend protection according to their needs. Popular options include middleware libraries, proxy detectors, and CAPTCHA implementations. Using these libraries can save time, reduce costs, and provide transparency compared to proprietary commercial services.

Popular Categories of Anti Scraping GitHub Tools

Here are common types of anti scraping tools you’ll find on GitHub, plus key features:

1. Rate Limiting and IP Blocking Middleware

These projects track and limit the number of requests per IP within a time window, automatically blocking or throttling suspicious sources.
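To make the mechanism concrete, here is a minimal, dependency-free sketch of fixed-window counting per IP. It is not how any particular GitHub project is implemented; the function name `createFixedWindowLimiter` and its options are hypothetical, chosen only for illustration.

```javascript
// Minimal fixed-window rate limiter keyed by client IP (illustrative sketch).
// `createFixedWindowLimiter`, `windowMs`, and `max` are hypothetical names.
function createFixedWindowLimiter({ windowMs, max }) {
  const hits = new Map(); // ip -> { count, windowStart }

  return function isAllowed(ip, now = Date.now()) {
    const entry = hits.get(ip);

    // Start a fresh window on first sight or once the old window has expired.
    if (!entry || now - entry.windowStart >= windowMs) {
      hits.set(ip, { count: 1, windowStart: now });
      return true;
    }

    // Count the request and reject it once the per-window budget is used up.
    entry.count += 1;
    return entry.count <= max;
  };
}

// Usage: allow 3 requests per second per IP, with explicit timestamps in ms.
const isAllowed = createFixedWindowLimiter({ windowMs: 1000, max: 3 });
const results = [0, 100, 200, 300, 1200].map((t) => isAllowed('203.0.113.7', t));
console.log(results); // [ true, true, true, false, true ]
```

The fourth request exceeds the budget and is rejected, and the request at 1200 ms opens a new window. A production limiter would also need to evict stale entries from the map and, behind a load balancer, share counters across instances.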

2. Bot and Headless Browser Detection

Using techniques such as request header analysis, JavaScript challenges, and mouse movement patterns, these tools identify traffic from headless browsers driven by automation frameworks like Puppeteer or Selenium.
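As a rough illustration of the header-analysis part only, the sketch below scores a request's headers against a few common signals. The function name `scoreRequestHeaders`, the chosen signals, and the weights are all assumptions made for this example; real detectors combine many more signals, and a determined scraper can spoof every header shown here.

```javascript
// Heuristic scoring of request headers (illustrative sketch).
// The signals and weights below are assumptions, not a vetted rule set.
function scoreRequestHeaders(headers) {
  // Normalize header names to lowercase so lookups are case-insensitive.
  const h = {};
  for (const [name, value] of Object.entries(headers)) {
    h[name.toLowerCase()] = String(value);
  }

  const ua = h['user-agent'] || '';
  const reasons = [];
  let score = 0;

  if (ua === '') {
    score += 3;
    reasons.push('missing user-agent');
  }
  // Headless Chrome advertises itself as "HeadlessChrome" unless overridden.
  if (/HeadlessChrome|PhantomJS/i.test(ua)) {
    score += 5;
    reasons.push('headless user-agent');
  }
  // Default user agents of common HTTP clients and crawling frameworks.
  if (/^(python-requests|curl|wget|scrapy)/i.test(ua)) {
    score += 4;
    reasons.push('http-library user-agent');
  }
  // Browsers normally send Accept-Language; many scripts do not.
  if (!h['accept-language']) {
    score += 2;
    reasons.push('missing accept-language');
  }

  return { score, reasons };
}

const headless = scoreRequestHeaders({
  'User-Agent':
    'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) HeadlessChrome/120.0.0.0 Safari/537.36',
  Accept: 'text/html',
});
console.log(headless.score, headless.reasons);
```

A score like this is better used as one input to a challenge decision than as a hard block, since privacy tools and unusual clients can trip the same signals.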

3. CAPTCHA Integration Plugins

Repositories offering easy integration of CAPTCHAs help confirm human presence. This includes custom challenges or hooks to well-known services like reCAPTCHA and hCaptcha.

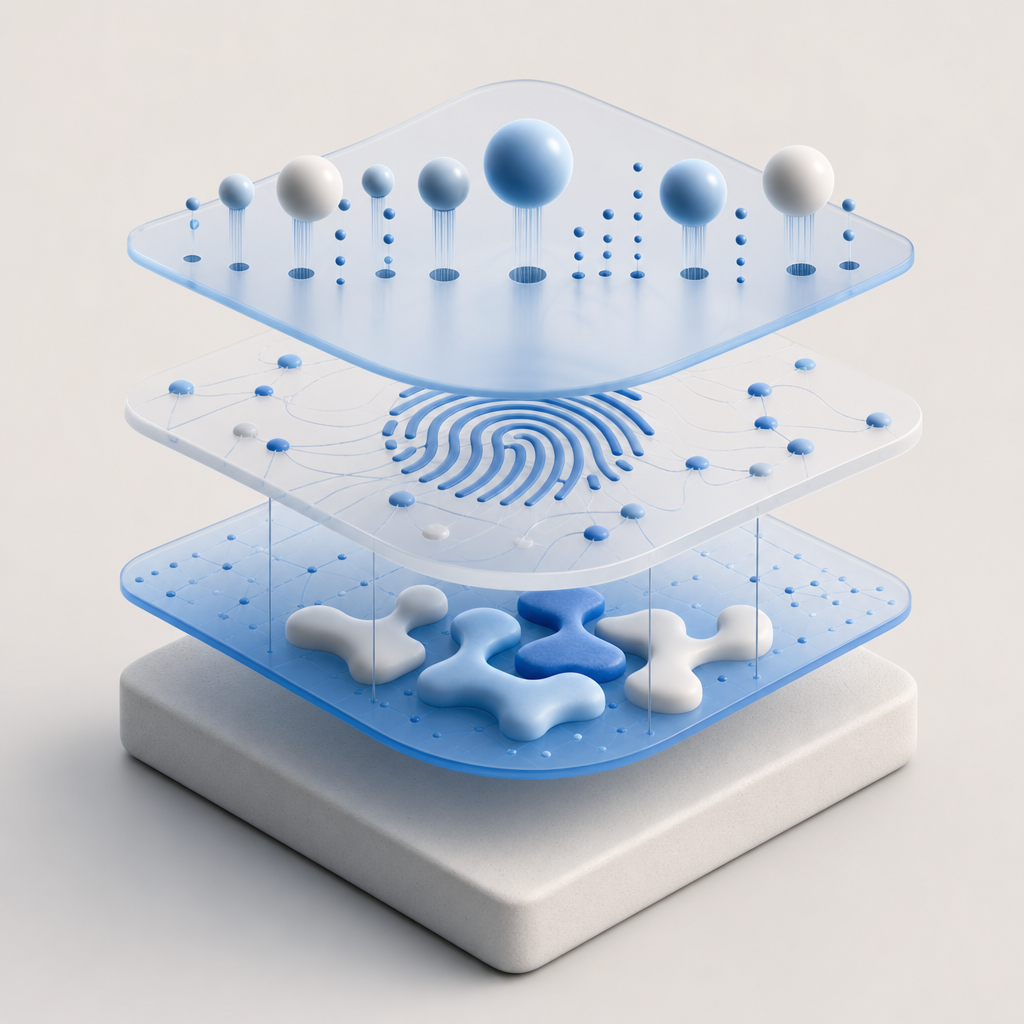

4. Fingerprinting Libraries

These libraries track browser fingerprints, including device data and behavioral signatures, to identify and block recurring scraping clients even when their IP addresses rotate.
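The idea of recognizing a client across rotating IPs can be sketched with a hash over attributes that stay stable between requests. This is a simplified illustration and not the method used by any specific fingerprinting library; the attribute names (`userAgent`, `screen`, and so on) and the helpers `computeFingerprint` and `createRotationTracker` are hypothetical.

```javascript
const crypto = require('node:crypto');

// Derive a fingerprint from client attributes, deliberately excluding the IP.
// The attribute names are hypothetical; real libraries collect far more signals.
function computeFingerprint(signals) {
  const fields = ['userAgent', 'acceptLanguage', 'screen', 'timezone', 'platform'];
  const canonical = fields.map((f) => `${f}=${signals[f] ?? ''}`).join('|');
  return crypto.createHash('sha256').update(canonical).digest('hex');
}

// Track how many distinct IPs present the same fingerprint.
function createRotationTracker(maxDistinctIps) {
  const ipsByFingerprint = new Map(); // fingerprint -> Set of IPs

  return function observe(fingerprint, ip) {
    let ips = ipsByFingerprint.get(fingerprint);
    if (!ips) {
      ips = new Set();
      ipsByFingerprint.set(fingerprint, ips);
    }
    ips.add(ip);
    // true means the fingerprint has appeared from more IPs than allowed.
    return ips.size > maxDistinctIps;
  };
}

const fp = computeFingerprint({
  userAgent: 'ExampleBrowser/1.0',
  acceptLanguage: 'en-US',
  screen: '1920x1080',
  timezone: 'Europe/Berlin',
  platform: 'Linux',
});
const observe = createRotationTracker(2);
console.log(observe(fp, '198.51.100.1')); // false
console.log(observe(fp, '198.51.100.2')); // false
console.log(observe(fp, '198.51.100.3')); // true
```

With so few attributes, many legitimate users on identical devices would share a fingerprint, so a flag like this is best treated as a reason to challenge rather than to block outright.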

How to Choose and Use Anti Scraping GitHub Projects

When selecting a GitHub anti scraping tool, consider:

- Compatibility: Does it support your backend or frontend stack? Many projects support Node.js, Python, or PHP.

- Customization: Can you modify detection rules and challenge frequency?

- Maintenance: Is the repo actively maintained with community contributions?

- Integration with CAPTCHA and Bot Defense: Combining techniques improves efficacy.

Below is a simplified example of how you might implement rate limiting middleware in a Node.js Express app:

```javascript
// Express rate limiting middleware setup
const express = require('express');
const rateLimit = require('express-rate-limit');

const app = express();

const limiter = rateLimit({
  windowMs: 15 * 60 * 1000, // 15 minutes
  max: 100, // limit each IP to 100 requests per windowMs
  message: 'Too many requests from this IP, please try again later.'
});

// Apply the limiter to every route
app.use(limiter);
```

Adding layers such as behavior analysis or CAPTCHA challenges after rate limiting improves bot detection accuracy.
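One way to picture this layering is a small decision function that escalates from allowing a request, to issuing a challenge, to blocking it. The sketch below is only an illustration: `decideAction`, its inputs, and every threshold are hypothetical and would need tuning against real traffic.

```javascript
// Combine a request count and a bot-likelihood score into one action.
// All names and thresholds here are hypothetical, for illustration only.
const DEFAULT_LIMITS = {
  softLimit: 60, // above this many requests per window, ask for a challenge
  hardLimit: 100, // above this many requests per window, block
  challengeScore: 3, // bot score at which a challenge is shown
  blockScore: 7, // bot score at which the request is blocked
};

function decideAction({ requestsInWindow, botScore }, limits = DEFAULT_LIMITS) {
  if (requestsInWindow > limits.hardLimit || botScore >= limits.blockScore) {
    return 'block';
  }
  if (requestsInWindow > limits.softLimit || botScore >= limits.challengeScore) {
    return 'challenge';
  }
  return 'allow';
}

console.log(decideAction({ requestsInWindow: 10, botScore: 0 })); // allow
console.log(decideAction({ requestsInWindow: 75, botScore: 0 })); // challenge
console.log(decideAction({ requestsInWindow: 10, botScore: 8 })); // block
```

Keeping a challenge tier between allow and block gives borderline clients a way to prove they are human, which helps limit the cost of false positives.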

Comparing Popular Bot Defense Methods on GitHub

| Feature | Rate Limiting | Bot Fingerprinting | CAPTCHA Integration | Headless Browser Detection |
|---|---|---|---|---|
| Blocks simple scrapers | Yes | Partial | Yes | Yes |
| Detects advanced bots | Limited | Yes | Yes | Yes |
| False positives risk | Low | Moderate | Moderate | Moderate |
| Ease of integration | High | Medium | Medium | Medium |
| GitHub project examples | express-rate-limit | FingerprintJS | captcha-la (SDKs) | puppeteer-extra-detect |

For integrated bot defense, services like CaptchaLa offer SDKs and APIs that supplement open-source tools with verification endpoints designed to combat scraping and automated abuse effectively. Unlike some competitors such as reCAPTCHA or hCaptcha, CaptchaLa emphasizes first-party data usage to improve privacy while maintaining robust protection.

Adding CaptchaLa to Your Anti Scraping Stack

CaptchaLa provides native SDKs for Web (JavaScript, Vue, React), as well as mobile and desktop platforms including iOS, Android, Flutter, and Electron. The service supports multiple UI languages and offers straightforward server SDKs (captchala-php, captchala-go) to validate tokens on your backend.

A typical validation flow has the client complete a challenge and receive a pass token, which your server then sends to the validation endpoint together with the client’s IP address:

```
POST https://apiv1.captcha.la/v1/validate
Headers: X-App-Key, X-App-Secret
Body: { "pass_token": "...", "client_ip": "..." }
```

This server-side validation confirms human presence and complements your GitHub-based bot detection logic. CaptchaLa offers transparent pricing tiers including a free plan for small-scale applications (1000 validations/month) and scalable paid options for high-traffic sites.
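The validation request described above could be issued from a Node.js backend roughly as follows. The endpoint, header names, and body fields are taken from the request shown earlier; everything else is an assumption, including the function name `validatePassToken`, the JSON content type, and the use of the global `fetch` available in Node.js 18 and later. The response format is not described here, so the sketch returns the parsed body as-is rather than guessing at its fields.

```javascript
// Sketch of server-side pass-token validation (assumptions noted inline).
// `fetchImpl` is injectable so the function can be exercised without network access.
async function validatePassToken({ passToken, clientIp, appKey, appSecret, fetchImpl = fetch }) {
  const response = await fetchImpl('https://apiv1.captcha.la/v1/validate', {
    method: 'POST',
    headers: {
      'Content-Type': 'application/json', // assumed; the body is shown as JSON
      'X-App-Key': appKey,
      'X-App-Secret': appSecret,
    },
    body: JSON.stringify({ pass_token: passToken, client_ip: clientIp }),
  });

  if (!response.ok) {
    throw new Error(`Validation request failed with HTTP ${response.status}`);
  }

  // The response schema is not documented in this article, so return it unchanged.
  return response.json();
}

// Example call from a request handler (credentials read from hypothetical env vars):
// const result = await validatePassToken({
//   passToken: req.body.pass_token,
//   clientIp: req.ip,
//   appKey: process.env.CAPTCHALA_APP_KEY,
//   appSecret: process.env.CAPTCHALA_APP_SECRET,
// });
```

Keeping the app secret on the server and out of client-side code is the main reason this check belongs in your backend; consult the CaptchaLa docs for the actual response fields before acting on the result.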

Conclusion: Combining GitHub Tools with API Solutions for Anti Scraping

Anti scraping on GitHub provides accessible building blocks like rate limiting, bot fingerprinting, and headless browser detection, which protect websites from automated abuse. However, combining these open-source tools with API-driven verification like CaptchaLa enhances resilience against sophisticated scrapers that mimic human activity.

To build a robust defense, start with lightweight middleware from GitHub repositories, then layer in behavioral analysis and CAPTCHA challenges powered by services such as CaptchaLa. This hybrid approach balances flexibility, privacy, and security without relying solely on black-box vendors.

For more technical details and SDK usage, check out the CaptchaLa docs or review their pricing plans to find an option that fits your project’s scale.

Whether you’re an independent developer or part of a security team, leveraging anti scraping GitHub projects alongside proven bot-defense APIs will give you stronger control against web scraping threats.