An anti scraping clause is a legal provision designed to prohibit unauthorized automated data extraction, or “scraping,” from a website or online service. Often embedded within terms of service (ToS) or licensing agreements, this clause helps website owners clearly state that bots or scripts attempting to copy content or data without permission are forbidden. Having an anti scraping clause is critical to protecting intellectual property, user privacy, and server resources from abusive automated activity.

Why Websites Include Anti Scraping Clauses

Many websites contain valuable data, ranging from pricing information and product listings to user-generated content. Automated scraping tools can collect this data in bulk, sometimes violating copyrights, breaching privacy policies, or distorting business models. An anti scraping clause serves several purposes:

- Legal deterrent: It creates a contractual basis to pursue legal action against unauthorized scrapers.

- Defining boundaries: It clarifies what kinds of automated access are forbidden (e.g., data mining, bulk downloading).

- Supporting technical defenses: It complements security measures like CAPTCHAs and rate limiting.

- Aligning user expectations: It informs visitors and clients about acceptable use policies.

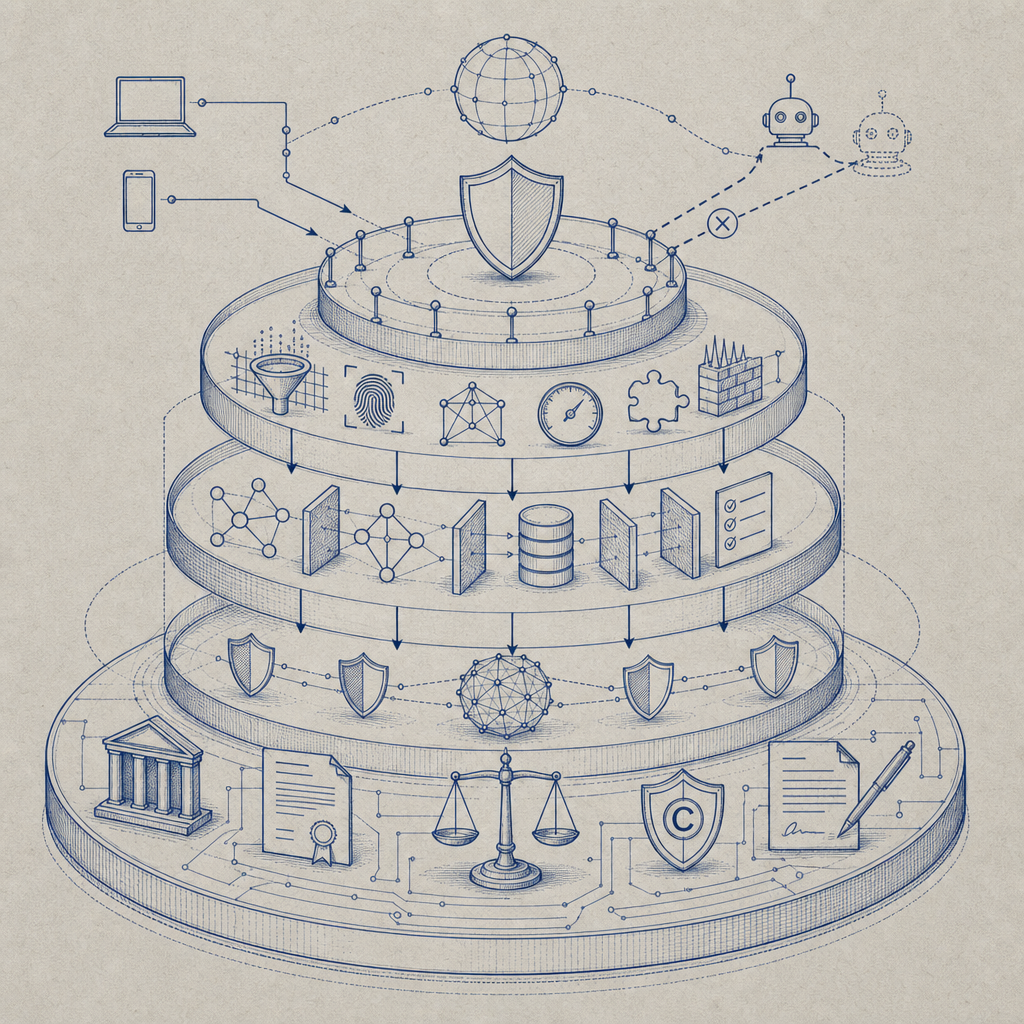

While an anti scraping clause doesn't stop bots on its own, it is an important component of a multilayered bot defense, especially when combined with solutions such as CaptchaLa, reCAPTCHA, hCaptcha, or Cloudflare Turnstile.

Typical Language in an Anti Scraping Clause

Anti scraping clauses usually appear in the terms of use or API agreements. They can vary in wording but commonly include provisions like:

- "Users shall not use automated scripts, bots, spiders, or scrapers to access or copy data from this website without explicit permission."

- "Any form of data harvesting, extraction, or unauthorized crawling is strictly prohibited."

- "Violation of these terms may result in suspension of access or legal measures."

The clause may also describe mechanisms for requesting authorized API access or data feeds as alternatives to scraping.

Example Anti Scraping Clause Snippet

You agree not to use any automated system, including but not limited to "robots," "spiders," or "offline readers," to access the Service for any purpose without our express written permission. Unauthorized data mining, scraping, or extraction is strictly forbidden.

Strategies for Enforcing an Anti Scraping Clause

An anti scraping clause has little practical effect unless it is enforced. That enforcement often relies on technical defenses and monitoring tactics:

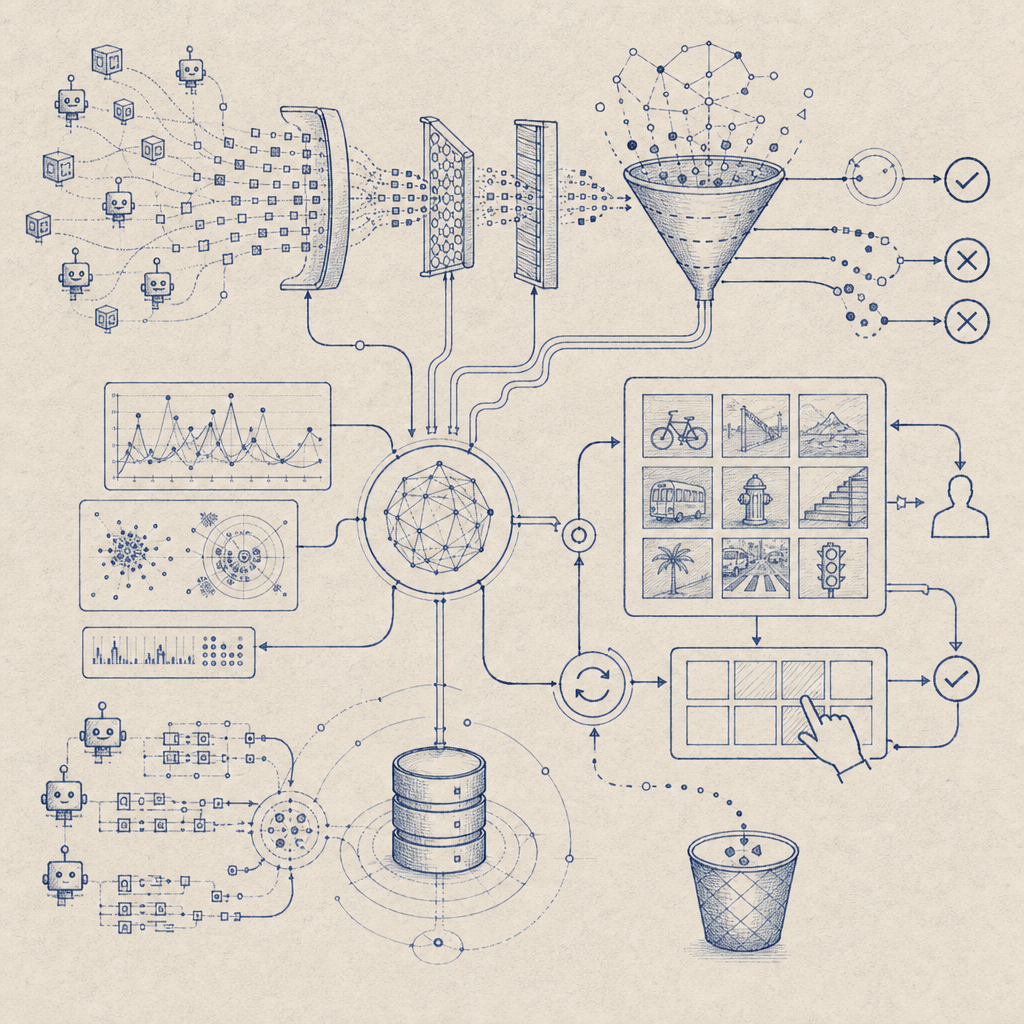

1. Bot Detection and CAPTCHAs

Inserting challenges that are hard for bots, such as image recognition or puzzle CAPTCHAs, deters automated scraping. CaptchaLa offers flexible, privacy-conscious CAPTCHA solutions with SDKs for Web, iOS, Android, and more, helping distinguish human users from bots.

2. Rate Limiting and IP Blocking

Limits on how frequently a user or IP can request data reduce aggressive scraping attempts. Suspicious IPs or user-agents may be blacklisted or throttled.
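
To make the idea concrete, here is a minimal sketch of a per-client sliding-window rate limiter. It is an illustration only: the class name `SlidingWindowLimiter`, its parameters, and the in-memory storage are assumptions for this example, not part of any product mentioned in this article. A production setup would more likely keep counters in a shared store and key on more than the IP address.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class SlidingWindowLimiter:
    """Hypothetical helper: allow at most `max_requests` per client
    within any `window_seconds` span."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # client key (for example an IP address) -> timestamps of recent requests
        self._hits = defaultdict(deque)

    def allow(self, client_key: str, now: Optional[float] = None) -> bool:
        """Return True if the request may proceed, False if it should be throttled."""
        now = time.monotonic() if now is None else now
        hits = self._hits[client_key]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: throttle, challenge, or block
        hits.append(now)
        return True
```

A request handler would call `limiter.allow(client_ip)` before serving data and respond with an error or a CAPTCHA challenge when it returns `False`.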

3. Honeypot Traps and Behavioral Analysis

Hidden fields or interaction patterns can detect bots. Behavioral analysis can identify scripted, repetitive, or rapid-fire requests inconsistent with normal users.
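
As a rough sketch of how a honeypot check might look on the server side, the function below flags a form submission when a hidden field has been filled in or when the form was submitted implausibly fast. The field name `website_url`, the two-second threshold, and the function name are all assumptions chosen for illustration. Real behavioral analysis would weigh many more signals.

```python
from typing import Mapping

# Hypothetical hidden field: rendered in the form but hidden from human
# visitors with CSS, so a human should never fill it in.
HONEYPOT_FIELD = "website_url"

# Assumed threshold: submissions faster than this are treated as scripted.
MIN_FILL_SECONDS = 2.0


def looks_automated(form: Mapping[str, str],
                    rendered_at: float,
                    submitted_at: float) -> bool:
    """Return True if the submission shows simple signs of automation."""
    # Signal 1: the hidden honeypot field contains a value.
    if form.get(HONEYPOT_FIELD, "").strip():
        return True
    # Signal 2: the form was submitted faster than a person could fill it.
    if submitted_at - rendered_at < MIN_FILL_SECONDS:
        return True
    return False
```

A flagged submission does not have to be rejected outright. It can instead be routed to a CAPTCHA challenge or logged for later review.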

4. Legal Notices and Cease & Desist Letters

Once scraping activity is detected, site owners may use the anti scraping clause as a basis to send warnings or take legal action.

Comparing Bot-Defense Technologies Supporting Anti Scraping Enforcement

| Feature | CaptchaLa | reCAPTCHA | hCaptcha | Cloudflare Turnstile |
|---|---|---|---|---|
| SDKs and Frameworks | Web, iOS, Android, Flutter, Electron | Web, Android, iOS | Web, Android, iOS | Web only |
| Supported Languages | 8 UI languages | Multiple languages | Multiple languages | English primarily |
| Privacy Considerations | First-party data only | Google data tracking | Privacy-focused | Claims minimal data retention |
| Pricing Tiers | Free to Business tiers (up to 1M/mo free/pro) | Free with quotas | Free with enterprise options | Included with Cloudflare service |
| Customization Flexibility | High, with server and client SDKs | Moderate | Moderate | Low, managed service |

These tools integrate with anti scraping clauses by providing automated bot detection that supports enforcement efforts technically, not just legally.

Why Combine Anti Scraping Clauses With Bot Defense Solutions?

Relying solely on legal text isn’t enough. Automated bots increasingly emulate human behavior, bypassing simple rules. Solutions like CaptchaLa provide an additional technical barrier: detecting non-human traffic, challenging suspicious users, and logging verification data.

For example, integrating CaptchaLa’s loader script or server SDK provides flexible bot detection, with support for global users through multiple UI languages and broad SDK coverage (including Maven, CocoaPods, pub.dev, and server libraries). This makes anti scraping clauses easier to enforce in practice: human users pass the challenges, and suspicious automated clients get blocked.

Steps to Implement a Comprehensive Anti Scraping Policy

- Draft clear anti scraping clauses in your terms of service or API agreements.

- Deploy bot-detection tools like CaptchaLa, reCAPTCHA, or Cloudflare Turnstile.

- Monitor traffic for suspicious behavior, including high request rates and unusual crawling.

- Apply rate limits and IP blocking to mitigate automated data harvesting.

- Issue warnings or take legal action when violations occur.

- Keep your policies updated as scraping tactics evolve.
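
The monitoring step in the list above can be sketched as a simple pass over request logs that flags clients with unusually high request rates. Everything here is an assumption for illustration: the `Request` record shape, the function name, and the default of 120 requests per 60-second window. Suitable thresholds depend heavily on the site and its normal traffic.

```python
from collections import Counter
from typing import Iterable, NamedTuple, Set


class Request(NamedTuple):
    """Hypothetical parsed access-log record."""
    ip: str
    timestamp: float  # seconds since some fixed reference point
    path: str


def flag_heavy_clients(requests: Iterable[Request],
                       window_seconds: float = 60.0,
                       max_per_window: int = 120) -> Set[str]:
    """Return the IPs that exceed `max_per_window` requests in any fixed window."""
    counts = Counter()
    for req in requests:
        # Assign each request to a fixed time bucket of `window_seconds`.
        bucket = int(req.timestamp // window_seconds)
        counts[(req.ip, bucket)] += 1
    return {ip for (ip, _bucket), n in counts.items() if n > max_per_window}
```

Flagged addresses are candidates for review rather than proof of scraping, since shared networks and legitimate crawlers can also produce high request counts.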

Conclusion

An anti scraping clause is a foundational legal tool that clearly informs users that automated data extraction is forbidden without permission. However, the practical enforcement of this clause hinges on strong technical defenses. Combining clear legal language with scalable bot management solutions like CaptchaLa strengthens your ability to protect valuable data and maintain site integrity.

By pairing an anti scraping clause with robust CAPTCHA and bot detection, businesses can better safeguard their platforms from unwanted scraping and maintain control over their digital assets.

For more on bot defenses and implementing CAPTCHA solutions, check out CaptchaLa’s documentation or explore pricing options to find a plan that fits your needs.