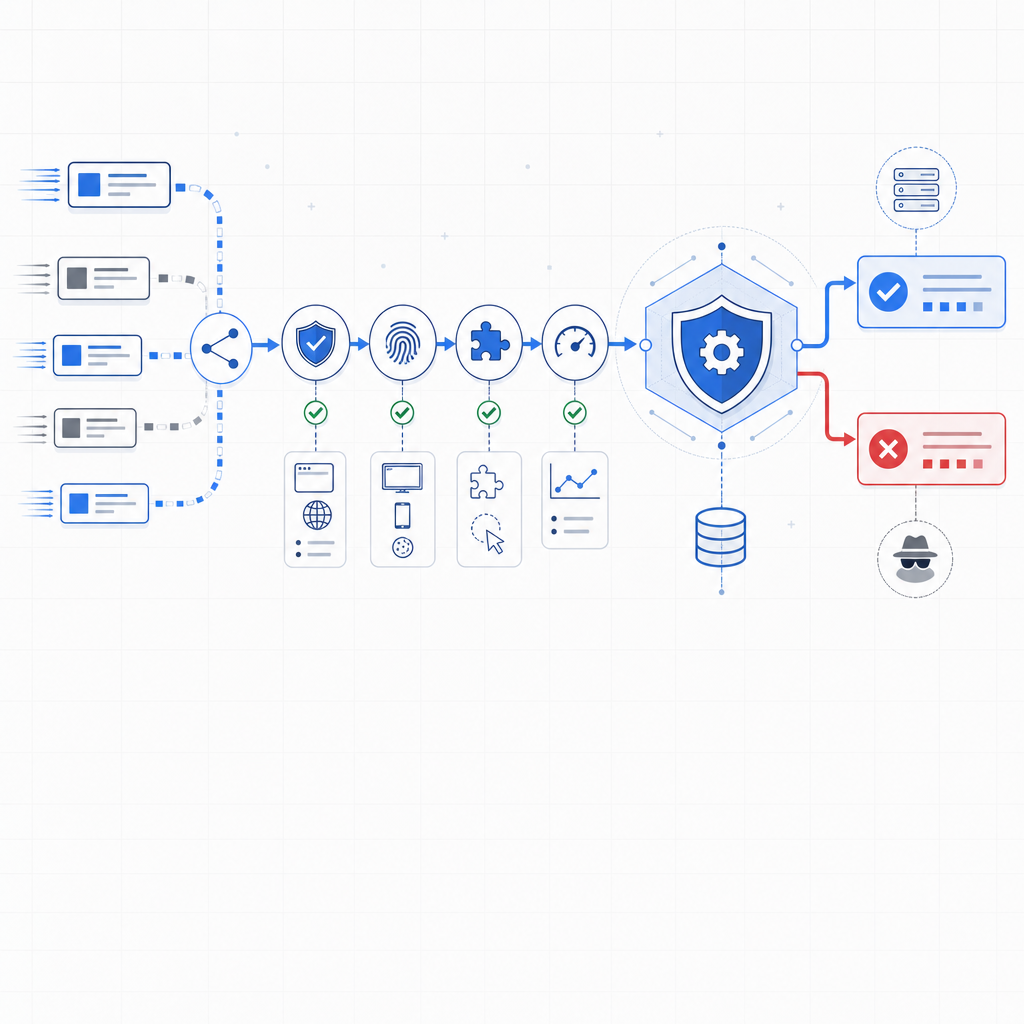

An anti scraping API is a specialized service that distinguishes genuine users from automated scrapers to protect your website’s content and resources. It acts as a gatekeeper, verifying requests and blocking suspicious or malicious automation that attempts to extract data, overload servers, or commit fraud. By integrating an anti scraping API, websites can maintain data integrity, reduce server strain, and uphold business rules without harming user experience.

What Does an Anti Scraping API Do?

An anti scraping API analyzes incoming traffic to identify, challenge, and block bots. It uses a combination of device fingerprinting, behavioral analysis, rate limiting, and challenge-response tests to detect automated tools that try to harvest or misuse your data.

Unlike traditional CAPTCHA systems that rely solely on visual or audio challenges, modern anti scraping APIs aim to detect bots with minimal friction for real users. They monitor client behavior, issue a token to clients that pass, and verify that token server-side before a request is trusted.
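
To make the token idea concrete, here is a minimal sketch of a signed, expiring token that a server can check without trusting the client. It is a generic illustration, not how CaptchaLa or any other provider builds its tokens; `SECRET`, `issue_token`, and `verify_token` are hypothetical names.

```python
import hashlib
import hmac

SECRET = b"demo-secret-change-me"  # hypothetical signing key, kept server-side only


def issue_token(session_id, expires_at):
    """Sign "session_id:expires_at" so the client cannot alter either field."""
    payload = f"{session_id}:{expires_at}"
    signature = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}:{signature}"


def verify_token(token, now):
    """Accept the token only if the signature matches and it has not expired."""
    try:
        session_id, expires_at, signature = token.rsplit(":", 2)
        expires = int(expires_at)
    except ValueError:
        return False  # Malformed token
    payload = f"{session_id}:{expires_at}"
    expected = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through comparison timing
    return hmac.compare_digest(signature.encode(), expected.encode()) and now < expires


token = issue_token("session-abc", expires_at=100)
```

With this sketch, `verify_token(token, now=50)` accepts the token, while an expired or tampered token is rejected.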

Key Features Typically Offered

- Real-time threat detection: Spot suspicious activity early.

- Challenge issuance: Trigger challenges (CAPTCHAs, puzzles) for doubtful traffic.

- Token validation: Confirm user authenticity via server-side token checks.

- Rate limiting and IP reputation: Restrict repeated suspicious requests.
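
As an illustration of the rate limiting feature, the following sketch implements a simple in-memory sliding-window limiter keyed by client IP. The class name and limits are assumptions chosen for the example; a production setup would more likely use a shared store so that limits hold across servers.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` per `window_seconds` for each client key."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client key -> timestamps of recent requests

    def allow(self, client_key, now=None):
        now = time.monotonic() if now is None else now
        hits = self._hits[client_key]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # Over the limit: block or escalate to a challenge
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(max_requests=3, window_seconds=10)
results = [limiter.allow("203.0.113.7", now=t) for t in (0, 1, 2, 3, 11)]
print(results)  # [True, True, True, False, True]
```

The fourth request is refused because three requests already fall inside the 10-second window; by the fifth, the two oldest have aged out.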

Popular Solutions: How CaptchaLa Compares

When considering anti scraping APIs, common names include Google reCAPTCHA, hCaptcha, Cloudflare Turnstile, and CaptchaLa. Each has unique approaches:

| Feature / Provider | CaptchaLa | reCAPTCHA | hCaptcha | Cloudflare Turnstile |
|---|---|---|---|---|
| Open integrations | JS, Vue, React, iOS, Android, Flutter, Electron | JS, Android, iOS | JS, Android, iOS | JS only |
| Server SDKs | PHP, Go | Limited official SDKs | Limited official SDKs | Limited official SDKs |
| Token validation | REST API with App Key/Secret | REST API | REST API | REST API |
| UI languages | 8 | 50+ | 50+ | Multiple |
| Pricing | Free tier + multi-tier plans | Free, enterprise pricing | Per challenge pricing | Included with Cloudflare services |

CaptchaLa emphasizes developer-friendly multi-platform support with native SDKs and straightforward token validation, suitable for teams wanting more control over bot detection without sacrificing user experience.

How to Implement an Anti Scraping API Effectively

Implementing an anti scraping API involves more than just copying code snippets. The goal is to balance security and usability:

- Integrate client-side SDK/library: Load the challenge widget or runtime script (e.g., via a loader URL) on your website or app.

- Trigger challenges conditionally: Decide when suspicious traffic needs to be challenged versus silently monitored.

- Validate tokens server-side: Use the API endpoint (e.g., https://apiv1.captcha.la/v1/validate) to verify the `pass_token` with your app credentials. Reject requests if the token is invalid or absent.

- Track suspicious patterns: Implement rate limiting and IP reputation services to detect repeated abuse attempts.

- Log and analyze events: Maintain audit trails to tune detection sensitivity and filter false positives.
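
The conditional-challenge and pattern-tracking steps above can be combined into a small decision function. The sketch below is illustrative only: `RequestSignals`, `decide`, and both thresholds are hypothetical names and values, not part of any provider's API, and real thresholds would need tuning against your own traffic.

```python
from dataclasses import dataclass


@dataclass
class RequestSignals:
    requests_last_minute: int  # e.g. from your rate limiter
    has_valid_token: bool      # result of server-side token validation
    ip_flagged: bool           # e.g. from an IP reputation source


def decide(signals, soft_limit=30, hard_limit=120):
    """Return "allow", "challenge", or "block" for a single request."""
    if signals.requests_last_minute >= hard_limit:
        return "block"      # Far beyond normal use, even with a valid token
    if signals.has_valid_token:
        return "allow"      # Client already passed a challenge
    if signals.ip_flagged or signals.requests_last_minute >= soft_limit:
        return "challenge"  # Doubtful traffic: ask for proof before serving
    return "allow"          # Looks normal: serve and keep monitoring


print(decide(RequestSignals(requests_last_minute=50, has_valid_token=False, ip_flagged=False)))
```

Keeping the policy in one function makes it easier to log each decision and adjust the thresholds when false positives show up.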

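Here’s a basic example of server-side validation logic in Python, using the `requests` library. The environment variable names are placeholders; store your credentials however your deployment requires:

```python
# Server-side token validation (requires the `requests` package)
import os

import requests

VALIDATE_URL = "https://apiv1.captcha.la/v1/validate"


def validate_user_token(pass_token, client_ip):
    """Return True only if the validation API confirms the token."""
    if not pass_token:
        return False  # Absent token: deny or challenge further

    headers = {
        # Placeholder variable names; credentials are kept out of source code
        "X-App-Key": os.environ["CAPTCHALA_APP_KEY"],
        "X-App-Secret": os.environ["CAPTCHALA_APP_SECRET"],
    }
    data = {"pass_token": pass_token, "client_ip": client_ip}

    try:
        response = requests.post(VALIDATE_URL, headers=headers, json=data, timeout=5)
        return response.status_code == 200 and bool(response.json().get("success"))
    except (requests.RequestException, ValueError):
        return False  # Network error or non-JSON body: deny or challenge further
```

Navigating Challenges and Avoiding Pitfalls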

Anti scraping APIs improve security but come with considerations:

- False positives may affect UX: Strict bot filtering sometimes blocks legitimate users or bots serving valid purposes (e.g., search engine crawlers). Configuration tuning is essential.

- Performance overhead: Additional validation steps introduce latency; caching a successful verification for a short period, instead of re-validating on every request, can reduce delays.

- Privacy and compliance: Make sure your anti scraping service respects user privacy and complies with GDPR or similar regulations by using first-party data when possible.

- Integration complexity: Choose a provider with clear documentation and SDK support to streamline deployment.
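
One way to apply the caching idea from the performance point is to remember, for a short time, which sessions have already passed validation. This is a sketch under assumptions: `VerifiedSessionCache` is a hypothetical helper, the session ID is whatever your application already uses, and the time-to-live should stay short so that a stolen session cannot skip checks for long.

```python
import time


class VerifiedSessionCache:
    """Remember which sessions recently passed validation, for `ttl_seconds`."""

    def __init__(self, ttl_seconds):
        self.ttl_seconds = ttl_seconds
        self._expires_at = {}  # session id -> expiry timestamp

    def mark_verified(self, session_id, now=None):
        now = time.monotonic() if now is None else now
        self._expires_at[session_id] = now + self.ttl_seconds

    def is_verified(self, session_id, now=None):
        now = time.monotonic() if now is None else now
        expiry = self._expires_at.get(session_id)
        if expiry is None or now >= expiry:
            self._expires_at.pop(session_id, None)  # Forget expired entries
            return False
        return True


cache = VerifiedSessionCache(ttl_seconds=300)
cache.mark_verified("session-abc", now=0)
```

Here `cache.is_verified("session-abc", now=100)` is true, so the request can skip a second round trip to the validation API, while a check at `now=300` or later falls back to full validation.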

Why Choose CaptchaLa’s Anti Scraping API?

CaptchaLa offers native SDKs across major platforms: Web (JS/Vue/React), iOS, Android, Flutter, and Electron. Its REST API for server-side validation is simple to call and uses first-party data only, which helps with privacy compliance. The free tier supports up to 1,000 monthly validations, and paid tiers scale to millions for business use. CaptchaLa also publishes usage guidelines to help developers get started.

As an independent SaaS, CaptchaLa continues to develop features aimed at improving bot defense while accommodating organizational needs such as multilanguage support and fine-grained access controls.

Where to Go Next

For detailed instructions on integrating CaptchaLa’s anti scraping API and understanding tiered pricing, visit the CaptchaLa documentation and explore pricing plans. Implementing a solid bot defense strategy can safeguard your data and improve overall site performance with minimal impact on legitimate users.