Anti scraping AI is software that detects and slows automated collection of data before it can overwhelm your pages, APIs, or checkout flows. The practical goal is simple: identify bots early, verify suspicious sessions selectively, and keep legitimate users moving with as little friction as possible.

That sounds straightforward, but the details matter. Scrapers rarely look like a single “bad” actor anymore. They rotate IPs, mimic browsers, replay cookies, and blend into normal traffic patterns. A good anti scraping AI system looks at behavior across requests, device signals, challenge results, and risk patterns over time rather than relying on one brittle rule.

What anti scraping AI actually does

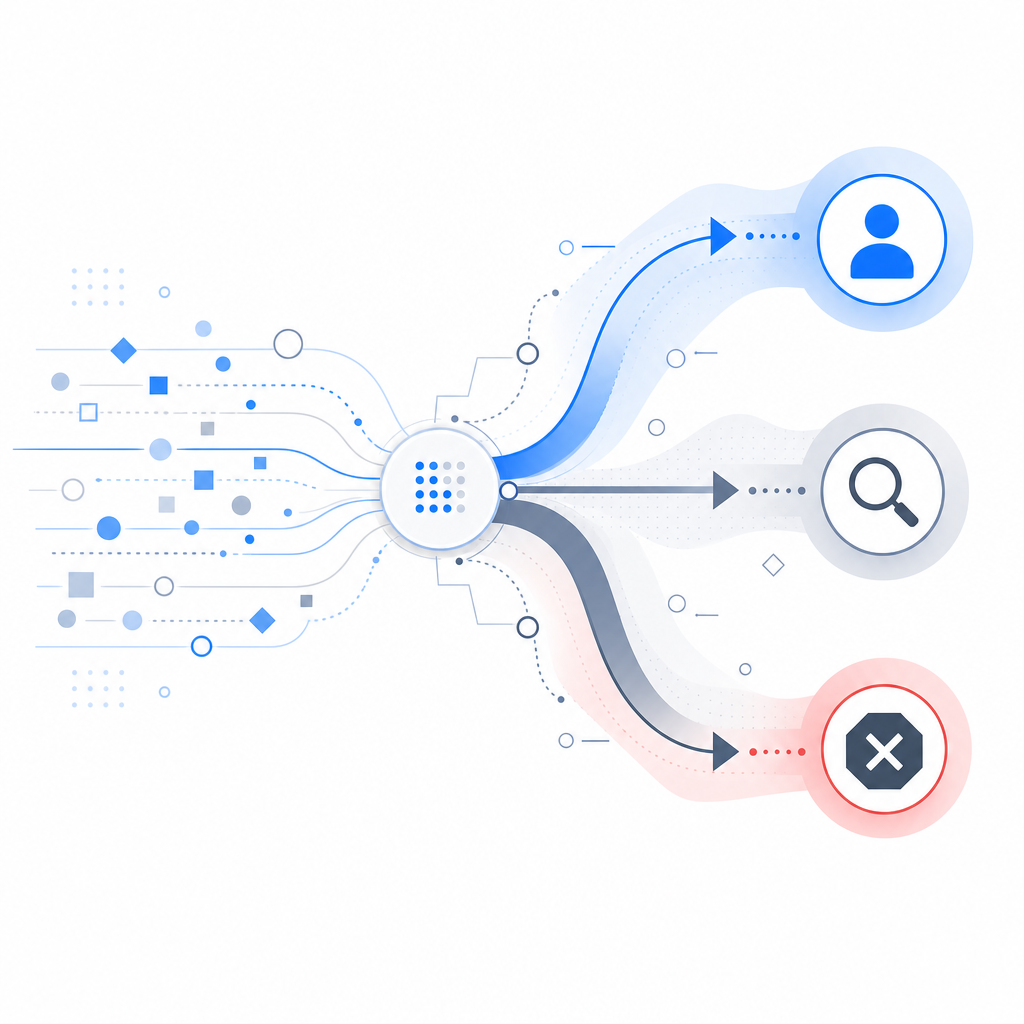

At a high level, anti scraping AI is a risk engine for automated traffic. It does not need to “understand” every scraper technique in advance. Instead, it learns which combinations of signals are unusual for your app and applies the right response: allow, step up, or block.

The signals it usually weighs

- Request velocity and burst shape: A human browsing product pages behaves differently from a scraper harvesting thousands of records in a minute. Velocity alone is not enough, but it is a useful feature when combined with session context.

- Navigation consistency: Real users tend to follow logical page paths. Scrapers often jump directly to deep URLs, repeat endpoints, or request resources that a browser would not load in that order.

- Client integrity: Browser fingerprint fragments, JavaScript execution, cookie continuity, and challenge completion all help distinguish a normal session from automation.

- Network reputation with context: IP reputation still matters, but it is weak by itself. Proxy-heavy traffic can look clean for a moment and then become obviously synthetic when paired with timing and behavior clues.

- Outcome-based scoring: Instead of only asking “is this a bot?”, stronger systems ask “what should happen next?” That might mean a silent pass, a lightweight challenge, or a stricter verification path.
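To make the idea of weighing signals concrete, here is a minimal sketch that combines a few normalized signals into one score and maps it to allow, step up, or block. The signal names, weights, and thresholds are all assumptions for illustration; a real system would learn or tune them from its own traffic.

```javascript
// Hypothetical signal weights; real values would come from tuning on your own traffic.
const WEIGHTS = {
  velocity: 0.3,        // request rate relative to normal sessions
  navigation: 0.2,      // how unusual the page path is
  clientIntegrity: 0.3, // failed JS execution, broken cookie continuity, etc.
  network: 0.2,         // IP or proxy reputation
};

// Assumed thresholds for the three outcomes.
const STEP_UP_AT = 0.4;
const BLOCK_AT = 0.8;

// Each signal is expected to be a number in [0, 1], where 1 is most suspicious.
// Missing signals count as 0, and out-of-range values are clamped.
function riskScore(signals) {
  let score = 0;
  for (const [name, weight] of Object.entries(WEIGHTS)) {
    const value = Math.min(1, Math.max(0, signals[name] ?? 0));
    score += weight * value;
  }
  return score;
}

// Map the combined score to one of the three responses.
function decide(signals) {
  const score = riskScore(signals);
  if (score >= BLOCK_AT) return "block";
  if (score >= STEP_UP_AT) return "step_up";
  return "allow";
}
```

With these example weights, a bad network reputation on its own (`decide({ network: 1 })`) still results in `"allow"`, which mirrors the point that IP reputation is weak by itself and only becomes decisive alongside other signals.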

A well-designed system should stay mostly invisible to good traffic. If every visitor gets stopped, you are not defending your site; you are just shifting the pain to users.

Why scrapers are harder to catch now

A few years ago, many defenses leaned heavily on static rules: block this IP range, rate-limit that endpoint, challenge every repeated request. Those methods still help, but they are easier for determined automation to work around.

Today’s scraping operations often combine:

- residential or mobile proxies

- headless browsers with improved rendering

- human-like delays and cursor simulation

- session reuse across many targets

- distributed workers to avoid obvious spikes

This means the most useful defenses are adaptive. They compare current behavior to the baseline of your own traffic, not to an abstract internet average. That baseline can differ dramatically between an e-commerce catalog, a ticketing flow, and an internal admin portal.
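One simple way to compare current behavior to your own baseline is to measure how far a value sits from the historical mean, in standard deviations. The sketch below does that for requests per minute; the sample numbers are invented, and production systems usually use more robust statistics than a plain mean and standard deviation.

```javascript
// Mean and (population) standard deviation of a non-empty list of numbers.
function summarize(samples) {
  const mean = samples.reduce((sum, x) => sum + x, 0) / samples.length;
  const variance =
    samples.reduce((sum, x) => sum + (x - mean) ** 2, 0) / samples.length;
  return { mean, stdDev: Math.sqrt(variance) };
}

// How many standard deviations the current value sits above the baseline mean.
function deviationFromBaseline(baselineSamples, current) {
  const { mean, stdDev } = summarize(baselineSamples);
  // A perfectly flat baseline has no spread, so any increase is treated as extreme.
  if (stdDev === 0) return current > mean ? Infinity : 0;
  return (current - mean) / stdDev;
}

// Hypothetical per-session requests-per-minute history for two kinds of app.
const catalogBaseline = [10, 12, 8, 11, 9];
const adminBaseline = [1, 2, 1, 2, 1];

// The same 12 requests per minute is ordinary for the catalog
// but far outside the norm for the admin portal.
const catalogDeviation = deviationFromBaseline(catalogBaseline, 12);
const adminDeviation = deviationFromBaseline(adminBaseline, 12);
```

The design point is that the threshold lives in units of "deviation from this app's normal", not in absolute request counts, so one rule can serve very different traffic profiles.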

Anti scraping AI vs traditional bot tools

| Approach | Strength | Limitation |
|---|---|---|
| Static IP blocking | Fast and simple | Easy to evade with proxies |
| Rate limiting | Good for burst control | Can hurt valid users and shared networks |
| CAPTCHA-only gates | Familiar to teams and users | Can be annoying and sometimes solvable at scale |
| WAF rules | Useful for known patterns | Requires ongoing tuning |
| Anti scraping AI | Adapts to behavior and context | Needs good telemetry and careful thresholds |

This is why many teams combine layers instead of expecting one control to do everything. For example, a challenge platform may be used only when the risk score crosses a threshold, while low-risk sessions are allowed through quietly.
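As a rough sketch of that layering, the code below runs a static blocklist, a fixed-window rate limit, and a risk-score threshold in sequence and keeps the strictest decision. Every name, limit, and threshold here is hypothetical, and the in-memory rate limiter is for illustration only; it would not be shared across multiple servers.

```javascript
// Each layer returns "allow", "step_up", or "block". All names here are illustrative.
function makeBlocklistLayer(blockedIps) {
  return (req) => (blockedIps.has(req.ip) ? "block" : "allow");
}

// Fixed-window rate limit per IP; `now` is passed in so the logic is easy to test.
function makeRateLimitLayer(maxPerWindow, windowMs) {
  const windows = new Map(); // ip -> { start, count }
  return (req, now) => {
    const w = windows.get(req.ip);
    if (!w || now - w.start >= windowMs) {
      windows.set(req.ip, { start: now, count: 1 });
      return "allow";
    }
    w.count += 1;
    // Over the limit triggers a challenge rather than a hard block,
    // to limit the harm to valid users on shared networks.
    return w.count > maxPerWindow ? "step_up" : "allow";
  };
}

// Challenge only when the (externally computed) risk score crosses the threshold.
function makeRiskLayer(threshold) {
  return (req) => (req.riskScore >= threshold ? "step_up" : "allow");
}

// Run layers in order and return the strictest decision, stopping early on a block.
const SEVERITY = { allow: 0, step_up: 1, block: 2 };
function evaluate(layers, req, now = Date.now()) {
  let result = "allow";
  for (const layer of layers) {
    const decision = layer(req, now);
    if (SEVERITY[decision] > SEVERITY[result]) result = decision;
    if (result === "block") break;
  }
  return result;
}
```

Low-risk sessions that stay under the rate limit pass through every layer without seeing a challenge, which is the quiet path described above.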

Where it fits in a real application

Anti scraping AI works best when it sits close to the request path and can make quick decisions. Common places include:

- product listing pages

- pricing and inventory endpoints

- login and signup flows

- search and checkout funnels

- public APIs with valuable data

A typical integration pattern is:

- Issue a challenge token or session signal

- Collect client-side and request-side proof

- Validate server-side

- Return an allow/step-up/block decision

- Log the outcome for tuning and investigation

That server-side validation step matters. If your defense only checks a browser-side flag, that flag is easy to forge or replay. Stronger implementations validate against a backend endpoint and tie the result to your own secret key.

For example, CaptchaLa exposes a validation flow that posts to https://apiv1.captcha.la/v1/validate with pass_token and client_ip, authenticated with X-App-Key and X-App-Secret. There is also a server-token issuance endpoint at https://apiv1.captcha.la/v1/server/challenge/issue, which is useful when you want the backend to drive challenge creation rather than trusting the client alone. If you are evaluating implementation details, the docs are the best place to start.

```javascript
// Sketch of a server-side validation flow (Node.js 18+, which provides a global fetch).
async function validateCaptcha(passToken, clientIp) {
  const response = await fetch("https://apiv1.captcha.la/v1/validate", {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      "X-App-Key": process.env.CAPTCHA_APP_KEY,
      "X-App-Secret": process.env.CAPTCHA_APP_SECRET
    },
    body: JSON.stringify({
      pass_token: passToken,
      client_ip: clientIp
    })
  });

  // Surface HTTP-level failures instead of trying to parse an error page as a result.
  if (!response.ok) {
    throw new Error(`Validation request failed with HTTP ${response.status}`);
  }

  // The caller must check the verification result in the parsed body
  // before allowing the request; see the docs for the response fields.
  return response.json();
}
```

CaptchaLa also supports native SDKs for Web, iOS, Android, Flutter, and Electron, plus server SDKs such as captchala-php and captchala-go. That matters if you want one verification model across web and mobile rather than stitching together multiple vendors with different semantics.
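The integration pattern from earlier in this section (collect proof, validate server-side, decide, log) can be sketched as a single request handler. This is an illustration under stated assumptions, not a vendor-defined flow: `validate`, `isPassed`, and `log` are hypothetical functions you would supply, which keeps the sketch independent of any particular response format.

```javascript
// `validate`, `isPassed`, and `log` are injected so this sketch does not assume
// any particular vendor response format. All three are hypothetical names.
async function handleProtectedRequest(request, { validate, isPassed, log }) {
  // No proof from the client: ask for a challenge instead of trusting the session.
  if (!request.passToken) {
    log({ clientIp: request.clientIp, decision: "step_up", reason: "missing_token" });
    return "step_up";
  }

  let decision;
  let reason;
  try {
    // Server-side validation: the browser's claim is never trusted on its own.
    const result = await validate(request.passToken, request.clientIp);
    decision = isPassed(result) ? "allow" : "block";
    reason = decision === "allow" ? "validated" : "validation_failed";
  } catch (err) {
    // Re-challenging on validator errors is a policy choice; some teams fail open instead.
    decision = "step_up";
    reason = "validator_error";
  }

  // Log every outcome so thresholds can be tuned and incidents investigated.
  log({ clientIp: request.clientIp, decision, reason });
  return decision;
}
```

In practice, `validate` could be a function like the `validateCaptcha` example, and `isPassed` would read whichever success field the provider documents.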

Choosing the right defense for your use case

Not every team needs the same level of strictness. A content site that worries about aggressive indexing needs a different configuration than a fintech onboarding flow or a public API.

When comparing tools like reCAPTCHA, hCaptcha, and Cloudflare Turnstile, the right question is usually not “which one is universally better?” It is “which one fits my UX, risk tolerance, and data policy?”

A few practical considerations:

- User experience: some challenge products are more visible to end users than others. If your audience is high-intent and mobile-heavy, friction can have a real conversion cost.

- Privacy and data handling: some teams prefer a first-party model with tighter control over what is collected and where it is processed.

- Integration surface: check whether you need only a web widget or also native mobile and desktop coverage.

- Operational flexibility: can you tune challenge rates by endpoint, country, session age, or risk score?

- Telemetry quality: if you cannot explain why a session was challenged, it is hard to improve the system later.
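To illustrate what operational flexibility can look like, here is a small sketch of per-endpoint challenge thresholds with an adjustment for session age. The paths, thresholds, and the five-minute cutoff are invented examples, not settings from any specific product.

```javascript
// Hypothetical policy table: `challengeAt` is the risk score in [0, 1] at or above
// which a session is challenged. Paths and numbers are examples only.
const POLICIES = [
  { prefix: "/api/pricing", challengeAt: 0.3 },
  { prefix: "/login", challengeAt: 0.4 },
  { prefix: "/", challengeAt: 0.7 }, // default for everything else
];

// Pick the most specific (longest) matching prefix. Paths are assumed to start with "/".
function policyFor(path) {
  return POLICIES
    .filter((p) => path.startsWith(p.prefix))
    .sort((a, b) => b.prefix.length - a.prefix.length)[0];
}

// Newer sessions have less history, so this sketch tightens the threshold for them.
function shouldChallenge({ path, riskScore, sessionAgeMinutes }) {
  const { challengeAt } = policyFor(path);
  const threshold = sessionAgeMinutes < 5 ? challengeAt - 0.1 : challengeAt;
  return riskScore >= threshold;
}
```

With this table, a session scoring 0.35 is challenged on `/api/pricing/...` but not on a general content page, so sensitive endpoints get stricter treatment without raising friction everywhere.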

CaptchaLa is one option in that space, especially if you want first-party data only and need support across web and native clients. Its pricing is also straightforward to evaluate if you are planning rollout stages, with a free tier of 1,000 verifications per month and paid tiers that range from 50K–200K up to 1M verifications per month. The pricing page is useful if you are mapping defense cost to traffic.
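When mapping cost to traffic, a back-of-the-envelope estimate is often enough to pick a starting tier. The sketch below assumes one verification is consumed per challenged session, which may not match how a given provider counts usage, and the traffic figures are hypothetical.

```javascript
// Estimate monthly verifications from protected sessions per day and the share
// of those sessions that gets challenged. Assumes one verification per challenge.
function estimateMonthlyVerifications(protectedSessionsPerDay, challengeRate, daysPerMonth = 30) {
  return Math.round(protectedSessionsPerDay * challengeRate * daysPerMonth);
}

const FREE_TIER_LIMIT = 1000; // the free tier figure quoted above

// Hypothetical traffic: 2,000 protected sessions per day, 5% of them challenged.
const estimate = estimateMonthlyVerifications(2000, 0.05);
const fitsFreeTier = estimate <= FREE_TIER_LIMIT;
```

Because the challenge rate multiplies directly into volume, selective step-up challenges reduce cost as well as user friction.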

How to make anti scraping AI work without hurting real users

The best anti scraping AI deployments are tuned, not just enabled. The aim is not maximum blocking; it is maximum confidence per interaction.

Start with a narrow set of endpoints and measure:

- challenge rate

- pass rate by device and country

- conversion impact

- support tickets related to access issues

- repeat offender patterns after enforcement

Then gradually expand. If you challenge everyone on day one, you will not know whether you are stopping scrapers or just frustrating your most valuable users.
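Two of the metrics above, challenge rate and pass rate by country or device, can be computed directly from decision logs. This sketch assumes a hypothetical log record shape of `{ country, device, challenged, passed }`; your own logging schema will differ.

```javascript
// Share of all sessions that were challenged.
function challengeRate(events) {
  if (events.length === 0) return 0;
  return events.filter((e) => e.challenged).length / events.length;
}

// Pass rate among challenged sessions, grouped by a field such as "country" or "device".
function passRateBy(events, key) {
  const groups = new Map(); // group value -> { challenged, passed }
  for (const e of events) {
    if (!e.challenged) continue; // unchallenged sessions have no pass/fail outcome
    const g = groups.get(e[key]) ?? { challenged: 0, passed: 0 };
    g.challenged += 1;
    if (e.passed) g.passed += 1;
    groups.set(e[key], g);
  }
  const rates = {};
  for (const [name, g] of groups) rates[name] = g.passed / g.challenged;
  return rates;
}
```

A group whose pass rate is much lower than the rest is worth a closer look: it may be a bot cluster, or it may be legitimate users on a device or network the challenge handles poorly.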

A good rollout plan usually looks like this:

- Observe first: Run in monitor mode if available. Understand the traffic shape before enforcing anything.

- Protect the highest-value paths: Begin with search, signup, inventory, or API endpoints that are easy to abuse.

- Use step-up challenges selectively: Challenge only sessions that look risky or expensive.

- Review false positives regularly: A defense that blocks legitimate browsers on older devices is too aggressive.

- Feed outcomes back into policy: Every allowed or blocked session is a training signal for your thresholds and routing logic.
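If your tooling has no built-in monitor mode, the "observe first" step can be approximated with a thin wrapper that records what would have happened without enforcing it. The function and field names below are hypothetical.

```javascript
// Wrap any decision function so that, outside "enforce" mode, the decision is
// recorded but every session is still allowed through.
function withMode(decideFn, mode, record) {
  return (session) => {
    const wouldBe = decideFn(session);
    const enforced = mode === "enforce" ? wouldBe : "allow";
    record({ sessionId: session.id, wouldBe, enforced });
    return enforced;
  };
}

// Example decision function for illustration: block anything above a fixed score.
const strictDecide = (session) => (session.riskScore > 0.8 ? "block" : "allow");
```

Usage: start with `withMode(strictDecide, "monitor", saveToLog)`, review the recorded `wouldBe` values for false positives, and switch the mode to `"enforce"` once the would-be blocks look right. Here `saveToLog` stands in for whatever logging function you already have.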

When implemented thoughtfully, anti scraping AI becomes a quiet layer of operational security rather than a visible obstacle. That is usually the sweet spot: bots lose efficiency, humans barely notice, and your team gets more reliable traffic.

Where to go next: if you are evaluating a rollout, start with the docs or review the pricing tiers to match your expected verification volume.