An anti gore bot strategy is simply a set of controls that blocks automated abuse aimed at posting, spreading, or amplifying graphic or violent content before it reaches users. If your site accepts logins, comments, uploads, invites, or messages, you need more than moderation alone: you need bot defense at the edge and on the server.

The tricky part is that “gore bot” abuse usually looks like normal traffic at first. It may come from fresh accounts, rotating IPs, scripted signups, or replayed requests, and it often targets the weakest entry point rather than the most obvious public page. That means the defender’s job is to detect abnormal behavior early, challenge it proportionally, and keep the experience smooth for legitimate people.

What “anti gore bot” really means

The phrase is not about one single product or one single attack pattern. It describes a defensive posture against automated accounts or scripts that try to seed violent, graphic, or disturbing content across a service. In practice, that can include:

- Mass account creation for abuse campaigns.

- Automated posting into comment threads, chat rooms, or forums.

- Upload abuse where scripts attempt to submit restricted media at scale.

- Invitation or referral abuse that helps bad actors re-enter after takedowns.

- Token replay attempts that reuse previously observed client sessions.

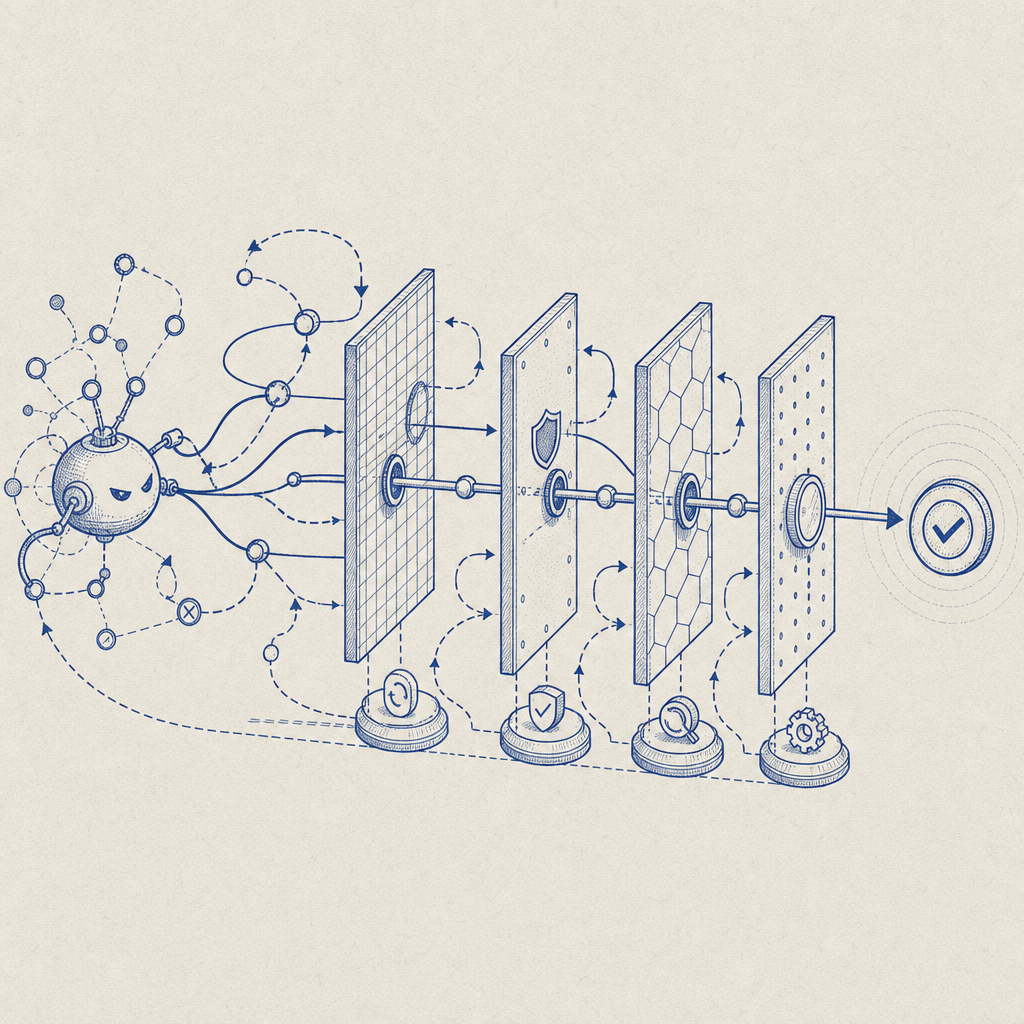

The right response is not to block everything. It is to raise the cost of automation while keeping normal interactions fast. That usually means a mix of client-side signals, server validation, rate controls, and human-friendly challenge steps when risk increases.

A common mistake is to treat all suspicious traffic the same. A brand-new account posting once from a consumer network is different from a script issuing 200 submissions a minute across many IPs. Your controls should reflect that difference.

Detection signals defenders should watch

A practical anti gore bot setup starts with signals you can trust. Some signals are easy to fake on their own, so the goal is correlation rather than a single “magic” indicator.

Useful signals for abuse scoring

- Behavioral pacing: submissions arriving faster than a human could plausibly produce them.

- Session consistency: whether the same client maintains stable cookies, tokens, and navigation order.

- IP and ASN patterns: bursts from hosting providers or known proxy-heavy ranges.

- Device continuity: repeated use of the same browser fingerprint, storage pattern, or WebView-like behavior.

- Action sequencing: challenge page skipped, then direct POST to a protected endpoint.

- Content reuse: identical payloads, usernames, captions, or file hashes across many accounts.
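As one illustration of the content-reuse signal, the sketch below groups submissions by a normalized hash and reports payloads shared across several accounts. The function names, the normalization rule, and the `min_accounts` default are assumptions made for this example, not part of any particular product.

```python
import hashlib
from collections import defaultdict


def payload_fingerprint(text):
    """Hash a case- and whitespace-normalized payload so trivial edits still collide."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_reused_payloads(submissions, min_accounts=3):
    """Return fingerprints submitted by at least `min_accounts` distinct accounts.

    `submissions` is an iterable of (account_id, payload_text) pairs.
    """
    accounts_by_fingerprint = defaultdict(set)
    for account_id, text in submissions:
        accounts_by_fingerprint[payload_fingerprint(text)].add(account_id)
    return {
        fingerprint: accounts
        for fingerprint, accounts in accounts_by_fingerprint.items()
        if len(accounts) >= min_accounts
    }
```

The same grouping idea applies to file hashes or usernames; exact hashing will miss near-duplicates, so treat a match as one signal among several rather than proof.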

The best implementations score these signals together. A single suspicious field should not automatically ban a user, but a cluster of anomalies often justifies a step-up challenge.

You can think of it as a funnel:

- Low risk: allow.

- Medium risk: challenge.

- High risk: block or require server-side verification.

- Very high risk: rate-limit, quarantine, or escalate to moderation.
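The funnel above can be sketched as a weighted score mapped onto tiers. The signal names, weights, and cut-offs here are hypothetical placeholders chosen for illustration; real values would come from tuning against your own traffic.

```python
from typing import Iterable

# Hypothetical weights and cut-offs; tune them against your own traffic.
WEIGHTS = {
    "impossible_pacing": 40,
    "unstable_session": 20,
    "hosting_asn": 15,
    "skipped_challenge": 35,
    "reused_content": 30,
}
MEDIUM, HIGH, VERY_HIGH = 30, 60, 90


def risk_score(signals: Iterable[str]) -> int:
    """Sum the weights of the signals that fired; unknown names count as 0."""
    return sum(WEIGHTS.get(name, 0) for name in set(signals))


def decide(signals: Iterable[str]) -> str:
    """Map the combined score onto the four funnel tiers."""
    score = risk_score(signals)
    if score >= VERY_HIGH:
        return "quarantine"
    if score >= HIGH:
        return "block"
    if score >= MEDIUM:
        return "challenge"
    return "allow"
```

With these example numbers, a single hosting-provider hit is allowed, while pacing plus content reuse together cross the block threshold. No single weak signal decides the outcome.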

A defender-first architecture for anti gore bot protection

If you are designing controls from scratch, aim for layered verification. The client should obtain a pass token after completing a challenge, and the server should verify that token before allowing the risky action.

For CaptchaLa, the standard flow is straightforward: load the challenge script from the CDN, collect a pass token on the client, then validate it on the server using your app key and secret. The loader is available at https://cdn.captcha-cdn.net/captchala-loader.js, and validation uses:

    POST https://apiv1.captcha.la/v1/validate
    Body: { pass_token, client_ip }
    Headers: X-App-Key, X-App-Secret

A separate server-token flow is also available for issuing challenge-related server tokens:

    POST https://apiv1.captcha.la/v1/server/challenge/issue

That gives you a clean split between front-end interaction and back-end trust. The challenge happens in the browser or app, but the decision happens on your server, where policy can be enforced consistently.
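A minimal server-side sketch of the validate call, using only the Python standard library. The URL, body fields, and header names come from the description above. The JSON encoding, the `Content-Type` header, and the helper names `build_validate_request` and `validate_pass_token` are assumptions for illustration. The response schema is not described here, so the sketch returns the decoded response untouched instead of guessing at its fields.

```python
import json
import urllib.request

VALIDATE_URL = "https://apiv1.captcha.la/v1/validate"


def build_validate_request(pass_token, client_ip, app_key, app_secret):
    """Build the POST request; sending the body as JSON is an assumption."""
    body = json.dumps({"pass_token": pass_token, "client_ip": client_ip}).encode("utf-8")
    return urllib.request.Request(
        VALIDATE_URL,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "X-App-Key": app_key,
            "X-App-Secret": app_secret,
        },
    )


def validate_pass_token(pass_token, client_ip, app_key, app_secret, timeout=5.0):
    """Send the request and return the decoded JSON response as-is.

    How to interpret the response is left to the caller, since its fields
    are not specified in this article; check the official docs.
    """
    request = build_validate_request(pass_token, client_ip, app_key, app_secret)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping request construction separate from sending makes the call easy to unit test without network access, and keeps the secret on the server where it belongs.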

Implementation pattern

A practical flow looks like this:

- Render a challenge only when risk is present or the action is sensitive.

- Receive the pass token in the client after challenge completion.

- Submit the protected form or API request with the token attached.

- Validate the token server-side before writing data or performing the action.

- Log outcomes for later tuning, especially for false positives and replay attempts.
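The last three steps can be sketched as a framework-neutral handler. Here `verify_token` and `save` are hypothetical callables supplied by your application (for example, a wrapper around the validate endpoint and a database write), and the status codes are just one reasonable choice.

```python
import logging

logger = logging.getLogger("bot_defense")


def handle_submission(form, verify_token, save):
    """Validate the pass token before any write.

    `verify_token(pass_token, client_ip) -> bool` and `save(content)` are
    hypothetical callables provided by the application.
    """
    token = form.get("pass_token")
    client_ip = form.get("client_ip")
    if not token:
        logger.info("rejected: missing token ip=%s", client_ip)
        return 400, "challenge required"
    if not verify_token(token, client_ip):
        logger.info("rejected: invalid token ip=%s", client_ip)
        return 403, "verification failed"
    save(form["content"])  # the write happens only after validation succeeds
    logger.info("accepted ip=%s", client_ip)
    return 200, "ok"
```

Because every outcome is logged with the same fields, the log can later be used to compare rejection rates across endpoints and spot false positives.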

If you need native support, CaptchaLa offers client SDKs for the web (JS, Vue, and React) as well as iOS, Android, Flutter, and Electron. On the server side, SDKs are available as captchala-php and captchala-go. Coverage across mobile and desktop matters because abusive automation often doesn’t stay in one channel.

Comparing common CAPTCHA and bot-defense options

Different stacks handle different constraints well. The right choice depends on whether you care most about friction, deployment simplicity, ecosystem fit, or data handling.

| Option | Strengths | Tradeoffs | Good fit for |
|---|---|---|---|
| reCAPTCHA | Widely recognized, broad familiarity | Can add user friction; privacy and ecosystem concerns vary by use case | General web forms and signups |
| hCaptcha | Strong abuse focus, flexible integrations | May require tuning to reduce friction for some audiences | Public-facing forms with abuse pressure |
| Cloudflare Turnstile | Low-friction UX, easy if you already use Cloudflare | Best experience when your stack already aligns with Cloudflare | Sites already on Cloudflare |
| CaptchaLa | Native SDK coverage, first-party data only, clear validation flow | Like any system, it still needs policy tuning and good server checks | Teams that want direct control and multiple app platforms |

No option replaces moderation or content policy. CAPTCHA only helps with the automation layer. If your service is being targeted for harmful content, you still need:

- account review and takedown workflows,

- upload scanning where relevant,

- abuse reporting,

- and rate-based controls on the sensitive endpoints.
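For the rate-based controls in that list, one common technique is a token bucket kept per endpoint and client. The sketch below is a minimal in-memory version; the class names, capacity, and refill rate are assumptions for illustration, and a multi-server deployment would need a shared store instead of a local dictionary.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate_per_second`."""

    def __init__(self, capacity, rate_per_second, now=None):
        self.capacity = float(capacity)
        self.rate_per_second = float(rate_per_second)
        self.tokens = float(capacity)
        self.updated_at = time.monotonic() if now is None else now

    def allow(self, now=None):
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated_at)
        # Refill for the time that passed, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_second)
        self.updated_at = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class EndpointRateLimiter:
    """Keep one bucket per (endpoint, client) pair."""

    def __init__(self, capacity=5, rate_per_second=0.5):
        self.capacity = capacity
        self.rate_per_second = rate_per_second
        self._buckets = {}

    def allow(self, endpoint, client_id, now=None):
        key = (endpoint, client_id)
        if key not in self._buckets:
            self._buckets[key] = TokenBucket(self.capacity, self.rate_per_second, now)
        return self._buckets[key].allow(now)
```

A denied request does not have to be dropped outright; it can just as well trigger a challenge, which keeps the cost low for a legitimate user who happens to post quickly.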

For teams that want implementation guidance rather than guesswork, the docs are the best place to start. If you are mapping rollout costs, the pricing page shows the current tiers, including free and higher-volume plans.

Tuning the system without punishing real users

The hardest part of anti gore bot defense is false positives. A good system should catch the bad automation while preserving trust for real people who are just trying to post, sign in, or upload a file.

That usually means three things:

- Challenge only when needed.

- Keep token lifetimes and validation logic clear.

- Treat repeated failures as a stronger signal than one-off anomalies.
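The third point can be made concrete with a small tracker that counts challenge failures in a sliding window and weights repeats more heavily than a single miss. The window length and the quadratic weighting are illustrative assumptions, not recommended values.

```python
import time
from collections import defaultdict, deque


class FailureTracker:
    """Count challenge failures per client inside a sliding time window."""

    def __init__(self, window_seconds=600.0):
        self.window_seconds = window_seconds
        self._failures = defaultdict(deque)

    def record_failure(self, client_id, now=None):
        now = time.monotonic() if now is None else now
        self._failures[client_id].append(now)

    def recent_failures(self, client_id, now=None):
        now = time.monotonic() if now is None else now
        events = self._failures.get(client_id)
        if not events:
            return 0
        while events and now - events[0] > self.window_seconds:
            events.popleft()  # drop failures that fell out of the window
        return len(events)

    def risk_points(self, client_id, now=None):
        """One failure adds little; each repeat adds more (quadratic, capped at 100)."""
        count = self.recent_failures(client_id, now)
        return min(100, 5 * count * count)
```

Under these example numbers one failed challenge contributes 5 points and three contribute 45, so a person who mistypes once is barely affected while a script that keeps failing escalates quickly.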

Here is a simple server-side policy sketch:

    if request.endpoint in sensitive_endpoints:
        risk = score_ip(request.ip) + score_session(request.session) + score_behavior(request)
        if risk >= HIGH:
            block_request()
        elif risk >= MEDIUM:
            require_captcha_challenge()
            verify_pass_token_with_server()
        else:
            allow_request()

A few details matter a lot in production:

- Bind the validation to the current client_ip when your architecture supports it.

- Validate before any permanent write, not after.

- Use per-endpoint thresholds instead of one global rule.

- Re-evaluate thresholds by region, device type, and abuse volume.

- Keep logs on first-party data only, especially if you operate in regulated environments.
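Per-endpoint thresholds can be expressed as plain configuration with a fallback default. The endpoint paths and numbers below are hypothetical examples, chosen only to show uploads being treated more strictly than comments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    medium: int  # score at which a challenge is required
    high: int    # score at which the request is blocked


# Hypothetical endpoints and values; stricter for uploads than for comments.
DEFAULT = Thresholds(medium=40, high=80)
PER_ENDPOINT = {
    "/signup": Thresholds(medium=30, high=70),
    "/upload": Thresholds(medium=20, high=50),
    "/comment": Thresholds(medium=40, high=80),
}


def action_for(endpoint: str, risk: int) -> str:
    """Pick an action using the endpoint's own limits, or the default."""
    limits = PER_ENDPOINT.get(endpoint, DEFAULT)
    if risk >= limits.high:
        return "block"
    if risk >= limits.medium:
        return "challenge"
    return "allow"
```

The same risk score of 25 leads to a challenge on `/upload` but is allowed on `/comment`. The table could be keyed further by region or device type if thresholds need to vary along those dimensions as well.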

If you ship across web, mobile, and desktop, make sure the same policy is enforced everywhere. Attackers love the weakest surface, and a mobile API endpoint can become the path of least resistance if the web app is well protected.

Where anti gore bot efforts go wrong

Most failures are operational, not technical. Teams either over-block and frustrate legitimate users, or under-block and let scripts scale faster than moderation can keep up.

Common mistakes include:

- trusting the client without server validation,

- using a CAPTCHA only on sign-up, not on posting or upload endpoints,

- ignoring replayed tokens,

- failing to monitor challenge success rates by endpoint,

- and applying the same threshold to every endpoint, regardless of how risky it is.
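To address replayed tokens specifically, a server can remember which pass tokens it has already accepted and refuse a second use. The sketch below is an in-memory illustration only: `ReplayGuard` and its TTL are assumptions, the TTL should be at least as long as the token lifetime, and whether the validation service already rejects reused tokens is something to confirm in its documentation.

```python
import time


class ReplayGuard:
    """Remember recently accepted tokens so each one is honored only once."""

    def __init__(self, ttl_seconds=300.0):
        self.ttl_seconds = ttl_seconds
        self._seen = {}  # token -> expiry timestamp

    def first_use(self, token, now=None):
        """Return True the first time a token is seen, False on a replay."""
        now = time.monotonic() if now is None else now
        # Forget entries whose TTL has passed so the table stays bounded.
        self._seen = {t: exp for t, exp in self._seen.items() if exp > now}
        if token in self._seen:
            return False
        self._seen[token] = now + self.ttl_seconds
        return True
```

With several application servers the seen-token table would have to live in a shared store, otherwise a replay sent to a different server would pass.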

A better approach is to treat bot defense as a feedback loop. Review challenge outcomes, inspect blocked traffic patterns, and adjust your policy as abuse changes. If one endpoint starts receiving strange bursts of activity, isolate it quickly instead of raising friction across the whole product.
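One way to start that feedback loop is to compute challenge pass rates per endpoint from logged outcomes and flag the outliers. The event shape, the minimum sample size, and the 50% cut-off in this sketch are assumptions for illustration.

```python
from collections import Counter


def pass_rates(events):
    """Compute the challenge pass rate per endpoint.

    `events` is a list of (endpoint, passed) pairs taken from your logs.
    """
    shown, passed = Counter(), Counter()
    for endpoint, ok in events:
        shown[endpoint] += 1
        if ok:
            passed[endpoint] += 1
    return {endpoint: passed[endpoint] / shown[endpoint] for endpoint in shown}


def endpoints_to_review(events, min_events=20, low=0.5):
    """Flag endpoints with enough traffic and an unusually low pass rate."""
    counts = Counter(endpoint for endpoint, _ in events)
    rates = pass_rates(events)
    return sorted(e for e, r in rates.items() if counts[e] >= min_events and r < low)
```

A low pass rate is ambiguous on its own: it can mean a bot burst is being stopped, or that real users are struggling with the challenge. A flagged endpoint is a prompt to inspect the traffic, not an automatic reason to tighten the policy.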

That is the real meaning of anti gore bot defense: not a single widget, but an adaptive system that protects users, content, and moderation teams from automated abuse.

Where to go next: explore the docs for implementation details, or check pricing if you want to estimate rollout cost for your traffic volume.