Anti bot web scraping is a defense mechanism websites use to prevent unauthorized automated tools from extracting content, data, or resources. It works by identifying and blocking bots that mimic human behavior to scrape websites at scale, which can harm a service, expose proprietary data, or overload servers. Anti bot solutions apply challenges, rate limiting, fingerprinting, and other heuristics to distinguish legitimate users from malicious automation. Effective anti bot web scraping technology strengthens security without compromising user experience.

What is Anti Bot Web Scraping and Why Does It Matter?

Web scraping involves automatically extracting web data, often using scripted bots. While scraping can be used for legitimate purposes like market research or archiving, malicious scraping is typically aimed at content theft, price harvesting, spamming, or competitor intelligence gathering. Anti bot web scraping is the set of technologies and practices that actively detect and mitigate unauthorized automated requests.

Without effective protection, web scraping bots can:

- Strip your site of valuable content or intellectual property

- Distort analytics and business insights

- Increase server load, affecting uptime and cost

- Expose security weaknesses when automated traffic goes unchecked

The main goal is to challenge suspicious visitors in a way that only genuine humans can pass, thereby curbing the scale and speed of automated scraping attacks.

Common Anti Bot Web Scraping Techniques

Websites use a mix of defensive layers to deter automated scraping:

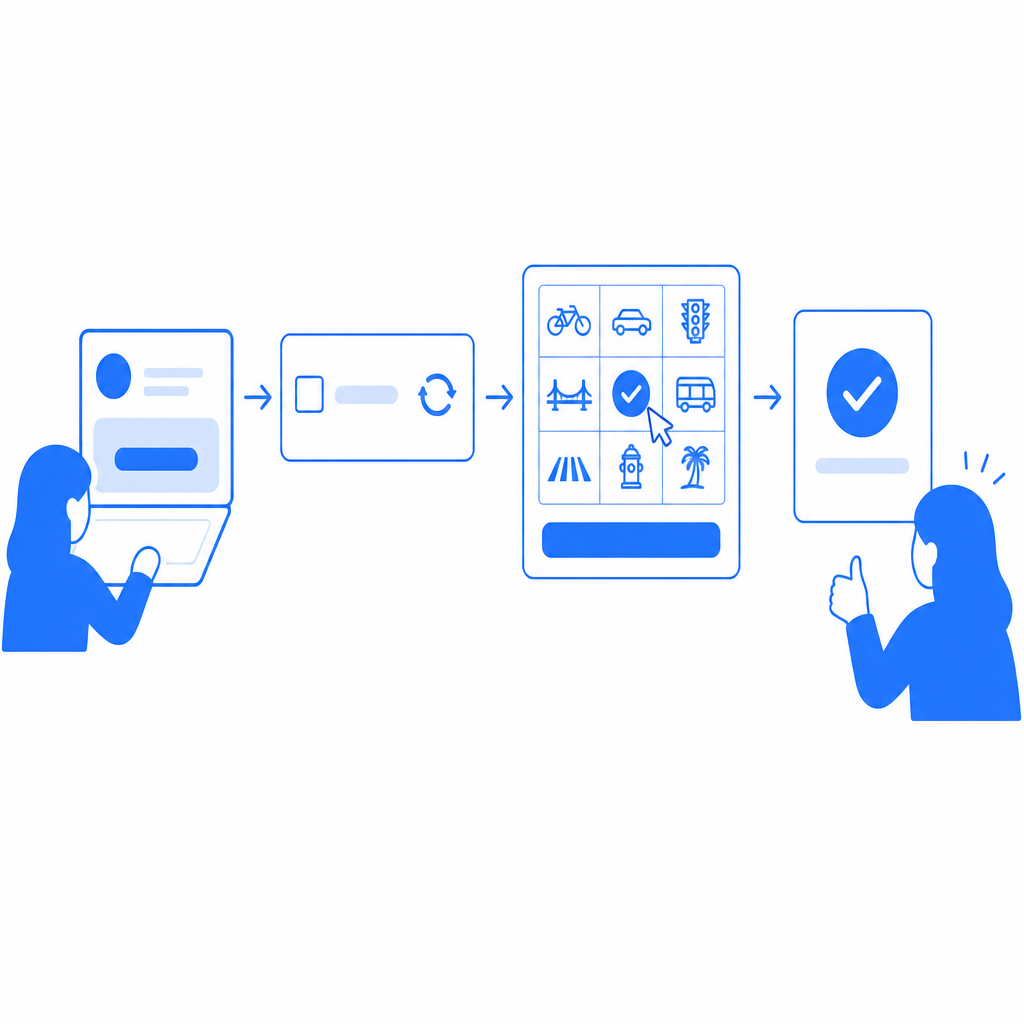

1. CAPTCHA Challenges

CAPTCHA (Completely Automated Public Turing test to tell Computers and Humans Apart) services such as reCAPTCHA, hCaptcha, and Cloudflare Turnstile present tasks designed to be easy for humans but hard for bots. Image recognition, checkbox challenges, and logic puzzles are typical, although some services, Turnstile among them, aim to verify most visitors without showing a puzzle. Continued innovation in CAPTCHA design helps address increasingly sophisticated bot behavior.

2. Behavioral Analysis

Monitoring mouse movements, typing patterns, navigation speed, and the timing of requests helps infer whether the user is human. Bots often generate repetitive or unnatural interaction patterns that detection algorithms can flag.
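One of the timing signals mentioned above can be sketched as a regularity check on the gaps between requests. This is a minimal illustration, not how any particular vendor does it: the function name `looksAutomated`, the minimum sample size, and the 0.1 threshold are all assumptions chosen for the example, and the timestamps are assumed to be sorted in ascending order.

```javascript
// Flags a request stream whose timing is suspiciously regular.
// `looksAutomated` and `cvThreshold` are hypothetical names for illustration.
function looksAutomated(timestampsMs, cvThreshold = 0.1) {
  // With fewer than five requests there is too little data to judge.
  if (timestampsMs.length < 5) return false;

  // Gaps between consecutive requests, in milliseconds.
  const gaps = [];
  for (let i = 1; i < timestampsMs.length; i++) {
    gaps.push(timestampsMs[i] - timestampsMs[i - 1]);
  }

  const mean = gaps.reduce((sum, g) => sum + g, 0) / gaps.length;
  // A zero (or negative) mean gap means the requests arrived in a burst.
  if (mean <= 0) return true;

  const variance =
    gaps.reduce((sum, g) => sum + (g - mean) ** 2, 0) / gaps.length;

  // Coefficient of variation: low values mean near-constant spacing.
  const cv = Math.sqrt(variance) / mean;
  return cv < cvThreshold;
}
```

Human traffic tends to have irregular gaps, so a single signal like this is best treated as one input among several rather than a verdict on its own.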

3. Rate Limiting and IP Reputation

Limiting the number of requests from a single IP address or range within a given timeframe slows down rapid scraping. Consulting IP reputation databases helps flag known bad actors and proxy services.
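As a rough sketch of per-IP rate limiting, the following keeps request timestamps in memory and rejects requests once an address exceeds its quota within a sliding window. The names `createRateLimiter`, `limit`, and `windowMs` are illustrative assumptions, and a production setup would more likely use a shared store than a single process's memory.

```javascript
// Minimal in-memory sliding-window rate limiter (illustrative sketch).
// `createRateLimiter`, `limit`, and `windowMs` are hypothetical names.
function createRateLimiter({ limit, windowMs }) {
  const hits = new Map(); // ip -> array of accepted request timestamps (ms)

  // Returns true if the request is allowed, false if the IP is over its limit.
  return function allow(ip, now = Date.now()) {
    const cutoff = now - windowMs;
    // Keep only timestamps that still fall inside the window.
    const recent = (hits.get(ip) || []).filter((t) => t > cutoff);

    if (recent.length >= limit) {
      hits.set(ip, recent);
      return false;
    }

    recent.push(now);
    hits.set(ip, recent);
    return true;
  };
}
```

A caller would create one limiter, for example `createRateLimiter({ limit: 60, windowMs: 60000 })`, and consult it at the start of each request handler.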

4. Browser Fingerprinting

Collecting details such as screen resolution, installed fonts, plugins, and canvas rendering output can help identify headless browsers or scripted tools masquerading as real users.
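On the server, attributes reported by the client can be reduced to a stable identifier and checked for common automation hints. The sketch below assumes the client has already sent an `attrs` object; the field names (`webdriver`, `userAgent`, `fonts`) and both function names are hypothetical, and the hints shown are simple examples that determined bots can evade.

```javascript
const { createHash } = require('node:crypto');

// Derives a stable identifier from client-reported attributes.
// `fingerprintId` is a hypothetical helper name.
function fingerprintId(attrs) {
  // Sort keys so the same attributes always serialize identically.
  const canonical = Object.keys(attrs)
    .sort()
    .map((key) => `${key}=${String(attrs[key])}`)
    .join('|');
  return createHash('sha256').update(canonical).digest('hex');
}

// Collects simple hints that the client may be an automated browser.
// The attribute names are assumptions about what the client script reports.
function headlessHints(attrs) {
  const hints = [];
  if (attrs.webdriver === true) {
    hints.push('navigator.webdriver is true');
  }
  if (/HeadlessChrome/.test(attrs.userAgent || '')) {
    hints.push('HeadlessChrome in user agent');
  }
  if (Array.isArray(attrs.fonts) && attrs.fonts.length === 0) {
    hints.push('no fonts reported');
  }
  return hints;
}
```

The identifier is useful for spotting the same client across many IP addresses, while the hints feed into a broader risk decision.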

5. JavaScript and Cookie Challenges

Bots that don’t execute JavaScript or cannot store/return cookies struggle to pass interactive tests, exposing their automation when challenges are implemented at the browser level.
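A cookie challenge of this kind can be sketched with a signed value that the page's JavaScript is expected to store and send back. In this minimal example the server issues a value bound to the client IP and an expiry time, then verifies it on a later request; a client that never runs the script never returns the cookie. `SECRET`, `issueChallenge`, and `verifyChallenge` are hypothetical names, and the five-minute lifetime is an arbitrary assumption.

```javascript
const { createHmac, timingSafeEqual } = require('node:crypto');

// Placeholder key for illustration; load a real secret from configuration.
const SECRET = 'replace-with-a-random-secret';

// HMAC-SHA256 signature of the payload, hex encoded.
function sign(payload) {
  return createHmac('sha256', SECRET).update(payload).digest('hex');
}

// Issues a challenge value bound to the client IP and an expiry time.
function issueChallenge(clientIp, now = Date.now(), ttlMs = 5 * 60 * 1000) {
  const expires = now + ttlMs;
  return `${expires}.${sign(`${clientIp}|${expires}`)}`;
}

// Verifies the cookie value that the client echoed back.
function verifyChallenge(cookieValue, clientIp, now = Date.now()) {
  const [expiresStr, signature] = String(cookieValue || '').split('.');
  const expires = Number(expiresStr);
  if (!signature || !Number.isFinite(expires) || expires < now) return false;

  const expected = Buffer.from(sign(`${clientIp}|${expires}`), 'hex');
  const given = Buffer.from(signature, 'hex');
  // Compare in constant time; the lengths must match before comparing.
  return given.length === expected.length && timingSafeEqual(given, expected);
}
```

Because the value is signed, a client cannot forge or extend it, but a bot that does execute JavaScript will still pass, which is why this check is usually combined with the other layers.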

How CaptchaLa Fits Into Anti Bot Web Scraping

CaptchaLa provides a flexible, privacy-conscious CAPTCHA and bot defense service designed to integrate across multiple platforms and programming environments. Key benefits relevant for anti bot web scraping include:

- Native SDK support for Web (JS, React, Vue), mobile (iOS, Android, Flutter), and desktop apps (Electron)

- Multi-language UI for regional deployments

- Server-side token validation endpoints, so tokens are verified on your backend rather than trusted from the client

- Lightweight JavaScript loader to preserve site performance

- A free tier allowing up to 1000 validations per month, scaling to business needs

Unlike some competitors, CaptchaLa emphasizes first-party data usage and transparency without relying on extensive user tracking. This approach helps maintain compliance with privacy regulations while still providing reliable bot detection capabilities.

| Feature | CaptchaLa | reCAPTCHA | hCaptcha | Cloudflare Turnstile |
|---|---|---|---|---|
| SDKs / Language Support | Web, iOS, Android, Flutter | Web only | Web only | Web only |
| Privacy Focus | First-party data only | Collects user data | Mix of first/third-party | Minimal user data |
| UI Languages | 8 | Multiple | Multiple | Multiple |
| Validation API | Yes | Yes | Yes | Yes |
| Pricing | Free & scalable tiers | Free with limits | Free & paid tiers | Free |
| Server SDKs | PHP, Go | Limited | Limited | Limited |

This table highlights the broad platform support and privacy-conscious model that CaptchaLa offers compared to popular providers.

Implementing Anti Bot Measures Without Hurting UX

A balanced anti bot system should minimize friction for legitimate users while effectively weeding out bots. Best practices include:

Adaptive Challenge Levels

Assign challenges dynamically depending on risk signals rather than presenting CAPTCHAs to every visitor. For example, a visitor from a new device with suspicious behavior might receive a challenge, while returning users bypass it.

Invisible or Passive Checks

Use browser fingerprinting, behavior monitoring, and JavaScript tests that don’t interrupt the natural flow for humans.

Fallback and Recovery Options

Allow users to contact support or retry challenges if they are mistakenly blocked.

Monitor and Tune Continuously

Regularly analyze logs and success rates to adjust thresholds and minimize false positives.

Integrate Server-Side Validation

Validations like those provided by CaptchaLa’s POST endpoints help verify tokens beyond the client side, providing stronger assurance.

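    // Example request to the CaptchaLa verification API (Node.js).
    // Sends the token and client IP to verify the CAPTCHA response.
    //
    // Node.js 18+ provides a global fetch. On older versions, install
    // node-fetch v2 and add: const fetch = require('node-fetch');
    // (node-fetch v3 is ESM-only and is not meant to be loaded with require).
    async function validateCaptcha(pass_token, client_ip) {
      const response = await fetch('https://apiv1.captcha.la/v1/validate', {
        method: 'POST',
        headers: {
          'Content-Type': 'application/json',
          'X-App-Key': 'your-app-key',       // placeholder credentials
          'X-App-Secret': 'your-app-secret'  // keep the secret on the server
        },
        body: JSON.stringify({ pass_token, client_ip })
      });

      // Treat HTTP-level failures as a failed validation.
      if (!response.ok) return false;

      const result = await response.json();
      return result.success === true;
    }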
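The adaptive challenge idea from the best practices above can be sketched as a small scoring function that decides whether a request is allowed, challenged, or blocked. The signal names, weights, and thresholds here are assumptions made up for illustration and would need tuning against real traffic.

```javascript
// Maps risk signals to an action: 'allow', 'challenge', or 'block'.
// `chooseAction`, the signal names, and all weights are hypothetical.
function chooseAction(signals) {
  let score = 0;
  if (signals.newDevice) score += 1;           // never seen this device before
  if (signals.suspiciousBehavior) score += 2;  // e.g. unnaturally regular timing
  if (signals.badIpReputation) score += 2;     // IP listed by a reputation source
  if (signals.rateLimited) score += 2;         // recently exceeded a rate limit

  if (score >= 5) return 'block';
  if (score >= 3) return 'challenge';
  return 'allow';
}
```

With these weights, a returning user with no risk signals passes straight through, a new device showing suspicious behavior receives a challenge, and only a combination of several strong signals results in a block. A request that receives a challenge would then have its token checked on the server before proceeding.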

Conclusion

Anti bot web scraping solutions are vital for securing your website against unauthorized automated access that can steal data, degrade performance, or disrupt service. By combining CAPTCHAs, behavior analysis, fingerprinting, and smart rate limiting, you can build a layered defense tailored to your application’s specific needs.

CaptchaLa offers a modern approach with broad SDK support, privacy awareness, and flexible pricing tiers, from a free tier for low volume to plans for million-request scale. Its developer-friendly APIs and multi-platform integration options make it a strong candidate alongside established options like reCAPTCHA, hCaptcha, or Cloudflare Turnstile.

If you want to explore how to get started or scale your anti bot web scraping defenses with CaptchaLa, check out the documentation or review the pricing plans. Protect your content and user experience with smarter bot defense today.