If your anti bot update failed, the problem is usually not the “bot defense” itself — it is a mismatch between the client, the loader, and your server validation flow. In practice, that error means your app could not refresh, exchange, or validate a protection token cleanly, so the request path got interrupted before the challenge state could be trusted.

That sounds broad because it is broad. The good news is that most failures come from a small set of causes: stale scripts, blocked network calls, incorrect keys, expired tokens, or server-side validation errors. If you approach it as a tracing problem instead of a CAPTCHA problem, you can usually isolate the root cause in a few minutes.

What “anti bot update failed” usually means

The phrase can point to different breakpoints depending on how your anti-bot system is wired. Some products fail during widget or loader initialization. Others fail when they try to fetch a challenge token. Still others fail only on the backend, when the app sends a pass token but the server rejects it.

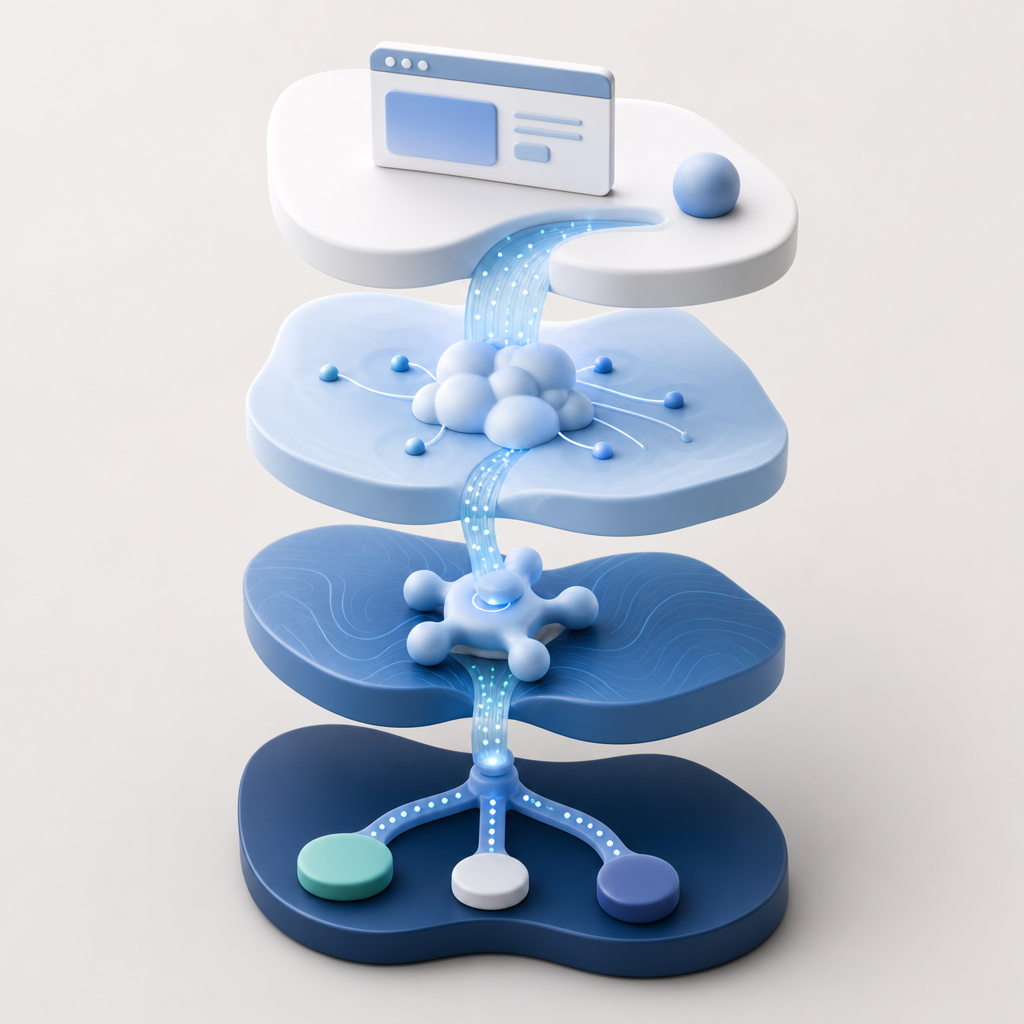

A useful way to think about it is to separate the workflow into three stages:

- Loader stage — the browser loads the anti-bot script or SDK.

- Challenge stage — the client obtains a pass token or challenge result.

- Validation stage — the server confirms the token using your secret credentials.

If stage 1 fails, you will often see network or script errors. If stage 2 fails, the user may never see a usable challenge result. If stage 3 fails, the client may look fine while the backend rejects the request.
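To make that triage concrete, here is a minimal sketch that maps three observations to the first stage that broke. The `Stage` enum and the `first_failing_stage` helper are hypothetical names for illustration; how you collect the three booleans (dev tools, client logs, server logs) is up to your setup.

```python
from enum import Enum
from typing import Optional


class Stage(Enum):
    LOADER = "loader"          # stage 1: script or SDK load
    CHALLENGE = "challenge"    # stage 2: client obtains a pass token
    VALIDATION = "validation"  # stage 3: server confirms the token


def first_failing_stage(
    loader_loaded: bool, token_obtained: bool, server_accepted: bool
) -> Optional[Stage]:
    """Return the earliest stage that failed, or None if all three passed."""
    if not loader_loaded:
        return Stage.LOADER
    if not token_obtained:
        return Stage.CHALLENGE
    if not server_accepted:
        return Stage.VALIDATION
    return None
```

Checking in this order matters: a blocked loader also means no token and no validation, so only the earliest failure is worth chasing.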

This distinction matters because teams sometimes “fix” the visible symptom by reloading the page or lowering friction, while the real issue is an API credential mismatch or a blocked CDN response.

The most common causes, ranked by likelihood

Here is the short list I would check first when “anti bot update failed” appears in logs or user reports.

| Likely cause | What you’ll see | What to check |
|---|---|---|
| Loader blocked | Script never initializes | CSP, ad blockers, network errors, CDN reachability |
| Bad app credentials | Validation fails server-side | X-App-Key, X-App-Secret, environment variables |
| Token expired or reused | One request works, later requests fail | Token TTL, replay protection, session handling |
| Client IP mismatch | Validation rejected only for some users | Proxy headers, edge routing, extracted client IP |
| Version mismatch | New client talks to old server logic | SDK version, API contract, rollout order |
| Region/network filtering | Works on one network, fails on another | Firewall rules, DNS, enterprise proxy policies |

If you are using CaptchaLa, the validation path is intentionally explicit: your server validates by POSTing to https://apiv1.captcha.la/v1/validate with a body containing pass_token and client_ip, plus X-App-Key and X-App-Secret. That makes debugging easier because the server response becomes the source of truth.
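As a rough sketch of that request, the snippet below builds (but does not send) the validation call using only the Python standard library. The URL, field names, and header names come from the description above; the JSON encoding of the body and the `build_validate_request` helper name are my assumptions, so check them against the official docs before relying on this.

```python
import json
import urllib.request

VALIDATE_URL = "https://apiv1.captcha.la/v1/validate"


def build_validate_request(
    pass_token: str, client_ip: str, app_key: str, app_secret: str
) -> urllib.request.Request:
    """Build the server-side validation request without sending it."""
    # Assumption: the body is JSON-encoded. The article only names the two fields.
    body = json.dumps({"pass_token": pass_token, "client_ip": client_ip}).encode("utf-8")
    return urllib.request.Request(
        VALIDATE_URL,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "X-App-Key": app_key,
            "X-App-Secret": app_secret,
        },
    )
```

To send it, pass the result to `urllib.request.urlopen(req, timeout=5)` and log the status code and raw response body. The response shape is not described here, so inspect what comes back rather than assuming particular fields.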

A practical debugging sequence

Use this sequence before changing product settings or swapping providers:

Open the browser dev tools

- Check whether `https://cdn.captcha-cdn.net/captchala-loader.js` loads successfully.

- Confirm there are no CSP violations, 403s, 404s, or mixed-content issues.

Inspect the client token flow

- Confirm the page is actually requesting a challenge update.

- Verify the token is present in the expected field or callback payload.

- Look for session resets, duplicate submissions, or navigation events that discard the token.

Check the backend validation request

- Confirm the server is sending the right `X-App-Key` and `X-App-Secret`.

- Make sure the `client_ip` value is the real user IP, not a proxy hop unless that is what your setup expects.

- Check whether the request body is exactly `{pass_token, client_ip}`.
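For the client IP check, one common approach is to read `X-Forwarded-For` only when the direct peer is a proxy you control, then walk the chain from right to left until you reach an address that is not yours. The sketch below assumes that model; `extract_client_ip` and the `trusted_proxies` set are hypothetical, and your edge or framework may already provide an equivalent.

```python
import ipaddress
from typing import Optional, Set


def extract_client_ip(
    remote_addr: str, forwarded_for: Optional[str], trusted_proxies: Set[str]
) -> str:
    """Pick the address to send as client_ip.

    remote_addr is the direct TCP peer; forwarded_for is the raw
    X-Forwarded-For header value, if any.
    """
    # Only trust the header when the request came through one of our own proxies.
    if not forwarded_for or remote_addr not in trusted_proxies:
        return remote_addr
    hops = [h.strip() for h in forwarded_for.split(",") if h.strip()]
    # Walk right to left: the rightmost entries were appended by our proxies.
    for hop in reversed(hops):
        try:
            ipaddress.ip_address(hop)
        except ValueError:
            break  # malformed entry, stop trusting the rest of the chain
        if hop not in trusted_proxies:
            return hop
    return remote_addr
```

Taking the leftmost entry instead is a frequent mistake, because a client can put any value it likes at the front of the header.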

Compare working and failing environments

- Test production vs. staging.

- Test mobile vs. desktop.

- Test on a corporate network vs. residential network.

Look at timing

- If the token expires too quickly, slow devices or page transitions may trigger the failure.

- If the server validates too late, the token may be stale by the time it arrives.
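If you record when the client received the token, a small age check before validation makes timing failures visible in your own logs. This is a sketch under that assumption: the helper names are hypothetical, and the TTL you pass in has to come from your provider's documentation, since no value is given here.

```python
import time
from typing import Optional


def token_age_seconds(issued_at: float, now: Optional[float] = None) -> float:
    """Seconds since the token was issued (clamped at zero for clock skew)."""
    current = time.time() if now is None else now
    return max(0.0, current - issued_at)


def is_probably_stale(
    issued_at: float,
    ttl_seconds: float,
    safety_margin: float = 5.0,
    now: Optional[float] = None,
) -> bool:
    """True if the token is at or near its TTL and validation is likely to fail."""
    # safety_margin leaves room for the network round trip to the validation API.
    return token_age_seconds(issued_at, now) >= ttl_seconds - safety_margin
```

Logging the age alongside each validation result lets you tell an expired token apart from a credential problem without guessing.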

The fastest teams I’ve worked with treat these as observable checkpoints, not mysteries.

How to verify the integration end to end

A clean integration usually has a visible loader, a successful client event, and a successful server validation response. If any one of those is missing, the issue is not “anti bot update failed” in the abstract — it is one missing hop in the chain.

If you are wiring this up with CaptchaLa, the server-side flow is straightforward:

1. Browser loads the anti-bot script from the CDN

2. Client completes the challenge and receives pass_token

3. Your backend receives pass_token and client_ip

4. Backend POSTs to /v1/validate with X-App-Key and X-App-Secret

5. Backend allows or denies the original action based on validation result

For teams using native SDKs, consistency matters. CaptchaLa supports Web SDKs for JS, Vue, and React, plus iOS, Android, Flutter, and Electron. That broad coverage helps when the same product logic has to behave the same way on mobile and desktop. It also has 8 UI languages, which is useful if the failure only appears in one locale or device class.

A note on implementation hygiene: if you are rotating keys, deploying a new environment, or adding a proxy, update the client and server sides together. Half-migrated configurations are a classic source of “it worked yesterday” failures.

Server-side specifics to confirm

If your validation endpoint is not behaving as expected, verify these details exactly:

- Method: `POST`

- URL: `https://apiv1.captcha.la/v1/validate`

- Body fields: `pass_token`, `client_ip`

- Headers: `X-App-Key`, `X-App-Secret`

If you issue a server-side challenge token, the endpoint is:

POST https://apiv1.captcha.la/v1/server/challenge/issue

Those details matter more than people expect. A typo in a header name, a stale secret in one environment, or a malformed client IP can look like a platform failure when it is really an integration mismatch.
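A cheap way to catch those mistakes is a preflight check that runs before the request leaves your server. The sketch below is a hypothetical helper (`preflight_problems` is not part of any SDK) that compares outgoing headers and body against the field names listed above and confirms the IP parses.

```python
import ipaddress
from typing import Dict, List

REQUIRED_HEADERS = ("X-App-Key", "X-App-Secret")
REQUIRED_BODY_FIELDS = ("pass_token", "client_ip")


def preflight_problems(headers: Dict[str, str], body: Dict[str, str]) -> List[str]:
    """Return human-readable problems found in an outgoing validation request."""
    problems = []
    # HTTP header names are case-insensitive, so compare in lower case.
    lowered = {name.lower(): value for name, value in headers.items()}
    for name in REQUIRED_HEADERS:
        if not (lowered.get(name.lower()) or "").strip():
            problems.append(f"missing or empty header: {name}")
    for field in REQUIRED_BODY_FIELDS:
        if not body.get(field):
            problems.append(f"missing or empty body field: {field}")
    extra = sorted(set(body) - set(REQUIRED_BODY_FIELDS))
    if extra:
        problems.append(f"unexpected body fields: {', '.join(extra)}")
    ip = body.get("client_ip")
    if ip:
        try:
            ipaddress.ip_address(ip)
        except ValueError:
            problems.append(f"client_ip is not a valid IP address: {ip!r}")
    return problems
```

An empty list means the request at least has the right shape. It says nothing about whether the credentials themselves are correct, which only the server response can confirm.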

Comparing common anti-bot approaches without the hype

Different tools fail in different ways, and that affects debugging.

- reCAPTCHA: widely deployed, often familiar to users, but implementation details can vary significantly across versions and page setups.

- hCaptcha: commonly chosen for privacy-conscious flows or alternate UX preferences, with its own validation and challenge behavior.

- Cloudflare Turnstile: often attractive when you already use Cloudflare edge services, though the operational model is tied closely to that ecosystem.

- CaptchaLa: useful when you want a first-party data approach and a clearly documented validate-and-issue API flow, with server SDKs like `captchala-php` and `captchala-go`.

The point is not that one is universally easier. The point is that your debugging process should match the product’s architecture. A CDN-delivered client widget plus backend validation needs a different checklist than a deeply integrated edge platform.

If your team is budgeting or planning rollout scope, CaptchaLa’s tiers are also straightforward: free tier up to 1000 requests per month, Pro at 50K–200K, and Business at 1M. That can help when you want to reproduce a failure at low volume before moving to broader traffic.

How to reduce repeat failures

Once you fix the immediate issue, add guardrails so the same class of bug does not return.

Log validation outcomes

- Track response codes, token age, and validation latency.

- Keep logs structured so you can filter by environment.
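One way to keep those logs filterable is to emit a single JSON line per validation attempt. This is a minimal sketch using the standard `logging` and `json` modules; the logger name and field names are illustrative assumptions, not a required schema.

```python
import json
import logging

logger = logging.getLogger("antibot.validation")


def validation_log_line(
    environment: str,
    status_code: int,
    token_age_s: float,
    latency_ms: float,
    accepted: bool,
) -> str:
    """Serialize one validation outcome as a JSON line."""
    # Deliberately excludes the pass token and secrets: log outcomes, not credentials.
    record = {
        "event": "antibot_validation",
        "environment": environment,
        "status_code": status_code,
        "token_age_s": round(token_age_s, 3),
        "latency_ms": round(latency_ms, 1),
        "accepted": accepted,
    }
    return json.dumps(record, sort_keys=True)


def log_validation(*args, **kwargs) -> None:
    """Write the JSON line through the standard logger."""
    logger.info(validation_log_line(*args, **kwargs))
```

With every record carrying `environment`, a staging-only spike in rejections stands out immediately instead of being buried in production noise.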

Separate config by environment

- Use distinct keys for staging and production.

- Avoid copying secrets manually between systems.
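A simple pattern for this is to load credentials from environment variables whose names include the environment, and to fail loudly at startup when one is missing. The variable naming scheme (`ANTIBOT_<ENV>_APP_KEY`) and the `load_config` helper below are hypothetical; adapt them to whatever secret store you already use.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class AntiBotConfig:
    environment: str
    app_key: str
    app_secret: str


def load_config(
    environment: str, env: Optional[Mapping[str, str]] = None
) -> AntiBotConfig:
    """Load the key pair for one environment, refusing to start if it is incomplete."""
    source = os.environ if env is None else env
    prefix = f"ANTIBOT_{environment.upper()}_"
    app_key = source.get(prefix + "APP_KEY", "")
    app_secret = source.get(prefix + "APP_SECRET", "")
    if not app_key or not app_secret:
        raise RuntimeError(f"missing {prefix}APP_KEY or {prefix}APP_SECRET")
    return AntiBotConfig(environment, app_key, app_secret)
```

Failing at startup is the point: a missing staging secret should stop the deploy, not surface later as a validation error in front of users.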

Monitor script delivery

- Alert on loader failures or sudden drops in challenge completions.

- Check CDN availability and CSP changes after each deploy.
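For the alerting side, a relative-drop check against a recent baseline is often enough to start with. The sketch below assumes you already count loader loads and challenge completions per time window; the function names and the 30% default threshold are placeholders to tune, not recommendations.

```python
def completion_rate(loads: int, completions: int) -> float:
    """Fraction of loader loads that ended in a completed challenge."""
    if loads <= 0:
        return 0.0
    return completions / loads


def should_alert(
    current_rate: float, baseline_rate: float, max_relative_drop: float = 0.3
) -> bool:
    """True if the completion rate fell by more than max_relative_drop vs. baseline."""
    if baseline_rate <= 0:
        return False  # no meaningful baseline yet
    return (baseline_rate - current_rate) / baseline_rate > max_relative_drop
```

Running this right after each deploy catches the case where a CSP change silently blocks the loader for a subset of users.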

Document the expected flow

- Your frontend team should know how the token is generated.

- Your backend team should know exactly which fields are required for validation.

Test edge cases

- Slow connections

- Browser refresh during challenge

- Mobile app backgrounding

- Proxy or VPN traffic

Keep the validation and SDK references from the docs close to your runbook so engineers do not have to infer the flow from scattered code. And if you need to compare plan capacity while you debug load-related behavior, the pricing page is a quick place to check fit without changing your integration.

Where to go next: review the implementation steps in the docs, then confirm your environment settings and validation flow against them. If you are still seeing anti bot update failed after that, treat it as a traceability issue, not a mystery, and work the path from loader to token to server response.