An anti bot panel should help you detect automation, tune defenses, and verify risk without turning every user journey into a friction trap. At minimum, it needs clear signals, policy controls, challenge outcomes, and enough audit detail to explain why a request was allowed or blocked.

If you’re evaluating one, the real question is not “does it show traffic?” but “can my team confidently act on bot behavior with it?” That means the panel should support operational decisions: which flows are under attack, which rules are working, where legitimate users are getting slowed down, and what to change next.

What an anti bot panel is for

A good anti bot panel is less like a dashboard and more like a control room. It should centralize the things defenders need to manage automated traffic across login, signup, checkout, password reset, and API endpoints.

At a practical level, the panel should help you answer five questions fast:

- Where is automation hitting hardest?

- Which endpoints are being abused repeatedly?

- Are challenge outcomes changing after a rule update?

- Which clients, geos, or ASNs need closer review?

- Are real users being challenged too often?

That last point matters more than many teams expect. Anti-bot systems fail when they treat every spike as hostile, or when they hide the tradeoff between protection and friction. Your panel should show both sides of the equation.

For teams comparing platforms like reCAPTCHA, hCaptcha, or Cloudflare Turnstile, the panel experience can be a deciding factor. The underlying challenge model matters, but so does visibility into outcomes, logs, and policy knobs. If the panel is opaque, tuning becomes guesswork.

Core controls the panel should expose

The best panels are opinionated in the right places. They should give you enough control to respond to abuse patterns without forcing you into custom code for every adjustment.

1. Policy configuration

Look for controls that let you define how traffic is handled by route, session state, or risk level. Common controls include:

- allow, challenge, or block actions

- per-endpoint policies

- sensitivity thresholds

- IP, ASN, or country-based rules

- escalation rules for repeated failures

A practical panel should make these settings readable at a glance, with clear defaults and a simple audit trail when someone changes them.
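
As a rough illustration of what such settings boil down to, here is a minimal sketch of per-endpoint policies with allow, challenge, and block actions plus escalation on repeated failures. Everything in it is an assumption made for the example: the `RoutePolicy` fields, the 0-to-1 risk score, the thresholds, and the route names are hypothetical and not taken from any particular product.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    CHALLENGE = "challenge"
    BLOCK = "block"


@dataclass(frozen=True)
class RoutePolicy:
    """Hypothetical per-endpoint policy; field names are illustrative only."""
    challenge_at: float    # risk score (assumed 0..1) at or above which we challenge
    block_at: float        # risk score at or above which we block outright
    max_failures: int = 3  # escalate to block after this many failed challenges


# Illustrative values; a real panel would store, version, and audit these.
POLICIES = {
    "/login": RoutePolicy(challenge_at=0.4, block_at=0.9),
    "/signup": RoutePolicy(challenge_at=0.3, block_at=0.8, max_failures=2),
}
DEFAULT_POLICY = RoutePolicy(challenge_at=0.6, block_at=0.95)


def decide(route: str, risk: float, recent_failures: int = 0) -> Action:
    """Pick an action for one request from its route, risk score, and failure count."""
    policy = POLICIES.get(route, DEFAULT_POLICY)
    # Escalation rule: repeated failed challenges are treated like high risk.
    if recent_failures >= policy.max_failures or risk >= policy.block_at:
        return Action.BLOCK
    if risk >= policy.challenge_at:
        return Action.CHALLENGE
    return Action.ALLOW
```

Keeping the thresholds in data rather than in branching logic is what lets a panel change them without a redeploy, and it makes each change easy to record in an audit trail.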

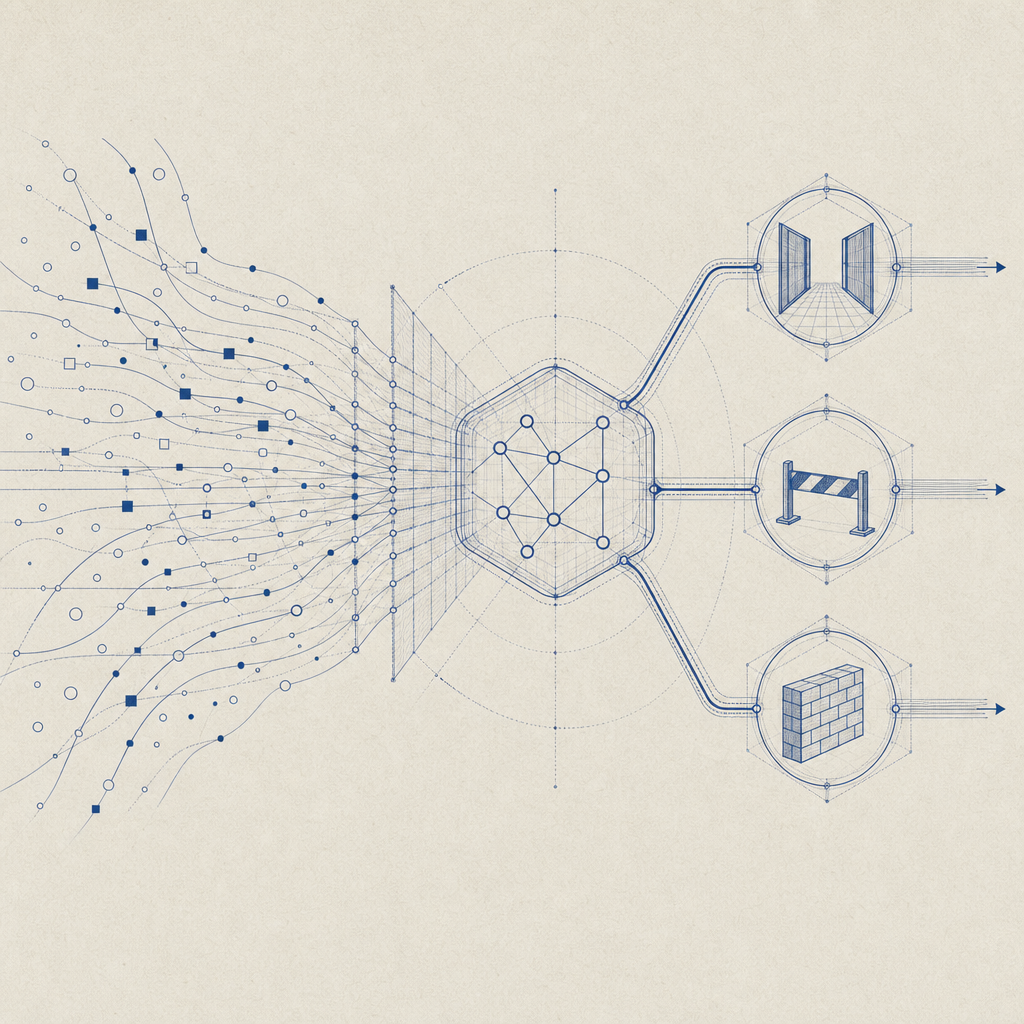

2. Challenge and validation visibility

You need to see whether the challenge was presented, solved, skipped, or failed. Without that, it’s hard to know if the system is catching bots or merely adding noise.

A typical validation flow looks like this:

1. Client requests a protected action

2. Panel rules decide whether to issue a challenge

3. Client receives a pass token after successful interaction

4. Server validates the token with request context

5. The request is allowed, challenged again, or denied

On the server side, a clean validation API matters. CaptchaLa, for example, documents validation via POST https://apiv1.captcha.la/v1/validate with pass_token and client_ip, authenticated using X-App-Key and X-App-Secret. That kind of straightforward server check is important because the panel should reflect what the backend actually verifies, not just what the browser saw.
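
The server-side check described above might look roughly like the sketch below, using only the Python standard library. The URL, the `pass_token` and `client_ip` fields, and the two header names come from the text; the JSON body encoding, the function names, and the timeout are assumptions, and the response format is not described here, so the sketch returns the raw status and body without interpreting them. Check the official docs before relying on any of it.

```python
import json
import urllib.request

VALIDATE_URL = "https://apiv1.captcha.la/v1/validate"


def build_validate_request(pass_token, client_ip, app_key, app_secret):
    """Build (but do not send) the validation request."""
    # ASSUMPTION: the endpoint accepts a JSON body; the encoding is not
    # specified in this article, so confirm it against the documentation.
    body = json.dumps({"pass_token": pass_token, "client_ip": client_ip}).encode("utf-8")
    return urllib.request.Request(
        VALIDATE_URL,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "X-App-Key": app_key,
            "X-App-Secret": app_secret,
        },
    )


def validate(pass_token, client_ip, app_key, app_secret, timeout=5.0):
    """Send the request and return (HTTP status, raw response body).

    urlopen raises urllib.error.HTTPError for 4xx/5xx responses, so callers
    should catch that and decide whether to deny or re-challenge.
    """
    request = build_validate_request(pass_token, client_ip, app_key, app_secret)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status, response.read().decode("utf-8")
```

Separating request construction from sending keeps the credentials and payload easy to unit test without touching the network.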

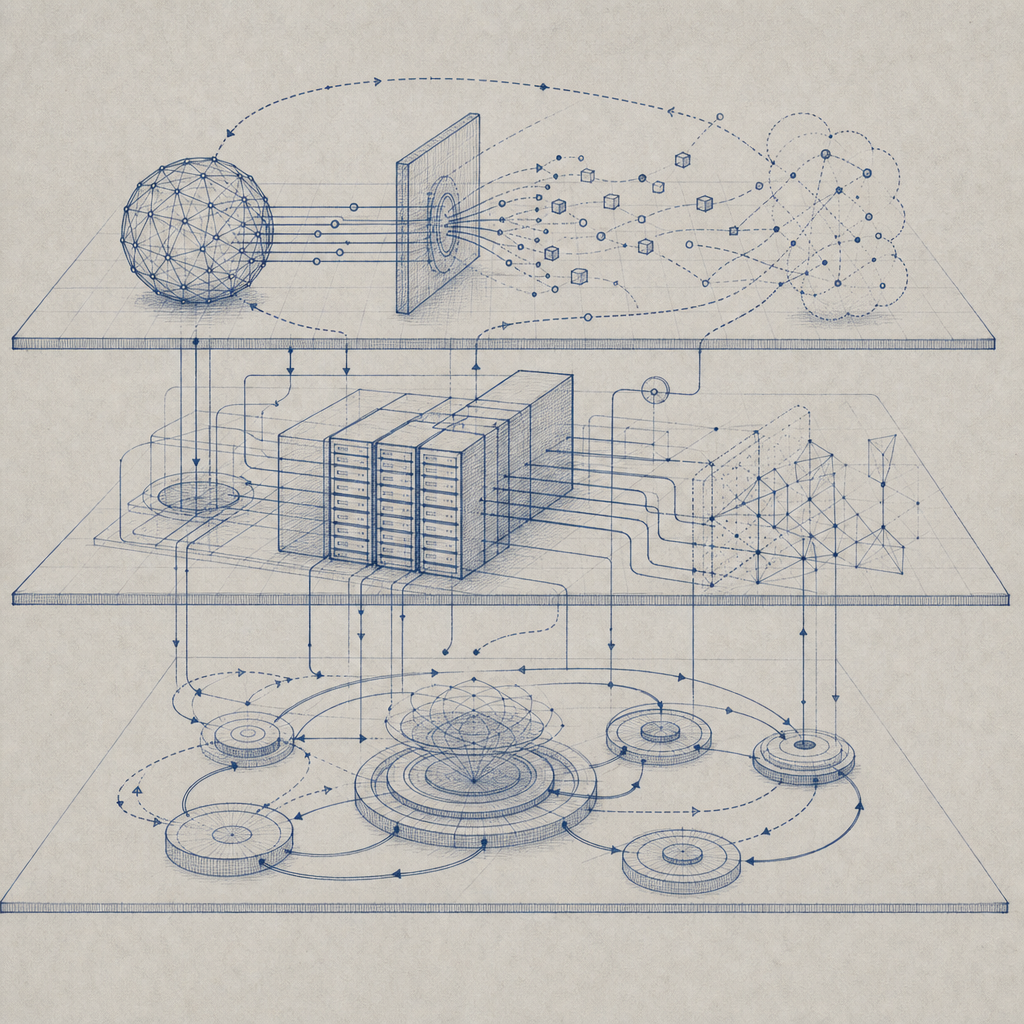

3. Environment and integration controls

A useful panel should also show where your protections are deployed and how they’re integrated. That includes:

- web apps and SPA frameworks

- mobile apps

- desktop clients

- server-side checks

- loader/script status

- versioned SDK usage

CaptchaLa’s current integrations are a good example of what teams often need: native SDKs for Web (JS, Vue, React), iOS, Android, Flutter, and Electron, plus server SDKs like captchala-php and captchala-go. It also supports 8 UI languages, which matters when your user base is multilingual and you don’t want challenge friction caused by localization gaps.

Metrics that belong in the panel

A panel is only useful if it shows the right metrics. Raw request counts are not enough. You want operational metrics that help distinguish attack traffic from normal use.

Key metrics to track

| Metric | Why it matters | What to look for |
|---|---|---|
| Challenge rate | Shows how often users are being gated | Sudden jumps after a rule change |
| Solve success rate | Indicates whether legitimate users can complete challenges | Drops by browser, device, or locale |
| Block rate | Measures how much abusive traffic is stopped | Spikes on signup or login routes |
| Validation latency | Affects user experience and backend timing | Slow token checks during traffic bursts |
| Retry frequency | Can reveal scripted abuse or fragile UX | Repeated failures from same IP/session |
| False positive indicators | Helps protect real users | Elevated friction on trusted traffic |
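
To make the first three rows concrete, here is a minimal sketch of how those rates could be derived from request-level events. The event shape and the outcome labels (`allowed`, `challenge_passed`, `challenge_failed`, `blocked`) are hypothetical; a real panel will have its own schema and may define the denominators differently.

```python
from collections import Counter


def summarize(events):
    """Compute challenge, solve-success, and block rates from outcome events.

    Each event is assumed to be a dict with an "outcome" key holding one of:
    "allowed", "challenge_passed", "challenge_failed", "blocked".
    """
    counts = Counter(event["outcome"] for event in events)
    total = sum(counts.values())
    challenged = counts["challenge_passed"] + counts["challenge_failed"]

    def ratio(numerator, denominator):
        # Avoid division by zero on empty windows.
        return numerator / denominator if denominator else 0.0

    return {
        "challenge_rate": ratio(challenged, total),
        "solve_success_rate": ratio(counts["challenge_passed"], challenged),
        "block_rate": ratio(counts["blocked"], total),
    }
```

Note that solve success is measured against challenged requests only, not all traffic; mixing the two denominators is an easy way to hide a friction problem.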

The panel should also surface trends over time, not just current state. Day-over-day and week-over-week comparisons are especially useful when a campaign or scraper changes tactics. If your traffic is seasonal, the panel should make that obvious rather than treating normal bursts as anomalies.
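
A week-over-week comparison can be as simple as the sketch below, which assumes you already have a list of daily totals (for example, daily block counts) ordered oldest first. The function name and input shape are illustrative only.

```python
def week_over_week_change(daily_counts):
    """Return the fractional change between the last 7 days and the 7 before.

    daily_counts is an oldest-first list of daily totals. Returns None when
    the previous week is zero, since the change is undefined in that case.
    """
    if len(daily_counts) < 14:
        raise ValueError("need at least 14 days of data")
    current = sum(daily_counts[-7:])
    previous = sum(daily_counts[-14:-7])
    if previous == 0:
        return None
    return (current - previous) / previous
```

Comparing whole weeks rather than adjacent days smooths out ordinary weekday and weekend swings, so a regular Monday burst is less likely to look like an attack.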

Another factor worth weighing is how much the tool relies on first-party data. Tools that work from your own request context, rather than broad third-party tracking, can give you a cleaner operational picture. That matters for privacy-conscious teams, and it fits how many modern security programs prefer to operate.

How defenders should evaluate a panel

When comparing anti-bot platforms, try to evaluate the panel as if you were the person on call during an incident. A nice UI is helpful, but operational clarity wins.

A simple evaluation checklist

- Can I see which routes are under attack within 30 seconds?

- Can I tell whether challenges are being solved by real users?

- Can I change a rule without redeploying the app?

- Can I audit who changed what, and when?

- Can I correlate server validation failures with client-side challenges?

- Can I segment traffic by device, locale, and trust level?

- Can I export data for incident review or compliance work?

If the answer is “yes” to most of those, you’re closer to a usable control plane than a decorative dashboard.

For implementation details, teams often need both client and server guidance in one place. That’s where docs matter as much as the panel itself. And if you’re estimating rollout cost or traffic tiers, a transparent pricing page is part of the decision too, especially when you’re planning for growth from free-tier volumes up into sustained production usage.

A note on the challenge lifecycle

A good anti bot panel should map cleanly to the actual lifecycle:

- loader delivery

- challenge presentation

- token issuance

- server validation

- policy feedback

For example, if the client loads the challenge script from https://cdn.captcha-cdn.net/captchala-loader.js, your panel should still let you reason about load health and challenge success without conflating the CDN step with validation success. That distinction helps when debugging whether a failure is network-related, integration-related, or policy-related.
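
One way to keep those stages from blurring together is to record which ones completed for a given attempt and report the first one that did not. The sketch below assumes such per-attempt records exist; the `Stage` enum mirrors the lifecycle list above, while the function name and input shape are hypothetical.

```python
from enum import Enum


class Stage(Enum):
    LOADER = "loader delivery"
    CHALLENGE = "challenge presentation"
    TOKEN = "token issuance"
    VALIDATION = "server validation"
    POLICY = "policy feedback"


# Enum members iterate in definition order, which matches the lifecycle order.
LIFECYCLE = list(Stage)


def first_failed_stage(completed):
    """Return the earliest lifecycle stage missing from `completed`, or None.

    `completed` is a set of Stage values observed for one attempt.
    """
    for stage in LIFECYCLE:
        if stage not in completed:
            return stage
    return None
```

An attempt that stops at `Stage.LOADER` points toward a network or CDN problem, while one that stops at `Stage.VALIDATION` points toward the backend integration or the policy itself.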

Choosing the right fit for your traffic

The right anti bot panel depends on your risk profile. A consumer signup flow, a fintech login page, and a high-volume API gateway all need different levels of visibility and control. That’s why platforms differ even when they solve the same broad problem.

Some teams prefer lightweight friction and broad ecosystem familiarity; others need more explicit server validation and tighter policy control. reCAPTCHA, hCaptcha, and Cloudflare Turnstile each have their own operating model and tradeoffs. The better choice is the one that fits your app architecture, team workflow, and tolerance for false positives.

If you want a panel that aligns with a defend-first workflow, look for:

- clear request and validation logs

- route-level policy controls

- SDK coverage for your stack

- straightforward backend verification

- multilingual UX support

- pricing that matches your traffic bands

CaptchaLa’s published tiers, for instance, start with a free tier for 1,000 monthly requests and scale into Pro ranges around 50K-200K and Business at 1M. Those numbers are useful not because price alone decides the tool, but because they help teams plan how the panel will fit both launch traffic and sustained abuse pressure.

Where to go next: if you’re designing or evaluating your own anti bot panel, start with the docs, then compare rollout needs against pricing.