An anti-bot engineer’s job is to make automation expensive, noisy, and unreliable while keeping legitimate users moving. That means spotting scripted behavior, designing challenges and checks that fit the risk, and continuously tuning defenses so that fake signups, credential attacks on logins, scraping, and checkout abuse do not become a business problem.

The role is less about “blocking bots” and more about decision-making under uncertainty. You are balancing false positives, user friction, privacy constraints, and operational cost. A good anti-bot engineer treats bot traffic as a changing adversary, not as a problem that a fixed rule set can solve.

The core job: detect, decide, and reduce risk

At a practical level, an anti-bot engineer builds and operates a pipeline that answers one question: should this request be trusted enough to proceed?

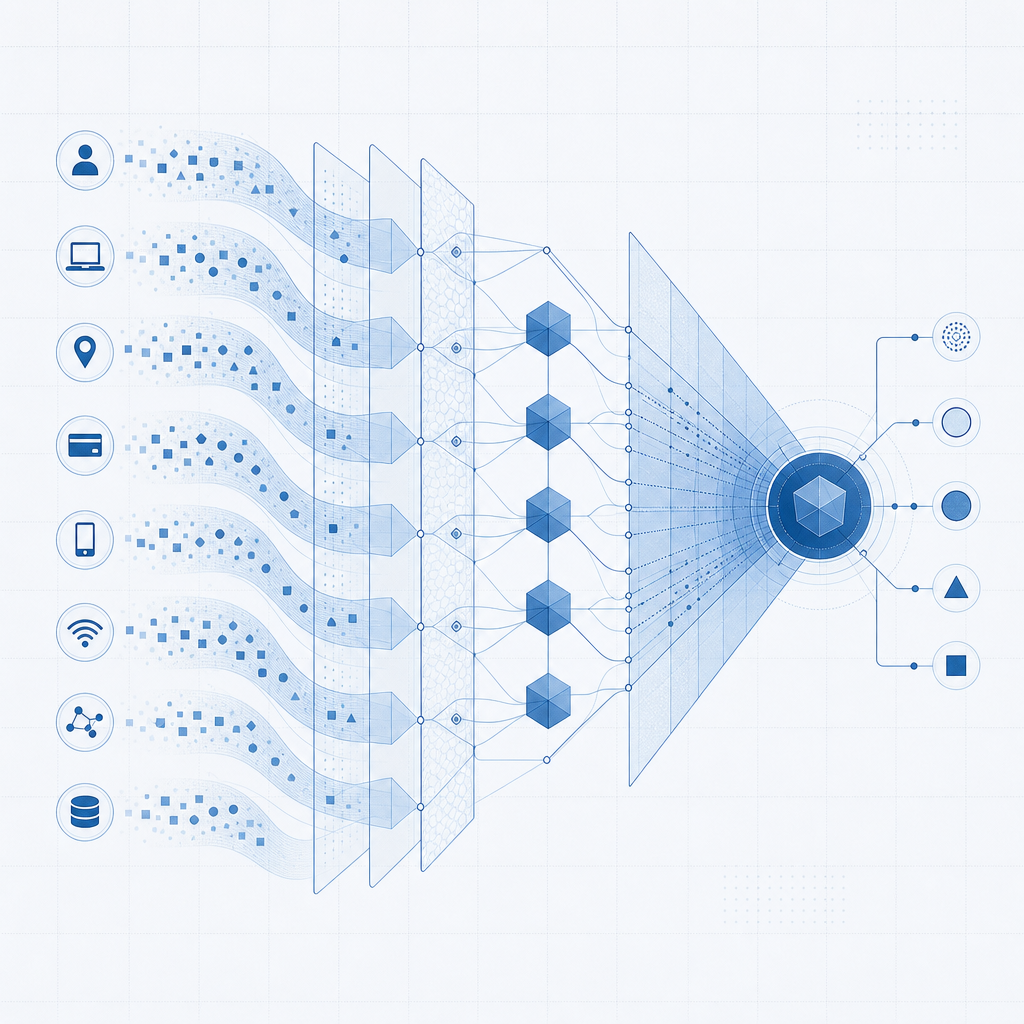

That pipeline usually combines:

- Request context: IP reputation, ASN, geolocation mismatch, user agent consistency, headers, cookies, and session age.

- Behavioral signals: mouse movement patterns, timing between events, typing cadence, focus changes, navigation flow, and retry frequency.

- Device and browser integrity: evidence that a real browser stack is present, whether the environment looks automated, and whether the session is stable across requests.

- Business context: is this login from a new device? Is this signup on a high-value domain? Is checkout risk elevated because of promo abuse?

- Response strategy: allow, challenge, rate-limit, require step-up verification, or block.

A strong system does not rely on one “magic” signal. It scores confidence from several weak signals and then chooses a response that matches the risk. That is why anti-bot engineering is usually a collaboration between security, product, infra, and fraud teams.
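To make the "several weak signals" idea concrete, here is a minimal sketch of additive scoring. Everything in it is an assumption for illustration: the signal names, the point weights, and the thresholds are hypothetical, and a real system would tune them against labeled traffic rather than hard-code them.

```javascript
// Hypothetical weights in points (0-100 scale); real values come from tuning.
const SIGNAL_WEIGHTS = {
  badIpReputation: 30,
  geoMismatch: 15,
  inconsistentUserAgent: 20,
  automationHints: 35,
  newDevice: 10,
  highRetryRate: 20,
};

// Hypothetical thresholds, ordered from most to least severe response.
const ACTION_THRESHOLDS = [
  { min: 80, action: "block" },
  { min: 60, action: "step_up" },
  { min: 40, action: "challenge" },
  { min: 25, action: "rate_limit" },
];

// Sum the weights of every signal that fired, capped at 100,
// and keep the signal names so the decision can be explained later.
function scoreRequest(signals) {
  let score = 0;
  const reasons = [];
  for (const [name, weight] of Object.entries(SIGNAL_WEIGHTS)) {
    if (signals[name]) {
      score += weight;
      reasons.push(name);
    }
  }
  return { score: Math.min(100, score), reasons };
}

// Pick the most severe action whose threshold the score reaches.
function decide(signals) {
  const { score, reasons } = scoreRequest(signals);
  const match = ACTION_THRESHOLDS.find((t) => score >= t.min);
  return { action: match ? match.action : "allow", score, reasons };
}
```

With these made-up numbers, `decide({ badIpReputation: true, newDevice: true })` scores 40 and returns a `challenge` action, while no single signal is enough to block on its own. Returning `reasons` alongside the action keeps each decision explainable.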

What “good” looks like

A good defense has three properties:

- Low friction for humans

- High cost for automation

- Observable outcomes

If you cannot explain why traffic was challenged, blocked, or allowed, tuning becomes guesswork. If you cannot measure challenge completion, abandonment, or attack suppression, you cannot improve the system. And if the defense is so strict that real users churn, the bot problem is “solved” at the expense of the business.

How anti-bot engineers structure defenses

A useful way to think about bot defense is in layers. Each layer catches a different class of abuse, and each one has tradeoffs.

| Layer | What it checks | Strengths | Limitations |
|---|---|---|---|
| Edge controls | IP, ASN, rate limits, WAF rules | Fast, cheap, broad coverage | Easy to overblock shared networks |
| Client checks | Browser/runtime integrity, interaction patterns | Useful for automation detection | Can be affected by device diversity |
| Challenge layer | CAPTCHA or step-up verification | Forces human-like interaction | Adds friction; must be tuned carefully |
| Server-side validation | Token verification, session binding | Harder to fake than client-only checks | Requires clean integration |
| Behavioral monitoring | Sequence and anomaly analysis | Adapts to new tactics | Needs data, tooling, and maintenance |
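As an illustration of the edge-control row, the following is a minimal sliding-window rate limiter. It is a sketch under stated assumptions: it keeps state in a single process's memory, the class name and parameters are hypothetical, and a production edge layer would normally use a shared store and a more compact counting scheme.

```javascript
// Minimal in-memory sliding-window limiter (single process only).
class SlidingWindowLimiter {
  constructor(limit, windowMs) {
    this.limit = limit;       // max requests allowed per window
    this.windowMs = windowMs; // window length in milliseconds
    this.hits = new Map();    // key -> array of request timestamps (ms)
  }

  // Returns true if the request fits within the limit, false if it should be throttled.
  // `now` is injectable so the behavior can be tested without real clocks.
  allow(key, now = Date.now()) {
    const cutoff = now - this.windowMs;
    // Keep only the timestamps that still fall inside the window.
    const recent = (this.hits.get(key) || []).filter((t) => t > cutoff);
    if (recent.length >= this.limit) {
      this.hits.set(key, recent);
      return false;
    }
    recent.push(now);
    this.hits.set(key, recent);
    return true;
  }
}
```

The choice of `key` is where the "easy to overblock shared networks" limitation shows up: keying on IP alone throttles everyone behind the same NAT or carrier gateway, so teams often combine IP with a session or account identifier.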

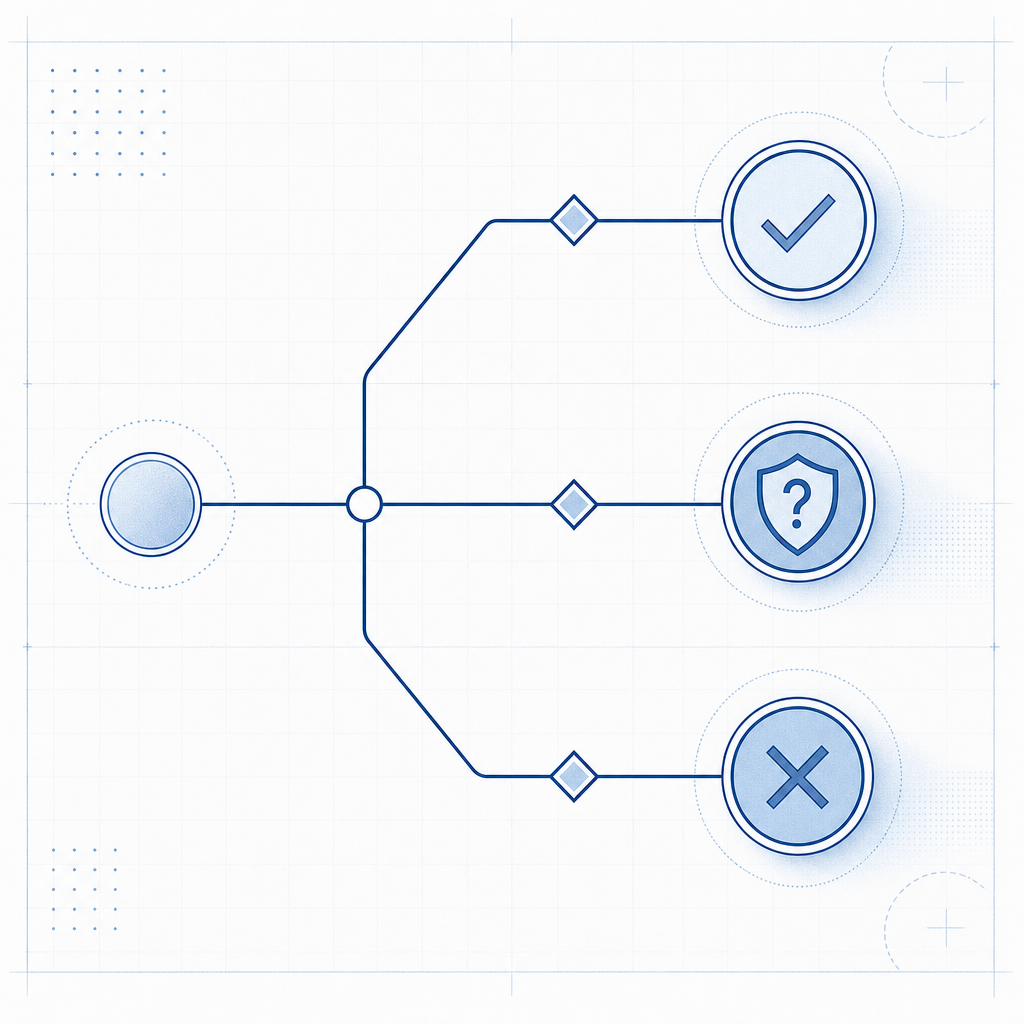

In practice, many teams use a challenge system as one part of a larger decision engine. For example, a login flow might pass silently for low-risk sessions, challenge suspicious ones, and escalate only if the risk score remains high.
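The login example can be sketched as a small state function. The thresholds and the action names (`allow`, `challenge`, `step_up`, `deny`) are hypothetical, and the risk score is assumed to be a number from 0 to 100 produced elsewhere in your pipeline.

```javascript
// Hypothetical thresholds on a 0-100 risk score.
const CHALLENGE_AT = 40;
const ESCALATE_AT = 70;

// Decide the next step for a login attempt.
// `challengeResult` is "not_attempted", "passed", or "failed".
function nextLoginStep(riskScore, challengeResult = "not_attempted") {
  // Low-risk sessions pass silently.
  if (riskScore < CHALLENGE_AT) return "allow";
  // Suspicious sessions get a challenge first.
  if (challengeResult === "not_attempted") return "challenge";
  // A failed challenge is denied in this sketch; retry policies are also common.
  if (challengeResult === "failed") return "deny";
  // Challenge passed: escalate only if the score remains high.
  return riskScore >= ESCALATE_AT ? "step_up" : "allow";
}
```

Keeping the challenge result as an input, rather than treating a pass as a final verdict, is what lets the decision engine escalate when other signals still look bad.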

If you are evaluating a CAPTCHA or bot-defense workflow, products like CaptchaLa are typically used at this middle layer: the browser loads a challenge, the client receives a pass token, and the server validates that token before trusting the action. The important part is not the challenge alone, but how cleanly it plugs into your own risk policy.

The technical loop: from browser to server and back

A defender’s integration needs to be simple enough to ship, but strict enough to trust. A typical flow looks like this:

- The browser loads a challenge script.

- The user completes the challenge when risk requires it.

- The client receives a pass_token.

- Your backend validates that token before accepting the sensitive action.

- You log the outcome for analysis and tuning.

For CaptchaLa, the loader is served from:

    https://cdn.captcha-cdn.net/captchala-loader.js

Token validation happens server-side via:

    POST https://apiv1.captcha.la/v1/validate

with a body such as:

    {
      "pass_token": "token-from-client",
      "client_ip": "203.0.113.42"
    }

using X-App-Key and X-App-Secret headers. There is also a server-token issuance endpoint for backend-driven flows:

    POST https://apiv1.captcha.la/v1/server/challenge/issue

For teams that need to support multiple platforms, CaptchaLa also exposes native SDKs for Web (JS/Vue/React), iOS, Android, Flutter, and Electron, plus server SDKs for captchala-php and captchala-go. The practical benefit is consistency: the same anti-bot policy can be applied across web and mobile surfaces without inventing separate mechanisms for each stack.

A small validation example

    // Send the pass token to your backend after challenge completion
    async function submitChallengeResult(passToken) {
      const response = await fetch("/api/validate-captcha", {
        method: "POST",
        headers: {
          "Content-Type": "application/json"
        },
        body: JSON.stringify({
          pass_token: passToken
        })
      });

      if (!response.ok) {
        throw new Error("Challenge validation failed");
      }

      return await response.json();
    }

The key security point is that validation belongs on the server, not in the browser. Client-side checks are useful for user experience and pre-filtering, but trust should be established by your backend after verification.
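The client snippet above posts to a /api/validate-captcha route, so the backend behind that route has to call the validation endpoint itself. The sketch below shows one way that call might look. The URL, header names, and body fields come from the description earlier in this article; the response handling is an assumption (a 2xx status plus a truthy `success` field), as are the function name and the injectable `fetchImpl` parameter, so check the provider docs for the real response schema.

```javascript
// Endpoint taken from the article text.
const VALIDATE_URL = "https://apiv1.captcha.la/v1/validate";

// Validate a pass token from the backend.
// ASSUMPTION: a 2xx response whose JSON body has a truthy `success` field means "valid".
// `fetchImpl` is injectable for testing and defaults to the global fetch (Node 18+).
async function validatePassToken({ passToken, clientIp, appKey, appSecret, fetchImpl = globalThis.fetch }) {
  if (typeof passToken !== "string" || passToken.length === 0) {
    return { ok: false, reason: "missing_token" };
  }
  let response;
  try {
    response = await fetchImpl(VALIDATE_URL, {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        "X-App-Key": appKey,
        "X-App-Secret": appSecret,
      },
      body: JSON.stringify({ pass_token: passToken, client_ip: clientIp }),
    });
  } catch (err) {
    // Network failure: this sketch fails closed and rejects the action.
    return { ok: false, reason: "validation_unreachable" };
  }
  if (!response.ok) {
    return { ok: false, reason: `http_${response.status}` };
  }
  const data = await response.json();
  return data && data.success ? { ok: true } : { ok: false, reason: "rejected" };
}
```

Failing closed on a network error is a design choice, not a requirement. Some teams prefer to fail open on low-risk endpoints so that a validation outage does not block real users, and either way the `reason` value is worth logging for later tuning.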

Choosing the right defense for the risk

Not every endpoint deserves the same treatment. An anti-bot engineer usually segments flows by value and abuse likelihood.

Common use cases

- Signup forms: protect against fake account creation and email bombing

- Login forms: slow credential stuffing and password spraying

- Password reset: stop enumeration and abuse of reset workflows

- Checkout and promo redemption: reduce coupon abuse and scripted carting

- Public APIs: protect against scraping and abusive query patterns

- Lead forms: limit spam submissions and low-quality fraud
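One way to express this segmentation is a per-endpoint policy table. The sketch below is illustrative only: the endpoint keys mirror the use cases listed above, while the thresholds, the default policy, and the function name are hypothetical values on an assumed 0-100 risk score.

```javascript
// Hypothetical policy table: endpoint names and thresholds are illustrative.
const ENDPOINT_POLICIES = new Map([
  ["signup", { challengeAt: 30, blockAt: 80 }],
  ["login", { challengeAt: 40, blockAt: 85 }],
  ["password_reset", { challengeAt: 25, blockAt: 75 }],
  ["checkout", { challengeAt: 35, blockAt: 80 }],
  ["public_api", { challengeAt: 60, blockAt: 90 }],
  ["lead_form", { challengeAt: 50, blockAt: 90 }],
]);

// Fallback for endpoints that have no explicit policy yet.
const DEFAULT_POLICY = { challengeAt: 50, blockAt: 90 };

// Map an endpoint and a 0-100 risk score to an action.
function actionFor(endpoint, riskScore) {
  const policy = ENDPOINT_POLICIES.get(endpoint) ?? DEFAULT_POLICY;
  if (riskScore >= policy.blockAt) return "block";
  if (riskScore >= policy.challengeAt) return "challenge";
  return "allow";
}
```

With these made-up numbers, a risk score of 30 triggers a challenge on password reset but passes silently on a public API, which is the kind of difference that segmenting by value and abuse likelihood is meant to capture.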

Different products handle these cases differently. reCAPTCHA is widely known and often used for broad coverage. hCaptcha is popular where publishers want alternative monetization and different privacy tradeoffs. Cloudflare Turnstile is commonly chosen for low-friction website protection integrated into Cloudflare’s edge ecosystem. The right choice depends on your stack, privacy requirements, observability needs, and how much control you want over policy.

For some teams, the major question is not “Which challenge is smartest?” but “Which system will let us tune risk without rebuilding the app every quarter?” That is where documentation quality and SDK coverage matter as much as the challenge itself. The docs should answer implementation and validation questions quickly; the pricing page should help you match traffic volume to plan shape before rollout.

Operational habits that separate mature teams from fragile ones

The best anti-bot engineers do not stop at integration. They build feedback loops.

- Track challenge rate by endpoint: a sudden spike may mean an attack, a broken client integration, or an overly aggressive rule.

- Measure completion and abandonment: if humans cannot finish the challenge, you are creating your own conversion loss.

- Segment by risk cohort: new users, returning users, high-value transactions, and suspicious geographies should not all share the same policy.

- Review false positives weekly: every blocked real user is a signal that your policy needs tuning.

- Version your policies: changes to thresholds, challenge triggers, or allowlists should be auditable.

- Rely on first-party data only: keep the decisioning focused on signals you legitimately collect in your own product. That reduces privacy risk and makes the system easier to explain internally.
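The first two habits can be computed from a plain decision log. The sketch below assumes a hypothetical event shape, `{ endpoint, outcome }`, where `outcome` is one of `allowed`, `challenge_passed`, `challenge_abandoned`, or `blocked`; your own logging schema will differ.

```javascript
// Summarize decision-log events per endpoint.
// ASSUMED event shape: { endpoint: string, outcome: string }.
function summarizeByEndpoint(events) {
  const stats = new Map();
  for (const { endpoint, outcome } of events) {
    const s = stats.get(endpoint) || { total: 0, challenged: 0, passed: 0, abandoned: 0, blocked: 0 };
    s.total += 1;
    if (outcome === "challenge_passed") {
      s.challenged += 1;
      s.passed += 1;
    } else if (outcome === "challenge_abandoned") {
      s.challenged += 1;
      s.abandoned += 1;
    } else if (outcome === "blocked") {
      s.blocked += 1;
    }
    stats.set(endpoint, s);
  }

  const report = {};
  for (const [endpoint, s] of stats) {
    report[endpoint] = {
      ...s,
      // Share of requests that were shown a challenge.
      challengeRate: s.challenged / s.total,
      // Share of challenged requests that completed; null when nothing was challenged.
      completionRate: s.challenged === 0 ? null : s.passed / s.challenged,
    };
  }
  return report;
}
```

A rising `challengeRate` with a steady `completionRate` often points to an attack or an overly aggressive rule, while a falling `completionRate` suggests the challenge itself is losing real users.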

A mature anti-bot program often starts with conservative enforcement and expands only after the team can show it improves abuse metrics without hurting conversion.

Where to go next: if you are mapping out a bot-defense rollout, start with the implementation details in the docs and compare plan fit on the pricing page.