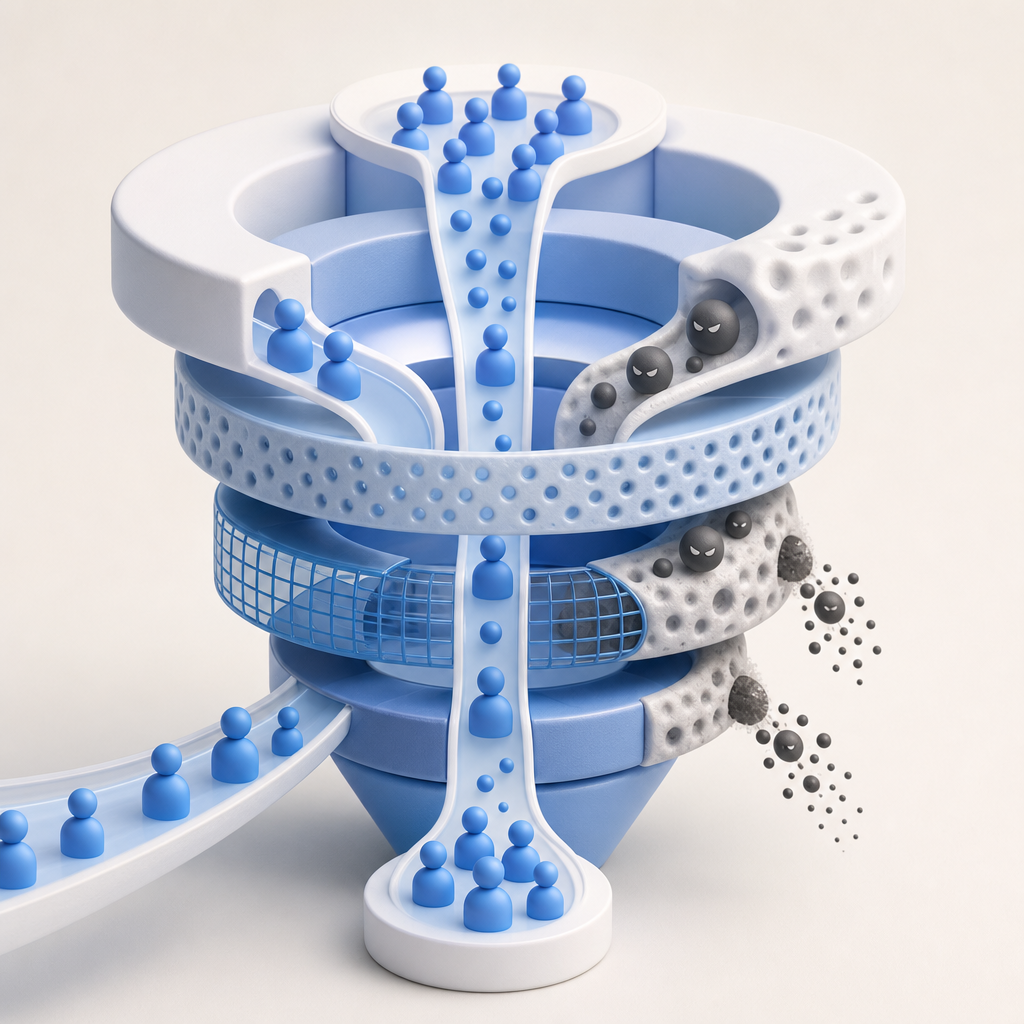

An anti-AI scraping tool should do one thing well: stop automated collection of your content without adding friction for real humans. That means detecting scraping patterns early, challenging suspicious traffic selectively, and giving you clear signals you can use to tune defenses over time.

The tricky part is that “AI scraping” is not one behavior. It can look like fast content harvesting, headless browsing, credential stuffing, distributed low-and-slow requests, or browser automation pretending to be normal traffic. A useful anti-AI scraping tool has to understand that spectrum and respond with layered controls, not just a single checkbox.

What an anti-AI scraping tool actually needs to do

At a minimum, the tool should help you separate humans from automated clients at the edge or right before sensitive actions. That sounds simple, but scraping defense fails when the system is too blunt: either it blocks legitimate users, or it lets bots adapt too easily.

A practical defense stack usually includes:

- Request context analysis: look at rate, route, method, referrer patterns, IP reputation, user-agent anomalies, and session continuity. No single signal is enough, but several weak signals together can be convincing.

- Adaptive challenge flows: the best anti-AI scraping tool does not challenge everyone. It applies checks only when traffic looks suspicious, so normal visitors do not pay the cost.

- Server-side validation: client-side checks are useful, but they are only part of the picture. You want a server verification step that confirms the pass result before granting access.

- Policy control: different endpoints deserve different treatment. A product detail page, an API endpoint, and a login form should not use the same threshold.

- Observability: you need logs, metrics, and validation results to see what is happening. If you cannot measure challenge pass rates or bot pressure, tuning becomes guesswork.
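To make "several weak signals together" concrete, here is a minimal sketch that adds up points for each signal that fired. The signal names and point values are assumptions chosen for illustration, not tuned values or part of any vendor API.

```javascript
// Hypothetical signal weights, in points out of 100. Illustrative only.
const SIGNAL_POINTS = {
  highRequestRate: 30,
  badIpReputation: 30,
  userAgentAnomaly: 20,
  missingReferrer: 10,
  brokenSessionContinuity: 20,
};

// Sum the points of every signal flagged true, capped at 100.
// `signals` is an object such as { highRequestRate: true }.
function suspicionScore(signals) {
  let score = 0;
  for (const [name, points] of Object.entries(SIGNAL_POINTS)) {
    if (signals[name] === true) score += points;
  }
  return Math.min(100, score);
}
```

A missing referrer alone scores 10 and would normally pass, while a high request rate from an IP with a bad reputation scores 60. In practice you would derive the weights from your own logs.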

Here is a simple way to think about the decision flow:

```text
if request looks normal:
    allow
else if request is suspicious but not clearly malicious:
    challenge
    if validation passes:
        allow with lower trust
    else:
        deny or throttle
else:
    block
```

That logic is boring on purpose. Security that works usually is.
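The same flow can be sketched as a small JavaScript function. The 0-100 score and both thresholds are hypothetical. The second argument stays `undefined` until a challenge has actually been validated on the server.

```javascript
// Hypothetical thresholds on a 0-100 suspicion score.
const CHALLENGE_AT = 40;
const BLOCK_AT = 80;

// Returns one of: "allow", "challenge", "allow_low_trust",
// "deny_or_throttle", "block".
function decide(score, validationPassed) {
  if (score < CHALLENGE_AT) return "allow";
  if (score >= BLOCK_AT) return "block";
  // Suspicious but not clearly malicious: challenge first,
  // then act on the server-side validation result.
  if (validationPassed === undefined) return "challenge";
  return validationPassed ? "allow_low_trust" : "deny_or_throttle";
}
```

Note that a clearly malicious score blocks even when a challenge was passed, which mirrors the final `else` branch above.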

How anti-scraping tools differ from CAPTCHAs and bot platforms

People often use “CAPTCHA,” “bot defense,” and “anti-scraping” interchangeably, but they are not the same thing.

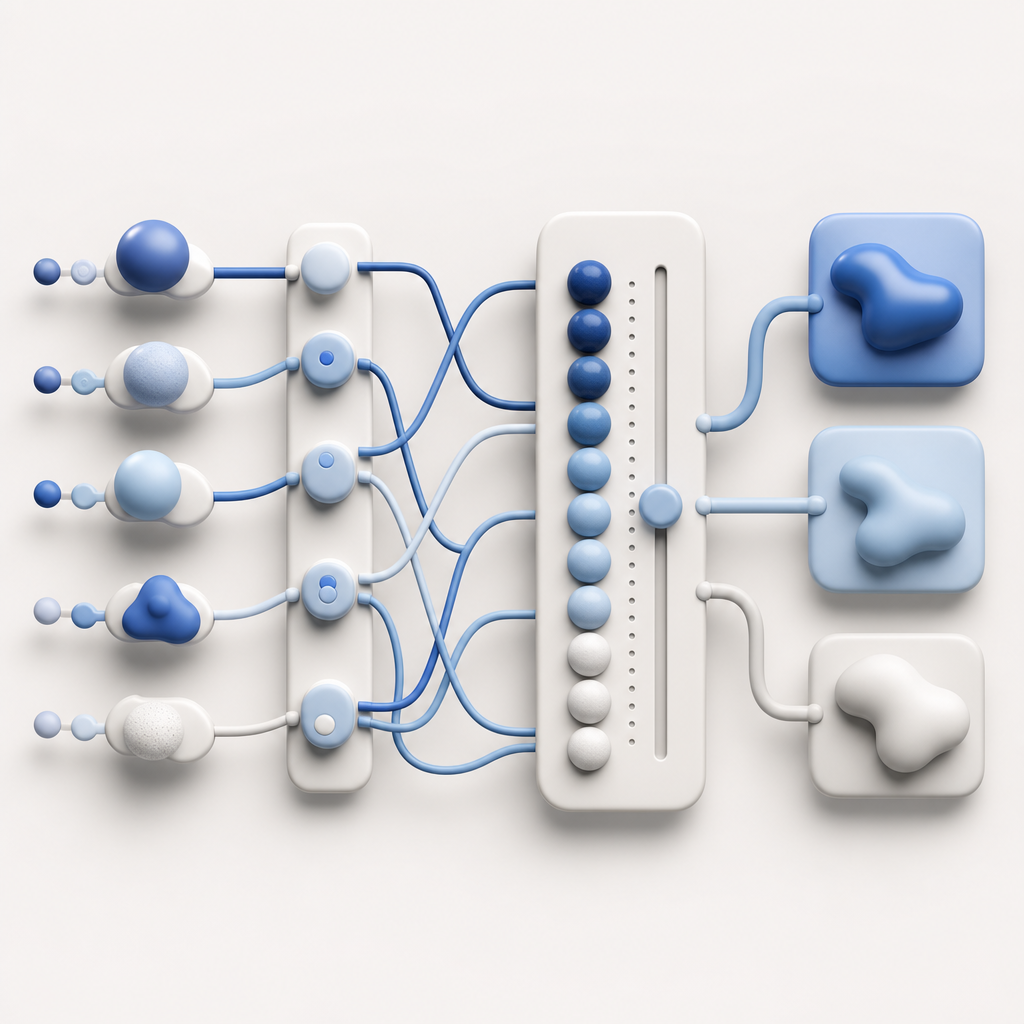

A CAPTCHA is usually the user-facing challenge. A bot platform is the broader control layer that decides when to challenge, how to validate, and what to do after validation. An anti-AI scraping tool may include CAPTCHA-style challenges, but it should also include policy, telemetry, and server enforcement.

Quick comparison

| Approach | Primary purpose | Strengths | Tradeoffs |
|---|---|---|---|
| reCAPTCHA | User verification and risk scoring | Widely recognized, easy to add | Can add friction; some teams prefer more control over data handling |
| hCaptcha | Challenge-based bot detection | Flexible deployment, common alternative | Still requires thoughtful tuning to avoid user friction |
| Cloudflare Turnstile | Low-friction verification | Good user experience, simple integration | Tied to Cloudflare ecosystem decisions |
| Purpose-built anti-scraping layer | Scraping-specific policy and validation | Better endpoint-level control and observability | Usually requires more explicit configuration |

That does not mean one is “better” in the abstract. It means you should match the tool to the problem. If your main issue is content harvesting, you may need request-level policies, token validation, and route-specific controls more than a generic site-wide challenge.

CaptchaLa fits in this category as a bot-defense layer rather than just a widget. It supports 8 UI languages, native SDKs for Web (JS, Vue, React), iOS, Android, Flutter, and Electron, plus server SDKs like captchala-php and captchala-go. That matters because scraping pressure often touches both web and app surfaces, not just one homepage form.

What to evaluate before you choose a tool

If you are comparing options, the best filter is not marketing language. It is whether the system can be operated safely under real traffic.

1) Integration path

Ask how fast you can wire the client and the server together. A good setup should have a clear client-side loader and a straightforward validation endpoint. For example, CaptchaLa uses a loader script at https://cdn.captcha-cdn.net/captchala-loader.js, and server validation is a POST request to https://apiv1.captcha.la/v1/validate that sends pass_token and client_ip in the body and authenticates with the X-App-Key and X-App-Secret headers.

2) Trust model

Prefer tools that keep the verification loop tight. Your server should not assume a token is valid until it checks it. If you are issuing challenge tokens server-side, a dedicated endpoint such as POST https://apiv1.captcha.la/v1/server/challenge/issue can help keep the flow controlled.
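A tight verification loop also means failing closed. The sketch below treats anything other than an explicit positive result as a rejection. It assumes the validation response is JSON with a boolean `success` field. That field name is hypothetical, so check the provider's docs for the real response schema.

```javascript
// Decide whether a validation response counts as a pass.
// Assumption: the body is JSON with a boolean `success` field
// (hypothetical name, not confirmed by any provider's documentation).
function isTokenAccepted(httpStatus, body) {
  if (httpStatus !== 200) return false; // errors and timeouts never pass
  if (body === null || typeof body !== "object") return false;
  return body.success === true; // only an explicit true passes
}
```

The strict comparison is deliberate: a string such as "true" or a missing field is treated as a failure, so a malformed response cannot grant access.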

3) Data boundaries

For many teams, the question is not only “does it work?” but “what data is used?” Relying on first-party data only is a cleaner story for privacy and compliance than mixing in unnecessary third-party signals.

4) Scale and pricing fit

Scraping traffic can spike fast. Make sure the plan matches your request volume without forcing awkward architectural compromises. CaptchaLa’s public tiers are structured around volume, with Free at 1,000/month, Pro at 50K–200K, and Business at 1M. That range is useful when you need to start small but expect growth.

5) Developer ergonomics

If integration is hard, teams postpone deployment until after the damage starts. SDK coverage matters: web, mobile, desktop, and server libraries reduce the odds that one surface stays unprotected.

A common implementation pattern looks like this:

```javascript
// 1. Render the challenge on suspicious requests
// 2. Receive the pass token after completion
// 3. Send token to server for validation
// 4. Allow or deny based on validation result

// Runs on the server (Node.js 18+ provides a global fetch).
// The app secret must never be shipped to the browser.
async function verifyAccess(passToken, clientIp) {
  const response = await fetch("https://apiv1.captcha.la/v1/validate", {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      "X-App-Key": process.env.APP_KEY,
      "X-App-Secret": process.env.APP_SECRET
    },
    body: JSON.stringify({
      pass_token: passToken,
      client_ip: clientIp
    })
  });

  // Fail closed: a non-2xx response is an error, not a pass.
  if (!response.ok) {
    throw new Error(`Validation request failed with status ${response.status}`);
  }
  return response.json();
}
```

Practical deployment patterns that reduce scraping without punishing users

The most effective defenses are usually selective, not universal. If you challenge everyone, you train attackers and annoy your audience. If you challenge no one, you invite harvesters.

Use endpoint-specific policies

Protect the pages and routes that matter most:

- product listings

- search results

- pricing pages

- login and signup

- account recovery

- API endpoints with valuable content

Search result pages often need stricter rate controls than static pages. Login should usually have stronger verification than blog reading. A single global rule rarely fits both.
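One way to express route-specific treatment is a small policy table looked up by path prefix. The paths, thresholds, and rate limits below are placeholders for illustration, not recommended values.

```javascript
// Hypothetical per-route policies. The first matching prefix wins,
// so list more specific prefixes before general ones.
const POLICIES = [
  { prefix: "/login", challengeAt: 20, maxPerMinute: 10 },
  { prefix: "/search", challengeAt: 40, maxPerMinute: 30 },
  { prefix: "/api/", challengeAt: 30, maxPerMinute: 60 },
];

// Fallback for everything else, such as blog or static pages.
const DEFAULT_POLICY = { prefix: "/", challengeAt: 70, maxPerMinute: 120 };

function policyFor(path) {
  return POLICIES.find((p) => path.startsWith(p.prefix)) ?? DEFAULT_POLICY;
}
```

Here `policyFor("/login")` yields the strictest challenge threshold, while a blog URL falls through to the lenient default. Keeping the table in one place also makes threshold changes easy to review.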

Combine verification with rate limiting

Verification and throttling solve different problems. A bot can pass one challenge and still be too fast. A human can fail one challenge and still deserve another chance. The right system uses both.
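A minimal sketch of using both together for traffic that has already been flagged as suspicious: a fixed-window counter throttles by client key, and a passed challenge does not exempt a client from that limit. The class and function names are illustrative. A production limiter would also need eviction of old keys and shared storage across servers.

```javascript
// Fixed-window request counter, kept in memory per client key.
class FixedWindowLimiter {
  constructor(limit, windowMs) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.counters = new Map(); // key -> { windowStart, count }
  }

  // Counts the request and returns true while the key is within its limit.
  allow(key, now = Date.now()) {
    const entry = this.counters.get(key);
    if (!entry || now - entry.windowStart >= this.windowMs) {
      this.counters.set(key, { windowStart: now, count: 1 });
      return true;
    }
    entry.count += 1;
    return entry.count <= this.limit;
  }
}

// Throttling is checked first, so a verified client that is too fast
// is still slowed down. A client that has not passed a challenge is
// asked to try one rather than being denied outright.
function admit(limiter, clientKey, passedChallenge, now) {
  if (!limiter.allow(clientKey, now)) return "throttle";
  return passedChallenge ? "allow" : "challenge";
}
```

With `new FixedWindowLimiter(2, 1000)`, a verified client is allowed twice within a second and throttled on the third request until the window rolls over.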

Watch for adaptive scraping behavior

Modern scraping often mimics normal browsing: slower rates, realistic headers, distributed IPs, and cookie reuse. That means you should look beyond raw request counts and watch for patterns such as:

- repeated navigation across deep page ranges

- high-volume access to similar templates

- unusual retry behavior after challenge failures

- session churn without meaningful engagement

- access concentrated on content with high training value
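Some of these patterns can be approximated from a session's list of requested paths. The sketch below collapses numeric path segments into a shared template and reports how concentrated a session is on one template. The function names and the choice of features are assumptions for illustration, and any alerting threshold would have to come from your own traffic.

```javascript
// Collapse numeric segments so /products/123 and /products/456
// map to the same template. The query string is ignored.
function templateOf(path) {
  return path
    .split("?")[0]
    .split("/")
    .map((segment) => (/^\d+$/.test(segment) ? ":id" : segment))
    .join("/");
}

// Summarize a session given the paths it requested.
function sessionPatterns(paths) {
  const counts = new Map();
  for (const path of paths) {
    const template = templateOf(path);
    counts.set(template, (counts.get(template) ?? 0) + 1);
  }
  const top = Math.max(0, ...counts.values());
  return {
    distinctTemplates: counts.size,
    // Fraction of requests that hit the single most common template.
    topTemplateShare: paths.length === 0 ? 0 : top / paths.length,
  };
}
```

A long session where nearly every request lands on one template, such as hundreds of consecutive product IDs, looks different from a visitor who moves between the home page, a few products, and the cart.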

Keep the user experience honest

If a real user gets challenged, the flow should be understandable and quick. Confusing, looping, or overly aggressive checks can hurt conversion more than the scraping you were trying to stop.

CaptchaLa’s multi-language support helps here if you have international traffic, because challenge copy and prompts should not become a barrier for legitimate users. That is especially true when your audience spans web and mobile apps.

A sane way to decide whether a tool is good enough

The question is not whether an anti-AI scraping tool can stop every automated request. Nothing can. The question is whether it reduces abuse enough to protect your content while keeping operations manageable.

Use this checklist:

- Can it validate on the server, not just in the browser?

- Can it target specific routes and actions?

- Does it provide enough telemetry to tune thresholds?

- Does it preserve a good experience for normal users?

- Can you deploy it across web and app surfaces?

- Does its data handling fit your privacy requirements?

- Can you start small and scale with traffic?

If the answer to most of those is yes, you are probably looking at a serious option rather than a cosmetic widget.

For teams that want to test a bot-defense layer without overcommitting, CaptchaLa is a reasonable place to start: read the docs and evaluate how the validation flow fits your stack. The docs and pricing pages are enough to judge implementation effort and volume fit before you commit to anything.

Where to go next

If you are comparing approaches, start with the integration details and the plan that matches your traffic. Review the docs, then check pricing to see whether the volume tiers fit your current and expected usage.