An Amazon audio CAPTCHA is an audio-based challenge used to confirm that a visitor is human, usually as an alternative to visual puzzles when accessibility or usability is a concern. From a defender’s point of view, the important part is not the exact branding but the pattern: audio challenges are meant to slow down automation while keeping the flow usable for people who can’t complete image-based CAPTCHAs.

That makes audio CAPTCHAs a useful, but imperfect, control. They can help with accessibility, yet they’re also part of a broader bot-defense strategy that has to account for abuse, scraping, account creation fraud, and scripted form submissions. If you’re building or reviewing one, the real question is: how do you keep the experience accessible without making it easy for automation to exploit?

What an Amazon audio CAPTCHA is trying to solve

Audio CAPTCHAs exist because some users cannot reliably interact with visual puzzles. That includes people with low vision, people who rely on screen readers, people with color-vision differences, and anyone in a situation where a visual challenge is simply inconvenient. The challenge typically plays spoken characters, words, or numbers, and the user enters what they hear.

For defenders, the goal is straightforward:

- Distinguish a human from scripted automation.

- Preserve a usable fallback when visual challenges are not appropriate.

- Avoid creating a workflow that becomes a barrier for legitimate users.

An Amazon-style audio CAPTCHA flow usually appears after a suspicious action, after a failed visual challenge, or as an accessibility fallback. The “Amazon” part is often just how people refer to the experience, not a universal technical standard. What matters is the design tradeoff: audio can reduce friction for humans, but it introduces a different surface for automation, replay attempts, and transcription-based abuse.

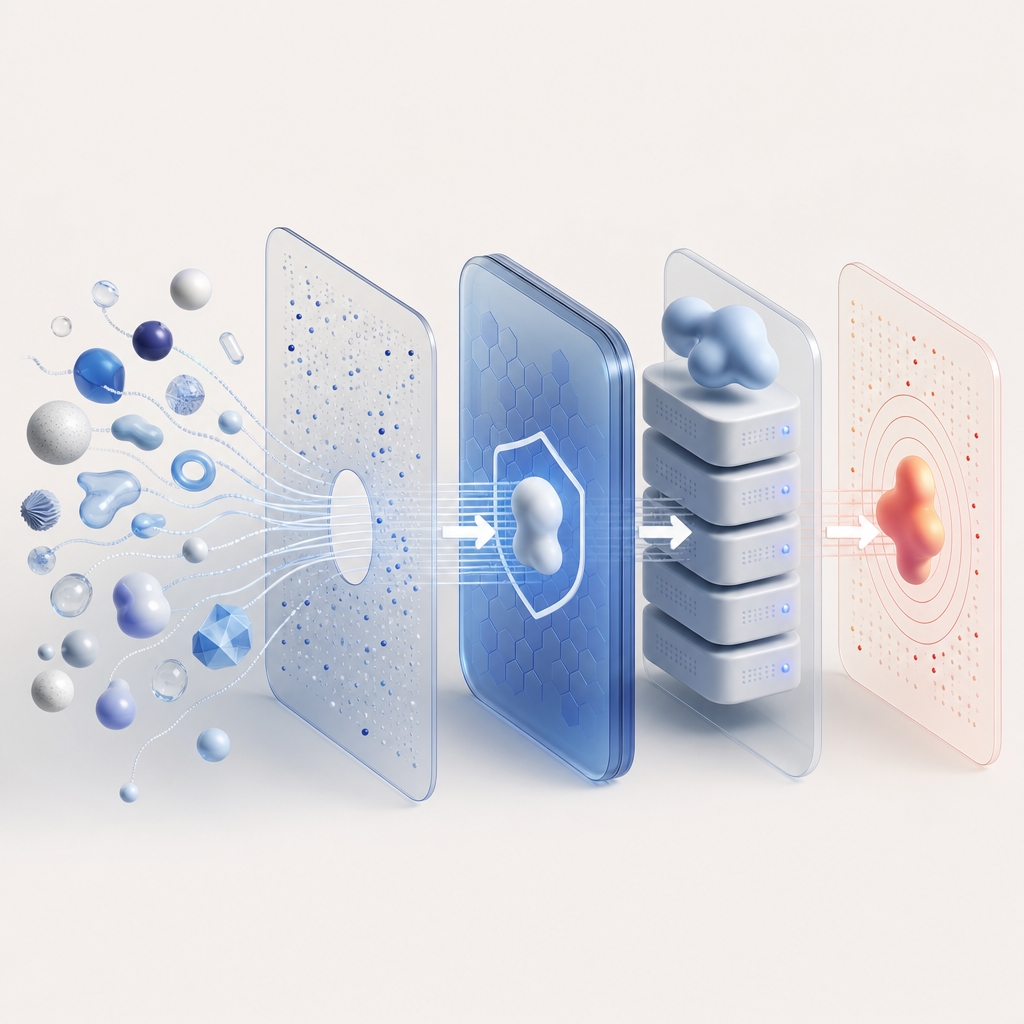

That’s why modern bot defense rarely relies on one challenge type alone. Teams increasingly combine client signals, server-side validation, rate limiting, and challenge orchestration. Products like CaptchaLa fit into that broader pattern with first-party data handling and SDKs across web and mobile, which helps keep the implementation clean without overexposing internal logic.

Why audio CAPTCHAs still matter, even with newer bot defenses

Some teams assume audio CAPTCHAs are obsolete because newer systems can analyze behavior, device signals, or network patterns. Not quite. They still matter for two practical reasons.

First, accessibility. A visual-only challenge can exclude real users. Audio creates a fallback path that can be made available when needed, rather than forcing everyone into one interaction style.

Second, layered defense. Even if your primary protection is behavioral or risk-based, an audio challenge can still serve as a secondary friction point when a session looks suspicious.

Here’s a simple comparison of common approaches:

| Approach | Main strength | Main weakness | Best use case |
|---|---|---|---|
| Audio CAPTCHA | Accessibility fallback | Can be noisy or transcription-prone | Human verification when visual is unsuitable |
| reCAPTCHA | Familiar ecosystem, broad adoption | Can be intrusive depending on risk scoring | General web forms and login flows |
| hCaptcha | Flexible challenge options | Still a challenge-based UX | Sites needing puzzle-based verification |
| Cloudflare Turnstile | Low-friction user experience | Tied to Cloudflare-centric workflows | Low-friction verification at scale |

This is not a ranking; it’s a design choice conversation. For some products, the challenge itself is the right control. For others, a token-based flow with server-side validation is a better fit. The best answer depends on your threat model, user base, and accessibility obligations.

If your system uses audio as a fallback, make sure it’s not the only defense. Combine it with signal-based controls such as IP reputation, velocity checks, and session integrity checks. That way, even if the challenge is solved, the backend can still decide whether the request is trustworthy.
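
As a rough illustration of one such signal, here is a minimal sliding-window velocity check. The class name `VelocityTracker`, the helper `isTrustworthy`, and the window and threshold values are hypothetical choices for this sketch, not part of any vendor API. It keeps state in memory, so a real deployment would more likely use a shared store.

```javascript
// Hypothetical in-memory sliding-window velocity check (illustrative only).
class VelocityTracker {
  constructor(windowMs, maxEvents) {
    this.windowMs = windowMs;
    this.maxEvents = maxEvents;
    this.events = new Map(); // key -> array of event timestamps in ms
  }

  // Record an event for `key` and report whether the key is over the limit.
  record(key, now = Date.now()) {
    const cutoff = now - this.windowMs;
    const recent = (this.events.get(key) || []).filter((t) => t > cutoff);
    recent.push(now);
    this.events.set(key, recent);
    return { count: recent.length, exceeded: recent.length > this.maxEvents };
  }
}

// A passed challenge is only one input; velocity can still veto the request.
function isTrustworthy(challengePassed, velocity) {
  return challengePassed && !velocity.exceeded;
}
```

With a tracker such as `new VelocityTracker(60000, 5)`, a sixth attempt from the same key inside a minute would be flagged even if the challenge itself was solved.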

How defenders should implement audio challenge flows

The implementation details matter as much as the concept. A robust flow separates the client challenge from the server’s decision, keeps secrets off the browser, and validates tokens in a predictable way.

A practical implementation pattern looks like this:

- Render a lightweight challenge widget or loader on the client.

- Issue a challenge token or pass token from the server side.

- Submit the user response to your backend.

- Validate the token server-side before allowing the action.

- Log outcome metadata for abuse analysis and tuning.
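
The logging step at the end of that list could be as simple as emitting one structured record per validation attempt. The function names and field names below are assumptions chosen for this sketch, not a schema defined by any provider.

```javascript
// Hypothetical structured log record for one challenge outcome.
function buildOutcomeRecord(outcome, now = new Date()) {
  const { action, clientIp, challengeType, passed, latencyMs } = outcome;
  return {
    timestamp: now.toISOString(),
    action,                  // e.g. 'signup' or 'password_reset'
    clientIp,
    challengeType,           // e.g. 'audio' or 'visual'
    passed: Boolean(passed),
    latencyMs,
  };
}

// Write one JSON line per outcome so the records are easy to aggregate later.
function logOutcome(record, write = console.log) {
  write(JSON.stringify(record));
}
```

Keeping the record flat and writing it as a single JSON line makes it straightforward to count passes and failures per challenge type afterwards.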

For CaptchaLa-style integrations, the validate endpoint is explicit: POST https://apiv1.captcha.la/v1/validate with a body containing pass_token and client_ip, authenticated via X-App-Key and X-App-Secret. There is also a server-token issuance endpoint at POST https://apiv1.captcha.la/v1/server/challenge/issue, and the client loader is available from https://cdn.captcha-cdn.net/captchala-loader.js.

A simple server-side validation sketch might look like this:

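```javascript
// Validate a challenge response on your backend.
// Assumes a runtime with a global fetch (for example, Node.js 18+).
async function validateCaptcha(passToken, clientIp) {
  const response = await fetch('https://apiv1.captcha.la/v1/validate', {
    method: 'POST',
    headers: {
      'Content-Type': 'application/json',
      // Credentials stay on the server; never ship them to the browser.
      'X-App-Key': process.env.CAPTCHALA_APP_KEY,
      'X-App-Secret': process.env.CAPTCHALA_APP_SECRET
    },
    body: JSON.stringify({
      pass_token: passToken,
      client_ip: clientIp
    })
  });

  // A non-2xx status means the validation request itself did not succeed.
  if (!response.ok) {
    throw new Error(`Captcha validation request failed with status ${response.status}`);
  }

  // Return the parsed response body for the caller to inspect.
  return await response.json();
}
```

A few implementation details are easy to miss: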

- Validate on the server, not in the browser.

- Bind the token to the expected client IP when supported.

- Treat a passed challenge as one signal, not a blanket trust grant.

- Expire tokens quickly so replay becomes less useful.

- Keep telemetry so you can measure false positives and abandonment.
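
To illustrate the replay point, the sketch below remembers tokens that have already been accepted and rejects a second use within a short window. `SeenTokenCache` and its TTL are hypothetical and purely local to your backend. This does not describe how any provider expires tokens internally, and a multi-instance deployment would need a shared store rather than an in-process `Map`.

```javascript
// Hypothetical one-time-use guard for pass tokens (in-memory, illustrative).
class SeenTokenCache {
  constructor(ttlMs) {
    this.ttlMs = ttlMs;
    this.seen = new Map(); // token -> expiry timestamp in ms
  }

  // Returns true the first time a token is claimed, false on a replay.
  claim(token, now = Date.now()) {
    // Drop expired entries so the map does not grow without bound.
    for (const [seenToken, expiresAt] of this.seen) {
      if (expiresAt <= now) this.seen.delete(seenToken);
    }
    if (this.seen.has(token)) return false;
    this.seen.set(token, now + this.ttlMs);
    return true;
  }
}
```

A backend might call `claim(passToken)` alongside the remote validation call and refuse the action if either check fails.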

CaptchaLa also supports multiple platforms if your app extends beyond a single web form: native SDKs for Web (JS/Vue/React), iOS, Android, Flutter, and Electron, plus server SDKs like captchala-php and captchala-go. That can reduce the temptation to invent custom glue code for each surface.

Accessibility, abuse resistance, and product UX

The hardest part of audio CAPTCHA design is balancing accessibility with abuse resistance. If the audio is too hard to parse, you’ve created a new barrier. If it’s too easy to script around, you’ve weakened your protection.

Good practice means paying attention to a few details:

- Use clear, bounded audio prompts rather than long conversational clips.

- Keep the fallback available without forcing it on every user.

- Avoid rate limits that punish assistive technology users disproportionately.

- Make sure failure states are understandable and recoverable.

- Test with screen readers and keyboard-only flows.

You should also think beyond the challenge itself. Many teams route suspicious users through a broader decision tree: let low-risk users pass silently, challenge borderline traffic, and block or step-up high-risk requests. Audio is one branch of that tree, not the whole tree.
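
That decision tree can be sketched as a small routing function. The score range, the thresholds, and the action names here are assumptions for illustration. Real systems derive risk from their own signals and tune the cut-offs against observed traffic.

```javascript
// Hypothetical risk router: score is assumed to be in [0, 1], higher = riskier.
const THRESHOLDS = { challenge: 0.3, block: 0.8 };

function routeByRisk(score, { needsAudio = false } = {}) {
  const challenge = { action: 'challenge', mode: needsAudio ? 'audio' : 'visual' };

  if (typeof score !== 'number' || Number.isNaN(score)) {
    // Unknown risk: challenge rather than silently allow or block.
    return challenge;
  }
  if (score >= THRESHOLDS.block) return { action: 'block' };
  if (score >= THRESHOLDS.challenge) return challenge;
  return { action: 'allow' };
}
```

The `needsAudio` flag stands in for however your product records that a user asked for the audio fallback, so the audio branch is chosen only when it is needed.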

If you’re evaluating vendors, compare not just challenge types but operational fit: supported frameworks, validation semantics, pricing, and data handling. CaptchaLa’s published tiers, for example, range from a free tier at 1,000 validations per month to Pro volumes around 50K–200K and Business at 1M, with first-party data only. That matters if you need predictable usage and minimal data exposure. You can review pricing and implementation details in the docs.

Where audio CAPTCHAs fit in a modern stack

Audio CAPTCHAs are not a replacement for broader bot defense. They’re a specific UX and accessibility tool inside a layered control system. In practice, teams use them when they need an explicit human-verification step and want to avoid excluding users who can’t complete visual puzzles.

If you’re building a signup form, checkout flow, password reset, or abuse-prone API gateway, the decision is less about “audio vs. visual” and more about “what combination of controls gives us acceptable risk with acceptable friction?” That may include device signals, server-side token validation, rate limits, and challenge escalation based on behavior.

For many applications, the smartest path is to keep the client simple, let the backend decide, and treat the challenge as one piece of evidence. That approach gives you room to iterate without locking your product into a brittle one-size-fits-all puzzle.

Where to go next: if you’re evaluating an implementation, start with the docs or review pricing to see which tier matches your traffic and integration needs.